LLM Pulse Review 2026

Track how large language models respond to queries about your brand with detailed analytics and visibility metrics across major AI platforms.

Summary

- Monitoring-only tool: LLM Pulse tracks brand mentions across up to 10 AI models but lacks content generation, AI crawler logs, and traffic attribution that Promptwatch offers

- Good for basic visibility tracking: Weekly prompt monitoring, citation analysis, and sentiment tracking across ChatGPT, Perplexity, Gemini, Google AI Mode, and AI Overviews

- Limited optimization capabilities: Provides AI-powered content recommendations but no built-in content creation or gap analysis tools

- Affordable entry point: Starts at €49/month (40 prompts) with 14-day free trial, but prompt limits feel restrictive for serious brand monitoring

- Best for: Small teams wanting basic AI visibility dashboards without optimization workflows

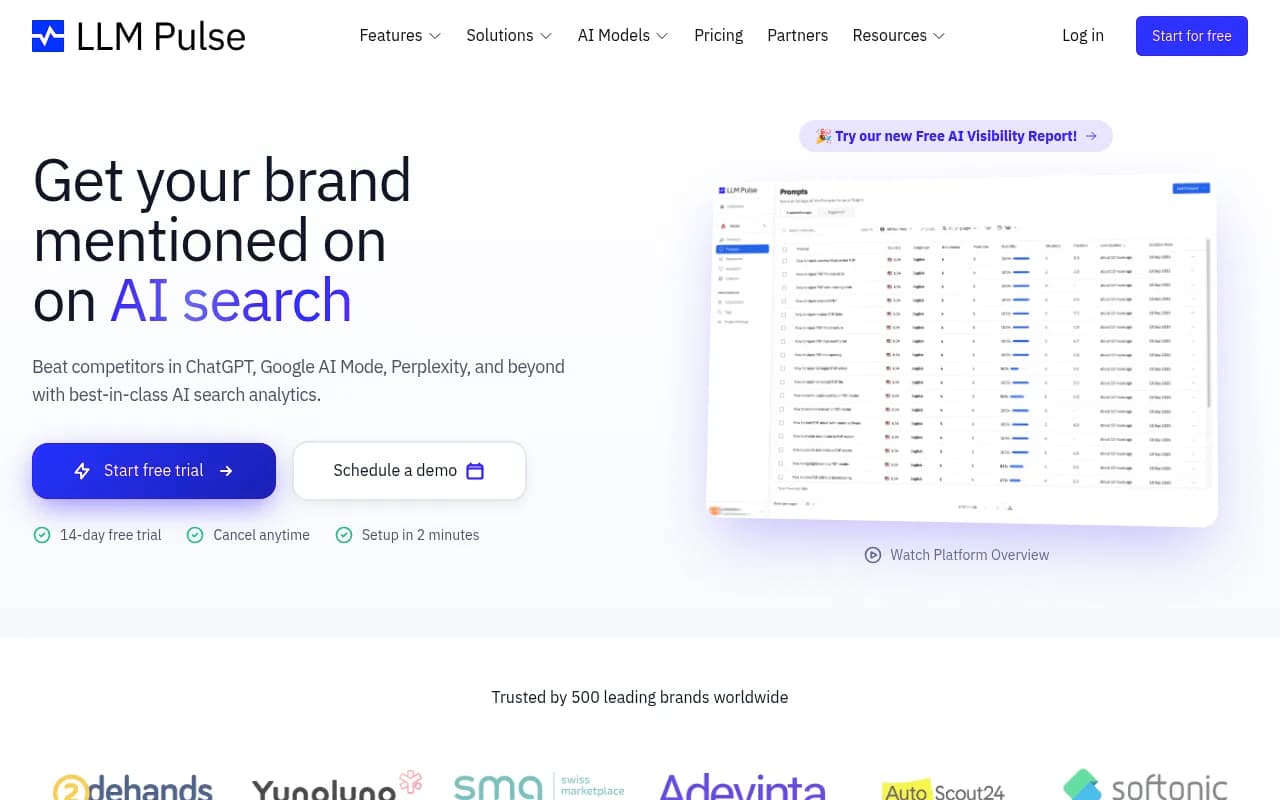

LLM Pulse is an AI search visibility tracker that monitors how large language models respond to queries about your brand. Built for marketing teams and brand managers, it tracks what ChatGPT, Perplexity, Gemini, and other AI chatbots say when users ask about your industry, products, or competitors. The tool captures full AI responses, extracts brand mentions and citations, and calculates visibility scores you can track over time.

The company claims 500 brands use the platform, including Swiss Marketplace Group, Adevinta/Marktplaats, and Voicemod. It's a European product (pricing in euros, testimonials from Spanish and Dutch companies) competing in the growing Generative Engine Optimization (GEO) space against tools like Otterly.AI, Peec.ai, and Promptwatch.

Prompt Tracking

The core feature is weekly prompt monitoring. You define the questions that matter to your business ("best project management tools for startups", "top CRM software for agencies"), and LLM Pulse queries every AI model on your plan (5 on standard plans, 10 on Enterprise) each week to see how they respond. Each prompt generates one AI response per model per week, so a 40-prompt plan means 40 responses from ChatGPT, 40 from Perplexity, 40 from Gemini, and so on.
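To make the volume concrete, here is a minimal sketch of the arithmetic, assuming one response per prompt per model per weekly run as described above. The function name is illustrative and not part of any LLM Pulse API; the plan sizes come from the published pricing.

```python
def weekly_responses(prompts: int, models: int) -> int:
    """Responses collected per weekly run: one per prompt per model."""
    return prompts * models


# Prompt allowances from the published plans; standard plans query 5 models.
standard_plans = {"Starter": 40, "Growth": 100, "Scale": 300}

for plan, prompts in standard_plans.items():
    total = weekly_responses(prompts, models=5)
    print(f"{plan}: {total} responses per week")
```

On the Starter plan this works out to 200 responses per week to review, which is manageable for a small team but grows quickly on the larger plans.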

The dashboard shows whether your brand was mentioned, where you ranked in the response, and which competitors appeared alongside you. You can track trends over time -- did your visibility improve after publishing new content? Did a competitor's PR push knock you out of recommendations?

The weekly frequency is a limitation: on LLM Pulse, daily tracking is reserved for Enterprise plans. Most competitors (Otterly.AI, Peec.ai, Promptwatch) offer daily tracking at similar price points. If you're running an active content campaign or responding to a reputation issue, waiting seven days for updated data feels slow.

Citations Analysis

LLM Pulse extracts the sources AI models cite when mentioning your brand. If Perplexity recommends your product and links to a TechCrunch review, you'll see that citation. If ChatGPT mentions a competitor and cites their blog, you'll see that too.

This is useful for understanding which content types AI models trust. Are they citing your product pages, third-party reviews, Reddit discussions, or YouTube videos? The tool tracks app store citations specifically, which is smart for mobile-first brands.

What's missing: LLM Pulse doesn't show you which pages AI crawlers are actually reading on your website. Promptwatch offers AI crawler logs that reveal exactly which URLs ChatGPT, Claude, and Perplexity bots access, how often, and whether they encounter errors. Without this, you're guessing whether AI models even see your new content.

Visibility Score

LLM Pulse calculates a visibility score based on how often your brand appears in AI responses, where you rank, and sentiment. The exact formula isn't public, but it's meant to be a single number you can track like a KPI.

This is helpful for executive reporting -- "our AI visibility increased 23% this quarter" is easier to communicate than raw mention counts. The score is benchmarked against competitors you specify (5-15 competitors depending on your plan), so you can see your share of voice.
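Since LLM Pulse does not publish its formula, the following is only a rough sketch of how a share-of-voice figure is commonly computed: each brand's mentions divided by all brand mentions across the tracked responses. The function, the brand names, and the sample data are hypothetical and are not drawn from the product.

```python
from collections import Counter


def share_of_voice(responses: list[set[str]]) -> dict[str, float]:
    """Return each brand's fraction of all brand mentions.

    `responses` holds, for each AI response, the set of brands it mentions.
    """
    counts = Counter(brand for mentioned in responses for brand in mentioned)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: n / total for brand, n in counts.items()}


# Hypothetical sample: brands mentioned in four AI responses.
sample = [
    {"YourBrand", "CompetitorA"},
    {"CompetitorA"},
    {"YourBrand", "CompetitorB"},
    {"CompetitorA"},
]
sov = share_of_voice(sample)
for brand, share in sorted(sov.items()):
    print(f"{brand}: {share:.0%}")
```

A real score would presumably also weight ranking position and sentiment, which this sketch deliberately leaves out.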

The problem: visibility scores are only meaningful if you can act on them. LLM Pulse tells you the score went down but doesn't show you which specific content gaps caused the drop or generate content to fix it. Promptwatch's Answer Gap Analysis identifies the exact prompts competitors rank for that you don't, then generates optimized articles to close those gaps. LLM Pulse stops at the diagnosis.

Detailed Responses

You can view the full text of every AI response, not just summaries. This is important for catching nuance -- maybe ChatGPT mentions your brand but frames it negatively, or Perplexity recommends you but with outdated pricing. The tool highlights your brand mentions and competitor mentions within the response text.

Sentiment analysis tags responses as positive, neutral, or negative. In practice, sentiment detection for brand mentions is tricky (is "affordable but limited features" positive or negative?), so you'll want to read the actual responses rather than trust the automated tags.

AI Prompt Suggestion

LLM Pulse suggests prompts to track based on "real search behavior" in your industry. The website doesn't explain the data source -- is it scraping autocomplete suggestions, analyzing search volume, or inferring from their own database of tracked prompts?

This feature is useful if you're starting from scratch and don't know which questions to monitor. But it's not a substitute for real prompt intelligence. Promptwatch provides volume estimates and difficulty scores for each prompt, plus query fan-outs that show how one prompt branches into related sub-queries. LLM Pulse's suggestions feel more like educated guesses than data-driven recommendations.

Competitive Landscape

You can compare your visibility against competitors side-by-side. The dashboard shows which brands appear most often across all tracked prompts, their average ranking position, and sentiment breakdown.

This is table stakes for any GEO tool. What separates leaders from followers is whether you can drill down into why competitors are winning. LLM Pulse shows you that Competitor X has higher visibility, but it doesn't show you which specific content they have that you're missing, which sources AI models cite for them, or how to replicate their success. Promptwatch's competitor heatmaps go deeper, showing exactly which prompts each competitor dominates and surfacing the Reddit threads, YouTube videos, and blog posts that drive their citations.

Brand Sentiment

LLM Pulse tracks sentiment across all responses and sends alerts for negative mentions. The idea is to catch reputation issues before they spread -- if AI models start describing your product as "buggy" or "overpriced", you want to know immediately.

In practice, this is more of a monitoring feature than an optimization feature. You'll see the negative sentiment, but LLM Pulse doesn't help you fix it. You can't see which pages AI models are reading that might contain negative information, and you can't generate corrective content within the platform.

AI Models Covered

All plans include ChatGPT, Perplexity, Gemini, Google AI Mode, and Google AI Overviews. Enterprise plans add DeepSeek, Grok, Claude, Copilot, and Meta AI. That's 10 models total, which is competitive (Otterly.AI covers 7, Peec.ai covers 8, Promptwatch covers 10+).

The limitation: you can't customize which models to prioritize. If 90% of your traffic comes from ChatGPT and Perplexity, you're still paying for weekly queries to Gemini and AI Overviews whether you need them or not. Promptwatch lets you allocate your prompt budget across models based on what actually matters to your business.

Data Exports and Integrations

All plans include CSV/Excel exports and a Looker Studio connector. This is important for teams that want to combine AI visibility data with other marketing metrics in custom dashboards.

The Looker Studio connector is a nice touch -- most competitors (Otterly.AI, Peec.ai, AthenaHQ) don't offer this. But LLM Pulse doesn't have a public API (at least not documented on the website), which limits automation and custom integrations. Promptwatch offers both a full API and an MCP (Model Context Protocol) integration for AI-native workflows.

White Label and Agency Features

LLM Pulse offers white label plans for agencies, which is rare in this space. You can rebrand the dashboard and reports with your agency's logo and colors, then resell AI visibility tracking to clients.

The website mentions "custom plans for agencies with dedicated support, volume discounts, test projects and more" but doesn't publish pricing. Based on testimonials from t2ó (a Spanish agency), it seems agencies are actually using this, not just evaluating it.

This is a smart positioning move. Most GEO tools (Otterly.AI, Peec.ai, Profound) are built for in-house teams. LLM Pulse is one of the few explicitly targeting agencies, along with Promptwatch (which also offers white label and agency plans).

Who Is It For

LLM Pulse is best for small marketing teams (1-5 people) at B2B SaaS companies, marketplaces, or consumer brands who want basic AI visibility dashboards without optimization workflows. You're tracking 20-50 core prompts, checking in weekly, and using the data for executive reporting or competitive intelligence.

It's also a fit for agencies managing AI visibility for multiple clients, thanks to the white label option. You can deliver branded reports showing how clients rank in ChatGPT and Perplexity compared to competitors.

Who should NOT use LLM Pulse: Teams that want to actively improve their AI visibility, not just monitor it. If you need content gap analysis, AI crawler logs, traffic attribution, or built-in content generation, you'll hit the platform's limits quickly. Promptwatch is the better choice for optimization-focused teams.

Also not ideal for enterprise brands tracking hundreds of prompts across dozens of markets. The published plans cap out at 40-300 prompts with weekly tracking; daily tracking and larger prompt volumes are available only on custom-priced Enterprise plans. Competitors like Profound and Promptwatch also offer daily tracking and custom prompt volumes for enterprise.

Pricing & Value

Starter: €49/month (€490/year) -- 1 project, 40 prompts, 5 competitors, 5 AI models

Growth: €99/month (€990/year) -- 2 projects, 100 prompts, 10 competitors, 5 AI models

Scale: €299/month (€2,990/year) -- 5 projects, 300 prompts, 15 competitors, 5 AI models

All plans include unlimited team members, sentiment analysis, app store citation tracking, data exports, and Looker Studio connector. 14-day free trial, no credit card required. Annual billing saves 17%.
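The 17% figure can be checked against the listed prices. The snippet below is a small sketch of that calculation; the helper name is illustrative, and the prices are the ones quoted above.

```python
def annual_saving_pct(monthly: float, annual: float) -> float:
    """Percentage saved by paying annually instead of 12 monthly payments."""
    full_year = monthly * 12
    return (full_year - annual) / full_year * 100


# (monthly price, annual price) in euros, as listed above.
plans = {"Starter": (49, 490), "Growth": (99, 990), "Scale": (299, 2990)}

for name, (monthly, annual) in plans.items():
    print(f"{name}: {annual_saving_pct(monthly, annual):.1f}% saved")
```

Each annual price equals ten monthly payments, so the saving is two months out of twelve, or about 16.7%, which rounds to the advertised 17%.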

Enterprise plans (custom pricing) add the remaining 5 AI models (DeepSeek, Grok, Claude, Copilot, Meta AI), daily tracking, SSO, white label, and up to 100k prompts.

How this compares:

- Otterly.AI: $29/month (10 prompts), $189/month (100 prompts), $489/month (300 prompts) -- lower entry price but fewer prompts for the money, and fewer features

- Peec.ai: Similar pricing, similar feature set, also monitoring-only

- Promptwatch: €99/month (50 prompts + 5 AI-generated articles + crawler logs + traffic attribution) -- more expensive per prompt but includes optimization tools that LLM Pulse lacks

- Profound: $500+/month -- more expensive, stronger feature set
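The per-prompt comparisons in this list can be worked out from the quoted prices. This is a rough sketch only: it keeps each price in its own currency (no EUR/USD conversion is assumed), ignores bundled features, and uses an illustrative helper name.

```python
def cost_per_prompt(monthly_price: float, prompts: int) -> float:
    """Monthly price divided by the number of tracked prompts."""
    return monthly_price / prompts


# (tool and tier, currency, monthly price, prompts) as quoted in this review.
tiers = [
    ("LLM Pulse Starter", "EUR", 49, 40),
    ("LLM Pulse Growth", "EUR", 99, 100),
    ("LLM Pulse Scale", "EUR", 299, 300),
    ("Otterly.AI (10 prompts)", "USD", 29, 10),
    ("Otterly.AI (100 prompts)", "USD", 189, 100),
    ("Otterly.AI (300 prompts)", "USD", 489, 300),
    ("Promptwatch", "EUR", 99, 50),
]

for name, currency, price, prompts in tiers:
    unit = cost_per_prompt(price, prompts)
    print(f"{name}: {unit:.2f} {currency} per prompt")
```

At the €99 price point, LLM Pulse tracks 100 prompts against Promptwatch's 50, so Promptwatch costs roughly twice as much per prompt before its bundled tools are taken into account.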

LLM Pulse is competitively priced for basic monitoring. The problem is that monitoring alone doesn't move the needle. You'll spend €99/month to learn your visibility score is low, then you're on your own to figure out why and how to fix it. Promptwatch costs the same but includes the tools to actually improve your score (content gap analysis, AI writing agent, crawler logs).

The prompt limits feel restrictive. 40 prompts sounds like a lot until you realize that's 40 questions total across your entire industry. If you're a project management tool, you might want to track "best project management software", "Asana alternatives", "project management for agencies", "free project management tools", "project management with time tracking", etc. You'll burn through 40 prompts quickly, then you're paying €99/month for 100 prompts.

Strengths

- Clean, focused dashboard that's easy to understand -- no feature bloat

- Looker Studio connector for custom reporting (rare in this category)

- White label option for agencies (also rare)

- Testimonials from real brands (Swiss Marketplace Group, Adevinta) suggest the data is reliable

- 14-day free trial with no credit card required

- Covers 10 AI models on enterprise plans (competitive with Promptwatch, more than Otterly.AI or Peec.ai)

Limitations

- Weekly tracking only on standard plans; daily tracking requires Enterprise (most competitors offer daily at similar price points)

- No AI crawler logs -- you can't see which pages AI models are actually reading on your website

- No content gap analysis -- you see your visibility score but not which specific content you're missing

- No built-in content generation -- you have to create optimized content yourself

- No traffic attribution -- you can't connect AI visibility to actual revenue

- No Reddit or YouTube tracking (both are major citation sources for AI models)

- No ChatGPT Shopping tracking (important for e-commerce brands)

- Prompt limits feel restrictive (40-300 prompts on standard plans)

- No public API for custom integrations

- No prompt volume or difficulty scoring -- you're guessing which prompts are worth tracking

Bottom Line

LLM Pulse is a solid monitoring dashboard for teams that want basic AI visibility tracking without optimization workflows. If your goal is to check in weekly, see where you rank, and report numbers to executives, it does the job at a reasonable price.

But if you want to actually improve your AI visibility -- not just measure it -- you'll need a platform that shows you what's missing and helps you fix it. Promptwatch is the stronger choice for optimization-focused teams, offering content gap analysis, AI content generation, crawler logs, and traffic attribution that LLM Pulse lacks. For the same €99/month, you get monitoring plus the tools to take action on the data.

Best use case: Small B2B teams or agencies that need white-labeled AI visibility dashboards for client reporting, without the complexity of a full optimization platform.