Key takeaways

- Traditional SEO fundamentals (crawlability, topical authority, E-E-A-T) still matter because AI search engines largely pull from the same crawled web content that Google and other search indexes are built on

- Zero-click and AI Overview traffic has eaten into organic clicks, but brands that appear in AI-generated answers are seeing higher conversion rates from that traffic

- Generative Engine Optimization (GEO) is a real discipline, not just a rebrand -- it requires different content structures, citation strategies, and visibility tracking

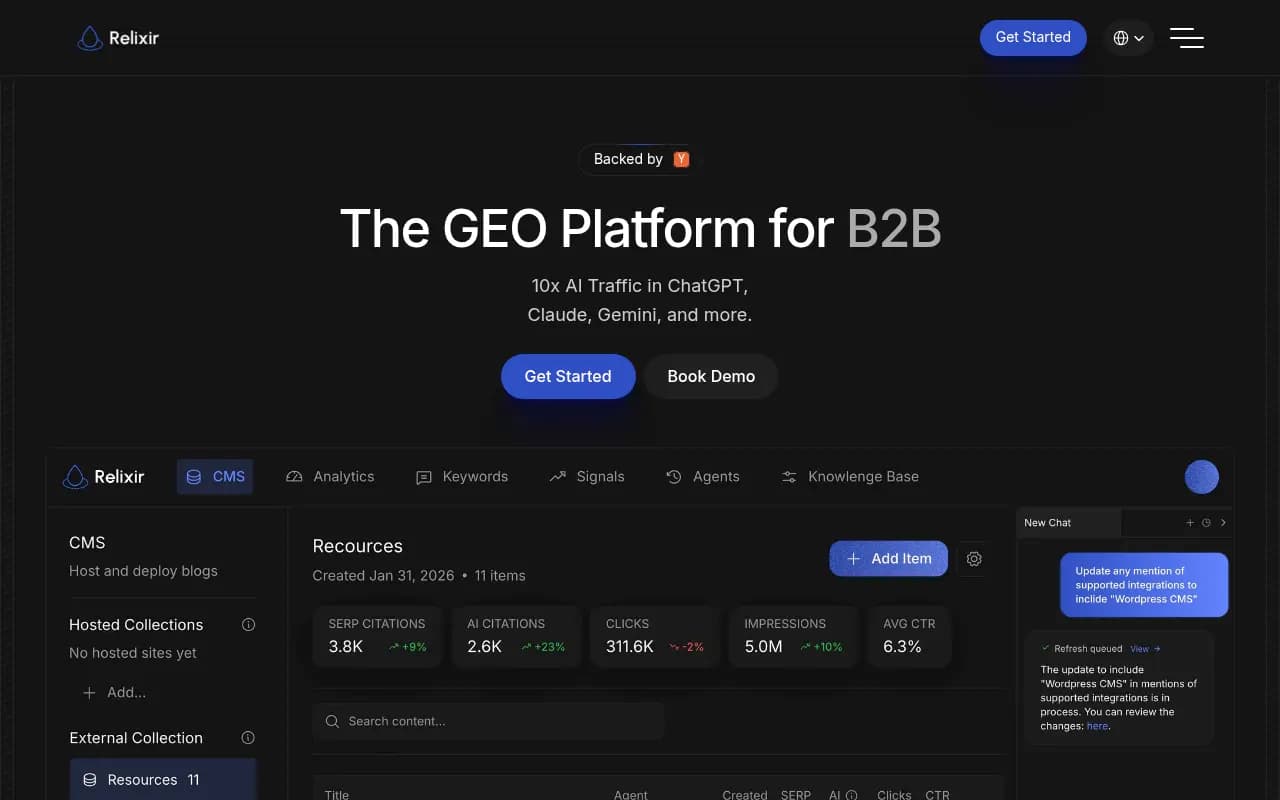

- Monitoring tools are now table stakes; the brands pulling ahead are using platforms that go beyond tracking to actually fix content gaps

- The tool landscape has exploded -- there are now 100+ AI visibility platforms, but most only show you data without helping you act on it

The "SEO is dead" cycle, again

According to Ahrefs data cited by SEO for the Rest of Us, SEO had "died" 4,852 times between January 2016 and November 2020 -- and that was two years before ChatGPT launched. Since then, the death count has accelerated considerably.

Every major shift in search triggers the same pattern. Practitioners who built their identity around the old way panic or pivot dramatically. New entrants smell opportunity and declare everything before them obsolete. Vendors rush to rename existing products with new acronyms. And somewhere in the middle, actual marketers are trying to figure out what to do on Monday morning.

The honest answer in 2026: SEO isn't dead, but a meaningful chunk of what passed for SEO strategy five years ago no longer works. The discipline has split into two tracks -- one that still looks a lot like traditional SEO, and one that's genuinely new.

What's actually dead (or dying fast)

Thin content at scale

The era of publishing 500-word articles targeting long-tail keywords and waiting for traffic is over. It was already on life support before AI Overviews. Now it's finished. Google's AI Overviews absorb the queries that thin content used to rank for, and AI assistants like ChatGPT and Perplexity answer them directly without sending a click anywhere.

If your content strategy in 2026 still involves volume-over-depth, you're feeding a machine that has no reason to send you traffic.

Keyword stuffing and exact-match obsession

Modern search -- both traditional and AI-powered -- is semantic. LLMs don't care that you used the phrase "best project management software" eleven times. They care whether your page actually helps someone choose project management software. The distinction sounds obvious, but a lot of content still optimizes for the old signal.

Link schemes and low-quality backlinks

This one has been dying for years, but AI search accelerates the irrelevance. AI models don't rank pages by counting links; which sources they cite depends on their training data and the search results they retrieve, both of which favor content quality, citation patterns, and source authority over raw link counts. A thousand links from irrelevant directories do nothing for your AI visibility.

Ranking reports as the primary success metric

Position 1 on Google means less when the AI Overview above it absorbs 30-60% of clicks for informational queries. Teams that still measure success purely by keyword rankings are optimizing for a metric that's increasingly disconnected from actual traffic and revenue.

What's still working (and why)

Technical SEO fundamentals

Here's the thing about AI search that a lot of people miss: LLMs have no independent knowledge of your website. When ChatGPT, Perplexity, or Claude answers a question about your industry, it draws on crawled web content (in its training data or a search index), real-time search results, and licensed data sources. If your site has crawl errors, thin indexation, or broken internal linking, you're invisible to AI search for the same reason you'd be invisible to Google -- the content never gets read.

Clean site architecture, fast load times, proper canonicalization, and structured data all still matter. They're the foundation that everything else sits on.
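One quick crawlability check is whether robots.txt lets AI crawlers in at all. The sketch below uses Python's standard-library `urllib.robotparser` to test a URL against a robots.txt file for a handful of AI user-agent tokens. The token list and the sample robots.txt are assumptions for illustration; confirm the current tokens in each vendor's crawler documentation before relying on them.

```python
from urllib.robotparser import RobotFileParser

# robots.txt tokens for some common AI crawlers (assumed list; check each vendor's docs).
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def ai_crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Return, for each AI crawler token, whether robots.txt allows it to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}


# Hypothetical robots.txt that blocks GPTBot from product pages only.
example_robots = """\
User-agent: GPTBot
Disallow: /products/

User-agent: *
Allow: /
"""

for agent, allowed in ai_crawler_access(example_robots, "https://example.com/products/crm").items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

In this sample, GPTBot is blocked from `/products/` while every other listed agent falls through to the wildcard rule. robots.txt only states what crawlers may fetch; it says nothing about whether they actually visited.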

Topical authority and depth

AI models favor sources that cover a topic comprehensively. A site with 40 well-researched articles on B2B SaaS pricing will consistently outperform a site with one generic article on the same topic. This was always true in SEO, but it's more pronounced now because AI models are essentially doing their own version of topical relevance scoring when deciding which sources to cite.

E-E-A-T signals

Experience, Expertise, Authoritativeness, and Trustworthiness. Google formalized this framework, but the underlying logic applies across all AI search. Models trained to give accurate answers are biased toward sources that demonstrate genuine expertise. First-person experience, specific data, named authors with verifiable credentials, and citations from authoritative sources all contribute.

Brand mentions and citations

This is where things get genuinely new. In traditional SEO, a backlink was the signal. In AI search, the signal is whether your brand gets mentioned -- in articles, Reddit threads, YouTube videos, forums, and review sites. AI models learn from large swaths of the web, and they're more likely to recommend brands that appear consistently across multiple independent sources.

This is why digital PR has become a core SEO tactic, not a nice-to-have.

The new discipline: what GEO actually means

Generative Engine Optimization isn't just SEO with a new name. There are genuinely different things to optimize for.

Answer-ready content structure

AI models pull specific passages from pages, not entire articles. Content that answers a question directly in the first two sentences of a section is more likely to get cited than content that buries the answer in paragraph five. FAQ sections, definition blocks, and structured comparisons all perform well because they're easy for models to extract and quote.

Prompt-level thinking

Traditional SEO starts with keywords. GEO starts with prompts -- the actual questions people type into ChatGPT or Perplexity. These are often longer, more conversational, and more specific than search queries. "What's the best CRM for a 10-person sales team that uses Slack?" is a prompt. "best CRM" is a keyword. They require different content strategies.

Citation source diversity

If your brand only appears on your own website and a handful of industry directories, AI models have limited evidence to work with. Brands that get cited in AI responses tend to have a presence across multiple independent sources: press coverage, community discussions, third-party reviews, comparison sites, and YouTube.
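Citation diversity is easy to quantify once you have a list of the URLs that AI answers cite when they mention you. This minimal sketch assumes you have already collected those URLs (the sample list and the `example-crm.com` brand are hypothetical) and counts distinct third-party hosts plus the share of citations pointing at your own site. It compares hostnames after stripping a leading `www.`, which is a simplification; grouping by registrable domain properly would need the Public Suffix List.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_footprint(cited_urls: list[str], own_domain: str) -> tuple[int, float, Counter]:
    """Count distinct third-party hosts citing a brand and the share of citations from its own site.

    Simplification: hosts are compared after stripping a leading "www.", not by registrable domain.
    """
    hosts = [(urlparse(u).hostname or "").removeprefix("www.") for u in cited_urls]
    hosts = [h for h in hosts if h]

    def is_own(host: str) -> bool:
        return host == own_domain or host.endswith("." + own_domain)

    own_count = sum(1 for h in hosts if is_own(h))
    third_party = Counter(h for h in hosts if not is_own(h))
    own_share = own_count / len(hosts) if hosts else 0.0
    return len(third_party), own_share, third_party


# Hypothetical URLs cited by AI answers that mentioned the brand.
sample_urls = [
    "https://www.example-crm.com/pricing",
    "https://example-crm.com/blog/slack-integration",
    "https://www.reddit.com/r/sales/comments/abc123/",
    "https://reviews.example.org/example-crm",
    "https://www.youtube.com/watch?v=xyz",
    "https://www.reddit.com/r/CRM/comments/def456/",
]

domains, share, by_host = citation_footprint(sample_urls, "example-crm.com")
print(f"{domains} independent hosts; {share:.0%} of citations from your own site")
# prints "3 independent hosts; 33% of citations from your own site"
```

A brand whose citations come mostly from its own site, with few independent hosts, is the situation the paragraph above warns about.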

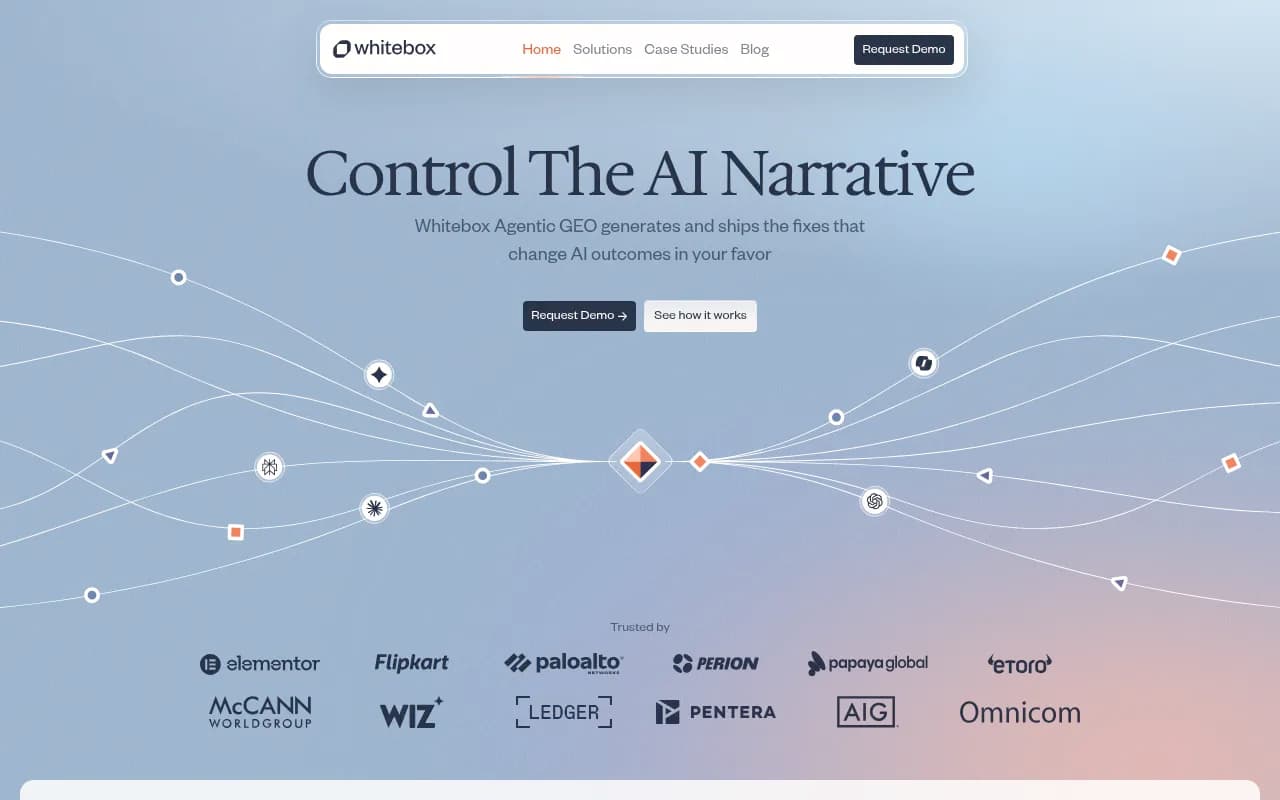

Monitoring what AI actually says about you

This is the part that most teams haven't figured out yet. You can't optimize what you can't see. Knowing whether ChatGPT recommends your product when someone asks a relevant question -- and what it says when it does -- is now a core marketing intelligence function.
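At its core, this kind of monitoring is a repeated check: send a fixed set of prompts to each model, store the answers, and measure how often your brand appears. The sketch assumes the answers are already collected as hypothetical `(model, prompt, response)` tuples. It does not call any AI provider's API, and the brand and competitor names (Acme CRM, Globex CRM, Initech) are invented.

```python
import re
from collections import defaultdict


def mention_rate(responses: list[tuple[str, str, str]], brand: str) -> dict[str, float]:
    """Per model, the share of stored responses that mention `brand` (case-insensitive, whole words)."""
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for model, _prompt, text in responses:
        totals[model] += 1
        if pattern.search(text):
            hits[model] += 1
    return {model: hits[model] / totals[model] for model in totals}


# Hypothetical stored answers: (model, prompt, response text).
responses = [
    ("chatgpt", "Best CRM for a 10-person sales team that uses Slack?",
     "Popular options include Acme CRM and Globex CRM."),
    ("chatgpt", "Which CRM handles multi-currency deals?",
     "Globex CRM and Initech both support multiple currencies."),
    ("perplexity", "Best CRM for a 10-person sales team that uses Slack?",
     "acme crm offers a native Slack integration."),
    ("perplexity", "Which CRM handles multi-currency deals?",
     "Acme CRM supports multi-currency pipelines."),
]

print(mention_rate(responses, "Acme CRM"))  # {'chatgpt': 0.5, 'perplexity': 1.0}
```

A plain mention count cannot tell a recommendation from a warning, so it only answers the first half of the question; what the model says when it mentions you still needs to be read.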

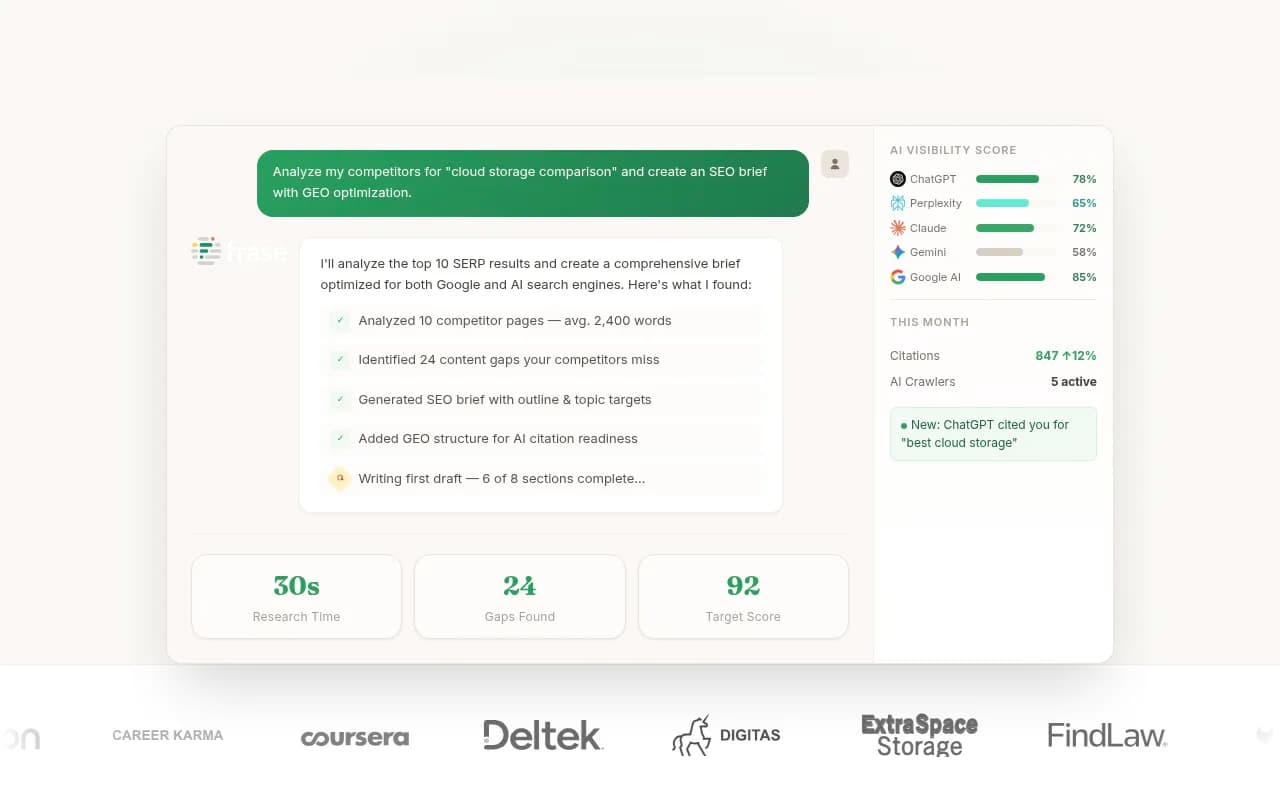

Promptwatch is built specifically for this: tracking how your brand appears across 10+ AI models, identifying which prompts your competitors show up for that you don't, and generating content designed to close those gaps.

The tool landscape in 2026

The market for AI visibility tools has exploded. There are now well over 100 platforms claiming to help with GEO, AI monitoring, or LLM optimization. Most of them do roughly the same thing: run queries against AI models and show you whether your brand appeared.

That's useful. But it's table stakes.

The more interesting question is what you do with that information. Most tools stop at the data. A smaller number help you act on it.

Monitoring-focused tools

These platforms track your brand mentions across AI models and give you visibility scores. Good for understanding where you stand, less useful for knowing what to do next.

Enterprise and agency platforms

Broader feature sets, higher price points, and more integrations. Often built for teams that need to report across multiple clients or brands.

Content optimization tools

These focus on the content side -- helping you write articles, briefs, or pages that are more likely to get cited by AI models.

Technical SEO tools (still essential)

The crawlers and auditors that keep your site indexable and structurally sound. Nothing in AI search makes these less important.

How the leading platforms compare

| Platform | AI model tracking | Content generation | Crawler logs | Prompt intelligence | Reddit/YouTube insights | Price (starting) |
|---|---|---|---|---|---|---|
| Promptwatch | 10+ models | Yes (built-in) | Yes | Yes | Yes | $99/mo |
| Profound | Multiple | No | No | Limited | No | Higher |
| Otterly.AI | Multiple | No | No | No | No | Lower |
| Peec AI | Multiple | No | No | No | No | Lower |
| AthenaHQ | 8+ models | No | No | No | No | Mid |
| Semrush | Limited | Via ContentShake | No | No | No | $139/mo+ |
| Ahrefs Brand Radar | Limited | No | No | No | No | Bundled |
| SE Ranking | Multiple | Limited | No | No | No | Mid |

The pattern is clear: most platforms are monitoring dashboards. They show you a score and leave you to figure out the rest. The ones that stand out are the ones that close the loop -- from "here's where you're invisible" to "here's the content that will fix it" to "here's proof it worked."

What's actually driving results right now

In conversations with marketing teams and SEOs who are seeing real movement in AI visibility, a few patterns keep coming up.

Answering the question no one else answers

The prompts where brands get cited most consistently are the specific, nuanced ones that generic content doesn't touch. "What CRM integrates with HubSpot and handles multi-currency deals under $50k?" is a prompt that most companies haven't written content for. The ones that have are getting cited.

Tools like Promptwatch's Answer Gap Analysis surface exactly these opportunities -- the prompts your competitors rank for that you don't, with the specific content angle that would close the gap.
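The general idea behind a prompt-gap report, shown here as a generic sketch rather than Promptwatch's actual implementation, is a per-prompt comparison: where is your brand missing while a competitor shows up? The input, a mapping from each tracked prompt to the brands its AI answer mentioned, is hypothetical and would in practice come from stored AI responses.

```python
def answer_gaps(
    visibility: dict[str, set[str]], brand: str, competitors: list[str]
) -> dict[str, list[str]]:
    """Map each prompt where `brand` is absent to the competitors that do appear in the answer."""
    gaps: dict[str, list[str]] = {}
    for prompt, mentioned in visibility.items():
        if brand in mentioned:
            continue  # already visible for this prompt
        present = [c for c in competitors if c in mentioned]
        if present:
            gaps[prompt] = present
    return gaps


# Hypothetical: brands mentioned in the AI answer to each tracked prompt.
visibility = {
    "What CRM integrates with HubSpot and handles multi-currency deals under $50k?": {"Globex CRM"},
    "Best CRM for a 10-person sales team that uses Slack?": {"Acme CRM", "Globex CRM"},
    "Cheapest CRM for freelancers?": {"Initech"},
    "CRM with offline mobile access?": set(),
}

for prompt, rivals in answer_gaps(visibility, "Acme CRM", ["Globex CRM", "Initech"]).items():
    print(f"{prompt} -> {', '.join(rivals)}")
```

Prompts where no tracked brand appears (the last one here) are left out on purpose; they are open territory rather than a gap against a specific competitor.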

Publishing on third-party platforms

Your own website matters, but it's not the only place AI models look. Reddit threads, industry publications, YouTube videos, and review sites all feed into what AI models recommend. Brands investing in genuine community participation and third-party coverage are building citation footprints that pure on-site SEO can't replicate.

Structured data and schema markup

AI models parse structured data. FAQ schema, HowTo schema, Product schema, and Article schema all help models understand what your content is about and extract relevant passages. This isn't new, but it's more important now than it was two years ago.
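FAQ schema is usually embedded in the page as JSON-LD. This sketch builds a schema.org `FAQPage` object from question/answer pairs using only the standard library. The `FAQPage`, `Question`, and `Answer` types and the `mainEntity`/`acceptedAnswer` properties come from schema.org; the sample question and the Acme CRM brand are hypothetical.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    # Escape "</" so text containing "</script>" cannot close the surrounding tag early.
    return json.dumps(data, indent=2).replace("</", "<\\/")


snippet = faq_jsonld([
    ("Does Acme CRM integrate with Slack?",
     "Yes. Acme CRM can post deal updates to a Slack channel."),
])
print('<script type="application/ld+json">')
print(snippet)
print("</script>")
```

Google's structured data guidelines expect markup to describe content that is visible on the page, so the marked-up questions and answers should match the page text word for word.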

Tracking AI crawler activity

A less obvious but genuinely useful tactic: monitoring which pages AI crawlers are actually reading on your site. If ChatGPT's crawler visits your homepage but never touches your product pages, that tells you something about your internal linking and content architecture. Platforms that surface crawler logs give you a diagnostic view that most teams don't have.
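If you have raw server logs, a first diagnostic view needs no special platform. This sketch assumes logs in the Apache/Nginx combined format and matches a few AI crawler user-agent tokens (an assumed, non-exhaustive list); the log lines are simplified and hypothetical. It counts hits per crawler and page, then lists key pages that no AI crawler fetched.

```python
import re
from collections import Counter

# Assumed, non-exhaustive list of AI crawler user-agent tokens.
AI_BOTS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot")

# Captures the request path and the user agent from a combined-format log line.
LOG_LINE = re.compile(r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"')


def ai_crawler_hits(log_lines: list[str]) -> Counter:
    """Count requests per (crawler token, path) for lines whose user agent contains an AI token."""
    hits: Counter = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        agent = match["agent"].lower()
        for bot in AI_BOTS:
            if bot.lower() in agent:
                hits[(bot, match["path"])] += 1
                break
    return hits


# Simplified, hypothetical log lines.
sample_log = [
    '203.0.113.5 - - [10/Mar/2026:12:00:01 +0000] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; GPTBot/1.1"',
    '203.0.113.5 - - [10/Mar/2026:12:00:09 +0000] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; GPTBot/1.1"',
    '198.51.100.7 - - [10/Mar/2026:12:05:00 +0000] "GET /blog/crm-guide HTTP/1.1" 200 8804 "-" "Mozilla/5.0; compatible; PerplexityBot/1.0"',
    '192.0.2.44 - - [10/Mar/2026:12:06:00 +0000] "GET /products/crm HTTP/1.1" 200 4410 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

hits = ai_crawler_hits(sample_log)
key_pages = ["/", "/products/crm", "/blog/crm-guide"]
crawled = {path for _bot, path in hits}
print(hits.most_common())
print("Never fetched by an AI crawler:", [p for p in key_pages if p not in crawled])
# second line prints: Never fetched by an AI crawler: ['/products/crm']
```

User-agent strings can be spoofed, so for anything beyond a rough picture, check hits against the IP ranges that some crawler operators publish.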

Where the tools are headed

The monitoring-only era is ending. The next wave of AI visibility platforms will be defined by how well they close the gap between insight and action.

A few directions worth watching:

Agentic content workflows. Rather than generating a brief and waiting for a human to write, the next generation of tools will draft, publish, and iterate content automatically based on visibility data. Some platforms are already moving in this direction.

Traffic attribution from AI. Right now, most teams can see that Perplexity sent them traffic, but can't connect it to specific prompts or revenue. Server-side tracking and GSC integrations are getting better at this, but it's still a gap for most organizations.
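The part most teams can already measure is the referring source. This sketch classifies sessions by referrer hostname and totals revenue per AI assistant; the hostname list is an assumption to check against your own analytics, and the session data is hypothetical. It also shows the limit described above: nothing in a referrer says which prompt produced the visit, and AI traffic that arrives without a referrer will not be counted at all.

```python
from collections import defaultdict
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames for AI assistants; confirm against your own analytics data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}


def ai_source(referrer: str) -> Optional[str]:
    """Return the AI assistant a referrer URL belongs to, or None if it is not a known AI source."""
    host = (urlparse(referrer).hostname or "").removeprefix("www.")
    return AI_REFERRERS.get(host)


def revenue_by_ai_source(sessions: list[tuple[str, float]]) -> dict[str, float]:
    """Sum session revenue per AI assistant; non-AI and empty referrers are skipped."""
    totals: dict[str, float] = defaultdict(float)
    for referrer, revenue in sessions:
        source = ai_source(referrer)
        if source is not None:
            totals[source] += revenue
    return dict(totals)


# Hypothetical sessions: (referrer URL, attributed revenue).
sessions = [
    ("https://www.perplexity.ai/", 120.0),
    ("https://chatgpt.com/", 0.0),
    ("https://www.google.com/", 80.0),
    ("", 40.0),
    ("https://perplexity.ai/search", 60.0),
]

print(revenue_by_ai_source(sessions))  # {'Perplexity': 180.0, 'ChatGPT': 0.0}
```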

Persona-level monitoring. The same prompt asked by a CFO and a marketing manager might get different AI responses. Platforms that let you monitor responses by persona, role, or geography will give teams a much more accurate picture of their actual visibility.

Shopping and product recommendations. ChatGPT's shopping features and product carousels are still early, but they're growing. Brands in e-commerce and retail need to start thinking about AI product visibility the same way they think about Google Shopping.

The honest summary

SEO in 2026 is harder than it was in 2020, but not because the fundamentals changed. It's harder because there are more surfaces to optimize for, more signals to track, and more content to produce. The teams that are winning aren't the ones who abandoned SEO for GEO -- they're the ones who extended their existing practice to cover AI search while keeping what still works.

The tools have gotten genuinely useful. A year ago, most AI visibility platforms were rough prototypes. Now there are mature platforms that can tell you exactly which prompts your competitors own, what content you're missing, and how to fix it.

The gap between brands that are visible in AI search and brands that aren't is going to widen quickly. The brands investing now -- in content depth, citation diversity, and proper visibility tracking -- are building a compounding advantage that competitors will find very hard to close 18 months from now.

That's not hype. It's just how search has always worked.