Key takeaways

- If AI models never cite your brand, you likely have content gaps, not a visibility problem -- the fix is creating content that directly answers the prompts your audience is asking.

- Monitoring rankings alone is not a GEO strategy. You need to track citations, prompt coverage, and AI traffic attribution to know if anything is actually working.

- Most GEO failures come down to three things: wrong content format, no competitor gap analysis, and treating AI search like traditional SEO.

- Tools that only show you data without helping you act on it will keep you stuck. The loop that matters is: find gaps, create content, track results.

- GEO is not a one-time project. Brands winning in AI search in 2026 are running it as an ongoing optimization cycle.

GEO (generative engine optimization) is one of those terms that's everywhere right now. Every agency has a GEO offering. Every marketing blog has a GEO guide. And yet, when you look at the actual results most brands are getting, the picture is pretty bleak.

A recent audit of 50 websites across healthcare, home services, and professional services found the same pattern over and over: businesses knew about GEO, some had even tried to implement it, but they were doing it wrong in exactly the same ways. And they had no idea their competitors were pulling ahead.

So here are 10 signs your GEO strategy isn't working -- and what to actually do about each one.

Sign 1: You have no idea which AI models are citing you

If you can't answer "which AI models mention my brand, and for which prompts?" then you don't have a GEO strategy -- you have a hope.

Most teams are still measuring success by Google rankings and organic traffic. Those metrics tell you nothing about whether ChatGPT recommends you when someone asks "what's the best [your category] tool?" or whether Perplexity cites your blog when someone researches your topic.

What to do: Set up proper AI visibility tracking. You need to know your citation rate across models like ChatGPT, Perplexity, Claude, Gemini, and Google AI Overviews. Tools like Promptwatch track citations across 10+ AI models and show you exactly which pages are being cited, how often, and by which engine.
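If you want a feel for what "citation rate" means before picking a tool, here is a minimal sketch. It assumes you already have a log of AI responses, one record per prompt per model, with the URLs each response cited. The record layout, the function names, and the `example.com` domain are all hypothetical and chosen for illustration; this is not how any particular tool computes the metric.

```python
from collections import defaultdict
from urllib.parse import urlparse


def cites_domain(url, domain):
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def citation_rates(records, domain):
    """Share of logged responses per model that cite at least one URL on `domain`.

    `records` is a hypothetical log format: dicts with the keys
    'model', 'prompt', and 'cited_urls'.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for record in records:
        totals[record["model"]] += 1
        if any(cites_domain(url, domain) for url in record["cited_urls"]):
            hits[record["model"]] += 1
    return {model: hits[model] / totals[model] for model in totals}


# Made-up sample data, for illustration only.
sample = [
    {"model": "chatgpt", "prompt": "best crm tool",
     "cited_urls": ["https://www.example.com/crm"]},
    {"model": "chatgpt", "prompt": "crm pricing",
     "cited_urls": ["https://other.com/pricing"]},
    {"model": "perplexity", "prompt": "best crm tool",
     "cited_urls": ["https://blog.example.com/crm", "https://other.com"]},
]
print(citation_rates(sample, "example.com"))
```

Matching on the parsed hostname rather than a substring keeps a domain like `notexample.com` from being counted as yours.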

Sign 2: Your competitors show up in AI answers and you don't

This one stings. You search for a prompt your ideal customer would ask, and your competitor's name comes up three times. Yours doesn't appear once.

This is the clearest signal that your GEO strategy has gaps -- specifically, content gaps. AI models can only cite content that exists. If your site doesn't have a page that directly answers a question, you simply won't appear in the response.

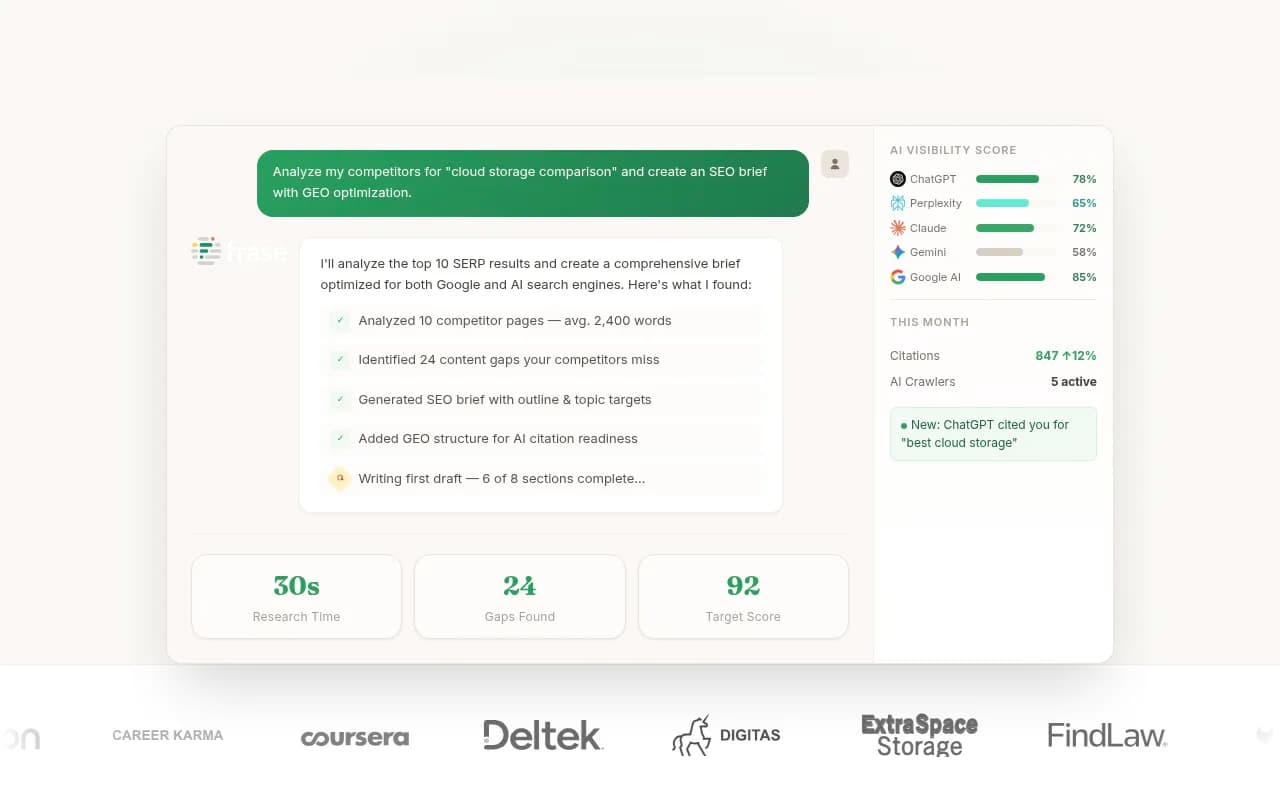

What to do: Run a proper answer gap analysis. This means identifying the prompts your competitors are visible for that you're not. It's not guesswork -- you can systematically map which questions AI models answer using competitor content, then create better versions of that content on your own site.
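At its core, an answer gap analysis is a set comparison. The sketch below assumes you have a mapping from each prompt to the set of brands that AI answers cited for it. The data shape and the brand names are hypothetical placeholders.

```python
def answer_gaps(visibility, brand, competitors):
    """Return prompts where at least one competitor is cited and `brand` is not.

    `visibility` is a hypothetical mapping: prompt -> set of brands cited.
    The result maps each gap prompt to the competitors that appear for it.
    """
    competitor_set = set(competitors)
    gaps = {}
    for prompt, cited in visibility.items():
        rivals = cited & competitor_set
        if rivals and brand not in cited:
            gaps[prompt] = sorted(rivals)
    return gaps


# Made-up sample data, for illustration only.
visibility = {
    "best invoicing tool": {"RivalA", "RivalB"},
    "how to automate invoices": {"YourBrand", "RivalA"},
    "invoice template ideas": set(),
}
print(answer_gaps(visibility, "YourBrand", ["RivalA", "RivalB"]))
```

Prompts where nobody is cited are left out on purpose: they may be worth targeting, but they are not gaps relative to a competitor.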

Sign 3: You're only optimizing for one AI model

ChatGPT gets most of the attention, and understandably so. But your customers use Perplexity, Google AI Overviews, Claude, Gemini, Grok, and Copilot too. If your entire GEO effort is pointed at one model, you're leaving most of the surface area uncovered.

Each model has different citation patterns. Perplexity tends to cite sources more explicitly. Google AI Overviews pull heavily from pages that already rank well. Claude has different tendencies around what it considers authoritative. A strategy that ignores these differences will have blind spots.

What to do: Track your visibility across multiple models, not just one. Look at where you're strong and where you're absent. Then prioritize the models your specific audience uses most. If you're B2B, Perplexity and Claude might matter more than you think.

Sign 4: You're publishing content but not getting cited

This is a frustrating one. You've been publishing blog posts, guides, and landing pages. You're doing "content marketing." But AI models still don't cite you.

The problem is usually format. AI models don't just reward content that exists -- they reward content that directly answers specific questions in a clear, structured way. A 3,000-word brand story won't get cited. A page that concisely answers "how does [your product] compare to [competitor]?" might.

What to do: Audit your existing content for citation-readiness. Ask: does this page directly answer a question someone would ask an AI? Is the answer clear within the first few paragraphs? Does it have structured data, headers, and a logical format? Rewrite or restructure pages that fail this test.
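For the structured data part of that audit, one common option is schema.org `FAQPage` markup embedded as JSON-LD. The helper below is a minimal sketch that builds that markup from question-and-answer pairs. The function name and the sample text are hypothetical, and whether a given AI model actually uses this markup is an assumption, not something established here.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder question and answer, for illustration only.
markup = faq_jsonld([
    ("How does [your product] compare to [competitor]?",
     "A short, direct answer that also appears in the visible page text."),
])
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The markup should mirror what is already visible on the page; it describes the answer, it does not replace it.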

Sign 5: You're not tracking AI-driven traffic separately

Here's a question: how much of your website traffic came from AI search last month? If you don't know, you can't measure whether your GEO work is paying off.

AI-driven traffic behaves differently from organic search traffic. It often arrives with higher intent. Users who click through from a Perplexity citation or a ChatGPT recommendation have already been pre-qualified by the AI's answer. If you're not tracking this separately, you're flying blind on ROI.

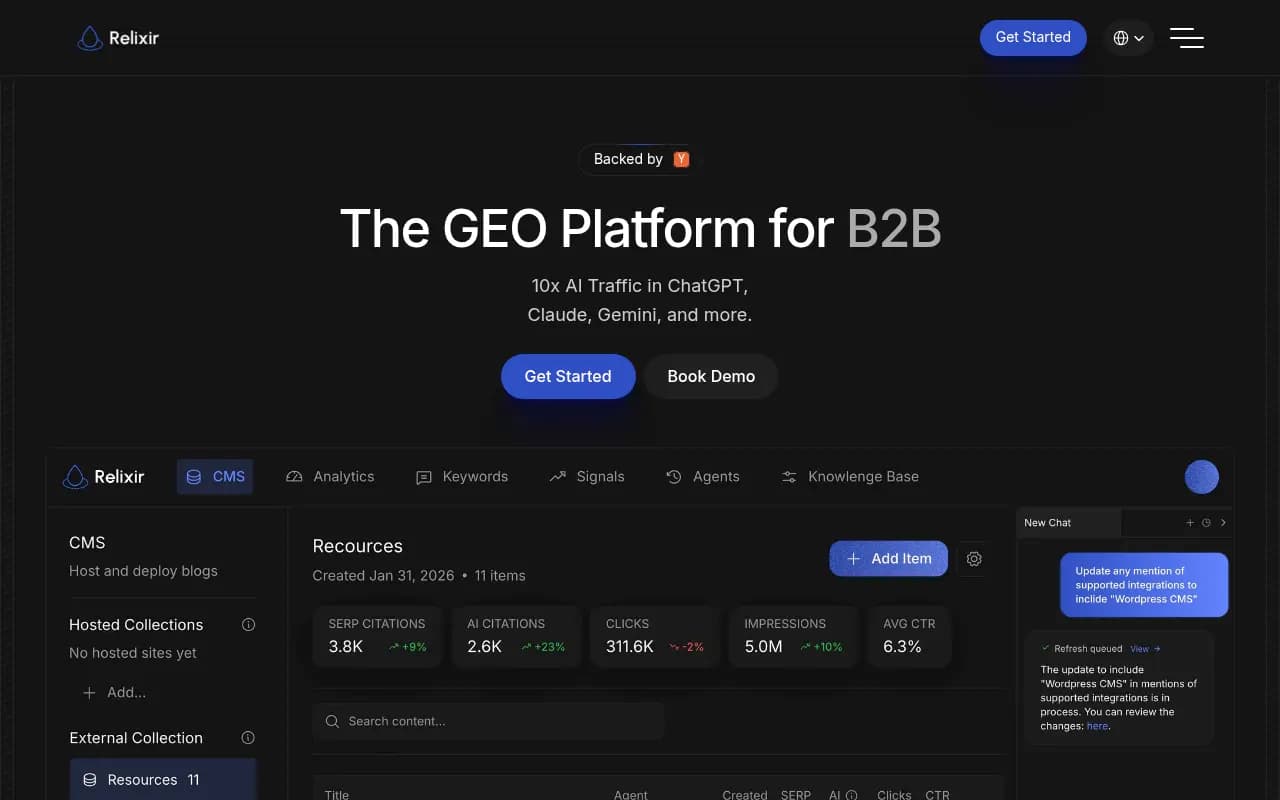

What to do: Implement AI traffic attribution. This can be done via a JavaScript snippet, Google Search Console integration, or server log analysis. The goal is to connect AI citations to actual site visits and conversions, so you can close the loop between visibility and revenue.
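As a rough illustration of the server log route, the sketch below classifies referrer URLs by hostname. The hostname list is an assumption made for this example: it is incomplete, it may go out of date, and AI tools do not always send a referrer at all, so treat the counts as a lower bound.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames, for illustration; verify against your own logs.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer):
    """Return the AI source name for a referrer URL, or None if not recognized."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)


def ai_visits_by_source(referrers):
    """Count visits per AI source, ignoring referrers that are not recognized."""
    sources = (classify_referrer(r) for r in referrers)
    return Counter(source for source in sources if source)


# Made-up referrer values, for illustration only.
log_referrers = [
    "https://www.perplexity.ai/search?q=best+crm",
    "https://chatgpt.com/",
    "https://www.google.com/",
    "",
]
print(ai_visits_by_source(log_referrers))
```

Joining these classified visits to your conversion events is what turns a citation count into a revenue number.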

Sign 6: Your GEO "strategy" is just monitoring

A lot of teams have set up dashboards that show them AI visibility data. They can see their citation rate. They can see which prompts they appear for. And then... they look at it. That's it.

Monitoring is necessary, but it's not a strategy. The data is only valuable if it drives action. Most GEO tools on the market are monitoring-only -- they show you the problem but leave you to figure out the fix yourself.

What to do: Build an action loop. When you see a prompt gap, create content to fill it. When you see a competitor gaining citations, analyze what they published and why it's working. The cycle should be: find gaps, create content, track results. If your current setup doesn't support all three steps, you're missing the point.

Sign 7: You're ignoring Reddit and YouTube as citation sources

This one surprises a lot of people. When AI models generate answers, they don't only pull from brand websites and news articles. They cite Reddit threads, YouTube videos, forum discussions, and community posts -- sometimes heavily.

If your brand has no presence in these channels, or if the Reddit discussions about your category don't mention you favorably, you're missing a major citation source. Some AI models weight community content quite highly because it signals real-world usage and trust.

What to do: Map which Reddit threads and YouTube videos AI models are currently citing in your category. Then figure out where your brand fits into those conversations. This might mean engaging in relevant subreddits, creating YouTube content that answers common questions, or building a presence in the communities your customers actually use.
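A simple first pass at that mapping is to measure how much of the citation pool in your category comes from community sites. This sketch assumes you have collected the URLs cited in AI answers for your category prompts; the function name and the two-domain default are hypothetical choices for illustration.

```python
from collections import Counter
from urllib.parse import urlparse

COMMUNITY_DOMAINS = ("reddit.com", "youtube.com")


def community_citation_share(cited_urls, domains=COMMUNITY_DOMAINS):
    """Fraction of cited URLs hosted on each community domain or its subdomains."""
    counts = Counter()
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        for domain in domains:
            if host == domain or host.endswith("." + domain):
                counts[domain] += 1
    total = len(cited_urls)
    if total == 0:
        return {}
    return {domain: counts[domain] / total for domain in domains}


# Made-up citations, for illustration only.
cited = [
    "https://www.reddit.com/r/smallbusiness/comments/abc",
    "https://old.reddit.com/r/accounting/comments/def",
    "https://youtube.com/watch?v=123",
    "https://example.com/guide",
]
print(community_citation_share(cited))
```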

Sign 8: You're not using prompt volume data to prioritize

Not all prompts are equal. Some questions get asked thousands of times a month. Others are niche edge cases. If you're creating GEO content without knowing the volume and difficulty of the prompts you're targeting, you're probably spending time on low-value work.

This is one of the biggest efficiency gaps in most GEO strategies. Teams write content based on gut feel or keyword research tools that weren't built for AI search. The result is a lot of content that targets prompts nobody asks.

What to do: Use prompt intelligence data to prioritize. Look for prompts with meaningful volume, reasonable difficulty, and clear commercial intent. Focus your content creation on the prompts where winning would actually move the needle.

| Prompt type | Typical volume | Citation difficulty | Priority |
|---|---|---|---|
| "Best [category] tool" | High | High | Worth fighting for |
| "How does [your product] work" | Medium | Low | Easy win |
| "[Your brand] vs [competitor]" | Medium | Low | Easy win |
| "What is [niche concept]" | Low | Low | Low priority |
| "[Your brand] reviews" | Medium | Medium | Important for trust |
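To make that kind of prioritization repeatable, you can turn the volume and difficulty labels into a rough score. The weighting below (volume counted twice, difficulty subtracted) is a hypothetical heuristic picked for this sketch, not an established formula, and it ignores commercial intent entirely.

```python
LEVEL = {"Low": 1, "Medium": 2, "High": 3}


def priority_score(volume, difficulty, volume_weight=2):
    """Hypothetical heuristic: reward prompt volume, penalize citation difficulty."""
    return volume_weight * LEVEL[volume] - LEVEL[difficulty]


# (prompt type, typical volume, citation difficulty), using the example labels above.
PROMPTS = [
    ("Best [category] tool", "High", "High"),
    ("How does [your product] work", "Medium", "Low"),
    ("[Your brand] vs [competitor]", "Medium", "Low"),
    ("What is [niche concept]", "Low", "Low"),
    ("[Your brand] reviews", "Medium", "Medium"),
]

# Highest score first.
ranked = sorted(PROMPTS, key=lambda row: priority_score(row[1], row[2]), reverse=True)
for prompt, volume, difficulty in ranked:
    print(priority_score(volume, difficulty), prompt)
```

With real prompt data you would replace the three labels with measured volume and difficulty, and add a term for commercial intent.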

Sign 9: Your technical setup is blocking AI crawlers

This one is invisible until you look for it. AI models can only cite content they can access. If your site has crawl errors, blocked pages, slow load times, or content behind login walls, AI crawlers may simply not be reading your best pages.

Most brands have no idea which pages AI crawlers are visiting, how often they return, or what errors they're encountering. This is a fundamental blind spot.

What to do: Check your AI crawler logs. You want to see which pages ChatGPT, Claude, Perplexity, and other AI crawlers are hitting, which ones they're skipping, and what errors they're running into. Fix crawl errors, ensure your robots.txt isn't accidentally blocking AI bots, and make sure your most important content is fully accessible.
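The robots.txt part of that check is easy to script with Python's standard `urllib.robotparser`. The sketch below reports which crawler user agents are disallowed from a given URL. The bot names in `AI_BOTS` are assumptions for illustration; confirm the current user-agent tokens in each vendor's documentation before relying on them.

```python
from urllib.robotparser import RobotFileParser

# Assumed crawler user-agent tokens; confirm against each vendor's documentation.
AI_BOTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def blocked_ai_bots(robots_txt, url, bots=AI_BOTS):
    """Return the bots that the given robots.txt text disallows from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in bots if not parser.can_fetch(bot, url)]


# Made-up robots.txt, for illustration only.
robots_txt = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_ai_bots(robots_txt, "https://example.com/pricing"))
```

This only tells you what robots.txt permits. Firewall rules, rate limits, and login walls can still block a crawler that robots.txt allows, which is why the log review matters too.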

Sign 10: You're treating GEO as a one-time project

The most common mistake of all. A team runs a GEO audit, creates some content, and then moves on. Three months later, they check their AI visibility and wonder why nothing changed -- or why it improved briefly and then slipped back.

GEO is not a one-time fix. AI models update their responses constantly. Competitors publish new content. Prompt trends shift. What worked in January might not work in June. Brands that win in AI search treat it as an ongoing optimization cycle, not a project with a completion date.

What to do: Build GEO into your regular marketing rhythm. Set a monthly cadence: review your citation data, identify new prompt gaps, publish content to fill them, and track results. Over time, this compounds. Each piece of content you create either gets cited or teaches you something about what AI models want.

Putting it all together

Most GEO failures aren't caused by bad content or weak brands. They're caused by treating GEO like traditional SEO -- optimizing for rankings, publishing content without a citation strategy, and measuring the wrong things.

The brands winning in AI search right now have figured out a simple loop: find the prompts they're missing, create content that directly answers those prompts, and track whether AI models start citing them. Then repeat.

Here's a quick summary of the 10 signs and their fixes:

| Sign | Root cause | Fix |
|---|---|---|
| No idea which models cite you | No tracking | Set up multi-model citation monitoring |
| Competitors appear, you don't | Content gaps | Run answer gap analysis |
| Optimizing for one AI model | Narrow focus | Track visibility across 10+ models |
| Publishing but not getting cited | Wrong content format | Restructure for direct question-answering |
| Not tracking AI traffic | No attribution | Implement AI traffic attribution |
| GEO = just monitoring | No action loop | Build find-gaps, create, track cycle |
| Ignoring Reddit and YouTube | Channel blindspot | Map and engage community citation sources |
| No prompt volume data | Guesswork prioritization | Use prompt intelligence to prioritize |
| AI crawlers blocked | Technical issues | Audit AI crawler logs and fix errors |
| GEO treated as one-time | Wrong mental model | Build GEO into monthly marketing rhythm |

If several of these signs sound familiar, the good news is that each one has a concrete fix. Start with whichever gap is costing you the most visibility right now -- usually that's Sign 2 (competitors showing up instead of you) or Sign 6 (monitoring without acting). Fix those first, then work through the rest systematically.