Key takeaways

- Most AI visibility tools launched in the last 18 months are still in beta -- they look polished but break under real workloads

- A production-ready platform needs to pass five core tests: data capture method, model coverage, action capability, traffic attribution, and support quality

- The market splits into three tiers: monitoring-only dashboards, intelligence platforms, and full execution tools -- and each tier has a different readiness bar

- Before signing any contract, ask vendors for documentation of how results are captured and whether outputs match what users actually see in AI interfaces

- Tools that only monitor (and there are a lot of them) are not production-ready for teams that need to actually improve their AI visibility

The AI brand visibility tool market exploded in 2024 and 2025. According to one comparison published in early 2026, there are now 27+ platforms competing for your budget -- and that number is almost certainly an undercount. New tools launch every week.

The problem is that "launched" and "production-ready" are very different things. A tool can have a beautiful dashboard, a well-written landing page, and a $99/month price tag, and still be running on scraped data that doesn't reflect what real users see in ChatGPT. It can claim to track 10 AI models while actually only querying two of them reliably. It can promise content optimization while delivering a generic keyword list.

This guide is a readiness test. Run any new platform through these checks before you commit budget, time, or your team's trust.

Why "production-ready" matters more in this category than most

For traditional SEO tools, a half-baked product is annoying. You get stale rank data or a broken export. You switch tools and move on.

For AI visibility tools, the stakes are different. You're making strategic decisions -- what content to create, which prompts to target, how to brief your team -- based on data about a channel that's already hard to observe. If the underlying data is unreliable, you're not just wasting money. You're building strategy on noise.

There's also a trust problem specific to this market. Because AI search is new and most buyers don't have a baseline to compare against, vendors can get away with a lot. A tool can show you a "visibility score" that sounds authoritative but is calculated in ways that have nothing to do with what ChatGPT actually says about your brand. You have no way to know unless you ask the right questions.

The market has already sorted itself into three tiers: monitoring-only dashboards, intelligence platforms that explain the "why," and execution platforms that help you fix the problem. Each tier has a different readiness bar. A monitoring-only tool can be production-ready with accurate data and good uptime. An execution platform needs all of that plus reliable content generation, attribution, and optimization loops.

The five-part readiness test

Test 1: How are results actually captured?

This is the most important question you can ask, and most buyers never ask it.

There are two fundamentally different ways to capture AI responses:

Live querying: The tool sends your prompts to the actual AI model (ChatGPT, Perplexity, Claude, etc.) in real time or on a scheduled basis and records the response. What you see in the dashboard is what the model actually said.

Scraped or simulated data: The tool approximates responses using web scraping, cached data, or proxy methods. What you see may not match what a real user would see.

Live querying is more expensive (API costs are real) and slower, but it's the only method that produces trustworthy data. Scraped approaches can look similar on the surface but diverge significantly when AI models are updated or change how they weight sources.

Ask vendors directly: "Can you show me documentation of how results are captured? Does the output in your dashboard match what I'd see if I queried ChatGPT myself right now?" If they can't answer that clearly, treat it as a red flag.
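You can also run a rough spot check yourself. The sketch below assumes you have copied two texts for the same prompt: the response recorded in the vendor's dashboard and the response you captured by hand. It compares which brands each one names. The function names and the sample brands are hypothetical, and AI responses vary from run to run, so treat one mismatch as a prompt to repeat the check, not as proof.

```python
import re

def mentioned_brands(response_text, brands):
    """Return the set of brands named in a response (case-insensitive, whole-word match)."""
    found = set()
    for brand in brands:
        pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
        if re.search(pattern, response_text, flags=re.IGNORECASE):
            found.add(brand)
    return found

def compare_capture(dashboard_text, manual_text, brands):
    """Compare brand mentions in the vendor's recorded response vs. your own capture."""
    in_dashboard = mentioned_brands(dashboard_text, brands)
    in_manual = mentioned_brands(manual_text, brands)
    return {
        "only_in_dashboard": sorted(in_dashboard - in_manual),
        "only_in_manual": sorted(in_manual - in_dashboard),
        "in_both": sorted(in_dashboard & in_manual),
    }

# Hypothetical sample texts and brand names, for illustration only.
report = compare_capture(
    "Top picks include Acme and Globex.",
    "Popular options are Globex and Initech.",
    ["Acme", "Globex", "Initech"],
)
print(report)
```

If the "only in" lists stay non-empty across several repeats of the same prompt, that is worth raising with the vendor.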

A few tools worth examining here:

Promptwatch queries live AI models and logs the actual responses, which is why its citation database has grown to over 1.1 billion data points. That scale only comes from real queries over time.

Otterly.AI is one of the more affordable options in the monitoring tier and uses live querying, which is part of why it's held up as a credible baseline even in independent reviews.

Test 2: Which models do they actually track -- and how well?

"We track 10 AI models" sounds impressive. The follow-up question is: how often, with what prompt volume, and with what consistency?

Some tools query ChatGPT daily but only check Perplexity weekly. Some track Google AI Overviews but not Google AI Mode (which behaves differently). Some claim to track Claude but are actually querying an older model version through the API, which doesn't reflect current Claude behavior.

The models that matter most in 2026 are ChatGPT, Perplexity, Google AI Overviews, Google AI Mode, Claude, and Gemini. Grok, DeepSeek, Copilot, and Meta AI matter for specific audiences. Any tool claiming comprehensive coverage should be able to show you query frequency per model, not just a logo grid on their website.

| Model | Why it matters | Questions to ask |
|---|---|---|
| ChatGPT | Largest AI referral traffic share (87.4% of AI referrals per Conductor 2026) | Query frequency? Which GPT version? |
| Perplexity | High citation density, growing fast | Real-time or cached? |
| Google AI Overviews | Appears in ~47% of searches | Separate from AI Mode tracking? |
| Google AI Mode | Different behavior from Overviews | Tracked separately? |
| Claude | Growing enterprise adoption | Which Claude version? |
| Gemini | Google's native model | Integrated with AI Overviews data? |
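If a vendor lets you export a query log, you can check the cadence per model yourself instead of relying on the logo grid. This is a minimal sketch that assumes the export can be reduced to (model, ISO timestamp) pairs. That row format and the function name are assumptions, not any vendor's actual export schema.

```python
from collections import defaultdict
from datetime import datetime
from statistics import median

def query_cadence(log_rows):
    """Summarise per-model query count and median gap in hours between consecutive queries."""
    by_model = defaultdict(list)
    for model, timestamp in log_rows:
        by_model[model].append(datetime.fromisoformat(timestamp))

    summary = {}
    for model, times in by_model.items():
        times.sort()
        gaps = [(b - a).total_seconds() / 3600 for a, b in zip(times, times[1:])]
        summary[model] = {
            "queries": len(times),
            # None when there is only one query, so no gap can be measured.
            "median_gap_hours": median(gaps) if gaps else None,
        }
    return summary
```

A model queried daily should show a median gap near 24 hours. A gap near 168 hours means that model is only being checked weekly, whatever the marketing page says.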

Tools like Profound and AthenaHQ cover multiple models with reasonable depth, though both lean toward monitoring rather than optimization.

Test 3: Does it help you do something, or just show you data?

This is where most tools fail the production-readiness test for teams that actually need to improve their AI visibility.

Monitoring is table stakes. Knowing that your brand appears in 23% of relevant ChatGPT responses is useful for one meeting. What your team needs after that meeting is: which prompts should we target? What content is missing? What do we write, and how do we write it so AI models will cite it?

A production-ready platform for optimization (not just monitoring) needs to answer those questions. The checklist:

- Does it show you which prompts competitors rank for that you don't? (Answer Gap Analysis)

- Does it tell you what content is missing from your site -- not just that something is missing, but what specifically?

- Does it generate content, or just brief it?

- Is the generated content grounded in real citation data, or is it generic AI output?

- Can you track whether new content improved your visibility scores?

Most tools stop at the first bullet. A few reach the second. Very few close the full loop.
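The first bullet is simple enough to sketch, which is part of why so many tools stop there. The example below shows only the basic idea of an answer gap: prompts where at least one competitor is mentioned and you are not. It is not any vendor's actual method, and the data shape (a mapping from prompt to the set of brands mentioned) and brand names are hypothetical.

```python
def answer_gaps(mentions_by_prompt, own_brand):
    """Return {prompt: [competitors]} for prompts that mention a competitor but not own_brand."""
    gaps = {}
    for prompt, brands in mentions_by_prompt.items():
        if own_brand in brands:
            continue  # You already appear for this prompt, so it is not a gap.
        competitors = sorted(b for b in brands if b != own_brand)
        if competitors:  # Skip prompts where no brand is mentioned at all.
            gaps[prompt] = competitors
    return gaps

# Hypothetical prompts and brands, for illustration only.
mentions = {
    "best crm for startups": {"Acme", "Globex"},
    "crm with email automation": {"Globex", "Initech"},
    "what is a crm": set(),
}
print(answer_gaps(mentions, "Acme"))
```

The harder bullets (what content is missing, and whether new content changed anything) need page-level and citation data that a set difference cannot give you.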

Promptwatch's approach here is worth understanding: its Answer Gap Analysis shows the specific prompts where competitors appear and you don't, then its built-in writing agent generates articles grounded in citation data from 880M+ analyzed citations. The loop closes when page-level tracking shows whether the new content started getting cited. That's a different category of tool than a monitoring dashboard.

Peec AI offers smart suggestions and is often cited as a step above pure monitoring, though it doesn't generate content.

Scrunch AI is another platform that moves toward intelligence, with good model coverage and some optimization guidance.

Test 4: Can it connect AI visibility to actual revenue?

This is the test that separates tools built for demos from tools built for production use.

Showing a CMO that your brand appears in 40% of relevant AI responses is a good story. Showing them that AI-referred visitors converted at 4x the rate of organic traffic and drove $180K in pipeline last quarter is a business case.

Production-ready platforms need at least one of these attribution methods:

- JavaScript snippet that captures AI referral traffic in your analytics

- Google Search Console integration that surfaces AI-driven clicks

- Server log analysis that identifies AI crawler and referral patterns

- Direct integration with your CRM or revenue data

Ask vendors: "How do I connect what I see in your dashboard to traffic and revenue in my analytics?" If the answer is "you can export a CSV," that's not attribution -- that's homework.
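To see what referral-based attribution involves at its simplest, here is a sketch that labels sessions by referrer host and counts conversions per source. The host list is an assumption you should verify against your own analytics, since these domains change over time, and the function names and session format are hypothetical. Real attribution also has to cope with visits that arrive without any referrer, which this does not attempt.

```python
from urllib.parse import urlparse

# Assumed referrer hosts; check them against what your own analytics actually records.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}

def classify_referrer(referrer_url):
    """Return the AI source name for a full referrer URL, or None if the host is not listed."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)

def conversion_by_source(sessions):
    """sessions: iterable of (referrer_url, converted). Returns per-source session and conversion counts."""
    stats = {}
    for referrer_url, converted in sessions:
        source = classify_referrer(referrer_url) or "other"
        entry = stats.setdefault(source, {"sessions": 0, "conversions": 0})
        entry["sessions"] += 1
        entry["conversions"] += int(bool(converted))
    return stats
```

Dividing conversions by sessions for each source gives the conversion-rate comparison a CMO will actually ask about.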

AI crawler logs are a related capability that most tools skip entirely. Knowing which AI crawlers are hitting your site, which pages they read, and how often they return is foundational data for understanding whether your content is even being read by AI systems. Without it, you're optimizing blind.

DarkVisitors is worth knowing about here -- it specifically tracks AI agents and bots visiting your site, which complements visibility tracking nicely.
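If you have raw server logs, a first pass at crawler tracking can be done by hand. The sketch below assumes access logs in the common "combined" format, where the user agent is the last quoted field, and counts hits per crawler and path. The token list is an assumption and will go stale, so check each AI vendor's published crawler documentation. User agents can also be spoofed, which this simple match does not detect.

```python
import re
from collections import Counter

# Assumed user-agent tokens; verify against each vendor's current crawler documentation.
AI_CRAWLER_TOKENS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot"]

# Captures the request path and the final quoted field (the user agent) of a combined-format line.
LOG_PATTERN = re.compile(r'"(?:GET|POST|HEAD) (?P<path>\S+)[^"]*".*"(?P<agent>[^"]*)"\s*$')

def crawler_hits(log_lines):
    """Count hits per (crawler token, path) for lines whose user agent contains a known token."""
    hits = Counter()
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue  # Skip lines that are not in the expected format.
        agent = match.group("agent").lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in agent:
                hits[(token, match.group("path"))] += 1
                break
    return hits
```

Sorting the result by count shows which pages AI crawlers return to most, and which pages they never touch.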

Test 5: What does support actually look like?

New tools in fast-moving markets have a support problem: the product changes faster than the documentation, and the team is usually small. This isn't a dealbreaker, but it shapes what "production-ready" means for your use case.

Questions worth asking before you sign:

- Is there a dedicated onboarding process, or do you get a help center link?

- What's the SLA for data freshness? If ChatGPT changes how it responds to a prompt category, how quickly does the platform update?

- Is there a changelog? Can you see what changed and when?

- What happens when a model updates its behavior and your historical data becomes inconsistent?

For agencies managing multiple client accounts, this matters even more. A tool that works fine for one brand can become a support nightmare when you're running 20 accounts and need consistent data across all of them.

The three-tier framework and what readiness means at each level

The Ekamoira comparison (27 platforms, February 2026) organized the market into three capability levels. It's a useful frame for calibrating your readiness expectations.

Level 1: Monitoring (do I show up?)

These tools answer one question: is my brand mentioned in AI responses to relevant prompts? They're the easiest to build and the most common. Production readiness here means: accurate live data, reliable uptime, and enough model coverage to be meaningful.

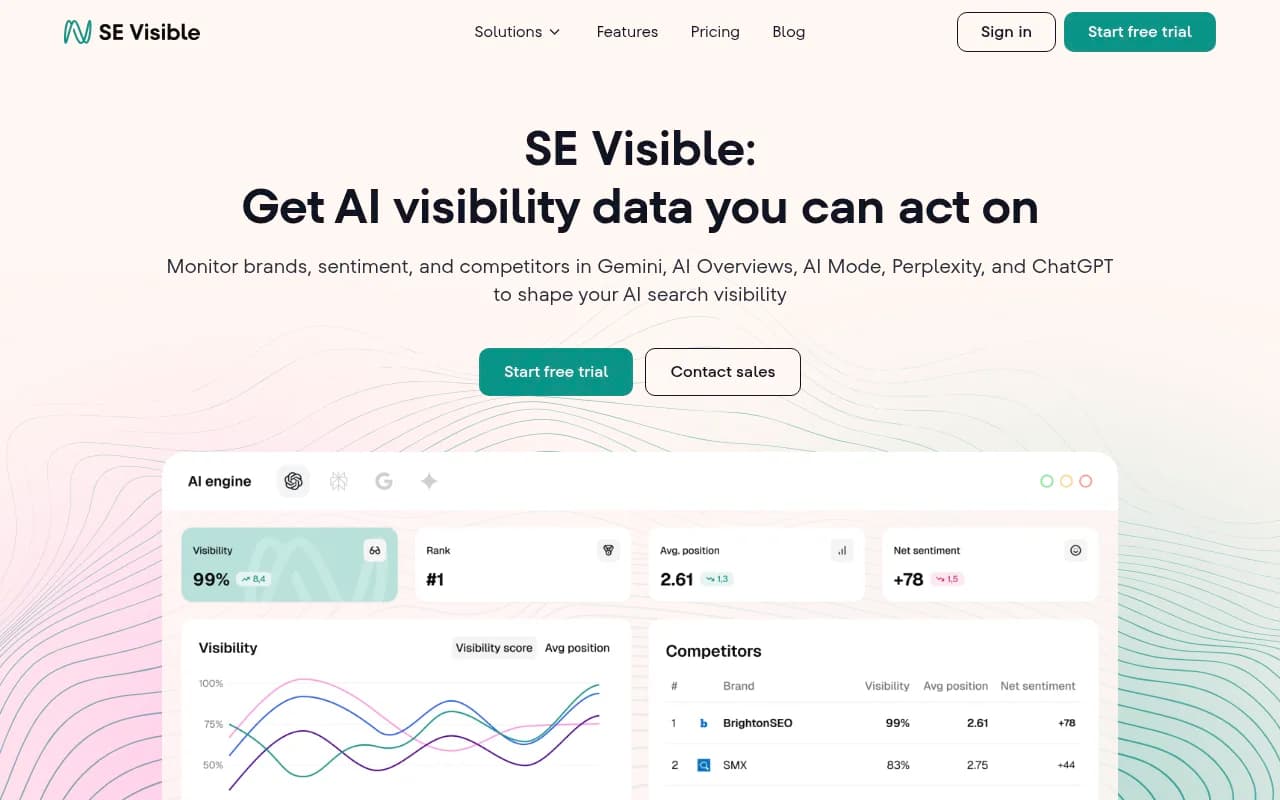

Good options in this tier include Otterly.AI, Peec AI, and SE Visible.

If you're just starting out and need a baseline, monitoring tools are fine. But go in knowing they won't tell you what to do next.

Level 2: Intelligence (why am I or am I not showing up?)

Intelligence platforms add context: competitor comparisons, prompt difficulty scores, citation source analysis, and some form of gap identification. Production readiness here requires accurate data plus reliable competitive benchmarking and prompt-level analytics.

Profound sits here, as does AthenaHQ. Both have strong feature sets but lean toward showing you the problem rather than helping you solve it.

Level 3: Execution (how do I fix it?)

Execution platforms close the loop: they identify gaps, generate content to fill them, and track whether the content improved visibility. This is the hardest tier to build and the smallest category.

Production readiness here is the highest bar: accurate data, reliable content generation grounded in real citation data, attribution, and a feedback loop that actually works.

Promptwatch is the clearest example of a platform built around this loop. The combination of Answer Gap Analysis, AI writing agent, page-level tracking, and traffic attribution is designed specifically so teams don't get stuck after the monitoring step.

Red flags to watch for when evaluating new platforms

A few specific patterns that suggest a tool isn't production-ready:

Vague methodology documentation. If a vendor can't explain in plain language how they query AI models, how often, and what they do with the responses, the data quality is probably inconsistent.

No historical data. A tool that launched six months ago and only shows current snapshots can't tell you whether your visibility is improving or declining. Trend data requires history.

Fixed prompt sets. Some tools (including some well-known ones) only track a predetermined list of prompts. If your brand's relevant queries aren't in their set, you're invisible to the tool even if you're visible to AI. Always ask whether you can add custom prompts.

No model-level breakdown. A single "visibility score" that aggregates across all AI models hides more than it reveals. You need to know which models cite you and which don't, because the fix is different in each case.
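A small worked example shows how much a single score can hide. This sketch assumes you have per-response results as (model, mentioned) pairs. The function name and the sample numbers are hypothetical.

```python
from collections import defaultdict

def visibility_by_model(results):
    """results: iterable of (model, mentioned). Returns (overall_rate, {model: rate})."""
    totals = defaultdict(int)
    mentions = defaultdict(int)
    for model, mentioned in results:
        totals[model] += 1
        mentions[model] += int(bool(mentioned))
    per_model = {model: mentions[model] / totals[model] for model in totals}
    overall = sum(mentions.values()) / sum(totals.values()) if totals else 0.0
    return overall, per_model

# Hypothetical sample: mentioned in 8 of 10 ChatGPT responses and 0 of 10 Claude responses.
sample = [("ChatGPT", True)] * 8 + [("ChatGPT", False)] * 2 + [("Claude", False)] * 10
overall, per_model = visibility_by_model(sample)
print(overall, per_model)
```

The aggregate here comes out at 40%, which looks like a middling result. The breakdown shows strong visibility in one model and none at all in the other, which are two very different problems.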

Pricing that doesn't scale with usage. AI queries cost money. If a tool offers unlimited prompts at a very low price, either the data quality is poor or the business model is unsustainable. Both are problems for production use.
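A back-of-envelope calculation makes the point concrete. The sketch below multiplies prompts, models, and daily runs into a monthly query volume, then applies a per-query cost. The cost figure in the example is a pure placeholder, not a quoted price from any provider, so substitute whatever you believe the vendor actually pays.

```python
def monthly_query_volume(prompts, models, runs_per_day, days=30):
    """Number of live queries needed to run every prompt against every model for a month."""
    return prompts * models * runs_per_day * days

def monthly_query_cost(prompts, models, runs_per_day, cost_per_query, days=30):
    """Estimated monthly spend on live queries at an assumed per-query cost."""
    return monthly_query_volume(prompts, models, runs_per_day, days) * cost_per_query

# Hypothetical plan: 200 prompts, 6 models, one run per day, placeholder cost of $0.01 per query.
volume = monthly_query_volume(200, 6, 1)
cost = monthly_query_cost(200, 6, 1, 0.01)
print(volume, round(cost, 2))
```

Under those placeholder numbers, the plan needs 36,000 live queries a month. If the subscription price sits well below any plausible cost for that volume, ask the vendor how the gap is covered.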

A practical evaluation checklist

Before committing to any AI visibility platform, run through this list:

- Ask for documentation of how results are captured (live query vs. scraped)

- Test the tool yourself: run a prompt you know the answer to and compare the tool's output to what you actually see in ChatGPT or Perplexity

- Confirm which models are tracked and at what query frequency

- Ask whether you can add custom prompts (not just use their preset list)

- Check whether there's a content gap or answer gap feature -- not just monitoring

- Ask how you connect visibility data to traffic and revenue

- Look for a changelog or release history to gauge development pace

- Check independent reviews (r/GEO_optimization, r/SaaS, agency blogs) for real user feedback

- Ask about data retention -- how far back does historical data go?

- Confirm SLA for data freshness and what happens when AI models update

The bottom line

The AI visibility tool market in 2026 is full of products that look production-ready and aren't. The monitoring tier is crowded with tools that will show you a dashboard but leave you without a path forward. The execution tier is thin but growing.

The readiness test isn't complicated: ask how the data is captured, verify that model coverage is real and not just marketed, check whether the tool helps you act on what it shows you, confirm you can connect visibility to revenue, and find out what support actually looks like once you've signed. Most tools will fail at least one of these. The ones that pass all five are worth your budget.

If you're evaluating platforms right now, the combination of live query methodology, genuine answer gap analysis, content generation grounded in citation data, and traffic attribution is what separates a production tool from a beta experiment dressed up in a nice UI. That combination is rare -- but it exists, and it's worth holding out for.