Key takeaways

- AI brand visibility tracking is a real, measurable discipline in 2026, but the category is still maturing fast -- most tools launched in the last 18 months.

- The core metrics (mention rate, share of voice, citation rate) are now reliable enough to act on, but traffic attribution from AI search remains genuinely hard.

- Most tools stop at monitoring. A smaller set -- including platforms like Promptwatch -- closes the loop by helping you create content that actually gets cited.

- Prompt coverage is the hidden variable: a tool tracking 50 prompts gives you a very different picture than one tracking 500.

- The right tool depends on your use case: agency reporting, enterprise brand tracking, solo SEO, or full-cycle optimization each have different requirements.

Two years ago, "AI brand visibility" wasn't a real job function. Now there are over 100 tools claiming to track it, a growing stack of acronyms (GEO, AEO, LLM SEO), and marketing teams being asked to report on their "AI share of voice" in quarterly reviews.

That's a lot of progress. It's also a lot of noise.

This guide is an honest assessment of where AI brand visibility tracking stands in 2026 -- what the tools can reliably measure, where the data is still shaky, and what you should do with all of it.

What "AI brand visibility" actually means

Before evaluating tools, it helps to be precise about what we're measuring.

When someone asks ChatGPT "what's the best project management tool for remote teams?" and the model responds with a list, your brand either appears in that response or it doesn't. That's the core unit of AI brand visibility: mention rate -- how often your brand shows up in AI-generated answers to relevant prompts.

From that foundation, tools build out several derived metrics:

- Share of voice: your mention rate relative to competitors across a prompt set

- Sentiment: whether mentions are positive, neutral, or negative

- Citation rate: whether the model links to or cites your specific pages

- Position: where in the response your brand appears (first mention vs. buried in a list)

- Model coverage: which AI engines mention you (ChatGPT vs. Perplexity vs. Gemini vs. others)
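
To make these definitions concrete, here is a minimal sketch of how mention rate, share of voice, position, and model coverage could be computed from recorded responses. The `Response` schema, the brand names, and the substring matching are all illustrative assumptions, not how any particular tool works. Share of voice in particular is defined differently from platform to platform. Sentiment and citation rate are left out because they need more than string matching.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Response:
    """One recorded AI answer to one tracked prompt (hypothetical schema)."""
    prompt: str
    model: str
    text: str


def mentions(response: Response, brand: str) -> bool:
    # Naive case-insensitive substring match. A real tracker would also
    # handle aliases, misspellings, and brand names that are common words.
    return brand.lower() in response.text.lower()


def mention_rate(responses, brand: str) -> float:
    """Fraction of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    return sum(mentions(r, brand) for r in responses) / len(responses)


def share_of_voice(responses, brands) -> dict:
    """Each brand's mentions as a share of all tracked-brand mentions.

    This is one possible definition (an assumption).
    """
    counts = {b: sum(mentions(r, b) for r in responses) for b in brands}
    total = sum(counts.values())
    return {b: (n / total if total else 0.0) for b, n in counts.items()}


def model_coverage(responses, brand: str) -> dict:
    """Mention rate for the brand, split by AI model."""
    by_model = {}
    for r in responses:
        by_model.setdefault(r.model, []).append(r)
    return {m: mention_rate(rs, brand) for m, rs in by_model.items()}


def first_brand(response: Response, brands) -> Optional[str]:
    """The tracked brand that appears earliest in the response, if any."""
    text = response.text.lower()
    found = [(text.find(b.lower()), b) for b in brands if b.lower() in text]
    return min(found)[1] if found else None
```

In this sketch, a brand mentioned in 2 of 4 recorded responses has a mention rate of 0.5, whichever models produced them. `model_coverage` breaks that same number down per engine.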

These metrics are now reasonably well-defined. The harder question is whether any given tool is measuring them accurately -- and that depends heavily on methodology.

What the tools can reliably tell you

Mention rate and share of voice

This is the most mature part of the category. Tools query AI models with a set of prompts, record whether your brand appears, and aggregate that into a visibility score. Done at scale, this is genuinely useful data.

The catch is prompt selection. A tool tracking 50 prompts gives you a narrow, potentially misleading picture. A tool tracking 500 prompts across multiple intent types (informational, comparison, transactional) gives you something you can actually make decisions with.

Most tools in the market now let you define custom prompts, which is the right approach. Pre-built prompt sets are fine for benchmarking but shouldn't be your only data source.

Promptwatch tracks across 10 AI models and lets you build custom prompt sets -- worth looking at if prompt coverage is a priority.

Tools like Profound and AthenaHQ also offer solid mention tracking, particularly for enterprise use cases.

Competitor benchmarking

Knowing your mention rate in isolation is less useful than knowing it relative to competitors. Most platforms now offer this, and it's one of the more reliable outputs -- you're comparing apples to apples since the same prompts run against the same models for everyone.

Competitor heatmaps (showing which brands win which prompts) have become a standard feature. They're useful for identifying where you're losing ground and why.
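
As a rough illustration of what sits behind a competitor heatmap, the sketch below aggregates per-run observations into a prompt-by-brand grid of mention rates and picks a winner for each prompt. The `(prompt, brand, mentioned)` tuple format and the alphabetical tie-break are assumptions made for this example.

```python
from collections import defaultdict


def build_heatmap(observations):
    """Turn (prompt, brand, mentioned) tuples into {prompt: {brand: rate}}."""
    totals = defaultdict(lambda: [0, 0])  # (prompt, brand) -> [hits, runs]
    for prompt, brand, mentioned in observations:
        cell = totals[(prompt, brand)]
        cell[0] += int(mentioned)
        cell[1] += 1

    heatmap = defaultdict(dict)
    for (prompt, brand), (hits, runs) in totals.items():
        heatmap[prompt][brand] = hits / runs
    return dict(heatmap)


def prompt_winners(heatmap):
    """Brand with the highest mention rate per prompt (ties: alphabetical)."""
    return {
        prompt: min(rates, key=lambda brand: (-rates[brand], brand))
        for prompt, rates in heatmap.items()
    }
```

Reading the grid row by row shows which prompts a competitor consistently wins, which is usually a more useful starting point than a single aggregate score.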

Sentiment analysis

Sentiment in AI responses is more nuanced than in traditional social listening. Models don't just mention your brand -- they describe it, compare it, and sometimes recommend against it. Tracking whether those descriptions are positive or negative is valuable, especially for reputation management.

The quality of sentiment analysis varies a lot between tools. Some use simple positive/negative scoring; others do more granular analysis of the actual language used. For most teams, basic sentiment tracking is enough to flag problems.

What the tools still struggle with

Traffic attribution

This is the biggest unsolved problem in the category. When someone clicks through from an AI response to your website, that traffic often shows up as direct or "unknown" in analytics. The referrer data is inconsistent across models -- Perplexity passes referrer data reasonably well, ChatGPT less so.

A few approaches exist: UTM-tagged links in AI citations (only works when the model actually links to you), server log analysis (catches more but requires technical setup), and JavaScript snippets that try to infer AI origin from session patterns.
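
A minimal sketch of the first two approaches, assuming you have the referrer and landing URL for each visit (for example from server logs): check the referrer host against known AI assistant domains, then fall back to a `utm_source` parameter. The host list and UTM values below are assumptions for illustration and will need maintaining. Visits with no referrer and no tag still land in "direct/unknown", which is exactly the gap described above.

```python
from urllib.parse import parse_qs, urlparse

# Referrer hosts treated as AI assistants (illustrative, not exhaustive).
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}

# utm_source values treated as AI traffic (assumed values).
AI_UTM_SOURCES = {
    "chatgpt.com": "ChatGPT",
    "perplexity": "Perplexity",
}


def classify_visit(referrer: str, landing_url: str = "") -> str:
    """Label a visit as an AI source, 'other', or 'direct/unknown'."""
    host = (urlparse(referrer).hostname or "").lower()
    for known, label in AI_REFERRER_HOSTS.items():
        # Match the domain itself and any subdomain such as www.
        if host == known or host.endswith("." + known):
            return label

    # No usable referrer: fall back to a UTM tag on the landing URL.
    params = parse_qs(urlparse(landing_url).query)
    source = params.get("utm_source", [""])[0].lower()
    if source in AI_UTM_SOURCES:
        return AI_UTM_SOURCES[source]

    return "other" if host else "direct/unknown"
```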

None of these are perfect. Platforms like Promptwatch have built traffic attribution into their core product, using a combination of methods to get closer to the real number -- but even then, you're working with estimates, not exact counts.

The honest answer: in 2026, you can get directionally accurate AI traffic data, but you can't get the precision you'd expect from Google Analytics for traditional search. Plan your reporting accordingly.

Prompt volume data

Traditional SEO has keyword volume data from Google Keyword Planner and third-party tools, plus query-level impression data for your own site in Google Search Console. AI search has nothing equivalent -- there's no official API that tells you how many times per month people ask ChatGPT about "best CRM for startups."

Some platforms now offer volume estimates derived from their own query data, user panels, or proxy signals. These estimates are useful for prioritization but should be treated as rough guides, not hard numbers. A prompt with an estimated volume of 10,000 might be 5,000 or 20,000 in reality.

Real-time tracking

Most AI visibility tools run queries on a schedule -- daily, weekly, or even less frequently for lower-tier plans. AI model responses can change quickly, especially when a model is updated or when a major news event shifts how it talks about a topic.

This means your visibility data is always somewhat lagged. For most strategic decisions, that's fine. For reputation management or crisis response, it's a real limitation.

Understanding why you're cited (or not)

Tools can tell you that you're not appearing in responses to certain prompts. They generally can't tell you exactly why -- whether it's a content gap, a technical issue with how AI crawlers access your site, a lack of authoritative sources linking to you, or simply that a competitor has more comprehensive coverage of the topic.

This is where the gap between monitoring tools and optimization platforms becomes most visible. Monitoring tells you the score. Optimization helps you understand the cause and fix it.

The monitoring vs. optimization divide

This is the most important distinction in the category right now, and it's worth being direct about.

Most AI visibility tools are dashboards. They show you your mention rate, your share of voice, your sentiment score. That's genuinely useful -- but it leaves you with a question: now what?

A smaller set of platforms has built out the "now what" layer. This typically includes:

- Content gap analysis: identifying which prompts competitors rank for that you don't, and mapping those gaps to missing content on your site

- Content generation: creating articles, comparisons, and listicles specifically engineered to get cited by AI models

- Crawler log analysis: seeing which pages AI bots actually visit, how often, and what errors they encounter
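
For teams with access to raw server logs, the crawler log item can be approximated without a dedicated tool. The sketch below assumes logs in the common "combined" access log format and a short, hand-maintained list of AI crawler user-agent substrings. That list is an assumption and should be checked against each provider's documentation.

```python
import re
from collections import Counter, defaultdict

# User-agent substrings for a few AI crawlers (assumed; this list goes stale).
AI_BOT_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")

# Matches the "combined" access log format used by Apache and nginx.
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_crawls(lines):
    """Return {bot: {"hits": int, "errors": int, "paths": Counter}}."""
    summary = defaultdict(lambda: {"hits": 0, "errors": 0, "paths": Counter()})
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines in other formats rather than guessing
        agent = match["agent"].lower()
        bot = next((t for t in AI_BOT_TOKENS if t.lower() in agent), None)
        if bot is None:
            continue  # not one of the AI crawlers we track
        entry = summary[bot]
        entry["hits"] += 1
        entry["paths"][match["path"]] += 1
        if int(match["status"]) >= 400:
            entry["errors"] += 1  # 4xx/5xx responses served to the bot
    return dict(summary)
```

User-agent strings can be spoofed, so counts from a sketch like this are a starting point rather than verified bot traffic.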

Promptwatch is the clearest example of this full-cycle approach -- find the gaps, generate the content, track the results. But it's not the only one.

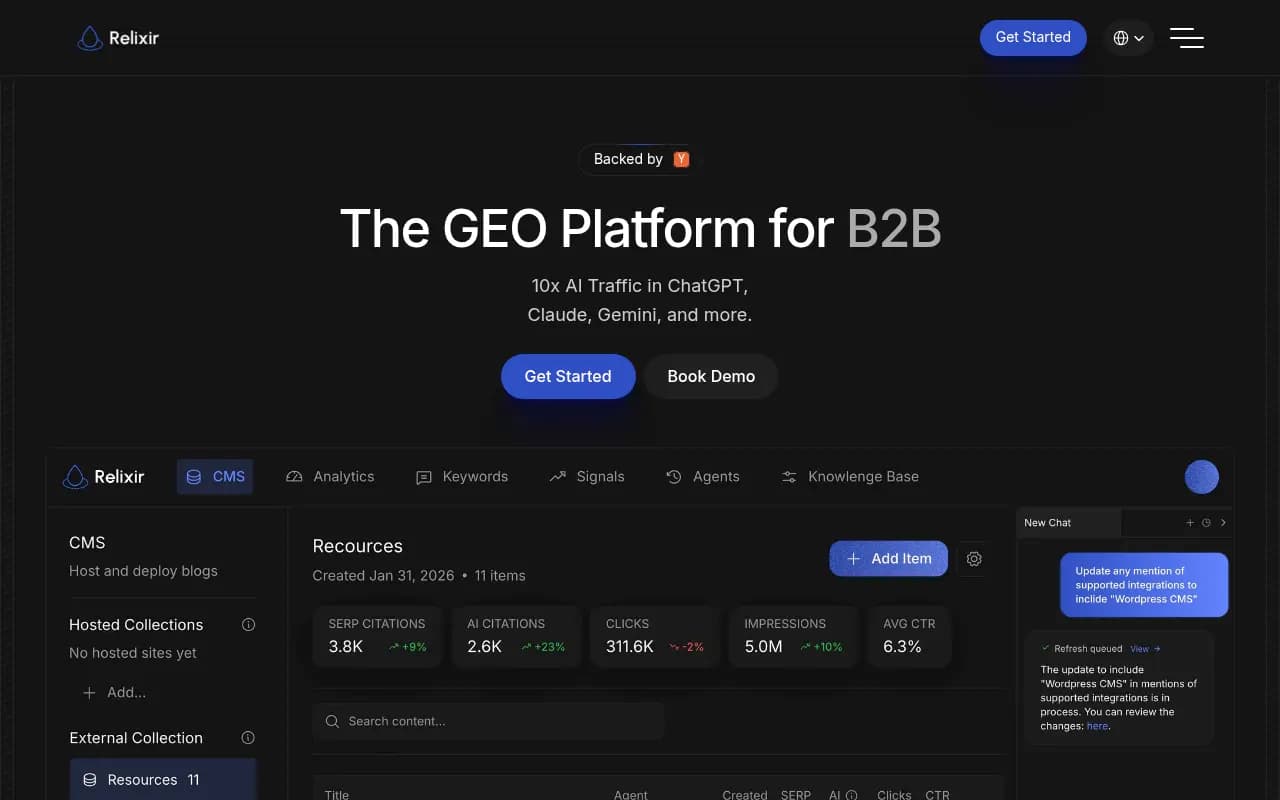

Relixir has built an AI-native CMS specifically for GEO content. Whitebox takes an agentic approach, automatically generating and shipping content fixes.

For teams that just need monitoring and have their own content process, a lighter tool is often the right call. For teams that want the full loop, the optimization platforms are worth the higher price.

A practical comparison of tool categories

The market has sorted into a few distinct segments. Here's how they compare:

| Category | Examples | Strengths | Limitations |
|---|---|---|---|
| Full-cycle optimization | Promptwatch, Relixir | Content gap analysis, AI writing, traffic attribution, crawler logs | Higher price point, more setup required |
| Enterprise monitoring | Profound, AthenaHQ, BrightEdge | Deep data, integrations, multi-brand | Monitoring-focused, less content tooling |
| Mid-market trackers | Otterly.AI, Peec AI, Rankscale | Good coverage, reasonable pricing | Limited optimization features |
| Solo/SMB tools | Airefs, SE Visible, Trakkr.ai | Affordable, easy setup | Narrower prompt sets, fewer models |
| Traditional SEO + AI | Semrush, Ahrefs Brand Radar | Familiar workflow, bundled pricing | Fixed prompt sets, weaker AI-specific features |
| Niche/specialist | Scrunch AI, GetCito, Orchly | Specific use cases | Limited scope |

Which models should you be tracking?

In 2026, the AI search landscape has consolidated around a handful of dominant models, but the long tail still matters for some industries.

The models worth tracking for most brands:

- ChatGPT (OpenAI): still the highest consumer mindshare, especially for product recommendations

- Perplexity: the model most likely to drive actual referral traffic, since it links to sources

- Google AI Overviews / AI Mode: critical for any brand with significant organic search traffic

- Gemini: growing fast, especially on mobile

- Claude: strong in professional and B2B contexts

Models like Grok, DeepSeek, Meta AI, and Copilot matter more for specific audiences (tech-forward users, enterprise Microsoft shops) but are lower priority for most brands starting out.

The practical implication: if a tool only tracks two or three models, you're getting an incomplete picture. If it tracks ten but you're only on a plan that covers 50 prompts, the model breadth doesn't help you much.

What good AI visibility reporting actually looks like

A lot of teams are being asked to report on AI visibility without much guidance on what "good" looks like. Here's a framework that works in practice.

Monthly reporting should cover:

- Overall mention rate across your core prompt set (and trend vs. prior month)

- Share of voice vs. top 3 competitors

- Which models are citing you most and least

- Any significant sentiment shifts

- Top 3 prompts where you gained visibility and top 3 where you lost it

Quarterly reporting should add:

- Content gap analysis -- which prompts competitors are winning that you aren't

- Traffic attribution estimate from AI sources

- Which pages are being cited and how often

- Progress on content created specifically for AI visibility

This structure gives you something actionable, not just a score. The score tells you where you are; the gap analysis tells you what to do next.
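
The "top 3 gained, top 3 lost" line in the monthly report is simple to compute once you have per-prompt mention rates for two periods. A minimal sketch, assuming rates are stored as whole percentages keyed by prompt text (the data shape and function name are hypothetical):

```python
def visibility_changes(previous, current, top_n=3):
    """Return (gained, lost) lists of (prompt, change) tuples.

    Only prompts tracked in both periods are compared, so newly added
    prompts do not show up as artificial gains.
    """
    deltas = {p: current[p] - previous[p] for p in current if p in previous}
    ranked = sorted(deltas.items(), key=lambda item: item[1], reverse=True)
    gained = [(p, d) for p, d in ranked if d > 0][:top_n]
    lost = [(p, d) for p, d in reversed(ranked) if d < 0][:top_n]
    return gained, lost
```

Restricting the comparison to prompts present in both months matters in practice: changing the prompt set between reports is one of the easiest ways to produce a trend that is not real.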

The honest limitations you should communicate upward

If you're presenting AI visibility data to leadership, be upfront about these constraints:

The data is probabilistic, not deterministic. AI models don't return the same response every time. A tool running a prompt 10 times might see your brand mentioned in 6 of those runs. That 60% mention rate is a real signal, but it's not the same as a Google ranking position.

Prompt selection shapes the results. A carefully chosen set of prompts that happen to favor your brand will show great visibility. A broader, more neutral set might show something different. Be transparent about how your prompt set was built.

Attribution is still imperfect. The traffic numbers from AI search are estimates. They're useful for trend analysis but shouldn't be used to make precise ROI calculations.

The tools themselves are still evolving. Several platforms in this space launched in the last 12 months. Methodologies are still being refined. What a "visibility score of 73" means on one platform is not comparable to the same number on another.

None of this means the data isn't worth collecting. It means you should present it with appropriate confidence intervals and focus on trends rather than absolute numbers.
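
To show what a confidence interval looks like for the 6-out-of-10 example above, here is a short sketch using the Wilson score interval, a standard method for proportions from small samples. Treating each run as an independent trial is a simplifying assumption, and nothing here reflects how any specific platform computes its scores.

```python
import math


def wilson_interval(hits: int, runs: int, z: float = 1.96):
    """Wilson score interval for a mention rate (z=1.96 is roughly 95%)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = hits / runs
    denom = 1 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    margin = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return center - margin, center + margin


low, high = wilson_interval(6, 10)
print(f"60% observed, plausible range {low:.0%} to {high:.0%}")
```

For 6 mentions in 10 runs the interval spans roughly 31% to 83%, which is why a single prompt run only a handful of times says little on its own. More runs per prompt, or aggregating across a larger prompt set, narrows the range.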

How to pick the right tool for your situation

A few questions that cut through the noise:

Do you need to act on the data, or just report it? If you need to actually improve your AI visibility, not just measure it, you need a platform with content gap analysis and ideally content generation. If you just need to report on share of voice, a monitoring tool is fine.

How many prompts do you need to track? Small brands with a narrow product focus can get by with 50-100 prompts. Brands in competitive categories or with broad product lines need 300+.

Which models matter most for your audience? B2B brands should weight Claude and Perplexity more heavily. Consumer brands should prioritize ChatGPT and Google AI Overviews.

Do you have a technical team? Crawler log analysis and server-side attribution require some technical setup. If you don't have that resource, look for tools with simpler implementation paths.

What's your budget? Entry-level tools start around $50-100/month. Full-cycle optimization platforms run $250-600/month for most use cases, with enterprise pricing above that.

Where the category is heading

A few trends worth watching:

Query fan-outs are becoming a standard feature. The idea that one prompt branches into dozens of sub-queries is increasingly well-understood, and tools are starting to map these relationships. This changes how you think about content strategy -- instead of targeting one prompt, you're targeting a cluster.

AI crawler logs are moving from niche to mainstream. A year ago, almost no tool offered visibility into which AI bots were crawling your site. Now it's a differentiating feature. Expect it to become standard.

The line between GEO and traditional SEO is blurring. Google's AI Mode means that optimizing for AI citations and optimizing for Google rankings are increasingly the same activity. Tools that bridge both worlds will have an advantage.

Attribution will get better, but slowly. The fundamental problem -- AI models don't consistently pass referrer data -- requires cooperation from the model providers. Some progress is happening, but don't expect a clean solution in the next 12 months.

The category will consolidate. There are too many monitoring-only tools competing on price. The platforms that survive will be the ones that help teams do something with the data, not just look at it.