Key takeaways

- No single GEO platform does everything well. Agencies running serious AI visibility programs are building stacks of 3-5 tools covering monitoring, content, technical, and reporting layers.

- The biggest mistake agencies make is stopping at monitoring. Knowing your brand isn't cited in ChatGPT is only useful if you can act on it.

- The tools that matter most in 2026 are the ones that connect visibility data to content production and then to revenue attribution.

- Reddit, YouTube, and UGC are increasingly important citation sources for AI models -- most agencies still ignore this channel entirely.

- Crawler logs and AI bot traffic data are emerging as a critical diagnostic layer that most monitoring tools don't offer.

There's a pattern showing up across GEO agencies right now. A client asks "why isn't our brand showing up in ChatGPT?" The agency logs into their monitoring tool, pulls a share-of-voice report, and sends back a PDF showing the client is at 12% visibility versus a competitor at 34%. The client says "okay, so what do we do about it?" And the agency... doesn't have a great answer.

This is the gap between a GEO monitoring program and an actual GEO optimization program. The first one tells you where you stand. The second one changes it.

In 2026, the agencies winning GEO retainers aren't the ones with the fanciest dashboards. They're the ones who've built a stack that goes from diagnosis to content to proof of results -- and can do it repeatedly across multiple clients. Here's how that stack actually looks.

Why a single tool isn't enough

The GEO tool market has exploded. There are now well over 50 platforms claiming some version of "AI visibility tracking," and the number keeps growing. But most of them are doing one thing: querying AI models on a schedule and reporting back what they found.

That's useful. But it's table stakes.

A full AI visibility program needs to answer four distinct questions:

- Where are we visible (and where aren't we)?

- Why aren't we visible in the gaps?

- What content do we need to create or fix?

- Is the work we're doing actually driving traffic and revenue?

No single tool answers all four well. The agencies building the strongest programs have accepted this and built stacks accordingly -- typically 3-5 tools covering monitoring, content intelligence, technical diagnostics, and attribution.

The four layers of a GEO tech stack

Layer 1: AI visibility monitoring (the foundation)

Every GEO stack starts here. You need a platform that regularly queries AI models with prompts relevant to your clients' categories, tracks which brands appear in responses, and gives you a share-of-voice view over time.
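To make the share-of-voice idea concrete, here is a minimal Python sketch. It assumes you already have the text of stored AI responses for a set of prompts, and it defines share of voice simply as the fraction of responses that mention a brand. Real platforms may weight by position, sentiment, or prompt volume. The brand names and sample responses are hypothetical.

```python
from collections import Counter


def share_of_voice(responses, brands):
    """Return the fraction of responses that mention each brand (case-insensitive)."""
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            # Naive substring match; a real tracker would handle aliases and misspellings.
            if brand.lower() in lowered:
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Hypothetical sample data standing in for stored AI responses.
sample_responses = [
    "For remote teams, Acme and Globex are popular choices.",
    "Globex is often recommended for small teams.",
    "Many reviewers suggest Globex or Initech.",
    "Acme has a generous free tier.",
]

sov = share_of_voice(sample_responses, ["Acme", "Globex", "Initech"])
for brand, share in sov.items():
    print(f"{brand}: {share:.0%}")
```

Running the same calculation on a schedule and storing the results is what produces the trend line clients see in a dashboard.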

The key things to look for at this layer:

- Coverage across multiple AI models (ChatGPT, Perplexity, Claude, Gemini, Google AI Overviews at minimum)

- Prompt volume and difficulty data so you're prioritizing the right queries

- Competitor comparison so clients can see where they're losing ground

- Multi-region and multi-language support for international clients

Promptwatch covers this layer well and goes further than most -- it tracks 10 AI models and includes prompt volume estimates and difficulty scores so you can prioritize which gaps are worth closing first.

For agencies that want a dedicated monitoring tool, a few others are worth knowing:

Profound has strong agency features including pitch environments and multi-brand workspaces. Good for agencies that need to demo results to prospective clients before they sign.

AthenaHQ is monitoring-focused with clean dashboards across 8+ AI engines. Solid for clients who just want a visibility score and a trend line.

Otterly.AI is one of the more affordable options for agencies managing smaller clients or doing initial scoping work.

| Tool | AI models tracked | Prompt volume data | Content generation | Crawler logs | Agency pricing |
|---|---|---|---|---|---|
| Promptwatch | 10 | Yes | Yes (built-in) | Yes | Custom |
| Profound | 6+ | Yes | No | No | Agency mode |
| AthenaHQ | 8+ | Limited | No | No | Per seat |
| Otterly.AI | 5+ | No | No | No | Per brand |
| Rankscale | 5+ | Limited | No | No | Per brand |

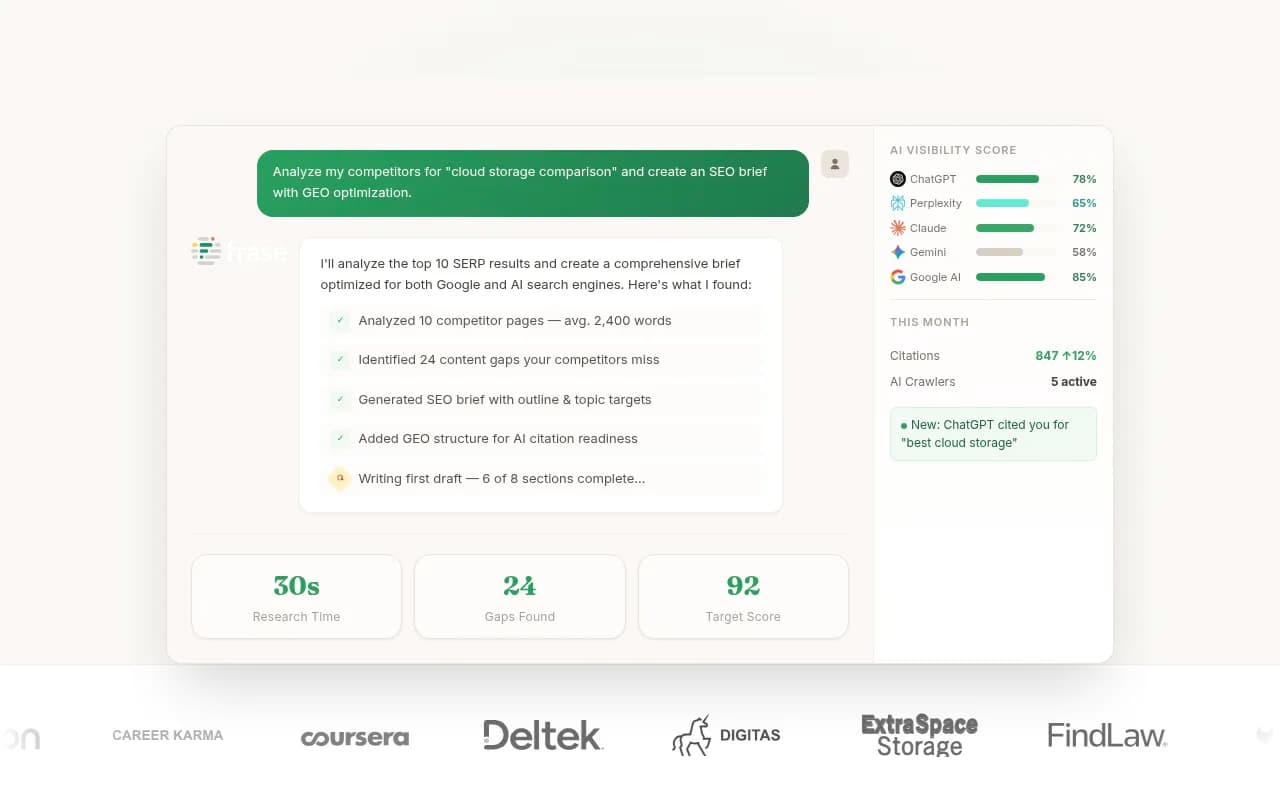

Layer 2: Content gap analysis and content creation

This is where most agencies fall short. Monitoring tells you the gap exists. This layer tells you what to do about it.

The core workflow here is: identify which prompts competitors are visible for that you're not, understand what content those AI models are citing in those responses, and then create content that fills the gap.
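The first step of that workflow is essentially a set comparison. The sketch below assumes you can export, for each tracked prompt, the set of brands that appeared in AI responses. The data structure, function name, and brand names are all hypothetical and not any particular platform's export format.

```python
def answer_gaps(visibility, client, competitors):
    """List prompts where at least one competitor appears and the client does not."""
    competitor_set = set(competitors)
    gaps = []
    for prompt, brands_seen in visibility.items():
        rivals = sorted(brands_seen & competitor_set)
        if client not in brands_seen and rivals:
            gaps.append((prompt, rivals))
    # Prompts where more competitors are present are listed first.
    return sorted(gaps, key=lambda item: (-len(item[1]), item[0]))


# Hypothetical export: prompt -> brands seen in AI responses for that prompt.
visibility = {
    "best crm for startups": {"Globex", "Initech"},
    "crm with email automation": {"Acme", "Globex"},
    "cheapest crm tools": {"Initech"},
    "crm for nonprofits": set(),
}

gaps = answer_gaps(visibility, client="Acme", competitors=["Globex", "Initech"])
for prompt, rivals in gaps:
    print(f"{prompt}: competitors present = {', '.join(rivals)}")
```

The resulting list is only the starting point. Deciding what to write still depends on reviewing which sources the AI models cite for each gap prompt.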

This sounds simple. In practice it requires knowing which specific topics, angles, and questions are missing from a client's website -- and then producing content that's actually structured to get cited, not just indexed.

Promptwatch's Answer Gap Analysis does this directly: it shows you the exact prompts where competitors appear and you don't, then the built-in writing agent generates content grounded in citation data from 880M+ analyzed citations. The output isn't generic SEO filler -- it's structured around what AI models actually want to cite.

For agencies that prefer to use separate content tools:

MarketMuse is strong for content planning and topic modeling. It won't tell you about AI citations specifically, but it's good at identifying semantic gaps in a content strategy.

Clearscope is a reliable content optimization tool for making sure articles cover the right topics and entities. Useful for the editing pass after you've identified what to write.

Frase combines research and writing in one interface. Good for agencies that need to produce briefs and drafts quickly.

Surfer SEO has added GEO-specific features in 2026 and remains a solid choice for content teams that want to optimize for both traditional search and AI overviews.

The honest take: if you're managing GEO for more than 2-3 clients, having content generation built into your monitoring platform (rather than stitching together separate tools) saves a lot of time. The gap between "here's what's missing" and "here's the draft article" is where a lot of agency hours disappear.

Layer 3: Technical diagnostics

This layer is underrated and often skipped entirely. But it's increasingly important as AI models get more sophisticated about how they crawl and index content.

The key question here is: can AI crawlers actually read your client's website? And are they reading it?

AI crawler logs show you which AI bots (OpenAI's GPTBot, Perplexity's PerplexityBot, Anthropic's ClaudeBot, etc.) are visiting which pages, how often, and whether they're hitting errors. If a client's most important product pages are returning 404s to AI crawlers, no amount of content optimization will fix their visibility problem.
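If you have access to a client's raw server logs, a rough version of this check can be done without a dedicated tool. The sketch below assumes logs in the common "combined" access log format and matches on the bot names mentioned above. The sample log lines and user-agent strings are simplified and hypothetical, and real user agents can be spoofed, so treat the output as indicative.

```python
import re
from collections import defaultdict

# Bot tokens taken from the crawlers named in the text above.
AI_BOT_TOKENS = ("GPTBot", "PerplexityBot", "ClaudeBot")

# Matches the request, status, size, referrer, and user-agent fields of a combined-format line.
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_bot_hits(lines):
    """Count hits and error responses (status >= 400) per (bot, path)."""
    summary = defaultdict(lambda: {"hits": 0, "errors": 0})
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue
        agent = match.group("agent")
        bot = next((token for token in AI_BOT_TOKENS if token in agent), None)
        if bot is None:
            continue  # not one of the AI crawlers we are looking for
        entry = summary[(bot, match.group("path"))]
        entry["hits"] += 1
        if int(match.group("status")) >= 400:
            entry["errors"] += 1
    return dict(summary)


# Hypothetical, simplified log lines.
sample_log = [
    '1.2.3.4 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '1.2.3.4 - - [10/Jan/2026:10:05:00 +0000] "GET /products/widget HTTP/1.1" 404 0 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '1.2.3.4 - - [10/Jan/2026:10:06:00 +0000] "GET /products/widget HTTP/1.1" 404 0 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '5.6.7.8 - - [10/Jan/2026:10:07:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

report = summarize_ai_bot_hits(sample_log)
for (bot, path), stats in sorted(report.items()):
    print(f"{bot} {path}: {stats['hits']} hits, {stats['errors']} errors")
```

Pages with a high error count for a given bot are the first candidates to flag during onboarding.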

Promptwatch includes AI crawler logs at the Professional plan and above -- you can see exactly which pages each AI model is reading and flag technical issues. Most monitoring-only tools don't offer this at all.

For deeper technical SEO diagnostics:

Screaming Frog remains the standard for technical audits. It won't show you AI crawler behavior specifically, but it's essential for identifying crawlability issues that affect all search engines.

Sitebulb is a strong alternative to Screaming Frog with better visualization of site structure issues.

DarkVisitors is worth knowing about -- it tracks AI agents and bots visiting your site and helps you understand which AI systems are accessing your content and when.

Botify is the enterprise option here. It has added GEO-specific features and is strong for large sites with complex crawl budgets.

A few things to check at this layer for every GEO client:

- Is robots.txt blocking any AI crawlers unintentionally?

- Are key pages returning clean 200 responses to bot user agents?

- Is structured data (schema markup) implemented correctly on product, FAQ, and article pages?

- Is page speed acceptable? Slow responses can cause crawlers, AI bots included, to time out or fetch fewer pages.
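The robots.txt item in this checklist is easy to script. The sketch below uses Python's standard-library `urllib.robotparser` to test a robots.txt body against the AI user agents named earlier. The sample rules and URL are hypothetical, and in practice you would fetch the client's live robots.txt first. Note that the standard-library parser implements basic matching, so wildcard-heavy rules may need a manual check.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens for the AI crawlers mentioned in this article.
AI_USER_AGENTS = ("GPTBot", "PerplexityBot", "ClaudeBot")


def blocked_ai_crawlers(robots_txt, url, user_agents=AI_USER_AGENTS):
    """Return the AI user agents that the given robots.txt disallows for this URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in user_agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks GPTBot site-wide.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

blocked = blocked_ai_crawlers(sample_robots, "https://example.com/products/widget")
print("Blocked AI crawlers:", blocked or "none")
```

Running this against each client's key URLs during onboarding catches blanket blocks that were added for unrelated reasons.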

Layer 4: Attribution and reporting

This is the layer that keeps GEO retainers alive. If you can't show a client that your work is driving traffic and revenue, the engagement eventually ends -- regardless of how good your visibility scores look.

AI traffic attribution is genuinely hard right now. Most AI models don't pass referrer data cleanly, so "direct" traffic in GA4 is often actually AI-referred traffic. There are a few approaches agencies are using:

UTM parameters on cited pages: When you know a page is being cited by an AI model (from your monitoring data), you can add UTM parameters to the URLs you're promoting in those contexts. This is imperfect but better than nothing.

Server log analysis: AI crawler visits in server logs can be correlated with traffic spikes. Promptwatch supports this via server log analysis as part of its traffic attribution setup.

GSC integration: Google Search Console data can be connected to separate out AI Overview clicks from organic clicks. Promptwatch integrates with GSC directly.
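As a small illustration of the UTM approach, the sketch below appends tracking parameters to a URL while preserving any existing query string. The parameter values (`ai_referral`, `geo`) are hypothetical naming choices and not a standard, so use whatever convention your analytics setup already follows.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse


def add_utm(url, source, medium="ai_referral", campaign="geo"):
    """Return the URL with UTM parameters added, keeping existing query parameters."""
    parts = urlparse(url)
    query = dict(parse_qsl(parts.query))
    query.update(
        {"utm_source": source, "utm_medium": medium, "utm_campaign": campaign}
    )
    return urlunparse(parts._replace(query=urlencode(query)))


# Hypothetical page that monitoring data shows is being cited.
tagged = add_utm("https://example.com/pricing?plan=pro", source="chatgpt")
print(tagged)
```

This only helps for links you control or distribute yourself. It does not change the URLs an AI model chooses to cite on its own.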

Dedicated attribution tools:

HockeyStack is strong for B2B agencies -- it unifies marketing touchpoints and can help attribute pipeline to AI search visibility improvements.

Northbeam is a good option for ecommerce clients where you need first-party attribution that doesn't rely on platform-reported data.

For reporting to clients, most agencies are either building custom Looker Studio dashboards (Promptwatch has a Looker Studio integration) or using their existing agency reporting tools and pulling in GEO data via API.

How leading agencies are combining these layers

Here's what a practical GEO stack looks like for a mid-size agency running 10-20 clients:

Core monitoring and optimization: Promptwatch (covers monitoring, gap analysis, content generation, crawler logs, and attribution in one platform -- reduces the number of separate tools needed)

Content quality layer: Clearscope or Surfer SEO for the editing pass on AI-generated drafts

Technical audits: Screaming Frog for quarterly technical audits, DarkVisitors for ongoing AI bot monitoring

Attribution: GSC integration (built into Promptwatch) plus HockeyStack for B2B clients

Client reporting: Looker Studio with Promptwatch data export

That's five tools total, with Promptwatch doing the heavy lifting across three of the four layers. The agencies that have tried to build this stack with separate tools for each layer -- a monitoring tool, a separate gap analysis tool, a separate content tool -- report spending more time managing tool integrations than doing actual work.

The channels most agencies are still ignoring

One thing that keeps coming up in conversations with GEO practitioners: Reddit and YouTube are significant citation sources for AI models, and most agencies aren't tracking them.

When ChatGPT or Perplexity answers a question about "best project management software for remote teams," it's often pulling from Reddit threads and YouTube reviews, not just brand websites. If your client's brand isn't mentioned positively in those discussions, it's much less likely to appear in AI responses -- regardless of how well-optimized their website is.

Promptwatch surfaces Reddit and YouTube discussions that directly influence AI recommendations, which is a channel most monitoring tools ignore entirely. For agencies, this opens up a new service line: influencing the UGC and community content that feeds AI citations.

The Yotpo research from early 2026 makes a similar point -- verified reviews and user-generated content provide what they call "Information Gain" that AI models prioritize. Fresh, human-validated experiences are a strong signal. Agencies that are helping clients build review volume and community presence are seeing it pay off in AI visibility.

Avoiding the common stack mistakes

Buying too many monitoring tools: There's a temptation to subscribe to multiple monitoring platforms to get "broader coverage." In practice, the data is similar across platforms and you end up with conflicting numbers that confuse clients. Pick one primary monitoring platform and stick with it.

Skipping the technical layer: A lot of agencies jump straight to content creation without checking whether AI crawlers can actually access the pages they're optimizing. Crawler logs should be part of every GEO onboarding.

Not connecting visibility to revenue: Share-of-voice reports are fine for internal tracking, but clients ultimately care about traffic and revenue. Build attribution into the stack from day one, even if it's imperfect.

Treating GEO as a one-time project: AI model responses change frequently. A page that's being cited today might not be cited next month. Ongoing monitoring and content iteration are the actual service -- not a one-time audit.

Building the stack for different agency sizes

For solo consultants and small agencies (1-5 clients), a lean stack works fine: one solid monitoring platform that includes content generation (Promptwatch's Essential or Professional plan covers this), Screaming Frog for technical audits, and GSC for attribution. Total cost: under $400/month.

For mid-size agencies (10-20 clients), the stack above -- Promptwatch plus Clearscope or Surfer, DarkVisitors, and HockeyStack for B2B clients -- covers most needs. Budget: $800-1,500/month depending on client count.

For large agencies and enterprise teams, the conversation shifts toward custom API integrations, white-label reporting, and dedicated customer success. Promptwatch's Business and Agency tiers support this, as do enterprise platforms like Botify and BrightEdge for clients with very large sites.

Evertune is worth knowing about at the enterprise end -- it's built specifically for Fortune 500 brands and has strong narrative monitoring features, though the price point reflects that.

The bottom line

The GEO tech stack in 2026 isn't complicated, but it does require thinking in layers. Monitoring alone is not a program. Content creation without monitoring is guesswork. Technical optimization without attribution is hard to justify to clients.

The agencies building durable GEO practices are the ones who've connected all four layers -- and who've chosen tools that reduce the integration overhead rather than adding to it. The fewer handoffs between "here's the gap" and "here's the content" and "here's the traffic impact," the faster you can deliver results that clients can see.