Key takeaways

- ChatGPT citations come from a combination of crawlability, content structure, and topical authority -- not just good writing

- "Answer capsules" (short, self-contained answers to specific questions) dramatically improve your chances of being pulled into a RAG response

- Most brands are invisible in AI search because they've never checked -- the first step is measuring where you actually stand

- Monitoring-only tools tell you what's happening; optimization platforms like Promptwatch tell you what to do about it

- The fastest path to citations runs through gap analysis: find the prompts your competitors rank for that you don't, then create content that fills those gaps

Getting cited in ChatGPT used to feel like winning a lottery. Your competitor shows up in a response about your exact product category, and you have no idea why them and not you. In 2026, that mystery is mostly solved. There's a repeatable process, and there are tools built specifically to run it.

This guide breaks down that process step by step, with specific tools for each stage. Not every tool is right for every team -- so I'll flag who each one is best for.

Why ChatGPT citations work differently than Google rankings

Google ranks pages. ChatGPT synthesizes answers. That distinction matters more than most people realize.

When ChatGPT (or Perplexity, or Claude) generates a response, it's not picking the "best" page from a list. It's pulling fragments of information from sources it considers credible and relevant, then assembling them into a coherent answer. Your page doesn't need to rank #1 for a keyword. It needs to contain a clear, extractable answer to a specific question -- and it needs to be crawlable, indexed, and trusted enough for the model to reach for it.

The technical term for this process is Retrieval-Augmented Generation (RAG). When a query triggers web search, the system runs a live search, retrieves content that matches the intent of the query, and the model cites the sources it used. If your content isn't structured to be extracted, or if the crawler can't reach it, you simply don't exist in that pipeline.

Three things determine whether you get cited:

- Can AI crawlers actually access and index your content?

- Does your content directly answer the questions users are asking?

- Does your domain have enough topical authority for the model to trust it?

Let's go through each.

Step 1: Make sure AI crawlers can actually find you

This sounds obvious, but a surprising number of sites block AI crawlers either accidentally (through overly aggressive robots.txt rules) or intentionally without realizing the tradeoff.

OpenAI's search crawler is called OAI-SearchBot (GPTBot is its separate crawler for training data). Perplexity uses PerplexityBot. Claude uses ClaudeBot. If any of these are blocked in your robots.txt, you're invisible to that model's live web retrieval -- full stop.

Check your robots.txt file right now. If you see Disallow: / under any of these user agents, or under a wildcard User-agent: * group with no more specific group for these bots, fix it before doing anything else.
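If you'd rather script that check than eyeball it, here is a minimal sketch using Python's standard-library robots.txt parser. The sample robots.txt text and the example.com URL are hypothetical; in practice you would paste in your own file's contents. The parser follows standard matching rules, which may differ in small ways from how each bot actually interprets the file.

```python
from urllib.robotparser import RobotFileParser

# User agents named in this guide; extend the list as needed.
AI_BOTS = ["OAI-SearchBot", "PerplexityBot", "ClaudeBot"]

def blocked_bots(robots_txt: str, url: str = "https://example.com/") -> list:
    """Return the AI bots that this robots.txt text forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_BOTS if not parser.can_fetch(bot, url)]

# Hypothetical robots.txt that blocks one AI crawler by name.
SAMPLE = """\
User-agent: ClaudeBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_bots(SAMPLE))  # ['ClaudeBot']
```

Passing a specific page URL instead of the site root lets you test whether a narrower rule (for example a blocked /blog/ directory) is hiding your key pages.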

Beyond blocking, there are subtler issues: pages returning 404s or 5xx errors when crawled, JavaScript-rendered content that bots can't parse, or pages that simply aren't being crawled frequently enough to stay current.
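To spot the error cases yourself, a rough sketch like the one below can scan your server access logs for AI-bot requests that returned 4xx or 5xx responses. It assumes logs in the common "combined" format with the user agent as the last quoted field; the regular expression and the sample lines are illustrative, so adjust them to your server's actual log layout.

```python
import re
from collections import Counter

AI_BOTS = ("OAI-SearchBot", "PerplexityBot", "ClaudeBot")

# Assumed layout: ... "GET /path HTTP/1.1" 404 512 "referrer" "user agent"
LINE_RE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)

def crawl_errors(log_lines):
    """Count (bot, path, status) for AI-bot requests that returned 4xx or 5xx."""
    errors = Counter()
    for line in log_lines:
        match = LINE_RE.search(line.strip())
        if not match:
            continue  # skip lines that do not fit the assumed format
        agent = match["agent"].lower()
        bot = next((b for b in AI_BOTS if b.lower() in agent), None)
        if bot and match["status"][0] in "45":
            errors[(bot, match["path"], int(match["status"]))] += 1
    return errors

# Hypothetical log lines for illustration.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 404 512 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)"',
    '203.0.113.6 - - [10/Jan/2026:10:00:05 +0000] "GET /blog HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '203.0.113.7 - - [10/Jan/2026:10:00:09 +0000] "GET /docs HTTP/1.1" 500 128 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
]

for key, count in crawl_errors(SAMPLE_LOG).items():
    print(key, count)
```

This only catches HTTP errors. JavaScript rendering problems won't show up in status codes, so those still need a separate check of what the raw HTML contains.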

Tools that help here:

Promptwatch has a real-time AI Crawler Logs feature that shows you exactly which pages each AI bot has visited, how often, and what errors they encountered. It's one of the few platforms that give you this level of visibility into the AI crawl specifically (not just Googlebot).

For broader technical SEO crawl analysis, Screaming Frog remains the standard for auditing crawlability issues at scale.

DarkVisitors is worth bookmarking -- it maintains a live database of AI agents and bots so you know exactly which user agents to allow or block.

Step 2: Structure your content as answer capsules

This is where most content teams get it wrong. They write long-form articles optimized for Google's ranking signals -- comprehensive, well-structured, authoritative. That's not bad, but it's not enough for AI citation.

ChatGPT's RAG pipeline is looking for extractable answers. It wants a short, self-contained block of text that directly answers a specific question, which it can lift and cite without needing the surrounding context. Some people call these "answer capsules" or "modular answer blocks."

In practice, this means:

- Put a direct answer in the first 1-2 sentences after a question-style heading

- Don't bury the answer in the middle of a paragraph

- Use plain language -- the model needs to understand it, not be impressed by it

- Include the specific question as a heading (H2 or H3), not just a topic label

For example, instead of a section titled "Our Approach to Pricing," write "How much does [product] cost?" and answer it immediately in the next sentence.
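A quick way to audit existing pages against these rules is a small script like the sketch below. It assumes your content is stored as markdown with ## and ### headings, and the 40-word cap on the opening answer is my own arbitrary threshold, not a number any AI vendor publishes. The sample page is hypothetical.

```python
import re

def audit_capsules(markdown: str, max_words: int = 40) -> list:
    """For each H2/H3 section, report whether the heading is a question and
    how long the opening answer (first two sentences) is."""
    report = []
    # re.split with a capture group yields [preamble, heading, body, heading, body, ...]
    parts = re.split(r"^#{2,3}[ \t]+(.+)$", markdown, flags=re.MULTILINE)
    for heading, body in zip(parts[1::2], parts[2::2]):
        paragraphs = [p.strip() for p in body.split("\n\n") if p.strip()]
        first = paragraphs[0] if paragraphs else ""
        sentences = re.split(r"(?<=[.!?])\s+", first)
        words = len(" ".join(sentences[:2]).split())
        report.append({
            "heading": heading.strip(),
            "is_question": heading.strip().endswith("?"),
            "opening_words": words,
            "concise_opening": 0 < words <= max_words,
        })
    return report

# Hypothetical page content for illustration.
SAMPLE_PAGE = """\
## Our Approach to Pricing

We believe in value. Our plans are flexible. Contact us.

## How much does Acme cost?

Acme costs $20 per user per month. Annual billing is 15% cheaper.
"""

for row in audit_capsules(SAMPLE_PAGE):
    print(row)
```

The script only checks form (question heading, short opening). Whether the opening actually answers the question still needs a human read.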

The other thing that drives citations is information gain -- data, research, or perspectives the model doesn't already have in its training weights. If you're just restating what Wikipedia says, there's no reason for the model to cite you. Original surveys, proprietary data, unique case studies, and specific numbers all give the model a reason to reach for your content.

Tools for content optimization:

Clearscope is strong for semantic content optimization -- it helps you cover the topics and questions that matter for a given keyword, which translates well to AI citation readiness.

Frase is worth looking at if you want a tool that bridges traditional SEO content briefs with AI search optimization.

MarketMuse handles content planning at a topical authority level -- useful if you're trying to build out a cluster of content around a topic rather than optimizing individual pages.

Step 3: Track where you're actually visible (and where you're not)

You can't optimize what you can't measure. Before you start creating content, you need a baseline: which prompts is ChatGPT citing you for, which ones are your competitors winning, and what's the gap?

This is where GEO monitoring tools come in. The market has exploded in the last 18 months, so here's a practical breakdown of what's available.

Full-featured platforms (monitoring + optimization)

These tools go beyond tracking to help you actually improve your visibility.

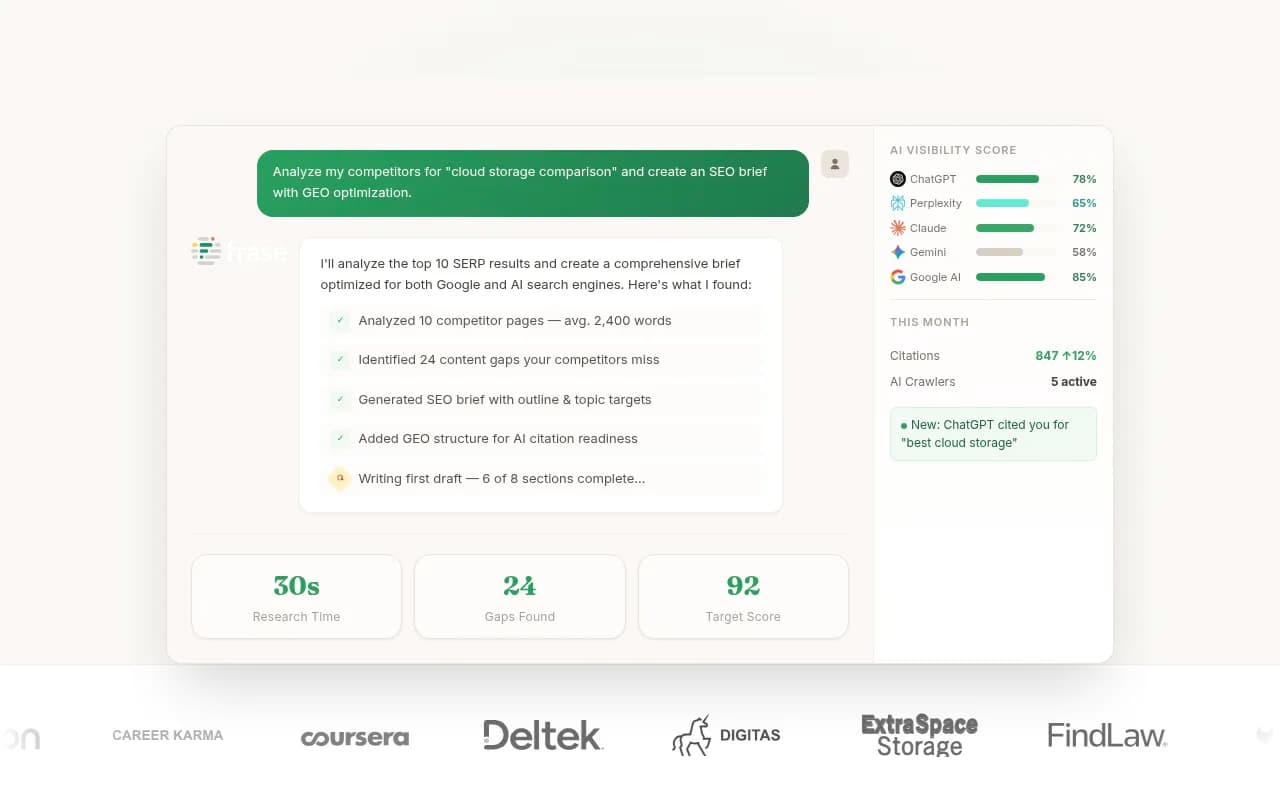

Promptwatch is the most complete option in this category. It tracks 10 AI models (ChatGPT, Perplexity, Claude, Gemini, Grok, DeepSeek, and more), runs Answer Gap Analysis to show you exactly which prompts your competitors rank for that you don't, and has a built-in AI writing agent that generates content specifically engineered to get cited. It also has the crawler logs mentioned above, ChatGPT Shopping tracking, and traffic attribution via GSC integration or server log analysis. Pricing starts at $99/month.

Profound is another strong option at the higher end -- good feature depth for enterprise teams that need detailed AI search analytics.

AthenaHQ covers 8+ AI engines and is solid for monitoring, though it's more analytics-focused than action-focused.

Monitoring-focused tools

These are better for teams that just want visibility data and will handle content creation separately.

Otterly.AI is one of the more affordable options and covers the main AI models. Good starting point if budget is tight.

Peec AI is worth considering if you need multi-language tracking -- it handles non-English markets better than most.

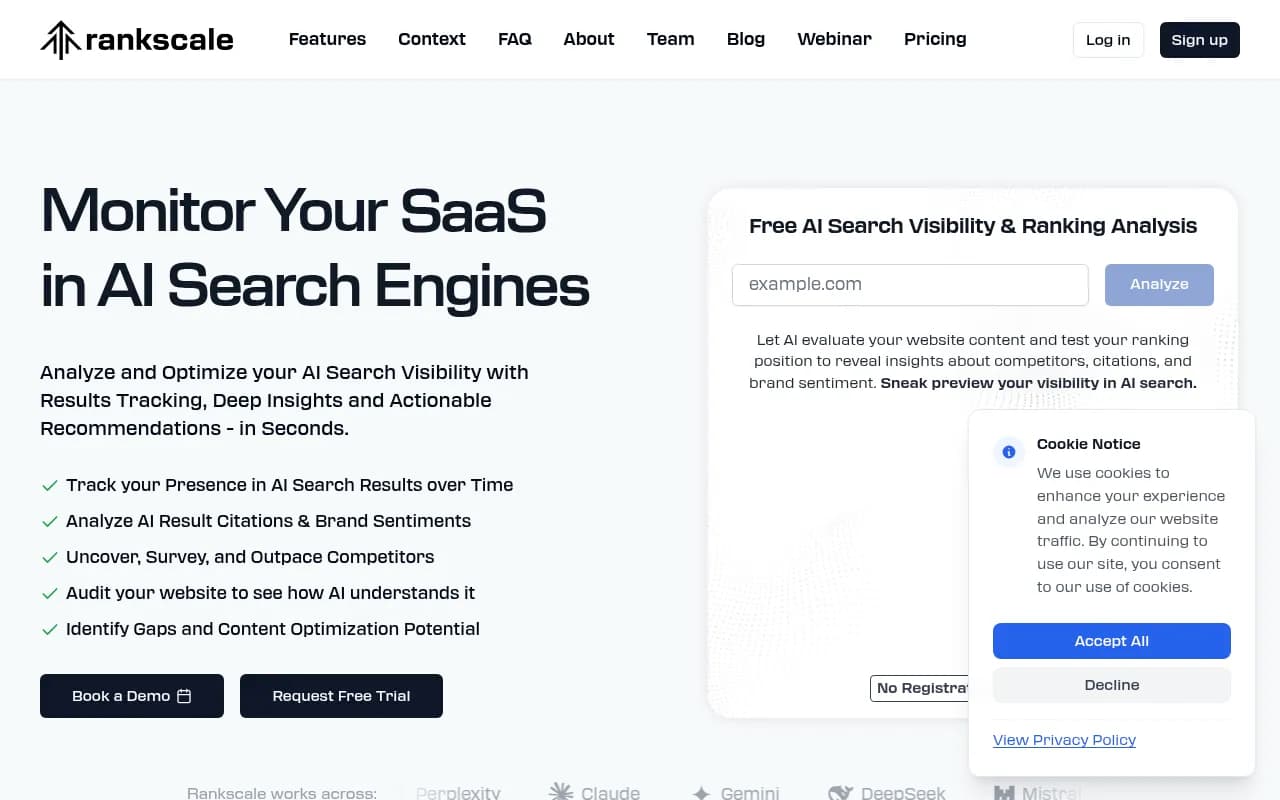

Rankscale has a clean interface and solid prompt tracking. Good for teams that want straightforward rank monitoring without complexity.

Trakkr.ai covers ChatGPT, Claude, and Perplexity with a focus on brand mention tracking.

SE Ranking has added an AI visibility toolkit to its existing SEO platform, which makes it convenient if you're already using it for traditional SEO.

Comparison: which tool fits which team

| Tool | Best for | AI models tracked | Content generation | Crawler logs | Starting price |
|---|---|---|---|---|---|
| Promptwatch | Full optimization cycle | 10+ | Yes (built-in AI writer) | Yes | $99/mo |
| Profound | Enterprise analytics | 6+ | No | No | Higher |
| AthenaHQ | Monitoring + reporting | 8+ | No | No | Mid-range |
| Otterly.AI | Budget monitoring | 4-5 | No | No | Low |
| Peec AI | Multi-language tracking | 5+ | No | No | Low-mid |
| SE Ranking | Existing SE Ranking users | 4+ | No | No | Add-on |
| Trakkr.ai | Brand mention tracking | 3 | No | No | Low |

The honest summary: if you just want to know whether you're being cited, most of these tools work. If you want to actually improve your citation rate, you need something that closes the loop between "here's the gap" and "here's the content to fill it."

Step 4: Run answer gap analysis to find what to create

This is the highest-leverage activity in the entire process. Answer gap analysis shows you the specific prompts where your competitors appear in AI responses and you don't. Those are your targets.

The logic is simple: if a competitor is getting cited for "best project management tool for remote teams" and you're not, there's a content gap. Either you don't have a page that answers that question, or the page you have isn't structured well enough to be extracted.
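Stripped of tooling, that logic is a set comparison. The sketch below assumes you already have, for each prompt, the set of domains cited in the AI response (exported from a monitoring tool or collected by hand). The function name, domains, and prompts are all hypothetical.

```python
def answer_gaps(citations: dict, you: str, competitors: set) -> dict:
    """Map each prompt where a competitor is cited and `you` is not
    to the set of competitors cited for it."""
    gaps = {}
    for prompt, cited in citations.items():
        rivals = cited & competitors
        if rivals and you not in cited:
            gaps[prompt] = rivals
    return gaps

# Hypothetical citation data: prompt -> domains cited in the response.
CITATIONS = {
    "best project management tool for remote teams": {"rival-a.com", "rival-b.com"},
    "how to run a sprint retrospective": {"yourbrand.com", "rival-a.com"},
    "what is a gantt chart": {"wikipedia.org"},
}

gaps = answer_gaps(CITATIONS, "yourbrand.com", {"rival-a.com", "rival-b.com"})
for prompt in gaps:
    print(prompt, "->", sorted(gaps[prompt]))
```

Prompts where nobody you track is cited (the third one here) are left out on purpose: they are open territory rather than a gap against a competitor.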

With Promptwatch's Answer Gap Analysis, you can see the exact prompts, the competitors appearing in them, and the content your site is missing. The platform then lets you generate articles directly from that data -- content that's grounded in real citation patterns, not keyword guesses.

This is the core loop that separates optimization from monitoring:

- Find the prompts you're missing

- Generate content that fills the gap

- Track whether your visibility improves

Most monitoring tools only do the third of these. Promptwatch runs all three.

For teams that want to do gap analysis more manually, Semrush's AI visibility features and Ahrefs Brand Radar both offer some prompt tracking, though neither has the content generation layer.

Step 5: Build topical authority, not just individual pages

One underrated factor in ChatGPT citations is domain-level trust. A single well-optimized page on a thin domain is less likely to get cited than a moderately optimized page on a domain that clearly owns a topic.

This means publishing consistently on a topic cluster, not just creating one "answer capsule" page and hoping for the best. If you want to be cited for questions about project management software, you probably need 15-20 pages covering different angles of that topic -- comparisons, how-tos, use cases, FAQs, and original research.

Reddit threads and YouTube videos also influence AI recommendations more than most brands realize. Perplexity in particular cites Reddit heavily. If your brand or product is being discussed positively in relevant subreddits, that's a citation signal. Promptwatch tracks Reddit and YouTube sources specifically, which most competitors don't.

For content production at scale, a few tools worth knowing:

Byword generates SEO-optimized articles at volume with good quality control -- useful for building out topic clusters quickly.

Jasper handles longer-form content with more brand voice control, better for teams that need consistency across a large content operation.

GrowthBar is a solid mid-market option that combines keyword research with AI content generation.

Step 6: Attribute AI traffic to revenue

Getting cited is great. Knowing whether those citations are driving actual business outcomes is better.

This is still an emerging area, but the tools are catching up. The main approaches:

- Google Search Console integration (covers clicks from Google's own AI surfaces such as AI Overviews; it does not report referrals from ChatGPT or Perplexity)

- Server log analysis (shows raw traffic from AI model user agents)

- UTM-based attribution for AI referral traffic
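For the UTM and referrer approaches, a minimal sketch of session classification might look like this. It assumes AI referrals can be recognized by a utm_source value or a referrer hostname; the hostnames in the mapping are illustrative assumptions, so verify them against what actually appears in your own analytics before relying on them.

```python
from typing import Optional
from urllib.parse import urlparse, parse_qs

# Assumed hostname-to-source mapping; check it against your own referral data.
AI_SOURCES = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
}

def _host(value: str) -> str:
    """Normalize a URL or bare hostname to a lowercase host without 'www.'."""
    host = (urlparse(value).hostname or value).lower()
    return host[4:] if host.startswith("www.") else host

def ai_source(landing_url: str, referrer: str = "") -> Optional[str]:
    """Return the AI source for a session, preferring utm_source over referrer."""
    utm = parse_qs(urlparse(landing_url).query).get("utm_source", [""])[0]
    for candidate in (utm, referrer):
        if candidate:
            label = AI_SOURCES.get(_host(candidate))
            if label:
                return label
    return None

print(ai_source("https://example.com/pricing?utm_source=chatgpt.com"))
print(ai_source("https://example.com/", "https://www.perplexity.ai/search"))
print(ai_source("https://example.com/", "https://www.google.com/"))
```

Joining these labels to conversion events in your analytics export is what turns "we got cited" into a revenue number.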

Promptwatch supports all three methods and connects citation data to traffic data in one dashboard. That's genuinely useful -- it lets you see not just "we got cited 40 times this week" but "those citations drove 800 sessions and contributed to 12 conversions."

Bear AI is worth looking at specifically for teams focused on converting AI search traffic into revenue, with attribution built around that goal.

LLM Clicks focuses specifically on citation-to-click tracking, which is useful if you want granular data on which citations are actually sending traffic.

The fastest path, summarized

If you want citations in ChatGPT as fast as possible, the order of operations is:

- Fix crawlability first -- check robots.txt, fix errors, confirm AI bots can access your key pages

- Audit your existing content for answer capsule structure -- pick your 5 most important pages and rewrite the openings to directly answer specific questions

- Set up monitoring so you have a baseline -- even a simple tool like Otterly.AI or Trakkr.ai is better than flying blind

- Run answer gap analysis against 2-3 competitors -- find the prompts they're winning that you're not

- Create content specifically for those gaps -- structured as answer capsules, with original data where possible

- Track visibility changes weekly and iterate

The brands getting cited consistently in 2026 aren't doing anything magical. They've just built a system for finding gaps and filling them faster than their competitors. The tools exist to do this efficiently -- the question is whether you're using them.