Key takeaways

- Writing for humans, Google, and AI models is no longer three separate jobs -- the best content satisfies all three simultaneously

- AI-generated drafts are useful, but human expertise, real experience, and genuine opinions are what get cited by AI models and trusted by readers

- Generative Engine Optimization (GEO) is now a real discipline alongside SEO -- brands that ignore it are invisible in ChatGPT, Perplexity, and Google AI Overviews

- Structured, well-organized content with clear answers to specific questions performs best across all three audiences

- Tracking where your content appears in AI responses is now as important as tracking Google rankings

Content marketing used to be a two-party negotiation. Write something useful for humans, make sure Google could understand it, and you were mostly done. That era is over.

In 2026, there's a third audience sitting at the table: AI models. ChatGPT, Perplexity, Claude, Gemini, Google AI Overviews -- these systems are now answering millions of questions every day, and they're pulling from the web to do it. If your content isn't in those answers, you're invisible to a growing chunk of your potential audience.

The good news is that writing for all three audiences isn't as contradictory as it sounds. The bad news is that most content teams are still optimizing for only one or two of them.

Here's how to think about all three -- and how to write content that works across the board.

Why the three-audience problem matters now

A few years ago, "AI search" was a niche concern. Now it's mainstream. Google's AI Overviews appear on a significant portion of searches. Perplexity has millions of daily active users. ChatGPT's web browsing and search features mean people are asking it questions they used to type into Google.

The shift changes the economics of content. When an AI model answers a question, it often cites two or three sources -- not ten blue links. If you're not one of those sources, you don't just rank lower. You don't exist in that answer at all.

At the same time, Google hasn't gone anywhere. Traditional organic search still drives enormous traffic. And humans -- actual people reading your content -- still need to find it useful, trustworthy, and worth their time.

So the question isn't "should I optimize for AI or for Google?" It's "how do I write content that works for both, without sacrificing the human experience?"

What each audience actually wants

Before getting into tactics, it helps to be clear about what each audience is looking for -- because they overlap more than you'd think.

What humans want

People want content that answers their actual question, doesn't waste their time, and feels like it was written by someone who knows what they're talking about. They want specifics, not vague generalities. They want to feel like the author has actually done the thing they're writing about.

Trust is the core currency. A reader who doesn't trust you will bounce. One who does will share, link, and come back.

What Google wants

Google's quality guidelines have been pointing in the same direction for years: E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). The addition of "Experience" to the original E-A-T framework was significant -- Google wants to see that content comes from people who have firsthand knowledge of a topic, not just people who read about it.

Technically, Google also wants fast-loading pages, clean structure, proper use of headings, internal linking, and content that matches search intent.

What AI models want

This is the newer piece. AI models like ChatGPT and Perplexity tend to cite content that:

- Directly answers specific questions with clear, factual statements

- Is structured in a way that's easy to parse (headers, lists, short paragraphs)

- Comes from domains with established authority

- Contains original data, research, or expert opinions that can't be found elsewhere

- Is frequently referenced by other sources on the web

Notice anything? These overlap heavily with what Google and humans want. The difference is that AI models are even more literal. They're looking for content they can extract a clean answer from. Vague, hedging, or overly promotional content gets skipped.

The content strategy that works across all three

Lead with a real answer

The single most effective thing you can do for all three audiences is answer the question in the first paragraph. Not after a lengthy preamble. Not buried in section four. Right at the top.

This sounds obvious, but most content still buries the lede. Writers pad introductions with context that the reader doesn't need. AI models often skip these entirely. Google's featured snippets reward directness.

If someone searches "how long does it take to rank on Google," your first paragraph should contain a direct answer. Then you can explain the nuance.

Use structure as a signal, not just a style choice

Headers, bullet points, numbered lists, and short paragraphs aren't just easier to read -- they're signals. Google uses them to understand content hierarchy. AI models use them to extract specific answers. Humans use them to scan before committing to reading.

A well-structured article with clear H2s and H3s that map to real questions will outperform a wall of text even if the wall of text contains better information. Structure is how you make your information accessible.
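
If you want a quick way to spot walls of text before publishing, here's a minimal Python sketch. It assumes a markdown-style draft where blank lines separate paragraphs. The function name `long_paragraphs` and the 80-word threshold are illustrative choices, not an established standard.

```python
import re

def long_paragraphs(text: str, max_words: int = 80) -> list[tuple[int, int]]:
    """Return (block_index, word_count) for prose paragraphs over max_words.

    Assumption: blocks are separated by blank lines, as in markdown.
    """
    blocks = [b.strip() for b in re.split(r"\n\s*\n", text) if b.strip()]
    flagged = []
    for index, block in enumerate(blocks):
        # Skip headings, list items, and table rows: they are already scannable.
        if block.startswith(("#", "-", "*", "|")):
            continue
        count = len(block.split())
        if count > max_words:
            flagged.append((index, count))
    return flagged

# Hypothetical draft: a heading, a short answer, then a 120-word wall of text.
draft = "## How long does SEO take?\n\nShort answer first.\n\n" + "word " * 120
print(long_paragraphs(draft))  # [(2, 120)]
```

Treat the flagged blocks as candidates for splitting or for turning into a list, not as automatic failures. Some ideas genuinely need a long paragraph.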

Write from genuine experience

This is where human content has a durable advantage over pure AI output. An AI model can synthesize existing information. It can't tell you what it was actually like to run a content audit on a 50,000-page site, or what surprised you about a product after using it for six months.

That firsthand experience -- specific, concrete, sometimes uncomfortable -- is exactly what Google's E-E-A-T guidelines reward. It's also what AI models look for when deciding which sources to cite. And it's what humans find compelling.

The practical implication: don't just describe things. React to them. Include what you noticed, what surprised you, what you'd do differently. That specificity is hard to fake and hard to ignore.

Include original data and expert perspectives

Content that contains information no one else has is inherently more citable. This doesn't require a massive research budget. It can be:

- A survey of your own customers

- Data from your internal analytics (anonymized where needed)

- Quotes from subject matter experts you interviewed

- Your own test results or experiments

When AI models are choosing between two articles that cover the same topic, the one with original data tends to win. When Google is deciding which content to feature, unique information carries weight. When a human is deciding whether to trust you, evidence matters.

Match intent precisely

"Content marketing" is not one topic. "How to write a content brief" and "what is content marketing" are completely different intents, and they need different content. Writing a long explainer when someone wants a quick how-to, or a shallow overview when someone needs depth, fails all three audiences.

Before writing anything, be clear about what the person searching for this topic actually wants to do. Are they researching? Comparing options? Ready to act? The content structure, length, and tone should all follow from that.

The GEO layer: optimizing specifically for AI citations

Traditional SEO and writing for AI models share a lot of DNA, but there are specific things you can do to improve your chances of being cited by ChatGPT, Perplexity, and similar systems.

Answer questions explicitly

AI models respond to questions. Structure your content around questions -- use them as headers, answer them directly, and make the answer self-contained enough that it could be extracted and still make sense.

"What is the best time to post on LinkedIn?" followed by a direct, specific answer is more citable than a paragraph that meanders toward a conclusion.
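
One way to check whether your answers are self-contained is to pull out each question-style header together with the paragraph directly beneath it, then read each pair in isolation. The sketch below assumes `#`-style markdown headings and blank lines between blocks. `question_answers` is a hypothetical helper, and the sample text is placeholder content.

```python
import re

# Matches a single-line markdown heading such as "## What is X?"
HEADING = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")

def question_answers(markdown: str) -> dict[str, str]:
    """Map each heading that ends in '?' to the first paragraph beneath it."""
    answers: dict[str, str] = {}
    current = None  # question heading still waiting for its answer
    for block in re.split(r"\n\s*\n", markdown):
        block = block.strip()
        if not block:
            continue
        match = HEADING.match(block)
        if match:
            title = match.group(2)
            current = title if title.endswith("?") else None
        elif current is not None:
            # Collapse line breaks so the answer reads as one extracted snippet.
            answers[current] = " ".join(block.split())
            current = None
    return answers

doc = (
    "## What is the best time to post on LinkedIn?\n\n"
    "Placeholder direct answer.\n\n"
    "Placeholder nuance.\n\n"
    "## Background\n\n"
    "Placeholder context."
)
print(question_answers(doc))
```

If an extracted answer only makes sense after reading the paragraphs around it, that's a sign it needs rewriting.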

Build topical authority, not just individual articles

AI models tend to cite sources that cover a topic comprehensively. One great article on a subject is less powerful than ten interconnected articles that cover the topic from every angle. This is the same logic as SEO topical authority, but it matters even more for AI citations.

If you want to be the source AI models recommend for content marketing advice, you need to cover content strategy, content briefs, content distribution, content measurement, and related topics -- not just one piece.

Get cited and mentioned elsewhere

AI models learn from the web. If your content is referenced, linked to, and discussed on Reddit, in other articles, and across the web, models are more likely to treat it as authoritative. This is why link building and PR still matter -- not just for Google, but for AI visibility.

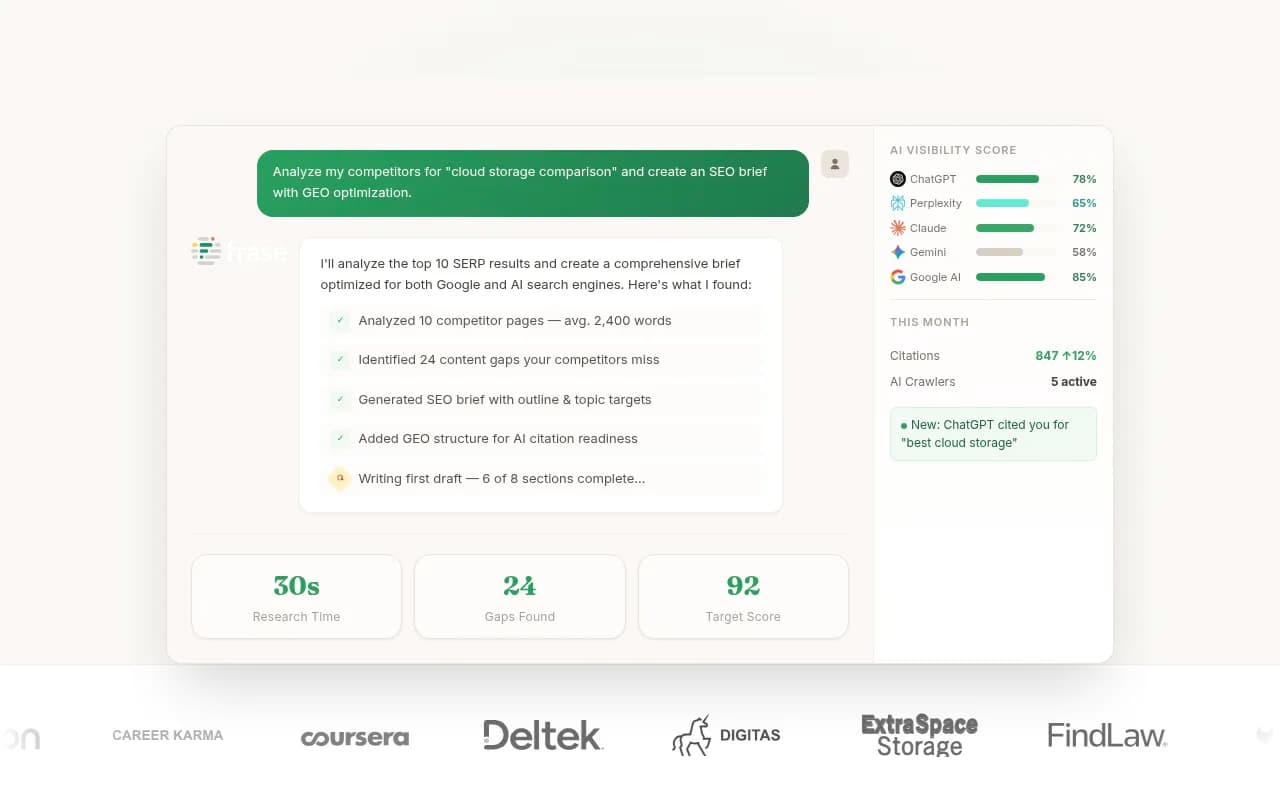

Track what's actually happening

You can't improve what you don't measure. Knowing which of your pages are being cited by AI models, which prompts trigger those citations, and how your visibility compares to competitors is now a real discipline. Tools like Promptwatch exist specifically to track this -- showing you where you're appearing in AI responses and, more usefully, where competitors are appearing that you're not.
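
To make the gap idea concrete, here's a minimal sketch of the underlying logic. It assumes you already have a list of `(prompt, cited_domain)` pairs, collected by hand or exported from whatever tracking tool you use. The function name, the record format, and the domains are all hypothetical, and this isn't how any particular product works.

```python
from collections import defaultdict

def citation_gaps(records, own_domain):
    """Return prompts where other domains are cited but own_domain is not.

    records: iterable of (prompt, cited_domain) pairs (assumed format).
    """
    cited_by_prompt = defaultdict(set)
    for prompt, domain in records:
        cited_by_prompt[prompt].add(domain)
    return {
        prompt: sorted(domains)
        for prompt, domains in cited_by_prompt.items()
        if own_domain not in domains
    }

# Hypothetical observations, not real data.
records = [
    ("best content brief tools", "example.com"),
    ("best content brief tools", "competitor-a.example"),
    ("how to measure content roi", "competitor-a.example"),
    ("how to measure content roi", "competitor-b.example"),
]
print(citation_gaps(records, "example.com"))
# {'how to measure content roi': ['competitor-a.example', 'competitor-b.example']}
```

The prompts that come back are your shortlist: topics where AI models are citing someone, just not you.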

The human creativity advantage

Here's something worth sitting with: the rise of AI content generation has made human creativity more valuable, not less.

When anyone can generate a competent 1,500-word article on any topic in 30 seconds, competent but generic content becomes worthless. What stands out is perspective. Opinion. The willingness to say something specific and potentially controversial. The kind of content that could only come from a person who has actually lived through something.

This isn't a soft, feel-good point. It's a competitive reality. AI models are trained to synthesize consensus. They're not great at surfacing genuinely contrarian or novel perspectives. Content that takes a real position -- and backs it up -- gets noticed by humans and increasingly by AI systems that are trying to surface diverse, high-quality viewpoints.

The practical implication for content teams: use AI tools for the mechanical parts (research synthesis, draft outlines, SEO optimization) and reserve human effort for the parts that require genuine judgment, experience, and voice.

Tools that help you write for all three audiences

The tooling landscape has matured significantly. Here are some categories worth knowing.

Content optimization for Google and humans

Tools like Surfer SEO and Clearscope help you optimize content for search intent and keyword coverage. They analyze top-ranking pages and tell you what topics, terms, and structures your content needs.

Frase takes a similar approach with a stronger focus on research and brief creation -- useful if you're briefing writers or trying to understand what questions a topic needs to answer.

AI writing assistance

For drafting and ideation, tools like Jasper and Copy.ai have become genuinely useful -- not as replacements for human writers, but as accelerators. They're best used for generating options, overcoming blank-page paralysis, and handling repetitive content formats.

AI visibility and GEO tracking

This is the newest category and the one most content teams are still ignoring. If you're not tracking how your content performs in AI search results, you're flying blind on an increasingly important channel.

Promptwatch tracks your brand and content across 10 AI models, shows you which prompts you're appearing for, and -- critically -- shows you which prompts competitors are winning that you're not. That gap analysis is what turns monitoring into action.

For teams that want additional perspectives, tools like Profound and AthenaHQ offer AI visibility monitoring, though they're more focused on tracking than on helping you close the gaps.

Content brief and planning tools

Content Harmony and MarketMuse are worth mentioning for teams that need to plan content at scale. Both help you understand what a piece of content needs to cover to be competitive.

A practical framework for 2026 content

Here's how to think about any piece of content you're creating:

Start with the question. What specific question is this content answering? Write it down. If you can't state it in one sentence, the content isn't focused enough.

Answer it immediately. Put a direct answer in the first paragraph. You can expand on it for the rest of the article, but give the reader (and the AI model) the answer upfront.

Structure for scanning. Use headers that are themselves questions or clear statements. Use bullet points for lists of items. Keep paragraphs short. Make it easy to extract information.

Add what only you can add. What's the firsthand experience, original data, or specific opinion that no one else has? That's the part that makes the content worth citing.

Check the intent match. Do the structure and depth match what someone searching for this topic actually needs? A quick answer for a quick question, depth for a research query.

Track the results. Which pages are getting cited in AI responses? Which prompts are you winning? Which are you losing to competitors? Adjust accordingly.

The comparison: how different content approaches stack up

| Approach | Ranks on Google | Gets cited by AI | Trusted by humans | Scalable |
|---|---|---|---|---|
| Pure AI-generated, unedited | Sometimes | Rarely | Low | Yes |
| Human-written, no SEO | Sometimes | Rarely | High | No |
| SEO-optimized, no GEO | Yes | Sometimes | Medium | Yes |
| Expert-led + structured + GEO-optimized | Yes | Yes | High | With effort |
| Original data + expert voice + full optimization | Yes | Yes | Very high | Hard but worth it |

The top row is where a lot of teams are operating right now. The bottom row is where the durable competitive advantage is.

What this means for content teams

The practical reality is that content teams need to expand their definition of success. "Ranking on page one" is still a goal, but it's no longer the only goal. Being cited in a ChatGPT response, appearing in a Perplexity answer, showing up in Google AI Overviews -- these are now real traffic and visibility channels that deserve measurement and optimization.

That doesn't mean abandoning what works. It means adding a layer. The content that performs best in 2026 is content that was already good -- genuinely useful, well-structured, authoritative -- with additional attention paid to how AI models discover and cite it.

The brands that figure this out early are likely to have a significant head start. AI models appear to develop citation habits: once they start consistently citing a source for a topic, that source tends to stay cited. Getting in early matters.

The content marketing fundamentals haven't changed: be useful, be specific, be trustworthy. What's changed is the number of audiences you need to be useful, specific, and trustworthy for -- and the tools available to measure whether you're succeeding with each of them.