Key takeaways

- Most traditional technical SEO tools (Screaming Frog, Sitebulb, Oncrawl) are built for Googlebot and don't surface how LLM crawlers like GPTBot or ClaudeBot interact with your site.

- LLM crawlability is a distinct problem: AI models don't just index pages, they synthesize answers from content they can actually read and trust -- so structure, schema, and accessibility matter differently.

- A handful of newer platforms now log AI crawler activity in real time, letting you see which pages LLMs are reading, how often, and what errors they hit.

- The best 2026 stack combines a traditional crawler for Google-facing issues with an AI visibility platform that closes the loop between crawlability, citations, and content gaps.

- Tools like Promptwatch go beyond monitoring to show you what content LLMs want but can't find on your site -- and help you create it.

Why "technical SEO" means something different in 2026

For most of the last decade, technical SEO had a clear definition: make sure Googlebot can crawl your site, index your pages, and understand your content. Fix your Core Web Vitals. Add schema markup. Submit your sitemap. Done.

That playbook still matters. But it's no longer the whole game.

LLMs like ChatGPT, Claude, Perplexity, and Gemini are now answering millions of questions that used to send users to search results. And these models have their own crawlers -- GPTBot, ClaudeBot, PerplexityBot, and others -- that visit your site independently of Googlebot. They have different behaviors, different access patterns, and different content preferences.

The problem is that most technical SEO tools were built entirely for Google. They flag broken links, missing meta descriptions, slow page loads, and crawl budget issues. All useful. But they're blind to what GPTBot saw when it visited your product page last Tuesday, whether it hit a 403 error on your pricing page, or whether it's been ignoring your blog entirely because your content structure doesn't lend itself to AI synthesis.

This guide covers both sides of the technical optimization problem in 2026: the traditional crawlers that still matter for Google, and the newer platforms that actually tell you what's happening when AI engines visit your site.

The two-layer technical SEO problem

Before getting into tools, it helps to understand what you're actually solving for.

Layer 1: Traditional crawlability (still matters)

Googlebot crawlability is still foundational. If your pages aren't indexed by Google, they're less likely to appear in the training data or retrieval pipelines that feed LLMs, and some AI search products draw on traditional search indexes to find and rank the sources they cite. So the basics -- clean site architecture, fast load times, proper canonicalization, no broken internal links -- still apply.

Layer 2: LLM crawlability (the new problem)

LLM crawlers behave differently from Googlebot in a few important ways:

- They typically visit pages more selectively and less frequently than Googlebot, though crawl volume varies by bot and by site.

- They're more sensitive to content that's hard to parse: JavaScript-heavy pages, paywalled content, poorly structured HTML, and thin pages with low information density.

- They respond well to clear question-and-answer structures, FAQ schema, and content that directly addresses specific queries.

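- They can be blocked by your robots.txt -- intentionally or accidentally. Many sites have blocked GPTBot without realizing it. Vendors often run several agents (OpenAI, for example, lists GPTBot for training and OAI-SearchBot for ChatGPT search), so check the rules for each one: blocking the wrong agent can cut off your chances of being cited in ChatGPT responses.

- They don't just crawl for indexing -- they crawl to understand what your content says and whether it's worth citing.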

Fixing Layer 2 requires tools that can actually log and analyze LLM crawler activity, not just Googlebot behavior.

Traditional technical SEO crawlers (still essential)

These tools handle the Google-facing side of technical optimization. They're not built for LLM visibility, but they're still a necessary part of any serious SEO stack.

Screaming Frog

The industry standard for desktop crawling. Screaming Frog scans your entire site for broken links, missing tags, redirect chains, duplicate content, and hundreds of other technical issues. It's fast, configurable, and handles large sites well. The main limitation: it's entirely Google-centric. It tells you nothing about how AI crawlers interact with your site.

Sitebulb

Sitebulb takes a similar approach to Screaming Frog but adds better visualization and prioritized recommendations. Its "hints" system is genuinely useful for teams that need to triage issues quickly. Like Screaming Frog, it's built around Googlebot behavior.

Oncrawl

Oncrawl is the enterprise option for large-scale sites. It handles millions of URLs, integrates with Google Search Console and log files, and offers deeper analysis of crawl budget and page performance. If you're running a site with hundreds of thousands of pages, Oncrawl's log file analysis is particularly valuable -- though again, it's analyzing Googlebot logs, not LLM crawler logs.

JetOctopus

JetOctopus sits between Screaming Frog and Oncrawl in terms of scale and complexity. It's strong on JavaScript rendering and log file analysis, and it's faster than many competitors at processing large crawls. Worth considering if you're outgrowing Screaming Frog but don't need Oncrawl's full enterprise feature set.

Botify

Botify is one of the more forward-thinking enterprise SEO platforms. It has started adding GEO (Generative Engine Optimization) capabilities alongside its traditional crawl and log analysis tools. If you're an enterprise team that needs both Google crawl analysis and early LLM visibility features in one platform, Botify is worth evaluating.

Tools that address LLM crawlability specifically

This is where the market is still catching up. Most platforms in this category are primarily AI visibility trackers -- they tell you whether your brand appears in ChatGPT or Perplexity responses. But a few are starting to address the underlying technical question: why isn't your content being crawled and cited?

AI crawler log analysis

The most direct way to understand LLM crawlability is to analyze your server logs for AI crawler activity. This tells you:

- Which AI crawlers are visiting your site (GPTBot, ClaudeBot, PerplexityBot, etc.)

- Which pages they're reading and how often

- Which pages they're skipping or hitting errors on

- How their behavior has changed over time

Most traditional log analysis tools don't surface this data in a useful way. You'd need to manually filter logs for known AI crawler user agents -- which is doable but tedious.
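That filtering is easy to script. The sketch below assumes your server writes the Apache/Nginx "combined" log format, and it matches a short, illustrative list of user-agent tokens (GPTBot, OAI-SearchBot, ChatGPT-User, ClaudeBot, PerplexityBot). The token list, the regex, and the function names are assumptions to adapt, not a complete catalogue of AI crawlers. User-agent strings can also be spoofed, so treat the counts as claimed identity unless you verify source IPs against the ranges some vendors publish.

```python
import re
from collections import Counter
from typing import Iterable, Optional

# Illustrative user-agent tokens for some AI crawlers. Not exhaustive:
# check vendor documentation or a maintained list before relying on it.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot"]

# Apache/Nginx "combined" format (assumed):
# host ident user [time] "METHOD path PROTO" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def classify_agent(agent: str) -> Optional[str]:
    """Return the first listed AI crawler token found in a user-agent string."""
    lowered = agent.lower()
    for bot in AI_CRAWLERS:
        if bot.lower() in lowered:
            return bot
    return None


def summarize(lines: Iterable[str]):
    """Tally AI crawler hits per page, error responses per page, and hits per day."""
    hits, errors, daily = Counter(), Counter(), Counter()
    for line in lines:
        m = LOG_LINE.match(line)
        if m is None:
            continue  # not in combined format; skip
        bot = classify_agent(m["agent"])
        if bot is None:
            continue  # not one of the listed AI crawlers
        hits[(bot, m["path"])] += 1
        day = m["time"].split(":", 1)[0]  # "10/Mar/2026:12:00:00 +0000" -> "10/Mar/2026"
        daily[(bot, day)] += 1
        status = int(m["status"])
        if status >= 400:  # 4xx/5xx, e.g. a 403 on a pricing page
            errors[(bot, m["path"], status)] += 1
    return hits, errors, daily
```

For example, `hits, errors, daily = summarize(open("access.log", encoding="utf-8"))` followed by `errors.most_common(10)` lists the pages returning the most errors to AI crawlers, and `daily` shows how each bot's activity changes over time.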

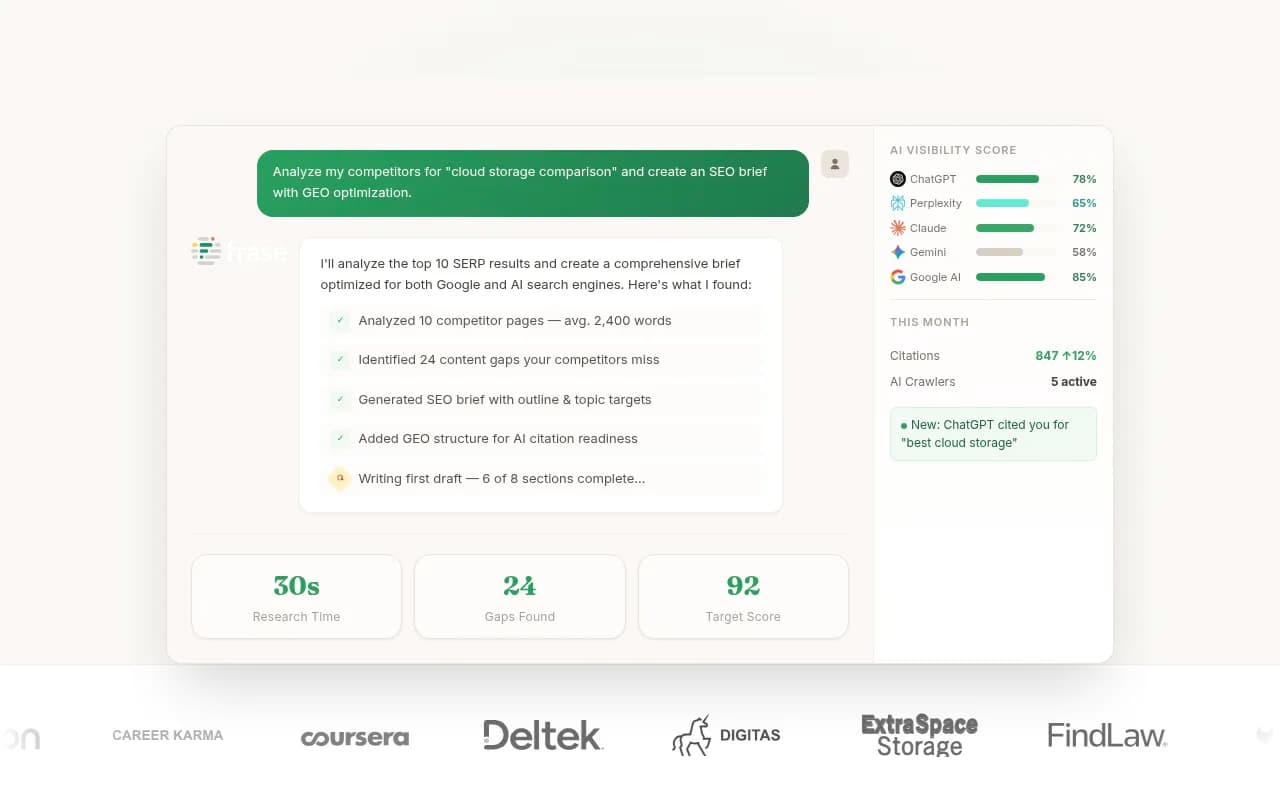

Promptwatch has built AI crawler log analysis directly into its platform. You get real-time logs of AI crawler activity, page-level breakdowns, and error reporting. It's one of the few tools that connects crawler behavior to citation outcomes -- so you can see whether the pages LLMs are reading are actually being cited in their responses.

Promptwatch also flags indexing issues specific to AI crawlers, which is different from what GSC or Screaming Frog would surface.

DarkVisitors

DarkVisitors takes a different angle: it tracks known AI agents and bots visiting your site and helps you manage access via robots.txt. If you want to control which AI crawlers can access your content (or verify that you haven't accidentally blocked them), DarkVisitors is a useful reference. It maintains a database of known AI crawler user agents and their behaviors.

Lumar

Lumar (formerly DeepCrawl) has been expanding its feature set to include GEO-related checks alongside traditional technical SEO. It's an enterprise platform that handles large-scale crawls and has started incorporating AI search visibility analysis. If you're already using Lumar for technical SEO, it's worth checking whether its GEO features have matured enough for your use case.

Comparison: technical SEO tools by LLM crawlability support

| Tool | Traditional crawl | LLM crawler logs | AI citation tracking | Content gap analysis | Best for |
|---|---|---|---|---|---|
| Screaming Frog | Excellent | No | No | No | Google-focused technical audits |
| Sitebulb | Excellent | No | No | No | Visualized technical audits |
| Oncrawl | Excellent | No | No | No | Enterprise Google crawl analysis |
| JetOctopus | Very good | No | No | No | Large-scale JS-heavy sites |
| Botify | Very good | Partial | Partial | No | Enterprise GEO + technical SEO |
| Lumar | Very good | Partial | Partial | No | Enterprise GEO + technical SEO |
| DarkVisitors | No | Yes (access control) | No | No | Managing AI bot access |
| Promptwatch | No | Yes | Yes | Yes | Full LLM visibility + optimization |

The pattern is clear: traditional crawlers are strong on Google-facing issues but blind to LLM behavior. The newer platforms are strong on LLM visibility but don't replace a dedicated technical crawler for Google-facing issues.

What good LLM crawlability actually looks like

Before you can fix LLM crawlability issues, you need to know what you're optimizing for. A few things that actually matter:

Content structure and parsability

LLMs prefer content that's easy to extract meaning from. That means:

- Clear headings that signal topic hierarchy

- Direct answers to questions, not buried in marketing copy

- Minimal JavaScript dependency for core content (LLM crawlers often don't execute JS)

- Clean HTML with semantic markup
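The first point can be linted cheaply. This sketch uses Python's standard-library html.parser to list headings in document order and report places where the outline skips a level (an h1 followed directly by an h3, say). Treating a skipped level as a problem is a heuristic assumption on our part; AI vendors don't document it as a citation factor, and the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Record heading levels (h1-h6) in the order they appear."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # html.parser lower-cases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_heading_levels(html: str) -> list[tuple[int, int]]:
    """Return (previous, current) pairs where the outline jumps down more than one level."""
    parser = HeadingCollector()
    parser.feed(html)
    parser.close()
    return [
        (prev, cur)
        for prev, cur in zip(parser.levels, parser.levels[1:])
        if cur > prev + 1
    ]
```

Moving back up the outline (an h3 followed by an h2) is not flagged, since that is a normal section boundary.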

A page that renders beautifully in a browser but relies on JavaScript to load its main content may be invisible to LLM crawlers. This is a real technical issue that traditional SEO tools don't flag.
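One rough way to check for this: fetch the page with a plain HTTP client (curl, or urllib.request in Python), which doesn't run JavaScript, and test whether a phrase from your main content appears in the text of the raw HTML. The sketch below does only the text check on an HTML string. The function name and the choice to ignore script, style, and template contents are assumptions, not how any particular AI crawler parses pages.

```python
from html.parser import HTMLParser


class RawTextExtractor(HTMLParser):
    """Collect text present in the HTML as served, skipping code-only elements."""

    SKIP = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self.skip_depth = 0  # > 0 while inside a skipped element
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.skip_depth > 0:
            self.skip_depth -= 1

    def handle_data(self, data):
        if self.skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def raw_html_contains(html: str, phrase: str) -> bool:
    """True if `phrase` appears in the page text before any JavaScript runs."""
    parser = RawTextExtractor()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.chunks)
    return phrase.lower() in text.lower()
```

For a live check, pass in `urllib.request.urlopen(url).read().decode("utf-8", "replace")`. Some servers vary their response by user agent, so what your client receives may not match what a specific crawler gets.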

Schema markup for AI context

Structured data (FAQ schema, HowTo schema, Article schema) can help AI models understand what your content is about and how to use it. This is an area where traditional SEO and LLM optimization overlap: schema that helps Google can also help AI models. Tools like Semrush and Ahrefs flag schema issues in their site audits, and fixing those issues is worth prioritizing.
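FAQ markup is usually embedded in the page as JSON-LD. This sketch builds a schema.org FAQPage object (Question items, each with an acceptedAnswer) from question-and-answer pairs and wraps it in a script element. The helper names are hypothetical, and valid markup doesn't guarantee that any given AI crawler reads or uses it.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage JSON-LD object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def script_tag(data) -> str:
    """Wrap JSON-LD in the <script> element that goes into the page's HTML."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" (valid JSON as "<\/") so answer text can't close the script early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'
```

The answers should match text that is visible on the page; the markup describes content, it doesn't replace it.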

robots.txt and crawler access

Check your robots.txt for any rules that might be blocking AI crawlers. Common mistakes include a blanket Disallow: / under User-agent: *, which applies to every crawler that doesn't have its own group (including AI crawlers you never listed), and rules added during a security review that inadvertently block GPTBot or ClaudeBot. DarkVisitors maintains a list of known AI crawler user agents to check against.
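You can also test your rules programmatically. The sketch below feeds robots.txt text to Python's urllib.robotparser and reports whether each listed AI user-agent token may fetch a URL. The token list is an assumption to check against vendor docs or DarkVisitors. The standard-library parser is also simpler than real crawlers: it applies the first matching rule in file order rather than RFC 9309's longest-match rule, and it doesn't understand wildcards inside paths, so confirm edge cases against each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# Illustrative user-agent tokens; confirm current names in each vendor's docs.
AI_USER_AGENTS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot"]


def ai_access_report(
    robots_txt: str, url: str, agents: list[str] = AI_USER_AGENTS
) -> dict[str, bool]:
    """Map each user-agent token to whether robots.txt lets it fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    parser.modified()  # mark the rules as loaded so can_fetch() evaluates them
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# A blanket rule under "User-agent: *" blocks every bot without its own group.
BLANKET = """\
User-agent: *
Disallow: /

User-agent: Googlebot
Allow: /
"""

print(ai_access_report(BLANKET, "https://example.com/pricing"))
# -> every AI token maps to False; only Googlebot has its own Allow group
```

To check a live site instead of a string, RobotFileParser also offers `set_url("https://example.com/robots.txt")` followed by `read()`.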

Page authority and citation likelihood

LLMs don't cite every page they crawl -- they cite pages that appear authoritative and relevant. This is where the technical and content sides of LLM optimization intersect. A page that's technically accessible but thin on substance won't get cited. This is why tools that combine crawler analysis with citation tracking are more useful than crawlers alone.
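Once you have both data sets, the join itself is simple. This sketch assumes two hypothetical inputs: AI crawler hits per path (for example, from server log analysis) and the set of paths a citation tracker reports as cited. It doesn't reflect any specific product's export format, and the bucket names are illustrative.

```python
def crawl_citation_report(crawled: dict[str, int], cited: set[str]) -> dict[str, list[str]]:
    """Bucket pages by whether AI crawlers read them and whether they were cited.

    crawled: path -> AI crawler hit count (hypothetical input, e.g. from server logs)
    cited:   paths reported as cited in AI answers (hypothetical input)
    """
    crawled_paths = set(crawled)
    return {
        "crawled_and_cited": sorted(crawled_paths & cited),
        # Read by crawlers but never cited, most-crawled first: candidates for
        # stronger, more direct content.
        "crawled_not_cited": sorted(crawled_paths - cited, key=lambda p: (-crawled[p], p)),
        # Cited without any logged crawler hit: could reflect other data sources
        # or gaps in the logs; worth investigating rather than assuming.
        "cited_not_crawled": sorted(cited - crawled_paths),
    }
```

The "crawled_not_cited" bucket is the interesting one: those pages are technically accessible, so the likelier problem is the content itself.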

The content side of the technical problem

Here's something that often gets missed in technical SEO discussions: LLM crawlability problems aren't always technical. Sometimes LLMs are crawling your site just fine -- they're just not finding content worth citing.

This is an answer gap problem. Your competitors are being cited for certain queries because they have content that directly addresses those questions. You're not being cited because you don't have that content, or because your existing content doesn't answer the question clearly enough.

Tools that surface these gaps are technically relevant even if they don't touch your server configuration. Promptwatch's Answer Gap Analysis shows exactly which prompts competitors are visible for that you're not -- and the built-in content generation tools help you create pages that address those gaps. The crawler logs then close the loop: you can see whether AI crawlers are picking up your new content and whether it's translating into citations.

A few other tools worth knowing for the content side:

Clearscope is strong for content optimization against traditional search signals, and its recommendations often align with what LLMs want to see: comprehensive topic coverage, clear structure, and semantic depth.

MarketMuse takes a similar approach with more emphasis on topical authority -- building out content clusters that signal expertise to both Google and AI models.

Frase combines research, content briefs, and optimization in one workflow. It's particularly useful for teams that need to move quickly from identifying a content gap to publishing something that addresses it.

Building a 2026 technical SEO stack for LLM visibility

Given all of the above, here's a practical way to think about your tool stack:

For Google-facing technical issues: Screaming Frog or Sitebulb for regular crawl audits. Oncrawl or JetOctopus if you're at enterprise scale or need log file analysis for Googlebot.

For LLM crawler visibility: Promptwatch for real-time AI crawler logs, citation tracking, and content gap analysis. DarkVisitors for auditing your robots.txt and understanding which AI bots are accessing your site.

For content optimization: Clearscope or Frase for ensuring your content is structured and comprehensive enough to be worth citing. Promptwatch's built-in content generation for creating pages specifically engineered to appear in AI responses.

For ongoing monitoring: You need to track whether your changes are working. Page-level citation tracking (which Promptwatch provides) tells you whether specific pages are being cited by specific AI models -- which is the actual outcome you're optimizing for.

The key insight is that LLM technical SEO is a loop, not a checklist. You fix crawler access, improve content structure, create content for identified gaps, and then track whether citations increase. Traditional technical SEO tools handle the first part. AI visibility platforms handle the rest.

A note on robots.txt and the access question

One thing worth flagging explicitly: there's an active debate in 2026 about whether to allow or block AI crawlers. Some site owners are blocking GPTBot and ClaudeBot because they don't want their content used for AI training without compensation. Others are blocking them accidentally.

If your goal is to appear in AI-generated responses, you need to allow AI crawlers to access your content. Blocking them is a fast path to invisibility in AI search. If you have concerns about content licensing or training data use, that's a legitimate consideration -- but understand the tradeoff, and note that some vendors run separate agents for training and for search, so the two can be allowed or blocked independently. You can't be cited by ChatGPT if OpenAI's crawlers can't read your pages.

DarkVisitors is useful here for understanding exactly what you're allowing and blocking, and for making that decision deliberately rather than by accident.

What most teams are still missing

The honest state of the market in 2026: most SEO teams have their Google-facing technical stack sorted. Screaming Frog audits, GSC monitoring, Core Web Vitals tracking -- this is standard practice.

What most teams are missing is any visibility into what happens after an AI crawler visits their site. They don't know which pages LLMs are reading. They don't know which pages are generating errors for AI crawlers. They don't know whether their content is being cited, and if not, why not.

That's the gap that the newer generation of AI visibility platforms is filling. The tools that combine crawler log analysis with citation tracking and content gap analysis are the ones that turn technical SEO from a maintenance task into an actual growth lever for AI search visibility.

The traditional crawlers aren't going anywhere -- you still need them. But if you're only running Screaming Frog and calling it done, you're optimizing for a search landscape that's already changing underneath you.