Key takeaways

- AI search engines (ChatGPT, Perplexity, Google AI Overviews, Claude) pull citations from trusted, well-structured web content -- not from ad spend or domain authority alone

- Getting cited requires a different approach than traditional SEO: answer-first structure, entity clarity, and presence across third-party sources all matter

- Content gaps are the biggest opportunity: find the prompts your competitors appear for that you don't, then create content specifically targeting those gaps

- Tracking AI visibility requires dedicated tooling -- standard Google Analytics won't show you where you're being cited or how often

- The full loop is: audit your visibility, fix content gaps, create AI-optimized content, then measure results

AI search has quietly become the first stop for a growing share of buying decisions. Someone asks ChatGPT "what's the best project management tool for remote teams" and gets a confident, sourced answer -- no scrolling, no clicking through ten blue links. If your brand isn't in that answer, you don't exist for that query.

The good news is that AI models don't favor the biggest brands by default. They favor the brands most clearly associated with specific concepts. That's a real opening for focused, well-positioned businesses to outperform larger competitors who haven't adapted yet.

This guide walks through the full process: how AI search actually works, what makes content get cited, and how to track whether any of it is working.

How AI search actually works

Before optimizing for something, it helps to understand what you're optimizing for.

When someone asks ChatGPT or Perplexity a question, the model doesn't always search the web in real time. Perplexity does live retrieval, and so does ChatGPT's browsing mode, but in general the answer comes from a combination of:

- The model's training data, which includes billions of web pages crawled up to a cutoff date

- Retrieval-augmented generation (RAG), where the model pulls in fresh web content to supplement its knowledge

- Citation weighting, where sources that are frequently referenced, clearly structured, and semantically relevant get surfaced more often

The practical implication: you need to be present in the training data (which means having indexed, crawlable content), and you need to be the kind of source that gets cited when AI models do live retrieval. Both matter.

Google AI Overviews works slightly differently -- it's more tightly integrated with Google's existing index, so traditional SEO signals carry more weight there. But across all platforms, the fundamentals converge: clear, authoritative, well-structured content wins.

Step 1: Audit your current AI visibility

You can't fix what you can't see. Before writing a single word of new content, spend time understanding where you currently appear (and where you don't) in AI responses.

The manual approach is to open ChatGPT, Perplexity, and Claude and ask the questions your customers actually ask. "What are the best [category] tools?" "How do I [solve problem your product addresses]?" "Which [product type] is best for [use case]?" Note where your brand appears, where competitors appear, and what sources get cited.
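
If you run the manual audit more than once, it helps to generate the prompt list and check the answers the same way each time. Here's a minimal sketch in Python, assuming you paste each AI answer in as plain text. The template wording, the function names, and the brand names in the usage note are illustrative, not part of any tool's API.

```python
import re
from itertools import product

# Prompt templates modeled on the questions above; placeholders are illustrative.
TEMPLATES = [
    "What are the best {category} tools?",
    "Which {category} tool is best for {use_case}?",
]


def build_prompts(categories, use_cases):
    """Expand every template with every category/use-case combination."""
    prompts = []
    for template, category, use_case in product(TEMPLATES, categories, use_cases):
        prompt = template.format(category=category, use_case=use_case)
        # Templates without {use_case} produce repeats, so skip duplicates.
        if prompt not in prompts:
            prompts.append(prompt)
    return prompts


def brands_mentioned(answer_text, brands):
    """Return the brands that appear as whole words in an answer (case-insensitive)."""
    found = []
    for brand in brands:
        pattern = r"\b" + re.escape(brand) + r"\b"
        if re.search(pattern, answer_text, flags=re.IGNORECASE):
            found.append(brand)
    return found
```

Calling `build_prompts(["CRM"], ["small agencies"])` gives you the questions to ask, and `brands_mentioned(answer, ["YourBrand", "Competitor A"])` tells you who showed up. Whole-word matching is a simplification: it will miss misspellings and product nicknames.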

This gets tedious fast. At scale, tools like Promptwatch automate this across 10+ AI models simultaneously, tracking prompt-level visibility, which pages get cited, and how your share of voice compares to competitors.

Other tools worth knowing about for visibility auditing include Profound, Otterly.AI, Peec AI, and Ahrefs Brand Radar.

What you're looking for in an audit:

- Which prompts does your brand appear in?

- Which competitor prompts are you missing from?

- Which of your pages are being cited, and which aren't?

- Are there entire topic areas where you have zero AI presence?

That last question is where the real opportunity lives.

Step 2: Find your content gaps

The most valuable output of any AI visibility audit is a list of prompts where competitors appear but you don't. These are your content gaps -- the specific questions AI models are answering without citing you.

This matters more than generic keyword research because AI models answer questions, not keywords. A gap might look like: "ChatGPT recommends Competitor A and Competitor B when someone asks 'best CRM for small agencies' -- but never mentions us, even though we serve that exact market."

Once you have that list, you can prioritize by:

- Prompt volume (how often is this question being asked?)

- Competitive difficulty (how entrenched are the current citations?)

- Relevance (does winning this prompt actually reach your target customers?)
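
The gap list and the three prioritization factors above can be sketched in a few lines of Python. This is one possible scoring approach, not a standard formula. The `PromptResult` fields, the 0-to-1 scales for difficulty and relevance, and the multiplication used in `priority` are all assumptions you should tune to your own data.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str
    brands_cited: set   # brands that appeared in the AI answer
    volume: int         # estimated how often the question is asked (assumed input)
    difficulty: float   # 0.0 (easy) to 1.0 (entrenched citations), assumed scale
    relevance: float    # 0.0 to 1.0, how well the prompt fits your customers


def find_gaps(results, our_brand, competitors):
    """Keep prompts where at least one competitor is cited but we are not."""
    rivals = set(competitors)
    return [
        r for r in results
        if our_brand not in r.brands_cited and r.brands_cited & rivals
    ]


def priority(result):
    """Higher volume and relevance raise the score; higher difficulty lowers it."""
    return result.volume * result.relevance * (1.0 - result.difficulty)


def rank_gaps(results, our_brand, competitors):
    """Return content gaps, most promising first."""
    return sorted(find_gaps(results, our_brand, competitors), key=priority, reverse=True)
```

Prompts where nobody is cited are left out on purpose: they aren't gaps against a competitor, though they may still be worth a look.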

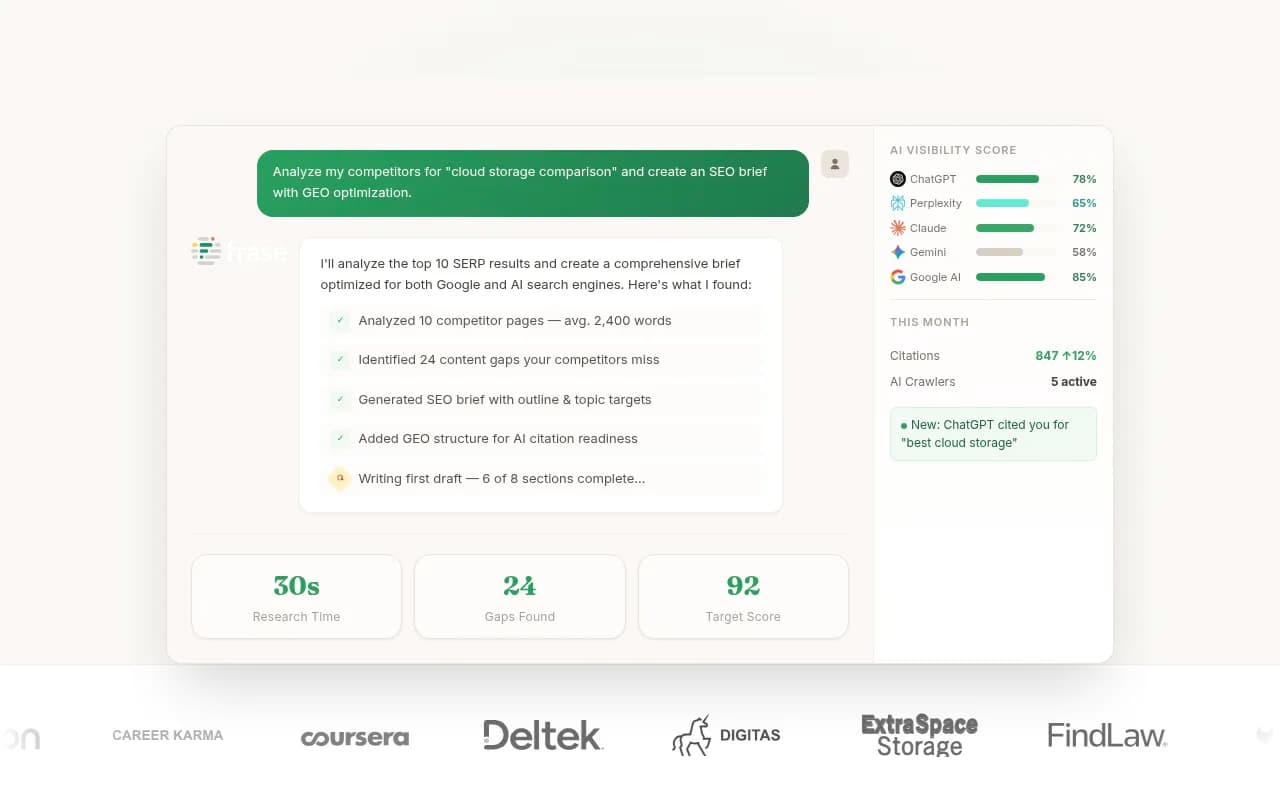

Promptwatch's Answer Gap Analysis does this automatically, showing you the specific content your site is missing and which competitors are filling that space. Most monitoring-only tools stop at showing you the gap -- they don't help you close it.

For manual gap analysis, you can also look at what content competitors have published recently, what Reddit threads and YouTube videos AI models tend to cite in your category, and what questions appear in "People Also Ask" sections that you haven't addressed.

Step 3: Write content AI loves to cite

This is where most guides get vague. "Write high-quality content" isn't advice -- it's a platitude. Here's what actually makes content get cited by AI models.

Lead with a direct answer

AI models are trying to answer a question. If your page buries the answer in paragraph six after three paragraphs of preamble, the model has to work harder to extract it. Pages that open with a clear, direct answer to the question the content addresses get cited more often.

Structure it like this: question in the heading, direct answer in the first 1-2 sentences, then supporting detail and context below. This mirrors how featured snippets work in traditional search -- and for similar reasons.

Use question-based headings

AI models parse content by heading structure. When your H2s and H3s are phrased as questions ("How does X work?" "What's the difference between X and Y?"), you're essentially pre-labeling the content for the model. It can match a user's question to your heading and pull the relevant section.

Compare:

- "Our methodology" (vague, hard to match to a query)

- "How do we calculate pricing?" (direct, matchable)

The second version gets cited. The first gets ignored.
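
To find headings worth rewriting across a large site, a rough check like the one below can help. It's a heuristic sketch: the list of question words is an assumption, and a heading that fails the check isn't necessarily bad.

```python
import re

# Opening words that usually signal a question; this list is an assumption.
QUESTION_WORDS = (
    "how", "what", "why", "which", "when", "where", "who",
    "can", "does", "do", "is", "are", "should",
)


def is_question_heading(heading):
    """True if the heading ends with '?' and opens with a question word."""
    text = heading.strip()
    if not text.endswith("?"):
        return False
    first_word = re.split(r"\W+", text.lower(), maxsplit=1)[0]
    return first_word in QUESTION_WORDS


def headings_to_rewrite(headings):
    """Return the headings that are not phrased as questions."""
    return [h for h in headings if not is_question_heading(h)]
```

Run against the two examples above, "Our methodology" is flagged and "How do we calculate pricing?" passes.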

Cover topics with real depth

AI models don't just want a surface-level answer -- they want to cite sources that demonstrate genuine expertise. A 300-word overview of a topic is less likely to be cited than a 1,500-word piece that covers the nuances, edge cases, and common misconceptions.

This doesn't mean padding. It means actually answering the follow-up questions a reader would have after reading your main answer. Exposure Ninja's work with The Ordinary is a useful example here: they didn't just create content about skincare -- they systematically ensured every piece reinforced the same core concepts (good value, scientifically backed) across their own site and third-party publications. That consistency is what drove AI citations.

Use schema markup

Structured data (FAQ schema, HowTo schema, Article schema) gives AI crawlers explicit signals about what your content contains and how it's organized. It's not a magic bullet, but it reduces ambiguity -- and AI models prefer sources they can parse cleanly.

For FAQ schema specifically: mark up the actual questions and answers on your page. This is especially effective for pages targeting informational queries.
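
As a rough illustration, FAQ markup is a JSON-LD object of type `FAQPage` whose `mainEntity` lists each question with its accepted answer. The Python sketch below builds that object from question/answer pairs. The function name and the sample pair in the usage note are made up, and the output should mirror text that is actually visible on the page.

```python
import json


def faq_jsonld(qa_pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)
```

For example, `faq_jsonld([("How do we calculate pricing?", "Per seat, billed monthly.")])` returns a string you would place inside a `<script type="application/ld+json">` tag on the page.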

Keep sentences and paragraphs short

AI models chunk content when they process it. Long, dense paragraphs are harder to chunk cleanly. Short paragraphs, clear sentences, and logical breaks between ideas make it easier for models to extract and cite specific sections.
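
One way to spot dense passages before publishing is to split a draft on blank lines and flag paragraphs above a word limit. This is a sketch only. The 80-word threshold is an arbitrary assumption, and splitting on blank lines is a stand-in for illustration, not a description of how any particular AI system chunks text.

```python
import re


def split_paragraphs(text):
    """Split text into paragraphs on blank lines, dropping empty pieces."""
    return [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]


def long_paragraphs(text, max_words=80):
    """Return paragraphs longer than max_words (threshold is an assumption)."""
    return [p for p in split_paragraphs(text) if len(p.split()) > max_words]
```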

Step 4: Build topical authority across your site

One well-optimized page isn't enough. AI models develop a sense of which domains are authoritative on which topics based on the breadth and depth of content across the whole site.

If you have one article about project management software but a competitor has 40 articles covering every angle of the topic (comparisons, use cases, how-tos, buyer guides), the competitor's domain reads as more authoritative on that topic. AI models reflect that.

The practical approach is to build topic clusters: a central "pillar" page covering a broad topic, supported by cluster pages that go deep on specific subtopics. Each cluster page links back to the pillar, and the pillar links out to the clusters. This signals topical depth to both traditional search engines and AI models.
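
The two-way linking rule is easy to verify if you can export your internal links from a crawl. Here's a minimal sketch, assuming the export has been loaded into a dictionary that maps each page to the set of pages it links to. The function name and the URL paths in the usage note are hypothetical.

```python
def missing_cluster_links(links, pillar, clusters):
    """List (source, target) pairs where a pillar/cluster link is missing.

    links: dict mapping a page URL to the set of URLs it links to.
    """
    missing = []
    for page in clusters:
        # The pillar should link out to every cluster page.
        if page not in links.get(pillar, set()):
            missing.append((pillar, page))
        # Every cluster page should link back to the pillar.
        if pillar not in links.get(page, set()):
            missing.append((page, pillar))
    return missing
```

Each pair in the result reads as "this page should link to that page". For instance, `("/pm-software/how-tos", "/pm-software")` would mean the how-to page is missing its link back to the pillar.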

Tools like Topical Map AI can help you map out what a full topic cluster should look like for your niche.

For content creation at scale, platforms like Surfer SEO and Clearscope help you optimize individual pieces for semantic coverage -- making sure each article covers the concepts and entities AI models expect to see on that topic.

Step 5: Get cited in third-party sources

Your own website is only part of the picture. AI models pull citations from across the web -- including Reddit threads, YouTube videos, industry publications, review sites, and forums. If your brand is only mentioned on your own domain, that's a thin presence.

The Exposure Ninja framework calls this "strategic ubiquity": making sure your brand appears across the trusted sources that AI models already cite in your category. That means:

- Getting mentioned in relevant industry publications and roundups

- Building a presence in Reddit communities where your customers ask questions

- Creating YouTube content that answers the questions your customers search for

- Earning reviews on platforms like G2, Capterra, or Trustpilot (which AI models frequently cite for product recommendations)

- Getting quoted in journalist pieces and expert roundups

This is essentially digital PR, but with a specific goal: not just brand awareness, but citation presence in the sources AI models trust.

One thing worth tracking: which third-party sources are AI models currently citing when they answer questions in your category? That tells you exactly where to focus your outreach. Promptwatch's Citation & Source Analysis surfaces this data -- showing you which Reddit threads, YouTube videos, and external domains are being cited in AI responses for your target prompts.

Step 6: Optimize for multiple AI platforms, not just one

Different AI models have different citation patterns. Perplexity does live web retrieval and tends to cite recent, well-indexed content. ChatGPT's browsing mode favors authoritative domains. Google AI Overviews leans heavily on traditional SEO signals. Claude tends to cite sources that are clearly structured and factually precise.

Optimizing for one platform and ignoring the others leaves visibility on the table. The good news is that the fundamentals overlap significantly -- clear structure, direct answers, topical depth, and third-party presence help across all platforms.

Where they diverge: Google AI Overviews rewards traditional SEO signals more than other platforms, so your technical SEO foundation matters more there. Perplexity rewards recency, so keeping content updated helps. ChatGPT's product recommendations (Shopping) are a separate surface worth tracking if you sell physical products.

Here's a quick comparison of what matters most on each major platform:

| Platform | Key citation signals | Recency sensitivity | Schema impact |
|---|---|---|---|
| Google AI Overviews | Traditional SEO, E-E-A-T, structured data | Medium | High |
| Perplexity | Live retrieval, indexed content, recency | High | Medium |
| ChatGPT (browsing) | Domain authority, clear structure | Medium | Medium |
| Claude | Factual precision, clear structure | Low | Low |
| Gemini | Google index signals, E-E-A-T | Medium | High |
| Grok | X/Twitter presence, recency | High | Low |

Step 7: Monitor AI crawler activity on your site

This one gets overlooked. AI platforms don't rely on training data alone -- their crawlers actively visit the web. ChatGPT's crawler (GPTBot), Perplexity's crawler (PerplexityBot), and Claude's crawler (ClaudeBot) all visit websites to retrieve fresh content.
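
A quick first check is whether your robots.txt turns any of these crawlers away. The sketch below uses Python's standard `urllib.robotparser` and the three crawler names mentioned above. The function name is illustrative, and the check assumes each crawler matches robots.txt rules by the name shown here.

```python
from urllib.robotparser import RobotFileParser

# Crawler names as mentioned in this guide.
AI_CRAWLERS = ("GPTBot", "PerplexityBot", "ClaudeBot")


def blocked_crawlers(robots_txt, page_url, crawlers=AI_CRAWLERS):
    """Return the crawlers that robots.txt disallows from fetching page_url."""
    parser = RobotFileParser()
    # parse() takes the file content as a list of lines.
    parser.parse(robots_txt.splitlines())
    return [name for name in crawlers if not parser.can_fetch(name, page_url)]
```

Pass in the text of your robots.txt and the URL of a page you want cited. An empty list means none of the three is blocked for that page.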

If these crawlers are hitting your site and encountering errors (404s, blocked pages, slow load times), they may not be indexing your content properly. You'd never know unless you're looking at your server logs.
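
If you have raw access logs, a short script can surface those errors. This sketch assumes the common "combined" log format used by Apache and Nginx and matches crawlers by looking for their names in the user-agent string. The function name and regular expression are illustrative, so adjust the pattern if your logs are laid out differently.

```python
import re
from collections import Counter

# Crawler names as mentioned in this guide.
AI_CRAWLERS = ("GPTBot", "PerplexityBot", "ClaudeBot")

# Matches: "METHOD /path PROTOCOL" status bytes "referrer" "user-agent"
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def crawler_report(log_lines):
    """Count AI crawler hits, and errors (status >= 400) per crawler and path."""
    hits = Counter()
    errors = Counter()
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue  # line is not in the expected format
        agent = match["agent"].lower()
        crawler = next((n for n in AI_CRAWLERS if n.lower() in agent), None)
        if crawler is None:
            continue  # not one of the AI crawlers we track
        hits[crawler] += 1
        status = int(match["status"])
        if status >= 400:
            errors[(crawler, match["path"], status)] += 1
    return hits, errors
```

`hits` shows how often each crawler visited, and `errors` points at the specific pages returning 404s or server errors to them. It won't catch slow load times, which need response-time data your log format may not include.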

Tools like DarkVisitors let you see which AI crawlers are visiting your site and what they're accessing.

Promptwatch goes further with dedicated AI Crawler Logs -- real-time visibility into which AI crawlers are hitting which pages, how often they return, and what errors they encounter. This is the kind of data that tells you whether your content is actually being discovered, not just whether it's been published.

Step 8: Track your results and close the loop

Publishing content and hoping for the best isn't a strategy. You need to know whether your AI visibility is improving, which pages are driving citations, and whether those citations are translating into actual traffic.

Standard analytics tools don't show you most of this. Google Analytics may record a referral when someone clicks through from an AI platform, but it can't tell you which prompt surfaced your article or how often you're cited without a click. You need dedicated tracking.

What to measure:

- AI visibility score (how often your brand appears in AI responses for target prompts)

- Citation rate (what percentage of AI responses for your target prompts include a link to your content)

- Share of voice vs competitors (are you gaining or losing ground?)

- AI-referred traffic (actual sessions coming from AI platforms)

- Page-level citations (which specific pages are being cited, and by which models)
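
Two of these metrics, citation rate and share of voice, are simple ratios once you have a log of AI responses. Here's a minimal sketch, assuming each response is stored as a dictionary with the brands it mentioned and the URLs it cited. That record shape and the function names are assumptions, and tools may define these metrics differently.

```python
from urllib.parse import urlparse


def cites_domain(url, domain):
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return host == domain or host.endswith("." + domain)


def citation_rate(responses, our_domain):
    """Fraction of responses that cite at least one URL on our domain."""
    if not responses:
        return 0.0
    cited = sum(
        1 for r in responses
        if any(cites_domain(url, our_domain) for url in r["citations"])
    )
    return cited / len(responses)


def share_of_voice(responses, brands):
    """Each brand's share of all brand mentions across the responses."""
    counts = {brand: 0 for brand in brands}
    for r in responses:
        for brand in brands:
            if brand in r["brands"]:
                counts[brand] += 1
    total = sum(counts.values())
    return {b: (c / total if total else 0.0) for b, c in counts.items()}
```

Run both over the same set of target prompts each week or month. The trend matters more than any single number.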

Several tools handle different parts of this; the summary table at the end of this guide lists them by what they're best for.

For the full picture -- visibility tracking, traffic attribution, and content optimization in one place -- Promptwatch connects all of these dots, including GSC integration and server log analysis to tie AI citations to actual revenue.

Putting it all together: the optimization loop

The mistake most marketers make is treating AI visibility as a one-time project. It's not. It's an ongoing loop:

- Audit your current visibility across AI platforms

- Identify content gaps (prompts where competitors appear but you don't)

- Create content that directly addresses those gaps, structured for AI citation

- Build third-party presence in the sources AI models trust

- Monitor crawler activity to ensure your content is being discovered

- Track visibility scores and traffic attribution

- Repeat -- find the next set of gaps, create the next batch of content

The brands winning in AI search right now aren't doing anything exotic. They're doing the fundamentals well and consistently: clear content, direct answers, topical depth, and a presence across the web that AI models can find and trust.

The window to get ahead of competitors who haven't started yet is narrowing. But it's still open.

Tools to help you get started

Here's a summary of the tools mentioned in this guide, organized by what they're best for:

| Tool | Best for | Pricing |
|---|---|---|
| Promptwatch | Full-loop: visibility tracking, gap analysis, content generation, traffic attribution | From $99/mo |
| Profound | AI visibility monitoring and reporting | Custom |
| Otterly.AI | Affordable entry-level AI monitoring | Freemium |
| Peec AI | Multi-language AI visibility tracking | From $49/mo |
| Ahrefs Brand Radar | Brand monitoring across AI search results | Included with Ahrefs |
| Surfer SEO | On-page content optimization for AI and search | From $89/mo |
| Clearscope | Semantic content optimization | From $170/mo |
| DarkVisitors | AI crawler monitoring and bot management | Free tier available |
| Topical Map AI | Topic cluster planning | From $29/mo |

The most important thing isn't which tool you pick -- it's that you start measuring. You can't optimize what you can't see, and right now, most brands are flying blind on AI visibility.