Key takeaways

- Traditional Google SEO isn't dead, but it's no longer enough on its own. Google still handles ~13.7 billion searches per day, while ChatGPT processes around 1 billion — and that gap is closing.

- AI search engines (ChatGPT, Perplexity, Gemini, Claude) work differently from Google. They synthesize answers rather than return links, so visibility depends on whether AI models understand, trust, and cite your content.

- A modern AI SEO strategy runs in a loop: find the prompts where competitors get cited but you don't, create content that fills those gaps, then track whether AI models start citing you.

- Entity association and topical authority matter more than ever. AI models build mental maps of who is an expert on what — you need to be on that map.

- Measuring success now includes AI citation counts, brand mentions in LLM responses, and AI-driven traffic — not just Google rankings.

Why 2026 is different from every other "SEO is changing" year

Every year someone declares SEO is dead. They're usually wrong. But 2026 is genuinely different, and the reason is structural rather than algorithmic.

For the last two decades, SEO meant one thing: rank higher in Google's blue-link results. The mechanics shifted — from keyword stuffing to PageRank to E-E-A-T — but the underlying system stayed the same. Users type a query, Google returns a list, users click.

That system still exists. But now there's a second, parallel system running alongside it. When someone asks ChatGPT "what's the best project management tool for remote teams?" or asks Perplexity "which CRM should I use for a 10-person sales team?", they're not getting a list of links to evaluate. They're getting a synthesized answer. And your brand either appears in that answer or it doesn't.

The Clearscope 2026 SEO report put it well: "SEO isn't disappearing. It's diverging." One world still revolves around traditional rankings. The other is emerging inside AI-driven answers.

The brands that figure out how to win in both worlds simultaneously will have a significant advantage. Here's how to build that strategy from scratch.

Step 1: Understand the two-layer search environment

Before you write a single piece of content, you need to understand what you're actually optimizing for.

Layer 1: Traditional Google search

Google still dominates by volume. Ranking in the top three results for high-intent keywords still drives real revenue. Technical SEO, backlinks, on-page optimization, Core Web Vitals — none of this is obsolete. If you're ignoring traditional SEO to chase AI visibility, you're making a mistake.

Layer 2: AI-generated answers

This is where the new opportunity lives. AI models like ChatGPT, Perplexity, Claude, and Gemini generate answers by synthesizing content from sources they've crawled, indexed, and deemed credible. When they cite a source, that brand gets visibility — and increasingly, clicks.

The key difference: AI models don't rank pages by keyword match. They build associations. They "know" that Backlinko is an authority on link building, that HubSpot knows CRM, that NerdWallet knows personal finance. If your brand isn't associated with your topic in the model's training data and live browsing, you won't appear in answers — regardless of how well your page is optimized for Google.

This is why Brian Dean's approach at Backlinko and Exploding Topics worked: he focused on entity association first, then content, then traditional SEO. The result was citations in AI answers for high-intent searches while still generating leads from Google.

Step 2: Build your entity foundation

"Entity SEO" sounds abstract but the concept is simple. AI models and Google's Knowledge Graph both build a mental model of who you are, what you're an expert on, and how you relate to other entities in your space. Your job is to make that model as clear and comprehensive as possible.

Define your core entity

What does your brand stand for? Pick one or two specific areas of expertise, not ten. AI models reward depth over breadth when it comes to association. If you're a project management tool, you want AI to associate you with "project management for remote teams" or "agile project tracking" — not just "productivity software."

Build an entity hub

An entity hub is a central page (or set of pages) that clearly establishes your expertise on a topic. Think of it as your "home base" for a subject. It should:

- Comprehensively cover the topic from multiple angles

- Link out to supporting content that goes deeper on subtopics

- Include structured data (schema markup) that helps both Google and AI crawlers understand what the page is about

- Earn backlinks from authoritative sources in your space

Seed your entity across the web

AI models don't just crawl your website. They synthesize information from Reddit threads, YouTube videos, Wikipedia, industry publications, and third-party reviews. If your brand is only mentioned on your own site, you're invisible to the model's broader understanding.

Practical seeding tactics:

- Get mentioned in relevant Reddit discussions (authentically — not spam)

- Publish on third-party platforms like Medium, LinkedIn, or industry publications

- Earn coverage in niche publications that AI models treat as authoritative

- Create YouTube content that answers the questions your customers ask

Step 3: Map the prompts your customers are actually using

Traditional keyword research asks "what do people type into Google?" AI SEO asks a different question: "what do people ask AI models, and who gets cited in the answer?"

These are related but not identical. Someone searching Google might type "best CRM software." The same person asking ChatGPT might say "I run a 15-person B2B sales team, we're currently using spreadsheets, what CRM would you recommend and why?" The AI's answer to that second question will cite specific brands — and those brands got there by having content that directly addresses that kind of detailed, conversational query.

How to find the right prompts

Start with your customer's actual questions. Talk to your sales team. Look at support tickets. Check what people ask in relevant subreddits. These natural-language questions are closer to AI prompts than traditional keywords.

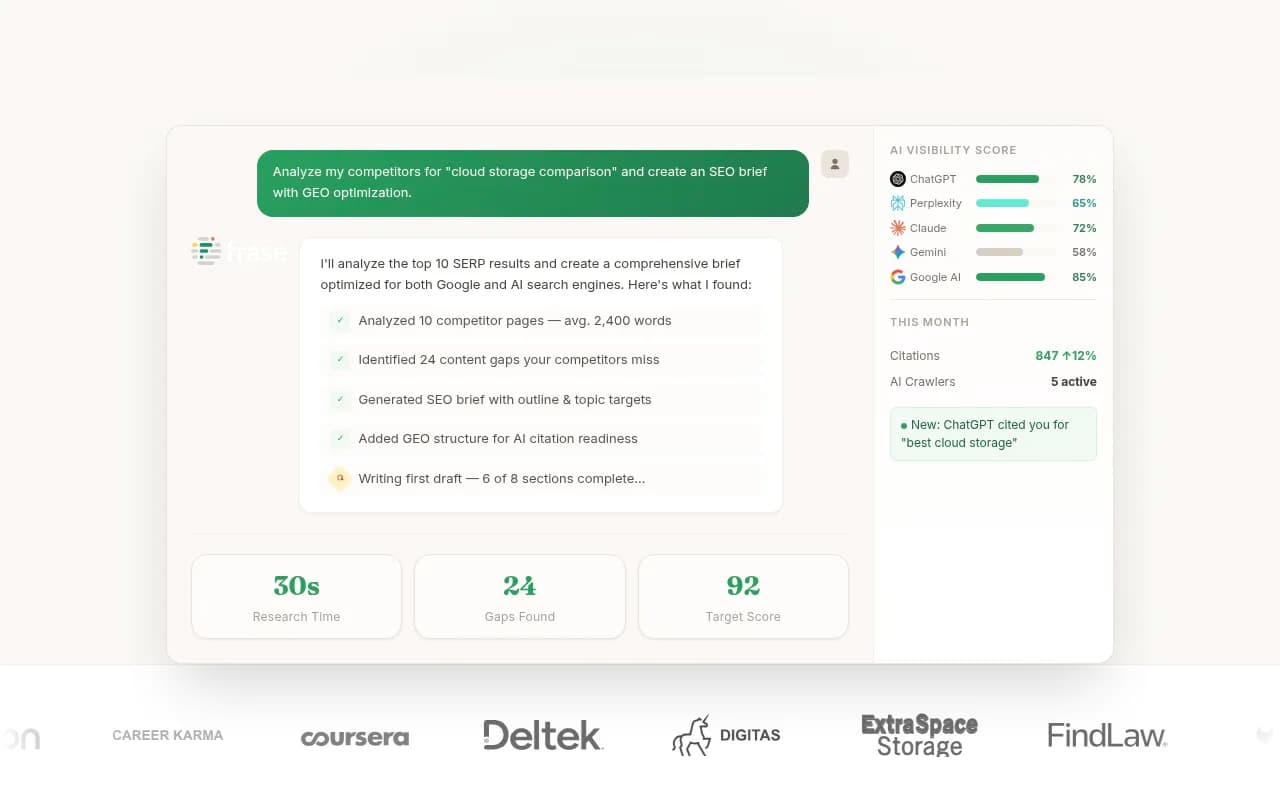

Then look at where your competitors are getting cited. Tools like Promptwatch have an Answer Gap Analysis feature that shows you exactly which prompts competitors appear in but you don't — giving you a concrete list of content to create.
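If you want to prototype the gap-finding idea before buying a tool, it comes down to a set comparison. Here's a minimal sketch, assuming you've already recorded which brands each AI answer mentions. The brand names, prompts, and the `find_answer_gaps` helper are all hypothetical, and this isn't how any particular tool does it.

```python
def find_answer_gaps(citations, own_brand):
    """Return prompts where competitors are cited but own_brand is not.

    `citations` maps each prompt to the set of brands named in the AI answer.
    """
    gaps = {}
    for prompt, brands in citations.items():
        # A gap needs at least one cited brand, none of which is ours.
        if brands and own_brand not in brands:
            gaps[prompt] = sorted(brands)
    return gaps


# Hypothetical observations, e.g. recorded by hand from real AI answers.
observed = {
    "best CRM for a 15-person B2B sales team": {"CompetitorA", "CompetitorB"},
    "CRM to replace spreadsheets": {"ExampleCRM", "CompetitorA"},
    "how to migrate contacts into a CRM": set(),
}

gaps = find_answer_gaps(observed, own_brand="ExampleCRM")
for prompt, brands in gaps.items():
    print(f"{prompt!r}: cited instead of you -> {', '.join(brands)}")
```

Each prompt in `gaps` is a candidate content brief. Prompts where nobody is cited are left out here, though you may want to treat those as open opportunities too.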

For traditional keyword research feeding into this process, tools like Semrush and Ahrefs remain useful for understanding search volume and intent.

Step 4: Create content engineered for AI citation

This is where most guides get vague. "Create high-quality content" is not a strategy. Here's what actually works.

Write for conversational queries, not just keywords

AI models respond to natural language questions. Your content should directly answer those questions — in the first paragraph, not buried after 500 words of preamble. If someone asks "what's the difference between agile and waterfall project management?", your page should answer that question clearly and immediately.

Use the "answer, then depth" structure

Lead with a direct, quotable answer. Then go deeper. AI models often pull the clearest, most direct answer they can find. If your page buries the answer in qualifications and caveats, a competitor's cleaner answer will get cited instead.

Cover topics with genuine depth

Surface-level content doesn't get cited. AI models favor sources that demonstrate real expertise — content that covers edge cases, includes specific examples, addresses common misconceptions, and goes beyond what a generic overview would say.

Include structured data

FAQ schema, HowTo schema, and Article schema all help AI crawlers understand your content structure. This isn't a magic bullet, but it removes friction for AI models trying to parse your pages.
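As a rough illustration, FAQ markup is just a JSON-LD object embedded in the page. The sketch below builds a schema.org `FAQPage` object from question and answer pairs. The helper names and the sample question are made up for this example, and you should validate the output with a structured data testing tool before shipping it.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Serialize to a JSON-LD <script> tag, escaping '<' so text can't close the tag."""
    payload = json.dumps(data, indent=2).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


faq = faq_jsonld([
    (
        "What's the difference between agile and waterfall project management?",
        "Agile delivers work in short iterations; waterfall completes each phase in sequence.",
    ),
])
tag = as_script_tag(faq)
print(tag)
```

The generated tag goes in the page's HTML, and the questions in the markup should match what's actually visible on the page.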

Step 5: Fix your technical foundation for AI crawlers

Traditional technical SEO (site speed, crawlability, mobile optimization) still matters. But AI search adds new technical considerations most teams haven't addressed yet.

Make sure AI crawlers can access your content

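AI companies crawl the web using their own bots. OpenAI uses GPTBot (alongside OAI-SearchBot and ChatGPT-User for search and user-initiated fetches), Anthropic uses ClaudeBot, and Perplexity uses PerplexityBot. If your robots.txt blocks these crawlers, your content is effectively invisible to those models.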

Check your robots.txt file right now. Many sites accidentally block AI crawlers because they copied a generic robots.txt template that predates AI search.
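A quick way to run that check is Python's built-in `urllib.robotparser`. The sketch below tests a robots.txt against the three bot names mentioned above. The sample rules and URL are invented for the example. In practice you'd paste in your own file, and you may want to extend the bot list.

```python
from urllib.robotparser import RobotFileParser

AI_BOTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def blocked_ai_bots(robots_txt, url, bots=AI_BOTS):
    """Return the bots from `bots` that robots_txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in bots if not parser.can_fetch(bot, url)]


# Invented example: GPTBot is blocked site-wide, everyone else only from /admin/.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

print(blocked_ai_bots(SAMPLE_ROBOTS, "https://example.com/blog/post"))
# ['GPTBot']
```

An empty list means none of the listed bots are blocked for that URL. It's worth testing a few representative URLs, since rules are often path-specific.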

DarkVisitors is useful here — it tracks which AI agents and bots are visiting your site and helps you manage access rules.

Monitor how AI crawlers interact with your site

Beyond just allowing access, you want to know which pages AI crawlers are actually reading, how often they return, and whether they're hitting errors. This is a newer capability that most SEO tools don't offer yet.
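If you have raw access logs, you can get a first approximation yourself. The sketch below assumes the common "combined" log format used by default in Apache and Nginx, and matches bots by user-agent substring. The sample log lines are fabricated, and user agents can be spoofed, so treat the counts as indicative rather than exact.

```python
import re
from collections import Counter

# Matches the request, status, and user-agent fields of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)
AI_BOTS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def summarize_ai_crawls(lines):
    """Count AI-bot hits and error responses per (bot, path)."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        agent = match["agent"].lower()
        bot = next((b for b in AI_BOTS if b.lower() in agent), None)
        if bot is None:
            continue  # regular visitor or some other bot
        hits[(bot, match["path"])] += 1
        if int(match["status"]) >= 400:
            errors[(bot, match["path"])] += 1
    return hits, errors


# Fabricated sample lines for illustration.
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '203.0.113.5 - - [10/Jan/2026:10:00:05 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '198.51.100.7 - - [10/Jan/2026:10:01:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]

hits, errors = summarize_ai_crawls(sample_log)
print(hits.most_common())
print(errors.most_common())
```

Pages that show up in `errors` are the ones to fix first, since a crawler that keeps hitting a 404 isn't reading anything you can be cited for.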

For traditional crawling and technical audits, Screaming Frog remains the standard. For AI-specific crawler monitoring, Promptwatch's crawler logs feature shows real-time data on which AI bots are hitting your pages and what they're finding.

Ensure your content loads cleanly

AI crawlers don't execute JavaScript the same way browsers do. If your content is rendered client-side via JavaScript, some AI crawlers may not see it at all. Audit your key pages to confirm the content is in the HTML source, not just rendered in the browser.
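One way to audit this without a browser is to look at the raw HTML and ignore anything inside `<script>` or `<style>` tags. The sketch below does that with the standard library's `html.parser`. The two sample pages are invented, and in a real audit the HTML would come from a plain HTTP fetch of your page (no JavaScript execution).

```python
from html.parser import HTMLParser


class StaticText(HTMLParser):
    """Collect text that exists in the HTML source, skipping script and style bodies."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def phrase_in_static_html(html, phrase):
    """True if phrase appears in the page text without running any JavaScript."""
    parser = StaticText()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.chunks).split())
    return phrase.lower() in text.lower()


# Invented examples: the same sentence, server-rendered vs. injected by a script.
server_rendered = "<html><body><h1>Agile vs waterfall</h1><p>Agile delivers work in short iterations.</p></body></html>"
client_rendered = '<html><body><div id="app"></div><script>render("Agile delivers work in short iterations.")</script></body></html>'

print(phrase_in_static_html(server_rendered, "short iterations"))  # True
print(phrase_in_static_html(client_rendered, "short iterations"))  # False
```

Pick one sentence from each key page that you'd want an AI model to quote, and check whether it survives this test.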

Step 6: Distribute across the full search ecosystem

Eric Siu's framing of "search everywhere optimization" is right. Your customers aren't just on Google. They're on YouTube, Reddit, LinkedIn, and increasingly asking AI models directly. A strategy that only targets one channel is fragile.

YouTube

YouTube is often described as the second-largest search engine, and AI models frequently cite YouTube content in their answers. Creating video content on your core topics builds both direct YouTube visibility and AI citation potential.

Reddit

Reddit has become a significant source for AI model training data and live retrieval. Perplexity in particular frequently cites Reddit threads. Being present in relevant subreddit discussions (genuinely, not spammily) builds the kind of third-party mentions that influence AI responses.

LinkedIn and industry publications

For B2B brands, LinkedIn articles and posts from credible individuals at your company contribute to entity association. When multiple sources across the web associate your brand with a topic, AI models pick up on that pattern.

Step 7: Track the right metrics

This is where most teams fall down. They optimize for AI visibility but keep measuring success with 2020 metrics. Here's what to track in 2026.

Traditional metrics (still relevant)

- Organic traffic from Google

- Keyword rankings for target terms

- Backlink profile growth

AI visibility metrics (new and essential)

- Brand mention rate in AI responses: how often does your brand appear when someone asks AI about your category?

- Citation share: of all the sources AI models cite for your target prompts, what percentage are yours?

- AI-driven traffic: actual clicks and sessions coming from AI platforms (measurable via UTM parameters, server logs, or a Google Search Console integration)

- Prompt coverage: how many of your target prompts do you appear in, across which AI models?
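To make these definitions concrete, here's one way the first, second, and fourth metrics could be computed from a log of AI responses. This is a sketch under assumptions: the `Observation` record, the brand names, and the domains are all hypothetical, and commercial tools may define these metrics differently.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """One AI response to one prompt (hypothetical record shape)."""
    prompt: str
    model: str
    brands_mentioned: set
    cited_domains: list


def brand_mention_rate(observations, brand):
    """Share of responses that mention the brand at all."""
    if not observations:
        return 0.0
    return sum(brand in o.brands_mentioned for o in observations) / len(observations)


def citation_share(observations, domain):
    """Share of all cited sources that point at the given domain."""
    cited = [d for o in observations for d in o.cited_domains]
    return cited.count(domain) / len(cited) if cited else 0.0


def prompt_coverage(observations, brand):
    """Share of distinct prompts where the brand appears in at least one model."""
    prompts = {o.prompt for o in observations}
    covered = {o.prompt for o in observations if brand in o.brands_mentioned}
    return len(covered) / len(prompts) if prompts else 0.0


# Made-up sample: two prompts, each checked in two models.
sample = [
    Observation("best CRM for small teams", "chatgpt", {"ExampleCo", "CompetitorA"}, ["example.com", "competitor-a.example"]),
    Observation("best CRM for small teams", "perplexity", {"CompetitorA"}, ["competitor-a.example"]),
    Observation("CRM to replace spreadsheets", "chatgpt", {"CompetitorA"}, ["competitor-a.example", "reddit.com"]),
    Observation("CRM to replace spreadsheets", "perplexity", {"CompetitorA"}, ["competitor-a.example"]),
]

print(brand_mention_rate(sample, "ExampleCo"))  # 0.25
print(prompt_coverage(sample, "ExampleCo"))     # 0.5
print(round(citation_share(sample, "example.com"), 3))
```

Tracking these numbers per model as well as in aggregate tends to be more useful, since a brand can be well covered in one AI platform and absent in another.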

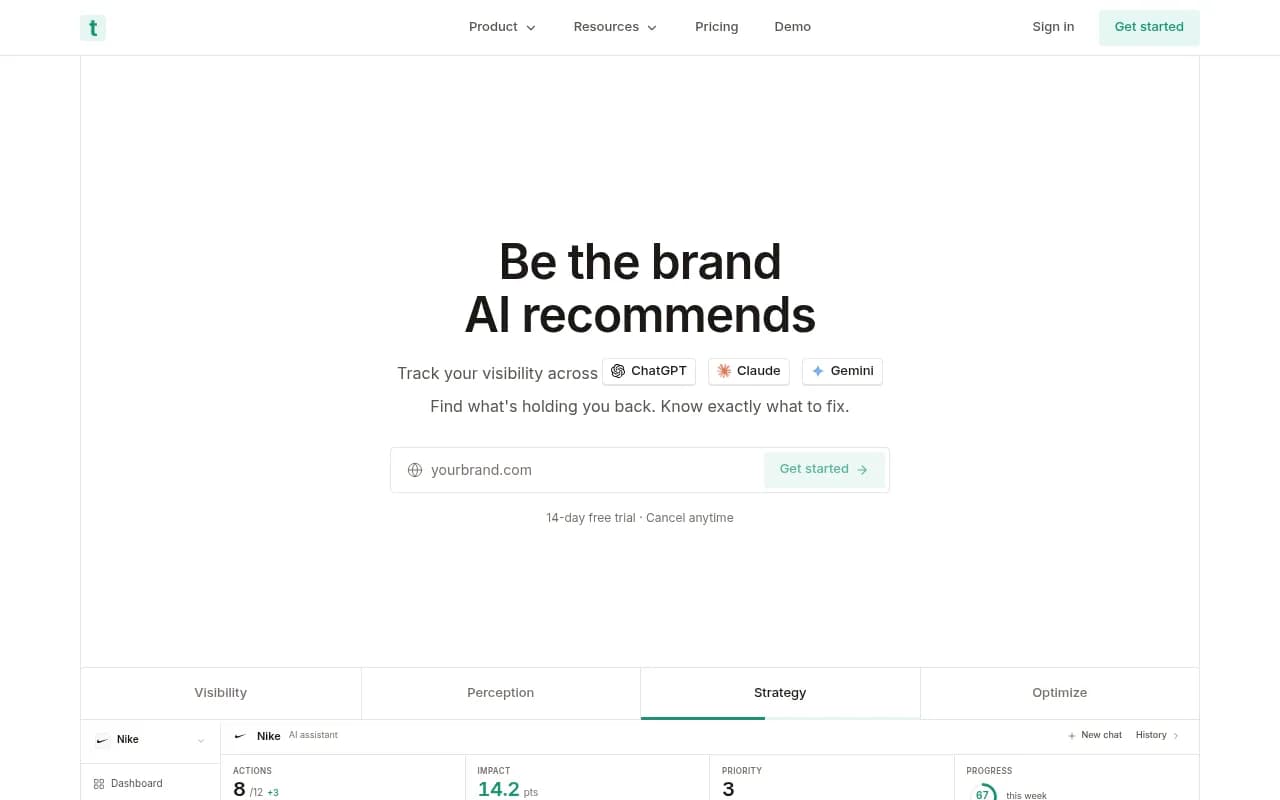

Tools for tracking AI visibility

There are now quite a few platforms built specifically for this. Here's a comparison of the main options:

| Tool | AI models tracked | Content generation | Crawler logs | Best for |
|---|---|---|---|---|
| Promptwatch | 10+ (ChatGPT, Claude, Perplexity, Gemini, Grok, etc.) | Yes (built-in AI writer) | Yes | Full-cycle optimization |
| Semrush One | ChatGPT, Perplexity, Gemini | No | No | Teams already on Semrush |
| Ahrefs Brand Radar | ChatGPT, Perplexity, Gemini | No | No | Traditional SEO teams |
| Profound | ChatGPT, Perplexity, Gemini, Claude | No | No | Enterprise monitoring |
| Otterly.AI | ChatGPT, Perplexity, Gemini | No | No | Budget monitoring |
| AthenaHQ | 8+ AI engines | No | No | Monitoring-focused teams |

The core difference between these tools: most of them show you data. Promptwatch is built around acting on it — the Answer Gap Analysis tells you which prompts to target, the built-in writing agent creates content designed to get cited, and the tracking closes the loop by showing whether it worked.

Step 8: Close the loop with attribution

Visibility without revenue impact is just vanity. The final step is connecting your AI search presence to actual business outcomes.

Track AI-referred traffic

Set up UTM parameters for any links you control that appear in AI contexts. Monitor referral traffic from domains like perplexity.ai, chatgpt.com, and claude.ai in Google Analytics or your analytics platform of choice.
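If your analytics platform doesn't segment AI referrals out of the box, the classification logic is simple enough to sketch. The example below labels a session by its referrer host, falling back to a `utm_source` parameter on the landing URL. The domain list comes from the paragraph above. The `utm_source` fallback assumes the AI platform tags its outbound links with its own domain, which you should verify against your own traffic.

```python
from urllib.parse import parse_qs, urlparse

# Referrer domains named above, mapped to a display label.
AI_SOURCES = {
    "perplexity.ai": "Perplexity",
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
}


def classify_ai_source(referrer, landing_url=""):
    """Return the AI platform a session came from, or None if it isn't one."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, label in AI_SOURCES.items():
        # Match the domain itself and its subdomains (e.g. www.perplexity.ai).
        if host == domain or host.endswith("." + domain):
            return label
    # Fallback: some sessions arrive without a referrer but with a tagged URL.
    query = parse_qs(urlparse(landing_url).query)
    utm_source = query.get("utm_source", [""])[0].lower()
    return AI_SOURCES.get(utm_source)


print(classify_ai_source("https://www.perplexity.ai/search?q=crm"))
print(classify_ai_source("", "https://example.com/pricing?utm_source=chatgpt.com"))
print(classify_ai_source("https://www.google.com/"))
```

The same function can run over exported analytics rows or server logs, so AI-referred sessions get a consistent label wherever you analyze them.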

Use server log analysis

Server logs capture every request to your site, including from AI crawlers and AI-referred users. This is often more reliable than JavaScript-based analytics, which never fire for crawlers that don't execute JavaScript and can be blocked in users' browsers.

Connect to revenue

The ultimate question is: does appearing in AI answers drive leads, signups, or purchases? Set up conversion tracking that captures the full journey from AI referral to conversion. This is harder than traditional attribution but increasingly necessary as AI traffic grows.
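At its simplest, that comes down to comparing conversion rates by traffic source. Here's a minimal sketch, assuming you can export sessions as (source, converted) pairs. The session data below is invented purely to show the calculation.

```python
from collections import defaultdict


def conversion_rate_by_source(sessions):
    """Return {source: conversions / sessions} from (source, converted) pairs."""
    totals = defaultdict(int)
    conversions = defaultdict(int)
    for source, converted in sessions:
        totals[source] += 1
        conversions[source] += 1 if converted else 0
    return {source: conversions[source] / totals[source] for source in totals}


# Invented sessions for illustration only.
sessions = [
    ("ChatGPT", True),
    ("ChatGPT", False),
    ("Google", False),
    ("Google", False),
    ("Google", True),
    ("Google", False),
]

rates = conversion_rate_by_source(sessions)
print(rates)  # {'ChatGPT': 0.5, 'Google': 0.25}
```

With real data, check the session counts behind each rate before drawing conclusions, because AI-referred traffic is often still a small sample.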

Putting it all together: the action loop

The most useful mental model for AI SEO in 2026 is a loop, not a checklist:

- Find the gaps: which prompts are your competitors appearing in that you're not?

- Create content: build pages that directly answer those prompts with genuine depth

- Track results: monitor whether AI models start citing your new content

- Repeat: use the new data to find the next set of gaps

This loop is what separates brands that grow their AI visibility systematically from those that publish content and hope for the best.

The tools, tactics, and platforms will keep evolving. But the underlying logic — understand how AI models discover and cite content, create content that earns citation, measure the result — will stay relevant regardless of which AI model is dominant in 2027 or 2028.

Start with your entity foundation. Map the prompts that matter to your business. Create content that answers them better than anyone else. And track what's actually working.

That's the playbook.