Key takeaways

- Claude prioritizes verifiable, structured, fact-dense content over conversational or promotional writing -- this is fundamentally different from how ChatGPT selects citations.

- Enterprise B2B buyers increasingly use Claude to analyze uploaded documents (RFPs, whitepapers, technical specs), so your PDFs and documentation matter as much as your web pages.

- Claude's Constitutional AI training makes it especially skeptical of vague claims -- every assertion needs a source or a specific number behind it.

- Getting cited in Claude requires a different content strategy than ranking on Google: one analysis of 129,000 domains found that 73% of brands that dominate traditional search never appear in ChatGPT citations.

- Tracking your Claude visibility requires dedicated tools -- traditional rank trackers won't show you whether you're being cited in AI responses.

There's a scenario playing out in boardrooms right now that most marketing teams haven't caught up to yet. A procurement manager opens Claude, uploads three vendor whitepapers, and asks which solution meets their compliance requirements. Claude synthesizes everything and names two competitors with specific feature citations. Your company doesn't appear -- not because your product is worse, but because your documentation wasn't written in a way Claude could confidently cite.

This is the Claude visibility gap. And it's different from the visibility gaps you face on ChatGPT or Perplexity, because Claude operates on different principles.

Research analyzing 129,000 domains found that 73% of brands never appear in ChatGPT citations even when they dominate traditional search. The numbers for Claude are similar. But the fix isn't the same. Claude has its own citation logic, its own content preferences, and its own relationship with trust and verifiability. Understanding those differences is the whole game.

How Claude decides what to cite

Claude is trained using Anthropic's Constitutional AI approach. Without getting too deep into the technical weeds, this means Claude is trained to prioritize responses that are helpful, honest, and harmless -- and that training directly shapes what it cites.

In practice, this means Claude is unusually skeptical of vague or promotional language. Where ChatGPT might cite a well-trafficked blog post, Claude gravitates toward content that reads like it was written by someone who actually knows what they're talking about: specific numbers, clear definitions, logical structure, and verifiable claims.

Claude also has a large context window -- current Claude Sonnet models support 200,000 tokens (roughly 150,000 words, or about 500 pages of text), and some models and plans offer larger windows of up to 1 million tokens. This is why it's become a go-to tool for complex document analysis. When a buyer uploads five vendor documents and asks Claude to compare them, Claude isn't doing a web search. It's reading everything in the context window and synthesizing it. Your web content isn't even in the room.

This has a direct implication: your PDFs, whitepapers, case studies, and technical documentation are first-class citizens for Claude optimization, not afterthoughts.
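To put the context-window figures in concrete terms, here is a back-of-envelope check of whether a buyer's document set fits in one conversation. It is a sketch that assumes roughly 4 characters per token, a common rule of thumb for English text rather than Claude's actual tokenizer, plus a hypothetical 8,000-token reserve for the question and the answer; the document names and sizes are made up.

```python
# Rough check: does a set of uploaded documents fit in one context window?
# ASSUMPTION: ~4 characters per token is a rule of thumb for English prose,
# not Claude's actual tokenizer; real counts will differ.
CHARS_PER_TOKEN = 4
CONTEXT_WINDOW = 200_000  # tokens, the Sonnet figure cited in the text


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(docs: dict[str, str], window: int = CONTEXT_WINDOW,
                    reserve: int = 8_000) -> tuple[bool, int]:
    """Return (fits, estimated_total); `reserve` leaves room for prompt and reply."""
    total = sum(estimate_tokens(body) for body in docs.values())
    return total + reserve <= window, total


if __name__ == "__main__":
    # Hypothetical extracted document text, padded to typical whitepaper lengths.
    docs = {
        "vendor_a_whitepaper.pdf": "x" * 120_000,
        "vendor_b_security.pdf": "x" * 200_000,
        "your_compliance_overview.pdf": "x" * 80_000,
    }
    fits, total = fits_in_context(docs)
    print(f"~{total:,} tokens; fits: {fits}")  # ~100,000 tokens; fits: True
```

For exact numbers, count tokens with the provider's own tooling (Anthropic's API exposes a token-counting endpoint); the heuristic here is only meant for planning.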

Claude vs. other AI platforms: citation behavior compared

| Platform | Citations per response | Content preference | Key differentiator |
|---|---|---|---|
| ChatGPT | 2-4 sources | Encyclopedic, Wikipedia-style | Cites Wikipedia for 47.9% of top sources |
| Perplexity | 6.61 sources | Fresh content, Reddit/YouTube | Articles under 30 days old get cited 3.2x more |
| Claude | 3-5 sources | Structured, verifiable, fact-dense | Favors technical docs and deep-tier assets |
| Google AI Overviews | 3-4 sources | Authoritative web pages | Heavily influenced by traditional SEO signals |

The table makes the point clearly: Claude is the outlier. It's not chasing recency like Perplexity, and it's not defaulting to encyclopedic sources like ChatGPT. It wants structured, verifiable content -- and that opens a real opportunity for brands willing to create it.

The content types Claude actually cites

Technical documentation and whitepapers

This is where Claude differs most sharply from other platforms. When enterprise buyers use Claude for vendor research, they're often uploading documents directly. Your security whitepaper, API documentation, compliance overview, or integration guide can be in the context window alongside your competitors' materials.

The implication: these documents need to be written for Claude, not just for humans. That means:

- Clear entity definitions at the start of each section (don't assume Claude knows your product's terminology)

- Specific, verifiable claims with numbers ("reduces deployment time by 40%" beats "significantly faster")

- Logical structure with explicit headings that match the questions buyers ask

- No marketing fluff -- every sentence should carry information

A document that says "our platform is the leading solution for enterprise security" is useless to Claude. A document that says "the platform achieved SOC 2 Type II certification in Q3 2024 and supports SSO via SAML 2.0 and OAuth 2.0" gives Claude something it can actually cite.
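If you want to run this test across a large documentation set, a crude first pass can be automated. The Python sketch below flags sentences that contain promotional wording or no number at all. The phrase list is hypothetical and the "contains a digit" rule is only a proxy for verifiability, so treat the output as a reading list for an editor, not a verdict.

```python
import re

# HYPOTHETICAL starter list of promotional words; extend it from your own copy.
VAGUE_PHRASES = [
    "leading", "best-in-class", "world-class", "cutting-edge",
    "significantly", "seamless", "robust", "innovative",
]


def flag_sentence(sentence: str) -> list[str]:
    """Return reasons a sentence may be hard for a model to cite."""
    reasons = []
    lowered = sentence.lower()
    hits = [p for p in VAGUE_PHRASES
            if re.search(rf"\b{re.escape(p)}\b", lowered)]
    if hits:
        reasons.append("promotional wording: " + ", ".join(hits))
    # Crude proxy for specificity: any digit (percentages, dates, versions).
    if not re.search(r"\d", sentence):
        reasons.append("no number, date, or version to verify")
    return reasons


def audit(text: str) -> dict[str, list[str]]:
    """Split text into sentences and keep only the ones that raise a flag."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    flagged = {}
    for sentence in sentences:
        reasons = flag_sentence(sentence)
        if reasons:
            flagged[sentence] = reasons
    return flagged


if __name__ == "__main__":
    sample = (
        "Our platform is the leading solution for enterprise security. "
        "The platform achieved SOC 2 Type II certification in Q3 2024 "
        "and supports SSO via SAML 2.0 and OAuth 2.0."
    )
    for sentence, reasons in audit(sample).items():
        print(sentence, "->", reasons)
```

Run on the two example sentences above, only the first is flagged, for both reasons.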

FAQ and Q&A content

Claude is frequently used for conversational queries -- people ask it full questions, not keywords. Content structured around specific questions performs well because it matches the query format directly.

The best approach is to write FAQ sections that mirror how your target audience actually phrases their questions. Not "What is [Product]?" but "How does [Product] handle multi-tenant data isolation?" or "What's the difference between [Product] and [Competitor] for HIPAA compliance?"

These aren't just good for Claude -- they're good for any AI platform. But Claude's Constitutional AI training makes it especially likely to cite content that directly and accurately answers a specific question.
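If these Q&A pairs also live on your website, one way to make them machine-readable is schema.org FAQPage markup. The sketch below builds that JSON-LD from question-and-answer pairs using only Python's standard library; ExampleProduct and the answer text are hypothetical placeholders. As with any schema markup, this reduces ambiguity rather than guaranteeing a citation.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


if __name__ == "__main__":
    # Hypothetical product name and answer; replace with your real FAQ content.
    print(faq_jsonld([
        ("How does ExampleProduct handle multi-tenant data isolation?",
         "Each tenant's data lives in a separate database schema, "
         "and every query is scoped by tenant ID."),
    ]))
```

The printed JSON would be embedded in the page inside a script tag of type application/ld+json.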

Case studies with specific outcomes

Generic case studies ("Company X improved their workflow with our platform") don't give Claude much to work with. Case studies that include specific metrics, named companies (where permitted), industry context, and before/after comparisons are far more citable.

Structure them like this:

- Company profile (industry, size, specific challenge)

- What they tried before and why it didn't work

- What they implemented and how

- Specific, measurable outcomes with timeframes

- Quotes from named individuals with titles

That last point matters. Claude's Constitutional AI training makes it favor content with attributable claims. A quote from "Jane Smith, VP of Operations at Acme Corp" is more citable than an anonymous testimonial.
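The outline above can double as a checklist. The sketch below models it as a Python dataclass with hypothetical field names and reports what a draft is missing: empty sections, outcomes with no number in them, and quotes without both a named speaker and a title. The digit check is a rough stand-in for "specific and measurable," and the draft values in the example are invented.

```python
from dataclasses import dataclass, field, fields


@dataclass
class CaseStudy:
    """Illustrative fields mirroring the case-study outline (names are hypothetical)."""
    industry: str = ""
    company_size: str = ""
    challenge: str = ""
    previous_approach: str = ""
    implementation: str = ""
    outcomes: list[str] = field(default_factory=list)
    # Each quote is (text, speaker name, speaker title).
    quotes: list[tuple[str, str, str]] = field(default_factory=list)


def missing_sections(cs: CaseStudy) -> list[str]:
    """List empty outline items, metric-free outcomes, and unattributed quotes."""
    problems = [f.name for f in fields(cs) if not getattr(cs, f.name)]
    for outcome in cs.outcomes:
        if not any(ch.isdigit() for ch in outcome):
            problems.append(f"no metric in outcome: {outcome!r}")
    for text, name, title in cs.quotes:
        if not (name and title):
            problems.append(f"quote lacks name or title: {text!r}")
    return problems


if __name__ == "__main__":
    draft = CaseStudy(
        industry="Healthcare",
        company_size="1,200 employees",
        challenge="Manual HIPAA audit preparation",
        previous_approach="Spreadsheets maintained by two analysts",
        implementation="Automated evidence collection rolled out over six weeks",
        outcomes=["Audit prep cut from 6 weeks to 10 days", "Happier team"],
        quotes=[("It changed how we work.", "Jane Smith", "")],
    )
    print(missing_sections(draft))
```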

On-page signals that influence Claude citations

Structured data and schema markup

Claude can parse structured data, and using schema markup helps it understand what your content is about. For most brands, the most relevant schema types are:

- Article or TechArticle for guides and documentation

- FAQPage for Q&A content

- Organization for your brand's core information

- HowTo for process-oriented content

- Product for product pages with specifications

This isn't magic -- schema markup won't guarantee citations. But it reduces ambiguity about what your content is and who created it, which matters for a model trained to prioritize verifiable information.
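As a sketch of what this markup can look like, the Python below emits JSON-LD for a TechArticle with an explicit author (Person) and publisher (Organization), which also supports the authorship and entity signals discussed next. The property names (headline, datePublished, author, jobTitle, publisher) are standard schema.org vocabulary; every value in the example, including Example Corp and the URLs, is a hypothetical placeholder.

```python
import json


def tech_article_jsonld(headline: str, date_published: str,
                        author: dict, publisher: dict) -> str:
    """Build schema.org TechArticle JSON-LD with explicit author and publisher entities."""
    data = {
        "@context": "https://schema.org",
        "@type": "TechArticle",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date, e.g. "2024-10-01"
        "author": {"@type": "Person", **author},
        "publisher": {"@type": "Organization", **publisher},
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # All values below are hypothetical placeholders.
    print(tech_article_jsonld(
        headline="Configuring SAML 2.0 single sign-on for ExampleProduct",
        date_published="2024-10-01",
        author={"name": "Jane Smith", "jobTitle": "Staff Security Engineer",
                "url": "https://www.example.com/authors/jane-smith"},
        publisher={"name": "Example Corp", "url": "https://www.example.com"},
    ))
```

Pointing the author url at a real bio page, and using the same Organization name on every page, keeps the entity signals consistent across the site.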

Clear authorship and entity signals

Claude pays attention to who wrote something. Content with clear author bylines, author bio pages with credentials, and consistent entity signals (your brand name, author names, and organization appearing consistently across your site) performs better than anonymous content.

This connects to a broader principle: Claude is trying to assess whether a source is trustworthy. Authorship signals are part of that assessment.

Heading structure and scannability

Claude parses document structure. Content with clear H2 and H3 headings, short paragraphs, and logical flow is easier for Claude to extract specific claims from. Long walls of text make it harder for Claude to identify the specific, citable assertion buried inside.

A practical rule: if you can't summarize each section in one sentence using the heading, the heading isn't specific enough.
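The rule is a judgment call, but generic headings are easy to catch mechanically. This sketch uses Python's built-in html.parser to pull h2 and h3 text from a page and flag headings that appear on a hypothetical list of generic labels or run shorter than three words. Both thresholds are assumptions to tune, and a heading that passes still needs the one-sentence test.

```python
from html.parser import HTMLParser

# HYPOTHETICAL list of headings that rarely say what a section claims.
GENERIC_HEADINGS = {"overview", "introduction", "features", "benefits",
                    "details", "more information", "faq"}


class HeadingCollector(HTMLParser):
    """Collect the text of every <h2> and <h3> element."""

    def __init__(self):
        super().__init__()
        self.headings: list[tuple[str, str]] = []
        self._open_tag = None  # "h2" or "h3" while inside a heading
        self._buf: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3"):
            self._open_tag, self._buf = tag, []

    def handle_data(self, data):
        if self._open_tag:
            self._buf.append(data)

    def handle_endtag(self, tag):
        if tag == self._open_tag:
            text = " ".join("".join(self._buf).split())  # collapse whitespace
            self.headings.append((tag, text))
            self._open_tag = None


def vague_headings(html: str, min_words: int = 3) -> list[str]:
    """Flag headings that are generic or too short to summarize a section."""
    parser = HeadingCollector()
    parser.feed(html)
    return [f"{tag}: {text}" for tag, text in parser.headings
            if text.lower() in GENERIC_HEADINGS or len(text.split()) < min_words]
```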

Off-site signals: where Claude looks beyond your website

Third-party mentions and citations

Claude's training data includes a wide range of web content, and third-party mentions of your brand contribute to how Claude perceives your authority. This means:

- Getting cited in industry publications and research reports

- Being mentioned in analyst and review-site coverage (Gartner, Forrester, G2 reports)

- Appearing in comparison articles and "best of" lists

- Having your research or data cited by other credible sources

The goal isn't just backlinks for Google -- it's building a web of mentions that Claude's training data can draw on to establish your brand as a credible source in your category.

Wikipedia and reference content

ChatGPT cites Wikipedia for nearly half its top sources. Claude is less extreme about this, but having a Wikipedia presence (or being mentioned in relevant Wikipedia articles) still contributes to how AI models perceive your brand's legitimacy. If your brand is significant enough to have a Wikipedia entry, make sure it's accurate and well-sourced.

Reddit and community discussions

Perplexity cites Reddit heavily (46.7% of citations in some analyses). Claude is less Reddit-dependent, but community discussions that mention your brand in a factual, helpful context still influence AI perception over time. Participating in relevant subreddits, answering questions on Stack Overflow, or contributing to industry forums builds the kind of authentic third-party signal that AI models value.

The enterprise B2B angle: optimizing for document-based queries

This deserves its own section because it's genuinely underappreciated. Research shows 66% of UK B2B decision-makers now use AI tools for vendor research. Claude is disproportionately popular for this use case because of its long context window and its reputation for careful, structured reasoning.

When a buyer uploads your documentation alongside competitors' materials, Claude is essentially running a real-time comparison. Here's what that means for your content strategy:

Make your differentiators explicit. Don't bury your key advantages in marketing language. State them clearly in your technical documentation: "Unlike [competitor category], [Product] does X because of Y architecture decision."

Address the questions buyers actually ask. Think about the RFP questions your sales team receives. Those are the questions buyers are asking Claude. Create documentation that answers them directly.

Use consistent terminology. If buyers refer to a feature as "role-based access control" and your documentation calls it "permission management," Claude may not connect them. Align your terminology with how your buyers describe their needs.
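A simple way to catch terminology drift is to keep a mapping from buyer vocabulary to your internal names and flag documents that use the internal term without the buyer's. The mapping below is hypothetical, and plain substring matching will miss plurals and other variants, so treat it as a starting point.

```python
# HYPOTHETICAL mapping from the term buyers use to the term your docs use.
# Keep entries lowercase; documents are lowercased before matching.
TERM_MAP = {
    "role-based access control": "permission management",
    "single sign-on": "unified login",
}


def terminology_gaps(doc: str, term_map: dict[str, str] = TERM_MAP) -> list[str]:
    """Flag internal terms that appear without the buyer-facing equivalent."""
    text = doc.lower()
    return [
        f'uses "{internal}" but never "{buyer}"'
        for buyer, internal in term_map.items()
        if internal in text and buyer not in text
    ]
```

A gap doesn't mean renaming the feature; mentioning both terms once ("permission management, our role-based access control system") is often enough to connect them.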

Include comparison-friendly content. Comparison tables, feature matrices, and specification sheets are exactly the kind of structured content Claude can extract and synthesize. A well-structured comparison of your product vs. the category average is highly citable.
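Feature matrices are also easy to generate from a single source of truth, which keeps them consistent across documents. This sketch renders a Markdown table from a dict; the product names and values are hypothetical, and missing entries are written out as "not documented" rather than left blank so that gaps stay visible.

```python
def feature_matrix(features: list[str], products: dict[str, dict[str, str]],
                   missing: str = "not documented") -> str:
    """Render a Markdown comparison table: one row per feature, one column per product."""
    names = list(products)
    lines = [
        "| Feature | " + " | ".join(names) + " |",
        "|---|" + "---|" * len(names),
    ]
    for feature in features:
        # Use an explicit placeholder so gaps are visible rather than silently blank.
        cells = [products[name].get(feature, missing) for name in names]
        lines.append(f"| {feature} | " + " | ".join(cells) + " |")
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    print(feature_matrix(
        ["SSO protocols", "SOC 2 Type II"],
        {
            "ExampleProduct": {"SSO protocols": "SAML 2.0, OAuth 2.0",
                               "SOC 2 Type II": "Yes (Q3 2024)"},
            "Category average": {"SSO protocols": "SAML 2.0"},
        },
    ))
```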

Tracking whether your strategy is working

Here's the uncomfortable truth: you can't tell if Claude is citing you by looking at your Google Analytics. AI citations often don't generate referral traffic in the traditional sense -- the user gets their answer in Claude and may never click through to your site.

This is why dedicated AI visibility tracking has become essential. You need tools that actually query Claude (and other AI platforms) with the prompts your buyers use, then check whether your brand appears in the response.

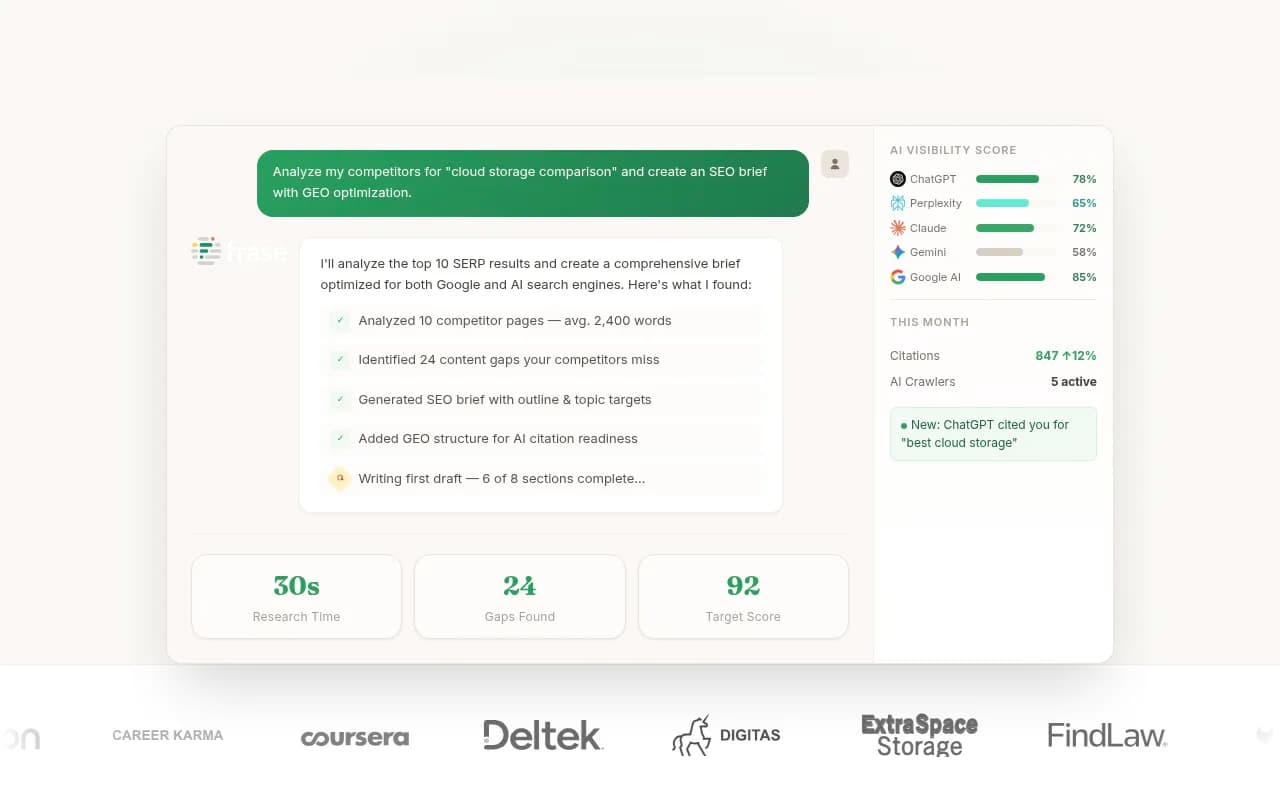

Promptwatch is built specifically for this -- it tracks your brand's visibility across Claude, ChatGPT, Perplexity, Gemini, and other AI platforms, and importantly, it goes beyond monitoring to help you identify which content gaps are causing you to miss citations and generate content to fill them.
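To see what such a check involves, or to run a small one yourself, the sketch below sends buyer prompts to Claude through the Anthropic Python SDK and records which brand names appear in each answer. The prompts, brand names, and model ID are placeholders. Two caveats: a plain Messages API call answers from the model's training data unless you enable a web search tool, so it approximates rather than reproduces what a buyer sees in the Claude apps; and answers vary between runs, so repeated sampling gives a steadier picture. Dedicated tools handle this at scale and across platforms.

```python
import re
from typing import Callable


def mentions(text: str, name: str) -> bool:
    """Case-insensitive whole-word match for a brand name."""
    return re.search(rf"\b{re.escape(name)}\b", text, re.IGNORECASE) is not None


def run_checks(prompts: list[str], brands: list[str],
               ask: Callable[[str], str]) -> dict[str, list[str]]:
    """Ask each prompt once and record which brands the answer names."""
    results = {}
    for prompt in prompts:
        answer = ask(prompt)
        results[prompt] = [b for b in brands if mentions(answer, b)]
    return results


def make_claude_ask(model: str) -> Callable[[str], str]:
    """Wrap the Anthropic Messages API as a prompt -> text function.

    Requires `pip install anthropic` and ANTHROPIC_API_KEY in the environment.
    """
    import anthropic  # imported lazily so the helpers above work without it

    client = anthropic.Anthropic()

    def ask(prompt: str) -> str:
        message = client.messages.create(
            model=model,
            max_tokens=1024,
            messages=[{"role": "user", "content": prompt}],
        )
        # Concatenate the text blocks of the reply.
        return "".join(b.text for b in message.content if b.type == "text")

    return ask


# Example (hypothetical prompt, brands, and model ID; use one your account can access):
# ask = make_claude_ask("claude-sonnet-4-20250514")
# print(run_checks(["Which vendors support SAML SSO for HIPAA workloads?"],
#                  ["ExampleCorp", "Acme"], ask))
```

Passing `ask` in as a function keeps the bookkeeping independent of the provider, so the same loop can be pointed at other platforms' APIs.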


The key metrics to track:

- Citation frequency: how often does your brand appear when Claude answers relevant prompts?

- Citation position: are you mentioned prominently or as a footnote?

- Prompt coverage: which of the questions your buyers ask does Claude cite you for?

- Competitor comparison: who is Claude citing instead of you, and for which prompts?
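Once you have a set of responses, these four metrics reduce to a few lines of bookkeeping. This sketch takes a dict mapping each prompt to the response text, however you collected it, and computes citation frequency, an average rank of first mention as a rough proxy for citation position, the prompts you are covered for, and which competitors appear when you don't. Brand matching is whole-word and case-insensitive, an assumption that will miss abbreviations and product-name variants.

```python
import re
from collections import Counter
from typing import Optional


def first_mention(text: str, name: str) -> Optional[int]:
    """Character offset of the first whole-word, case-insensitive mention, or None."""
    match = re.search(rf"\b{re.escape(name)}\b", text, re.IGNORECASE)
    return match.start() if match else None


def visibility_metrics(answers: dict[str, str], brand: str,
                       competitors: list[str]) -> dict:
    """Summarize tracked answers (prompt -> response text) for one brand."""
    covered, ranks, rivals = [], [], Counter()
    for prompt, text in answers.items():
        ours = first_mention(text, brand)
        found = {c: first_mention(text, c) for c in competitors}
        present = {c: pos for c, pos in found.items() if pos is not None}
        if ours is None:
            rivals.update(present.keys())  # count each rival once per prompt
            continue
        covered.append(prompt)
        # Rank 1 means the brand is named before every competitor in that answer.
        ranks.append(1 + sum(1 for pos in present.values() if pos < ours))
    total = len(answers)
    return {
        "citation_frequency": len(covered) / total if total else 0.0,
        "avg_rank_when_cited": sum(ranks) / len(ranks) if ranks else None,
        "prompt_coverage": covered,
        "competitors_when_absent": dict(rivals),
    }
```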

A practical action plan

Rather than leaving you with a list of abstract principles, here's a concrete sequence:

Week 1-2: Audit your existing content for Claude-readiness. Go through your top pages, whitepapers, and case studies. For each one, ask: does this contain specific, verifiable claims? Is it structured with clear headings? Does it directly answer questions buyers ask? Flag everything that fails these tests.

Week 3-4: Rewrite your highest-priority assets. Start with the content that appears in your sales process -- the documents buyers actually read during evaluation. Add specific numbers, clear entity definitions, and FAQ sections that mirror real buyer questions.

Month 2: Build your off-site presence. Identify the publications, analyst reports, and comparison sites where your competitors are mentioned but you aren't. Develop a plan to get cited in those places.

Month 3+: Track, iterate, and expand. Set up AI visibility tracking so you can see which prompts Claude cites you for and which it doesn't. Use that data to prioritize your next round of content creation.

The brands that will win in Claude citations aren't necessarily the ones with the biggest content teams. They're the ones that understand what Claude is actually looking for -- and create content that delivers it.

The discipline of optimizing for Claude is still young enough that most of your competitors haven't figured it out. That's actually the opportunity. The brands that invest in structured, verifiable, enterprise-grade content now will have a meaningful head start by the time the rest of the market catches up.