Key takeaways

- Brand visibility trackers tell you where you appear in AI responses; answer engine optimizers help you change that picture by improving your content

- Most tools in 2026 lean heavily toward monitoring -- they show you data but leave the fixing to you

- A handful of platforms now combine tracking and optimization in one workflow, which is worth knowing about before you buy two separate subscriptions

- Whether you need both depends on your current AI visibility maturity -- teams just starting out often need a tracker first; teams already tracking need an optimizer next

- The tools that close the loop between "you're invisible here" and "here's the content to fix it" are the ones delivering real ROI right now

The category is getting crowded fast. Scroll through any marketing newsletter in 2026 and you'll see a new "AI visibility" tool announced every other week. Some call themselves brand monitors. Some call themselves GEO platforms. Some use "answer engine optimization" in their tagline. Most of them do roughly the same thing: they run your target prompts through ChatGPT or Perplexity, check if your brand shows up, and give you a score.

That's useful. But it's not the whole picture.

The real question isn't "which category of tool is better?" It's "what do I actually need to do next?" And the answer to that depends on where you are in your AI search journey.

What brand visibility trackers actually do

A brand visibility tracker monitors how often your brand appears in AI-generated responses. You give it a list of prompts -- things like "best CRM for small businesses" or "top project management tools" -- and it runs those prompts across AI models on a schedule, then logs whether your brand was mentioned, cited, or recommended.

The better trackers go further. They show you:

- Which AI models mention you (ChatGPT vs Perplexity vs Claude vs Google AI Overviews)

- Your share of voice compared to competitors

- Sentiment -- whether the mention is positive, neutral, or negative

- Which sources the AI cited when it mentioned you
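To make the mechanics concrete, here is a minimal sketch of the mention-logging and share-of-voice step, assuming you already have the raw text of each AI response saved. The brand names, the sample responses, and the function names `mentioned_brands` and `share_of_voice` are all hypothetical, and real trackers likely use more robust matching than a whole-word search.

```python
import re
from collections import Counter

def mentioned_brands(response: str, brands: list[str]) -> set[str]:
    """Return the brands that appear in a response as whole words (case-insensitive)."""
    found = set()
    for brand in brands:
        pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
        if re.search(pattern, response, flags=re.IGNORECASE):
            found.add(brand)
    return found

def share_of_voice(responses: list[str], brands: list[str]) -> dict[str, float]:
    """Each brand's fraction of all brand mentions, counting one mention per response."""
    counts = Counter()
    for text in responses:
        counts.update(mentioned_brands(text, brands))
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}

# Hypothetical logged responses for a handful of prompts
responses = [
    "For small teams, AcmeCRM and BoltCRM are both solid choices.",
    "Many reviewers recommend BoltCRM for its pricing.",
    "Popular options include BoltCRM, AcmeCRM, and CedarCRM.",
]
brands = ["AcmeCRM", "BoltCRM", "CedarCRM"]
sov = share_of_voice(responses, brands)
```

Counting one mention per response keeps a single long answer from inflating a brand's share; whether that is the right choice depends on how you want to weight repeated mentions.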

Tools like Otterly.AI and Peec AI sit firmly in this camp. They're affordable, easy to set up, and good at giving you a dashboard view of your AI presence.

Nightwatch is another one worth knowing -- it combines traditional rank tracking with AI visibility monitoring, which makes it practical for teams that still care about Google rankings alongside their AI presence.

The limitation of pure trackers is that they stop at the data. You can see you're invisible for a prompt. You don't know why, and the tool doesn't help you fix it.

What answer engine optimizers actually do

Answer engine optimization (AEO) is about structuring your content so AI models can extract it, trust it, and cite it. An AEO-focused tool goes beyond tracking to help you understand why you're not being cited and what to do about it.

That might include:

- Content gap analysis (which topics are AI models answering without citing you?)

- Recommendations for how to restructure existing pages

- Identifying which third-party sources (Reddit threads, YouTube videos, review sites) AI models are pulling from instead of you

- Generating new content designed specifically to get cited
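The first item, content gap analysis, boils down to a set comparison: find the prompts where a competitor shows up and you don't. The sketch below assumes you already have, for each prompt, the set of brands that appeared in the responses. The data and the `answer_gaps` function are hypothetical, and this is not how any particular vendor implements the feature.

```python
def answer_gaps(visibility: dict[str, set[str]], our_brand: str) -> dict[str, list[str]]:
    """Map each prompt where we're absent but a competitor appears to the competitors present."""
    gaps = {}
    for prompt, brands in visibility.items():
        competitors = sorted(brands - {our_brand})
        if our_brand not in brands and competitors:
            gaps[prompt] = competitors
    return gaps

# Hypothetical visibility data: prompt -> brands seen in AI responses
visibility = {
    "best CRM for small businesses": {"BoltCRM", "CedarCRM"},
    "top project management tools": {"AcmeCRM", "BoltCRM"},
    "crm with invoicing": set(),
}
gaps = answer_gaps(visibility, "AcmeCRM")
```

Prompts where nobody appears are left out on purpose: they may be open opportunities, but they aren't gaps relative to a competitor.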

The distinction matters because tracking and optimizing require different workflows. Tracking is passive -- you set it up and check the dashboard. Optimization is active -- you analyze gaps, create content, publish it, and track whether it moved the needle.

The spectrum: where tools actually fall

Most tools in 2026 fall somewhere on a spectrum between "pure tracker" and "full optimizer." Here's an honest look at where the major players sit:

| Tool | Primary focus | Content generation | Competitor gap analysis | AI crawler logs | Best for |

|---|---|---|---|---|---|

| Otterly.AI | Monitoring | No | Basic | No | Budget brand monitoring |

| Peec AI | Monitoring | No | Basic | No | Multi-language tracking |

| Nightwatch | SEO + AI monitoring | No | No | No | Agencies with SEO + AI needs |

| Profound | Monitoring + analytics | Limited | Yes | No | Enterprise brand tracking |

| AthenaHQ | Monitoring + optimization | No | Yes | No | Mid-market brands |

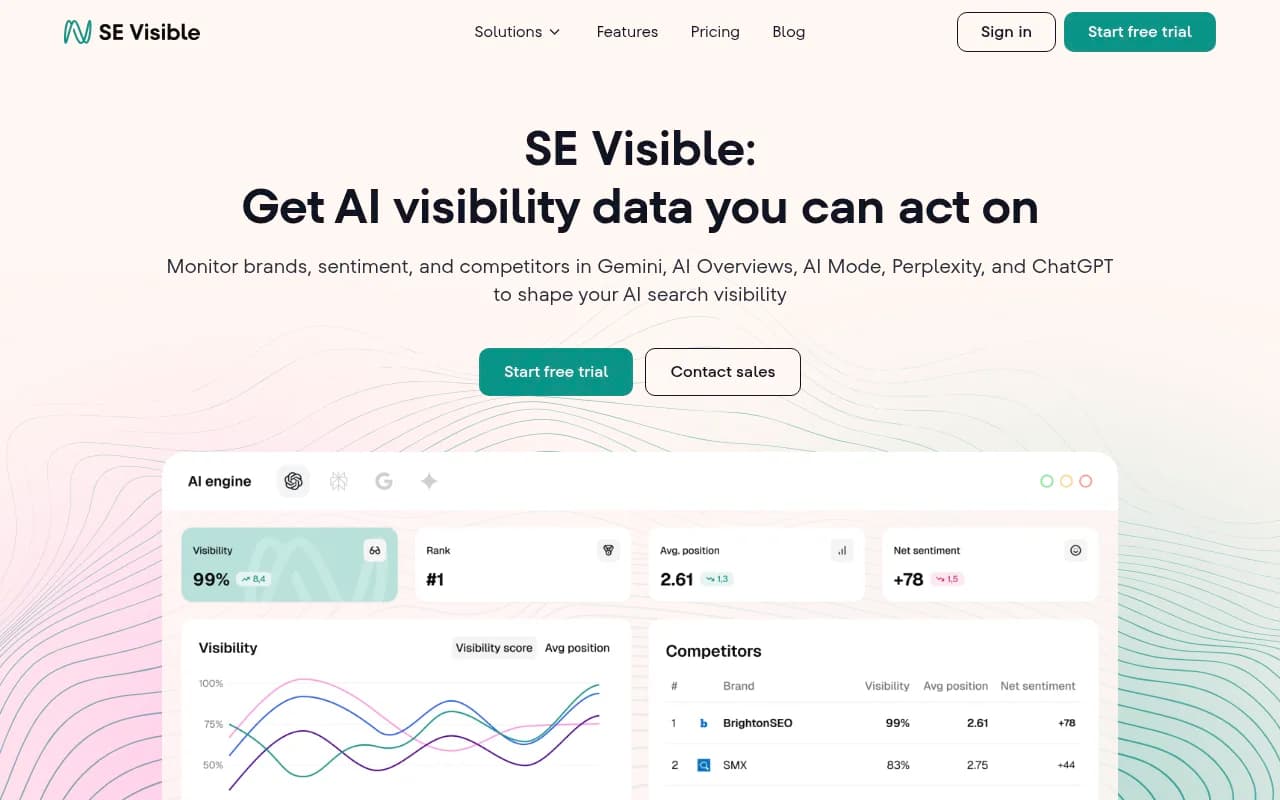

| SE Visible | Monitoring + sentiment | No | Yes | No | CMOs and brand specialists |

| Promptwatch | Full optimization loop | Yes (AI writing agent) | Yes (Answer Gap Analysis) | Yes | Teams that want to track and fix |

Promptwatch is the one that stands out here because it's built around closing the loop -- not just showing you where you're invisible, but helping you create the content that fixes it.

The case for starting with just a tracker

If you've never tracked your AI visibility before, starting with a monitoring tool makes sense. You need a baseline before you can optimize anything.

A tracker answers the foundational questions:

- Are we being cited at all?

- Which prompts are we visible for?

- Which competitors are consistently appearing where we're not?

- Which AI models are most relevant to our audience?

Spending $29-$99/month on a monitoring tool for 60-90 days gives you enough data to make smarter decisions. You'll know which gaps are worth closing and which prompts are high-volume enough to justify content investment.

The risk of jumping straight into optimization without this baseline is that you end up creating content for prompts that don't matter, or optimizing for AI models your customers don't actually use.

The case for optimization-first (if you already have data)

If you've been tracking for a while and you have a clear picture of where you're invisible, the monitoring dashboard stops being useful. You already know the problem. What you need is a way to fix it.

This is where the category split becomes a real cost issue. If you're paying for a tracker that shows you gaps but can't help you close them, you end up exporting data to a spreadsheet, briefing a content team, writing articles, publishing them, and then manually checking whether your visibility improved. That loop takes weeks and requires coordinating multiple people and tools.

Platforms that combine gap analysis with content generation compress that cycle significantly. Promptwatch's Answer Gap Analysis, for example, shows you the specific prompts where competitors are visible but you're not -- and then the built-in AI writing agent can generate content designed to close those gaps, grounded in data from 880M+ analyzed citations. You publish, track, and see whether the needle moves.

That's a meaningfully different workflow from "here's your score, good luck."

Do you actually need both?

The honest answer: probably not, if you pick the right tool.

The reason people end up running two separate subscriptions is that they chose a monitoring tool first (because it was cheaper and easier to justify) and then realized they needed optimization capabilities that the tracker doesn't offer. So they add a second tool.

If you're starting fresh in 2026, it's worth asking whether the tool you're evaluating can grow with you. A tracker that costs $29/month sounds appealing until you realize you'll need to add a $200/month optimizer in six months anyway.
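A quick back-of-the-envelope calculation shows what that means over a year. It uses the $29 and $200 figures from the paragraph above and assumes the optimizer is added at the start of month seven; the `stacked_cost` helper is hypothetical.

```python
def stacked_cost(tracker_monthly: float, optimizer_monthly: float,
                 optimizer_start_month: int, horizon_months: int = 12) -> float:
    """Total spend when the optimizer is added at the start of a given month (1-indexed)."""
    optimizer_months = max(0, horizon_months - optimizer_start_month + 1)
    return tracker_monthly * horizon_months + optimizer_monthly * optimizer_months

# Tracker for all 12 months, optimizer added after six months (months 7-12)
total = stacked_cost(29, 200, optimizer_start_month=7)

# Monthly price below which a single combined platform is cheaper over the same year
break_even = total / 12
```

Under those assumptions the stacked setup costs $1,548 for the year, so a single platform priced below about $129/month comes out cheaper, before you count the time spent moving data between tools.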

That said, there are legitimate reasons to run separate tools:

- Your tracker has deep integrations with your existing reporting stack (Looker Studio, custom dashboards) that you don't want to rebuild

- You need enterprise-grade monitoring features (custom personas, multi-region, multi-language) that your optimization platform doesn't match

- You're an agency managing multiple clients with different needs -- some just need monitoring, some need the full optimization workflow

In those cases, running a lightweight tracker alongside a more capable optimization platform can make sense. Just be honest about whether you're actually using both or paying for one you rarely open.

What to look for in 2026 specifically

The category has matured enough that "does it track AI mentions" is table stakes. Here's what separates genuinely useful tools from dashboard theater:

Prompt volume and difficulty data. Knowing you're invisible for a prompt is only useful if you know whether that prompt gets enough queries to matter. Tools that surface volume estimates and difficulty scores let you prioritize instead of guessing.
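One simple way to turn those two numbers into a ranking is to discount volume by difficulty. The formula, the field names, and the sample figures below are illustrative assumptions, not a standard metric or any tool's actual scoring.

```python
from dataclasses import dataclass

@dataclass
class PromptGap:
    prompt: str
    monthly_volume: int  # estimated queries per month, as reported by your tool
    difficulty: float    # 0.0 (easy to win) to 1.0 (very hard)

def priority(gap: PromptGap) -> float:
    """Volume discounted by difficulty; higher means more worth pursuing."""
    return gap.monthly_volume * (1.0 - gap.difficulty)

# Hypothetical gaps pulled from a tracker export
gaps = [
    PromptGap("best CRM for small businesses", 5000, 0.9),
    PromptGap("crm with invoicing", 1200, 0.3),
    PromptGap("crm for dog groomers", 300, 0.1),
]
ranked = sorted(gaps, key=priority, reverse=True)
```

Here the mid-volume, low-difficulty prompt outranks the high-volume one that is nearly impossible to win, which is the kind of trade-off a raw visibility score hides.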

Source and citation analysis. When AI models answer with citations, they're pulling from specific sources. Knowing which pages, Reddit threads, or YouTube videos are being cited instead of you tells you exactly where to focus your content and distribution efforts.

AI crawler logs. This one is underappreciated. If AI crawlers can't access your pages, or they're encountering errors, your content won't get indexed regardless of how good it is. Seeing real-time logs of which AI crawlers are hitting your site (and which pages they're reading or skipping) is genuinely diagnostic information.
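If your tool doesn't offer this, you can get a rough version from your own server logs. The sketch below assumes access logs in the common "combined" format and matches a few user-agent tokens that AI vendors have published (GPTBot, OAI-SearchBot, ChatGPT-User, ClaudeBot, PerplexityBot). The list is incomplete and changes over time, and the sample log lines are made up.

```python
import re
from collections import defaultdict

# Partial list of published AI crawler user-agent tokens (an assumption; verify against vendor docs)
AI_CRAWLER_TOKENS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot"]

# Combined Log Format: ip - - [time] "METHOD path PROTO" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"$'
)

def crawler_hits(lines):
    """Group (path, status) pairs by the AI crawler token found in the user agent."""
    hits = defaultdict(list)
    for line in lines:
        match = LOG_LINE.match(line.strip())
        if not match:
            continue  # skip lines that don't follow the expected format
        agent = match["agent"].lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in agent:
                hits[token].append((match["path"], int(match["status"])))
                break
    return dict(hits)

def error_paths(hits):
    """Paths where any AI crawler received a 4xx or 5xx response."""
    return sorted({path for pairs in hits.values() for path, status in pairs if status >= 400})

# Made-up sample log lines
sample = [
    '203.0.113.5 - - [12/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.6 - - [12/Jan/2026:10:00:05 +0000] "GET /docs/setup HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '203.0.113.7 - - [12/Jan/2026:10:00:09 +0000] "GET /blog/aeo HTTP/1.1" 200 8040 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.2 - - [12/Jan/2026:10:00:12 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
hits = crawler_hits(sample)
broken = error_paths(hits)
```

User-agent strings can be spoofed, so treat this as a first pass; pages that show up in `broken` are still worth checking, since a crawler that gets an error can't read the content.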

Traffic attribution. Visibility scores are nice. Revenue impact is better. The best platforms connect AI citations to actual traffic and conversions -- either through a code snippet, Google Search Console integration, or server log analysis.
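The server-log route can be approximated by classifying the referrer on each visit. This sketch assumes the AI product passes a referrer at all, which is not guaranteed, and the host list is a small illustrative sample rather than a complete mapping. The `ai_source` and `attribution_summary` names are hypothetical.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Illustrative referrer hosts; extend for the AI products your audience uses
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
}

def ai_source(referrer: str) -> Optional[str]:
    """Return the AI product a referrer URL belongs to, or None if it isn't one."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, name in AI_REFERRER_HOSTS.items():
        if host == domain or host.endswith("." + domain):
            return name
    return None

def attribution_summary(visits):
    """Count visits and conversions per AI source from (referrer, converted) pairs."""
    visit_counts, conversion_counts = Counter(), Counter()
    for referrer, converted in visits:
        source = ai_source(referrer)
        if source is None:
            continue
        visit_counts[source] += 1
        if converted:
            conversion_counts[source] += 1
    return {s: (visit_counts[s], conversion_counts[s]) for s in visit_counts}

# Made-up visit records: (referrer URL, did the visit convert?)
visits = [
    ("https://chatgpt.com/", True),
    ("https://www.perplexity.ai/search?q=best+crm", False),
    ("https://chatgpt.com/", False),
    ("https://www.google.com/", True),
    ("", False),
]
summary = attribution_summary(visits)
```

Matching on the exact host or a dot-prefixed suffix avoids counting lookalike domains that merely end with the same letters.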

Content generation grounded in citation data. Generic AI writing tools can produce articles. What you actually need is content engineered to get cited -- structured around the specific questions AI models are answering, informed by what sources they currently trust.

A practical decision framework

Here's a simple way to think about where you are and what you need:

You've never tracked AI visibility -- Start with a monitoring tool. Otterly.AI or Peec AI are fine entry points. Spend 60-90 days building a baseline.

You've been tracking for a few months and have clear gap data -- Move to a platform that can help you act on it. This is where Promptwatch, AthenaHQ, or Profound become relevant depending on your budget and team size.

You're tracking and optimizing but can't connect it to revenue -- Focus on traffic attribution. Look for platforms with GSC integration, server log analysis, or a tracking snippet that connects AI citations to actual visits and conversions.

You're an agency managing multiple clients -- You probably need a platform with multi-site support, white-label reporting, and enough prompt capacity to cover diverse client needs. Promptwatch's agency and enterprise tiers are built for this; so is Profound at the higher end.
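If it helps to see the framework as a single decision path, here is one way to write it down. The 60-day threshold comes from the low end of the 60-90 day baseline mentioned above; the function name, the parameter names, and the final "keep iterating" fallback are assumptions added for illustration.

```python
def next_step(tracking_days: int, is_optimizing: bool,
              has_attribution: bool, is_agency: bool = False) -> str:
    """Suggest which kind of tool to look at next, following the four cases above."""
    if is_agency:
        return "multi-client platform"   # multi-site support, white-label reporting
    if tracking_days < 60:
        return "monitoring tool"         # build a baseline first
    if not is_optimizing:
        return "optimization platform"   # act on the gap data you already have
    if not has_attribution:
        return "traffic attribution"     # connect citations to visits and revenue
    return "keep iterating"              # fallback not covered by the article

# A team with four months of tracking data that hasn't started optimizing yet
suggestion = next_step(tracking_days=120, is_optimizing=False, has_attribution=False)
```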

The tools worth knowing about beyond the big names

A few other tools are worth a look, depending on your situation:

GetCito and Rankshift are both solid options for teams that want straightforward AI visibility tracking without a lot of complexity.

Trakkr.ai and GEO Metrics are newer entrants that cover the major AI models at competitive price points.

For e-commerce specifically, Azoma focuses on ChatGPT shopping recommendations and product carousels -- a niche that most general-purpose trackers don't cover well.

And if you're an enterprise team that needs to connect AI visibility to broader marketing intelligence, BrightEdge and seoClarity both have AI tracking capabilities built into their existing enterprise SEO platforms.

The bottom line

The tracker vs optimizer framing is a bit of a false choice. What you actually need is a workflow that goes from "here's where you're invisible" to "here's what to do about it" to "here's whether it worked." Some tools handle one step. A few handle all three.

In 2026, the brands getting real ROI from AI search investment are the ones that closed that loop -- not the ones with the prettiest dashboards. Start with monitoring if you have no baseline. Move to optimization as soon as you know what to fix. And when you're evaluating tools, ask whether the platform helps you take the next step or just shows you more data about the problem you already know you have.