Key takeaways

- ChatGPT, Perplexity, and Claude have fundamentally different citation mechanics -- a strategy that works on one won't automatically transfer to another.

- Perplexity is the most trackable platform for attribution because it shows sources inline; ChatGPT and Claude rely more heavily on training data and crawled content.

- The biggest mistake brands make is monitoring visibility without acting on the gaps -- tracking tools are only useful if you're also creating content based on what you find.

- Platform-specific optimization isn't optional in 2026. Each AI model has different source preferences, crawl behaviors, and response formats.

- A handful of purpose-built GEO tools now let you track visibility per model, identify content gaps, and generate content engineered to get cited.

If you've been treating AI search visibility as one monolithic thing, you're already behind. ChatGPT, Perplexity, and Claude don't work the same way. They pull from different sources, weight authority differently, and respond to different types of content. Optimizing for one without understanding the others is like running a Google SEO campaign and wondering why it's not moving your Bing numbers.

This guide breaks down how each platform actually works, what makes content get cited on each one, and which tools are worth using to track and improve your visibility in 2026.

How each platform decides what to cite

Before getting into tools, it's worth understanding the mechanics. These three platforms are genuinely different in how they surface information.

ChatGPT

ChatGPT's responses come primarily from its training data, supplemented by web browsing when enabled. This means your content needs to have been crawled and indexed before it can influence responses -- and even then, there's a lag. The model tends to favor well-established domains, content that's been widely linked to, and pages that directly answer specific questions.

One thing that's become clearer in 2026 testing: ChatGPT is more likely to recommend brands that appear consistently across multiple sources. If your brand shows up in listicles, review sites, Reddit threads, and your own well-structured content, that signal compounds. A single well-written page isn't enough.

ChatGPT also has a shopping layer now. For product queries, it surfaces brand recommendations in a carousel format -- separate from the conversational response. Getting into that requires a different optimization approach than getting cited in a text answer.

Perplexity

Perplexity is a live search engine. It queries the web in real time and cites sources inline, which makes it the most transparent of the three. You can see exactly which pages it's pulling from, which makes attribution far more trackable.

The implication: Perplexity rewards fresh, well-structured content that's currently indexed and accessible. If your page has crawl issues, Perplexity won't find it. If your content is buried in JavaScript or behind login walls, it won't get cited. Technical SEO fundamentals matter here more than on the other two platforms.

Perplexity also tends to favor pages that directly answer the query -- not pages that dance around it. Concise, structured answers (with headers, bullet points, and clear factual claims) consistently outperform long-form narrative content in Perplexity citations.

Claude

Claude is the most opaque of the three. Anthropic doesn't publish much about how Claude selects sources, and Claude doesn't always cite sources at all. What we do know from testing: Claude tends to be more conservative about recommending specific brands, and it weights authoritative, well-sourced content heavily.

Claude is also trained on a large corpus of web content, but its web access (via Claude.ai) is more selective. It tends to pull from sources it deems credible -- established publications, official documentation, and content with clear authorship signals.

For brands trying to rank in Claude, the play is authority-building: getting mentioned in credible third-party sources, building clear E-E-A-T signals on your own content, and making sure your brand appears in the types of sources Claude trusts.

Platform comparison: what matters for ranking

| Factor | ChatGPT | Perplexity | Claude |
|---|---|---|---|
| Primary source | Training data + web | Live web search | Training data + selective web |
| Citation transparency | Low (no inline sources by default) | High (inline citations) | Low (often no citations) |
| Freshness sensitivity | Medium | Very high | Low-medium |
| Technical SEO impact | Medium | High | Medium |
| Authority signals | High | Medium | Very high |
| Reddit/forum influence | High | Medium | Medium |
| Structured content preference | Medium | High | Medium |
| Shopping/product layer | Yes (ChatGPT Shopping) | Limited | No |
| Best for trackable attribution | Medium | High | Low |

Why platform-specific strategy matters

A Medium post from Rentier Digital tested all three platforms by asking each one to recommend a specific SaaS product. Perplexity found it immediately because it searches live. ChatGPT and Claude didn't mention it at all -- because the brand hadn't built enough presence in the training data sources those models relied on.

This is the core problem. Most brands are optimizing for "AI search" as if it's one thing. It isn't. You need to think about:

- Which platforms your target customers actually use for the queries you care about

- What sources each platform trusts and how to get into them

- Whether your content is technically accessible to each platform's crawlers

- How to measure visibility per platform, not just in aggregate
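To make "per platform, not just in aggregate" concrete, here is a minimal sketch of the bookkeeping involved. It assumes you have already collected responses by hand or with a tracking tool; the function name, the tuple layout, and the brand names are all hypothetical, and a plain substring match is a crude stand-in for real mention detection.

```python
from collections import defaultdict

def visibility_by_platform(results, brand):
    """Share of responses per platform that mention the brand.

    results: iterable of (platform, prompt, response_text) tuples.
    This layout is an assumption for illustration, not any tool's format.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for platform, _prompt, response in results:
        totals[platform] += 1
        # Case-insensitive substring match; real tracking needs more care.
        if brand.lower() in response.lower():
            hits[platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}

# Hypothetical responses collected for a made-up brand, "Acme".
sample_results = [
    ("ChatGPT", "best crm for startups", "Try Acme or RivalA."),
    ("ChatGPT", "crm pricing comparison", "RivalA is a popular choice."),
    ("Perplexity", "best crm for startups", "Acme is a solid option."),
    ("Claude", "best crm for startups", "It depends on your needs."),
]
print(visibility_by_platform(sample_results, "Acme"))
```

An aggregate score over this sample would hide the fact that the brand is invisible on one platform and fully visible on another, which is exactly the distinction that matters here.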

The tools worth using in 2026

Tracking visibility across platforms

The first step is knowing where you stand. You can't optimize what you can't measure, and manual testing across three platforms at scale isn't realistic.

Promptwatch tracks visibility across 10 AI models including ChatGPT, Perplexity, and Claude, with page-level data showing which of your pages are being cited, how often, and by which model. What makes it different from most tracking tools is the action layer -- it doesn't just show you your visibility score, it shows you the specific prompts where competitors appear and you don't, then helps you create content to close those gaps.

For teams that want a more focused tracking view, Trakkr.ai monitors brand visibility across ChatGPT, Claude, and Perplexity specifically.

LLMrefs is another option worth looking at -- it tracks citations across the major LLMs and gives you a clear picture of which sources are being pulled into responses.

Finding content gaps (the part most tools skip)

Knowing you're invisible is only useful if you know why. The most valuable capability in 2026 isn't a visibility dashboard -- it's answer gap analysis. This means identifying the specific prompts where competitors are being cited and you aren't, then understanding what content you'd need to create to change that.
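At its simplest, answer gap analysis is a set comparison. The sketch below shows that core idea only; it is not how any particular tool implements it, and the function name, data shape, and brand names are hypothetical.

```python
def answer_gaps(citations, brand, competitors):
    """Find prompts where at least one competitor is cited and the brand is not.

    citations: dict mapping each prompt to the set of brands cited in the
    AI response. This shape is an assumption for illustration.
    """
    competitor_set = set(competitors)
    gaps = {}
    for prompt, cited in citations.items():
        rivals = cited & competitor_set
        if rivals and brand not in cited:
            gaps[prompt] = sorted(rivals)
    return gaps

# Hypothetical citation data for a made-up brand, "Acme".
sample_citations = {
    "best crm for startups": {"RivalA", "RivalB"},
    "crm pricing comparison": {"Acme", "RivalA"},
    "what is a crm": set(),
}
print(answer_gaps(sample_citations, "Acme", ["RivalA", "RivalB"]))
```

The output is a list of prompts to write for, each paired with the competitors currently winning it. Working out which content would close each gap is the harder part, and it is not something this sketch attempts.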

Promptwatch's Answer Gap Analysis does this directly: it surfaces the exact prompts where your competitors appear in AI responses but you don't, along with the content topics and angles that are missing from your site. This is the difference between a monitoring tool and an optimization tool.

Profound also has solid gap analysis capabilities and is worth evaluating if you're at the enterprise end.

AthenaHQ tracks visibility across multiple AI engines and has decent competitive analysis features, though it's more monitoring-focused than action-oriented.

Content creation engineered for AI citation

Once you know what's missing, you need to create content that actually gets cited. This is where most SEO content tools fall short -- they're optimized for Google rankings, not AI citation patterns.

The distinction matters. AI models tend to cite content that:

- Directly answers specific questions (not content that buries the answer)

- Has clear structure (headers, lists, defined terms)

- Comes from sources with established authority signals

- Covers topics comprehensively without padding

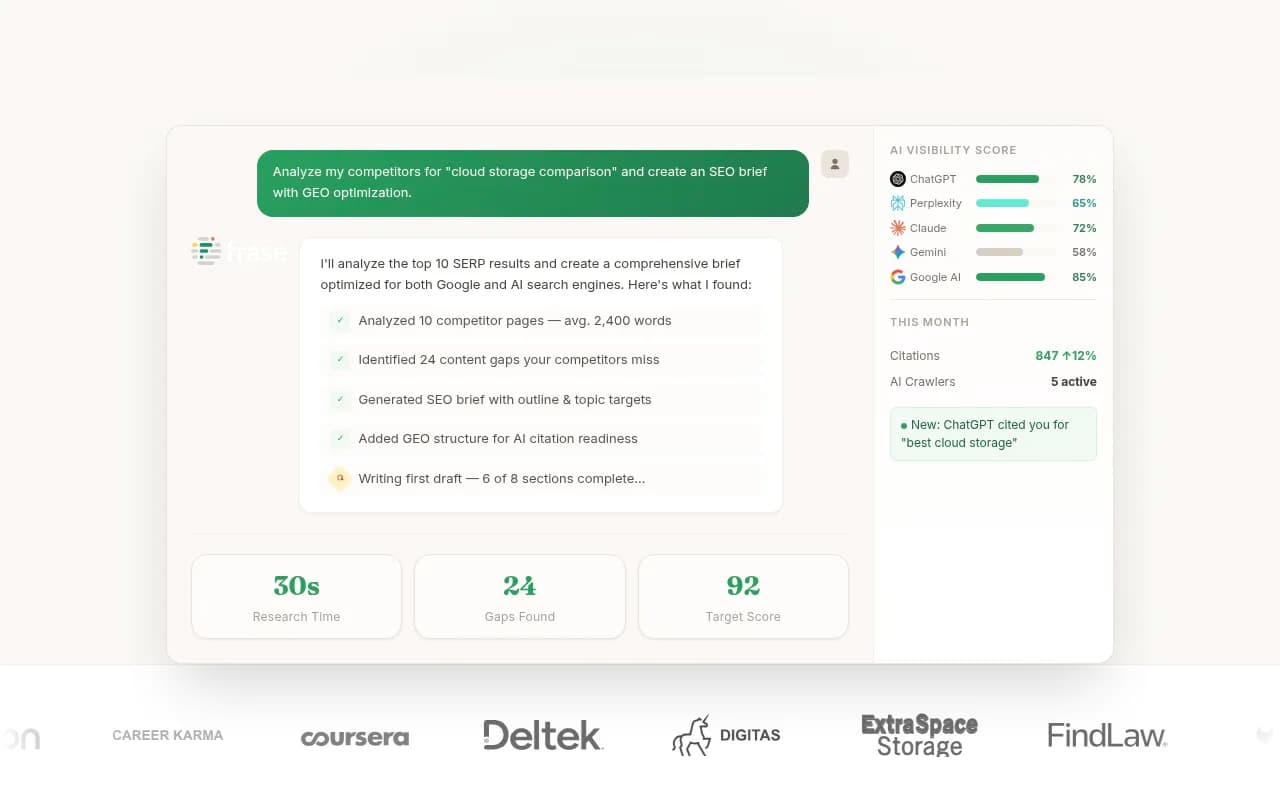

Promptwatch has a built-in AI writing agent that generates content grounded in citation data -- it analyzes 880M+ citations to understand what types of content get cited for specific prompts, then generates articles, listicles, and comparisons based on that data. This isn't generic content generation; it's content built around what AI models actually want to cite.

For more general AI content creation with SEO grounding, Frase is solid for research-backed content briefs.

Clearscope remains one of the better tools for content optimization -- it's primarily Google-focused but the semantic coverage it builds into content also helps with AI citation.

Technical accessibility: making sure AI crawlers can find you

This is the most underrated part of AI search optimization, especially for Perplexity. If AI crawlers can't access your content, none of the above matters.
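One quick check you can run yourself is whether your robots.txt blocks the AI crawlers in the first place. The sketch below uses Python's standard `urllib.robotparser`. The bot tokens are the ones named in this article, so confirm the current user-agent strings in each vendor's documentation; the sample robots.txt and URL are made up.

```python
from urllib.robotparser import RobotFileParser

# Crawler tokens as named in this article; verify against vendor docs.
AI_BOTS = ["GPTBot", "PerplexityBot", "ClaudeBot"]

def bots_allowed(robots_txt, page_url):
    """Return, for each AI bot, whether robots.txt permits fetching page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, page_url) for bot in AI_BOTS}

# Hypothetical robots.txt that blocks one AI crawler and allows everyone else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(bots_allowed(sample_robots, "https://example.com/pricing"))
```

This only tells you what robots.txt permits. It says nothing about JavaScript rendering, login walls, or server errors, which need a proper crawl audit.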

Promptwatch includes AI Crawler Logs -- real-time logs of AI crawlers (OpenAI's GPTBot, Perplexity's PerplexityBot, Anthropic's ClaudeBot, etc.) hitting your site. You can see which pages they're reading, which ones they're skipping, and any errors they're encountering. Most competitors don't offer this at all.
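If you have raw server access logs, a rough version of the same view is easy to approximate. This is a sketch under assumptions, not a description of how Promptwatch works: it assumes logs in the common "combined" format, matches bots by substring in the user-agent, and uses invented sample lines with simplified user-agent strings.

```python
import re
from collections import Counter

# Crawler tokens as named in this article; verify against vendor docs.
AI_BOTS = ("GPTBot", "PerplexityBot", "ClaudeBot")

# Combined log format: "METHOD path PROTO" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<ua>[^"]*)"'
)

def ai_bot_hits(log_lines):
    """Count requests per (bot, path, status) for known AI crawlers."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # Skip lines that are not in the expected format.
        for bot in AI_BOTS:
            if bot in match["ua"]:
                hits[(bot, match["path"], int(match["status"]))] += 1
    return hits

# Hypothetical log lines; IPs, paths, and user-agents are illustrative only.
sample_log = [
    '203.0.113.7 - - [10/Jan/2026:12:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "GPTBot/1.0"',
    '203.0.113.8 - - [10/Jan/2026:12:00:05 +0000] "GET /blog/guide HTTP/1.1" 404 312 "-" "PerplexityBot/1.0"',
    '203.0.113.9 - - [10/Jan/2026:12:00:09 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
]
for (bot, path, status), count in sorted(ai_bot_hits(sample_log).items()):
    print(bot, path, status, count)
```

Non-200 statuses for a bot on a page you care about are the first thing to investigate. Keep in mind that user-agent strings can be spoofed, so treat these counts as a signal rather than proof.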

DarkVisitors is a useful free/low-cost tool for understanding which AI bots are crawling your site and managing their access.

For deeper technical SEO auditing that affects crawlability, Screaming Frog remains the standard.

Platform-specific: ChatGPT Shopping

If you're in e-commerce or have products that appear in ChatGPT's shopping carousels, this is a separate optimization track. ChatGPT Shopping surfaces brand recommendations in a visual carousel -- getting into it requires structured product data, strong brand presence across review sites, and ideally some presence in the sources ChatGPT trusts for product recommendations.
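One widely used form of structured product data is schema.org `Product` markup embedded as JSON-LD. Whether and how ChatGPT Shopping reads it is an assumption here, not something this guide has verified. The sketch below just builds a minimal JSON-LD object; the helper name and the product details are hypothetical.

```python
import json

def product_jsonld(name, brand, price, currency, url):
    """Build a minimal schema.org Product object for a JSON-LD script tag."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "brand": {"@type": "Brand", "name": brand},
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "url": url,
            "availability": "https://schema.org/InStock",
        },
    }

# Hypothetical product; embed the printed JSON in a
# <script type="application/ld+json"> tag on the product page.
markup = product_jsonld(
    "Trail Runner 2", "Acme", 129.0, "USD", "https://example.com/trail-runner-2"
)
print(json.dumps(markup, indent=2))
```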

Promptwatch tracks ChatGPT Shopping appearances specifically, which is rare -- most tools don't cover this layer at all.

Azoma is worth looking at if ChatGPT Shopping is a priority -- it's built specifically for AI shopping optimization.

Platform-specific strategies, in practice

To rank in ChatGPT

- Build presence across multiple source types: your own content, third-party reviews, Reddit discussions, industry publications. ChatGPT aggregates signals.

- Make sure your brand is mentioned in the types of sources ChatGPT was trained on -- Wikipedia-style factual content, established review sites, well-linked blog posts.

- For product visibility, optimize for ChatGPT Shopping separately from conversational responses.

- Use a tool that tracks training data influence, not just live search citations.

To rank in Perplexity

- Prioritize technical accessibility. Fix crawl errors, ensure fast load times, avoid JavaScript-heavy pages that block bots.

- Structure your content to answer questions directly. Perplexity rewards pages that get to the point.

- Freshness matters. Perplexity searches live, so outdated content is a real disadvantage.

- Monitor which of your pages are being cited and which aren't -- Perplexity's inline citations make this trackable.
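Because Perplexity shows its sources, checking whether your pages are among them comes down to URL matching. Here is a minimal sketch, assuming you have already copied the cited URLs out of a response; the function name and URLs are hypothetical, and it does not call any Perplexity API.

```python
from urllib.parse import urlparse

def cited_pages(cited_urls, domain):
    """Return the cited URLs that belong to the domain or its subdomains."""
    own = []
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            own.append(url)
    return own

# Hypothetical source list copied from one response.
sample_sources = [
    "https://www.example.com/pricing",
    "https://reviews.example.org/acme-vs-rivala",
    "https://blog.example.com/crm-guide",
    "https://notexample.com/crm",
]
print(cited_pages(sample_sources, "example.com"))
```

Run this across the prompts you care about and you get a per-page picture of what is being cited and what is not.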

To rank in Claude

- Focus on authority signals: authorship, citations, links from credible sources.

- Get your brand mentioned in the types of sources Claude trusts -- established publications, official documentation, well-sourced long-form content.

- Claude is more conservative about brand recommendations, so third-party validation matters more here than on the other platforms.

- Don't expect quick wins. Claude's reliance on training data means changes take longer to show up.

Putting it together: a practical workflow

The brands seeing real results in AI search in 2026 are running a loop, not a one-time optimization:

1. Track visibility per platform to understand where you're appearing and where you're not

2. Run answer gap analysis to find the specific prompts where competitors are visible and you aren't

3. Create content that directly addresses those gaps, structured for AI citation

4. Monitor crawler logs to make sure AI bots can actually access your new content

5. Track changes in visibility scores as the new content gets indexed and cited

6. Repeat

The tools that support this full loop are more valuable than point solutions that only handle one step. Most monitoring-only tools stop at step one and leave you to figure out the rest yourself.

Which tool should you start with?

If you're just getting started with AI search visibility tracking, here's a practical starting point:

| Situation | Recommended starting point |
|---|---|
| You want to track all three platforms in one place | Promptwatch |
| You're primarily focused on Perplexity attribution | Promptwatch or Trakkr.ai |
| You need content gap analysis + content creation | Promptwatch |
| You're in e-commerce and care about ChatGPT Shopping | Promptwatch + Azoma |
| You need to fix technical crawl issues first | Screaming Frog + DarkVisitors |
| You're at enterprise scale with complex reporting needs | Promptwatch (Business/Enterprise) or Profound |

The honest answer is that most brands should start by measuring before optimizing. Pick a tracking tool, run it for a few weeks, and understand your baseline visibility across ChatGPT, Perplexity, and Claude before spending time on content creation. You'll make much better decisions once you know where the actual gaps are.

What you want to avoid is the trap of treating AI search visibility as a set-and-forget SEO task. The platforms update frequently, citation patterns shift, and competitors are actively optimizing. The brands that treat this as an ongoing loop -- track, find gaps, create, track again -- are the ones building durable visibility.