Key takeaways

- The GEO tool market has split between monitoring-only dashboards and full optimization platforms, with an analysis-focused tier in between -- and the difference in value is significant

- Prompt intelligence (volume estimates, difficulty scores, query fan-outs) has become a standard expectation, not a premium feature

- Content generation grounded in real citation data is the biggest leap forward in 2025-2026, separating action-oriented platforms from passive trackers

- AI crawler log analysis is an emerging capability that most tools still don't offer -- but it's becoming critical for diagnosing why AI models ignore certain pages

- Traffic attribution from AI search remains the industry's messiest unsolved problem

A year ago, if you asked a marketer what GEO tools they were using, most would have looked at you blankly. Now there are over 50 platforms competing for the same budget line. That's fast. Maybe too fast.

The category has genuinely matured in some ways. But it's also accumulated a lot of noise -- tools that rebranded from social listening, tools that bolt an "AI visibility" tab onto a traditional SEO dashboard, and tools that are essentially a prompt runner with a bar chart. Telling them apart takes time most people don't have.

I've spent the last few months digging into what's actually changed since 2025, what's gotten meaningfully better, and where the whole category still has a long way to go.

The shift that started everything

The trigger for the GEO tool explosion wasn't gradual. It was a specific, measurable moment: organic search traffic started dropping for sites that were ranking perfectly fine on Google. Not from penalties. Not from algorithm updates. From people getting answers directly from ChatGPT, Perplexity, and Google's AI Overviews without clicking through.

McKinsey's October 2025 report put numbers to what marketers were already feeling anecdotally. AI-generated answers were absorbing a meaningful share of informational queries -- the kind that used to reliably send traffic to well-optimized blog posts and product pages.

That's when the demand for GEO tools went from "interesting experiment" to "urgent business need." And the market responded accordingly.

What's genuinely new in 2026

Prompt intelligence has become table stakes

In early 2025, most GEO tools would let you enter a list of prompts and tell you whether your brand appeared in the response. That was it. You'd get a yes/no, maybe a sentiment score, and a screenshot.

Now the better platforms give you prompt volume estimates, difficulty scores, and something called query fan-outs -- the idea that one top-level prompt ("best project management software") branches into dozens of sub-queries that AI models actually process before generating a response. Understanding that branching structure changes how you prioritize content work.

Tools like Promptwatch have built this into their core workflow, letting teams focus on high-volume, winnable prompts rather than guessing.

This shift matters because GEO without prioritization is just expensive guessing. Knowing that a prompt has high volume but low difficulty -- meaning your competitors aren't well-optimized for it either -- is genuinely actionable. That didn't exist as a standard feature 18 months ago.
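To make the "high volume, low difficulty" idea concrete, here is a minimal sketch of how a team might rank prompts once a tool exports those two numbers. The `PromptStat` fields, the 0-to-1 difficulty scale, the scoring formula, and the sample figures are all assumptions for illustration, not any vendor's actual model.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class PromptStat:
    prompt: str
    volume: int        # estimated monthly volume (hypothetical unit)
    difficulty: float  # assumed scale: 0.0 (open field) to 1.0 (heavily contested)


def priority(stat: PromptStat) -> float:
    """Score a prompt so that high volume and low difficulty rank first."""
    return stat.volume * (1.0 - stat.difficulty)


def rank_prompts(stats: List[PromptStat]) -> List[PromptStat]:
    """Return prompts ordered from most to least attractive."""
    return sorted(stats, key=priority, reverse=True)


# Made-up example data, not real measurements.
stats = [
    PromptStat("best project management software", 9000, 0.9),
    PromptStat("project management software for agencies", 2500, 0.3),
    PromptStat("free gantt chart tool", 1200, 0.6),
]
ranked = rank_prompts(stats)
for stat in ranked:
    print(f"{priority(stat):8.0f}  {stat.prompt}")
```

With these invented numbers, the mid-volume agency prompt outranks the head term, because the head term is so contested that most of its volume is discounted away. A real scoring model would probably weight the two inputs differently.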

Content generation grounded in citation data

This is the biggest functional leap the category has made. Early GEO tools were purely diagnostic. They'd tell you "ChatGPT doesn't mention your brand for this prompt" and leave you to figure out what to do about it.

The more advanced platforms now close that loop with built-in content generation -- but not generic AI writing. The good implementations analyze which sources AI models actually cite (specific pages, Reddit threads, YouTube videos, domains), then use that data to generate articles and comparisons engineered to earn citations. That's a fundamentally different product from a monitoring dashboard with a ChatGPT integration bolted on.

The distinction is worth being precise about: most tools still stop at diagnosis. A smaller number have built the full cycle -- find the gap, generate the content, track whether it worked.

AI crawler log analysis

This one is underappreciated and I think it'll be a bigger deal in 2026 than most people expect.

AI models don't just read your content once and remember it forever. They crawl, they re-crawl, they encounter errors, they skip pages. Understanding that behavior -- which pages ChatGPT's crawler is reading, how often Perplexity comes back, what errors it hits -- gives you a diagnostic layer that pure response monitoring can't provide.

A handful of platforms now offer real-time crawler logs for AI bots. It's still a relatively rare feature, but it's the kind of thing that separates a serious optimization platform from a visibility tracker.
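If your platform doesn't offer this yet, a rough version can be approximated from your own server access logs. The sketch below assumes logs in the common "combined" format and matches a short list of user-agent substrings (`GPTBot`, `PerplexityBot`, `ClaudeBot`). That list is an assumption and should be checked against each vendor's current crawler documentation, and the sample log lines are invented.

```python
import re
from collections import Counter, defaultdict

# Assumed user-agent substrings; verify against each vendor's documentation.
AI_BOTS = ("GPTBot", "PerplexityBot", "ClaudeBot")

# Combined log format: ... "METHOD path proto" status size "referer" "user-agent"
LINE_RE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize(lines):
    """Count hits and 4xx/5xx errors per AI bot and per path."""
    hits = defaultdict(Counter)
    errors = defaultdict(Counter)
    for line in lines:
        match = LINE_RE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        bot = next((b for b in AI_BOTS if b in match["agent"]), None)
        if bot is None:
            continue  # not one of the bots we are watching
        hits[bot][match["path"]] += 1
        if int(match["status"]) >= 400:
            errors[bot][match["path"]] += 1
    return hits, errors


# Invented sample lines for illustration only.
sample = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.5 - - [10/Jan/2026:10:05:00 +0000] "GET /old-guide HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [10/Jan/2026:11:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '192.0.2.9 - - [10/Jan/2026:12:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
hits, errors = summarize(sample)
for bot, paths in hits.items():
    print(bot, dict(paths), "errors:", dict(errors[bot]))
```

User-agent strings can be spoofed, so a production version would likely also verify source IP ranges. This sketch does not attempt that.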

Multi-model coverage has expanded significantly

In early 2025, most tools tracked two or three AI models, usually ChatGPT and Perplexity. Now the better platforms cover 10+ models: ChatGPT, Claude, Gemini, Perplexity, Google AI Overviews, Grok, DeepSeek, Copilot, Meta AI, Mistral.

This matters because different AI models have different citation behaviors. A brand that's well-cited by Perplexity might be nearly invisible on Claude. Tracking one model and assuming it generalizes is a real blind spot.
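A quick way to see that blind spot in your own data is to compute a mention rate per model rather than one blended number. This sketch assumes you already have response text collected per model and prompt. The function name, the tuple layout, the brands, and the sample responses are all hypothetical, and the substring match is deliberately naive.

```python
from collections import defaultdict


def mention_rate_by_model(results, brand):
    """results: iterable of (model, prompt, response_text) tuples.

    Returns {model: share of responses that mention the brand}.
    Uses a case-insensitive substring match, which is a simplification.
    """
    totals = defaultdict(int)
    mentions = defaultdict(int)
    for model, _prompt, text in results:
        totals[model] += 1
        if brand.lower() in text.lower():
            mentions[model] += 1
    return {model: mentions[model] / totals[model] for model in totals}


# Invented responses for illustration only.
results = [
    ("perplexity", "best crm for startups", "Popular picks include Acme CRM and Initech."),
    ("perplexity", "crm with invoicing", "Acme CRM bundles invoicing."),
    ("claude", "best crm for startups", "Options include Initech and Globex."),
    ("claude", "crm with invoicing", "Globex supports invoicing."),
]
rates = mention_rate_by_model(results, "Acme")
print(rates)
```

In this made-up sample the brand appears in every Perplexity response and in none of the Claude ones, which a single averaged score would hide.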

What's improved (but not solved)

Answer gap analysis

The concept of "answer gap analysis" -- identifying which prompts your competitors appear for but you don't -- has gotten much more sophisticated. Early versions were basically a diff between two brand mention reports. Now the better implementations show you the specific content your site is missing, the topics and angles AI models want to answer but can't find on your pages.

That said, the quality varies enormously between platforms. Some are still doing surface-level keyword matching. Others are doing genuine semantic analysis of what AI models are actually looking for. The gap between those two approaches is large.
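For reference, the "diff between two brand mention reports" version is simple enough to sketch in a few lines. The input shape (a mapping from brand to the set of prompts where it appeared) and the brand names are assumptions. The semantic analysis the better platforms do goes well beyond this.

```python
from typing import Dict, List, Set


def answer_gaps(appearances: Dict[str, Set[str]], us: str) -> Dict[str, List[str]]:
    """Map each prompt we are missing from to the competitors that appear for it."""
    ours = appearances.get(us, set())
    gaps: Dict[str, List[str]] = {}
    for brand, prompts in appearances.items():
        if brand == us:
            continue
        # Set difference: prompts this competitor appears for and we do not.
        for prompt in prompts - ours:
            gaps.setdefault(prompt, []).append(brand)
    return {prompt: sorted(brands) for prompt, brands in sorted(gaps.items())}


# Invented mention reports for illustration only.
appearances = {
    "acme": {"best crm", "crm pricing"},
    "globex": {"best crm", "crm for agencies"},
    "initech": {"crm for agencies", "crm with invoicing"},
}
gaps = answer_gaps(appearances, "acme")
for prompt, brands in gaps.items():
    print(f"{prompt}: {', '.join(brands)}")
```

This tells you where you are absent, but not which content is missing from your pages. That second step is the part that separates the surface-level tools from the sophisticated ones.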

Competitive visibility heatmaps

Side-by-side competitor comparisons have improved from simple share-of-voice percentages to richer heatmaps showing which brand wins for each prompt, across each AI model. This is genuinely useful for prioritization -- you can see where a competitor has a strong position and decide whether to challenge it or focus on prompts where the field is more open.
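The data behind such a heatmap is essentially a grid of (prompt, model) cells, each with a leading brand. Here is a minimal sketch of that grid, assuming you have a citation count per brand for every cell. The input shape, the tie rule (ties count as "no clear winner"), and the sample counts are assumptions for illustration.

```python
def winners(scores):
    """scores: {(prompt, model): {brand: citation_count}}.

    Returns {(prompt, model): winning brand, or None if nobody is cited
    or the top spot is tied}.
    """
    grid = {}
    for cell, by_brand in scores.items():
        if not by_brand or max(by_brand.values()) == 0:
            grid[cell] = None  # no brand is cited for this cell
            continue
        top = max(by_brand.values())
        leaders = [brand for brand, count in by_brand.items() if count == top]
        grid[cell] = leaders[0] if len(leaders) == 1 else None
    return grid


# Invented counts for illustration only.
scores = {
    ("best crm", "chatgpt"): {"acme": 3, "globex": 1},
    ("best crm", "perplexity"): {"acme": 0, "globex": 4},
    ("crm pricing", "chatgpt"): {"acme": 2, "globex": 2},
}
grid = winners(scores)
for (prompt, model), brand in grid.items():
    print(f"{prompt:12} {model:11} {brand or 'open'}")
```

Cells that come back as `None` are the "open field" prompts the paragraph above describes, where no competitor holds a strong position yet.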

Reddit and YouTube as citation sources

One thing that surprised a lot of marketers in 2025: AI models cite Reddit and YouTube heavily. Not just authoritative .edu and .gov domains -- actual forum threads and video transcripts.

A few platforms now surface which Reddit discussions and YouTube videos are influencing AI recommendations in your category. This opens up a distribution channel most SEO-focused teams weren't thinking about. It's still an emerging feature, but the platforms that have it are providing genuinely differentiated intelligence.

Persona and region customization

AI responses aren't uniform. The same prompt asked from different countries, in different languages, or framed from different user personas (a CFO vs. a developer, say) can produce very different results. Persona and region customization in GEO tools has improved substantially -- you can now simulate how different customer types are experiencing AI search results, which changes what you optimize for.
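One simple way to picture this is as a grid of prompt variants, one per persona and region pair, each sent to the model separately. The sketch below only builds that grid. The phrasing template and the persona and region values are assumptions, and real tools may vary location or language settings instead of rewriting the prompt text.

```python
from itertools import product


def build_variants(prompt, personas, regions):
    """Return one prompt variant per (persona, region) combination."""
    return [
        {
            "persona": persona,
            "region": region,
            # Hypothetical template; adjust the framing to your own use case.
            "prompt": f"As a {persona} based in {region}: {prompt}",
        }
        for persona, region in product(personas, regions)
    ]


variants = build_variants(
    "what is the best project management software?",
    personas=["CFO", "developer"],
    regions=["Germany", "Brazil"],
)
for variant in variants:
    print(variant["prompt"])
```

Two personas and two regions already produce four variants per prompt, so coverage cost grows quickly as you add dimensions.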

What still doesn't work

Traffic attribution from AI search

This is the category's most embarrassing gap. Everyone wants to know: is my AI visibility actually driving revenue? The answer is still mostly "we're not sure."

The problem is structural. AI search doesn't pass referral data the way traditional search does. Direct traffic spikes after an AI mention are real but hard to isolate. Some platforms offer workarounds -- JavaScript snippets, GSC integration, server log analysis -- but none of them give you the clean attribution path that Google Analytics gives you for organic search.

The platforms that are honest about this limitation are more trustworthy than the ones that claim to have solved it. It's a hard problem and the industry hasn't cracked it yet.
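To show why the server-log workaround only gets you part of the way, here is a rough sketch of referrer classification. The hostname list is an assumption about which AI products might show up as referrers. Many AI-driven visits arrive with no referrer at all, so they land in the "direct/unknown" bucket that this approach cannot untangle.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames; these may be incomplete or change over time.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}


def classify_referrer(referrer: Optional[str]) -> str:
    """Label a visit by its Referer header value."""
    if not referrer:
        return "direct/unknown"  # includes AI visits that sent no referrer
    host = (urlparse(referrer).hostname or "").lower()
    for suffix, label in AI_REFERRER_HOSTS.items():
        # Match the host itself or any subdomain of it.
        if host == suffix or host.endswith("." + suffix):
            return label
    return "other"


for ref in ["https://chatgpt.com/", "https://www.perplexity.ai/search?q=crm", "", "https://www.google.com/search"]:
    print(repr(ref), "->", classify_referrer(ref))
```

Whatever share of sessions this labels, the real AI-influenced share is likely higher, which is exactly the attribution gap described above.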

Prompt coverage at scale

Most GEO tools are built around a fixed set of prompts you define upfront. That works fine if you know exactly what your customers are asking AI. But AI search is long-tail and unpredictable -- the prompts that matter for your brand are often ones you wouldn't think to add to a tracking list.

Some platforms are starting to address this with automated prompt discovery, but it's still early. The tools that let you monitor a broad, dynamically updated set of prompts are rare.

Actionability for non-technical teams

A lot of GEO platforms are built for people who already understand how AI citation works. The dashboards assume you know what a citation rate means, why it matters that Claude cites different sources than Perplexity, and what to do with a list of answer gaps.

For marketing teams without a dedicated SEO or GEO specialist, the tools are often overwhelming. The category needs better onboarding, clearer "here's what to do next" guidance, and less raw data dumped into dashboards without context.

How the tool landscape has segmented

It's useful to think about GEO tools in three tiers right now:

| Tier | What they do | Examples | Best for |

|---|---|---|---|

| Monitoring-only | Track brand mentions across AI models, basic share of voice | Otterly.AI, Peec.ai, Orchly | Teams just getting started, limited budget |

| Monitoring + analysis | Prompt intelligence, competitor heatmaps, answer gap analysis | AthenaHQ, Profound, Search Party | Teams that want data depth but handle content internally |

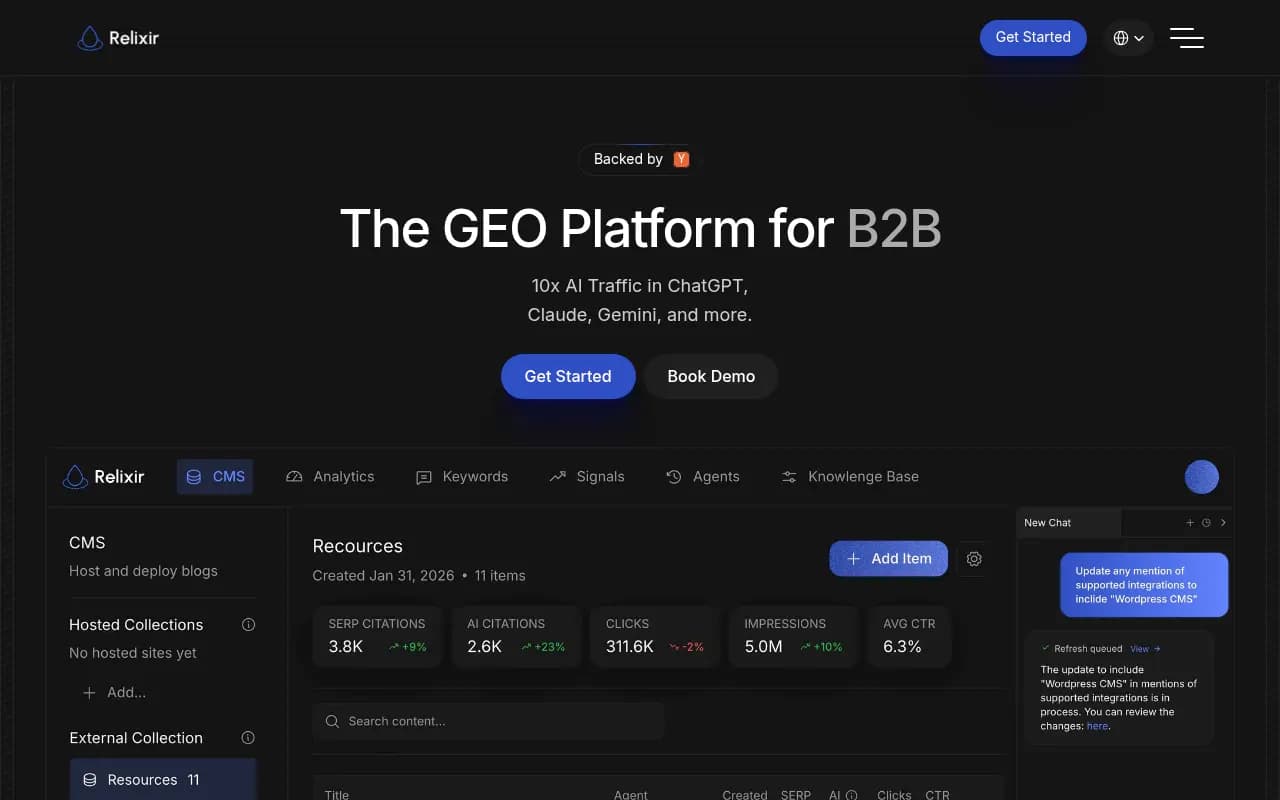

| Full optimization loop | Monitoring + gap analysis + AI content generation + traffic attribution | Promptwatch, Relixir | Teams that want to close the loop from visibility to revenue |

The monitoring-only tier has gotten crowded and commoditized. If you're paying for a tool that just tells you whether your brand appears in ChatGPT responses, you're paying for something that will likely be a free feature in most SEO platforms within 12 months.

The middle tier is where most of the interesting competition is happening right now. Platforms like AthenaHQ and Profound have built solid analysis capabilities, but they still leave the content work to you.

The full optimization loop is still rare. Most platforms that claim to offer it are actually offering monitoring plus a generic AI writing tool that isn't grounded in citation data. The difference between "AI-generated content" and "content engineered to earn AI citations" is real and significant.

The tools worth paying attention to in 2026

Beyond the platforms already mentioned, a few others are doing interesting things in specific niches:

For enterprise teams: BrightEdge and seoClarity have added AI visibility tracking to their existing enterprise SEO platforms. The GEO features aren't as deep as purpose-built tools, but the integration with existing SEO workflows is valuable for large organizations.

For agencies: Search Party and Rankability are building agency-specific workflows -- white-labeling, multi-client dashboards, and reporting formats that work for client presentations.

For e-commerce: ChatGPT Shopping tracking is an emerging capability. When ChatGPT recommends products in a shopping carousel, which brands appear? A handful of platforms are starting to track this specifically.

For solo operators and small teams: The budget end of the market has gotten more competitive. Tools like Airefs, Otterly.AI, and SE Visible offer basic AI visibility tracking at price points that make sense for smaller budgets.

The honest assessment

GEO tools have come a long way in a short time. The best platforms in 2026 are genuinely useful -- they surface real gaps, help you understand what AI models want to cite, and in some cases help you create the content that fills those gaps.

But the category is still young enough that a lot of tools are selling the promise of GEO more than the reality. The monitoring-only dashboards that dominate the market tell you where you're invisible without helping you fix it. That's a real limitation.

The question to ask when evaluating any GEO tool isn't "does it track my brand in ChatGPT?" -- almost all of them do that now. The better question is: "after I see the data, what does this tool help me do about it?" The answer to that question separates the useful platforms from the expensive dashboards.

The category will keep consolidating. Some of the 50+ tools that launched in 2025 won't exist by 2027. The ones that survive will be the ones that connect visibility data to actual content action and, eventually, to revenue. That's the direction the whole market is moving; individual platforms are just getting there at different speeds.