Key takeaways

- Most AI visibility platforms in 2026 track only 3-5 models, leaving significant blind spots in ChatGPT Shopping, DeepSeek, Grok, Mistral, and Meta AI.

- Coverage breadth matters less than what you do with the data -- monitoring-only tools leave you knowing you have a problem but unable to fix it.

- A handful of platforms have moved beyond dashboards to offer content gap analysis, AI writing tools, and crawler log access that actually close the visibility gap.

- When evaluating tools, ask specifically: which models do they query, how often, and can they show you page-level citation data and traffic attribution?

- Promptwatch is currently the only platform rated as a "Leader" across all evaluation categories in a 2026 comparison of 12 GEO (generative engine optimization) platforms.

AI search is no longer a side channel. Adobe reported that AI-driven traffic to U.S. retail sites grew 1,100% year over year, and Pew Research found that when Google shows an AI summary, users click a traditional result in 8% of visits, compared with 15% when no summary appears. AI models aren't just answering questions -- they're intercepting purchase decisions.

That means your brand's presence in ChatGPT, Claude, Perplexity, Gemini, Grok, DeepSeek, Copilot, Mistral, Meta AI, and Google AI Overviews is now a real business metric. And yet, when you look at the tools built to track this, most of them cover only a fraction of those 10 models.

This guide breaks down which platforms actually cover all 10, what "coverage" really means in practice, and what separates a useful tool from a dashboard that just makes you feel informed.

Why model coverage is more complicated than it looks

When a vendor says they "track ChatGPT," that could mean a lot of things. It might mean they query the API once a day with a fixed prompt. It might mean they track web search results but not the chat interface. It might mean they support GPT-4 but not GPT-4o with browsing, which behaves differently.

The 10 models worth tracking in 2026 are:

- ChatGPT (OpenAI) -- including Shopping and browsing modes

- Perplexity

- Google AI Overviews (including AI Mode)

- Claude (Anthropic)

- Gemini (Google)

- Meta AI / Llama

- DeepSeek

- Grok (xAI)

- Microsoft Copilot / Bing AI

- Mistral

Each of these has a different architecture, different citation behavior, and a different user base. Perplexity cites sources aggressively. ChatGPT's browsing mode pulls from live web results. Google AI Overviews are deeply tied to traditional SEO signals. DeepSeek and Grok are newer entrants with growing user bases but less coverage in most tools.

The practical problem: a brand that's visible in ChatGPT but invisible in Perplexity is missing a significant chunk of AI-driven research queries. A brand visible in Google AI Overviews but absent from Claude is invisible to a different segment entirely.

How platforms stack up on model coverage

Here's an honest look at how the major platforms compare on which models they actually support:

| Platform | ChatGPT | Perplexity | Google AI Overviews | Claude | Gemini | Grok | DeepSeek | Meta AI | Copilot | Mistral |
|---|---|---|---|---|---|---|---|---|---|---|
| Promptwatch | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| Profound | Yes | Yes | Yes | Yes | Yes | Partial | No | No | Yes | No |
| AthenaHQ | Yes | Yes | Yes | Yes | Yes | No | No | No | Partial | No |
| Otterly.AI | Yes | Yes | Yes | Yes | No | No | No | No | No | No |
| Peec.ai | Yes | Yes | Yes | Partial | Yes | No | No | No | No | No |
| Search Party | Yes | Yes | Yes | Yes | No | No | No | No | No | No |
| Scrunch | Yes | Yes | Yes | Yes | Yes | No | No | No | Partial | No |
| Semrush | Yes | No | Yes | No | Yes | No | No | No | No | No |
| Ahrefs Brand Radar | Yes | No | Yes | No | No | No | No | No | No | No |
| Brandlight | Yes | Yes | Yes | Partial | Yes | No | No | No | No | No |

Coverage data based on publicly available feature documentation as of March 2026. "Partial" indicates limited or beta support.

The pattern is clear: most tools built their initial product around ChatGPT, Perplexity, and Google AI Overviews -- the three highest-traffic models -- and haven't kept pace with the expanding ecosystem. That's a reasonable starting point, but it's not the full picture in 2026.

Promptwatch is the only platform in this comparison that covers all 10 models, including the newer entrants like DeepSeek, Grok, and Mistral that most competitors have deprioritized.
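To compare rows at a glance, the table can be reduced to a rough score per platform. The sketch below transcribes four rows from the table and counts "Yes" as 1 and "Partial" as 0.5. That weighting is an assumption made for illustration, not a scoring method any of these vendors publish.

```python
# Column order follows the comparison table above.
MODELS = ["ChatGPT", "Perplexity", "Google AI Overviews", "Claude", "Gemini",
          "Grok", "DeepSeek", "Meta AI", "Copilot", "Mistral"]

# Rows transcribed from the table (a subset, to keep the example short).
COVERAGE = {
    "Promptwatch": ["Yes"] * 10,
    "Profound": ["Yes", "Yes", "Yes", "Yes", "Yes", "Partial", "No", "No", "Yes", "No"],
    "Otterly.AI": ["Yes", "Yes", "Yes", "Yes", "No", "No", "No", "No", "No", "No"],
    "Semrush": ["Yes", "No", "Yes", "No", "Yes", "No", "No", "No", "No", "No"],
}

# Assumed weighting: partial or beta support counts as half a model.
WEIGHTS = {"Yes": 1.0, "Partial": 0.5, "No": 0.0}


def coverage_score(row):
    """Sum the weights for one platform's row."""
    return sum(WEIGHTS[cell] for cell in row)


def missing_models(row):
    """List the models a platform does not support at all."""
    return [model for model, cell in zip(MODELS, row) if cell == "No"]


for platform, row in COVERAGE.items():
    print(f"{platform}: {coverage_score(row):.1f}/10, missing: {missing_models(row)}")
```

A single number hides which models are missing, so the list of gaps is usually the more useful output when matching a tool to the channels your customers use.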

What "coverage" actually means in practice

Raw model support is table stakes. The more important question is what the platform does with that coverage.

Query methodology

Some tools run a fixed set of prompts on a schedule. Others let you define custom prompts that match how your actual customers search. The difference matters enormously -- a fixed prompt like "what are the best CRM tools?" will give you different results than "what CRM should a 50-person B2B SaaS company use?" even though both are relevant.

Platforms with prompt volume estimates and difficulty scoring (Promptwatch calls these "Prompt Intelligence" features) let you prioritize which queries to optimize for first, rather than treating all prompts equally.

Citation depth vs. mention tracking

There's a difference between knowing your brand was mentioned and knowing which specific page on your site was cited, in what context, and how often. Page-level citation tracking is what connects AI visibility to actual content decisions -- you can see that your pricing page is never cited but your comparison article is cited frequently, and act on that.
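The gap between the two is easy to see in code. The sketch below assumes a hypothetical record format (one dictionary per AI response, holding the response text and the URLs it cited) and a made-up brand and domain. No real platform's export format is implied.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical response records; the brand, domains, and fields are invented.
responses = [
    {"model": "perplexity",
     "text": "Acme CRM is a popular choice for small teams.",
     "citations": ["https://acme.example/compare/crm-tools",
                   "https://reviews.example/crm"]},
    {"model": "chatgpt",
     "text": "Options include Acme CRM and others.",
     "citations": ["https://acme.example/compare/crm-tools"]},
    {"model": "claude",
     "text": "Consider Acme CRM.",
     "citations": []},
]


def mention_count(responses, brand):
    """Mention tracking: how many responses name the brand at all."""
    return sum(brand.lower() in r["text"].lower() for r in responses)


def page_citations(responses, domain):
    """Page-level tracking: how often each path on the domain is cited."""
    counts = Counter()
    for r in responses:
        for url in r["citations"]:
            parsed = urlparse(url)
            if parsed.hostname == domain:
                counts[parsed.path or "/"] += 1
    return counts


print(mention_count(responses, "Acme CRM"))
print(page_citations(responses, "acme.example"))
```

In this toy data the brand is mentioned in every response, but only the comparison article is ever cited and the pricing page never appears. A mention count alone would not show that.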

Frequency and freshness

AI models update their knowledge and citation behavior continuously. A tool that queries models only once a week can miss shifts that happen between runs. Daily querying is the baseline for actionable data.

The monitoring-only trap

Here's the honest problem with most AI visibility tools: they show you a dashboard, you see that competitors are more visible than you, and then... nothing. The tool has done its job. You're left figuring out what to do about it.

This is the monitoring-only trap. You have data but no path to action.

The platforms that break out of this pattern are the ones that connect visibility data to content creation. Specifically:

- Answer Gap Analysis that shows which prompts competitors rank for but you don't

- Content generation tools that use citation data to write articles engineered for AI citation

- Crawler logs that show which of your pages AI bots are actually reading (and which they're ignoring)

- Traffic attribution that connects AI citations to actual website visits and conversions
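The first item on that list, answer gap analysis, comes down to a set comparison. Here is a minimal sketch, assuming you already have, for each tracked prompt, the set of brands that appeared in the answers. The prompts and brand names are placeholders, and real platforms presumably layer volume and difficulty data on top of this.

```python
def answer_gaps(results, brand, competitors):
    """Return prompts where at least one competitor appears and the brand does not.

    results maps each prompt to the set of brands cited for it.
    """
    competitor_set = set(competitors)
    gaps = {}
    for prompt, cited in results.items():
        if brand in cited:
            continue  # already visible for this prompt, so not a gap
        winners = sorted(cited & competitor_set)
        if winners:
            gaps[prompt] = winners
    return gaps


# Placeholder data for illustration only.
results = {
    "best crm for a 50-person b2b saas company": {"CompetitorA", "CompetitorB"},
    "crm with built-in invoicing": {"Acme", "CompetitorA"},
    "what is a crm": set(),
}

for prompt, winners in answer_gaps(results, "Acme", ["CompetitorA", "CompetitorB"]).items():
    print(f"{prompt!r}: cited instead -> {winners}")
```

Prompts where nobody is cited are left out on purpose: they are not a competitive gap, just unclaimed territory.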

Most tools in the market -- Otterly.AI, Peec.ai, AthenaHQ, Search Party -- stop at the monitoring step. They're useful for understanding where you stand, but they don't help you move.

Tools worth knowing in 2026

Beyond the major platforms, a number of newer tools have carved out specific niches worth knowing about.

For teams that want action, not just data

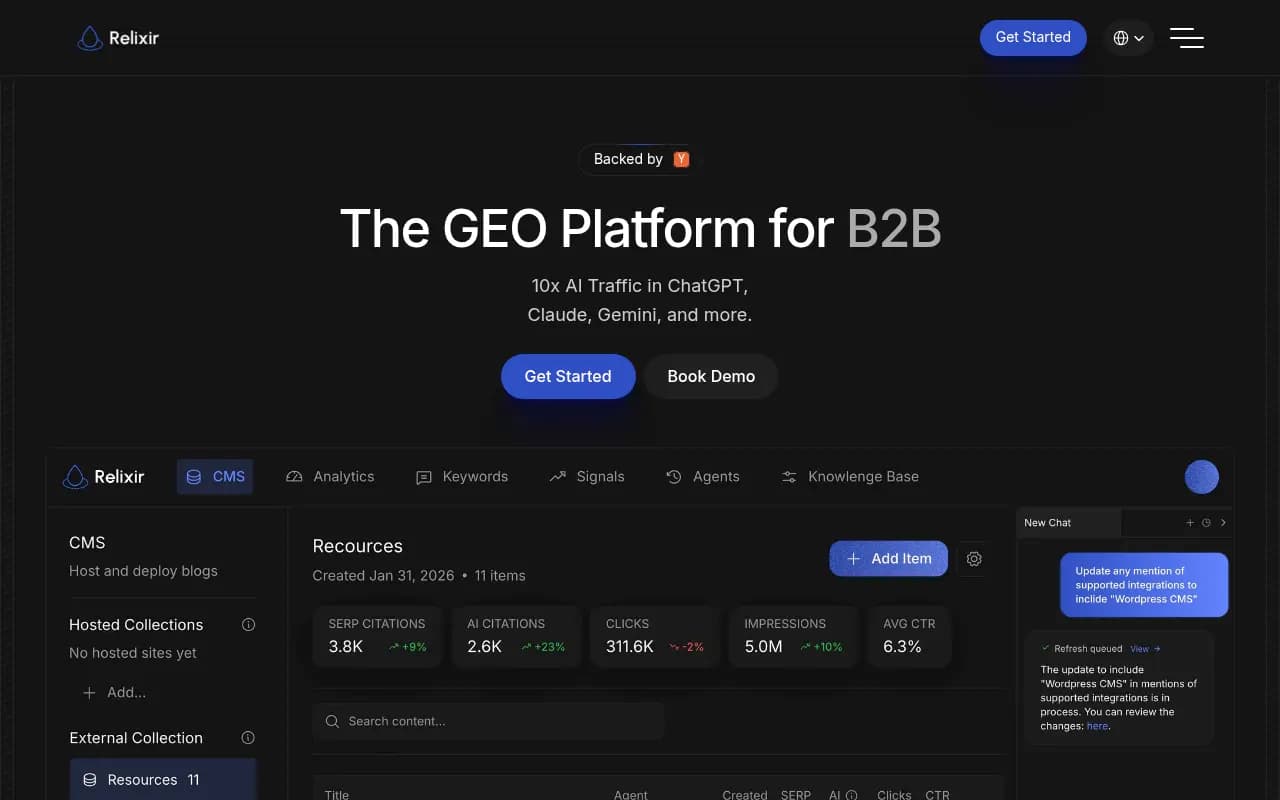

Relixir takes an interesting approach -- it's an "agentic" GEO platform that not only identifies gaps but autonomously generates and publishes content fixes. Worth evaluating if you want maximum automation.

Whitebox similarly focuses on automated narrative fixes, generating and shipping content changes without requiring manual intervention. Good for teams with limited content bandwidth.

For budget-conscious teams

Peec.ai covers multiple languages and several major models at a lower price point than enterprise platforms. Coverage is limited (no Grok, DeepSeek, or Mistral), but for teams just getting started with AI visibility tracking, it's a reasonable entry point.

Otterly.AI is affordable and covers the core four models (ChatGPT, Perplexity, Google AI Overviews, Claude). Monitoring-only, but the interface is clean and the price is accessible.

For enterprise SEO teams

BrightEdge has been in enterprise SEO for years and has added AI visibility tracking to its existing platform. The advantage is integration with existing SEO workflows; the limitation is that AI visibility feels like an add-on rather than the core product.

Evertune is positioned as an enterprise GEO platform with strong analytics depth. Coverage is solid across the major models, though it doesn't reach all 10.

Scrunch AI offers good coverage across the top 5-6 models and has solid competitor benchmarking features.

For specific use cases

Azoma focuses specifically on AI shopping optimization -- ChatGPT Shopping, Amazon Rufus, and similar product discovery surfaces. If e-commerce is your primary concern, this is worth a look.

LLM Clicks focuses specifically on citation tracking and the traffic that flows from AI citations. Narrower scope than a full GEO platform, but useful as a complementary tool.
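For a sense of what tracking "the traffic that flows from AI citations" involves at its simplest, the sketch below classifies visits by referrer hostname. The hostname list is an illustrative assumption, not a complete or vendor-supplied mapping. It also understates real AI traffic, because many visits arrive with no referrer at all.

```python
from collections import Counter
from urllib.parse import urlparse

# Illustrative mapping of referrer hostnames to AI sources (an assumption).
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}


def classify_referrer(referrer):
    """Return the AI source for a referrer URL, or None if it is not in the map."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def ai_visits_by_source(referrers):
    """Count visits per AI source, ignoring referrers that are not recognized."""
    sources = (classify_referrer(r) for r in referrers)
    return Counter(s for s in sources if s is not None)


# Made-up referrers for illustration.
sample = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+crm",
    "https://www.google.com/search?q=best+crm",
    "",
    "https://chatgpt.com/c/abc",
]
print(ai_visits_by_source(sample))
```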

The model coverage comparison in plain terms

To make this concrete: if you're a brand in a competitive category and you're only tracking ChatGPT, Perplexity, and Google AI Overviews, you're missing:

- Grok: xAI's model has grown significantly in 2025-2026, particularly among X/Twitter users who ask product and brand questions directly on the platform.

- DeepSeek: Rapidly growing user base, particularly in Asia and among technical audiences. Citation behavior is distinct from Western models.

- Meta AI: Embedded across WhatsApp, Instagram, and Facebook -- surfaces where brand discovery happens in conversational context.

- Copilot: Microsoft's integration into Windows, Edge, and Office means it reaches a massive enterprise audience that other models don't.

- Mistral: Smaller user base but growing adoption in European markets and privacy-conscious enterprise deployments.

None of these are hypothetical future concerns. They're active channels in 2026, and the brands that show up in them have a real advantage over those that don't.

What to actually look for when evaluating a platform

Rather than just ticking boxes on a model coverage checklist, here's what to ask during any platform evaluation:

How are prompts queried? Fixed library or custom? How often? With what personas?

What does citation data look like? Brand mention only, or page-level attribution? Sentiment? Context?

Can you track competitors? Not just "are they mentioned" but which prompts they win, which models favor them, and why.

Is there any path to action? Content gap analysis, writing tools, optimization recommendations -- or just a dashboard?

How does traffic attribution work? Can you connect AI citations to actual visits and conversions, or does the data stop at the AI layer?

What's the crawler log situation? Knowing which AI bots are crawling your site, which pages they read, and how often is genuinely useful for understanding indexing behavior. Most tools don't offer this at all.
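If a tool doesn't expose crawler logs, you can get a rough version from your own server logs. The sketch below assumes access logs in the common "combined" format and matches a short list of user-agent tokens (GPTBot, OAI-SearchBot, ClaudeBot, PerplexityBot). The list is illustrative rather than exhaustive, the sample lines are invented, and user-agent strings can be spoofed, so treat the counts as indicative.

```python
import re
from collections import defaultdict

# Illustrative subset of AI crawler user-agent tokens (not exhaustive).
AI_BOT_TOKENS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot"]

# Matches the request, status, size, referrer, and user agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def ai_bot_hits(lines):
    """Count requests per AI bot and path; other user agents are skipped."""
    hits = defaultdict(lambda: defaultdict(int))
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        agent = match.group("agent")
        for token in AI_BOT_TOKENS:
            if token in agent:
                hits[token][match.group("path")] += 1
                break
    return {bot: dict(paths) for bot, paths in hits.items()}


# Invented log lines for illustration.
sample_log = [
    '203.0.113.7 - - [12/Mar/2026:10:15:32 +0000] "GET /compare/crm-tools HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '203.0.113.7 - - [12/Mar/2026:10:16:01 +0000] "GET /compare/crm-tools HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '198.51.100.4 - - [12/Mar/2026:11:02:10 +0000] "GET /blog/crm-guide HTTP/1.1" 200 8841 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.55 - - [12/Mar/2026:11:05:44 +0000] "GET /pricing HTTP/1.1" 200 2310 "https://www.google.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

for bot, paths in ai_bot_hits(sample_log).items():
    print(bot, paths)
```

Pages that never show up in this output are the ones AI bots are ignoring, which is the signal the question above is getting at.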

The honest verdict

If model coverage breadth is your primary concern, the field narrows quickly. Most platforms cover 3-5 models well and treat the rest as future roadmap items. In the comparison above, only Promptwatch supports all 10 major models.

But coverage alone isn't the right frame. A platform that covers 10 models but only shows you a mention count is less useful than one that covers 6 models and tells you exactly what content to create to improve your position.

The best tools in 2026 combine breadth (covering all the models your customers actually use) with depth (page-level citations, prompt intelligence, competitor analysis) and action (content gap analysis, writing tools, traffic attribution). That combination is rare.

Promptwatch sits at the intersection of all three -- 10 models, deep citation data, and a full content optimization loop. For teams that want to move beyond monitoring and actually improve their AI visibility, that's the relevant benchmark. For teams just starting out and working with tighter budgets, Otterly.AI or Peec.ai are reasonable starting points, with the understanding that you'll outgrow them as your GEO program matures.

The market is moving fast. A platform's model coverage in March 2026 may look different by Q3. The more durable question is whether the platform is built to optimize, not just observe.