Key takeaways

- No single tool covers every AI search channel well -- most agencies in 2026 run a stack of 3-5 tools across monitoring, content optimization, and attribution

- The biggest gap isn't tracking; it's acting on what you find. Most monitoring tools show you where you're invisible but don't help you fix it

- AI search visibility now matters even when users don't click -- brand mentions in AI responses build authority that influences purchase decisions

- A good agency stack has three layers: a core AI visibility platform, a content execution layer, and a traffic/attribution layer

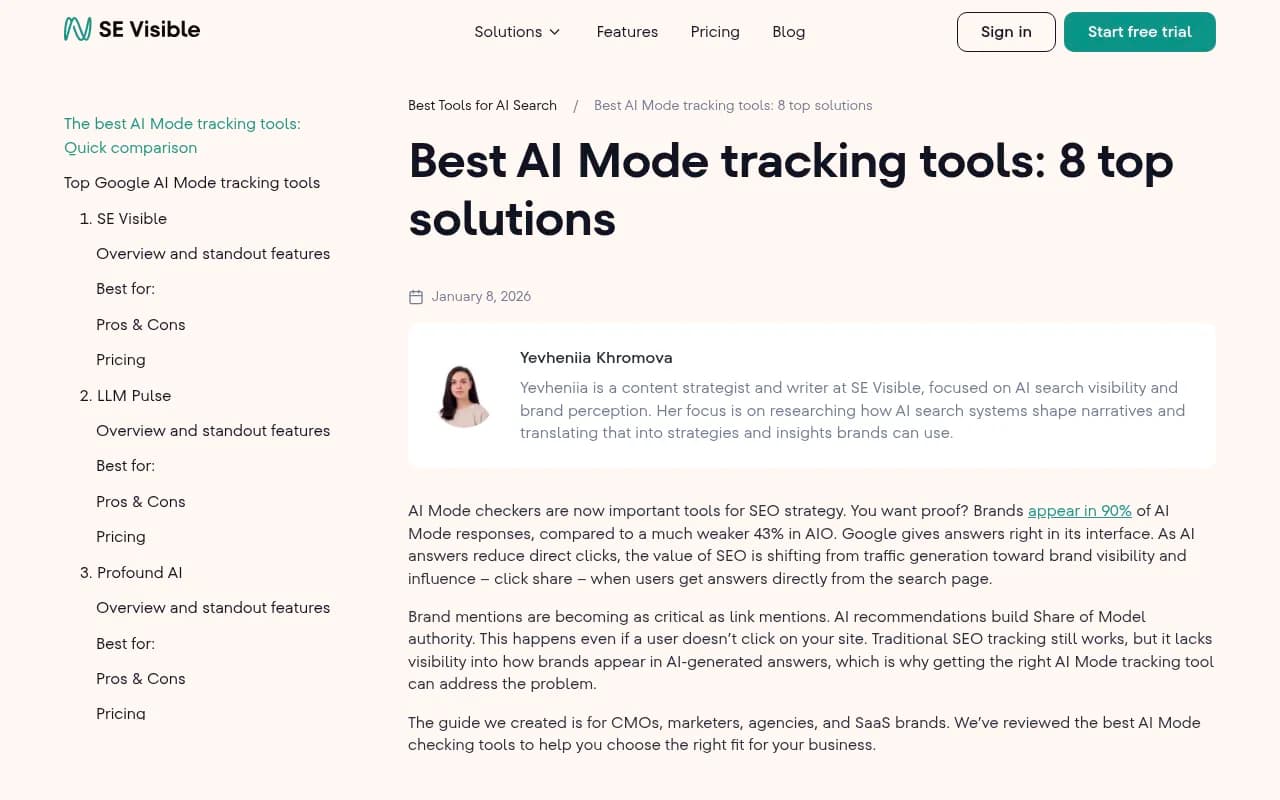

- Brands appearing in 90% of Google AI Mode responses (vs. 43% in AI Overviews) shows how much the landscape has shifted in just 12 months

Something changed in late 2024 and most agencies didn't notice until their clients started asking uncomfortable questions. Traffic was flat or declining. Rankings looked fine. But leads were down.

The answer, in most cases: AI search was eating the top of the funnel. ChatGPT, Perplexity, Google AI Mode, and Gemini were answering questions that used to send users to client websites. And the brands showing up in those AI answers weren't necessarily the ones with the best SEO -- they were the ones whose content AI models had learned to trust.

By 2026, this isn't a niche concern. It's the central challenge of brand visibility. And the agencies handling it well aren't doing it with one tool. They're building stacks.

This guide breaks down how those stacks work, which tools belong in each layer, and how to think about assembling one for your clients.

Why a single tool isn't enough

The AI search landscape is genuinely fragmented. ChatGPT, Perplexity, Google AI Mode, Claude, Gemini, Grok, DeepSeek, Copilot -- each model has different training data, different citation behavior, and different user intent patterns. A brand that's well-cited in Perplexity might be invisible in Google AI Mode. A competitor dominating ChatGPT shopping recommendations might not even appear in Gemini.

On top of that, different tools are built for different jobs. Some are excellent at monitoring (showing you where you appear and where you don't). Others are built for content optimization (helping you create content that gets cited). A third category handles attribution (connecting AI visibility to actual traffic and revenue).

Most tools do one of these well. Very few do all three. That's why agencies stack them.

The three-layer agency stack

Think of the stack in three layers. Each layer answers a different question.

Layer 1: Core AI visibility monitoring

This is the foundation. You need to know where your client's brand appears across AI platforms, how often, in what context, and how that compares to competitors.

The best tools at this layer track prompt-level visibility -- you define the queries your target customers are asking, and the platform runs those prompts across multiple AI models to see who gets cited. Some also track sentiment (is the mention positive, neutral, or negative?), share of voice, and citation sources.
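To make "share of voice" concrete, here is a minimal sketch of how it could be computed from prompt-level results. The tuple format, brand names, and function name are all hypothetical, and the definition used (a brand's fraction of all tracked brand mentions) is one reasonable choice rather than any tool's official metric.

```python
from collections import Counter

def share_of_voice(results, brands):
    """Return each brand's fraction of all tracked brand mentions.

    `results` is a list of (prompt, model, cited_brands) tuples. This is a
    hypothetical export format, not any specific tool's schema.
    """
    mentions = Counter()
    for _prompt, _model, cited in results:
        for brand in set(cited):  # count a brand at most once per response
            if brand in brands:
                mentions[brand] += 1
    total = sum(mentions.values())
    return {b: (mentions[b] / total if total else 0.0) for b in brands}

# Illustrative data: two prompts, each run against two models.
results = [
    ("best crm for startups", "chatgpt", ["Acme", "Globex"]),
    ("best crm for startups", "perplexity", ["Globex"]),
    ("crm with email automation", "chatgpt", ["Globex", "Initech"]),
    ("crm with email automation", "perplexity", ["Acme"]),
]
sov = share_of_voice(results, ["Acme", "Globex", "Initech"])
for brand, share in sov.items():
    print(f"{brand}: {share:.0%}")
```

Grouping the same results by model before calling the function would give a per-platform breakdown, which is usually what exposes the "visible in Perplexity, invisible elsewhere" pattern.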

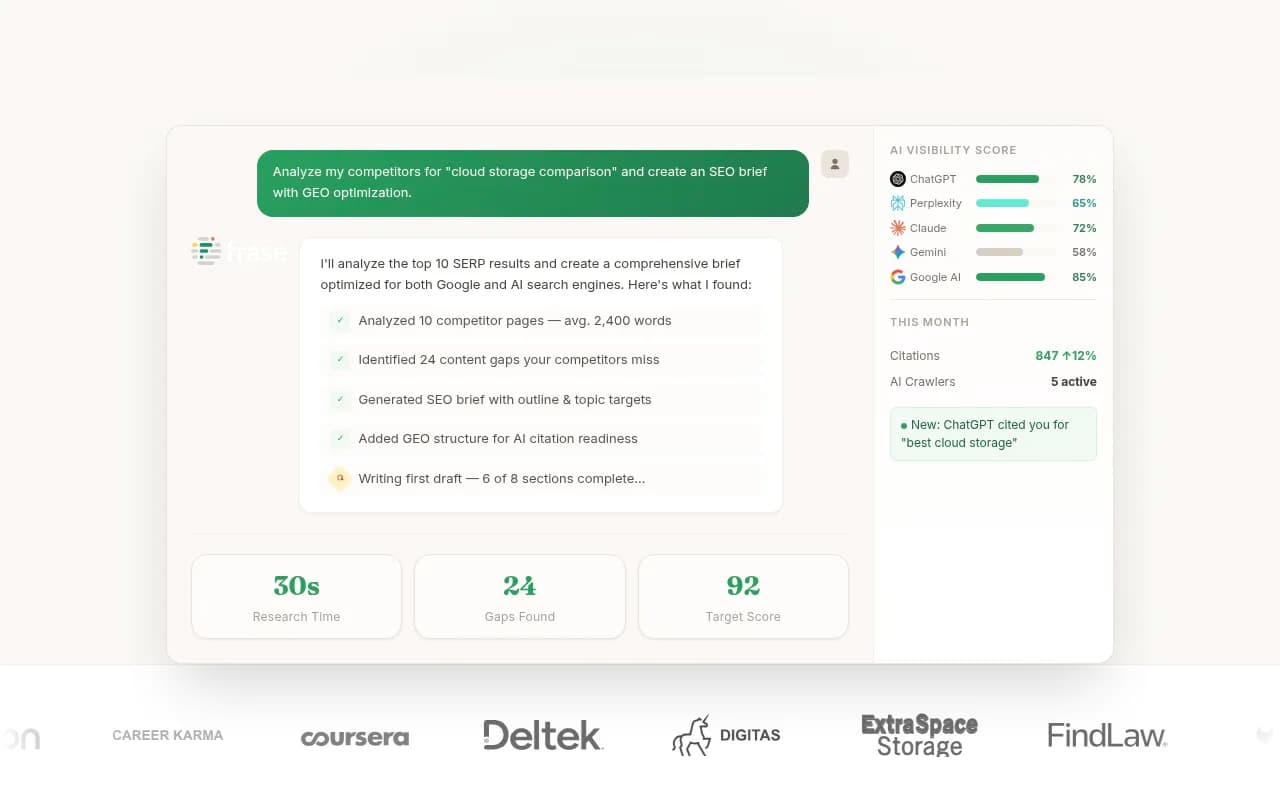

Promptwatch sits at the top of this layer for agencies that want to go beyond monitoring into action. It tracks visibility across 10 AI models (ChatGPT, Perplexity, Google AI Mode, Claude, Gemini, Grok, DeepSeek, Copilot, Meta AI, Mistral), but the differentiator is what happens after you see the data. Its Answer Gap Analysis shows exactly which prompts competitors are visible for that your client isn't -- and the built-in content agent helps you close those gaps. Most monitoring tools stop at the data. Promptwatch connects it to execution.

For agencies that want a more monitoring-focused option at a lower entry price, a few tools are worth knowing:

Otterly.AI is a solid entry-level choice. It's affordable (starting around $29/mo), handles automated prompt testing, and works well for agencies running GEO audits. It won't generate content or show you crawler logs, but for basic visibility tracking across a handful of clients, it does the job.

Peec AI is similar in scope -- clean dashboard, competitor benchmarks, multi-language support. Good for SaaS and B2B clients where you need a simple way to show "here's where you appear vs. your competitors."

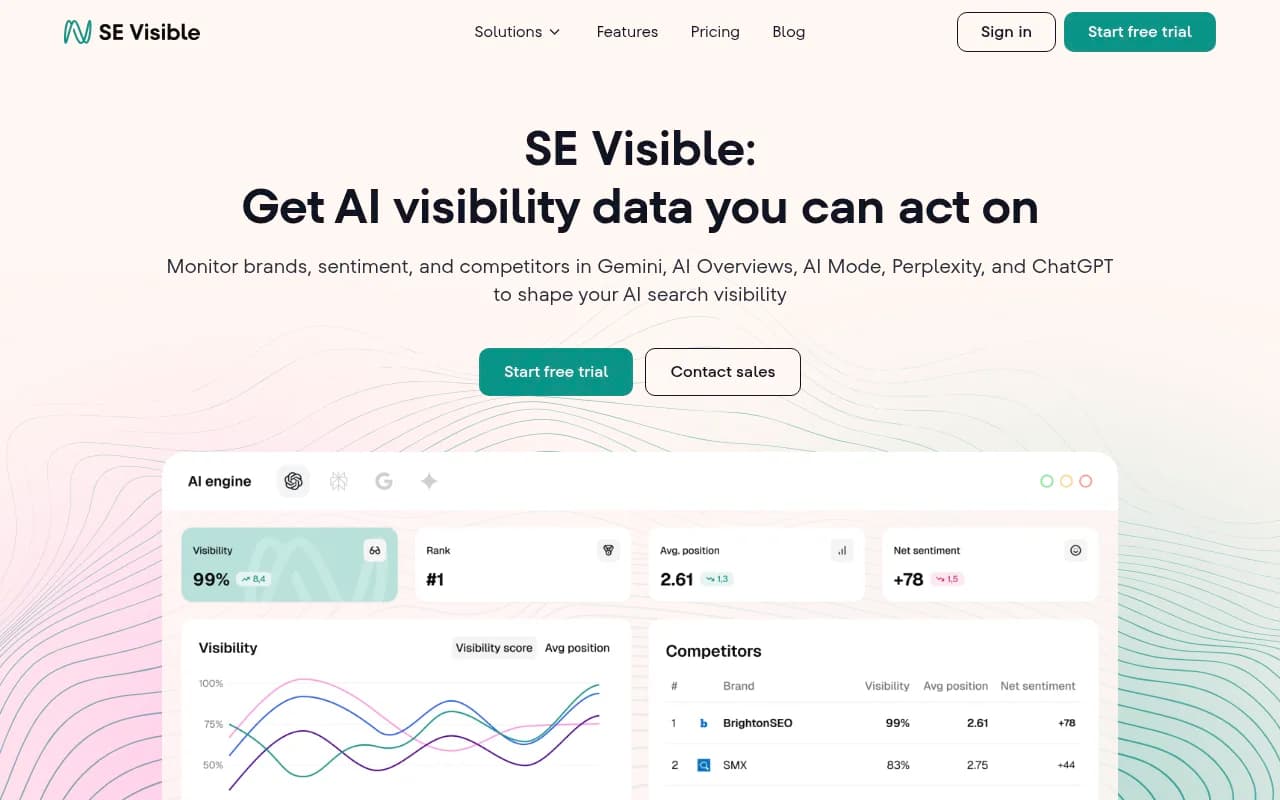

SE Visible (from SE Ranking) is worth considering if your clients are already in the SE Ranking ecosystem. It covers AI Mode and AI Overviews alongside traditional rank tracking, which makes reporting cleaner when clients want a unified view.

Profound is the enterprise option at this layer. Strong analytics, near real-time monitoring, API access. The tradeoff is price and complexity -- it's built for analyst-driven workflows, not agencies running 20 clients at once.

AthenaHQ covers 8+ AI search engines and is solid for monitoring, though it's lighter on the optimization and content generation side.

Layer 2: Content execution

Knowing where you're invisible is only useful if you can do something about it. This is where most monitoring-only tools fall short, and where agencies need a second layer.

The content execution layer handles two things: figuring out what content to create (based on prompt data and citation analysis) and actually creating it in a format that AI models are likely to cite.

This is genuinely hard to do well. Generic SEO content doesn't cut it -- AI models cite content that directly and authoritatively answers specific questions. The content needs to match the way prompts are phrased, cover the right angles, and come from a domain that AI models already trust.

Promptwatch's built-in AI writing agent handles this within the core platform -- it generates articles, listicles, and comparisons grounded in citation data from 880M+ analyzed citations. That's a meaningful advantage: the content recommendations aren't based on keyword volume guesses. They're based on what AI models are actually citing right now.

For agencies that want a dedicated content tool alongside their visibility tracker:

Writesonic has built out AI visibility features alongside its content generation capabilities. It's a reasonable choice if your team is already using it for content and wants to add AI search gap analysis without switching platforms.

Frase is strong for content briefs and optimization. It's not purpose-built for AI search citation, but it helps writers create thorough, well-structured content that tends to perform well in AI responses.

Clearscope is a content optimization standard for many agencies. It doesn't track AI visibility directly, but well-optimized content that covers topics comprehensively tends to get cited more often. Think of it as a supporting tool rather than a primary one.

Layer 3: Traffic attribution and ROI

This is the layer most agencies skip -- and the one that will matter most to clients in 12 months.

The problem: AI search sends traffic differently than traditional search. Users might see your brand in a ChatGPT response, then search for you directly, then convert a week later. Standard analytics attributes that to "direct" or "organic search" and loses the AI search connection entirely.

Attribution at this layer means connecting AI visibility improvements to actual traffic and revenue. This requires one of three things: a JavaScript snippet on the client's site, a Google Search Console integration, or server log analysis to identify AI crawler activity and referral patterns.
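As a rough illustration of the referral side, the sketch below labels a visit by its referrer. The hostname list is an assumption to verify against your own analytics, and the function name is hypothetical. It only catches visits that arrive with a referrer; the "saw it in ChatGPT, searched later" journey described above still lands in "direct" or "other".

```python
from urllib.parse import urlparse

# Assumed referrer hostnames for AI assistants. Check these against the
# referrers that actually show up in the client's analytics.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}

def classify_referrer(referrer):
    """Return an AI source name, 'direct' (no referrer), or 'other'."""
    if not referrer:
        return "direct"
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host, "other")

print(classify_referrer("https://chatgpt.com/"))
print(classify_referrer("https://www.perplexity.ai/search?q=best+crm"))
print(classify_referrer(""))
```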

Promptwatch handles this with its traffic attribution module (code snippet, GSC integration, or server log analysis) and its AI Crawler Logs feature, which shows in real time which AI crawlers (ChatGPT, Claude, Perplexity, etc.) are hitting client pages, how often, and what errors they're encountering. That's data most agencies have never had access to before.

For broader attribution needs:

Brand24 is useful for tracking brand mention volume across the web, including forums and social platforms that AI models often cite. It's not an AI visibility tool per se, but knowing where your brand is being discussed helps you understand why AI models do or don't cite you.

DarkVisitors is a niche but genuinely useful tool for understanding AI bot activity on client sites. It tracks which AI agents are crawling pages and can help identify indexing issues.

How agencies actually assemble the stack

Here's a practical breakdown of how different agency types tend to approach this.

Small agency (1-10 clients, limited budget)

The constraint here is time and money. You need one tool that does most of the job, with minimal overhead.

The most practical setup: Promptwatch at the Professional tier ($249/mo) as the core platform, covering monitoring, content gap analysis, crawler logs, and attribution for up to 2 client sites. Add Otterly.AI for clients who need basic monitoring at a lower cost. Use Clearscope or Frase for content execution if your team is writing in-house.

Total stack cost: $350-500/mo for 3-5 clients. That's a reasonable line item to pass through to clients or bundle into a retainer.

Mid-size agency (10-30 clients, dedicated SEO/GEO team)

At this scale, you need multi-site support, white-label reporting, and the ability to run content production at volume.

A common setup: Promptwatch Business tier ($579/mo, 5 sites) or Agency/Enterprise custom pricing for larger client rosters. SE Visible or Nightwatch for clients who want traditional rank tracking alongside AI visibility. Writesonic or a dedicated content team using Frase for content execution. Brand24 for brand mention monitoring on clients in competitive or reputation-sensitive categories.

Enterprise agency or in-house team

At this level, the priority shifts to depth of data, API access, and integration with existing reporting infrastructure.

Promptwatch's API and Looker Studio integration make it a natural fit here. Profound is worth evaluating for clients with analyst teams who want raw data access. BrightEdge or seoClarity cover the enterprise SEO layer. For attribution, server log analysis through the Promptwatch crawler logs feature or a dedicated log analysis tool gives the most complete picture.

Comparison: Core AI visibility tools for agencies

| Tool | AI models tracked | Content generation | Crawler logs | Attribution | Starting price | Best for |
|---|---|---|---|---|---|---|
| Promptwatch | 10 | Yes (built-in agent) | Yes | Yes (snippet/GSC/logs) | $99/mo | Full-stack GEO optimization |
| Otterly.AI | 5+ | No | No | No | $29/mo | Budget monitoring |
| Peec AI | 5+ | No | No | No | €89/mo | B2B/SaaS monitoring |
| SE Visible | Google AI Mode + AIOs | No | No | No | $189/mo | SEO + AI Mode combined |
| Profound | 5+ | No | No | Limited | $99/mo | Enterprise analytics |
| AthenaHQ | 8+ | No | No | No | Custom | Monitoring-focused brands |
| Nightwatch | Google AI + traditional | No | No | No | $39/mo + add-on | Local SEO + AI tracking |
| seoClarity | Multiple | Limited | No | Limited | $2,500+/mo | Global enterprise |

The gap most agencies are still missing

Most of the conversation about AI search visibility focuses on monitoring -- "are we appearing in ChatGPT responses?" That's a reasonable starting point, but it's not where the value is.

The value is in the content gap. Every prompt where a competitor appears and you don't is a specific, addressable problem. It means there's a question your target customers are asking, an AI model is answering it, and your content isn't being cited. That's a content brief waiting to happen.
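The gap itself is simple to express once you have per-prompt citation data. The sketch below is a generic illustration, not any vendor's implementation; the data shape, brand names, and `find_content_gaps` are hypothetical.

```python
def find_content_gaps(visibility, client, competitors):
    """Return prompts where at least one competitor is cited and the client is not.

    `visibility` maps each tracked prompt to the list of brands cited for it
    (a hypothetical format; real tools export richer data).
    """
    gaps = {}
    for prompt, cited in visibility.items():
        rivals = sorted(set(cited) & set(competitors))
        if rivals and client not in cited:
            gaps[prompt] = rivals
    return gaps

visibility = {
    "best crm for startups": ["Globex", "Initech"],
    "crm with email automation": ["Acme", "Globex"],
    "cheapest crm for freelancers": [],
}
gaps = find_content_gaps(visibility, client="Acme", competitors=["Globex", "Initech"])
for prompt, rivals in gaps.items():
    print(f"Brief needed: {prompt!r} (currently cited: {', '.join(rivals)})")
```

Prompts where nobody is cited are deliberately left out here. They may still be worth targeting, but they are a different kind of opportunity from a prompt a competitor already owns.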

The agencies pulling ahead in 2026 are the ones treating AI visibility data as an editorial calendar input, not just a reporting metric. They're taking the prompts where clients are invisible, building content that directly addresses those prompts, and tracking citation rates over time.

That loop -- find gaps, create content, track results -- is what separates optimization from monitoring. Tools like Promptwatch are built around it. Most others aren't.

Practical tips for building your stack

A few things worth knowing before you start adding tools:

Start with prompt definition. The quality of your visibility data depends entirely on the prompts you're tracking. Spend time mapping the actual questions your client's customers ask at each stage of the buying journey. Vague prompts produce vague data.

Don't track everything. It's tempting to monitor every AI platform, but focus on the ones your client's customers actually use. For most B2B clients, that's ChatGPT and Perplexity. For consumer brands, add Google AI Mode and Gemini. Spreading too thin makes reporting noisy.

Connect visibility to revenue early. Set up attribution before you start optimizing. If you can't show that AI visibility improvements lead to traffic and conversions, the work is hard to justify in a quarterly review. The Promptwatch traffic attribution module or a GSC integration is the minimum viable setup.

Watch the crawler logs. AI crawler activity on client sites is a leading indicator of future citation. If ChatGPT's crawler is hitting a page frequently, that page is likely being considered for citation. If it's hitting error pages, that's a fixable problem. Most agencies have no visibility into this at all.

Audit citation sources. Before creating new content, look at what AI models are already citing in your client's category. Often it's Reddit threads, YouTube videos, or specific domains that have established trust with AI models. Understanding the citation ecosystem tells you where to publish and what format to use.
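For agencies without a crawler-log feature, the crawler-log tip above can be approximated from raw access logs. This is a minimal sketch assuming logs in the common "combined" format and assuming the user-agent tokens `GPTBot`, `ClaudeBot`, and `PerplexityBot`; confirm the current tokens in each vendor's crawler documentation. The function name and sample lines are illustrative.

```python
import re
from collections import defaultdict

# Assumed user-agent tokens; confirm against each vendor's crawler docs.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Matches the request, status, and user-agent fields of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

def summarize_ai_crawls(lines):
    """Count hits and error responses (status >= 400) per (crawler, path)."""
    summary = defaultdict(lambda: {"hits": 0, "errors": 0})
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        crawler = next((t for t in AI_CRAWLER_TOKENS if t in match.group("ua")), None)
        if crawler is None:
            continue  # not one of the AI crawlers we are tracking
        entry = summary[(crawler, match.group("path"))]
        entry["hits"] += 1
        if int(match.group("status")) >= 400:
            entry["errors"] += 1
    return dict(summary)

sample = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.5 - - [10/Jan/2026:11:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [10/Jan/2026:11:05:00 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [10/Jan/2026:11:06:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
crawl_summary = summarize_ai_crawls(sample)
for (crawler, path), stats in crawl_summary.items():
    print(crawler, path, stats)
```

Pages with frequent hits and zero errors are candidates to keep fresh; pages with errors are the fixable problems to hand to the client's developers first.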

Where this is heading

The agencies that treat AI search as a separate channel to monitor are going to find themselves doing a lot of reporting without a lot of results. The ones building it into their core workflow -- using visibility data to drive content strategy, tracking citations the way they used to track rankings -- are the ones clients will want to keep.

The tools are there. The stack isn't complicated. The main thing is deciding to treat AI search visibility as something you optimize, not just something you observe.