Key takeaways

- AI search responses change constantly -- models get retrained and updated, new content gets cited, and competitors optimize. Without systematic tracking, you'll miss these shifts entirely.

- Traditional SEO metrics (rankings, clicks, impressions) don't capture AI visibility. You need a separate monitoring layer that queries AI models directly and records what they say.

- Change detection requires baselines: you can't know something moved if you never recorded where it started.

- The most useful data points to track over time are citation frequency, brand mention sentiment, which pages get cited, and share of voice against competitors.

- Several purpose-built tools now handle this automatically -- the best ones go beyond logging changes to helping you understand why they happened and what to do about it.

There's a problem that almost nobody talks about when they discuss AI search optimization: the responses aren't static. ChatGPT might recommend your brand today, stop mentioning you next week, and then cite a competitor's blog post instead. Perplexity might pull from your pricing page in January and your competitor's comparison article by March. These shifts happen silently, with no notification, no ranking drop you can see in Google Search Console, and no obvious explanation.

This is the version history problem. In traditional SEO, Google's algorithm updates are at least announced (or quickly reverse-engineered by the community). In AI search, the "algorithm" is a black box that updates continuously, and the responses it generates for any given query can change for dozens of reasons -- new training data, model updates, fresh citations, competitor content, or just probabilistic variation in how the model generates text.

If you're not tracking AI responses over time, you're flying blind.

This guide covers how to build a proper change detection system for AI search responses in 2026 -- what to track, how often, which tools to use, and how to interpret what you find.

Why AI responses change (and why it matters)

Before getting into the mechanics of tracking, it's worth understanding what actually causes AI responses to shift. There are a few distinct categories:

Model updates are the most dramatic. When OpenAI releases a new version of ChatGPT, or Google updates the underlying model powering AI Overviews, the entire citation landscape can shift overnight. Content that was previously cited might disappear. New sources might appear. The tone and framing of answers can change substantially.

Content freshness is a subtler driver. AI models increasingly favor recent, authoritative content. If a competitor publishes a comprehensive guide that gets widely linked and discussed, it can start appearing in AI responses within weeks -- displacing older content that had been there for months. AirOps research found that brands earning both a mention and a citation in AI-generated answers are up to 40% more likely to maintain ongoing visibility, which suggests that citation quality compounds over time.

Crawl patterns matter too. AI crawlers (the bots that feed content to models like ChatGPT and Perplexity) don't visit every page equally. A page that gets crawled frequently is more likely to have its current content reflected in AI responses than a page that was last crawled six months ago.

Finally, there's just probabilistic variation. Large language models are stochastic -- they don't produce identical outputs every time. The same prompt asked twice might yield slightly different responses. This is noise you need to account for when interpreting change data.
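
One way to account for this noise is to ask the same prompt several times and track a mention rate rather than a single yes/no. The sketch below assumes you have already collected the response texts; the brand names and sample responses are made up for illustration.

```python
import re

def mention_rate(responses: list[str], brand: str) -> float:
    """Fraction of responses that mention `brand` as a whole word (case-insensitive)."""
    if not responses:
        raise ValueError("need at least one response")
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for text in responses if pattern.search(text))
    return hits / len(responses)

# Hypothetical responses to the same prompt, collected in five separate runs.
samples = [
    "For agencies, Acme and Globex are both solid options.",
    "Many teams pick Globex for its reporting.",
    "Acme is a popular choice for small agencies.",
    "Consider Globex or Initech.",
    "Acme, Globex, and Initech all fit this use case.",
]

print(mention_rate(samples, "Acme"))  # 0.6
```

A rate of 0.6 from five runs is still a rough estimate; more runs per prompt narrow it, at the cost of more queries.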

Building your baseline: what to capture before you start tracking changes

You can't detect change without a baseline. This sounds obvious, but most teams skip this step and then wonder why their tracking data feels meaningless.

Define your prompt set

Start with a list of 20-50 prompts that represent how your actual customers search for your category. These should include:

- Category-level queries ("best project management software for agencies")

- Problem-based queries ("how do I track team productivity remotely")

- Comparison queries ("X vs Y vs Z")

- Brand-specific queries (your brand name, your competitors' brand names)

The mix matters. Category queries show you whether you're being considered at all. Comparison queries show you how AI models frame your brand relative to competitors. Brand queries show you what AI says when someone asks about you directly.

Record the full response, not just a summary

When you capture a baseline, save the complete AI response -- not just whether your brand was mentioned. You want to know: what was the exact wording? Which sources were cited? Where in the response did your brand appear (first mention vs. buried in a list)? What sentiment was expressed?

This level of detail is what lets you do meaningful change detection later. "We were mentioned" vs. "we weren't mentioned" is a binary that misses a lot of signal.

Timestamp everything and note the model version

AI models update frequently. Always record which model version you queried (e.g., GPT-4o, Claude 3.7 Sonnet, Perplexity with real-time web access enabled) and the exact date and time. Without this, you can't distinguish between "the model changed" and "our content changed."
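
A baseline record might look something like the sketch below. The `Snapshot` class and its field names are assumptions for illustration, not a format any particular tool uses; the point is that the full text, the model, and a UTC timestamp travel together.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone

@dataclass
class Snapshot:
    prompt: str
    model: str                      # model name or version as you queried it
    response_text: str              # the full response, not a summary
    cited_urls: list[str] = field(default_factory=list)
    captured_at: str = field(       # ISO 8601 timestamp in UTC
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json_line(self) -> str:
        """Serialize to one JSON line, suitable for an append-only log file."""
        return json.dumps(asdict(self), ensure_ascii=False)

snap = Snapshot(
    prompt="best project management software for agencies",
    model="GPT-4o",
    response_text="(full response text goes here)",
    cited_urls=["https://example.com/pricing"],
)
line = snap.to_json_line()
print(line)
```

Appending one such line per prompt-model query gives you a history you can replay later, whatever analysis you decide to run on it.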

Setting up ongoing monitoring: frequency and structure

How often to query

Daily monitoring is the right default for most brands. AI responses can shift quickly, and with weekly monitoring a significant change can go unnoticed for up to a week. That said, daily monitoring across 50 prompts and 5+ AI models generates a lot of data -- you need tooling to manage it.

For manual spot-checks (useful for verifying what automated tools report), once a month is a reasonable cadence. Pick 10-15 of your most important prompts and run them yourself across two or three AI models. This keeps you calibrated to what automated tools are showing you.

What to log for each query

For each prompt-model combination, record:

- Whether your brand was mentioned (yes/no)

- Position of first mention (first paragraph, middle, end of response)

- Whether your brand was cited with a source link

- Which specific page was cited

- Competitor mentions in the same response

- Overall sentiment toward your brand (positive, neutral, negative)

- Any direct recommendations ("I'd recommend X for this use case")

Over time, this data lets you build a picture of trends -- not just snapshots.
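
Some of these fields can be derived from the response text with plain string matching. The sketch below covers mention, position of first mention, and competitor mentions; sentiment and recommendations need more than pattern matching and are left out. The function name, the three-way position buckets, and the brand names are all assumptions.

```python
import re

def log_fields(response: str, brand: str, competitors: list[str]) -> dict:
    """Derive mention, first-mention position, and competitor mentions from text."""
    def find(name: str):
        return re.search(rf"\b{re.escape(name)}\b", response, re.IGNORECASE)

    match = find(brand)
    position = None
    if match:
        # Bucket the first mention by how far into the response it starts.
        fraction = match.start() / max(len(response), 1)
        position = "start" if fraction < 1 / 3 else "middle" if fraction < 2 / 3 else "end"
    return {
        "mentioned": match is not None,
        "first_mention_position": position,
        "competitors_mentioned": [c for c in competitors if find(c)],
    }

response = (
    "Globex is the usual pick for agencies. "
    "Initech works for larger teams. "
    "Acme is also worth a look."
)
fields = log_fields(response, "Acme", ["Globex", "Initech", "Umbrella"])
print(fields)
```

Here the brand is mentioned, but only in the final third of the response, behind two competitors. A yes/no flag alone would hide that.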

Change detection: identifying what actually moved

Raw monitoring data tells you what AI says right now. Change detection tells you what's different from before. These are not the same thing.

Citation-level changes

The most actionable changes to track are citation-level shifts. If a specific page on your site was being cited in responses to a key prompt and then stops appearing, that's a concrete signal. Either the page lost authority in the model's view, a competitor published something better, or something went wrong with the page itself (crawl errors, or recent edits that introduced thin or low-quality sections).

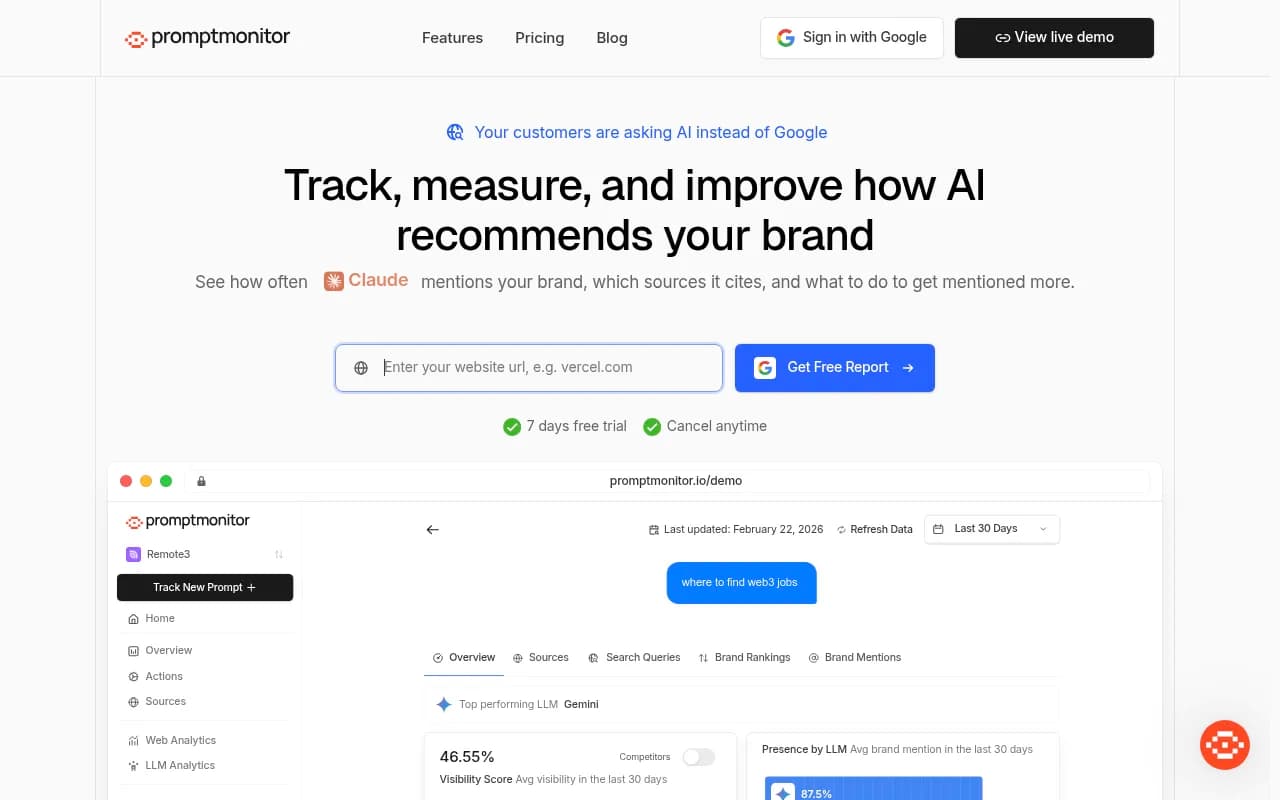

Tools like Promptwatch track this at the page level -- showing you exactly which pages are being cited, by which models, and how that changes over time.
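
If you are logging cited URLs yourself, a citation-level diff is a set comparison between two snapshots of the same prompt and model. This is a minimal sketch: the normalization rule (lowercase host, drop query string, fragment, and trailing slash) is an assumption you may want to adjust, and the URLs are placeholders.

```python
from urllib.parse import urlsplit, urlunsplit

def normalize(url: str) -> str:
    """Reduce a URL to scheme, host, and path so trivial variants compare equal."""
    parts = urlsplit(url)
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, "", ""))

def citation_diff(baseline: list[str], current: list[str]) -> dict[str, list[str]]:
    """Compare the cited URLs of two snapshots of the same prompt and model."""
    before = {normalize(u) for u in baseline}
    after = {normalize(u) for u in current}
    return {
        "lost": sorted(before - after),
        "gained": sorted(after - before),
        "kept": sorted(before & after),
    }

diff = citation_diff(
    baseline=["https://example.com/pricing/", "https://example.com/guide"],
    current=["https://Example.com/pricing?utm=x", "https://rival.example/comparison"],
)
print(diff)
```

In this example the pricing page is still cited, the guide was lost, and a competitor's comparison page was gained.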

Share of voice shifts

Share of voice (SOV) measures how often your brand appears in AI responses relative to competitors, across a defined set of prompts. A drop in SOV is often more meaningful than a drop in absolute mentions, because it tells you whether you're losing ground or just experiencing normal variation.

If your SOV drops from 45% to 30% over a month while a competitor's climbs from 20% to 40%, that's a clear signal that something changed in their favor. The question is what.
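
SOV can be computed in more than one way. The sketch below uses one simple definition, stated here as an assumption: each brand's share of all brand mentions across the prompt set, counting a brand at most once per response. Tools may define it differently, so compare like with like.

```python
from collections import Counter

def share_of_voice(mentions_per_response: list[list[str]]) -> dict[str, float]:
    """Each brand's share of all brand mentions, counted once per response."""
    counts = Counter(
        brand for brands in mentions_per_response for brand in set(brands)
    )
    total = sum(counts.values())
    return {brand: n / total for brand, n in counts.items()} if total else {}

# Hypothetical: brands mentioned in each of three responses.
sov = share_of_voice([["Acme", "Globex"], ["Acme"], ["Initech"]])
print(sov)
```

Acme accounts for two of the four mentions, so its share is 0.5. Running the same calculation on each month's responses gives you the trend line.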

Sentiment changes

This one is undertracked. AI models don't just mention brands -- they characterize them. "X is a solid choice for small teams" is very different from "X can be expensive and has a steep learning curve." If the sentiment in AI responses toward your brand shifts, you want to know about it, even if your mention frequency stays flat.

New prompts where competitors appear but you don't

This is arguably the most valuable change to detect: prompts where you had no presence before and competitors are now appearing. These represent gaps -- topics and questions where AI models have decided someone else is the authority.
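
Given two monitoring runs, these gaps can be surfaced with a set difference per prompt. The sketch below assumes each run is a mapping from prompt to the set of brands mentioned; the function name and sample data are illustrative.

```python
def new_competitor_gaps(
    previous: dict[str, set[str]], current: dict[str, set[str]], brand: str
) -> dict[str, list[str]]:
    """Prompts where `brand` is absent now and at least one other brand newly appears."""
    gaps = {}
    for prompt, now in current.items():
        before = previous.get(prompt, set())
        newcomers = now - before - {brand}
        if brand not in now and newcomers:
            gaps[prompt] = sorted(newcomers)
    return gaps

previous = {
    "best crm for startups": set(),
    "x vs y vs z": {"Acme", "Globex"},
}
current = {
    "best crm for startups": {"Globex", "Initech"},
    "x vs y vs z": {"Acme", "Globex"},
}
gaps = new_competitor_gaps(previous, current, "Acme")
print(gaps)
```

Only the first prompt is flagged: two competitors now appear there and Acme does not. The second prompt is unchanged and stays quiet.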

Tools for tracking AI response changes over time

The tooling landscape has matured significantly in 2026. Here's how the main options compare:

| Tool | Change detection | Page-level citation tracking | Competitor tracking | Content gap analysis | Pricing |
|---|---|---|---|---|---|
| Promptwatch | Yes | Yes | Yes | Yes | From $99/mo |
| Profound | Yes | Limited | Yes | No | Higher tier |
| Peec AI | Basic | No | Yes | No | Mid-range |
| Otterly.AI | Basic | No | Limited | No | Budget |
| AthenaHQ | Yes | No | Yes | No | Mid-range |
| LLM Pulse | Yes | No | Yes | No | Mid-range |
| Trakkr.ai | Basic | No | Yes | No | Budget |

For teams that want to go beyond monitoring and actually act on what they find, Promptwatch is the most complete option -- it connects change detection to content gap analysis and has a built-in writing agent for creating content that addresses the gaps. Most other tools stop at showing you the data.

Diagnosing why responses changed

Detecting that something changed is step one. Understanding why is where the real work happens.

Check your AI crawler logs

If a page stopped being cited, the first thing to check is whether AI crawlers are still visiting it. AI crawler logs (available in tools like Promptwatch, on its Professional and Business plans) show you which bots are hitting your site, how often, and whether they're encountering errors. A page that returns a 404, has a noindex tag, or loads very slowly might simply not be getting crawled or indexed -- and therefore not getting cited.

This is a capability most monitoring tools lack entirely. Knowing that Perplexity's crawler visited your /pricing page three times last week but hasn't touched your /comparison page in 45 days is genuinely useful diagnostic information.
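
If you have raw server access logs, you can get a rough version of this yourself. The sketch below assumes the common "combined" access log format and matches on user-agent substrings (`GPTBot`, `PerplexityBot`, `ClaudeBot`); treat that list as an assumption and check each vendor's current documentation. The sample log lines are invented.

```python
import re
from datetime import datetime

# Assumed user-agent substrings; verify against each vendor's documentation.
AI_BOTS = ("GPTBot", "PerplexityBot", "ClaudeBot")

# Captures timestamp, request path, status, and the trailing user agent
# from a line in the "combined" access log format.
LINE = re.compile(
    r'\[(?P<ts>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) .*"(?P<ua>[^"]*)"$'
)

def last_crawls(log_lines):
    """Map (bot, path) to (latest visit time, status code of that visit)."""
    latest = {}
    for line in log_lines:
        m = LINE.search(line.strip())
        if not m:
            continue
        bot = next((b for b in AI_BOTS if b in m["ua"]), None)
        if bot is None:
            continue  # not one of the AI crawlers we track
        ts = datetime.strptime(m["ts"], "%d/%b/%Y:%H:%M:%S %z")
        key = (bot, m["path"])
        if key not in latest or ts > latest[key][0]:
            latest[key] = (ts, int(m["status"]))
    return latest

sample_log = [
    '203.0.113.5 - - [01/Mar/2026:08:15:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '203.0.113.5 - - [08/Mar/2026:09:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [20/Jan/2026:11:30:00 +0000] "GET /comparison HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '192.0.2.9 - - [08/Mar/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]

for (bot, path), (seen, status) in sorted(last_crawls(sample_log).items()):
    print(bot, path, seen.date(), status)
```

User-agent strings can be spoofed, so this is a starting point for diagnosis rather than proof of who visited.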

Compare your content against what's now being cited

If a competitor's page replaced yours in AI responses, read that page carefully. What does it cover that yours doesn't? Is it more recent? Does it have more specific data, more examples, a clearer structure? AI models tend to cite content that directly and comprehensively answers the question being asked. If your page is thin on a topic that the prompt requires depth on, that's fixable.

Check for recent content changes on your own pages

Sometimes you cause the problem yourself. If you updated a page recently -- removed a section, changed the tone, cut word count -- and citations dropped shortly after, the update might be the cause. This is why versioning your own content matters alongside tracking AI responses.
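
A lightweight way to version your own content is to store a fingerprint of each page's text alongside your AI response snapshots. This sketch assumes you already extract the visible text of each page; the function names and sample pages are hypothetical.

```python
import hashlib

def content_fingerprint(text: str) -> str:
    """SHA-256 of the text with whitespace collapsed and case folded."""
    normalized = " ".join(text.split()).lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

def changed_pages(stored: dict[str, str], pages: dict[str, str]) -> list[str]:
    """URLs whose current text no longer matches the stored fingerprint."""
    return sorted(
        url for url, text in pages.items()
        if url in stored and stored[url] != content_fingerprint(text)
    )

stored = {
    "/pricing": content_fingerprint("Plans start at $99 per month."),
    "/guide": content_fingerprint("A complete guide to tracking."),
}
pages = {
    "/pricing": "Plans   start at $99 per month.",  # whitespace only: unchanged
    "/guide": "A short guide.",                      # section cut: changed
}
print(changed_pages(stored, pages))  # ['/guide']
```

When a citation drop lines up with a fingerprint change on the same page, your own edit becomes the first suspect.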

Look at what's happening in Reddit and YouTube

AI models, especially Perplexity and ChatGPT with web browsing, frequently pull from Reddit discussions and YouTube content. If a Reddit thread criticizing your product went viral, or a YouTube review shifted negative, that can affect how AI models characterize your brand. Monitoring these channels as part of your change detection workflow catches signals that pure citation tracking misses.

Building a change detection workflow

Here's a practical workflow that combines automated monitoring with periodic manual review:

Daily (automated)

- Run your full prompt set across target AI models via your monitoring tool

- Flag any prompts where your brand mention status changed (appeared or disappeared)

- Flag any prompts where a new competitor appeared

- Log citation-level changes (which pages are being cited)
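
The mention-status flag in this list can be sketched as a comparison of two daily runs. The input shape (a mapping from a prompt-model pair to a mentioned flag) and the model labels are assumptions.

```python
def status_changes(
    yesterday: dict[tuple[str, str], bool], today: dict[tuple[str, str], bool]
) -> list[tuple[str, str, str]]:
    """Flag (prompt, model) pairs whose brand-mention status flipped between runs."""
    flags = []
    for key in sorted(yesterday.keys() & today.keys()):
        if yesterday[key] != today[key]:
            flags.append((*key, "appeared" if today[key] else "disappeared"))
    return flags

yesterday = {
    ("best crm for startups", "chatgpt"): True,
    ("best crm for startups", "perplexity"): False,
    ("x vs y vs z", "chatgpt"): True,
}
today = {
    ("best crm for startups", "chatgpt"): False,
    ("best crm for startups", "perplexity"): True,
    ("x vs y vs z", "chatgpt"): True,
}
flags = status_changes(yesterday, today)
for flag in flags:
    print(flag)
```

Pairs that exist in only one of the two runs are skipped, so adding a prompt to the set does not produce a spurious "appeared" flag on its first day.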

Weekly (analyst review)

- Review flagged changes from the past week

- Categorize changes: model update, content change, competitor activity, or noise

- Prioritize any significant SOV drops for investigation

- Check AI crawler logs for crawl errors or reduced crawl frequency on key pages

Monthly (strategic review)

- Run manual spot-checks on 10-15 priority prompts

- Review share of voice trends over the past 30 days

- Identify content gaps that have emerged (prompts where competitors appear but you don't)

- Plan content responses to the most significant gaps

Quarterly (content audit)

- Review which pages are being cited most and least

- Update or expand pages that have lost citation frequency

- Publish new content targeting prompt gaps identified over the quarter

- Reassess your prompt set -- are you tracking the right queries?

Common mistakes in AI response tracking

A few patterns come up repeatedly when teams start doing this seriously:

Tracking too few prompts. If you're only monitoring 10-15 prompts, you're likely missing the majority of queries where AI models are forming opinions about your brand. The sweet spot for most brands is 50-150 prompts, covering the full range of how customers discover your category.

Ignoring model-level variation. Different AI models cite different sources and form different opinions. A brand that's well-represented in ChatGPT responses might be nearly invisible in Perplexity. Tracking only one or two models gives you an incomplete picture.

Treating all changes as equally significant. Not every change matters. A single response where your brand wasn't mentioned is noise. A consistent 20% drop in mention rate over three weeks across multiple models is a signal. Build your alerting thresholds accordingly.
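
One way to encode that distinction is a rule that only fires when the drop is sustained and shows up in more than one model. The sketch below reads "20%" as 20 percentage points of mention rate, which is an assumption; the thresholds, function name, and sample rates are illustrative defaults, not recommendations.

```python
def is_sustained_drop(baseline, weekly, min_drop=0.20, min_weeks=3, min_models=2):
    """True if enough models show a drop of at least `min_drop` (absolute
    mention rate) in each of the last `min_weeks` weeks.

    baseline: model -> baseline mention rate (0.0 to 1.0)
    weekly:   model -> list of weekly mention rates, oldest first
    """
    affected = 0
    for model, rates in weekly.items():
        recent = rates[-min_weeks:]
        if len(recent) < min_weeks or model not in baseline:
            continue  # not enough history to judge this model
        if all(baseline[model] - rate >= min_drop for rate in recent):
            affected += 1
    return affected >= min_models

baseline = {"chatgpt": 0.60, "perplexity": 0.50}

one_bad_week = {"chatgpt": [0.60, 0.58, 0.35], "perplexity": [0.50, 0.50, 0.25]}
three_bad_weeks = {"chatgpt": [0.38, 0.35, 0.36], "perplexity": [0.28, 0.25, 0.27]}

print(is_sustained_drop(baseline, one_bad_week))     # False
print(is_sustained_drop(baseline, three_bad_weeks))  # True
```

A single bad week stays below the alert threshold, while three consecutive weeks across both models trips it.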

Not connecting changes to content actions. Monitoring without acting is just expensive anxiety. The point of tracking AI response changes is to identify what to fix and what to create. If your workflow ends at "we detected a change," you're getting limited value from the investment.

What good looks like

A mature AI response tracking setup gives you a clear answer to these questions at any point in time:

- Which AI models mention our brand, for which prompts, and how often?

- Which specific pages on our site are being cited, and by which models?

- How has our share of voice changed over the past 30, 60, and 90 days?

- What are the prompts where competitors appear but we don't?

- Are AI crawlers successfully accessing our key pages?

If you can answer all five questions with data rather than guesses, you're in a strong position. Most brands in 2026 still can't answer any of them.

The gap between "we have a monitoring setup" and "we have a change detection workflow that drives content decisions" is where most of the competitive advantage lives right now. The brands that close that gap -- and act on what they find -- are the ones that will compound their AI visibility over the next 12-18 months while competitors wonder why their traffic is declining.