Key takeaways

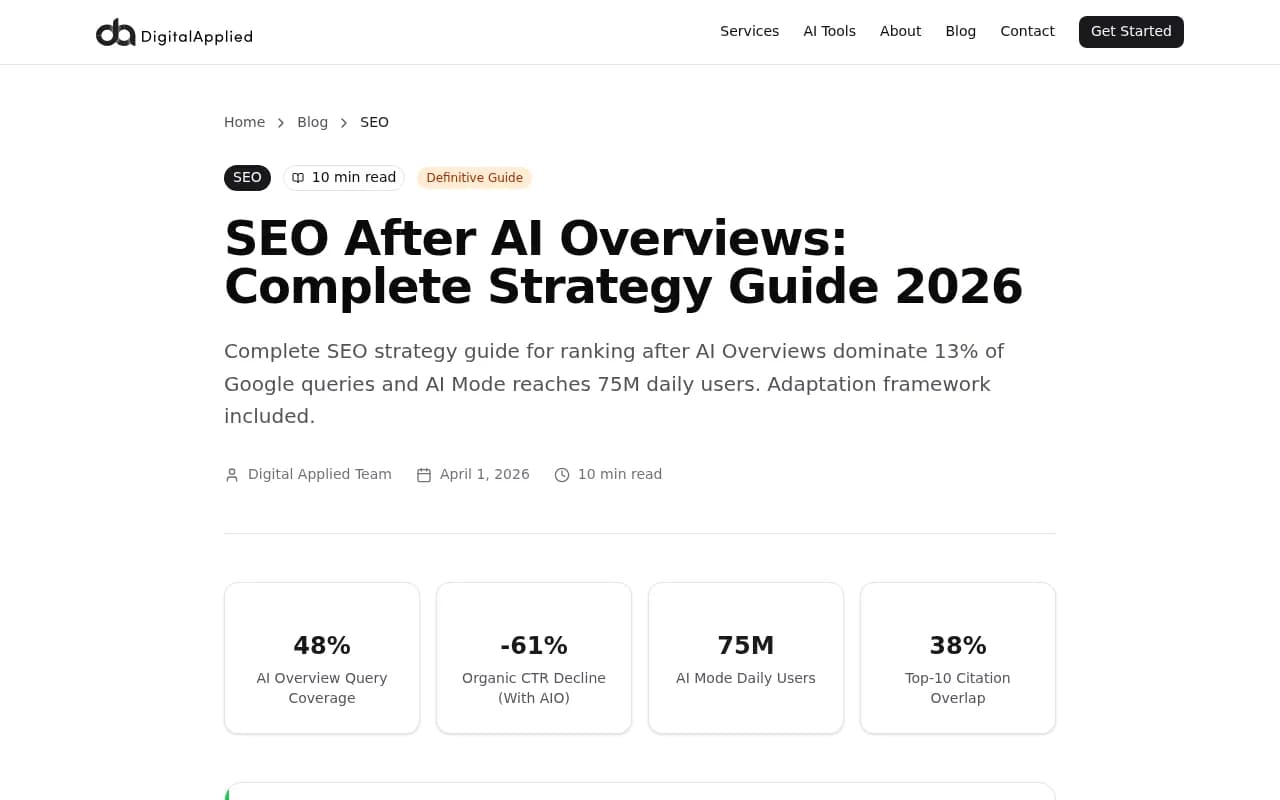

- AI Overviews now appear in roughly 48% of all Google searches, and position 1 click-through rates have dropped from 28% to 19% on those queries -- getting cited inside the Overview matters more than ranking first

- ChatGPT Search crossed 400 million weekly users in Q1 2026; if your content isn't optimized to be cited by LLMs, that traffic simply doesn't reach you

- Only 38% of pages cited in AI Overviews also rank in the traditional top 10 -- citation and ranking are now two separate games you have to play simultaneously

- The 60-day plan below splits into three phases: foundation (days 1-20), content production (days 21-45), and tracking and iteration (days 46-60)

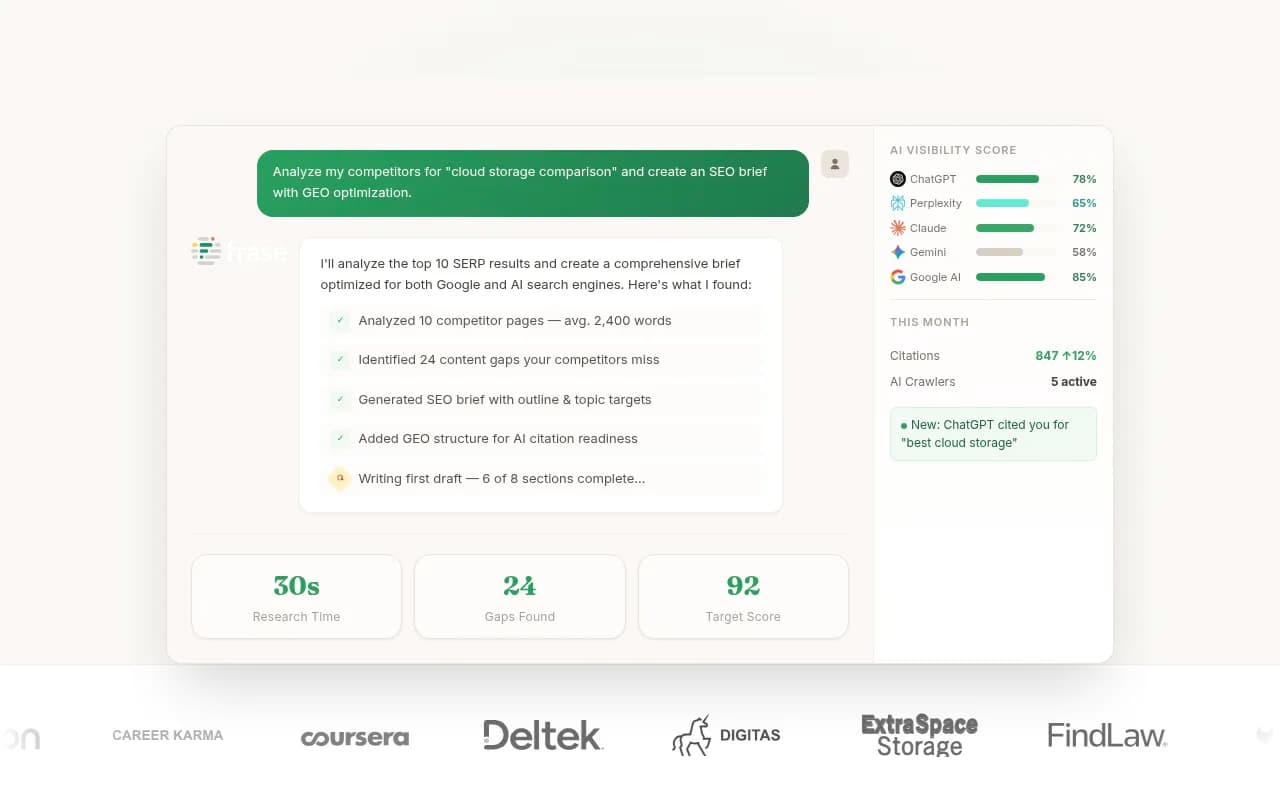

- Tools like Promptwatch can show you exactly which prompts competitors are getting cited for that you're missing -- which is the fastest way to find content gaps when you're starting cold

Starting from zero in AI SEO in 2026 is actually less daunting than it sounds. You don't have a legacy site full of thin content to clean up. You don't have years of bad SEO habits to unlearn. What you do have is a blank slate -- and that's genuinely useful when the rules have changed this much.

The search landscape shifted hard in late 2025. Google rolled AI Overviews to 100% of US queries. OpenAI made ChatGPT Search the default experience. Perplexity passed 50 million users. Together, these AI surfaces now handle roughly 8% of US search volume, growing 4-6 percentage points per quarter. That's not a rounding error anymore.

Here's the uncomfortable truth for anyone still running a 2023 SEO playbook: citation sources have decoupled from rankings. According to Ahrefs data, only 38% of pages cited in AI Overviews also rank in the traditional top 10 -- down from 76% just seven months prior. You can rank #1 on Google and still be invisible to every AI model. And you can get cited constantly by ChatGPT and Perplexity without ranking anywhere near the top of Google.

So the 60-day plan below treats these as two parallel tracks you build simultaneously.

Phase 1: Foundation (days 1-20)

Day 1-3: Understand what AI models actually want to cite

Before you write a single word, spend time understanding how AI models select sources. This is different from how Google ranks pages.

LLMs cite content that:

- Directly answers a specific question with factual, structured information

- Has clear authorship and expertise signals (author bios, credentials, publication dates)

- Uses clean HTML with proper heading hierarchy

- Contains original data, statistics, or first-hand perspectives -- not just summaries of other articles

- Is referenced or linked to from other credible sources (Reddit threads, industry forums, YouTube descriptions)

Pages above 20,000 characters average roughly 10 citations each in AI Overviews, versus 2.4 for pages under 500 characters. Depth matters -- but depth with structure matters more. A 5,000-word wall of text with no headings gets cited far less than a 2,000-word piece with clear H2s, numbered lists, and a concise summary at the top.

Day 4-7: Map your topic territory

Pick one core topic area and own it completely before expanding. This is topical authority, and it matters for both Google and AI models.

The mistake most beginners make is going wide immediately -- publishing 50 loosely related articles hoping something sticks. AI models reward depth over breadth. If you're a B2B SaaS company selling project management software, don't publish one article about "project management" and then pivot to "remote work tips" and "productivity hacks." Build 10-15 tightly related pieces that cover every angle of one specific topic cluster.

Map your cluster like this:

- One pillar page (2,500+ words, covers the full topic)

- 8-12 supporting pages that answer specific sub-questions

- 3-4 comparison or "vs" pages targeting decision-stage queries

- 2-3 data-driven or original research pieces

The comparison and research pieces are particularly important for AI citations. When someone asks ChatGPT "what's the best project management tool for remote teams," it needs sources that directly compare options. If you've published that comparison with real data, you become a candidate for citation.

For identifying which specific prompts and questions to target, tools like Promptwatch can show you the actual prompts people are using in AI search engines, along with volume estimates and difficulty scores.

Day 8-12: Technical foundation for AI crawlers

AI crawlers (OpenAI's GPTBot, Anthropic's ClaudeBot, and Perplexity's PerplexityBot) need to be able to read your site cleanly. People focused purely on content often overlook this.

Check your robots.txt file. Many sites accidentally block AI crawlers -- either through legacy rules or by copying a robots.txt that blocks all bots. Make sure GPTBot, ClaudeBot, and PerplexityBot are explicitly allowed if you want to be cited.
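One quick way to run that check is with Python's standard-library robots.txt parser. This is a minimal sketch, not a full audit: the `ai_crawler_access` helper, the `example.com` URL, and the sample rules are all made up for illustration, and you'd paste in your own robots.txt contents.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in this guide.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def ai_crawler_access(robots_txt: str, url: str = "https://example.com/") -> dict[str, bool]:
    """Return, per AI crawler, whether the given robots.txt text lets it fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in AI_CRAWLERS}


# Hypothetical robots.txt that blocks GPTBot but allows everyone else.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

for bot, allowed in ai_crawler_access(sample).items():
    print(f"{bot}: {'allowed' if allowed else 'BLOCKED'}")
```

Any crawler reported as blocked is one that cannot read, and therefore is unlikely to cite, the page you tested.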

Beyond access, clean up your technical setup:

- Use proper semantic HTML (H1 for page title, H2 for main sections, H3 for subsections)

- Add schema markup -- at minimum, Article schema with author, datePublished, and dateModified

- Make sure your pages load fast and render without JavaScript errors (AI crawlers often don't execute JS)

- Add a clear author bio with credentials to every content page

- Include a "last updated" date on all articles
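For the schema item in that list, here is a rough sketch of generating a minimal Article JSON-LD block with `author`, `datePublished`, and `dateModified`. The `article_jsonld` helper and the sample values are hypothetical; most CMSs and SEO plugins will emit this for you, so treat it as an illustration of the shape rather than a recommended implementation.

```python
import json
from datetime import date


def article_jsonld(headline: str, author_name: str, published: date, modified: date) -> str:
    """Build a <script> tag holding minimal schema.org Article markup."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    payload = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder values for illustration only.
print(article_jsonld(
    "How to choose project management software",
    "Jane Doe",
    date(2026, 1, 15),
    date(2026, 2, 1),
))
```

The resulting tag goes in the page's HTML, typically inside `<head>`. Keep `dateModified` in sync with the visible "last updated" date.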

For a thorough technical audit, Screaming Frog is still the fastest way to catch structural issues at scale.

Day 13-20: Competitor citation analysis

Before you write anything, find out what's already getting cited in your space. This is the most underused tactic for people starting from zero -- you don't have to guess what works, you can just look at what's already working for competitors.

The process:

- Identify 5-8 competitors or established sites in your niche

- Run the specific questions your audience asks through ChatGPT, Perplexity, and Google AI Overviews

- Note which sources get cited repeatedly

- Analyze those pages: What format are they? How long? What data do they include? What questions do they directly answer?

This gives you a content brief that's grounded in what AI models actually want, not what you think they want.
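If you record the cited URLs by hand while running those queries, a few lines of Python can tally which domains come up repeatedly. This is a sketch under assumptions: the `tally_cited_domains` helper, the prompts, and the `example-*.com` URLs are invented, and each domain is counted once per prompt so a single answer citing the same site three times doesn't skew the ranking.

```python
from collections import Counter
from urllib.parse import urlparse


def tally_cited_domains(observations: dict[str, list[str]]) -> list[tuple[str, int]]:
    """Count how many prompts each domain was cited for, most frequent first."""
    counts = Counter()
    for cited_urls in observations.values():
        # A set means each domain counts at most once per prompt.
        domains = {urlparse(u).netloc.lower().removeprefix("www.") for u in cited_urls}
        counts.update(domains)
    return counts.most_common()


# Hypothetical notes: prompt -> URLs cited in the AI answer.
observations = {
    "best pm tool for remote teams": [
        "https://www.example-a.com/compare",
        "https://example-b.com/guide",
    ],
    "how to choose pm software": [
        "https://example-a.com/choose",
        "https://example-a.com/faq",
    ],
}

for domain, prompts_cited in tally_cited_domains(observations):
    print(f"{domain}: cited for {prompts_cited} prompt(s)")
```

The domains at the top of the list are the pages worth analyzing first for format, length, and data.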

Promptwatch's Answer Gap Analysis automates this process -- it shows you exactly which prompts competitors are visible for that you're not, so you can prioritize the highest-value gaps first instead of manually querying every model.

Phase 2: Content production (days 21-45)

The content format that gets cited

Not all content formats get cited equally. Based on what's working in 2026, these formats consistently earn AI citations:

Direct answer articles -- Pages structured around a single question with the answer in the first paragraph, then supporting detail below. Think "What is [X]?" or "How does [X] work?" The answer appears before any preamble.

Comparison pages -- "X vs Y" and "Best X for [use case]" pages get cited heavily because they directly answer decision-stage queries. AI models love citing these because they save the user from having to synthesize multiple sources.

Data and statistics roundups -- If you can compile original data or aggregate statistics from primary sources with proper attribution, these become citation magnets. AI models need numbers to answer quantitative questions.

Step-by-step guides -- Numbered processes with clear steps. The structure makes it easy for AI to extract and cite specific steps.

FAQ pages -- Underrated for AI citations. A well-structured FAQ with specific questions as H3 headings and concise answers directly below each one is exactly the format LLMs are built to parse.

Day 21-30: Write your pillar content

Your pillar page is the most important piece you'll publish. It needs to be comprehensive, well-structured, and genuinely more useful than anything currently ranking.

A few non-negotiable elements:

- Answer the core question in the first 100 words

- Use a clear heading hierarchy (don't skip levels)

- Include a table of contents for long pieces

- Add a "key takeaways" or summary section at the top

- Cite your sources with links to primary research

- Include at least one original data point, case study, or first-hand observation

- Update the "last modified" date whenever you make meaningful changes
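The "don't skip levels" rule is easy to check mechanically. Below is a small sketch using Python's built-in HTML parser; `HeadingCollector` and `skipped_levels` are illustrative names, and the sample HTML is made up. It only looks at the order of `h1` to `h6` tags, nothing else about the page.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_levels(html: str) -> list[tuple[int, int]]:
    """Return (previous_level, level) pairs where the hierarchy jumps by more than one."""
    collector = HeadingCollector()
    collector.feed(html)
    problems = []
    previous = 0  # 0 means "no heading seen yet", so a page must start at H1
    for level in collector.levels:
        if level > previous + 1:
            problems.append((previous, level))
        previous = level
    return problems


page = "<h1>Guide</h1><h2>Setup</h2><h4>Details</h4><h2>Next steps</h2>"
print(skipped_levels(page))  # the H2 -> H4 jump is reported as (2, 4)
```

An empty list means the hierarchy is clean. A pair like `(0, 2)` means the page starts without an H1.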

For content optimization against what's currently ranking, tools like Surfer SEO and Clearscope analyze top-ranking pages and tell you which topics and terms your content needs to cover.

Day 31-40: Build your supporting content cluster

With your pillar page live, build out the supporting cluster. Each piece should:

- Link back to the pillar page

- Cover a specific sub-question in depth

- Cross-link to 2-3 other cluster pieces where relevant

- Be optimized for a distinct prompt or query (not overlapping with other cluster pieces)
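The linking rules above can be sanity-checked once you have a map of each page's internal links. This is a sketch only: `cluster_link_issues`, the page paths, and the link map are hypothetical, and it assumes you've already extracted outbound internal links per page (for example from a crawler export). It uses the lower bound of "2-3" as the minimum number of cross-links.

```python
def cluster_link_issues(pillar: str, links: dict[str, set[str]]) -> dict[str, list[str]]:
    """Flag supporting pages that miss the pillar link or have fewer than 2 cross-links."""
    supporting = [page for page in links if page != pillar]
    issues = {}
    for page in supporting:
        outbound = links[page]
        problems = []
        if pillar not in outbound:
            problems.append("missing link to pillar")
        # Cross-links are links to other supporting pages in the same cluster.
        cross = len(outbound & (set(supporting) - {page}))
        if cross < 2:
            problems.append(f"only {cross} cross-link(s)")
        if problems:
            issues[page] = problems
    return issues


# Hypothetical cluster: page -> internal pages it links to.
links = {
    "/pm-guide": {"/pm-for-remote", "/pm-pricing", "/pm-vs-spreadsheets"},
    "/pm-for-remote": {"/pm-guide", "/pm-pricing", "/pm-vs-spreadsheets"},
    "/pm-pricing": {"/pm-guide", "/pm-for-remote"},
    "/pm-vs-spreadsheets": {"/pm-for-remote", "/pm-pricing"},
}

for page, problems in cluster_link_issues("/pm-guide", links).items():
    print(page, "->", ", ".join(problems))
```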

Aim for one new piece every 2-3 days during this phase. Consistency matters -- AI crawlers return to sites that update regularly. Content freshness is a real signal.

For content creation at this pace without sacrificing quality, AI writing tools with SEO grounding help significantly. Jasper and Frase both generate content briefs and drafts that can be edited into final form faster than writing from scratch.

Day 41-45: Comparison and decision-stage content

These pages often have lower search volume but higher citation rates in AI models because they directly answer "which should I choose" queries.

Write 3-4 comparison pieces targeting:

- Your product/approach vs. the main alternatives

- "Best [category] for [specific use case]" roundups

- "How to choose [X]" guides with clear criteria

Be honest in these comparisons. AI models are getting better at detecting promotional content, and users who click through from an AI citation and find a biased comparison bounce immediately. Genuine, balanced comparisons with clear criteria get cited more often and convert better.

Phase 3: Tracking and iteration (days 46-60)

What to measure in AI SEO

Traditional SEO metrics (rankings, organic traffic) are necessary but no longer sufficient. You need to track a parallel set of AI-specific metrics:

| Metric | What it tells you | Where to track it |
|---|---|---|
| AI citation rate | How often your pages appear in LLM responses | Promptwatch, Otterly.AI |
| Mention sentiment | Whether AI describes your brand positively | Promptwatch, Brandlight |
| Prompt coverage | Which queries you appear for vs. competitors | Promptwatch, AthenaHQ |
| AI crawler visits | Which pages AI bots are reading and how often | Promptwatch (crawler logs) |
| AI-referred traffic | Actual visitors arriving from AI search | GSC, server logs, Promptwatch |
| Google AI Overview presence | Whether you're cited in Google's AI answers | SE Ranking, Semrush |

The most important metric when starting from zero is prompt coverage -- how many of the relevant queries in your space does your content appear in? Start with a baseline on day 46 and check again on day 60.

Day 46-52: Baseline your AI visibility

Run a systematic audit of your current AI visibility. Pick 20-30 prompts that represent your target audience's actual questions. Query ChatGPT, Perplexity, and Google AI Overviews with each one. Record:

- Whether you appear at all

- Where you appear (cited source, mentioned brand, or not present)

- Which competitors appear instead

This baseline is your starting point. Without it, you can't tell whether your content changes are working.
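If you're recording the audit by hand, prompt coverage reduces to a simple ratio: prompts where you appear in at least one engine, divided by all prompts checked. Here is one possible way to compute it. The `PromptResult` record, the status labels, and the sample rows are assumptions for illustration, not a standard format.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str
    engine: str  # e.g. "ChatGPT", "Perplexity", "Google AI Overviews"
    status: str  # "cited", "mentioned", or "absent"


def prompt_coverage(results: list[PromptResult]) -> float:
    """Share of distinct prompts where you are cited or mentioned in any engine."""
    prompts = {r.prompt for r in results}
    covered = {r.prompt for r in results if r.status in ("cited", "mentioned")}
    return len(covered) / len(prompts) if prompts else 0.0


# Hypothetical day-46 baseline for four prompts.
baseline = [
    PromptResult("best pm tool for remote teams", "ChatGPT", "absent"),
    PromptResult("best pm tool for remote teams", "Perplexity", "cited"),
    PromptResult("how to choose pm software", "ChatGPT", "absent"),
    PromptResult("pm software pricing", "Perplexity", "absent"),
    PromptResult("pm tool vs spreadsheets", "Google AI Overviews", "absent"),
]

print(f"Prompt coverage: {prompt_coverage(baseline):.0%}")
```

Rerun the same prompts on day 60 and compare the two numbers.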

Promptwatch automates this process across 10 AI models simultaneously, with volume estimates for each prompt so you can prioritize the ones that actually matter.

Day 53-57: Identify and fill the remaining gaps

By day 53, you should have 15-20 pieces of content live and a clear picture of where you're appearing and where you're not. Now look at the gaps:

- Which prompts are competitors appearing for that you're not?

- Which of your pages are AI crawlers visiting but not citing?

- Which pieces have good Google rankings but zero AI citations?

Pages that rank well on Google but don't get AI citations usually have one of these problems: they're too promotional, they don't directly answer the query, or they lack the structured format AI models prefer. Fix the format first -- add a direct answer at the top, restructure with clear headings, add schema markup.

For pages that AI crawlers visit but don't cite, the content itself is likely the issue. It's being read but not considered authoritative enough. Add original data, deepen the analysis, or add expert quotes.
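The triage logic in the last two paragraphs can be written down as a small decision function, which is handy if you're working through a spreadsheet of pages. This is a sketch: `suggest_fix` and its wording are illustrative, and the final fallback (checking crawler access) is an assumption added here for pages that are neither ranking nor being crawled.

```python
def suggest_fix(ranks_well: bool, crawled_by_ai: bool, cited: bool) -> str:
    """Map a page's status to the next step suggested in this section."""
    if cited:
        return "no action needed"
    if ranks_well:
        # Ranks on Google but earns no citations: fix the format first.
        return "fix format: direct answer at top, clear headings, schema markup"
    if crawled_by_ai:
        # Read by AI crawlers but not cited: the content itself is likely the issue.
        return "strengthen content: original data, deeper analysis, expert quotes"
    # Assumption, not from the steps above: nothing is reading the page at all.
    return "check crawler access (robots.txt, rendering) before anything else"


# Hypothetical pages: (ranks well on Google, crawled by AI bots, cited by AI).
pages = {
    "/pm-guide": (True, True, False),
    "/pm-pricing": (False, True, False),
    "/pm-for-remote": (False, True, True),
}

for path, status in pages.items():
    print(path, "->", suggest_fix(*status))
```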

Day 58-60: Set up your ongoing monitoring system

By day 60, you need a system that runs without constant manual effort. The goal is to catch citation drops quickly, identify new prompt opportunities as they emerge, and track whether your visibility is trending up.

Minimum viable monitoring setup:

- Weekly AI visibility check across your 20-30 target prompts

- Google Search Console integration to track AI-referred traffic

- AI crawler log monitoring to see which pages bots are reading

- Monthly competitor citation analysis to spot new gaps
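For the crawler log item, a rough do-it-yourself version is to scan your web server's access log for the AI crawler user agents. The sketch below assumes the common "combined" log format used by Nginx and Apache. The `ai_crawler_hits` helper, the regular expression, and the sample log lines are all illustrative, and real user-agent strings will differ from the ones shown.

```python
import re
from collections import Counter

AI_BOTS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Matches: "GET /path HTTP/1.1" status bytes "referer" "user-agent"
LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def ai_crawler_hits(log_lines) -> Counter:
    """Count requests per (bot, path) for the known AI crawlers."""
    hits = Counter()
    for line in log_lines:
        match = LINE.search(line)
        if not match:
            continue
        agent = match.group("agent").lower()
        for bot in AI_BOTS:
            if bot.lower() in agent:
                hits[(bot, match.group("path"))] += 1
    return hits


# Made-up log lines for illustration.
sample_log = [
    '203.0.113.5 - - [10/Mar/2026:12:00:00 +0000] "GET /pm-guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.5 - - [10/Mar/2026:12:05:00 +0000] "GET /pm-guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.9 - - [10/Mar/2026:12:06:00 +0000] "GET /pm-pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [10/Mar/2026:12:07:00 +0000] "GET /pm-guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

for (bot, path), count in sorted(ai_crawler_hits(sample_log).items()):
    print(f"{bot} {path}: {count}")
```

Pages that never show up in this tally are the ones to check for crawl blocks or missing internal links.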

For teams that want this automated, Promptwatch's Professional plan covers crawler logs, page-level citation tracking, and traffic attribution in one place. For tighter budgets, Otterly.AI covers basic monitoring at a lower price point, though without the content gap analysis or crawler logs.

The reality check: what 60 days actually gets you

Let's be honest about expectations. Sixty days of consistent work will not make you the dominant voice in a competitive category. What it will do:

- Get your site indexed and actively crawled by major AI models

- Establish a topical cluster that signals expertise to both Google and LLMs

- Generate your first AI citations (likely in Perplexity and Google AI Overviews first, then ChatGPT)

- Give you a clear baseline and data to inform the next 60 days

The brands winning in AI search right now started 12-18 months ago. But the gap between "no AI visibility" and "some AI visibility" closes faster than you'd expect if you're producing structured, genuinely useful content consistently.

Google still processes 13.7 billion searches per day versus ChatGPT's 1 billion. Traditional SEO isn't going anywhere. But the trajectory is clear -- AI search is gaining 4-6 percentage points of market share per quarter. The brands that build AI citation authority now will be significantly harder to displace in 18 months than the ones that wait.

Start with the foundation. Build the cluster. Track what's working. Sixty days from now, you'll have real data instead of guesses.