Key takeaways

- Most AI visibility platforms are monitoring-only dashboards -- they show you data but don't help you create content that gets cited by AI models.

- Content generation quality varies wildly: some platforms generate generic SEO filler, while others produce articles grounded in real citation data and prompt volume analysis.

- The platforms that close the full loop -- gap analysis, content creation, and results tracking -- deliver the most measurable ROI.

- Promptwatch is the only platform in 2026 rated a "Leader" across all categories in a 12-platform comparison, specifically because of its action-oriented approach.

- For most marketing and SEO teams, the right question isn't "which tool tracks the most AI engines?" -- it's "which tool helps me create content that actually gets cited?"

Why content generation quality matters more than monitoring

There's a version of this conversation that happened a lot in 2024: someone on a marketing team discovers their brand isn't appearing in ChatGPT responses, panics slightly, signs up for an AI visibility tracker, and then... watches a dashboard. The numbers go up and down. Competitors appear in responses. The team writes a Slack message about it and moves on.

That's the monitoring trap. And in 2026, a lot of platforms are still stuck in it.

The real question was never "am I visible in AI search?" It was always "how do I become visible, and stay visible?" That requires content -- specifically, content that AI models actually want to cite. And that's where platforms diverge sharply.

Some tools have built content generation directly into their workflow, grounded in citation data and prompt analysis. Others bolt on a basic AI writer as an afterthought. Many don't touch content at all. This guide ranks the major platforms by how well they handle that content generation piece, because that's where the actual work happens.

How we evaluated content generation quality

Raw monitoring capability isn't the focus here -- there are plenty of comparisons that cover that. Instead, we looked at:

- Whether the platform identifies content gaps (not just visibility gaps)

- Whether generated content is grounded in real citation and prompt data

- Whether the output is structured to get cited by AI models, not just to rank on Google

- Whether the platform closes the loop with tracking that connects content to visibility improvements

With that framework in mind, here's how the major platforms stack up.

Tier 1: Full action loop (gap analysis + content generation + tracking)

Promptwatch

Promptwatch is the clearest example of what a complete AI visibility platform looks like in 2026. The content generation isn't a standalone feature -- it's the middle step in a deliberate workflow.

The process starts with Answer Gap Analysis, which identifies the specific prompts your competitors appear in but you don't. You're not guessing what to write about; you're looking at a list of real prompts where your brand is absent and a competitor is present. From there, the built-in AI writing agent generates articles, listicles, and comparisons, drawing on a dataset of 880M+ analyzed citations, prompt volume data, and competitor source analysis. The output is engineered to match what AI models cite, not just what humans search for.
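To make the gap idea concrete, here is a minimal sketch of how a prompt-level gap list could be derived. It is not Promptwatch's implementation; the function name `answer_gaps`, the brand names, and the sample prompts are all hypothetical, and it assumes you already have a mapping from each tracked prompt to the set of brands mentioned in the AI response.

```python
from typing import Dict, Set


def answer_gaps(appearances: Dict[str, Set[str]], brand: str, competitor: str) -> Set[str]:
    """Return prompts where `competitor` is mentioned but `brand` is not.

    `appearances` maps a prompt to the set of brands seen in the AI response.
    This data shape is an assumption made for illustration only.
    """
    return {
        prompt
        for prompt, brands in appearances.items()
        if competitor in brands and brand not in brands
    }


# Hypothetical sample data: prompt -> brands mentioned in the response.
appearances = {
    "best crm for startups": {"CompetitorCo", "OtherCo"},
    "crm with email automation": {"YourBrand", "CompetitorCo"},
    "affordable crm tools": {"CompetitorCo"},
    "crm for nonprofits": {"OtherCo"},
}

gaps = answer_gaps(appearances, brand="YourBrand", competitor="CompetitorCo")
print(sorted(gaps))  # ['affordable crm tools', 'best crm for startups']
```

The prompt where both brands appear and the prompt where neither appears are excluded, which is what turns a visibility report into a list of topics to write about.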

What separates this from a generic AI writer is the data layer underneath. The writing agent knows which pages are being cited by ChatGPT vs. Perplexity vs. Claude, which topics have high prompt volume and low competition, and what content structure tends to earn citations in a given category. That context produces content that's meaningfully different from anything you'd get from a general-purpose writing tool.

The tracking side closes the loop: page-level citation tracking shows exactly which new articles are being cited, by which models, and how often. Traffic attribution (via code snippet, GSC integration, or server log analysis) connects those citations to actual visitors and revenue.

Promptwatch monitors 10 AI models and starts at $99/month for the Essential plan (1 site, 50 prompts, 5 articles). The Professional plan at $249/month adds crawler logs, city/state tracking, and 15 articles per month.

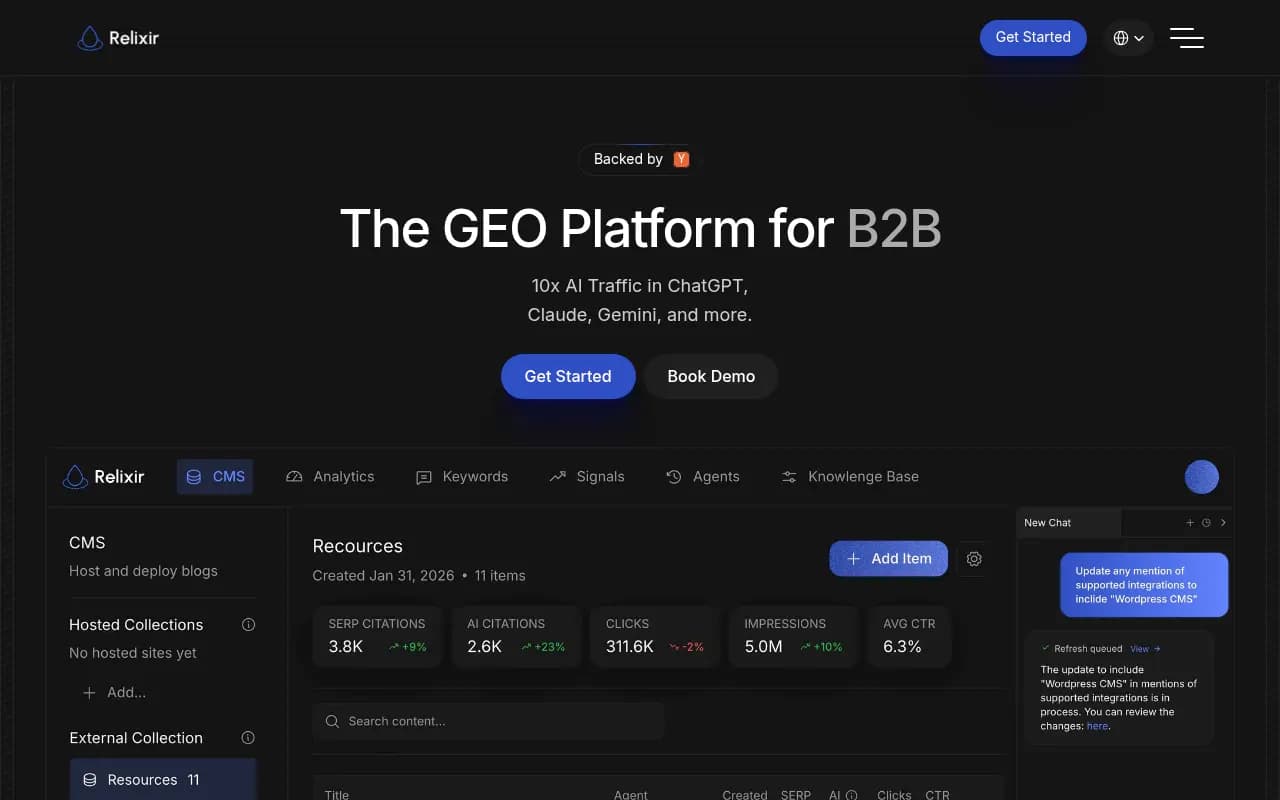

Relixir

Relixir takes a similar full-loop approach with its AI-native CMS and autonomous content optimization. It's built specifically for GEO workflows, with content generation tied to competitive gap analysis. The platform leans more heavily into automation -- it can autonomously generate and update content based on shifting AI citation patterns.

Good fit for teams that want a more hands-off, automated approach to content production. Less suited for teams that want fine-grained control over tone and positioning.

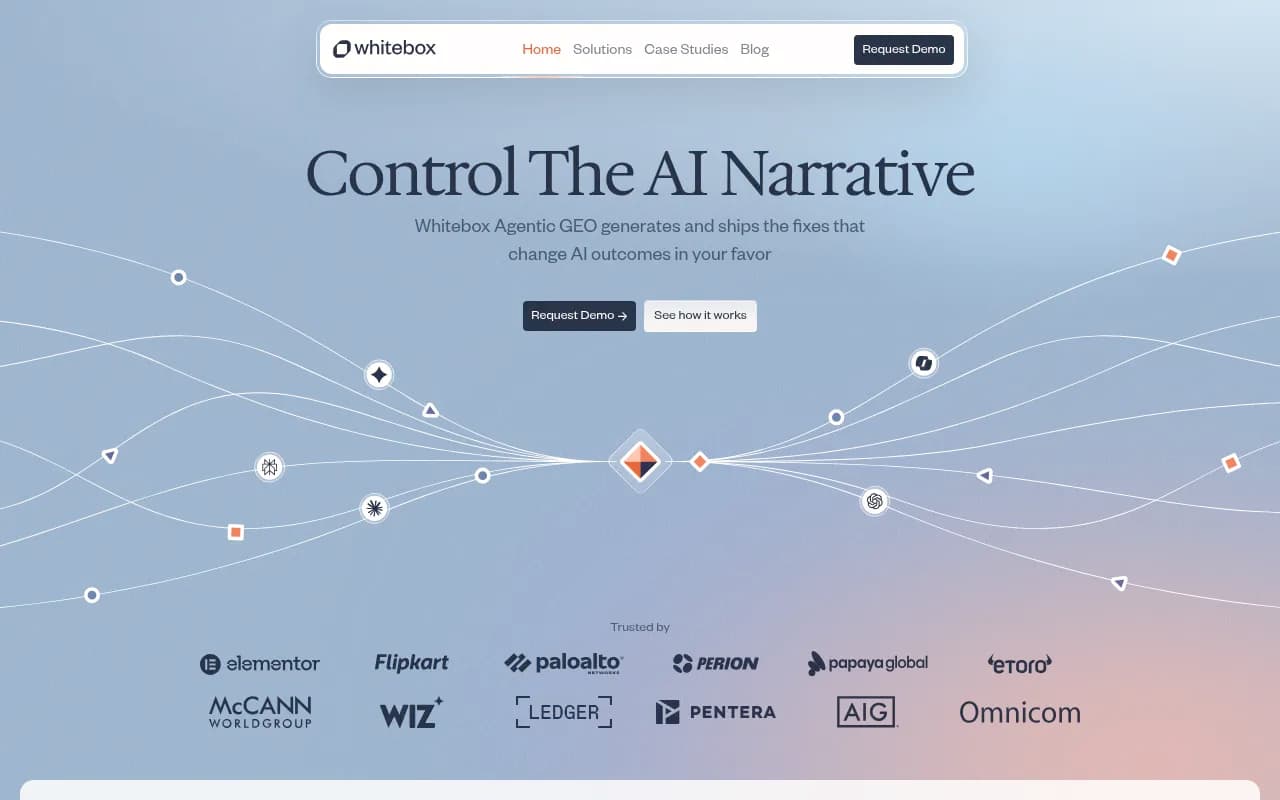

Whitebox

Whitebox describes itself as an "agentic GEO platform" -- it generates and ships AI narrative fixes automatically, without requiring manual intervention at each step. The content generation is tightly coupled to narrative gap detection, meaning it focuses specifically on fixing how AI models describe your brand, not just whether they mention you.

This is a narrower use case than Promptwatch's full content strategy workflow, but for brands dealing with inaccurate AI descriptions or missing brand narratives, it's a strong option.

Tier 2: Strong monitoring with limited content tools

Profound

Profound has one of the deepest monitoring stacks in the market -- 10+ AI engines, 400M+ prompt insights, and SOC 2 Type II compliance that makes it attractive for enterprise buyers. The analytics are genuinely impressive.

The gap is on the content side. Profound doesn't generate content natively. It shows you what's missing; it doesn't help you create it. For teams with dedicated content resources who just need the intelligence layer, that's fine. For teams that need the full workflow in one place, it's a limitation.

AthenaHQ

AthenaHQ tracks visibility across 8+ AI search engines with solid competitive benchmarking. The platform is well-designed and the monitoring data is reliable.

Like Profound, it stops at the monitoring layer. There's no content generation, no gap-to-article workflow. You get the "what" but not the "how to fix it."

Conductor

Conductor is the enterprise option in this tier -- strong persona customization, solid AI visibility tracking, and good integrations with existing marketing stacks. It's built for large organizations with dedicated SEO teams.

Content generation isn't a core feature. Conductor is more of an intelligence and measurement platform than an optimization one. Enterprise teams that already have content production capacity will find it useful; smaller teams looking for an end-to-end solution probably won't.

Semrush

Semrush has added AI visibility tracking to its existing SEO platform, and the breadth of data is hard to argue with. The AI Overview tracking and brand monitoring features are useful additions to an already comprehensive toolkit.

The limitation is structural: Semrush uses fixed prompts for AI monitoring, which means you're tracking a predefined set of queries rather than the full range of prompts where your brand could appear. The content tools (ContentShake AI, Writing Assistant) are solid for traditional SEO but aren't specifically engineered for AI citation optimization.

Ahrefs Brand Radar

Tim Soulo's February 2026 breakdown on Medium covers Ahrefs Brand Radar in some depth. It monitors six AI platforms (ChatGPT, Perplexity, Gemini, Copilot, Google AI Overviews, Google AI Mode) plus YouTube. The data quality is good, consistent with Ahrefs' reputation for accuracy.

Fixed prompts and no AI traffic attribution are the main constraints. Like Semrush, it's a strong addition to an existing SEO workflow rather than a standalone GEO platform.

Tier 3: Monitoring-only platforms

These platforms do one thing -- track AI visibility -- and they do it reasonably well. The limitation is that they stop there. No content generation, no gap-to-article workflow, no traffic attribution.

Otterly.AI

Otterly.AI is frequently recommended as the most affordable entry point for AI visibility monitoring. It's a good fit for small teams or individuals who want basic brand tracking across ChatGPT and Perplexity without a significant budget commitment.

Peec AI

Multi-language tracking is Peec AI's standout feature, making it useful for brands operating across multiple markets. The monitoring is solid; the optimization tools are minimal.

SE Ranking

SE Ranking's AI visibility toolkit sits inside a broader SEO platform, which is useful if you're already using it for rank tracking. The AI monitoring features are newer and less mature than the core SEO tools.

Brandlight

Brandlight focuses on AI-powered brand visibility tracking with a clean interface. Monitoring-only, but the reporting is well-structured for sharing with stakeholders.

Head-to-head comparison

| Platform | Content generation | Gap analysis | Citation data | AI models tracked | Traffic attribution | Starting price |
|---|---|---|---|---|---|---|
| Promptwatch | Yes (AI writing agent) | Yes (Answer Gap Analysis) | 880M+ citations | 10 | Yes | $99/mo |
| Relixir | Yes (AI-native CMS) | Yes | Yes | 6+ | Limited | Custom |
| Whitebox | Yes (narrative fixes) | Yes (narrative gaps) | Yes | 6+ | No | Custom |
| Profound | No | Limited | 400M+ prompts | 10+ | No | Custom |
| AthenaHQ | No | No | Yes | 8+ | No | Custom |
| Conductor | No | Limited | Yes | 6+ | Limited | Custom |
| Semrush | Partial (ContentShake) | No | Yes | 6 (fixed prompts) | No | $139/mo |
| Ahrefs Brand Radar | No | No | Yes | 6 (fixed prompts) | No | Included in Ahrefs |
| Otterly.AI | No | No | Limited | 4-5 | No | ~$29/mo |
| Peec AI | No | No | Limited | 5+ | No | ~$49/mo |

The content quality question, specifically

Monitoring platforms can tell you "your brand appears in 12% of relevant AI responses." That's useful. But the follow-up question -- "what do I publish to improve that number?" -- is where most platforms go quiet.

The platforms that answer that question well share a few characteristics:

They use citation data to inform content structure. Generic AI writers produce content that looks like blog posts. Platforms like Promptwatch analyze what AI models actually cite -- the specific pages, formats, and topics that appear in responses -- and use that to shape what gets written. The difference in output quality is significant.

They connect prompt volume to content priority. Not every content gap is worth filling. Platforms with prompt intelligence (volume estimates, difficulty scores, query fan-outs) help teams prioritize the gaps that will actually move visibility metrics. Writing content for a prompt that gets asked twice a month is a different decision than writing for one that gets asked 50,000 times.

They track whether the content worked. This sounds obvious, but most platforms don't close this loop. You publish an article, and then... you check the monitoring dashboard manually a few weeks later to see if anything changed. Page-level citation tracking that shows which specific articles are being cited, and by which models, makes it possible to actually learn what's working.
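The point about connecting prompt volume to content priority can be sketched in a few lines. This is an illustration under stated assumptions, not any vendor's scoring method: the `PromptGap` fields, the 0-to-1 difficulty scale, and the `volume * (1 - difficulty)` formula are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PromptGap:
    prompt: str
    monthly_volume: int  # hypothetical estimate of how often the prompt is asked
    difficulty: float    # hypothetical scale: 0.0 (easy to win) to 1.0 (hard)


def priority(gap: PromptGap) -> float:
    """Assumed scoring rule: favor high volume, discount by difficulty."""
    return gap.monthly_volume * (1.0 - gap.difficulty)


def rank_gaps(gaps: List[PromptGap]) -> List[PromptGap]:
    """Return gaps ordered from highest to lowest priority."""
    return sorted(gaps, key=priority, reverse=True)


# Hypothetical sample gaps, echoing the twice-a-month vs. 50,000 contrast.
gaps = [
    PromptGap("niche question", monthly_volume=2, difficulty=0.1),
    PromptGap("popular comparison", monthly_volume=50_000, difficulty=0.6),
    PromptGap("mid-volume how-to", monthly_volume=4_000, difficulty=0.2),
]

ranked = rank_gaps(gaps)
for gap in ranked:
    print(gap.prompt)
```

Even with a heavy difficulty discount, the high-volume prompt stays at the top of the list, while the prompt asked twice a month falls to the bottom regardless of how easy it is to win.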

Which platform is right for your team?

The honest answer depends on what you're missing.

If you have a strong content team and just need the intelligence layer -- which prompts to target, which competitors are winning, what topics to brief -- Profound or AthenaHQ will serve you well. The monitoring is deep, the data is reliable, and your writers can take it from there.

If you're an individual or small team with a limited budget and you just want to start tracking AI visibility without committing to a full platform, Otterly.AI or Peec AI are reasonable starting points. You won't get content tools, but you'll at least know where you stand.

If you want an end-to-end workflow -- gap analysis, content generation grounded in citation data, and tracking that connects new content to visibility improvements -- Promptwatch is the most complete option available. The action loop (find gaps, generate content, track results) is built into the platform rather than bolted on, and the citation data underneath the writing agent is what separates it from using a generic AI writer alongside a monitoring dashboard.

For enterprise teams with complex requirements, Conductor and Profound both have strong cases. Conductor's persona customization and enterprise integrations are genuinely useful at scale. Profound's compliance posture (SOC 2 Type II) matters for certain industries.

A note on content generation vs. content optimization

These are related but different things. Content generation means producing new articles and pages from scratch. Content optimization means improving existing pages to increase their chances of being cited.

Most platforms that touch content at all focus on optimization -- suggesting changes to existing pages based on what AI models seem to prefer. That's valuable, but it doesn't address the gap problem: if you don't have a page on a topic at all, optimizing existing content won't help.

The strongest platforms handle both. Promptwatch's Answer Gap Analysis identifies missing topics (generation opportunity) while page-level citation tracking shows which existing pages are underperforming (optimization opportunity). That combination is more useful than either capability alone.
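The generation vs. optimization split can be expressed as a simple classification. The sketch below is a hypothetical illustration, not a description of how any platform works: the function `classify_topics`, the `min_citations` threshold, and the sample URLs and counts are assumptions.

```python
from typing import Dict, List, Tuple


def classify_topics(
    target_topics: List[str],
    pages_by_topic: Dict[str, str],
    citations_by_page: Dict[str, int],
    min_citations: int = 1,
) -> Tuple[List[str], List[str]]:
    """Split topics into generation and optimization opportunities.

    Generation: no page exists for the topic.
    Optimization: a page exists but has fewer than `min_citations` citations.
    Topics whose pages already meet the threshold are left out of both lists.
    """
    generation: List[str] = []
    optimization: List[str] = []
    for topic in target_topics:
        page = pages_by_topic.get(topic)
        if page is None:
            generation.append(topic)
        elif citations_by_page.get(page, 0) < min_citations:
            optimization.append(topic)
    return generation, optimization


# Hypothetical sample data.
topics = ["pricing comparison", "setup guide", "integration list"]
pages = {"setup guide": "/docs/setup", "integration list": "/integrations"}
citations = {"/docs/setup": 14, "/integrations": 0}

to_generate, to_optimize = classify_topics(topics, pages, citations)
print(to_generate)  # ['pricing comparison']
print(to_optimize)  # ['integration list']
```

The topic with no page at all can only be addressed by writing something new, which is why optimization-only tooling leaves that kind of gap open.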

Bottom line

The AI visibility space has matured enough in 2026 that the monitoring problem is largely solved. Most platforms can tell you whether your brand appears in AI responses. The differentiation is now entirely about what happens next.

Platforms that stop at monitoring are useful inputs. Platforms that close the full loop -- from gap identification through content creation to results tracking -- are where the actual competitive advantage lives. That's a short list right now, and Promptwatch sits at the top of it.

If you're evaluating platforms, the question to ask every vendor is simple: "After you show me the gaps, what does your platform do to help me fill them?" The answer will tell you everything you need to know about where they fit in the stack.