Key takeaways

- A GEO content calendar is different from a traditional SEO calendar -- it's built around the prompts and questions AI models answer, not just the keywords you want to rank for on Google.

- The monthly cycle has four phases: discover gaps, plan content, publish and optimize, then track what gets cited.

- Prompt intelligence (volume, difficulty, query fan-outs) should drive your topic selection, not gut feel.

- AI visibility tools can show you exactly which competitors are being cited for prompts you're not -- that's your content backlog.

- Consistency matters more than volume. One well-structured, AI-optimized article per week beats a burst of thin content.

Why your existing content calendar isn't built for AI search

Most content calendars were designed around a simple idea: find keywords, write posts, rank on Google. That workflow made sense for a decade. It doesn't fully hold up in 2026.

When someone asks ChatGPT "what's the best project management tool for remote teams?" or asks Perplexity "which CRM is easiest to set up?", those AI models aren't crawling your sitemap. They're drawing on content they've already indexed, citations they trust, and sources that have answered similar questions clearly and completely. If your site hasn't published content that directly addresses those prompts, you simply won't appear -- no matter how strong your traditional SEO is.

That's the gap Generative Engine Optimization (GEO) fills. And a GEO content calendar is how you close it systematically, month after month, instead of hoping you stumble into AI citations.

The good news: the calendar structure isn't radically different from what you already know. It's the inputs that change.

Phase 1: Discover your gaps before you plan anything

The biggest mistake teams make with GEO content planning is starting with topics they think are important. The right starting point is data: which prompts are AI models answering right now, who's being cited, and where are you absent?

This is what's called Answer Gap Analysis. You're looking for the specific questions and prompts where competitors show up in AI responses but you don't. Those gaps are your content backlog -- a prioritized list of articles, comparisons, and listicles that AI models want but can't find on your site.

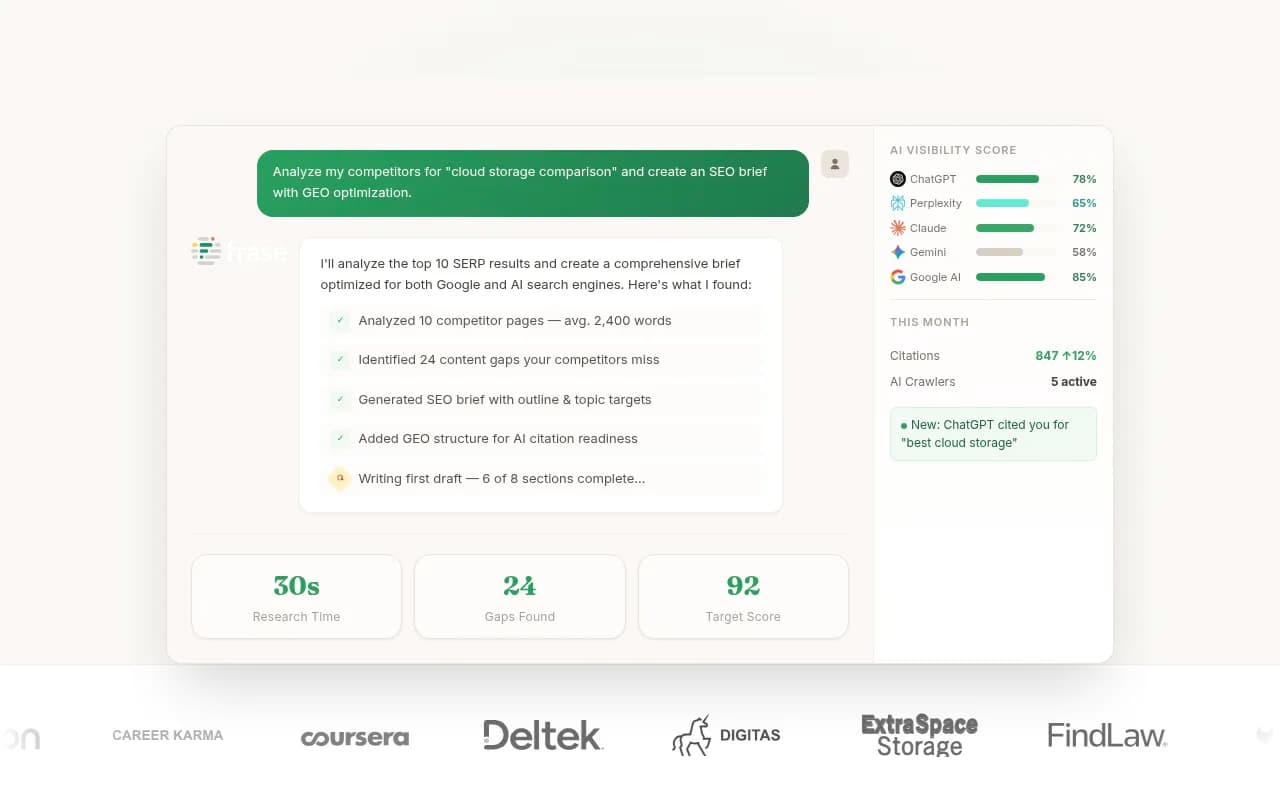

Promptwatch does this automatically. It monitors prompts across ChatGPT, Perplexity, Claude, Gemini, and seven other AI models, then surfaces the exact queries where your competitors are visible and you're not.

For each gap, you also want to know:

- How often is this prompt being asked? (prompt volume)

- How hard is it to get cited for? (difficulty score)

- What sub-questions does it branch into? (query fan-outs)

These three data points let you prioritize ruthlessly. A high-volume, low-difficulty prompt with clear sub-questions is a content opportunity you should publish this month. A low-volume, high-difficulty prompt can wait.
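As a rough illustration of that prioritization, here is a minimal Python sketch. The `PromptGap` fields, the 0-100 difficulty scale, and the weighting in `priority_score` are all assumptions made for this example, not a formula from any GEO platform. Tune them to whatever data your tool exports.

```python
from dataclasses import dataclass


@dataclass
class PromptGap:
    prompt: str
    monthly_volume: int  # estimated times the prompt is asked per month
    difficulty: int      # 0 (easy) to 100 (hard); hypothetical scale
    fan_outs: int        # number of known sub-questions


def priority_score(gap: PromptGap) -> float:
    """Higher is better: reward volume and fan-outs, penalize difficulty."""
    ease = (100 - gap.difficulty) / 100
    # Illustrative weighting: each sub-question adds 10% to the score.
    return gap.monthly_volume * ease * (1 + 0.1 * gap.fan_outs)


def prioritize(gaps: list[PromptGap]) -> list[PromptGap]:
    """Sort the backlog so the best opportunities come first."""
    return sorted(gaps, key=priority_score, reverse=True)


# Made-up numbers, for illustration only.
backlog = [
    PromptGap("best CRM for startups", 1200, 30, 5),
    PromptGap("enterprise CRM migration checklist", 150, 80, 2),
    PromptGap("which CRM is easiest to set up", 900, 20, 3),
]
ranked = prioritize(backlog)
for gap in ranked:
    print(f"{priority_score(gap):8.1f}  {gap.prompt}")
```

The exact weights matter less than applying the same rule to every gap, so the ranking reflects data rather than whoever argues loudest in the planning meeting.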

If you're not yet using a dedicated GEO platform, you can do a manual version of this: run your target prompts in ChatGPT, Perplexity, and Claude, note which brands and pages get cited, and build a spreadsheet of what's missing. It's slow, but it works as a starting point.
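If you keep those manual notes in a structured form, a few lines of Python can pull out the gaps for you. This is only a sketch: the brand names (`OurCRM`, `AcmeCRM`, `PipeFlow`) are hypothetical, and the `observations` layout is an assumed way of recording what you saw in each model.

```python
def find_gaps(observations: dict, own_brand: str) -> dict:
    """Return prompts where a competitor is cited somewhere but own_brand never is."""
    gaps = {}
    for prompt, cited_by_model in observations.items():
        cited = set()
        for brands in cited_by_model.values():
            cited.update(brands)
        if cited and own_brand not in cited:
            gaps[prompt] = sorted(cited)
    return gaps


# Hand-recorded results: prompt -> model -> brands cited in the answer.
observations = {
    "best CRM for startups": {
        "ChatGPT": ["AcmeCRM", "PipeFlow"],
        "Perplexity": ["PipeFlow"],
        "Claude": [],
    },
    "which CRM is easiest to set up": {
        "ChatGPT": ["OurCRM", "AcmeCRM"],
        "Perplexity": ["AcmeCRM"],
        "Claude": ["OurCRM"],
    },
}
gaps = find_gaps(observations, own_brand="OurCRM")
print(gaps)
```

In this sample data only the first prompt is a gap, because the second one already cites the hypothetical `OurCRM` in at least one model.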

Phase 2: Build your monthly content plan around prompt clusters

Once you have your gap list, the next step is organizing it into a monthly plan. The structure that works best for GEO is prompt clusters -- groups of related questions that a single piece of content (or a small set of linked pieces) can answer together.

Here's a practical example. Say you're marketing a B2B SaaS tool. Your gap analysis surfaces these prompts:

- "What is the best [category] tool for small teams?"

- "How does [your tool] compare to [competitor]?"

- "[Your tool] vs [competitor] -- which is better for [use case]?"

- "Is [your tool] worth it?"

These aren't four separate articles. They're one cluster. A well-structured comparison page that answers all of them -- with clear headings, specific feature comparisons, and honest pros/cons -- is far more likely to get cited across multiple prompts than four thin posts that each answer one question.

A realistic monthly content volume

For most teams, a sustainable GEO content calendar looks like this:

- 4-6 new articles per month (one per week, with occasional doubles)

- 2-3 existing pages refreshed or expanded based on citation data

- 1 "anchor" piece per month: a comprehensive guide or comparison that targets a high-value prompt cluster

That's it. The mistake most teams make is trying to publish more and ending up with content that's too thin to get cited. AI models cite sources that answer questions completely. A 2,000-word article that fully addresses a prompt beats five 400-word posts that each partially address it.

Content types that get cited most

Not all content formats perform equally in AI search. Based on citation patterns, these formats consistently earn references from AI models:

- Comparison articles ("X vs Y" or "Best X for Y")

- Listicles with specific, factual entries (not vague "top 10" fluff)

- How-to guides with step-by-step structure

- Definition and explainer pages that answer "what is X" clearly

- Original data or research that AI models can reference

Opinion pieces, brand storytelling, and social-style content rarely get cited. That doesn't mean you shouldn't write them -- they serve other purposes -- but they shouldn't dominate your GEO calendar.

Phase 3: Write content that AI models actually cite

There's a meaningful difference between content written for human readers and content engineered to be cited by AI. The best GEO content does both, but the structure has to be deliberate.

Here's what makes content citation-worthy:

Answer the prompt directly in the first 100 words. AI models often pull the opening of a page when constructing a response. If your article buries the answer three paragraphs in, it's less likely to be cited. Lead with the answer, then expand.

Use clear, descriptive headings. AI models parse headings to understand what a page covers. A heading like "How to set up [tool] in 5 steps" is far more useful than "Getting started." Think of your headings as a table of contents for an AI model, not just a reader.

Include specific, verifiable facts. Vague claims ("this tool is great for teams") don't get cited. Specific claims ("this tool supports up to 50 users on the free plan") do. AI models prefer content they can quote accurately.

Cover the full question, including sub-questions. If your target prompt is "best CRM for startups," your article should also address related questions: what features matter for startups, how pricing scales, which integrations are essential. Query fan-outs mean that one prompt branches into many -- your content should cover the branches, not just the trunk.

Link internally to related content. AI crawlers follow links. A well-linked content cluster signals that your site has depth on a topic, which increases the chance of multiple pages being cited.
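Some of these checks can be roughly automated before a draft goes live. The sketch below assumes the draft is a Markdown string with `#` headings, and it uses simple keyword matching as a stand-in for "answers the prompt in the first 100 words." The stopword list, the generic-heading list, and the `audit_draft` function are all hypothetical helpers for this example. Treat the output as a prompt for a human edit, not a verdict.

```python
import re

STOPWORDS = {"a", "an", "the", "for", "is", "to", "of", "in", "what", "which", "how"}
GENERIC_HEADINGS = {"getting started", "overview", "introduction", "conclusion"}


def key_terms(prompt: str) -> set[str]:
    """Lowercased words from the prompt, minus common stopwords."""
    words = re.findall(r"[a-z0-9]+", prompt.lower())
    return {w for w in words if w not in STOPWORDS}


def audit_draft(markdown: str, prompt: str, window: int = 100) -> dict:
    """Flag prompt terms missing from the opening and headings that say little."""
    lines = markdown.splitlines()
    headings = [line.lstrip("#").strip() for line in lines if line.startswith("#")]
    body = " ".join(line for line in lines if not line.startswith("#"))
    opening_text = " ".join(body.split()[:window]).lower()
    opening_words = set(re.findall(r"[a-z0-9]+", opening_text))
    return {
        "missing_from_opening": sorted(key_terms(prompt) - opening_words),
        "generic_headings": [h for h in headings if h.lower() in GENERIC_HEADINGS],
    }


draft = """# Best CRM for startups
The best CRM for startups is one that is free to start and quick to set up.

## Getting started
Create an account and import your contacts.

## How pricing scales as your team grows
Most plans charge per user.
"""
report = audit_draft(draft, "best CRM for startups")
print(report)
```

Here the opening covers every key term, but the "Getting started" heading is flagged as too generic to tell a model what the section covers.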

Tools like Clearscope and MarketMuse can help you identify the semantic coverage your content needs. For GEO-specific content generation grounded in actual citation data, Promptwatch's built-in AI writing agent generates articles informed by an analysis of 880M+ citations -- so the structure and angle reflect what AI models have actually cited before.

Phase 4: Track what gets cited and close the loop

Publishing is not the end of the process. It's the beginning of the feedback loop.

After publishing, you need to know:

- Which of your new pages are being cited by AI models?

- Which prompts are they showing up for?

- Is your citation rate improving week over week?

- Which AI models cite you most -- and which ignore you?

This is where most content teams fall short. They publish, move on to the next piece, and never find out whether the content actually worked. That's a problem because GEO is iterative. A page that isn't getting cited might just need a structural tweak -- a clearer opening, a missing sub-question answered, a heading rewritten. But you can't make that call without data.

Page-level citation tracking shows you exactly which pages are being referenced, how often, and by which AI models. Combine that with traffic attribution (via a code snippet, Google Search Console integration, or server log analysis) and you can connect AI visibility directly to actual visits and revenue.
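If you are logging citation checks yourself, a week-over-week citation rate is easy to compute. In this sketch, "citation rate" is assumed to mean the share of prompt checks in which your page was cited. The `(day, model, cited)` tuple format and the sample data are made up for illustration.

```python
from collections import defaultdict
from datetime import date


def weekly_citation_rate(checks) -> dict:
    """checks: iterable of (day, model, cited) -> {(iso_year, iso_week): rate}."""
    totals = defaultdict(lambda: [0, 0])  # week -> [cited count, total checks]
    for day, _model, cited in checks:
        iso = day.isocalendar()
        week = (iso[0], iso[1])
        totals[week][0] += int(cited)
        totals[week][1] += 1
    return {week: c / t for week, (c, t) in sorted(totals.items())}


# One check per model per week for a single prompt (illustrative data).
checks = [
    (date(2026, 1, 5), "ChatGPT", False),
    (date(2026, 1, 6), "Perplexity", True),
    (date(2026, 1, 7), "Claude", False),
    (date(2026, 1, 8), "Gemini", False),
    (date(2026, 1, 12), "ChatGPT", True),
    (date(2026, 1, 13), "Perplexity", True),
    (date(2026, 1, 14), "Claude", False),
    (date(2026, 1, 15), "Gemini", False),
]
rates = weekly_citation_rate(checks)
for (year, week), rate in rates.items():
    print(f"{year}-W{week:02d}: {rate:.0%}")
```

Grouping the same records by model instead of by week would answer the "which AI models cite you most" question from the list above.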

The monthly review should answer three questions:

- Which pages published last month are getting cited?

- Which prompts improved in visibility?

- What new gaps appeared that should go into next month's plan?

That third question is what keeps the calendar alive. AI search is not static. New prompts emerge, competitor content shifts, and AI models update their citation preferences. A monthly gap refresh keeps your backlog current.

The monthly GEO calendar: a practical template

Here's how to structure each month. This assumes a team of 2-3 people (content writer, SEO/GEO lead, and optionally a designer or developer).

| Week | Activities |
|---|---|
| Week 1 | Run gap analysis, refresh prompt backlog, prioritize top 4-6 topics for the month |
| Week 2 | Publish 1-2 new articles targeting high-priority prompt clusters |
| Week 3 | Publish 1-2 more articles; refresh 1-2 existing pages based on citation data |
| Week 4 | Publish final article(s); review citation data from the month; update backlog |
| End of month | 90-minute planning session: review what worked, set priorities for next month |

The 90-minute end-of-month review is non-negotiable. Block it in your calendar now. It's where you close the loop -- comparing what you published against what got cited, and feeding that back into next month's priorities.
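To turn a prioritized topic list into that weekly template, a small helper is enough. This is a sketch under assumptions: the `plan_month` function, the round-robin assignment across weeks 2 to 4, and the sample topics are illustrative choices, not a prescribed method.

```python
def plan_month(topics: list[str], refreshes: list[str]) -> dict[str, list[str]]:
    """Spread new articles across weeks 2-4 and slot refreshes into week 3."""
    plan = {
        "Week 1": ["Run gap analysis and prioritize topics"],
        "Week 2": [],
        "Week 3": [],
        "Week 4": [],
    }
    publish_weeks = ["Week 2", "Week 3", "Week 4"]
    for i, topic in enumerate(topics):
        # Round-robin keeps each week at 1-2 articles for a 4-6 topic month.
        plan[publish_weeks[i % len(publish_weeks)]].append(f"Publish: {topic}")
    plan["Week 3"] += [f"Refresh: {page}" for page in refreshes]
    plan["Week 4"].append("Review citation data and update backlog")
    return plan


topics = [
    "Best CRM for startups",
    "OurCRM vs AcmeCRM",
    "How to migrate CRM data",
    "What is lead scoring",
    "CRM pricing compared",
]
plan = plan_month(topics, refreshes=["/pricing"])
for week, tasks in plan.items():
    print(week, "->", "; ".join(tasks))
```

Because topics are passed in priority order, the highest-priority pieces land in the earliest publishing weeks.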

Tools to support each phase

| Phase | What you need | Tools worth considering |
|---|---|---|
| Gap discovery | Prompt monitoring, competitor citation analysis | Promptwatch, AthenaHQ, Profound |
| Topic prioritization | Prompt volume, difficulty scores | Promptwatch, Rankscale |
| Content creation | AI writing with citation grounding | Promptwatch, Jasper, Frase |
| Content optimization | Semantic coverage, heading structure | Clearscope, MarketMuse, Surfer SEO |
| Citation tracking | Page-level AI citation monitoring | Promptwatch, Otterly.AI, Peec AI |
| Traffic attribution | Connecting AI visits to revenue | Promptwatch (GSC integration, code snippet) |

Common mistakes that kill GEO content calendars

Publishing without a prompt target. Every piece of content in your GEO calendar should map to one or more specific prompts. If you can't name the prompt an article is meant to be cited for, it probably shouldn't be on the calendar.

Chasing volume over depth. Ten thin articles will not outperform two comprehensive ones in AI citations. AI models prefer sources that answer questions completely. Depth wins.

Ignoring existing content. Your gap analysis will often reveal that you already have pages that partially address a prompt -- they just need to be expanded or restructured. Refreshing existing content is faster than writing new content and often more effective.

Not tracking AI crawler activity. AI crawlers have to read your pages before a model can cite them. If there are crawl errors, blocked pages, or slow load times, those crawlers may not be reading your content at all. Monitoring AI crawler logs (which bots visited, which pages they read, what errors they hit) is a step most teams skip entirely -- and it's often where the real problem lies.
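If you have access to raw server logs, a short script gives you a first look at AI crawler activity. The sketch below assumes logs in the common "combined" access log format. It matches on the user-agent substrings `GPTBot`, `ClaudeBot`, and `PerplexityBot`, which you should verify against each vendor's current crawler documentation. The sample log lines are simplified and invented for illustration.

```python
import re
from collections import Counter

# Assumed user-agent substrings; confirm against each vendor's documentation.
AI_BOTS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Combined format: ip - - [time] "METHOD path proto" status size "referer" "agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_crawls(lines):
    """Count hits per AI bot and collect requests that returned an error status."""
    hits = Counter()
    errors = []
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        bot = next((b for b in AI_BOTS if b in match["agent"]), None)
        if bot is None:
            continue  # regular browser or an unrelated bot
        hits[bot] += 1
        status = int(match["status"])
        if status >= 400:
            errors.append((bot, match["path"], status))
    return hits, errors


sample_log = [
    '203.0.113.5 - - [12/Jan/2026:10:00:00 +0000] "GET /blog/best-crm HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [12/Jan/2026:10:05:00 +0000] "GET /pricing HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [12/Jan/2026:10:06:00 +0000] "GET /blog/best-crm HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]
hits, errors = summarize_ai_crawls(sample_log)
print(dict(hits))
print(errors)
```

An error list like the one this produces, where a crawler hit a 404 on a page you expected to be cited, is the kind of problem that never shows up in a content review.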

Treating GEO as a one-time project. The teams winning in AI search in 2026 are the ones running a continuous cycle: find gaps, publish, track, repeat. It's not a campaign. It's an ongoing operation.

Starting your first GEO calendar

If you're starting from scratch, here's the simplest possible version:

- Pick 10-15 prompts that matter to your business. These are the questions your customers ask AI models when they're looking for a product or service like yours.

- Run those prompts in ChatGPT, Perplexity, and one other model. Note who gets cited.

- Identify the 3-5 prompts where competitors appear and you don't. Those are your first articles.

- Write one article per week for the next month, each targeting one of those prompts.

- After 30 days, check whether any of your new pages are appearing in AI responses.

That's your first month. It's not perfect, but it's a real start -- and it's far better than publishing content with no connection to what AI models are actually answering.

As you scale, the process gets more systematic. Prompt monitoring becomes automated, gap analysis runs continuously, and your citation data informs every planning decision. But the core logic stays the same: know what AI models are being asked, know where you're absent, publish content that fills the gap, track whether it worked.

That cycle -- repeated every month -- is what builds durable AI search visibility.