Key takeaways

- 65% of searches now end without a click, so getting cited in AI answers matters more than ranking #1 on a results page

- AI models cite content that answers questions directly and completely -- not content optimized around keyword density

- A lean team can compete by focusing on answer gap analysis first, then creating targeted content that fills those gaps

- Technical foundations (crawlability, structured data, clean architecture) are table stakes -- AI models can't cite pages they can't read

- Tracking AI visibility separately from Google rankings is now essential, not optional

The honest reality of AI SEO in 2026 is that most of the advice floating around assumes you have a team: a content manager, a few writers, an SEO strategist, maybe someone handling technical audits. If you're a team of one or two, or a small agency managing a dozen clients, that advice doesn't land.

But here's what's actually true: a lean team can outperform a bloated one in AI search. AI models don't reward volume; they reward relevance, authority, and directness. A single well-structured article that answers a specific question clearly tends to get cited more often than ten keyword-stuffed blog posts that dance around the answer.

This guide is about building a system that works without a big team -- the right priorities, the right tools, and a repeatable loop you can actually maintain.

Why AI search changes the game for lean teams

Traditional SEO rewarded scale. More content, more backlinks, more pages. The teams with the biggest budgets could outpublish everyone else and dominate rankings through sheer volume.

AI search breaks that dynamic. When someone asks ChatGPT or Perplexity a question, the model doesn't return a list of ten blue links. It synthesizes an answer and cites a small number of sources. Being one of those sources requires being genuinely useful, not just present.

That's a leveling force. A small brand with one excellent, well-structured page on a specific topic can get cited ahead of a large brand with fifty mediocre pages on the same topic.

The shift is real. According to data from the AI SEO community, 65% of searches now end without a click: users get their answer from the AI response itself. That means if you're not among the cited sources, you're invisible. Ranking #4 on Google used to be fine. In an AI answer, there often is no fourth source at all.

For lean teams, this is actually good news. You don't need to produce more. You need to produce better, and you need to produce it in the right places.

Step 1: Fix the technical foundation first

Before any content work, AI models need to be able to read your site. This sounds obvious, but it's where a surprising number of brands fall short -- and it's a problem that content volume can't fix.

The basics matter:

- Clean site architecture with logical internal linking

- Fast load times (slow or timing-out pages may not be fully fetched by AI crawlers)

- Proper use of structured data (FAQ schema, HowTo schema, Article schema)

- An updated sitemap and a robots.txt that doesn't accidentally block AI crawlers

- HTTPS throughout, no broken redirect chains
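Of the items on that list, structured data is the most code-like. FAQ schema is normally embedded as a JSON-LD script tag. Below is a minimal Python sketch that builds one from question/answer pairs. The `faq_jsonld` and `as_script_tag` helpers are hypothetical names used for illustration. The `FAQPage`, `Question`, and `Answer` types come from the schema.org vocabulary. Validate the output with a structured-data testing tool before relying on it.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def as_script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in a script tag.

    Escaping "</" stops text such as "</script>" inside an answer from closing
    the tag early. A backslash before "/" is a valid JSON escape, so parsers
    still read the original text.
    """
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{safe}\n</script>'


if __name__ == "__main__":
    pairs = [
        ("How long does it take to see results from AI SEO?",
         "Placeholder answer: replace with your own."),
    ]
    print(as_script_tag(faq_jsonld(pairs)))
```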

One thing that's easy to overlook: AI crawlers are not the same as Googlebot. OpenAI's GPTBot, Anthropic's ClaudeBot, and Perplexity's PerplexityBot all behave differently and visit at different frequencies. If you've blocked any of them in your robots.txt (which some site owners did accidentally when trying to block AI training), those models may be unable to read your pages, and therefore unable to cite them.
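Python's standard-library `urllib.robotparser` can catch this mistake in a robots.txt file. The sketch below assumes the user-agent tokens `GPTBot`, `ClaudeBot`, and `PerplexityBot`. Vendors add and rename crawlers over time, so confirm the current tokens in each vendor's documentation. The standard-library parser only does simple path-prefix matching and does not support every wildcard extension, so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens assumed for the OpenAI, Anthropic, and Perplexity crawlers.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def blocked_ai_crawlers(robots_txt: str, url: str) -> list[str]:
    """Return the AI crawler tokens that robots_txt disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse text directly, no network fetch
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]


if __name__ == "__main__":
    robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""
    print(blocked_ai_crawlers(robots, "https://example.com/blog/ai-seo"))
```

In that sample file, the blanket `Disallow: /` under `GPTBot` blocks only that crawler. The other two fall through to the `*` group, which blocks only `/admin/`.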

Tools like Screaming Frog are useful for auditing crawlability issues quickly.

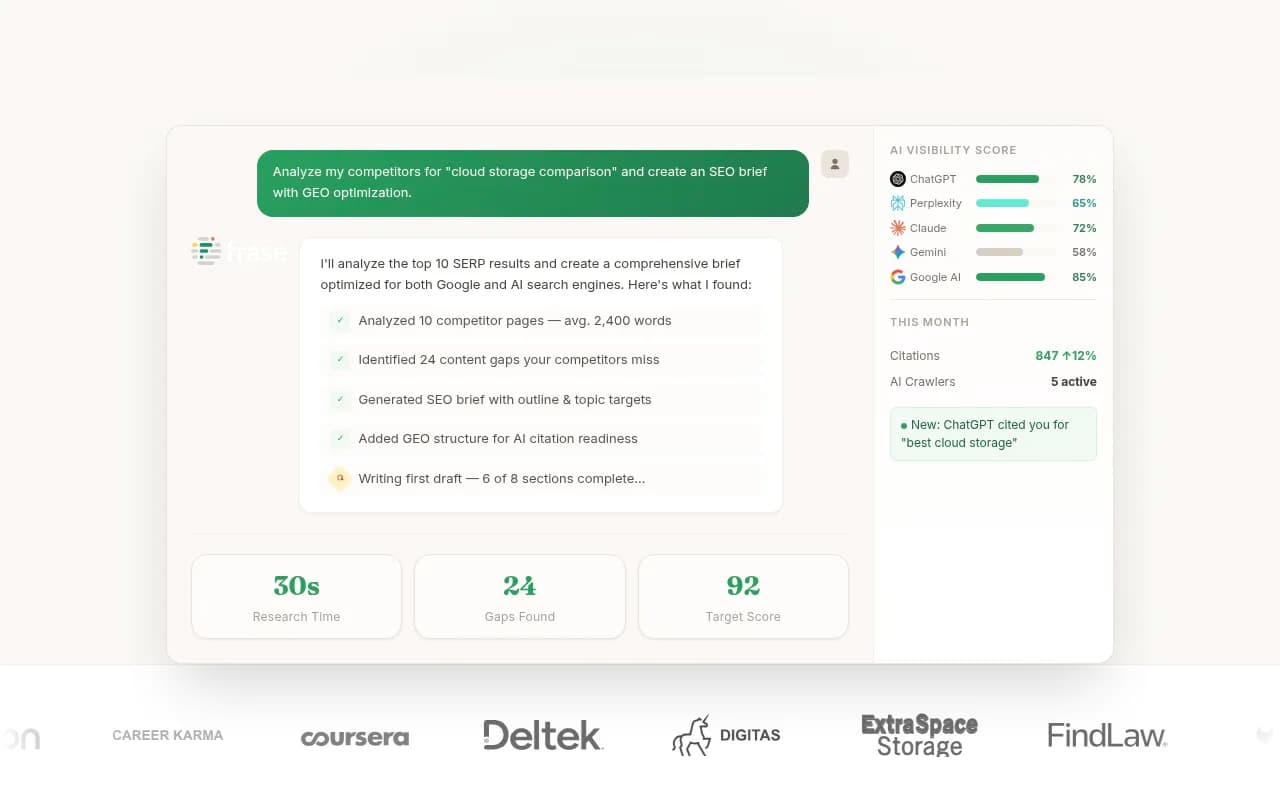

If you want to go deeper and actually see which AI crawlers are hitting your site, which pages they're reading, and what errors they're encountering, that's where a dedicated AI visibility platform becomes worth the investment. Promptwatch has crawler log analysis built in -- you can see GPTBot, ClaudeBot, and Perplexity's crawler activity in real time, which pages they're skipping, and where they're hitting errors.
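If you'd rather start with your own server logs, a rough version of that analysis takes only a few lines of Python. This sketch assumes access logs in the common Apache/Nginx "combined" format and identifies crawlers by user-agent substring. The function name and sample lines are hypothetical. User-agent strings can be spoofed, so for anything important, cross-check against crawler IP ranges where vendors publish them.

```python
import re
from collections import Counter, defaultdict

# "Combined" log format: IP, identity, user, [time], "request", status,
# bytes, "referer", "user-agent".
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def summarize_ai_crawls(lines):
    """Count hits per AI crawler and record which paths returned errors."""
    hits = Counter()
    errors = defaultdict(Counter)  # crawler -> Counter of paths with status >= 400
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that are not in combined format
        bot = next((b for b in AI_CRAWLERS if b in match["agent"]), None)
        if bot is None:
            continue  # not one of the AI crawlers we track
        hits[bot] += 1
        if int(match["status"]) >= 400:
            errors[bot][match["path"]] += 1
    return hits, errors


if __name__ == "__main__":
    sample = [
        '203.0.113.7 - - [10/Mar/2026:12:00:00 +0000] "GET /pricing HTTP/1.1" '
        '404 153 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    ]
    print(summarize_ai_crawls(sample))
```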

For most lean teams, the technical audit is a one-time investment of a few hours that unlocks everything else. Do it once, fix the issues, then move on.

Step 2: Find the gaps before you write anything

The biggest mistake lean teams make is writing content based on intuition or keyword tools built for traditional search. You end up producing articles that rank fine on Google but never get cited by AI models -- because the AI models are looking for answers to different questions.

Answer gap analysis is the practice of identifying which prompts (questions people ask AI models) your competitors are appearing in but you're not. It's the most efficient way to prioritize content when you have limited capacity.

Here's what this looks like in practice:

- Identify 20-30 prompts relevant to your product or category

- Run those prompts across ChatGPT, Perplexity, and Google AI Overviews

- Note which competitors appear in the answers and which questions you're completely absent from

- Prioritize the gaps where you have genuine expertise and the question is specific enough that one good article could own it
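Once you've recorded which brands each engine cites for each prompt, finding the gaps is a set operation. This minimal sketch assumes you've logged results by hand into `PromptResult` records. That structure and the brand names are hypothetical. The code flags prompts where at least one competitor is cited and you never are. It then orders them by how many competitors occupy each one. Judging expertise and specificity in the last step still takes human judgment.

```python
from dataclasses import dataclass, field


@dataclass
class PromptResult:
    prompt: str
    engine: str  # e.g. "ChatGPT", "Perplexity", "Google AI Overviews"
    brands_cited: set[str] = field(default_factory=set)


def find_gaps(results: list[PromptResult], you: str,
              competitors: set[str]) -> dict[str, set[str]]:
    """Map each gap prompt to the competitors cited for it.

    A gap is a prompt where some competitor appears in at least one engine's
    answer and your brand appears in none of them.
    """
    cited_you: dict[str, bool] = {}
    rivals: dict[str, set[str]] = {}
    for r in results:
        cited_you[r.prompt] = cited_you.get(r.prompt, False) or you in r.brands_cited
        rivals.setdefault(r.prompt, set()).update(r.brands_cited & competitors)
    return {p: rivals[p] for p in rivals if rivals[p] and not cited_you[p]}


def rank_gaps(gaps: dict[str, set[str]]) -> list[str]:
    """Most crowded gaps first; ties broken alphabetically."""
    return sorted(gaps, key=lambda p: (-len(gaps[p]), p))
```

The top three to five entries of `rank_gaps` map naturally onto a month's content targets.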

Doing this manually is tedious but possible. Doing it at scale requires tooling. Promptwatch's Answer Gap Analysis shows you exactly which prompts competitors are visible for that you're not, with prompt volume estimates so you can prioritize the highest-value gaps first.

For lean teams, this is the highest-leverage activity in the entire workflow. Spend an hour on gap analysis and you'll have a content roadmap that's more targeted than anything a large team produces by guessing.

Step 3: Write content that AI models actually cite

This is where the "answer first" principle matters. AI models are synthesizing responses to questions. They cite sources that answer those questions directly, clearly, and completely. They don't cite sources that bury the answer in three paragraphs of preamble.

A few structural principles that consistently correlate with AI citations:

Lead with the answer. The first paragraph should answer the question, not introduce it. If someone asks "what is the best CRM for small businesses," your article should answer that in the first two sentences, then explain why.

Use clear headings that match question phrasing. AI models often pull from sections of pages, not entire articles. A heading like "How long does it take to see results from AI SEO?" is more citable than "Timeline considerations."

Include specific, verifiable facts. AI models are more likely to cite content with concrete data, named examples, and specific claims. Vague generalizations get skipped.

Keep paragraphs short. Dense walls of text are harder for models to parse and extract from. Short paragraphs with clear topic sentences work better.

Cover the topic completely. AI models reward topical depth. A 1,200-word article that covers every angle of a narrow question will typically outperform a 3,000-word article that covers a broad topic shallowly.
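Some of these principles are mechanical enough to check before you publish. This rough sketch assumes drafts are written in Markdown with blank lines between paragraphs. The question-word list and the 80-word paragraph limit are arbitrary placeholders, not thresholds taken from any AI model. "Lead with the answer" and "cover the topic completely" still need a human read.

```python
import re

MAX_PARAGRAPH_WORDS = 80  # hypothetical limit; adjust to your house style
QUESTION_WORDS = re.compile(
    r"^(how|what|why|when|where|which|who|can|does|do|is|are|should)\b",
    re.IGNORECASE,
)


def lint_draft(markdown: str) -> list[str]:
    """Flag non-question headings and overlong paragraphs in a Markdown draft."""
    warnings = []
    for block in (b.strip() for b in markdown.split("\n\n")):
        if not block:
            continue
        lines = block.splitlines()
        if lines[0].startswith("#"):
            heading = lines[0].lstrip("#").strip()
            if not (heading.endswith("?") or QUESTION_WORDS.match(heading)):
                warnings.append(f"Heading is not phrased as a question: {heading!r}")
            lines = lines[1:]  # any text under the heading is checked as a paragraph
        words = " ".join(lines).split()
        if len(words) > MAX_PARAGRAPH_WORDS:
            warnings.append(f"Paragraph has {len(words)} words: {' '.join(words[:6])}...")
    return warnings
```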

For lean teams, the practical implication is: write fewer articles, but make each one genuinely complete. One article per week that fully answers a specific question beats five articles per week that partially answer broad topics.

Step 4: Build a minimal but repeatable content workflow

The challenge for lean teams isn't knowing what to do -- it's doing it consistently without burning out. You need a workflow that's simple enough to actually follow.

Here's a minimal version that works:

Week 1 of each month: Run your gap analysis. Identify the top 3-5 prompts where you're invisible and competitors are visible. These become your content targets for the month.

Weeks 2-3: Write one article per gap. Use AI writing tools to accelerate drafting, but edit heavily for accuracy, specificity, and your brand's actual voice. The research from Lean Marketing is worth taking seriously here -- 62% of consumers are less likely to trust content they believe is AI-generated. The draft is a starting point, not the final product.

Week 4: Publish, then check your AI visibility scores. Did the new content get picked up? Which models cited it? Which didn't? Use that data to inform next month's priorities.
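The Week 4 check comes down to comparing two snapshots. This minimal sketch assumes that each month you record the (prompt, engine) pairs where your brand was cited. The snapshots could come from manual checks or from a tracking tool's export. The helper names are hypothetical.

```python
from collections import defaultdict

Citation = tuple[str, str]  # (prompt, engine)


def citation_diff(before: set[Citation], after: set[Citation]) -> dict[str, list[Citation]]:
    """Split citations into newly won, lost, and kept between two months."""
    return {
        "won": sorted(after - before),
        "lost": sorted(before - after),
        "kept": sorted(before & after),
    }


def wins_by_engine(won: list[Citation]) -> dict[str, list[str]]:
    """Group newly won prompts by the engine that started citing you."""
    grouped: dict[str, list[str]] = defaultdict(list)
    for prompt, engine in won:
        grouped[engine].append(prompt)
    return dict(grouped)
```

Prompts that land in `lost` are worth reviewing before you pick next month's gaps.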

This is a four-week loop that a single person can manage. It's not glamorous, but it compounds. Each month you're filling gaps and tracking results, and over time your AI visibility improves in a measurable way.

For the writing step, tools like Surfer SEO help optimize content structure, while Frase is useful for research and brief generation.

For content brief building specifically, Content Harmony is worth a look if you're briefing out to a freelancer.

Step 5: Track AI visibility separately from Google rankings

This is the step most lean teams skip, and it's the one that makes everything else measurable.

Traditional rank tracking tools show you where you appear in Google's blue links. That's still useful, but it doesn't tell you whether you're being cited in AI answers -- which is increasingly where the traffic decision gets made.

AI visibility tracking is a different measurement. It shows you:

- Which prompts your brand appears in across ChatGPT, Perplexity, Gemini, and other models

- How often you're cited vs. competitors

- Which specific pages are being cited and by which models

- Whether your visibility is improving or declining over time
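The "cited vs. competitors" number is usually expressed as a share: of the answers you sampled, what fraction cite each brand. This sketch assumes each sampled answer has already been reduced to the set of brands it cites. The brand names are placeholders. AI answers vary from run to run, so sampling each prompt more than once gives a steadier number.

```python
from collections import Counter


def citation_share(answers: list[set[str]], brands: list[str]) -> dict[str, float]:
    """Fraction of sampled AI answers that cite each brand (0.0 if no samples)."""
    counts = Counter()
    for cited in answers:
        for brand in brands:
            if brand in cited:
                counts[brand] += 1
    total = len(answers)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}
```

Tracking this share month over month answers the last question on the list: whether visibility is improving or declining.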

Without this data, you're flying blind. You might be publishing good content and getting zero AI citations, and you'd have no idea.

| What you need to track | Traditional SEO tool | AI visibility platform |
|---|---|---|
| Google keyword rankings | Yes | Sometimes |
| AI model citations | No | Yes |
| Which pages AI crawlers visit | No | Yes (Promptwatch) |
| Competitor AI visibility | No | Yes |
| Prompt volume / difficulty | No | Yes (Promptwatch) |
| Traffic from AI referrals | No | Yes (with attribution) |
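For the last row, a rough first cut is to classify the referrer on each visit. This sketch assumes you can export referrer URLs from your analytics. The hostname-to-source mapping is an assumption to verify against your own data, because domains change. Some AI traffic also arrives with no referrer at all, so treat these counts as a floor rather than a total.

```python
from collections import Counter
from typing import Iterable, Optional
from urllib.parse import urlparse

# Assumed referrer hostnames for AI assistants; check these against your data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer: str) -> Optional[str]:
    """Return the AI source for a referrer URL, or None if it isn't one."""
    host = (urlparse(referrer).hostname or "").removeprefix("www.")
    return AI_REFERRERS.get(host)


def ai_referral_counts(referrers: Iterable[str]) -> Counter:
    """Count visits per AI source, ignoring non-AI referrers."""
    return Counter(s for s in map(classify_referrer, referrers) if s)
```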

A few tools worth knowing for this:

Promptwatch covers the full loop -- tracking, gap analysis, content generation, and attribution. For lean teams, having one platform that does all of this is more practical than stitching together four separate tools.

If you want something more focused on monitoring only, Otterly.AI is affordable and straightforward.

Peec AI is worth considering if you need multi-language tracking.

The tools that actually move the needle for lean teams

Here's a practical comparison of the tools most relevant to a lean AI SEO workflow:

| Tool | Best for | Price range | Content generation | AI crawler logs |
|---|---|---|---|---|
| Promptwatch | Full AI SEO loop (track + fix + measure) | $99-$579/mo | Yes | Yes |
| Otterly.AI | Budget monitoring | Lower | No | No |
| Peec AI | Multi-language tracking | Mid | No | No |
| Surfer SEO | Content optimization | Mid | Partial | No |
| Frase | Research + brief generation | Low-mid | Partial | No |
| Screaming Frog | Technical crawl audits | Low | No | No |

For a lean team, the decision usually comes down to this: do you want one platform that handles the full workflow, or are you comfortable stitching together cheaper point solutions?

The stitched approach works, but it creates coordination overhead. You're exporting data from one tool, importing it into another, and manually connecting the dots. For a team of one or two, that overhead adds up.

What to prioritize if you're starting from zero

If you're new to AI SEO and don't know where to begin, here's the honest priority order:

- Fix technical issues that block AI crawlers (one-time, high-impact)

- Run a gap analysis to find where competitors are visible and you're not (monthly)

- Write one complete, answer-first article per week targeting a specific prompt (ongoing)

- Set up AI visibility tracking so you can see what's working (set up once, review monthly)

- Expand to Reddit and YouTube content once your owned content is working (later)

That last point is worth flagging. AI models don't only cite brand websites. They cite Reddit threads, YouTube videos, and third-party publications. Once your own site is in reasonable shape, publishing in those channels -- or getting mentioned there -- extends your AI footprint without requiring more content on your own site.

The mindset shift that matters most

The lean marketer's advantage in AI SEO isn't budget or team size. It's focus.

Large content teams often produce a lot of content that doesn't answer anything specific. It's optimized for keyword density, padded to hit word counts, and structured around what the team thinks people want rather than what AI models are actually being asked.

A lean team that runs gap analysis first, writes to answer specific questions completely, and tracks results monthly will outperform that approach consistently. The feedback loop is tighter, the content is more targeted, and the results are measurable.

The tools exist to make this manageable. The workflow is simple enough to actually follow. What's left is the discipline to do it consistently -- which, honestly, is the same thing that separates good SEO from bad SEO in any era.