Key takeaways

- Most AI visibility platforms only track whether your brand gets mentioned in AI responses -- they miss the deeper signals that explain why you appear (or don't)

- Only 16% of brands are actively tracking AI visibility, meaning there's still a real first-mover advantage for teams who go beyond basic mention counting

- The 8 metrics covered here -- including AI crawler activity, query fan-outs, citation source analysis, and per-model visibility breakdowns -- are what separate actionable intelligence from vanity dashboards

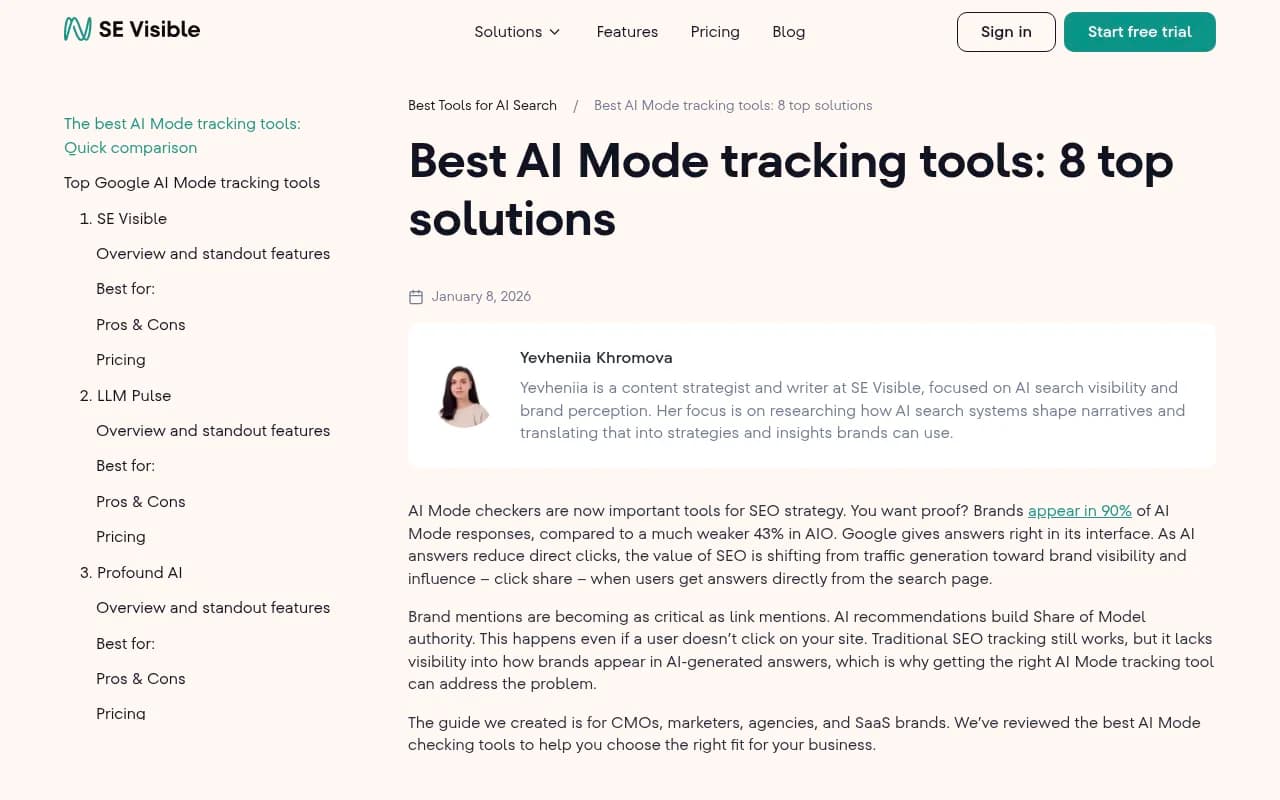

- Brands appear in roughly 90% of Google AI Mode responses (vs. 43% in AI Overviews), so the stakes for getting this right are higher than ever

- Tools like Promptwatch are built around the full tracking loop -- not just monitoring, but diagnosing gaps and generating content to close them

The conversation about AI visibility tracking usually goes like this: someone sets up a tool, watches their brand mention score tick up or down, and calls it "monitoring AI search." That's fine as far as it goes. But mention rate is roughly the equivalent of tracking impressions in paid search and ignoring clicks, conversions, and quality score. You're seeing one number when there are eight things that actually matter.

This guide is about the metrics that most platforms either skip entirely or bury so deep in their UI that nobody looks at them. Some of these gaps are technical. Some are strategic. All of them will affect your ability to actually improve your AI visibility -- not just observe it.

Why standard AI visibility dashboards fall short

The typical AI visibility tool does one thing well: it sends prompts to AI models and checks whether your brand name appears in the response. That's genuinely useful. But it answers only the question "are we visible?" -- not "why aren't we visible for this prompt?" or "which of our pages is actually driving citations?" or "what happens when a user asks a slightly different version of this question?"

Most platforms are monitoring dashboards. They show you a score. They don't tell you what to do about it.

The result is that marketing teams get a weekly report showing their "AI visibility score" went from 34% to 31%, and they have no idea whether that's because a competitor published new content, because an AI model updated its training data, because their own site had a crawl issue, or because the prompts being tracked don't actually reflect how real users ask questions.

The 8 metrics below are what you need to answer those questions.

Metric 1: AI crawler activity on your own site

This one surprises people. Most visibility tools track what AI models say about your brand. Almost none of them track what AI crawlers are actually doing on your website.

AI assistants like ChatGPT, Perplexity, and Claude don't rely on training data alone -- their operators run crawlers that fetch pages from the web to retrieve fresh information. If those crawlers are hitting errors on your site, skipping key pages, or not returning often enough, your content is unlikely to make it into responses, however well written it is.

What you want to know: which pages AI crawlers are visiting, how often, what HTTP errors they're encountering, and whether they're reaching your most important content. A page that gets zero AI crawler visits in 30 days is essentially invisible to AI search, no matter how much you've optimized it.

This is one of the most actionable metrics in the whole stack -- because crawler errors are fixable. But you can only fix them if you can see them.

Promptwatch includes real-time AI crawler logs as part of its Professional and Business plans, showing exactly which crawlers (ChatGPT, Claude, Perplexity, etc.) are hitting which pages, and what they're encountering when they do.
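If you'd rather start from your own server logs, the basic idea can be sketched in a few lines of Python. This is a rough illustration, not a production parser: it assumes access logs in the common "combined" format, and the list of user-agent tokens is an illustrative assumption that will need updating as vendors add or rename their bots. The function names and sample log lines are hypothetical.

```python
import re
from collections import Counter, defaultdict

# User-agent substrings for a few publicly documented AI crawlers.
# Assumption: this list is illustrative and will drift over time.
AI_CRAWLER_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")

# Combined Log Format:
# ip ident user [time] "METHOD path protocol" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_crawls(lines):
    """Count AI crawler hits and 4xx/5xx responses per (crawler, path)."""
    hits = Counter()
    errors = defaultdict(Counter)
    for line in lines:
        match = LOG_LINE.match(line)
        if match is None:
            continue  # not in the expected log format
        crawler = next((t for t in AI_CRAWLER_TOKENS if t in match["agent"]), None)
        if crawler is None:
            continue  # human visitor, or a bot outside our list
        key = (crawler, match["path"])
        hits[key] += 1
        status = int(match["status"])
        if status >= 400:
            errors[key][status] += 1
    return hits, errors


def never_crawled(important_paths, hits):
    """Return the important paths that no listed AI crawler requested."""
    crawled = {path for _crawler, path in hits}
    return [p for p in important_paths if p not in crawled]


# Hypothetical log lines for illustration.
sample_log = [
    '203.0.113.5 - - [10/Mar/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '203.0.113.5 - - [11/Mar/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '198.51.100.7 - - [11/Mar/2026:11:30:00 +0000] "GET /docs/setup HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.44 - - [11/Mar/2026:12:00:00 +0000] "GET /features HTTP/1.1" 200 8044 "https://example.com/" "Mozilla/5.0 (Windows NT 10.0) Chrome/122.0"',
]

hits, errors = summarize_ai_crawls(sample_log)
print(hits.most_common())                              # pages ranked by AI crawler visits
print(dict(errors))                                    # error statuses per (crawler, path)
print(never_crawled(["/pricing", "/features"], hits))  # important pages with no AI crawler visits
```

Matching on user-agent strings alone is easy to spoof, so treat the counts as a first pass rather than verified bot traffic.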

Metric 2: Query fan-outs and sub-query coverage

When a user types "best project management software for remote teams," an AI model doesn't just answer that exact question. It internally branches into sub-queries: What are the top-rated tools? What features matter for remote teams? What do users say about pricing? Which tools integrate with Slack?

This branching is called a query fan-out. Your visibility for the parent prompt might be decent, but if you're invisible for the sub-queries that actually drive the response, you're not really in the conversation.

Most platforms track prompts as atomic units. They tell you whether you appear for "best project management software" but don't show you the sub-query tree that determines how you appear and what the AI says about you.

Understanding fan-outs lets you prioritize content creation more precisely. Instead of targeting a single broad prompt, you can identify the specific sub-topics where you're weak and build content that addresses them directly.
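As a minimal sketch of what sub-query coverage could look like as a number, the snippet below takes the four sub-queries from the project management example and reports what share of them mention your brand. How you obtain the sub-query tree and the per-sub-query results is left open here; the `SubQuery` type, the `brand_cited` flags, and the values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SubQuery:
    text: str
    brand_cited: bool  # hypothetical flag: did the brand appear for this sub-query?


def fanout_coverage(sub_queries):
    """Return (share of sub-queries where the brand appears, uncovered sub-queries)."""
    if not sub_queries:
        return 0.0, []
    missing = [sq.text for sq in sub_queries if not sq.brand_cited]
    covered = len(sub_queries) - len(missing)
    return covered / len(sub_queries), missing


# Made-up results for the parent prompt
# "best project management software for remote teams".
sub_queries = [
    SubQuery("What are the top-rated tools?", True),
    SubQuery("What features matter for remote teams?", True),
    SubQuery("What do users say about pricing?", False),
    SubQuery("Which tools integrate with Slack?", False),
]

coverage, missing = fanout_coverage(sub_queries)
print(f"Sub-query coverage: {coverage:.0%}")
print("Content gaps:", missing)
```

The list of uncovered sub-queries is the useful part: each entry is a candidate topic for a dedicated piece of content.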

Metric 3: Per-model visibility breakdown

ChatGPT, Perplexity, Claude, Gemini, and Google AI Mode don't behave the same way. They cite different sources, weight different types of content, and have different update cycles. A brand that appears prominently in Perplexity responses might barely register in Claude's.

Most basic tools either track one model or aggregate everything into a single "AI visibility score." The aggregate number hides the model-level story, which is where the actionable insight lives.

If you're strong on Perplexity but weak on Google AI Mode, the fix is different from the one you'd need if you were strong on ChatGPT but invisible on Gemini. Google AI Mode tends to favor content that already ranks well in traditional search. Perplexity leans heavily on recent, well-cited web content. Claude has its own source preferences.

You need per-model data to know which model to prioritize and what kind of content to create.
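To see how an aggregate score can hide the model-level story, here is a small sketch with made-up results. Each record is a (model, mentioned) pair for one tracked prompt; the data and function names are hypothetical.

```python
from collections import defaultdict


def visibility_by_model(results):
    """Return the mention rate per model from (model, mentioned) records."""
    totals = defaultdict(int)
    mentions = defaultdict(int)
    for model, mentioned in results:
        totals[model] += 1
        mentions[model] += int(mentioned)
    return {model: mentions[model] / totals[model] for model in totals}


def aggregate_visibility(results):
    """Return the single blended mention rate across all models."""
    return sum(int(mentioned) for _model, mentioned in results) / len(results)


# Made-up results: five prompts checked on each of two models.
results = (
    [("Perplexity", True)] * 4 + [("Perplexity", False)]
    + [("Claude", True)] + [("Claude", False)] * 4
)

print(aggregate_visibility(results))  # one blended number
print(visibility_by_model(results))   # the per-model story behind it
```

With this sample data the blended score is 50%, which looks unremarkable, while the per-model view shows 80% on one model and 20% on the other.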

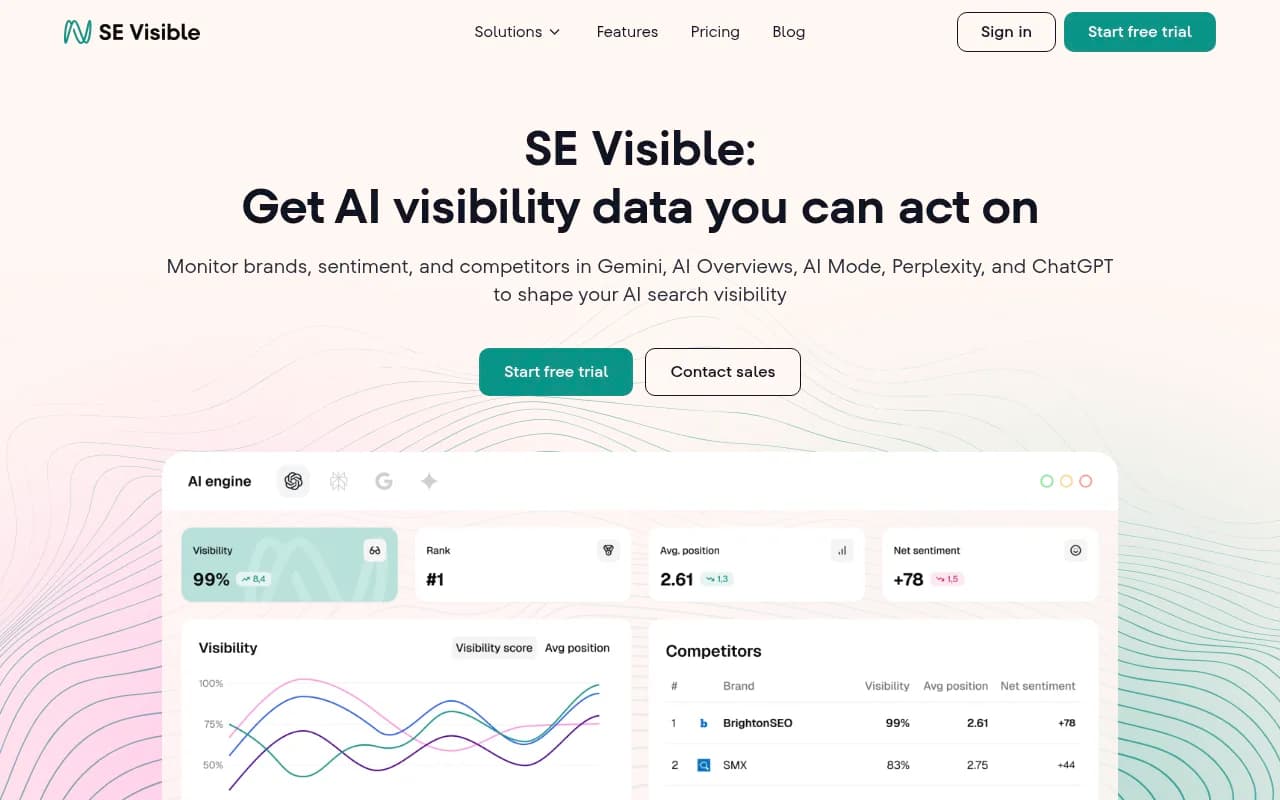

Tools like SE Visible and Promptwatch both offer multi-model tracking. SE Visible covers AI Mode, AI Overviews, Gemini, ChatGPT, and Perplexity.

Metric 4: Citation source analysis

When an AI model mentions your brand, it's usually citing something -- a webpage, a Reddit thread, a YouTube video, a news article. The citation source matters enormously because it tells you why you're appearing and whether that appearance is stable.

If you're being cited primarily through a single Reddit thread from 18 months ago, your visibility is fragile. If you're being cited through multiple owned pages, that's a much stronger signal. If competitors are being cited through sources you haven't touched -- certain review sites, industry publications, YouTube channels -- that's a content gap you can close.

Most platforms show you that you were mentioned. Fewer show you what was cited to generate that mention. Even fewer show you the full citation landscape across a topic, including sources that cite competitors but not you.

This matters because the path to improving AI visibility isn't always "write more content on your own site." Sometimes it's publishing on the platforms AI models actually trust for your category.
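If you can export the URLs cited alongside your brand and your competitors, two quick checks cover most of the above: how concentrated your citations are, and which sources cite competitors but never you. The sketch below assumes such an export exists; the URLs, domains, and function names are all hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_of(url):
    """Return the hostname of a URL without a leading 'www.'."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def citation_profile(cited_urls, owned_domains):
    """Summarize how much of a brand's citations are owned vs. concentrated."""
    if not cited_urls:
        raise ValueError("need at least one cited URL")
    domains = Counter(domain_of(u) for u in cited_urls)
    total = sum(domains.values())
    owned = sum(n for d, n in domains.items() if d in owned_domains)
    top_domain, top_count = domains.most_common(1)[0]
    return {
        "owned_share": owned / total,    # citations pointing at your own pages
        "top_domain": top_domain,        # the single most-cited source
        "top_share": top_count / total,  # high values suggest fragile visibility
    }


def competitor_only_sources(our_urls, competitor_urls):
    """Return domains that are cited for competitors but never for us."""
    ours = {domain_of(u) for u in our_urls}
    theirs = {domain_of(u) for u in competitor_urls}
    return sorted(theirs - ours)


# Made-up citation exports.
our_citations = [
    "https://www.reddit.com/r/projectmanagement/comments/abc123/thread/",
    "https://www.reddit.com/r/projectmanagement/comments/abc123/thread/",
    "https://www.reddit.com/r/projectmanagement/comments/abc123/thread/",
    "https://example.com/features",
]
competitor_citations = [
    "https://reviews.example.org/best-tools",
    "https://trade-news.example.net/roundup",
    "https://www.reddit.com/r/projectmanagement/comments/abc123/thread/",
]

print(citation_profile(our_citations, {"example.com"}))
print(competitor_only_sources(our_citations, competitor_citations))
```

In this sample, three of four citations come from a single Reddit thread, which is the fragile pattern described above, and the two competitor-only domains are the sources worth pursuing.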

Metric 5: Answer gap analysis (prompts you're missing, not just tracking)

Standard AI visibility tools let you set up a list of prompts and track your visibility for those prompts. That's useful. But it has a blind spot: you can only track what you already know to track.

Answer gap analysis flips the question. Instead of "how do I rank for these prompts?" it asks "what prompts are my competitors appearing for that I'm not?" You find out about entire categories of questions where you have zero visibility -- not because you're tracking them and scoring low, but because you never knew they existed.

This is one of the most valuable capabilities in the space, and most monitoring-only tools don't offer it at all. You need a platform that actively surfaces the prompts you're missing, not just scores the ones you've already identified.

Promptwatch's Answer Gap Analysis does exactly this -- it shows you the specific prompts where competitors are visible and you're not, giving you a prioritized list of content opportunities grounded in real prompt data.
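Once you have prompt-level data that extends beyond your own tracked list, the comparison itself is simple. The sketch below is a hypothetical illustration, not a description of how any platform implements it: it assumes a mapping from each prompt to the set of brands that appeared in the response, and the brand names are placeholders.

```python
def answer_gaps(prompt_brands, our_brand, competitors):
    """Return {prompt: competitors present} for prompts where we are absent."""
    gaps = {}
    for prompt, brands in prompt_brands.items():
        rivals_present = sorted(brands & competitors)
        if our_brand not in brands and rivals_present:
            gaps[prompt] = rivals_present
    return gaps


# Made-up data: which brands appeared for each prompt.
prompt_brands = {
    "best CRM software": {"OurBrand", "RivalA"},
    "best CRM for nonprofits under 50 users": {"RivalA", "RivalB"},
    "CRM with built-in invoicing": {"RivalB"},
    "how to migrate CRM data": set(),
}

gaps = answer_gaps(prompt_brands, "OurBrand", {"RivalA", "RivalB"})
for prompt, rivals in gaps.items():
    print(f"{prompt}: {', '.join(rivals)}")
```

The hard part is the input, not the set arithmetic: the prompt list has to come from somewhere other than the prompts you already thought to track.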

Metric 6: Prompt volume and difficulty scoring

Not all prompts are worth tracking. Some are asked by thousands of people every day. Others are niche queries with minimal traffic. Some are highly competitive -- every major brand in your category is already appearing for them. Others are winnable gaps where a single well-optimized piece of content could get you cited consistently.

Most platforms treat prompts as equal. They'll show you your visibility for "best CRM software" and "best CRM for nonprofits under 50 users" with the same weight, even though the strategic value and difficulty of those two prompts are completely different.

What you want is volume estimates (how often is this prompt asked?) combined with difficulty scoring (how hard is it to appear here given current competition?). That combination lets you prioritize -- go after high-volume, winnable prompts first, build momentum, then tackle the harder ones.

This is the difference between a random content calendar and a strategic one.
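One simple way to combine the two numbers is to multiply volume by how winnable the prompt is. The formula below, volume × (1 − difficulty), is an assumption for illustration rather than any platform's actual scoring method, and the volume and difficulty figures are invented.

```python
from dataclasses import dataclass


@dataclass
class Prompt:
    text: str
    monthly_volume: int  # hypothetical estimate of how often the prompt is asked
    difficulty: float    # hypothetical score: 0.0 (wide open) to 1.0 (saturated)


def priority(prompt):
    """Illustrative score: volume discounted by how hard the prompt is to win."""
    return prompt.monthly_volume * (1.0 - prompt.difficulty)


def prioritize(prompts):
    """Return prompts sorted from highest to lowest priority."""
    return sorted(prompts, key=priority, reverse=True)


# Made-up numbers for the two prompts discussed above.
prompts = [
    Prompt("best CRM software", monthly_volume=4000, difficulty=0.875),
    Prompt("best CRM for nonprofits under 50 users", monthly_volume=800, difficulty=0.25),
]

for p in prioritize(prompts):
    print(f"{priority(p):8.1f}  {p.text}")
```

With these numbers the niche prompt outranks the broad one despite a fraction of the volume, because it is far easier to win.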

Metric 7: Reddit and YouTube citation tracking

This one gets overlooked almost universally. AI models don't just cite brand websites and news articles -- they heavily cite Reddit discussions, YouTube videos, and forum content. For many categories, Reddit threads are among the most-cited sources in AI responses.

If you're not tracking which Reddit threads and YouTube videos are influencing AI recommendations in your category, you're missing a major lever. You might be publishing great content on your own site while a competitor's product is being recommended in AI responses because a Reddit thread from two years ago keeps getting cited.

The fix isn't to spam Reddit (that backfires fast). It's to understand which communities and discussions are shaping AI recommendations, participate authentically, and ensure your brand is part of those conversations.

Most platforms ignore this channel entirely. It's a significant blind spot.

Promptwatch surfaces Reddit and YouTube citations as part of its citation analysis, which is genuinely rare in this space.
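If you have a raw list of cited URLs, a first pass at this can be done by grouping them by Reddit thread and YouTube video. The sketch below assumes the usual URL shapes (`/r/<subreddit>/comments/<id>/...` for Reddit, `watch?v=<id>` or `youtu.be/<id>` for YouTube); the URLs and function names are hypothetical.

```python
from collections import Counter
from urllib.parse import parse_qs, urlparse


def community_source(url):
    """Return ('reddit', thread_key) or ('youtube', video_id), else None."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    parts = [p for p in parsed.path.split("/") if p]
    if host == "reddit.com" or host.endswith(".reddit.com"):
        # Expected shape: /r/<subreddit>/comments/<thread_id>/<slug>/
        if len(parts) >= 4 and parts[0] == "r" and parts[2] == "comments":
            return ("reddit", "/".join(parts[:4]))
        return None
    if host == "youtu.be" and parts:
        return ("youtube", parts[0])
    if host == "youtube.com" or host.endswith(".youtube.com"):
        video_ids = parse_qs(parsed.query).get("v")
        if video_ids:
            return ("youtube", video_ids[0])
    return None


def count_community_citations(urls):
    """Count citations per Reddit thread and YouTube video."""
    sources = (community_source(u) for u in urls)
    return Counter(s for s in sources if s is not None)


# Made-up cited URLs.
cited_urls = [
    "https://www.reddit.com/r/projectmanagement/comments/abc123/best_tools/",
    "https://old.reddit.com/r/projectmanagement/comments/abc123/best_tools/",
    "https://www.youtube.com/watch?v=VIDEO12345A",
    "https://youtu.be/VIDEO12345A",
    "https://example.com/blog/post",
]

for (platform, key), count in count_community_citations(cited_urls).most_common():
    print(platform, key, count)
```

Normalizing to a thread or video key matters because the same discussion is often cited under several URL variants.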

Metric 8: AI traffic attribution (connecting visibility to revenue)

This is the metric that turns AI visibility from a marketing vanity metric into something a CFO cares about. You can have a great visibility score, but if you can't connect that visibility to actual website traffic and revenue, it's hard to justify the investment or make smart budget decisions.

AI traffic attribution means being able to say: "Users who saw our brand mentioned in Perplexity responses visited our site at this rate, converted at this rate, and generated this much revenue." That requires connecting your AI visibility data to your web analytics -- either through a tracking snippet, Google Search Console integration, or server log analysis.

Very few platforms offer this. Most stop at "you were mentioned X times." The ones that go further -- connecting mentions to clicks, sessions, and conversions -- give you something you can actually use to justify and grow your AI search investment.
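A rough version of the click-side of attribution can be built from session data you probably already have. The sketch below groups sessions by referrer hostname; the hostname-to-source mapping is an illustrative assumption, the session fields are hypothetical, and referrers are not always passed along, so this will undercount. It also measures visits that arrived from an AI product, not everyone who saw a mention.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Referrer hostnames mapped to an AI source label.
# Assumption: illustrative list; extend it from your own analytics data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def ai_source(referrer):
    """Return the AI source label for a referrer URL, or None."""
    host = (urlparse(referrer).hostname or "").lower()
    return AI_REFERRERS.get(host)


def attribute_sessions(sessions):
    """Aggregate sessions, conversions, and revenue per AI source."""
    stats = defaultdict(lambda: {"sessions": 0, "conversions": 0, "revenue": 0.0})
    for session in sessions:
        source = ai_source(session["referrer"])
        if source is None:
            continue  # not from a listed AI product
        row = stats[source]
        row["sessions"] += 1
        row["conversions"] += int(session["converted"])
        row["revenue"] += session["revenue"]
    for row in stats.values():
        row["conversion_rate"] = row["conversions"] / row["sessions"]
    return dict(stats)


# Made-up sessions with hypothetical field names.
sessions = [
    {"referrer": "https://www.perplexity.ai/search?q=crm", "converted": True, "revenue": 100.0},
    {"referrer": "https://www.perplexity.ai/", "converted": False, "revenue": 0.0},
    {"referrer": "https://chatgpt.com/", "converted": False, "revenue": 0.0},
    {"referrer": "https://www.google.com/", "converted": True, "revenue": 50.0},
    {"referrer": "", "converted": False, "revenue": 0.0},
]

for source, row in attribute_sessions(sessions).items():
    print(source, row)
```

Even this coarse breakdown gives you per-source sessions, conversion rate, and revenue to set against your visibility data.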

How the leading platforms stack up on these 8 metrics

Here's an honest look at how the major platforms cover these metrics:

| Metric | Promptwatch | SE Visible | Profound | Peec AI | Otterly.AI | AthenaHQ |
|---|---|---|---|---|---|---|
| AI crawler logs | Yes | No | No | No | No | No |
| Query fan-outs | Yes | No | Partial | No | No | No |
| Per-model breakdown | Yes (10 models) | Yes (5 models) | Yes | Yes | Yes | Yes |
| Citation source analysis | Yes | Partial | Yes | No | No | Partial |
| Answer gap analysis | Yes | No | Partial | No | No | No |
| Prompt volume + difficulty | Yes | No | No | No | No | No |
| Reddit/YouTube tracking | Yes | No | No | No | No | No |
| AI traffic attribution | Yes | No | No | No | No | No |

The pattern is clear: most platforms cover per-model tracking reasonably well. The gaps show up in the more advanced metrics -- crawler logs, gap analysis, attribution -- which happen to be the ones that drive actual improvement rather than just observation.

Building a complete tracking setup

You don't necessarily need one platform that does everything. Some teams combine a strong monitoring tool with separate attribution and gap analysis workflows. But the more tools you're stitching together, the more time you spend on data wrangling instead of acting on insights.

A practical starting point:

- Pick a platform that covers at least per-model tracking, citation sources, and competitor benchmarking. That's table stakes.

- Add crawler log monitoring -- either through a platform that includes it or by setting up server log analysis separately.

- Build out prompt tracking that includes volume and difficulty data, not just a flat list of prompts you've manually added.

- Set up attribution so you can connect AI visibility to web traffic. Even a basic UTM-based approach is better than nothing.

- Audit your Reddit and YouTube presence in your category. Know which discussions are influencing AI recommendations.

The brands that are winning in AI search right now aren't just monitoring -- they're running a continuous loop of finding gaps, creating targeted content, and measuring what changes. That cycle is what turns a visibility score into a business outcome.

The window is still open

Only 16% of brands are actively tracking AI visibility, according to a 2026 analysis by Klaus Schremser on LinkedIn. That number will look very different in 12 months. The teams building systematic tracking and optimization practices now -- covering all 8 metrics, not just mention rate -- will have a structural advantage that's hard for latecomers to overcome once the space matures.

The metrics in this guide aren't exotic or theoretical. They're the difference between knowing your visibility score and knowing what to do about it.