Key takeaways

- Most GEO platforms in 2026 are monitoring-only dashboards. They show you visibility gaps but leave you to figure out the fix yourself.

- A small number of platforms now close the full loop: gap analysis, AI-assisted content creation, and publishing or indexing — all in one place.

- Platforms that combine these three steps save time, and their drafts tend to be better targeted, because content generation is grounded in real citation data rather than generic SEO logic.

- Promptwatch is the most complete end-to-end option, covering gap analysis, AI content generation, and traffic attribution across 10 AI models.

- For teams that just need monitoring plus a writing workflow, pairing a tracker with a dedicated content tool is a viable alternative.

Why "monitoring only" is no longer enough

When GEO tools first appeared, just knowing whether ChatGPT or Perplexity mentioned your brand felt like a revelation. That was 2023. In 2026, that kind of visibility check is table stakes.

The problem with monitoring-only platforms is that they hand you a diagnosis without a prescription. You log in, see that a competitor is cited for 40 prompts you're invisible for, and then... what? You export a spreadsheet, brief a writer, wait two weeks, publish something, and hope the AI models eventually pick it up. The feedback loop is slow, manual, and disconnected from the data that should be driving it.

The platforms worth paying attention to now are the ones that compress that loop. Find the gap, generate the content, publish it, watch the visibility score move. That cycle, done well, is genuinely different from anything traditional SEO tooling offered.

This guide focuses specifically on that capability: which GEO tools in 2026 actually help you go from "we're invisible for this prompt" to "we have a live article that AI models can cite" without switching between five different tools.

What the full workflow actually looks like

Before comparing tools, it's worth being precise about what "end-to-end" means in this context. There are three distinct steps:

Step 1: Gap identification. The platform runs your target prompts across multiple AI models and shows you where competitors appear and you don't. Good gap analysis goes beyond a simple yes/no — it tells you which specific topics, angles, and questions are driving competitor citations, and what content your site is missing to compete.
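To make the core of gap identification concrete, here is a minimal Python sketch. It assumes you already have raw results with one record per prompt and model, listing which brands the answer mentioned. The `PromptResult` record, the `find_gaps` function, and the sample brands are hypothetical illustrations, not any platform's data model. A prompt counts as a gap only when at least one model mentions a competitor and no model mentions you.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    """One prompt run against one AI model (hypothetical record shape)."""
    prompt: str
    model: str
    brands_mentioned: set[str]


def find_gaps(results: list[PromptResult], our_brand: str,
              competitors: set[str]) -> dict[str, set[str]]:
    """Map each gap prompt to the competitors cited for it.

    A prompt is a gap if no model mentions our brand for it
    and at least one model mentions a competitor.
    """
    ours: set[str] = set()              # prompts where we appear anywhere
    rivals: dict[str, set[str]] = {}    # prompt -> competitors seen
    for r in results:
        if our_brand in r.brands_mentioned:
            ours.add(r.prompt)
        hits = r.brands_mentioned & competitors
        if hits:
            rivals.setdefault(r.prompt, set()).update(hits)
    return {p: c for p, c in rivals.items() if p not in ours}


results = [
    PromptResult("best crm for startups", "chatgpt", {"Acme", "RivalCo"}),
    PromptResult("best crm for startups", "perplexity", {"RivalCo"}),
    PromptResult("crm with free tier", "claude", {"RivalCo", "OtherCRM"}),
    PromptResult("crm with free tier", "chatgpt", set()),
]
for prompt, cited in find_gaps(results, "Acme", {"RivalCo", "OtherCRM"}).items():
    print(prompt, sorted(cited))  # crm with free tier ['OtherCRM', 'RivalCo']
```

Real gap analysis layers topic and angle detection on top of this, but the underlying comparison is a per-prompt set difference.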

Step 2: Content generation. The platform uses that gap data to produce a draft article, listicle, or comparison piece. The key word is "grounded" — the best tools generate content based on actual citation patterns (what sources AI models already trust and cite), not just keyword density or generic SEO rules.
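As a rough illustration of the raw material behind "grounded" generation, the sketch below counts which domains AI answers cite across a set of prompts. It assumes you have already collected the cited URLs per prompt; the input shape and the `top_cited_domains` name are hypothetical, and this is not any vendor's implementation. Each domain is counted once per prompt, so a single answer that links one site many times does not inflate its score.

```python
from collections import Counter
from urllib.parse import urlparse


def top_cited_domains(cited_urls_by_prompt: dict[str, list[str]],
                      n: int = 3) -> list[tuple[str, int]]:
    """Return the n domains cited for the most prompts (hypothetical input:
    prompt -> URLs cited in AI answers for that prompt)."""
    counts: Counter = Counter()
    for urls in cited_urls_by_prompt.values():
        # Normalize to bare hostnames and dedupe within a prompt.
        domains = {(urlparse(u).hostname or "").removeprefix("www.") for u in urls}
        counts.update(domains)
    return counts.most_common(n)


cited = {
    "crm with free tier": ["https://www.g2.com/categories/crm",
                           "https://rivalco.com/pricing",
                           "https://www.g2.com/compare/x"],
    "best crm for startups": ["https://g2.com/x", "https://techcrunch.com/a"],
    "crm for agencies": ["https://rivalco.com/agencies",
                         "https://www.g2.com/y",
                         "https://www.reddit.com/r/crm/abc"],
}
print(top_cited_domains(cited, n=2))  # [('g2.com', 3), ('rivalco.com', 2)]
```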

Step 3: Publishing and tracking. The article goes live, and the platform tracks whether AI models start citing it. Page-level tracking shows which specific URLs are being cited, by which models, and how often. Traffic attribution connects those citations to actual visits and revenue.

Most tools do step 1 reasonably well. A handful do step 2. Very few do all three in a coherent workflow.

The platforms that go furthest

Promptwatch: the most complete loop

Promptwatch is the platform that comes closest to a true end-to-end workflow in 2026. It covers all three steps, and the connection between them is tight — the content generation is directly informed by the gap analysis data, not bolted on as an afterthought.

The Answer Gap Analysis shows exactly which prompts competitors rank for that you don't, with prompt volume estimates and difficulty scores so you can prioritize. The built-in AI writing agent then generates articles grounded in 880M+ citations analyzed across 10 AI models. That's not a small distinction: the agent knows what sources ChatGPT, Claude, and Perplexity actually cite, and it structures content to match those patterns.

On the tracking side, page-level monitoring shows which URLs are being cited and by which models. Traffic attribution works via a code snippet, Google Search Console integration, or server log analysis — so you can connect AI visibility to actual revenue, not just impression counts.
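Of the attribution sources mentioned, referrer data is the easiest to reason about. Below is a minimal, generic Python sketch of referrer-based attribution; it is not Promptwatch's snippet. The hostname-to-assistant mapping is an assumption to verify against your own analytics, and `attribute` expects hypothetical `(referrer, revenue)` pairs exported from whatever analytics tool you use.

```python
from __future__ import annotations

from urllib.parse import urlparse

# Assumed referrer hostnames for AI assistants; verify against your analytics.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def ai_source(referrer: str) -> str | None:
    """Return the AI assistant behind a referrer URL, or None for other traffic."""
    host = (urlparse(referrer).hostname or "").removeprefix("www.")
    return AI_REFERRERS.get(host)


def attribute(sessions: list[tuple[str, float]]) -> dict[str, dict[str, float]]:
    """Sum visits and revenue per AI source from (referrer, revenue) pairs."""
    totals: dict[str, dict[str, float]] = {}
    for referrer, revenue in sessions:
        source = ai_source(referrer)
        if source is None:
            continue  # not AI-referred traffic
        bucket = totals.setdefault(source, {"visits": 0, "revenue": 0.0})
        bucket["visits"] += 1
        bucket["revenue"] += revenue
    return totals


sessions = [
    ("https://chatgpt.com/", 120.0),
    ("https://www.perplexity.ai/search?q=crm", 0.0),
    ("https://www.google.com/", 80.0),
    ("https://chatgpt.com/c/abc", 0.0),
]
print(attribute(sessions))
# {'ChatGPT': {'visits': 2, 'revenue': 120.0}, 'Perplexity': {'visits': 1, 'revenue': 0.0}}
```

Referrer matching undercounts: visits where the referrer is stripped (for example, links copied out of an app) never show up here, which is one reason to combine it with the other data sources listed above.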

A few other capabilities that matter for this workflow: AI Crawler Logs show when ChatGPT, Claude, and Perplexity actually crawl your pages (and flag errors), which helps diagnose why new content isn't getting picked up. Prompt Intelligence includes query fan-outs — how one prompt branches into sub-queries — which is useful for deciding what angle to take when generating an article.

Pricing starts at $99/month (Essential: 1 site, 50 prompts, 5 articles), with the Professional plan at $249/month adding crawler logs, 150 prompts, and 15 articles per month.

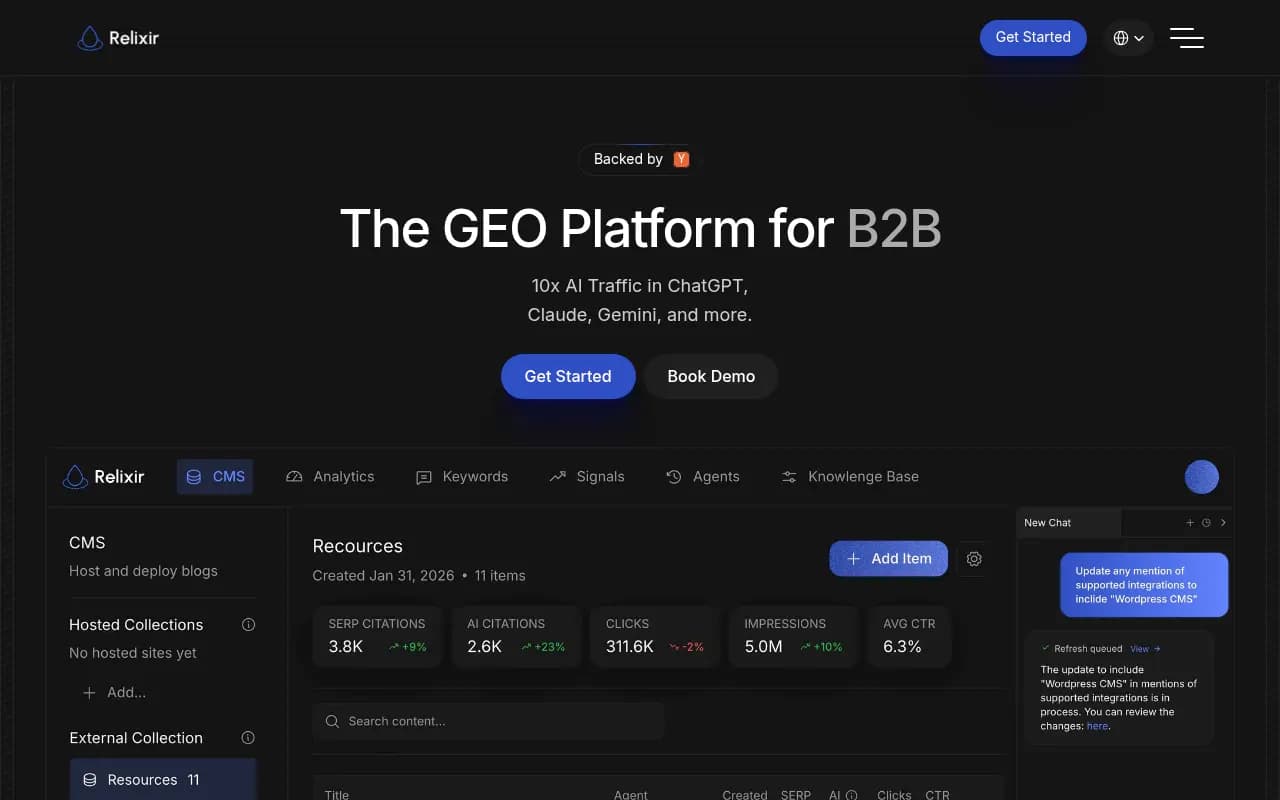

Relixir: AI-native CMS built for GEO

Relixir takes a different architectural approach. It's built around an AI-native CMS, meaning the content management layer is designed from the ground up for GEO rather than adapted from traditional SEO tooling. The platform includes autonomous content generation and competitive gap analysis, with a focus on getting content indexed and cited quickly.

It's a strong option for teams that want a dedicated publishing environment rather than a monitoring platform with content features added on. The trade-off is that it's less comprehensive on the tracking and attribution side compared to Promptwatch.

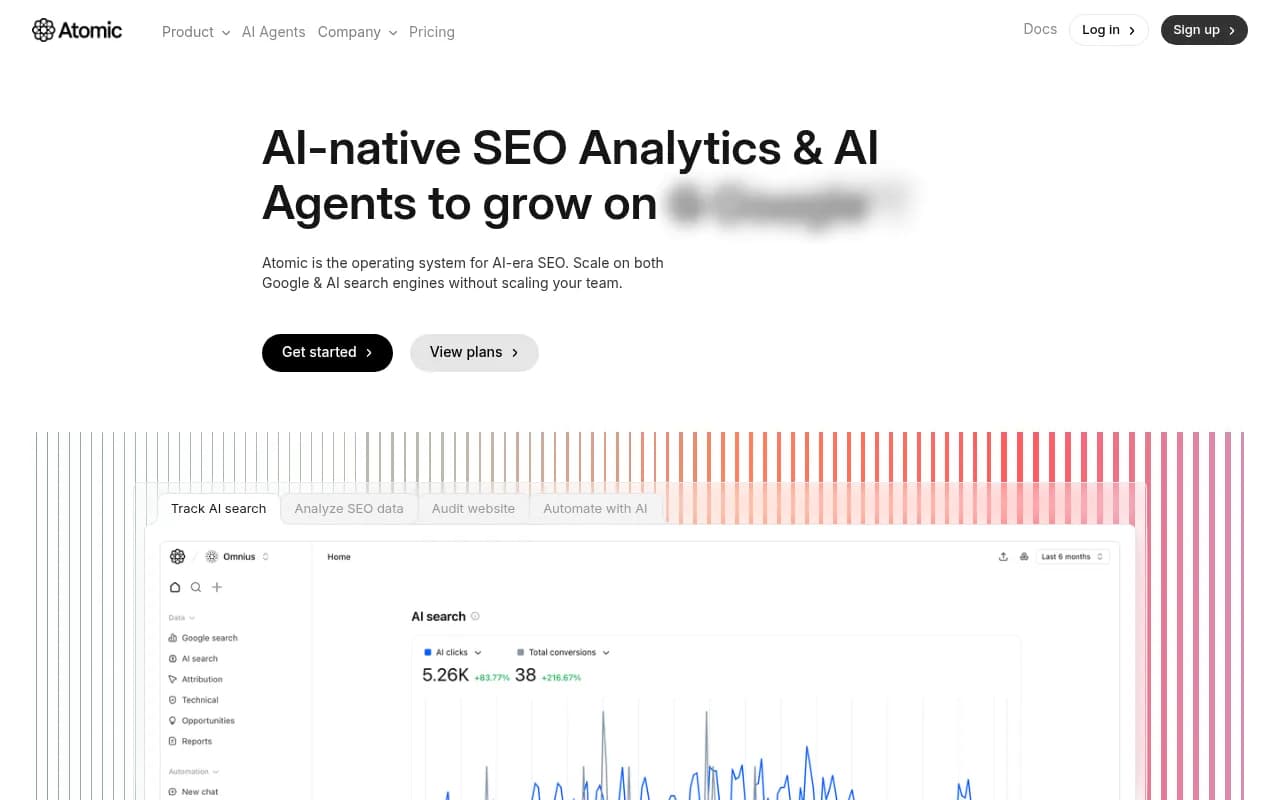

Atomic AGI: automated content with Google + LLM tracking

Atomic AGI tracks both traditional Google rankings and LLM visibility, and includes automated content generation as part of its workflow. The dual-track approach is useful for teams that still care about traditional SEO alongside AI search — you're not choosing between the two.

The automation angle is genuine: the platform can generate and schedule content based on gap data without requiring manual intervention at each step. For teams running high content volumes, that matters.

Whitebox: agentic GEO that ships fixes automatically

Whitebox is worth mentioning specifically because of its agentic approach. Rather than generating content for you to review and publish, it identifies narrative gaps in how AI models describe your brand and generates fixes autonomously. It's more opinionated than most tools — it decides what needs fixing and acts on it.

That's either a feature or a concern depending on your team's workflow. For brands that want to move fast and trust the system, it's compelling. For teams that need editorial review before anything goes live, it's less suited.

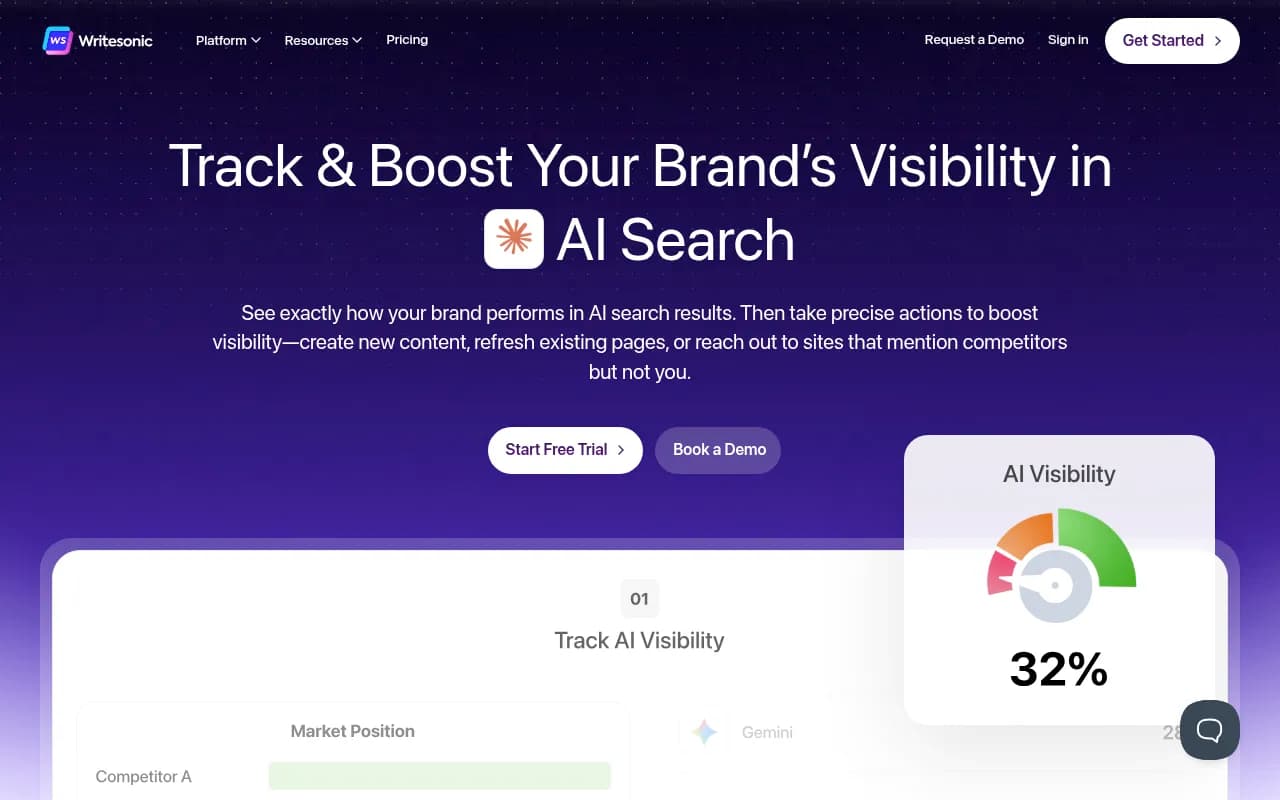

Writesonic: monitoring plus Action Center

Writesonic has evolved significantly from its origins as a general AI writing tool. Its GEO-focused features now include an Action Center that surfaces citation gaps and connects them to content creation. It monitors multiple AI engines and provides AI crawler analytics.

It's not as deep on the tracking side as Promptwatch, but it's a reasonable option for marketing teams that are already using Writesonic for content and want to add AI visibility monitoring without switching platforms entirely.

Tools that do monitoring well but stop there

It's worth being clear about which platforms are strong on step 1 (gap identification) but don't take you through to content generation and publishing. These are still useful tools — just not end-to-end solutions.

AthenaHQ has solid prompt volume tracking and a GEO score, with broad LLM coverage. It's monitoring-focused, which is fine if you have a separate content workflow.

Profound covers answer engine insights and has agent analytics, but the content creation side is limited compared to platforms built around the full loop.

Peec AI does multi-language tracking and competitor source analysis well. No content generation.

SE Visible has a clean visibility score and net sentiment tracking. Again, monitoring only.

Otterly.AI is affordable and easy to use for basic monitoring. No content tools, no crawler logs.

Comparison table: GEO tools by workflow coverage

| Tool | Gap analysis | AI content generation | Publishing/CMS | Citation tracking | Traffic attribution | AI crawler logs |
|---|---|---|---|---|---|---|
| Promptwatch | Yes | Yes (880M+ citations) | Via export | Page-level | Yes (GSC, snippet, logs) | Yes |
| Relixir | Yes | Yes (AI-native CMS) | Built-in CMS | Yes | Limited | No |
| Atomic AGI | Yes | Yes (automated) | Scheduled | Google + LLMs | Limited | No |
| Whitebox | Yes | Yes (agentic) | Autonomous | Yes | No | No |
| Writesonic | Yes | Yes (Action Center) | No | Yes | No | Yes |
| AthenaHQ | Yes | No | No | Yes | No | No |
| Profound | Yes | Limited | No | Yes | No | No |
| Peec AI | Yes | No | No | Yes | No | No |
| SE Visible | Yes | No | No | Yes | No | No |
| Otterly.AI | Yes | No | No | Yes | No | No |

What to look for when evaluating these platforms

Citation grounding matters more than writing quality

The biggest mistake teams make when evaluating GEO content tools is treating them like general AI writers. The question isn't "does it produce fluent prose?" — any LLM can do that. The question is "does it know what sources AI models actually cite, and does it structure content to match those patterns?"

A tool that generates an article from keyword research is doing traditional SEO. A tool that generates an article grounded in 880M+ analyzed AI citations is doing something genuinely different. The output can look similar on the surface but perform very differently in practice.

Prompt volume and difficulty scoring changes prioritization

Not all content gaps are worth filling. A prompt that gets asked 50 times a month with low competition is a better target than one asked 5,000 times where established sources dominate. Platforms that provide prompt volume estimates and difficulty scores (like Promptwatch) let you make that call with data. Platforms that just show you a list of gaps leave you guessing.
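One simple way to encode that trade-off is to drop gaps above a difficulty cutoff and rank what remains by volume. The sketch below assumes difficulty is normalized to a 0.0 to 1.0 scale and uses a hypothetical cutoff of 0.7; neither the scale nor the cutoff comes from any particular platform, and the `PromptGap` record is illustrative.

```python
from dataclasses import dataclass


@dataclass
class PromptGap:
    prompt: str
    monthly_volume: int   # estimated times the prompt is asked per month
    difficulty: float     # assumed scale: 0.0 (open field) to 1.0 (dominated)


def prioritize(gaps: list[PromptGap], max_difficulty: float = 0.7) -> list[PromptGap]:
    """Drop gaps above the difficulty cutoff, then rank the rest by volume."""
    winnable = [g for g in gaps if g.difficulty <= max_difficulty]
    return sorted(winnable, key=lambda g: g.monthly_volume, reverse=True)


gaps = [
    PromptGap("best crm overall", 5000, 0.95),
    PromptGap("crm for two-person agencies", 50, 0.10),
    PromptGap("crm with free tier", 400, 0.55),
]
for g in prioritize(gaps):
    print(g.prompt, g.monthly_volume)
# crm with free tier 400
# crm for two-person agencies 50
```

A hard cutoff is deliberately blunt. Weighting volume by an estimated win probability is a natural refinement, but it only helps if the difficulty score is well calibrated.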

The feedback loop needs to close

If you generate and publish content but can't track whether AI models start citing it, you're flying blind. Page-level citation tracking is what closes the loop. Without it, you can't tell if your content strategy is working or just generating articles that nobody (human or AI) reads.
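A minimal sketch of that check, assuming citation records arrive as hypothetical `(date, model, cited_url)` tuples and that you keep a map of your own URLs to their publish dates. It counts citations per page and model, and lists pages that have been live for at least a hypothetical 30 days without a single citation.

```python
from collections import Counter
from datetime import date

# Hypothetical record shape: (date observed, model name, URL cited in the answer)
Record = tuple[date, str, str]


def page_citations(records: list[Record], our_urls: set[str]) -> Counter:
    """Count citations per (url, model), keeping only our own pages."""
    return Counter((url, model) for _, model, url in records if url in our_urls)


def uncited_pages(records: list[Record], published: dict[str, date],
                  today: date, min_age_days: int = 30) -> list[str]:
    """List pages live for at least min_age_days that no model has cited yet."""
    cited = {url for _, _, url in records}
    return sorted(
        url for url, pub_date in published.items()
        if (today - pub_date).days >= min_age_days and url not in cited
    )


published = {
    "https://example.com/crm-free-tier": date(2026, 2, 20),
    "https://example.com/crm-agencies": date(2026, 2, 1),
}
records = [
    (date(2026, 3, 2), "chatgpt", "https://example.com/crm-free-tier"),
    (date(2026, 3, 5), "perplexity", "https://example.com/crm-free-tier"),
    (date(2026, 3, 9), "chatgpt", "https://example.com/crm-free-tier"),
    (date(2026, 3, 9), "claude", "https://rivalco.com/pricing"),
]
print(page_citations(records, set(published)))
print(uncited_pages(records, published, today=date(2026, 3, 15)))
# ['https://example.com/crm-agencies']
```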

Crawler logs are underrated

Most teams don't think about AI crawler logs until they publish something and it doesn't get cited for months. Knowing that ChatGPT's crawler visited your page, hit a 404, and never came back is actionable information. Knowing it visited 47 times last month is reassuring. Most platforms don't surface this at all.
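If your platform doesn't surface crawler activity, raw server logs can. This Python sketch parses access logs in the Apache/Nginx combined format and counts hits and 4xx/5xx errors per AI crawler and path. The user-agent substrings are an assumed, non-exhaustive list (check each vendor's crawler documentation). Because user agents can be spoofed, a production version would also verify source IPs.

```python
import re
from collections import Counter

# Assumed, non-exhaustive user-agent substrings for AI crawlers.
AI_BOTS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")

# Combined format: ip ident user [time] "METHOD path proto" status bytes "referer" "ua"
LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def crawler_report(lines: list[str]) -> tuple[Counter, Counter]:
    """Count AI-crawler hits per (bot, path) and errors per (bot, path, status)."""
    hits: Counter = Counter()
    errors: Counter = Counter()
    for line in lines:
        m = LINE.match(line)
        if m is None:
            continue  # skip lines not in combined format
        bot = next((b for b in AI_BOTS if b in m["ua"]), None)
        if bot is None:
            continue  # not a crawler on our list
        status = int(m["status"])
        hits[(bot, m["path"])] += 1
        if status >= 400:
            errors[(bot, m["path"], status)] += 1
    return hits, errors


sample = [
    '203.0.113.5 - - [10/Mar/2026:12:00:01 +0000] "GET /blog/crm-free-tier HTTP/1.1" 200 5123 "-" "Mozilla/5.0; compatible; GPTBot/1.2"',
    '198.51.100.7 - - [10/Mar/2026:12:03:44 +0000] "GET /blog/old-post HTTP/1.1" 404 312 "-" "Mozilla/5.0; compatible; ClaudeBot/1.0"',
    '192.0.2.9 - - [10/Mar/2026:12:05:10 +0000] "GET /pricing HTTP/1.1" 200 8000 "https://www.google.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '203.0.113.5 - - [11/Mar/2026:08:15:00 +0000] "GET /blog/crm-free-tier HTTP/1.1" 200 5123 "-" "Mozilla/5.0; compatible; GPTBot/1.2"',
]
hits, errors = crawler_report(sample)
print(hits)
print(errors)
```

The error counter is the actionable part: a 404 served to a crawler on a page you expect to be cited is worth fixing before anything else.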

How to choose based on your situation

If you want one platform that does everything: Promptwatch is the strongest option. The gap-to-article workflow is coherent, the citation grounding is real, and the tracking closes the loop properly. The Essential plan at $99/month is a reasonable starting point for smaller teams.

If you want a dedicated publishing environment: Relixir is worth evaluating. The AI-native CMS approach means the content management workflow is purpose-built for GEO, not adapted from something else.

If you're running high content volumes and want automation: Atomic AGI's scheduled generation is worth looking at, particularly if you also care about traditional Google rankings alongside AI search.

If you want monitoring now and content later: Start with a monitoring-focused tool (AthenaHQ, Peec AI, or Otterly.AI) and plan to migrate when the content workflow becomes a priority. Just be aware that switching platforms means rebuilding your prompt tracking history.

If you're already using Writesonic for content: The GEO features are worth activating. It's not as deep as a dedicated platform, but the integration with your existing content workflow has real value.

The bigger picture

The GEO tool market in 2026 is still maturing. A year ago, most platforms were pure monitoring dashboards. Now, several are adding content generation, and a few are building genuine end-to-end workflows. That trend will continue.

The platforms that will matter most over the next 18 months are the ones that can demonstrate a direct line from "we identified this gap" to "we published this content" to "our visibility score improved" to "this drove X visits and Y revenue." That's a hard chain to build, but it's the only one that justifies the investment in a dedicated GEO platform rather than a spreadsheet and a general AI writer.

The tools that are furthest along that path right now are worth serious evaluation. The ones that are still just showing you dashboards are running out of time to differentiate.