Summary

- Answer gap analysis reveals which prompts competitors rank for in AI search but you don't -- showing you exactly what content your site is missing

- AI models cite content that directly answers questions with structure, depth, and unique data -- not generic keyword-stuffed pages

- Tools like Promptwatch automate gap discovery by comparing your AI visibility vs competitors across ChatGPT, Claude, Perplexity, and other models

- Closing the gap requires creating content grounded in real citation data, prompt volumes, and the specific angles AI engines reward

- Tracking results at the page level proves which content improvements actually drive AI citations and traffic

Why traditional content gap analysis doesn't work for AI search

You've run keyword gap reports. You've analyzed competitors' rankings in Google. You've written 3,000-word guides targeting high-volume terms. And your traffic is still flat.

The problem: AI models don't care about your keyword density or word count. ChatGPT, Claude, Perplexity, and Google AI Overviews pull answers from content that directly addresses what someone asked -- with structure, specificity, and often data they can't generate themselves. If your content doesn't answer the exact question in a way AI can extract and cite, you're invisible.

Traditional SEO gap analysis finds keywords you don't rank for. Answer gap analysis finds prompts you don't show up for -- the actual questions people ask AI tools, and the specific content angles those tools reward with citations.

This is the difference between "we should write about personal injury law" and "we need a page explaining statute of limitations by state for slip-and-fall cases, structured as a table, because that's what Claude cites when someone asks 'how long do I have to file after a fall in California?'"

What AI models actually want (and why your content isn't giving it to them)

AI engines don't browse your site like a human. They scan for structured answers to specific queries. When someone prompts "best project management tools for remote teams," the model looks for:

- Direct answers: A clear statement or list that addresses the query without filler

- Structured data: Tables, bullet points, step-by-step instructions -- formats that extract cleanly

- Unique information: Data, case studies, or perspectives the model can't generate from consensus knowledge

- Authoritative signals: Citations, expert quotes, real examples that suggest the content is trustworthy

Most content fails on at least two of these. You write a 2,000-word blog post about project management tools, but it's mostly intro fluff, vague feature descriptions, and a conclusion that says "choose the tool that fits your needs." AI models skip it. They cite the competitor who published a comparison table with pricing, team size recommendations, and integration lists.

The gap isn't that you didn't cover project management tools. The gap is that you didn't structure the answer in a way AI can use.

How to find your answer gaps: the manual method

Before diving into tools, you can surface gaps by hand. This takes time but teaches you what AI models prioritize.

Step 1: List your core topics and common customer questions

Start with the topics you already cover or want to rank for. For each topic, write down 5-10 questions your customers actually ask. If you're a SaaS company selling CRM software, your list might include:

- "What's the difference between CRM and marketing automation?"

- "How much does CRM software cost for a 10-person team?"

- "Can I integrate [your CRM] with Salesforce?"

- "What reports can I generate in [your CRM]?"

Step 2: Prompt AI models with those questions

Open ChatGPT, Claude, Perplexity, and Google (use AI Mode, or check whether an AI Overview appears in the regular results). Enter each question exactly as a customer would ask it. Note:

- Does your brand or website appear in the answer?

- Which competitors are cited?

- What sources does the model pull from (documentation, comparison sites, Reddit threads, YouTube videos)?

- What format does the answer take (paragraph, list, table, step-by-step)?

If your site doesn't appear but competitors do, you've found a gap.
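The manual check above can be kept in a small script instead of a spreadsheet. This is a minimal sketch, assuming you record by hand which brands each answer cited; the `Observation` type, the `find_gaps` helper, and the brand names are hypothetical placeholders, not part of any tool.

```python
from dataclasses import dataclass, field


@dataclass
class Observation:
    """One manual test: a prompt entered into one AI tool."""
    prompt: str
    model: str  # e.g. "ChatGPT", "Claude", "Perplexity"
    brands_cited: set = field(default_factory=set)


def find_gaps(observations, own_brand, competitors):
    """Return (prompt, model, rivals) for answers that cite rivals but not us."""
    gaps = []
    for obs in observations:
        rivals = obs.brands_cited & set(competitors)
        if own_brand not in obs.brands_cited and rivals:
            gaps.append((obs.prompt, obs.model, sorted(rivals)))
    return gaps


# Hypothetical hand-recorded results; brand names are placeholders.
observations = [
    Observation("How much does CRM software cost for a 10-person team?",
                "ChatGPT", {"RivalCRM", "OtherCRM"}),
    Observation("How much does CRM software cost for a 10-person team?",
                "Perplexity", {"OurCRM", "RivalCRM"}),
    Observation("What's the difference between CRM and marketing automation?",
                "Claude", set()),
]

for prompt, model, rivals in find_gaps(observations, "OurCRM", ["RivalCRM", "OtherCRM"]):
    print(f"GAP on {model}: {prompt!r} cites {', '.join(rivals)}")
```

An answer that cites nobody is not counted as a gap here, since the rule in the text requires competitors to appear where you don't.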

Step 3: Analyze the cited content

Visit the pages AI models cited. What do they have that you don't? Common patterns:

- Comparison tables (pricing, features, use cases)

- Step-by-step guides with numbered instructions

- Data or statistics (survey results, benchmark reports, case study metrics)

- FAQ sections that directly answer sub-questions

- Embedded examples (screenshots, code snippets, real customer scenarios)

Your gap isn't "we need more content." Your gap is "we need a pricing comparison table" or "we need a guide with actual screenshots showing how to set up integrations."
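A rough way to compare your page against a cited page is to count the structural elements each one contains. The sketch below uses only Python's standard `html.parser`; the tracked tag list and the `missing_formats` helper are illustrative assumptions, and a tag count says nothing about the quality of what is inside the tag.

```python
from collections import Counter
from html.parser import HTMLParser


class FormatAudit(HTMLParser):
    """Counts structural elements such as tables, lists, and images."""

    TRACKED = {"table", "ol", "ul", "img", "pre", "h2", "h3"}

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        if tag in self.TRACKED:
            self.counts[tag] += 1


def audit(html):
    """Return a dict of tracked tag -> number of occurrences."""
    parser = FormatAudit()
    parser.feed(html)
    return dict(parser.counts)


def missing_formats(own_html, cited_html):
    """Tracked elements present on the cited page but absent from ours."""
    own, cited = audit(own_html), audit(cited_html)
    return sorted(tag for tag in cited if tag not in own)


# Hypothetical page fragments for illustration.
own_page = "<h2>Pricing</h2><p>Our Pro plan includes advanced reporting.</p>"
cited_page = ("<h2>Pricing</h2><table><tr><td>Pro</td></tr></table>"
              "<ol><li>Sign up</li></ol>")

print(missing_formats(own_page, cited_page))
```

For these two fragments the result names the table and the numbered list, which matches the kind of concrete gap statement the paragraph above asks for.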

Step 4: Repeat for competitor visibility

Search for your competitors by name in AI tools. Prompts like "tell me about [Competitor X]" or "what are the pros and cons of [Competitor Y]?" reveal where they're getting cited. If AI models have detailed, positive information about competitors but vague or missing information about you, that's a visibility gap -- and it means they have content (or third-party coverage) you lack.

How to find your answer gaps: the automated method

Manual testing works for a handful of prompts. Scaling to hundreds or thousands of queries requires tools built for AI search monitoring.

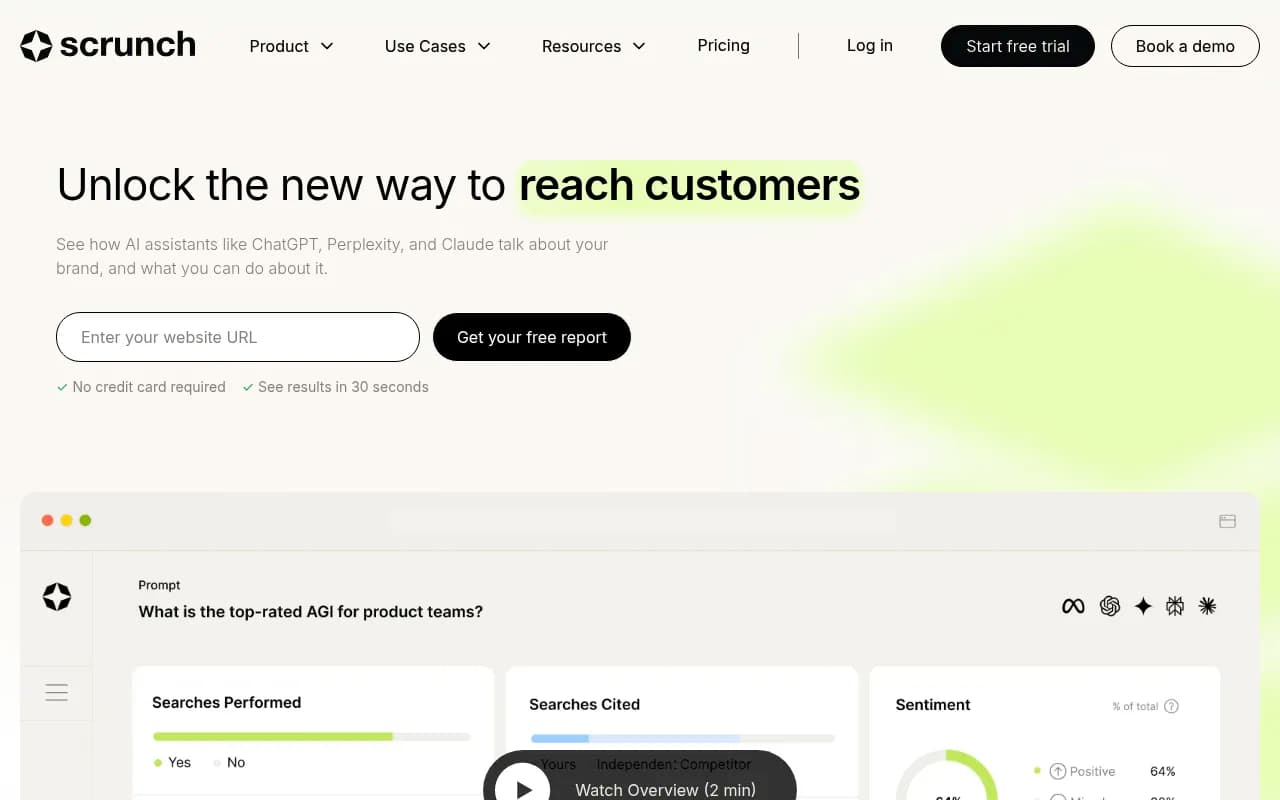

Promptwatch automates answer gap discovery by tracking your brand's visibility across 10 AI models (ChatGPT, Claude, Perplexity, Gemini, Grok, DeepSeek, Copilot, Mistral, Meta AI, Google AI Overviews) and comparing it to competitors. The platform's Answer Gap Analysis feature shows you:

- Prompts competitors are visible for but you're not: The exact queries where competitors get cited and you don't appear at all

- Which pages competitors are cited from: See the specific URLs AI models pull answers from, so you know what content types work

- Prompt volumes and difficulty scores: Prioritize high-value, winnable prompts instead of chasing every possible query

- Query fan-outs: Understand how one prompt branches into sub-queries, revealing related content gaps

This turns "we should write about X" into "we need to create a guide on X that covers Y and Z sub-topics, structured as a table, because that's what ChatGPT cites 73% of the time for this prompt cluster."
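To show how volume and difficulty could feed prioritization, here is a minimal sketch. The 0 to 100 difficulty scale, the `PromptGap` fields, and the scoring formula are all assumptions made for illustration; they are not Promptwatch's actual scoring, and the numbers are invented.

```python
from dataclasses import dataclass


@dataclass
class PromptGap:
    prompt: str
    monthly_volume: int  # assumed: estimated prompt volume
    difficulty: int      # assumed scale: 0 (easy) to 100 (hard)


def priority(gap):
    """Volume discounted by difficulty; an illustrative heuristic only."""
    return gap.monthly_volume * (1 - gap.difficulty / 100)


def rank(gaps):
    """Order gaps from most to least worth pursuing under this heuristic."""
    return sorted(gaps, key=priority, reverse=True)


# Invented figures for illustration.
gaps = [
    PromptGap("best CRM for real estate agents", 900, 80),
    PromptGap("CRM cost for a 10-person team", 400, 30),
    PromptGap("does [CRM] integrate with Slack", 150, 10),
]

for gap in rank(gaps):
    print(f"{priority(gap):7.1f}  {gap.prompt}")
```

Under this heuristic the mid-volume, low-difficulty prompt outranks the high-volume, high-difficulty one, which is the "winnable prompts first" idea in code form.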

Other tools in this space include:

| Tool | Gap analysis capability | Best for |

|---|---|---|

| Promptwatch | Full competitor prompt comparison, citation source analysis, content generation | Teams that want to find gaps and fix them |

| Otterly.AI | Basic prompt tracking, competitor mentions | Budget-conscious monitoring |

| Peec.ai | Multi-language prompt tracking | International brands |

| AthenaHQ | Prompt monitoring, no gap analysis | Tracking existing visibility |

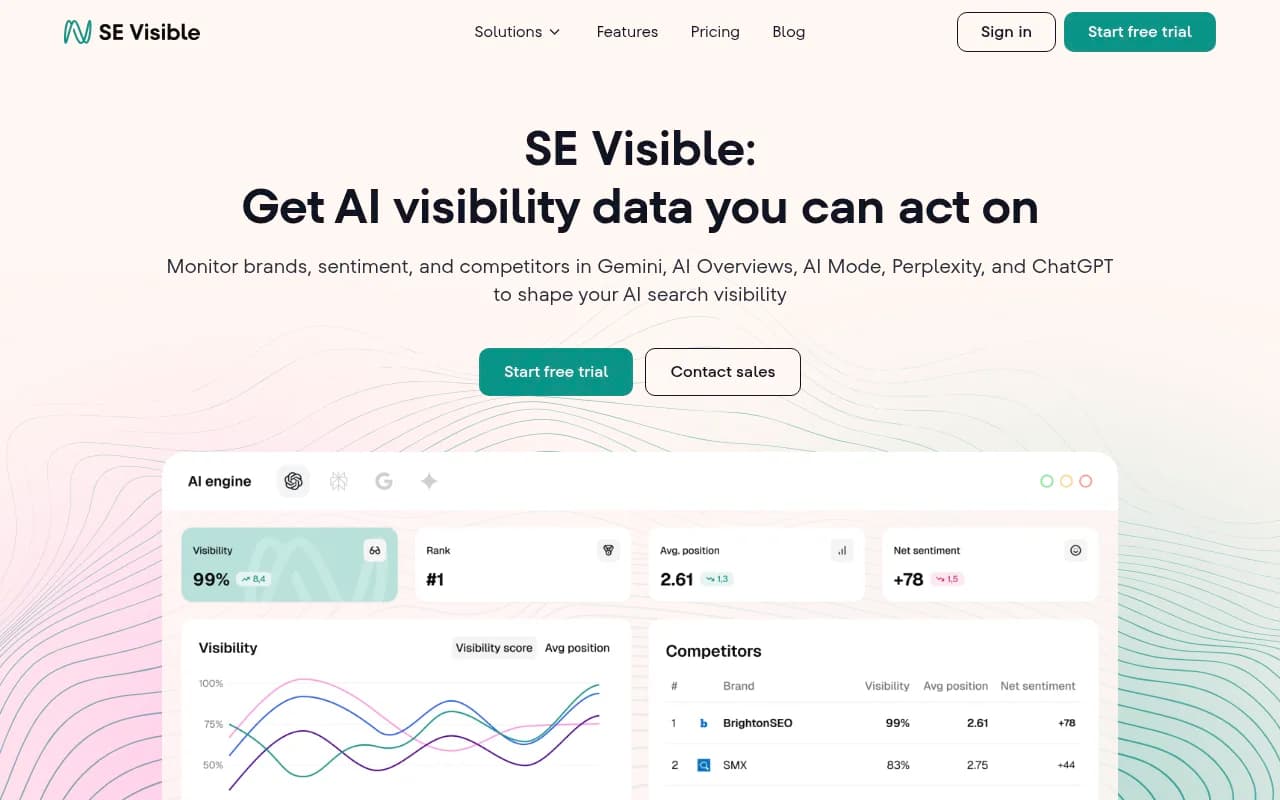

| SE Visible | User-friendly dashboards, limited gap features | Marketing teams new to AI search |

Most competitors stop at monitoring -- they show you where you're visible but don't help you find or fix gaps. Promptwatch is the only platform that closes the loop: find the gap, generate content to fill it, track the results.

The four types of answer gaps (and how to close them)

Not all gaps are the same. Understanding the type of gap helps you prioritize and create the right content.

1. Topic gaps: You don't cover the subject at all

This is the classic SEO content gap -- competitors have pages on a topic and you don't. In AI search, this shows up as zero visibility for entire prompt clusters.

Example: A CRM company has no content about integrations. When users prompt "does [CRM] integrate with Slack?" AI models say "integration details are not available" or cite a competitor instead.

How to close it: Create a dedicated integrations page (or hub) listing every integration, with setup instructions and screenshots. Structure it as a table or filterable list so AI can extract answers.

2. Angle gaps: You cover the topic but miss the specific question

You have content on the general topic, but it doesn't address the specific angle or sub-question people ask AI tools.

Example: You have a blog post titled "Best Project Management Tools" but it's a generic listicle. When someone prompts "best project management tools for construction companies," AI models cite a competitor who wrote a guide specifically for construction use cases.

How to close it: Create content targeting the specific angle. In this case, a guide on project management for construction teams, with examples of tracking job sites, managing subcontractors, and handling permits.

3. Format gaps: You have the information but it's not structured for extraction

Your content contains the answer, but it's buried in paragraphs or lacks the structure AI models need to extract and cite it.

Example: Your pricing page describes plans in prose ("Our Pro plan includes advanced reporting and priority support"). When someone prompts "how much does [your product] cost," AI models cite a competitor with a clean pricing table.

How to close it: Reformat the content. Add tables, bullet lists, or FAQ sections that make answers scannable. Use schema markup (FAQPage, HowTo, Product) to signal structure to AI crawlers.

4. Depth gaps: Your content is too shallow or generic

You cover the topic and use the right format, but your content lacks the depth, data, or unique perspective AI models prioritize.

Example: You have a guide on "How to Choose a CRM" but it's 500 words of generic advice ("consider your budget and team size"). Competitors have 3,000-word guides with decision frameworks, comparison tables, and case studies. AI models cite the deeper content.

How to close it: Add unique information the model can't generate: original research, customer data, expert interviews, detailed examples. This is where "Information Gain" matters -- the incremental value your content provides over what the AI already knows.
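The four gap types can be read as a short decision sequence: check coverage first, then angle, then format, then depth. The sketch below assumes an editor records four yes/no judgments per prompt; the `PageAssessment` fields and the `classify` function are hypothetical names for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class GapType(Enum):
    TOPIC = "topic"
    ANGLE = "angle"
    FORMAT = "format"
    DEPTH = "depth"
    NONE = "none"


@dataclass
class PageAssessment:
    """Yes/no judgments an editor records for one prompt (hypothetical fields)."""
    covers_topic: bool
    answers_specific_question: bool
    structured_for_extraction: bool
    has_unique_depth: bool


def classify(a):
    """Return the first gap type that applies, in the order listed above."""
    if not a.covers_topic:
        return GapType.TOPIC
    if not a.answers_specific_question:
        return GapType.ANGLE
    if not a.structured_for_extraction:
        return GapType.FORMAT
    if not a.has_unique_depth:
        return GapType.DEPTH
    return GapType.NONE


# A pricing page that describes plans in prose: right topic, right question,
# wrong format.
pricing_page = PageAssessment(True, True, False, True)
print(classify(pricing_page).value)
```

Returning only the first failing check is a design choice: a page with no coverage at all has nothing to reformat or deepen yet, so the earlier gap is the one to fix first.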

Creating content that closes the gap (and actually gets cited)

Finding the gap is step one. Closing it requires content engineered for AI citation, not just keyword targeting.

Start with the prompt, not the keyword

Traditional SEO starts with a keyword ("project management software") and builds content around it. AI-first content starts with the actual prompt ("what's the best project management software for a remote team of 15 people?") and structures the answer to match.

This changes everything. Instead of a generic listicle, you create a guide that:

- Directly answers the question in the first paragraph

- Includes a comparison table filtered for team size

- Explains why certain tools work better for remote teams (async communication, time zone support, integration with Slack/Zoom)

- Provides a decision framework ("if you need X, choose Y")

AI models cite this because it's a complete, structured answer to the exact query.

Use the formats AI models extract from

AI engines favor content they can parse and quote cleanly. Prioritize:

- Tables: Pricing, features, comparisons, specifications

- Lists: Numbered steps, bullet points, pros/cons

- FAQ sections: Direct question-and-answer pairs

- Definitions: Clear, concise explanations of terms

- Examples: Real scenarios, case studies, screenshots

Avoid long, unstructured paragraphs. If your content requires someone to read three paragraphs to extract a single fact, AI models will skip it.
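If plan or feature data already lives in a structured form, the table can be generated rather than hand-written. This is a small sketch that renders a list of dicts as a pipe table; the `to_markdown_table` helper is hypothetical and the plan names and prices are placeholders, not real pricing.

```python
def to_markdown_table(rows, columns):
    """Render a list of dicts as a pipe table, one fact per cell."""
    header = "| " + " | ".join(columns) + " |"
    divider = "|" + "|".join("---" for _ in columns) + "|"
    body = [
        "| " + " | ".join(str(row.get(col, "")) for col in columns) + " |"
        for row in rows
    ]
    return "\n".join([header, divider, *body])


# Placeholder plan data for illustration only.
plans = [
    {"Plan": "Starter", "Price per user/month": "$10", "Priority support": "No"},
    {"Plan": "Pro", "Price per user/month": "$25", "Priority support": "Yes"},
]

print(to_markdown_table(plans, ["Plan", "Price per user/month", "Priority support"]))
```

Each cell then holds a single fact that can be quoted without reading surrounding prose.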

Add unique data or perspectives

AI models can generate generic advice from their training data. They cite your content when it contains information they don't have:

- Original research: Survey results, benchmark data, usage statistics

- Customer stories: Real examples with metrics ("Company X reduced onboarding time by 40% using this workflow")

- Expert insights: Quotes from practitioners, analysts, or your own team

- Proprietary data: Information only you have access to (your product's performance metrics, customer success rates, feature adoption)

This is the "Information Gain" concept -- the incremental value your content provides over baseline knowledge. Higher gain = higher citation likelihood.

Structure for both humans and AI

Good AI-first content is also good human content. Use:

- Clear headings that match common questions ("How much does X cost?" not "Pricing Overview")

- Scannable sections with one idea per paragraph

- Visual hierarchy (headings, subheadings, bold text for key points)

- Schema markup (FAQPage, HowTo, Product, Organization) to signal structure

AI crawlers parse your HTML. Clean, semantic markup makes extraction easier.
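As one example of schema markup, the sketch below builds a schema.org FAQPage object as JSON-LD and wraps it in a script tag. The `faq_jsonld` and `as_script_tag` helpers are hypothetical names, and the answers are placeholders to be replaced with your own. Whether a given AI crawler reads this markup is not something the code can guarantee.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Serialize for HTML; escape '</' so the JSON cannot close the tag early."""
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder answers; replace with your product's real policies.
faq = faq_jsonld([
    ("Can clients log in without a paid seat?", "PLACEHOLDER ANSWER"),
    ("How do I track billable vs non-billable hours?", "PLACEHOLDER ANSWER"),
])

print(as_script_tag(faq))
```

The visible FAQ text on the page should match the marked-up questions and answers, so the markup describes content a reader can actually see.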

Tracking whether you actually closed the gap

Creating content is pointless if you don't measure whether it worked. Did your new guide get cited? Did your visibility improve for the target prompts?

Promptwatch tracks this at the page level. After publishing new content, you can:

- Monitor prompt visibility: See if your brand now appears for the prompts you targeted

- Track citation sources: Confirm that AI models are citing your new page (not a competitor or third-party site)

- Measure visibility score changes: Quantify improvement over time

- Attribute traffic: Use Promptwatch's code snippet, the Google Search Console integration, or server log analysis to connect AI citations to actual visitors and revenue

This closes the optimization loop: find the gap, create content, measure results, iterate. Most tools stop at monitoring.
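A visibility score for a prompt cluster can be approximated as the share of prompt and model checks in which your brand is cited. The sketch below is one assumed definition, not Promptwatch's formula; the `visibility` function, the brand names, and the snapshots are hypothetical.

```python
def visibility(checks, brand):
    """Percentage of (prompt, model) checks in which the brand was cited."""
    if not checks:
        return 0.0
    hits = sum(1 for cited in checks.values() if brand in cited)
    return 100 * hits / len(checks)


# Hypothetical snapshots for one prompt cluster, keyed by (prompt, model).
before = {
    ("best PM tools for agencies", "ChatGPT"): {"RivalPM"},
    ("best PM tools for agencies", "Claude"): {"RivalPM", "OtherPM"},
    ("PM tool with client billing", "ChatGPT"): {"OtherPM"},
    ("PM tool with client billing", "Perplexity"): set(),
}

# Same checks after publishing; two answers now cite our brand.
after = dict(before)
after[("best PM tools for agencies", "ChatGPT")] = {"RivalPM", "OurPM"}
after[("PM tool with client billing", "Perplexity")] = {"OurPM"}

change = visibility(after, "OurPM") - visibility(before, "OurPM")
print(f"before={visibility(before, 'OurPM'):.0f}% "
      f"after={visibility(after, 'OurPM'):.0f}% change={change:+.0f} points")
```

Re-running the same set of checks before and after publishing keeps the comparison fair, because the denominator does not change.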

Common mistakes that keep gaps open

Mistake 1: Writing for keywords instead of prompts

Keywords and prompts are not the same. The keyword "CRM software" might have 10,000 monthly searches, but people prompt AI tools with specific questions: "what's the best CRM for real estate agents?" or "can I use [CRM] without a credit card?"

If you optimize for the keyword, you create generic content. If you optimize for the prompts, you create targeted answers AI models cite.

Mistake 2: Ignoring format and structure

You can have the right information and still get zero citations if it's not structured for extraction. A 2,000-word essay on pricing will usually lose to a competitor's pricing table.

Mistake 3: Treating AI search as an afterthought

Most companies bolt AI optimization onto their existing SEO workflow ("let's add some FAQ schema to this blog post"). That's backwards. Start with the prompt, design the content for AI citation, then optimize for traditional search as a secondary goal.

Mistake 4: Not tracking at the page level

Brand-level visibility scores are useful, but they don't tell you which pages work. You need page-level tracking to know that your new integrations guide is getting cited while your generic "about us" page is ignored.
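Page-level tracking can start as a simple count of which of your URLs appear among cited sources. This sketch assumes you have collected a list of cited URLs by hand or from a tool export; `citations_by_page` is a hypothetical helper and the domains are reserved example names.

```python
from collections import Counter
from urllib.parse import urlparse


def citations_by_page(cited_urls, own_domain):
    """Count citations per path for URLs on our own domain or its subdomains."""
    counts = Counter()
    for url in cited_urls:
        parsed = urlparse(url)
        host = (parsed.hostname or "").lower()
        if host == own_domain or host.endswith("." + own_domain):
            counts[parsed.path or "/"] += 1
    return counts


# Hypothetical cited sources gathered across several prompts.
cited = [
    "https://www.example.com/integrations",
    "https://example.com/integrations",
    "https://rival.example.net/pricing",
    "https://example.com/pricing",
]

for path, n in citations_by_page(cited, "example.com").most_common():
    print(f"{n}  {path}")
```

Pages that never show up in this count, such as a generic "about us" page, are the ones the brand-level score hides.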

Mistake 5: Waiting for perfection

You don't need a 5,000-word masterpiece to close a gap. A well-structured 800-word guide with a comparison table and real examples will outperform a 3,000-word wall of text. Ship the structured answer, measure results, iterate.

Real example: Closing a gap for a B2B SaaS company

A project management SaaS company noticed competitors appearing in ChatGPT and Perplexity for prompts like "best project management tools for agencies" while they had zero visibility.

Manual testing revealed the gap: competitors had dedicated pages for agency use cases with client billing features, time tracking comparisons, and case studies from creative agencies. The SaaS company had a generic "features" page.

They created a new guide: "Project Management for Agencies: Features, Pricing, and Client Billing." The page included:

- A comparison table of PM tools with agency-specific features (client portals, time tracking, invoicing)

- A case study from a design agency showing how they reduced billing errors by 60%

- A step-by-step guide to setting up client billing in their product (with screenshots)

- An FAQ section answering common agency questions ("Can clients log in without a paid seat?" "How do I track billable vs non-billable hours?")

Within three weeks, the page started getting cited in ChatGPT and Claude for agency-related prompts. Visibility for the target prompt cluster increased from 0% to 34%. Traffic from AI referrals (tracked via UTM parameters and Promptwatch's code snippet) grew to 12% of total organic traffic.
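The AI referral share in an example like this can be estimated from landing URLs and referrers. The sketch below is an assumption-heavy illustration: the `AI_SOURCES` host list is a guess to verify against your own logs, the `is_ai_referral` and `ai_share` helpers are hypothetical, and it computes a share of the visits you pass in rather than reproducing the figures above.

```python
from urllib.parse import parse_qs, urlparse

# Assumed referrer hosts / utm_source values; verify against your own logs.
AI_SOURCES = {"chatgpt.com", "perplexity.ai", "claude.ai"}


def is_ai_referral(landing_url, referrer=""):
    """True if utm_source or the referrer host matches a known AI source."""
    query = parse_qs(urlparse(landing_url).query)
    utm = query.get("utm_source", [""])[0].lower()
    ref_host = (urlparse(referrer).hostname or "").lower()
    if ref_host.startswith("www."):
        ref_host = ref_host[4:]
    return utm in AI_SOURCES or ref_host in AI_SOURCES


def ai_share(visits):
    """Percentage of (landing_url, referrer) visits attributed to AI sources."""
    if not visits:
        return 0.0
    hits = sum(1 for landing, ref in visits if is_ai_referral(landing, ref))
    return 100 * hits / len(visits)


# Hypothetical visit log entries: (landing URL, referrer).
visits = [
    ("https://example.com/agencies?utm_source=chatgpt.com", ""),
    ("https://example.com/agencies", "https://www.perplexity.ai/search"),
    ("https://example.com/", "https://www.google.com/"),
    ("https://example.com/pricing", ""),
]

print(f"AI referral share: {ai_share(visits):.0f}%")
```

Visits with no referrer and no UTM tag are counted as non-AI here, so this method will tend to undercount.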

The gap was closed not by writing more content, but by writing the right content in the right format for the specific prompts AI models serve.

Tools to accelerate gap discovery and content creation

Beyond Promptwatch, several tools help with different parts of the answer gap workflow:

| Tool | Use case | Limitation |
|---|---|---|
| Promptwatch | End-to-end: find gaps, generate content, track results | Premium pricing for full feature set |
| Semrush | Keyword gap analysis (traditional SEO) | No AI prompt tracking or citation analysis |
| Ahrefs | Competitor content analysis, backlink gaps | No AI search monitoring |
| Profound | Enterprise AI visibility tracking | Expensive, no built-in content generation |
| Scrunch | AI brand monitoring | Limited gap analysis features |

For most teams, the workflow is: use Promptwatch for AI-specific gap analysis and content generation, then validate with manual testing in ChatGPT/Claude/Perplexity.

The future of answer gap analysis

AI search is evolving fast. In 2026, we're seeing:

- Agentic search: AI models that don't just answer questions but complete tasks (book a flight, compare products, generate a report). Content gaps will expand to include "task completion" gaps -- can AI models use your site to finish a workflow?

- Multimodal answers: AI models citing images, videos, and audio alongside text. Visual content gaps (infographics, demo videos, annotated screenshots) will matter more.

- Personalization: AI models tailoring answers based on user context (location, industry, role). Generic content will lose to hyper-targeted content.

- Real-time data: AI models pulling live data (pricing, availability, reviews) instead of static content. Structured data and APIs will become citation sources.

The core principle stays the same: find what AI models want but can't find on your site, then create it in a format they can extract and cite.

Start closing your answer gaps today

You don't need a massive content overhaul. Start small:

- Pick 5-10 prompts your customers actually ask

- Test them in ChatGPT, Claude, and Perplexity

- Note where competitors appear and you don't

- Analyze the cited content (format, depth, unique data)

- Create one piece of content designed to close the gap

- Track whether it gets cited

Repeat. Over time, you'll build a library of content that AI models rely on -- and your visibility will compound.

Or skip the manual work and let Promptwatch automate gap discovery, content generation, and tracking. Either way, the opportunity is clear: AI search is rewriting the rules of content strategy, and the brands that adapt first will own the citations.