Key takeaways

- AI search engines like ChatGPT, Perplexity, and Claude use fundamentally different signals than Google -- ranking in them requires a separate strategy, not just better traditional SEO

- Citation authority, entity clarity, and structured factual writing are the three most consistent signals across all major LLMs

- Content that gets cited tends to be specific, sourced, and written for a clear audience -- not keyword-stuffed or generic

- Tracking your AI visibility requires dedicated tools; Google Search Console won't show you ChatGPT citations

- The gap between brands that act now and those that wait is widening fast -- ChatGPT Search hit 400 million weekly users by Q1 2026

Something shifted in late 2024 and accelerated hard through 2025. Consumers stopped going exclusively to Google for answers. They started asking ChatGPT, Perplexity, and Claude -- and those tools responded with specific brand names, product recommendations, and source citations. If your brand wasn't in those answers, you simply didn't exist for that query.

By Q1 2026, ChatGPT Search had crossed 400 million weekly active users. Perplexity passed 50 million. Together they account for roughly 8% of US search volume, growing 4 to 6 percentage points per quarter. Google AI Overviews now appear on about 47% of informational searches, and click-through rates on the #1 organic result dropped from 28% to 19% on queries that trigger an AI Overview.

The old playbook -- rank #1, win the click -- doesn't hold the same way anymore. Here's what actually works in 2026.

Why AI ranking signals are different from Google signals

Google's algorithm ranks pages. LLMs don't rank pages -- they generate answers and then cite sources to support them. That's a meaningful distinction.

When ChatGPT or Perplexity answers a question, it's not running a keyword match against a database. It's generating a response based on patterns learned from training data, then pulling in live web sources (in search-enabled modes) to ground its answer. The question isn't "does this page rank for this keyword?" -- it's "does this source get treated as authoritative enough to cite?"

That changes almost everything about what you should optimize for.

Google rewards pages that match query intent and accumulate backlinks. AI models reward sources that are:

- Factually precise and easy to extract information from

- Cited by other credible sources (not just linked to)

- Associated with a clearly defined entity (a person, brand, or organization with a coherent identity)

- Present across multiple surfaces -- not just one website

The overlap with traditional SEO exists, but it's partial. Strong domain authority helps, but it's not sufficient. Keyword optimization is largely irrelevant. Schema markup matters more than it did for classic Google rankings, though its weight varies by model.

The signals that actually move you up in AI search

1. Citation authority -- being cited, not just linked to

This is the biggest shift. In traditional SEO, backlinks are currency. In AI search, citations are currency -- and they're not the same thing.

A citation in the AI context means your content is referenced as a source in AI-generated responses. Perplexity, for example, shows numbered citations alongside its answers. ChatGPT Search surfaces sources in a sidebar. Claude cites documents when it has web access. Being cited repeatedly across different queries and different models builds what you might call citation authority.

How do you build it? A few ways:

- Get your content cited in high-authority publications that AI models already trust (major news outlets, Wikipedia, industry databases)

- Publish original research, statistics, or data that other sites reference -- AI models love citing primary sources

- Appear in Reddit threads, YouTube transcripts, and other user-generated content that LLMs frequently pull from

- Build a consistent presence on platforms that AI models actively index

One thing worth knowing: Perplexity and ChatGPT Search don't just pull from the open web. They have their own indexing priorities. Perplexity has been transparent about preferring sources that are fast-loading, clearly structured, and updated regularly.

2. Entity clarity -- being a known, coherent thing

AI models work with entities. A brand, a person, a product -- these need to exist as clear, consistent entities in the model's training data and in structured data sources.

If your brand name is ambiguous, if your website says one thing and your LinkedIn says another, if there's no Wikipedia article or Wikidata entry for your company, you're harder for an AI to confidently cite. Models don't like uncertainty -- they'll cite a competitor they know well over a brand they're unsure about.

Practical steps:

- Get a Wikipedia or Wikidata entry if you're eligible (this matters more than almost anything else for entity recognition)

- Use consistent brand naming across every platform -- website, social profiles, press releases, third-party mentions

- Add Organization schema markup to your site with complete, accurate information

- Claim and complete your Google Business Profile, Crunchbase, and industry-specific directories
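
The Organization schema step can be sketched in a few lines of Python. This is a minimal illustration rather than complete markup: the brand name, URLs, and the helper `organization_jsonld` are hypothetical placeholders, while the property names (`name`, `url`, `logo`, `sameAs`) come from schema.org's Organization type.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str]) -> str:
    """Build a schema.org Organization block as a JSON-LD string.

    Hypothetical helper for illustration; all values passed in are placeholders.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        # sameAs links the entity to its profiles on other platforms,
        # which supports the consistent-naming step above.
        "sameAs": same_as,
    }
    return json.dumps(data, indent=2)


markup = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    logo="https://www.example.com/logo.png",
    same_as=[
        "https://www.linkedin.com/company/example-co",
        "https://www.crunchbase.com/organization/example-co",
    ],
)

# Embed the result in the page head inside a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Keeping the `name` value identical to the one used on your social profiles and directory listings is the point of the exercise; the markup only helps if it agrees with everything else.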

3. Structured, extractable content

AI models need to extract information efficiently. Content that's buried in walls of text, hidden behind JavaScript rendering, or structured as a narrative without clear headers is harder to cite accurately.

The content formats that get cited most often:

- Direct answers to questions, ideally in the first 1-2 sentences of a section

- Numbered lists and step-by-step instructions

- Comparison tables with clear labels

- Definition blocks ("X is Y that does Z")

- Statistics with named sources and dates

This is why FAQ sections, structured how-to guides, and data-backed articles tend to perform well in AI search. It's not that AI models prefer these formats aesthetically -- it's that they're easier to extract and cite without distorting the meaning.
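
One way to make an FAQ section machine-readable is schema.org's FAQPage markup. The sketch below is an assumption about how you might generate it, not a requirement of any AI model: the helper `faq_jsonld` and the sample questions are hypothetical, and the structure (`mainEntity`, `Question`, `acceptedAnswer`, `Answer`) follows the schema.org vocabulary.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Turn (question, answer) pairs into a schema.org FAQPage JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder content: each answer leads with a direct, self-contained sentence.
faq = faq_jsonld([
    ("What is citation authority?",
     "Citation authority is how often AI-generated answers reference your content as a source."),
    ("Does Google Search Console show ChatGPT citations?",
     "No. Tracking AI citations requires dedicated tools."),
])
print(faq)
```

Note that each sample answer follows the "X is Y that does Z" pattern from the list above, so the text is quotable on its own even if the markup is ignored.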

4. Topical depth and specificity

Generic content doesn't get cited. AI models are trained on enormous amounts of generic content -- they don't need to cite your 800-word "What is SEO?" article because they already know what SEO is.

What they do cite: specific, narrow, expert-level content that answers questions they can't answer from training data alone. Original research. Proprietary data. Expert opinions on niche topics. Case studies with real numbers. Regional or industry-specific information.

The implication: go narrower and deeper, not broader and shallower. A 3,000-word guide to AI visibility for SaaS companies in the DACH region will get cited more than a 1,000-word overview of AI visibility for everyone.

5. Freshness and update signals

Perplexity and ChatGPT Search both prioritize recently updated content for queries where recency matters. This is especially true for:

- Industry news and trends

- Product comparisons (pricing changes, feature updates)

- Statistics and market data

- Regulatory or compliance topics

If you published a comprehensive guide in 2023 and haven't touched it since, it's losing ground to fresher sources. Updating existing content with new data, new examples, and a clear "last updated" date is one of the highest-ROI actions you can take right now.
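
To decide what to refresh first, a simple staleness check over your content inventory is enough. The sketch below assumes you already have a mapping of URLs to last-updated dates; the URLs, dates, and the 365-day threshold are hypothetical and should be tuned to how fast your topic moves.

```python
from datetime import date


def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 365) -> list[str]:
    """Return URLs whose last update is older than max_age_days, oldest first."""
    stale = [
        (updated, url)
        for url, updated in last_updated.items()
        if (today - updated).days > max_age_days
    ]
    return [url for _, url in sorted(stale)]


# Hypothetical inventory: URL -> date of the last substantive update.
inventory = {
    "/guides/ai-visibility": date(2023, 5, 2),
    "/blog/pricing-comparison": date(2025, 11, 20),
    "/research/market-data": date(2024, 1, 15),
}

print(stale_pages(inventory, today=date(2026, 3, 1)))
# prints ['/guides/ai-visibility', '/research/market-data']
```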

6. Multi-surface presence

Here's something most SEO guides miss: AI models don't just pull from websites. They pull from Reddit, YouTube transcripts, Quora, LinkedIn posts, news articles, and academic papers. Perplexity has been observed citing Reddit threads heavily for product recommendation queries. ChatGPT pulls from a wide range of sources in its training data.

This means your brand's presence across platforms matters. A brand that appears only on its own website is less likely to be cited than one that appears in:

- Reddit discussions in relevant subreddits

- YouTube videos with accurate transcripts

- LinkedIn articles from company experts

- Press coverage in industry publications

- Podcast transcripts and show notes

You don't need to be everywhere. You need to be in the places AI models actually look for your type of content.

7. E-E-A-T signals (yes, still)

Google's E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) was designed for human quality raters, but the underlying signals it measures -- author credentials, source reputation, factual accuracy -- are exactly what AI models use to assess whether a source is worth citing.

Named authors with verifiable credentials outperform anonymous content. Sources with a track record of accurate information get cited more than newer or less established ones. Content that cites its own sources (links to studies, data, named experts) gets treated as more trustworthy.

This isn't about gaming a checklist. It's about actually being the kind of source that deserves to be cited.

How the major AI models differ

Not all AI search engines work the same way. Understanding the differences helps you prioritize.

| Signal | ChatGPT Search | Perplexity | Claude | Google AI Overviews |
|---|---|---|---|---|
| Live web crawling | Yes (Bing-powered) | Yes (own index) | Limited | Yes (Google index) |
| Reddit/UGC weighting | Moderate | High | Low | Moderate |
| Schema markup impact | Moderate | Low | Low | High |
| Freshness weighting | High | Very high | Low | High |
| Entity recognition | High | Moderate | High | Very high |
| Citation format | Sidebar sources | Numbered inline | Document references | Blue link citations |
| Training data influence | High | Lower | Very high | Lower |

Claude is particularly interesting because it relies more heavily on training data than live web crawling. Getting into Claude's training data -- through widely-cited publications, Wikipedia, and high-authority sources -- matters more for Claude than publishing fresh web content.

Google AI Overviews, meanwhile, rewards structured content and schema markup more than the other models, because it's built on top of Google's existing indexing infrastructure.

What doesn't work (and why people keep trying it)

A few tactics that feel intuitive but don't move the needle in AI search:

Keyword stuffing for AI queries. Putting "best AI SEO tool 2026" seventeen times in your content doesn't help. AI models don't match keywords -- they understand meaning. Write for the concept, not the phrase.

Thin content at scale. Publishing 500 AI-generated articles with no original insight is the fastest way to become invisible in AI search. Models are trained on this kind of content and don't need to cite it. Worse, it can dilute your domain's authority signal.

Chasing individual model updates. ChatGPT's search algorithm changes frequently. Perplexity's indexing priorities shift. Trying to reverse-engineer each model's current behavior is a losing game. Focus on the fundamentals -- authority, specificity, structure -- because they hold across models.

Ignoring technical basics. Slow-loading pages, broken crawl paths, and JavaScript-heavy rendering hurt your AI visibility just as much as your Google rankings. AI crawlers need to access and parse your content efficiently.
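
A quick first check on crawl access is whether your robots.txt blocks AI crawlers at all. The sketch below uses Python's standard `urllib.robotparser` against an inlined, hypothetical robots.txt so it runs offline. The user-agent tokens listed are the ones OpenAI, Perplexity, and Anthropic have published for their crawlers, but treat the list as an assumption and confirm it against each vendor's current documentation. It covers robots rules only, not page speed or JavaScript rendering.

```python
from urllib.robotparser import RobotFileParser

# Assumed crawler tokens; verify against each vendor's documentation.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot"]

# Hypothetical robots.txt content, inlined so the example runs offline.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def crawler_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Report whether each user agent may fetch the URL under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = crawler_access(ROBOTS_TXT, "https://www.example.com/guides/ai-visibility", AI_CRAWLERS)
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
# prints:
# GPTBot: blocked
# PerplexityBot: allowed
# ClaudeBot: allowed
```

In this sample, a blanket `Disallow: /` for one crawler silently removes the whole site from that model's live sources, which is easy to miss if the rule was added years ago for a different reason.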

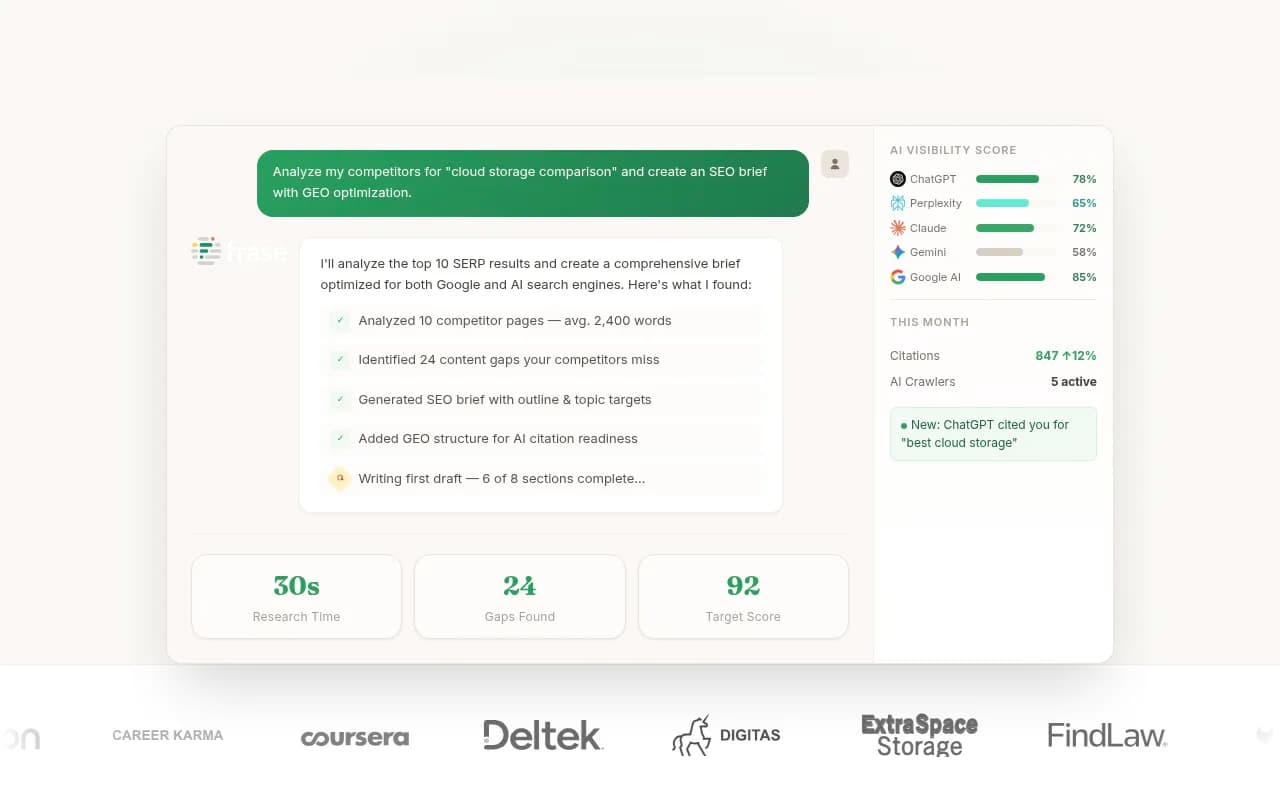

How to track whether any of this is working

This is where most brands get stuck. You can implement every signal above and still have no idea if it's moving the needle, because Google Search Console doesn't show you ChatGPT citations.

You need dedicated AI visibility tracking. At minimum, you want to know:

- Which prompts your brand appears in (and which ones your competitors own)

- Which AI models are citing you, and how often

- Which specific pages are getting cited

- Whether your visibility is improving after you publish new content
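
Even before adopting a tool, the first two questions can be answered from a hand-kept audit log. The sketch below is one possible shape for that log, not how any tracking product works: the prompts, brand names, and the helpers `citation_counts` and `gap_prompts` are all hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical audit log: one row per (prompt, model) check, listing the
# brands cited in that answer. Rows could come from manual checks or an export.
audit_rows = [
    {"prompt": "best crm for startups", "model": "ChatGPT", "cited": ["RivalCo", "Example Co"]},
    {"prompt": "best crm for startups", "model": "Perplexity", "cited": ["RivalCo"]},
    {"prompt": "crm pricing comparison", "model": "ChatGPT", "cited": ["RivalCo"]},
    {"prompt": "crm pricing comparison", "model": "Perplexity", "cited": []},
]


def citation_counts(rows: list[dict], brand: str) -> dict[str, int]:
    """Count how many logged answers cite the brand, per model."""
    counts = Counter()
    for row in rows:
        if brand in row["cited"]:
            counts[row["model"]] += 1
    return dict(counts)


def gap_prompts(rows: list[dict], brand: str) -> list[str]:
    """Prompts where some other brand is cited but this brand never is."""
    cited_by_prompt = defaultdict(set)
    for row in rows:
        cited_by_prompt[row["prompt"]].update(row["cited"])
    return sorted(
        prompt
        for prompt, brands in cited_by_prompt.items()
        if brands and brand not in brands
    )


print(citation_counts(audit_rows, "Example Co"))  # prints {'ChatGPT': 1}
print(gap_prompts(audit_rows, "Example Co"))      # prints ['crm pricing comparison']
```

Re-running the same prompts on a fixed schedule and comparing the counts over time gives a rough answer to the fourth question as well.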

Promptwatch covers all of this -- it tracks your brand across 10 AI models, shows you which prompts competitors rank for that you don't, and connects visibility data to actual traffic through crawler log analysis and GSC integration. It's one of the few platforms that closes the loop from "where am I invisible?" to "here's the content that fixes it."

A practical starting point

If you're trying to figure out where to begin, here's a straightforward sequence:

1. Run a baseline audit. Ask ChatGPT, Perplexity, and Claude the 10 most important questions in your category. Note who gets cited. If it's not you, that's your gap list.

2. Fix your entity signals. Consistent brand name, complete schema markup, Wikipedia/Wikidata presence if eligible, complete directory listings.

3. Identify your highest-value prompts. Not all queries are equal. Focus on the ones where purchase intent is high and competition in AI search is still low.

4. Create specific, structured content. Pick the top 5 prompts where you're invisible and write dedicated content for each -- structured, sourced, and narrow enough to be genuinely useful.

5. Build multi-surface presence. Identify 2-3 platforms (Reddit, YouTube, LinkedIn) where your audience asks questions in your category. Contribute genuinely useful content there.

6. Track and iterate. Set up proper AI visibility tracking so you can see what's working. Update content that's getting close to citations but not quite landing.

The brands doing this systematically right now are building a compounding advantage. AI search is still early enough that moving deliberately beats moving perfectly -- but the window where early movers have a clear edge won't stay open indefinitely.

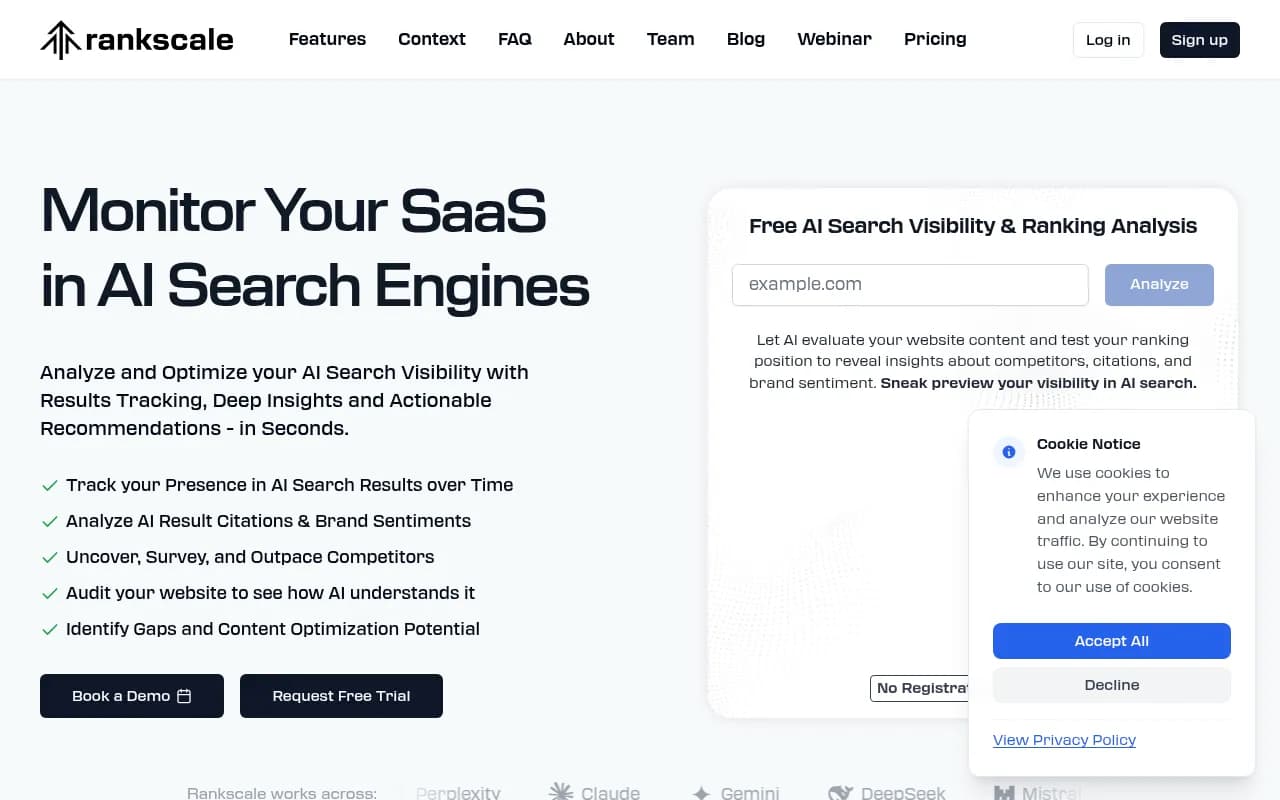

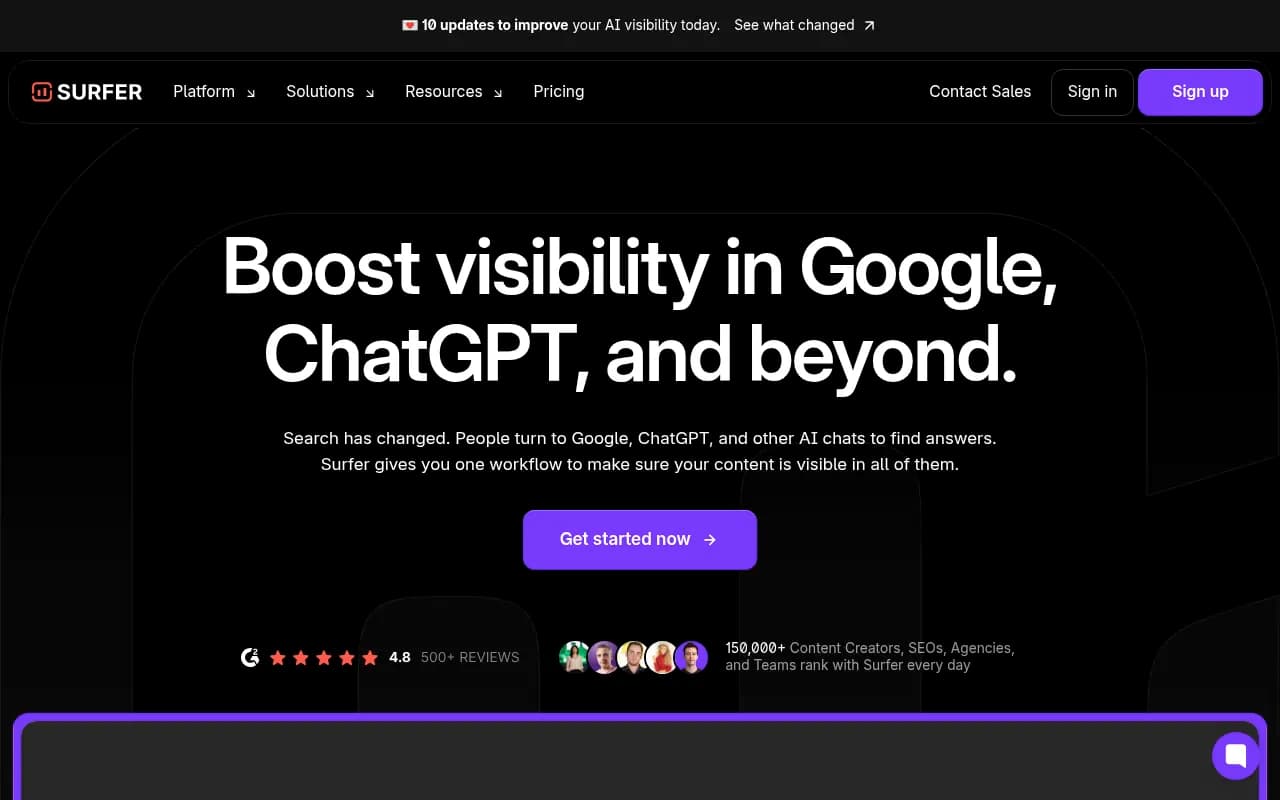

Tools comparison: AI visibility tracking platforms

| Tool | Tracks ChatGPT | Tracks Perplexity | Content generation | Crawler logs | Best for |
|---|---|---|---|---|---|
| Promptwatch | Yes | Yes | Yes | Yes | Full-cycle optimization |
| Profound | Yes | Yes | No | No | Enterprise monitoring |
| Rankscale | Yes | Yes | No | No | Rank tracking focus |
| Otterly.AI | Yes | Yes | No | No | Budget monitoring |
| Peec.ai | Yes | Yes | No | No | Multi-language tracking |
| Frase | Partial | No | Yes | No | Content creation |
| Surfer SEO | No | No | Yes | No | On-page optimization |

The core difference between monitoring-only tools and platforms like Promptwatch is what happens after you see the data. Most tools show you where you're invisible and leave you to figure out the rest. A smaller number help you actually fix it.

The fundamentals of AI search visibility come down to this: be a source worth citing. That means being specific, being credible, being findable across multiple surfaces, and being structured in a way that makes extraction easy. Everything else is implementation detail.