Key takeaways

- AI search engines like ChatGPT, Perplexity, and Google AI Overviews pull citations from trusted sources. New websites need to build that trust deliberately; it doesn't happen automatically.

- The fastest path to citations is publishing content that directly answers specific questions AI models are already being asked, not just general SEO content.

- Technical signals matter: structured data, crawlability by AI bots, and clean schema markup all affect whether AI systems can read and cite your pages.

- Building off-site authority (mentions, backlinks, forum presence) accelerates how quickly AI models recognize your site as a credible source.

- Tracking your AI visibility from day one -- even when numbers are low -- gives you a baseline to measure progress and spot what's working.

Starting a new website in 2026 means competing in two search ecosystems simultaneously. There's traditional Google, which you're probably already thinking about. And then there's AI search -- ChatGPT, Perplexity, Google AI Overviews, Claude, Gemini -- where a growing share of queries now end without a single click to any website.

That second ecosystem is where the real opportunity is for new sites. Here's the counterintuitive reality: AI search is still early enough that a new website with the right content can get cited alongside (or instead of) established players. The window isn't permanently open, but it's open right now.

This guide covers exactly how to take advantage of it.

Understanding how AI models decide what to cite

Before you can build citation authority, you need to understand what AI models are actually doing when they generate answers. They're not simply running a live search and ranking pages by domain authority. They're drawing on a combination of training data, real-time retrieval (in tools like Perplexity and ChatGPT with browsing), and signals that indicate trustworthiness.

What those signals look like in practice:

- Content that directly and specifically answers a question, without burying the answer in filler

- Pages that are well-structured and easy to parse (clear headings, logical flow, no walls of text)

- Sources that are cited or referenced by other credible sources -- the web's existing trust graph still matters

- Structured data that helps AI systems understand what a page is about

- Consistent entity presence: your brand name appearing across multiple contexts (your site, third-party sites, forums, directories)

One thing that trips up new websites: AI models aren't just reading your homepage. They're reading specific pages. A well-optimized article on a new domain can get cited if it's the best answer to a specific question -- even if your overall domain authority is low. That's the opening.

Step 1: Map the prompts you want to appear in

Traditional SEO starts with keyword research. AI search optimization starts with prompt research -- figuring out which questions people are actually asking AI models, and which of those questions your site could plausibly answer better than what's currently being cited.

For a new website, the goal isn't to chase the highest-volume prompts right away. You want prompts where:

- The existing cited sources are weak (thin content, outdated, generic)

- The question is specific enough that a focused answer can win

- The topic is genuinely within your expertise

A few ways to find these prompts:

- Ask ChatGPT, Perplexity, and Gemini the questions your target audience would ask. Look at what they cite. If the cited sources are mediocre, that's a gap.

- Use Reddit and forums to find the actual language people use when asking questions in your niche. AI models are heavily influenced by forum content -- knowing what questions get asked there is valuable.

- Look at "People Also Ask" boxes in Google for your core topics. These often mirror the kinds of queries AI models handle.

Tools like Promptwatch can show you which prompts competitors are visible for but you're not -- a direct map of where the gaps are.
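As a rough sketch of the gap check described above, the snippet below lists prompts where some source gets cited but your site does not. Everything in it is hypothetical: the `find_prompt_gaps` helper, the prompt strings, the `.example` domains, and the shape of the `observed` data, which you would fill in by hand from AI answers or from a tracking tool's export.

```python
from typing import Dict, List, Set


def find_prompt_gaps(observed: Dict[str, Set[str]], my_domain: str) -> List[str]:
    """Return prompts where at least one source is cited but my_domain is not."""
    gaps = []
    for prompt, cited_domains in observed.items():
        if cited_domains and my_domain not in cited_domains:
            gaps.append(prompt)
    return sorted(gaps)


# Hypothetical observations: prompt -> domains cited in the AI answer.
observed = {
    "best crm for a 5-person startup": {"bigcrm.example", "reviews.example"},
    "how to migrate crm data from spreadsheets": {"mysite.example"},
    "crm pricing for nonprofits": {"reviews.example"},
}

gaps = find_prompt_gaps(observed, "mysite.example")
print(gaps)
```

The output is only a candidate list. You still need to read the cited pages to judge whether they are weak enough to beat.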

Step 2: Create content engineered for AI citation

This is where most new websites go wrong. They create content that's fine for traditional SEO -- keyword-optimized, decent length, reasonable structure -- but that AI models don't cite because it doesn't actually answer questions clearly.

Content that gets cited by AI models tends to share a few characteristics:

Answer the question in the first paragraph

AI models often pull the first clear, direct answer they find. If your article takes three paragraphs to get to the point, you've already lost. Lead with the answer, then support it.

Use question-based headings

Structure your content around the specific questions your target prompts contain. If someone asks "what is the best CRM for a 5-person startup," your article should have a heading that says something close to that. AI models use heading structure to identify what a section is about.

Be specific where others are vague

Generic advice ("use good content marketing") doesn't get cited. Specific, actionable guidance with concrete details does. This is actually an advantage for new websites -- you can write more focused, specific content than a large site that's trying to cover everything.

Keep it readable and well-structured

Short paragraphs. Clear headings. Bullet points where appropriate. AI models parse structure, and a page that's easy to read programmatically is more likely to be cited.

Cover the topic completely

AI models favor pages that answer not just the main question but the follow-up questions too. If someone asks about a topic, think about what they'd naturally ask next, and answer that on the same page.

Step 3: Get your technical foundation right

A new website has one advantage here: you can build the technical foundation correctly from the start, instead of retrofitting it onto years of accumulated technical debt.

Schema markup

Implement structured data from day one. At minimum:

- Organization schema on your homepage (name, URL, logo, social profiles)

- Article or BlogPosting schema on every content page

- FAQPage schema on pages that answer multiple questions

- BreadcrumbList schema for site navigation

Schema helps AI systems understand what your content is about and who published it. It's not a magic citation trigger, but it's a signal that your site is professionally built and trustworthy.
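To make the schema step more concrete, here is a minimal sketch that builds Organization and Article markup as JSON-LD and wraps it in a script tag for a page's head. The property names come from schema.org; the company name, URLs, dates, author, and the `json_ld_script` helper are placeholders invented for illustration.

```python
import json


def json_ld_script(data: dict) -> str:
    """Wrap a schema.org dictionary in a JSON-LD <script> tag."""
    # Escape "</" so a stray "</script>" inside a value cannot close the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder values: replace with your own brand details.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://www.example.com",
    "logo": "https://www.example.com/logo.png",
    "sameAs": ["https://www.linkedin.com/company/example-co"],
}

article = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "Best CRM for a 5-person startup",
    "datePublished": "2026-01-15",
    "dateModified": "2026-02-01",
    "author": {"@type": "Person", "name": "Jane Doe"},
    "publisher": {"@type": "Organization", "name": "Example Co"},
}

org_tag = json_ld_script(organization)
article_tag = json_ld_script(article)
print(org_tag)
```

Generating the markup from one function keeps it consistent across pages. It is still worth running the output through a structured data validator before relying on it.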

Allow AI crawlers

Check your robots.txt file. Many new websites accidentally block AI crawlers -- either through overly broad disallow rules or through security plugins that block unfamiliar bots.

The major AI crawlers you want to allow:

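- GPTBot (OpenAI/ChatGPT)

- ClaudeBot (Anthropic)

- PerplexityBot

- Google-Extended (a robots.txt control token for Google's AI models rather than a separate crawler)

- Applebot-Extended (Apple's equivalent control token)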

If these are blocked, AI models literally cannot read your content. You won't get cited.
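One way to audit this is with Python's standard `urllib.robotparser`. The sketch below parses a robots.txt body and reports whether each AI user agent named above may fetch a given URL. The sample rules and the example.com URL are made up, and the `check_ai_access` helper is just an illustration.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens taken from the list in this article.
AI_USER_AGENTS = [
    "GPTBot",
    "ClaudeBot",
    "PerplexityBot",
    "Google-Extended",
    "Applebot-Extended",
]


def check_ai_access(robots_txt: str, url: str) -> dict:
    """Return {user_agent: allowed} for each AI user agent and the given URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_USER_AGENTS}


# Hypothetical robots.txt that blocks GPTBot, perhaps by accident.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

access = check_ai_access(sample_robots, "https://www.example.com/blog/post")
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'BLOCKED'}")
```

To check a live site instead of a string, `RobotFileParser` also offers `set_url()` and `read()`. Keep in mind that this only covers robots.txt rules; blocks applied by a firewall or security plugin will not show up here.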

Page speed and Core Web Vitals

AI crawlers, like Google's crawler, favor pages that load quickly and don't throw errors. A slow or broken page is less likely to be fully indexed. Get your Core Web Vitals into the green before you start building content at scale.

Canonical URLs and clean structure

Make sure every page has a clear canonical URL, no duplicate content issues, and a logical URL structure. Confusion about which page is "the" version of a piece of content makes it harder for any crawler to index you correctly.
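A quick way to spot canonical problems is to pull the canonical link out of each page and confirm there is exactly one. The sketch below uses the standard `html.parser` module; the `CanonicalFinder` class, the `find_canonicals` helper, and the sample HTML are hypothetical.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> tag in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr_map = dict(attrs)
        # rel can hold several space-separated values; attribute values may be None.
        rel_values = (attr_map.get("rel") or "").lower().split()
        if "canonical" in rel_values and attr_map.get("href"):
            self.canonicals.append(attr_map["href"])


def find_canonicals(html: str) -> list:
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonicals


# Hypothetical page source.
page = """
<html><head>
  <title>Project management software: a complete guide</title>
  <link rel="canonical" href="https://www.example.com/guide" />
</head><body><h1>Guide</h1></body></html>
"""

canonicals = find_canonicals(page)
if len(canonicals) != 1:
    print("Problem: expected exactly one canonical URL, found", len(canonicals))
else:
    print("Canonical:", canonicals[0])
```

An empty result means the page declares no canonical URL. More than one entry means the page is sending conflicting signals.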

Step 4: Build your off-site entity presence

AI models don't just read your website. They read the entire web, and they form opinions about your brand based on what they find across multiple sources. For a new website, this means you need to exist in more places than just your own domain.

Get listed in relevant directories

For most industries, there are 5-10 authoritative directories that AI models consistently cite. Get your brand listed there early. For B2B software, that might be G2, Capterra, and Product Hunt. For local businesses, it's Google Business Profile, Yelp, and industry-specific directories.

Publish on platforms AI models trust

Medium, Substack, LinkedIn articles, and industry publications are all sources that AI models cite regularly. Publishing there -- with links back to your site -- creates multiple citation pathways. You're not just hoping AI models find your site; you're putting your content in places they already trust.

Get into Reddit discussions

This is underused by most new websites. Reddit is heavily cited by AI models, particularly Perplexity. Find the subreddits where your target audience asks questions, and contribute genuinely useful answers. Don't spam links -- just be helpful. When AI models answer questions in your niche, they often pull from Reddit threads, and if your brand or content is mentioned there, that's a citation signal.

Earn backlinks from cited sources

A backlink from a domain that AI models already cite is worth more than a generic backlink. When you're doing outreach for links, prioritize sites that you've seen cited in AI responses for your target prompts. That's a signal that AI models trust that domain, and a link from it is a trust transfer.

Step 5: Build topical authority, not just individual pages

AI models favor sources that demonstrate deep expertise in a topic, not just one good article. For a new website, this means building content clusters -- groups of related pages that together cover a topic comprehensively.

The structure looks like this:

- A main "pillar" page that covers a broad topic at a high level

- Supporting pages that go deep on specific subtopics

- Internal links connecting them all

For example, if you're building a website about project management software, your pillar might be "Project management software: a complete guide." Supporting pages might cover specific use cases, comparisons with competitors, guides for specific team sizes, and so on.

This approach does two things. It signals to AI models that your site is a genuine authority on the topic, not just a one-hit wonder. And it creates multiple entry points for citations -- AI models might cite your pillar for broad questions and your supporting pages for specific ones.
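A cluster only works if the internal links actually exist, which is easy to verify. The sketch below assumes one reading of "internal links connecting them all": the pillar links to every supporting page and each supporting page links back. The paths, the `links` map, and the `missing_cluster_links` helper are hypothetical.

```python
def missing_cluster_links(pillar: str, supporting: list, links: dict) -> list:
    """Return (source, target) pairs that should be linked but are not.

    links maps each page path to the set of internal paths it links to.
    """
    missing = []
    for page in supporting:
        if page not in links.get(pillar, set()):
            missing.append((pillar, page))
        if pillar not in links.get(page, set()):
            missing.append((page, pillar))
    return missing


# Hypothetical cluster for a project management software site.
pillar = "/pm-software-guide"
supporting = ["/pm-software-for-small-teams", "/pm-software-comparison"]
links = {
    "/pm-software-guide": {"/pm-software-for-small-teams", "/pm-software-comparison"},
    "/pm-software-for-small-teams": {"/pm-software-guide"},
    "/pm-software-comparison": set(),  # forgot to link back to the pillar
}

missing = missing_cluster_links(pillar, supporting, links)
for source, target in missing:
    print(f"{source} should link to {target}")
```

In practice you would build the `links` map from a crawl of your own site rather than typing it in.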

Step 6: Track your AI visibility from day one

Most new websites don't start tracking AI visibility until they're already established. That's a mistake. Tracking from the beginning gives you:

- A baseline to measure progress against

- Early signals about which content is working

- Data on which AI models are citing you (they behave differently)

- Visibility into which competitors you're gaining on

You don't need to obsess over the numbers when they're low. But knowing that you went from zero citations to five in a month, and that those citations came from Perplexity for a specific type of query, tells you something actionable.
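If you are logging results by hand before adopting a tool, a baseline can be as simple as counting citations per month and per model. The sketch below assumes a hypothetical log format (one record per prompt you checked) and made-up domains; the `citations_by_month_and_model` helper is illustrative only.

```python
from collections import Counter


def citations_by_month_and_model(observations: list, my_domain: str) -> Counter:
    """Count answers that cited my_domain, keyed by (month, model)."""
    counts = Counter()
    for obs in observations:
        if my_domain in obs["cited_domains"]:
            counts[(obs["month"], obs["model"])] += 1
    return counts


# Hypothetical log: one record per prompt checked in a given AI model.
observations = [
    {"month": "2026-01", "model": "Perplexity", "prompt": "crm pricing for nonprofits",
     "cited_domains": ["reviews.example"]},
    {"month": "2026-02", "model": "Perplexity", "prompt": "crm pricing for nonprofits",
     "cited_domains": ["mysite.example", "reviews.example"]},
    {"month": "2026-02", "model": "ChatGPT", "prompt": "crm pricing for nonprofits",
     "cited_domains": ["bigcrm.example"]},
    {"month": "2026-02", "model": "Perplexity", "prompt": "best crm for a 5-person startup",
     "cited_domains": ["mysite.example"]},
]

counts = citations_by_month_and_model(observations, "mysite.example")
for (month, model), total in sorted(counts.items()):
    print(month, model, total)
```

Keeping the model in the key is what lets you see that, say, Perplexity started citing you before ChatGPT did.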

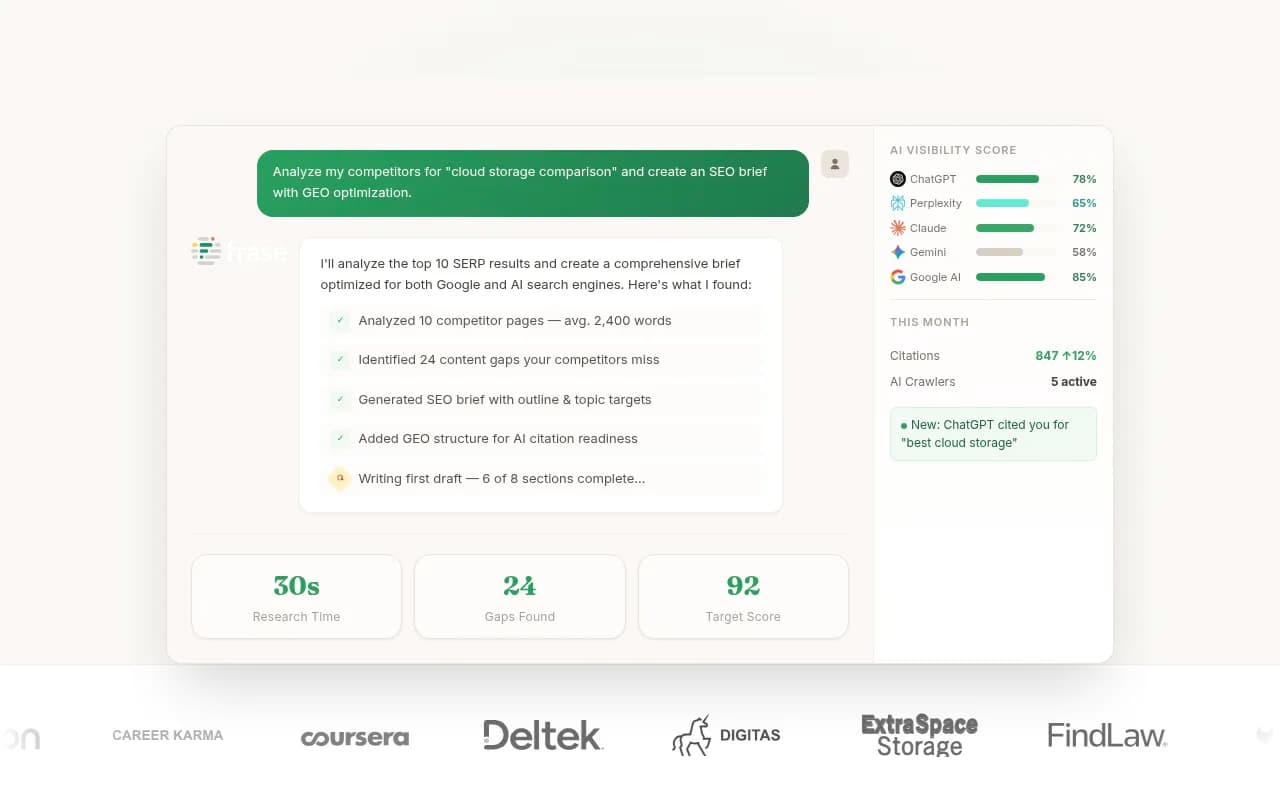

Several tools can help with this. For comprehensive tracking across multiple AI models with the ability to also act on what you find, Promptwatch covers the full loop -- from identifying gaps to generating content to tracking results. For more focused monitoring, tools like Otterly.AI and Peec AI offer affordable entry points.

How long does it actually take?

Honest answer: it varies, but most new websites see their first AI citations within 4-8 weeks of publishing well-optimized content, assuming the technical foundation is solid and the content genuinely answers specific questions.

Meaningful, consistent visibility -- where you're appearing regularly for multiple prompts across multiple AI models -- typically takes 3-6 months of consistent effort. Competitive prompts in crowded niches can take longer.

The research from Medium's 2026 AI visibility guide aligns with this: measurable improvements within 3-6 months for most sites, 6-12 months for highly competitive keywords.

What accelerates the timeline:

- Publishing content that fills genuine gaps (prompts with weak existing citations)

- Getting cited on high-trust third-party platforms early

- Building entity presence across multiple sources simultaneously

- Fixing technical issues that block AI crawlers

What slows it down:

- Generic content that doesn't answer specific questions

- Blocking AI crawlers (even accidentally)

- Focusing only on your own site and ignoring off-site presence

- Chasing high-competition prompts before establishing any baseline authority

A practical 90-day plan for new websites

Here's a concrete sequence that works for most new websites:

| Timeframe | Focus | Key actions |
|---|---|---|
| Week 1-2 | Technical foundation | Schema markup, robots.txt audit, Core Web Vitals, AI crawler access |
| Week 3-4 | Prompt research | Map 20-30 target prompts, identify gaps in current citations |
| Week 5-8 | Content creation | Publish 8-12 focused articles targeting specific prompts |
| Week 6-8 | Off-site presence | Directory listings, one guest post or publication, Reddit contributions |
| Week 8-10 | Content expansion | Add FAQ schema, expand pillar pages, publish supporting content |
| Week 10-12 | Track and iterate | Review first citation data, double down on what's working, fix gaps |

This isn't a rigid formula -- adjust based on your niche and resources. But the sequence matters: get the technical foundation right before creating content, and start tracking before you think you have anything worth tracking.

Common mistakes new websites make

Waiting for domain authority before trying. AI citation authority and traditional domain authority are related but not identical. A new site with great content on a specific topic can get cited before it has strong DA.

Creating content for humans but not for AI parsing. Content that reads well but has no structure -- no clear headings, no direct answers, dense paragraphs -- is hard for AI models to extract citations from.

Ignoring which AI model is citing what. ChatGPT, Perplexity, and Google AI Overviews have different citation patterns. A source cited frequently in Perplexity might not appear in ChatGPT at all. Tracking by model helps you understand where to focus.

Treating AI search as separate from traditional SEO. They're not separate. Strong traditional SEO signals (backlinks, topical authority, technical health) all contribute to AI citation authority. You're not choosing between them.

Not updating content. AI models favor current information. A page published in 2024 that hasn't been updated is less likely to be cited for time-sensitive topics than a page updated in 2026. Build a content maintenance habit early.

Tools worth knowing about

Beyond Promptwatch for end-to-end tracking and optimization, other tools are worth considering depending on your budget and focus, including the more focused monitoring options mentioned earlier, Otterly.AI and Peec AI.

For content creation specifically, tools that help you research and structure AI-optimized content can speed up the process significantly.

The window is real, but it won't stay open forever

The research from Attorney Journals put it plainly: every day you delay, competitors are getting indexed and cited while you're not. That's true. But the flip side is also true -- the firms and websites that move now are building citation authority that will compound over time.

New websites have a genuine shot at AI search visibility in 2026 because the ecosystem is still being shaped. AI models are still figuring out which sources to trust for which topics. A new site that publishes specific, well-structured, genuinely useful content -- and builds the off-site presence to back it up -- can earn citations that would take years to achieve in traditional search.

The work is the same work good content marketers have always done: understand what your audience needs to know, answer it better than anyone else, and make it easy for the systems that surface information to find and trust you. The systems have changed. The fundamentals haven't.