Key takeaways

- Cheap GEO tools typically offer monitoring-only dashboards with limited data coverage, leaving you with incomplete visibility scores and no path to fixing what's broken.

- Bad AI visibility data leads to real strategic mistakes: optimizing for the wrong prompts, missing competitor gaps, and publishing content that AI models never cite.

- The hidden costs compound over time through wasted content spend, missed revenue from AI-driven traffic, and the opportunity cost of acting on misleading metrics.

- The GEO market in 2026 is crowded with entry-level trackers that look similar on a pricing page but differ enormously in data quality, model coverage, and actionability.

- Investing in a platform that closes the loop -- from gap analysis to content creation to traffic attribution -- pays back faster than cycling through cheap tools that only show you a number.

There's a familiar pattern in software buying. A team needs a new capability, someone finds a cheaper option that looks roughly equivalent, and the budget gets approved. Six months later, the team is frustrated, the data doesn't make sense, and they're shopping again.

In traditional SEO, this cycle was annoying but recoverable. You'd lose a few months of momentum. In AI search visibility, the stakes are higher and the mistakes are harder to spot -- because bad data doesn't look like bad data. It looks like a dashboard full of numbers.

This guide is about what cheap GEO tools actually cost you in 2026, beyond the monthly subscription price.

The GEO tool market in 2026: a lot of dashboards, not much depth

The market for AI visibility tools has exploded. There are now dozens of platforms promising to track how your brand appears in ChatGPT, Perplexity, Claude, Gemini, and other AI models. Many of them are priced to attract early adopters: $29/month, $49/month, sometimes free.

The pricing is appealing. The problem is that most of these tools are built around a simple loop: send a prompt to an AI model, check if your brand appears in the response, log the result. That's it. No context about why you appeared or didn't. No data on what content the AI actually cited. No way to act on what you find.

You end up with a visibility score that goes up and down, and no reliable way to know whether the movement is real, meaningful, or caused by the tool's own sampling methodology.

This isn't a hypothetical concern. The quality of AI visibility data varies enormously depending on:

- How many prompts are tracked (and whether they're the right prompts for your category)

- Which AI models are queried, and how often

- Whether the tool accounts for response variation (AI models don't give the same answer twice)

- Whether citation sources are tracked, not just brand mentions

- Whether prompt volume and difficulty data is available to help you prioritize

A tool that checks 20 prompts once a week gives you a very different picture than one tracking 350 prompts across 10 models with statistical sampling to account for response variance. Both might call themselves "AI visibility platforms." Only one is giving you data you can actually trust.

What bad data actually costs you

Wasted content spend

The most direct cost of low-quality GEO data is publishing content that doesn't move the needle.

If your tool can't tell you which specific prompts your competitors appear in and you don't, you're guessing at content strategy. You might publish five articles on topics where you already have decent visibility, while completely missing the 12 prompts where a competitor is getting cited every time and you're invisible.

Content production isn't cheap. A well-researched article costs real money in writer time, editing, and publishing. If your gap analysis is wrong, that spend is largely wasted.

The better tools -- the ones that do proper answer gap analysis -- show you exactly which prompts competitors appear in that you don't. You can see the specific questions AI models are answering, the content they're citing, and what's missing from your site. That's the difference between a content strategy and a content guess.

Promptwatch is one of the few platforms that does this end-to-end: it identifies the gaps, then has a built-in AI writing agent that generates content specifically engineered to get cited by AI models, grounded in citation data from over 880 million analyzed responses.
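
To make the idea of an answer gap concrete, here is a minimal Python sketch. It assumes you already have a list of (prompt, brand) pairs, one per brand mention observed in an AI response. That input shape, the function name, and the brand names in the usage note are all hypothetical, and this is not how any particular platform implements gap analysis.

```python
from collections import defaultdict


def answer_gaps(observations, our_brand):
    """Map each prompt where our_brand never appears to the brands that do.

    observations: iterable of (prompt, brand) pairs (hypothetical shape).
    """
    brands_by_prompt = defaultdict(set)
    for prompt, brand in observations:
        brands_by_prompt[prompt].add(brand)

    # A gap is a prompt where others are mentioned and we are not.
    return {
        prompt: sorted(brands)
        for prompt, brands in brands_by_prompt.items()
        if our_brand not in brands
    }
```

Calling `answer_gaps(rows, "OurCo")` on a set of logged mentions returns only the prompts where competitors are cited and you are not, which is the list a content plan would start from.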

Decisions made on noise, not signal

AI model responses vary. Ask ChatGPT the same question twice and you'll often get different answers, different sources cited, different brand mentions. A cheap tool that queries each prompt once and logs the result is giving you a single data point and presenting it as a trend.

This creates a specific kind of problem: you see your visibility score drop, you panic, you make changes. Or you see it rise, you assume what you did worked, and you double down. Neither conclusion is necessarily correct if the underlying data is just noise.

Reliable AI visibility data requires statistical sampling -- querying each prompt multiple times across multiple sessions to get a stable estimate of how often you appear. It requires tracking across multiple AI models, because your visibility on Perplexity and your visibility on ChatGPT can tell very different stories. And it requires prompt volume data so you know whether the prompts you're tracking actually matter to real users.

Without these things, you're optimizing against a metric that doesn't reliably reflect reality.
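
The difference between a single query and repeated sampling can be shown with a short sketch. It assumes each run of a prompt is recorded as a hit (brand appeared) or a miss, and it uses the Wilson score interval as one common way to put an uncertainty range on an appearance rate. The function name and the choice of interval are illustrative assumptions, not a description of any vendor's methodology.

```python
import math


def wilson_interval(hits, runs, z=1.96):
    """Wilson score interval for an appearance rate; z=1.96 is roughly 95%."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = hits / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    # Clamp to [0, 1] to absorb floating-point overshoot at the edges.
    return max(0.0, centre - half), min(1.0, centre + half)


# One query where the brand appeared: the range is too wide to act on.
single = wilson_interval(1, 1)
# Twenty queries with twelve appearances: a noticeably tighter range.
sampled = wilson_interval(12, 20)
```

With one run, the plausible appearance rate spans most of the range from low to certain. With twenty runs the interval narrows, which is why a score built on a single query per prompt says little about whether a change is real.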

Missed traffic attribution

One of the most underappreciated costs of cheap GEO tools is what they don't measure: actual traffic from AI search.

Knowing your brand appears in AI responses is useful. Knowing that those appearances are driving 3,000 sessions a month to specific pages -- and that those sessions convert at a higher rate than organic search -- is what justifies the investment and guides where to focus next.

Most entry-level GEO tools stop at visibility monitoring. They don't connect to your actual traffic data. You can't tell whether your AI visibility improvements are translating into revenue, which means you can't make a business case for continued investment, and you can't prioritize the pages and prompts that are actually worth your time.

This is a real gap. The platforms that offer traffic attribution -- through a code snippet, Google Search Console integration, or server log analysis -- give you a fundamentally different level of insight than those that don't.
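
As a rough illustration of what attribution involves, the sketch below counts sessions per landing page whose referrer points at an AI assistant. The hostname list is an assumption and is deliberately incomplete, the (landing_page, referrer) input shape is hypothetical, and many AI-driven visits arrive without any referrer, so treat this as a lower bound rather than a full attribution method.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames; extend or correct for your own traffic.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def ai_source(referrer):
    """Return the AI source name for a referrer URL, or None."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def sessions_by_ai_source(sessions):
    """Count sessions per (source, landing_page) for AI referrers only."""
    counts = Counter()
    for landing_page, referrer in sessions:
        source = ai_source(referrer)
        if source:
            counts[(source, landing_page)] += 1
    return counts
```

Joining these counts with conversion data per landing page is what turns a visibility score into a figure you can put in a budget conversation.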

The crawler blind spot

Here's something most cheap tools don't tell you: AI models have to crawl your website before they can cite it. If ChatGPT's crawler can't access your pages, or if it's hitting errors, or if it's only crawling your homepage and ignoring your most relevant content -- your visibility will be low regardless of how good your content is.

Cheap GEO tools don't show you this. They just show you that you're not appearing, with no explanation of why.

AI crawler logs -- real-time records of which AI bots visited your site, which pages they read, and what errors they encountered -- are a capability that most entry-level platforms simply don't have. Without them, you're missing a whole category of fixable problems.
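
If you have access to your own web server logs, a basic version of this check is possible without any platform. The sketch below assumes access logs in the common "combined" format and looks for three user-agent tokens that AI vendors publish for their crawlers (GPTBot, ClaudeBot, PerplexityBot). The token list is illustrative rather than exhaustive, the function name is hypothetical, and this batch approach is not the real-time view described above.

```python
import re
from collections import Counter

# Illustrative, incomplete list of AI crawler user-agent tokens.
AI_BOT_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Matches the request, status, size, referrer, and user agent fields
# of a combined-format access log line (assumed log format).
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_crawls(lines):
    """Return (pages, errors): request and error counts per (bot, path)."""
    pages = Counter()
    errors = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        agent = match.group("agent")
        bot = next((token for token in AI_BOT_TOKENS if token in agent), None)
        if bot is None:
            continue
        key = (bot, match.group("path"))
        pages[key] += 1
        if int(match.group("status")) >= 400:
            errors[key] += 1
    return pages, errors
```

Pages that never show up in `pages`, and paths that appear in `errors`, are the fixable problems a visibility score alone will not surface.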

The compounding cost problem

The costs above don't stay flat. They compound.

Every month you're running on bad data, you're making content decisions based on wrong priorities. Every month you're missing traffic attribution, you're unable to make the business case for proper GEO investment. Every month your AI crawler issues go undiagnosed, your content is being ignored by the models you're trying to rank in.

Meanwhile, competitors using better tools are finding the gaps, publishing the right content, and accumulating citations. That head start may be hard to erase: if a competitor's content has been cited consistently for six months and yours hasn't, you're not starting from zero when you finally fix the problem. You're starting from behind.

This is the real hidden cost of cheap GEO tools. It's not just the wasted subscription fee. It's the compounding opportunity cost of acting on bad information while competitors act on good information.

What separates cheap tools from genuinely useful ones

Here's a practical comparison of what you get at different levels of the market:

| Capability | Entry-level tools | Mid-tier tools | Full-platform tools |
|---|---|---|---|
| Brand mention tracking | Yes | Yes | Yes |
| Multi-model coverage | Sometimes (2-3 models) | Usually (5-7 models) | Yes (10+ models) |
| Prompt volume/difficulty data | Rarely | Sometimes | Yes |
| Answer gap analysis | No | Rarely | Yes |
| Citation source tracking | No | Sometimes | Yes |
| AI crawler logs | No | No | Yes |
| Content generation | No | No | Yes |
| Traffic attribution | No | Rarely | Yes |
| Reddit/YouTube citation tracking | No | No | Yes |
| Statistical sampling for accuracy | Rarely | Sometimes | Yes |

The gap between entry-level and full-platform isn't just about features. It's about whether the tool is built to help you take action or just to show you a number.

Tools like Otterly.AI and Peec AI are popular entry points -- they're affordable and easy to use, but they're fundamentally monitoring dashboards. You can see where you stand, but you can't do much about it from within the platform.

AthenaHQ and Profound offer stronger feature sets but are primarily focused on tracking and analysis rather than optimization and content generation.

The platforms that actually close the loop -- showing you the gap, helping you create content to fill it, then tracking whether that content gets cited -- are a much smaller group.

The SEO parallel: we've seen this before

This isn't the first time the digital marketing industry has gone through a "cheap tools vs. quality tools" reckoning.

In traditional SEO, the market learned the hard way that cheap link-building tools, automated keyword-stuffing software, and low-quality audit platforms could do real damage. Not just by wasting money, but by actively harming rankings through penalties and by creating a false sense of progress that delayed real investment.

The GEO market is earlier in that cycle, but the pattern is recognizable. A lot of tools are being built quickly to capture a hot market. Many of them are thin wrappers around basic API calls to AI models. They look like serious platforms on a pricing page, but they don't have the data infrastructure, the sampling methodology, or the product depth to give you reliable, actionable information.

The question isn't whether cheap GEO tools are a bad deal in principle. Some entry-level tools are genuinely useful for getting started. The question is whether you're treating a monitoring dashboard as a strategy tool -- and whether you're making real decisions based on data that isn't reliable enough to support them.

How to evaluate a GEO tool before you commit

Before signing up for any AI visibility platform, ask these questions:

How many prompts do you track, and how are they selected? A tool tracking 20 generic prompts tells you almost nothing about your specific competitive situation. You want prompts that reflect how real users in your category actually query AI models.

How do you handle response variation? If the answer is "we query each prompt once," the data isn't statistically reliable. Look for platforms that sample multiple times and give you confidence intervals or averaged scores.

Which AI models do you cover? ChatGPT and Perplexity are obvious, but Google AI Overviews, Claude, Gemini, Grok, and DeepSeek all have meaningful user bases. Coverage matters.

Can you show me what's causing my visibility gaps? A tool that shows you a low score without explaining why is a monitoring tool, not an optimization tool. You want to see which specific content is missing, which competitors are filling the gap, and what you'd need to publish to compete.

Do you track AI crawler activity on my site? If not, you're missing a whole category of fixable problems.

Can you connect AI visibility to actual traffic and revenue? This is the question that separates tools built for marketers from tools built for demos.

The real cost calculation

Let's put some rough numbers on this.

A cheap GEO tool might cost $49/month. A full-platform tool like Promptwatch runs from $99/month (Essential) to $579/month (Business), with agency and enterprise pricing above that.

The price difference looks significant. But consider what the cheap tool is actually costing you:

- If your content team publishes four articles per month based on gap analysis from a tool with unreliable data, and two of those articles are targeting the wrong prompts, you've wasted roughly half your content budget on misdirected work.

- If your AI visibility is actually driving 5,000 sessions/month that you can't attribute because your tool doesn't do traffic tracking, you're making budget decisions without knowing one of your most important traffic sources.

- If AI crawlers are hitting errors on your key pages and you don't know it, your visibility will stay low regardless of how much content you publish.

The math changes quickly. The question isn't whether a $49/month tool is cheaper than a $249/month tool. It's whether the $49/month tool is giving you information that's worth acting on.
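
The arithmetic behind that comparison can be written out in a few lines. The $49 and $249 prices and the "two of four articles misdirected" scenario come from the text above. The $500 cost per article is a purely hypothetical placeholder, so substitute your own figure.

```python
def wasted_content_spend(misdirected_articles, cost_per_article):
    """Monthly spend on articles aimed at the wrong prompts."""
    return misdirected_articles * cost_per_article


def breakeven_articles(cheap_price, better_price, cost_per_article):
    """Misdirected articles per month the pricier tool must prevent to pay for itself."""
    return (better_price - cheap_price) / cost_per_article


COST_PER_ARTICLE = 500  # hypothetical; not a figure from this article

wasted = wasted_content_spend(2, COST_PER_ARTICLE)        # 1000
effective_cheap_cost = 49 + wasted                        # 1049
breakeven = breakeven_articles(49, 249, COST_PER_ARTICLE)  # 0.4
```

Under that assumed article cost, the cheap tool's effective monthly cost is $1,049 rather than $49, and the $249 tool pays for the price difference if it prevents less than half of one misdirected article per month.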

What good looks like

The best GEO platforms in 2026 share a few characteristics. They're built around a cycle, not a dashboard: find the gaps, create content to fill them, track whether it works, repeat. They have real data infrastructure behind them -- not just API calls to AI models, but citation databases, crawler log analysis, and traffic attribution. And they're honest about what they don't know, rather than presenting noisy data as confident scores.

Promptwatch tracks across 10 AI models, has processed over 1.1 billion citations and prompts, offers built-in AI content generation grounded in real citation data, and connects visibility to actual traffic through multiple attribution methods. That's a meaningful difference from a tool that checks whether your brand name appears in a handful of AI responses.

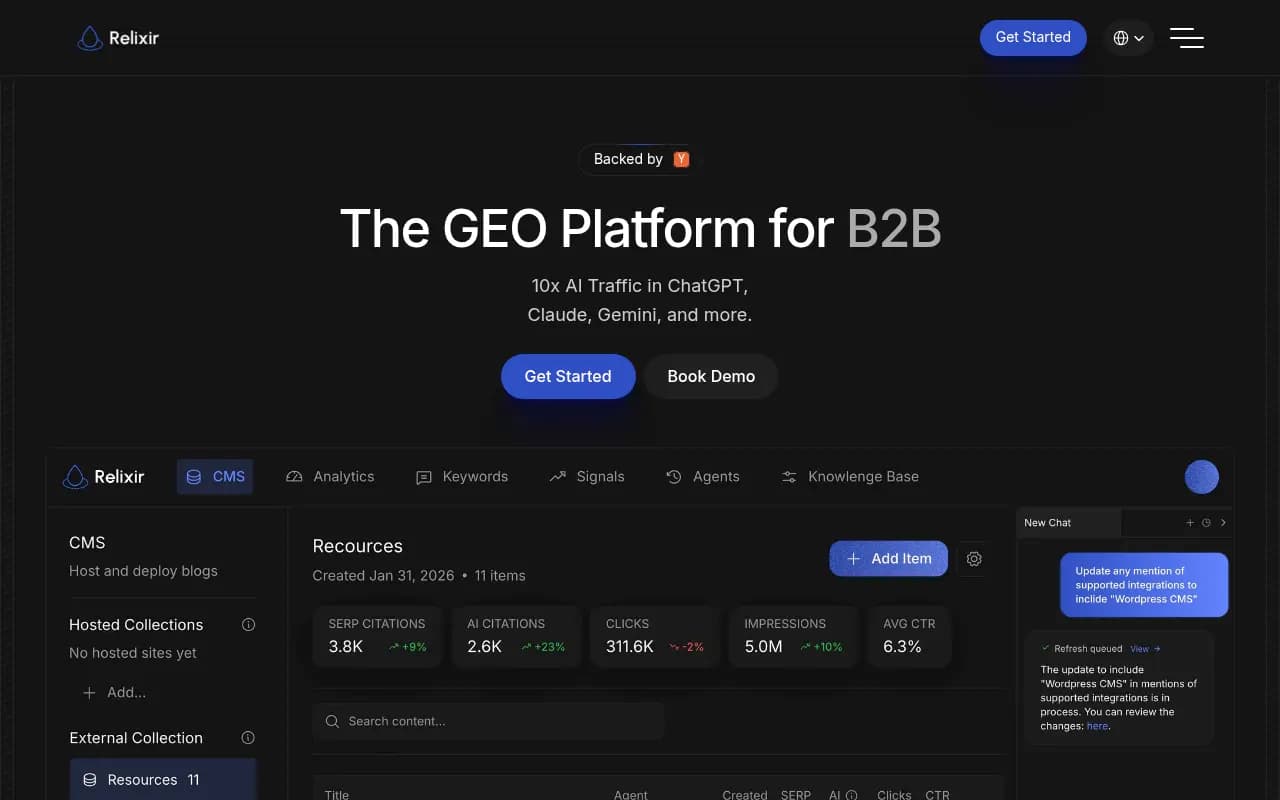

Other platforms worth evaluating at the more capable end of the market include Ranksmith, Gauge, and Relixir, each of which offers more than basic monitoring.

The bottom line

Cheap GEO tools aren't always worthless. If you're just getting started and want to understand the basic concept of AI visibility before committing to a real budget, an entry-level tool can be a reasonable first step.

But if you're making actual content decisions, budget allocations, or competitive strategy based on data from a monitoring-only dashboard with limited model coverage and no statistical sampling, you're not saving money. You're spending money on bad information and then spending more money acting on it.

In a market where AI search is already driving meaningful traffic for many categories -- and where that traffic share is growing -- the cost of bad GEO data isn't theoretical. It's the gap between where your brand appears in AI responses and where your competitors do. Every month that gap widens, it gets harder to close.

The tools that help you close it are worth paying for.