Key takeaways

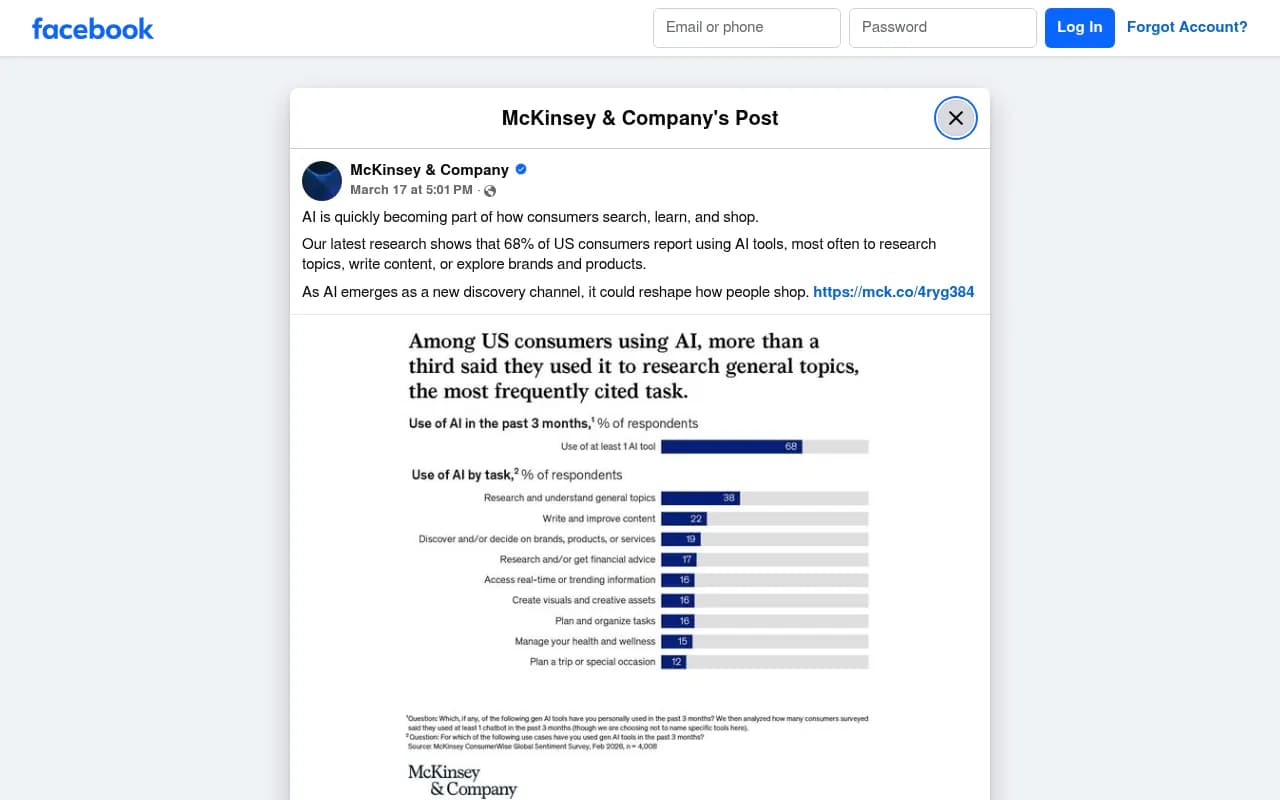

- 68% of consumers already use AI tools as part of how they search, learn, and shop, according to McKinsey's 2026 research

- AI shopping is still primarily a discovery and research tool -- not a purchase tool. Most buyers still complete transactions on Amazon or brand sites

- Query behavior has shifted dramatically: people now ask full conversational questions instead of short keyword phrases, which changes how brands need to write and structure content

- Brands that aren't visible in AI-generated answers are losing consideration before the shopper ever reaches a product page

- Tracking and optimizing for AI search visibility has become a distinct discipline from traditional SEO

Something shifted in how people shop, and it happened faster than most brands were ready for.

Consumers who once opened Google to research a product are now opening ChatGPT, Perplexity, or Gemini instead. They're not just searching differently -- they're asking different questions, expecting different answers, and making decisions at a different point in the funnel. By the time many of them land on a product page, they've already decided what they want.

That's the core tension in AI shopping right now. The discovery layer has been rebuilt. The transaction layer hasn't. And brands are caught in the middle, trying to figure out where they need to show up and how.

This guide covers what's actually happening in 2026 -- the real data, the real limitations, and what brands need to do about it.

The 68% number that should get your attention

McKinsey's latest consumer research found that 68% of consumers are already using AI as part of how they search, learn, and shop. That's not a niche behavior anymore. It's the majority.

What's interesting about that number is what it doesn't tell you. It doesn't mean 68% of purchases are being initiated through AI. It means AI has become part of the research and discovery process for most consumers -- the phase where they figure out what they want, which brands exist, and what other people think.

That's actually the most important phase for brand visibility. If you're not showing up when someone asks ChatGPT "what's the best [product category] for [use case]," you're not in the consideration set. You don't get a second chance at that moment.

What AI shopping actually looks like in 2026

Here's the honest picture: AI shopping is a much smarter search bar. It is not, yet, an autonomous purchasing agent.

PYMNTS ran a useful real-world test of this. A writer tried to use an LLM to find and buy a specific toaster -- a product she already knew she wanted, with all the details provided upfront. The AI produced a reasonable list of options. It didn't include the brand she wanted. More importantly, it couldn't confirm stock availability, verify the actual current price, or place the order. She ended up going to Amazon.

That test has been repeated periodically since, and the LLMs have improved. They're faster, more conversational, better at surfacing relevant brands. But the fundamental gap remains: AI can help you decide what to buy, but the infrastructure for actually executing the purchase through AI isn't there yet. Real-time inventory, live pricing, checkout -- these are still handled by retailers and marketplaces.

This matters for how brands should think about AI search. The goal right now isn't to get someone to buy through ChatGPT. The goal is to get recommended by ChatGPT so the buyer comes to you already convinced.

How query behavior has changed

The shift in how people type (or speak) their searches is one of the most practically significant changes for brands.

Old behavior: "running shoes flat feet"

New behavior: "What are the best running shoes for flat feet if I run three times a week and need good arch support under $150?"

That's not a trivial difference. The second query contains intent signals, budget, use frequency, and a specific physical need. AI systems can process all of that and return a genuinely tailored recommendation. Traditional keyword-based search couldn't do that well.

For brands, this means:

- Short, keyword-stuffed product descriptions don't serve AI systems well

- Content that answers specific questions in natural language gets cited more often

- Use cases, scenarios, and "who this is for" framing matter more than feature lists

- FAQ-style content, comparison articles, and "best for X" framing are the formats AI models pull from most reliably

The ALM Corp analysis of 2026 search behavior makes a good point here: search hasn't ended, but it has been redistributed. Users move between traditional search, AI summaries, brand sites, marketplaces, and review forums as part of one connected journey. Brands that only optimize for one of those touchpoints are missing most of the path.

The three platforms reshaping product discovery

ChatGPT

ChatGPT is where a huge amount of product research now happens, particularly for considered purchases. Users ask open-ended questions, compare options in conversation, and increasingly interact with ChatGPT's shopping carousels -- product cards that appear directly in responses for certain queries.

The shopping carousel feature is still rolling out and varies by query type, but it represents a real commercial surface. Brands that appear in those carousels get visibility that functions more like a paid placement than an organic result -- except it's driven by what the model thinks is most relevant, not by ad spend.

ChatGPT also draws heavily from web content it has crawled, which means your published content -- articles, comparison pages, product descriptions -- directly influences whether you get cited.

Perplexity

Perplexity has carved out a distinct position as the AI search engine for people who want sourced, verifiable answers. It cites its sources inline, which means brands and publishers can actually see when they're being referenced.

For product discovery, Perplexity tends to surface content from review sites, editorial publications, Reddit discussions, and brand pages. It rewards content that is specific, factual, and well-structured. Vague marketing copy doesn't get cited. Detailed, honest product information does.

The platform has grown fast. A Reddit thread from early 2026 captures the debate well: some users find Perplexity slower than Google's AI Mode but prefer the quality of responses. That tradeoff -- speed vs. depth -- is shaping which platform different buyer types gravitate toward.

Gemini and Google AI Overviews

Google's AI features are the ones with the most existing traffic at stake. AI Overviews appear at the top of Google results for a wide range of queries and can significantly reduce click-through to organic results below them.

For shopping queries, Google AI Mode (the more conversational version) is becoming a real factor. It can pull product information, compare options, and surface recommendations -- all before the user scrolls to traditional results or ads.

The important nuance here: Google's AI features tend to favor content that already ranks well organically. Strong traditional SEO is still a prerequisite, not a replacement strategy.

What this means for brand visibility

The practical implication is that there's now a new layer of visibility that sits above traditional search rankings. You can rank #1 on Google and still not appear in the AI-generated answer that most users read first.

This has created a new discipline: Generative Engine Optimization (GEO), sometimes called AEO (Answer Engine Optimization). The core idea is that you need to optimize not just for ranking algorithms but for what AI models choose to cite when they generate answers.

The factors that influence AI citations are different from traditional SEO signals:

| Factor | Traditional SEO | AI citation optimization |
|---|---|---|
| Content format | Keywords, headings, backlinks | Conversational, question-answering, structured data |
| What gets rewarded | Domain authority, link profile | Specificity, factual accuracy, source credibility |
| Query type | Short keyword phrases | Long, conversational, intent-rich questions |
| Measurement | Rankings, organic traffic | Citation frequency, mention share, AI traffic |
| Update cycle | Weeks to months | Can shift with model updates |

Brands that are investing in this now are building an advantage that compounds. AI models tend to cite sources they've cited before -- once you're in the training data or crawl index as a credible source for a topic, you're more likely to keep appearing.

The gap between discovery and purchase

It's worth being honest about the limits here. AI has transformed discovery. It has not transformed the transaction.

The infrastructure problem is real. AI models can tell you which product to buy, but they generally can't tell you whether it's in stock at a price you'd actually pay, or complete the purchase for you. Agentic commerce -- where an AI agent autonomously completes a purchase on your behalf -- is being worked on by multiple companies, but it's not mainstream yet.

What this means practically: the funnel has compressed at the top (discovery and research happen faster, with more AI involvement) but the bottom of the funnel still runs through traditional channels. Amazon, brand websites, and retail apps are still where transactions happen.

For brands, the strategic implication is to win the AI discovery moment so that when the buyer does go to complete the purchase, they're already looking for you specifically. Being the recommended brand in a ChatGPT response is worth more than a generic impression -- the buyer arrives with intent.

How to track whether you're actually showing up

You can't optimize what you can't measure. The problem is that traditional analytics tools don't capture AI referrals well. When someone reads a ChatGPT response that mentions your brand and then navigates directly to your site, that often shows up as direct traffic -- invisible in your attribution.
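Some AI-driven visits do arrive with a referrer, and those can at least be separated from ordinary traffic. Here is a minimal sketch of that bucketing in Python. The hostnames in `AI_REFERRER_HOSTS` are assumptions for illustration, not an authoritative list, and `classify_referrer` is a hypothetical helper rather than part of any analytics product. Visits with no referrer still land in the "direct" bucket, which is exactly the blind spot described above.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames for AI assistants (illustrative only).
# Check these against the referrers that actually appear in your own analytics.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer: str) -> str:
    """Return an AI assistant name, 'direct' for an empty referrer, or 'other'."""
    if not referrer:
        return "direct"
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]  # treat www.example.com and example.com alike
    return AI_REFERRER_HOSTS.get(host, "other")


# Hypothetical referrer values pulled from a session export.
for ref in ["https://chatgpt.com/", "https://www.perplexity.ai/search?q=toaster", ""]:
    print(repr(ref), "->", classify_referrer(ref))
```

Matching on the exact hostname keeps the sketch conservative: an unrecognized subdomain falls into "other" instead of being miscounted as AI traffic.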

A few approaches that actually work:

- Monitor AI responses directly by running your target queries through ChatGPT, Perplexity, and Gemini regularly and noting whether your brand appears

- Use server log analysis to identify AI crawler activity. Crawlers like Googlebot are well-known, but ChatGPT's crawler (OAI-SearchBot), Perplexity's bot, and Claude's crawler are all hitting sites now

- Track citation share: what percentage of responses to your key product queries mention your brand vs. competitors?
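The last two approaches in the list above are easy to prototype. The sketch below is a rough starting point under stated assumptions: `OAI-SearchBot` is the crawler named above, while the other user-agent tokens are assumptions to verify against each vendor's documentation. The brand names `Acme` and `Bolt` are hypothetical, and the responses would come from whatever manual or scripted query runs you already do. Citation share here is simply the number of responses mentioning a brand divided by the total number of responses.

```python
from collections import Counter

# User-agent substrings for AI crawlers. OAI-SearchBot comes from the text above;
# the remaining tokens are assumptions to confirm in each vendor's documentation.
AI_CRAWLER_TOKENS = ["OAI-SearchBot", "GPTBot", "PerplexityBot", "ClaudeBot"]


def count_ai_crawler_hits(log_lines):
    """Count access-log lines that contain a known AI crawler token."""
    hits = Counter()
    for line in log_lines:
        lowered = line.lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in lowered:
                hits[token] += 1
                break  # attribute each request to at most one crawler
    return hits


def citation_share(responses, brands):
    """Fraction of responses that mention each brand (case-insensitive substring match)."""
    if not responses:
        return {brand: 0.0 for brand in brands}
    total = len(responses)
    return {
        brand: sum(brand.lower() in r.lower() for r in responses) / total
        for brand in brands
    }


# Hypothetical sample data for illustration.
sample_log = [
    '203.0.113.5 - - "GET /guide HTTP/1.1" 200 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)"',
    '203.0.113.9 - - "GET /guide HTTP/1.1" 200 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120"',
]
sample_responses = ["Acme and Bolt both suit flat feet.", "Bolt is the usual pick.", "It depends."]

print(count_ai_crawler_hits(sample_log))
print(citation_share(sample_responses, ["Acme", "Bolt"]))
```

Substring matching is crude: it will miss misspellings and can over-count brand names that are also common words, so treat the numbers as a trend indicator, not a precise measurement.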

Platforms built specifically for this are worth knowing about. Promptwatch tracks brand visibility across 10 AI models including ChatGPT, Perplexity, Gemini, and Claude, and goes beyond monitoring to help identify content gaps and generate content designed to get cited. It's one of the few tools that closes the loop between "where am I invisible" and "here's what to publish to fix that."

For brands that want to track AI visibility without the full optimization suite, lighter monitoring-only options exist too.

What content actually gets cited by AI models

Based on what's observable from citation patterns across ChatGPT, Perplexity, and Gemini, a few content types consistently perform well for product discovery queries:

- Comparison articles ("X vs Y for [use case]") -- AI models love pulling from these because they're structured to answer decision-making questions

- "Best [product] for [specific person/situation]" listicles -- the more specific the use case, the better

- Detailed FAQ sections on product pages -- questions that match how real buyers actually phrase their research

- Third-party reviews and editorial coverage -- AI models weight independent sources heavily, so getting covered by review publications matters

- Reddit and forum discussions -- Perplexity in particular pulls from Reddit heavily; authentic user discussions about your product category can influence recommendations even if your brand isn't directly mentioned

What doesn't get cited much: generic marketing copy, thin product descriptions, and content that reads like it was written for a keyword rather than a human question.
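For the FAQ sections mentioned above, one common way to make question-and-answer content machine-readable is schema.org `FAQPage` markup. The sketch below builds that JSON-LD in Python. Whether any particular AI model reads or weights this markup is an assumption, not something the citation patterns above establish. The helper `faq_jsonld` and the sample question and answer are hypothetical.

```python
import json


def faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Hypothetical Q&A, phrased the way a buyer might actually ask it.
pairs = [
    (
        "Are these running shoes good for flat feet?",
        "They include a structured arch-support insole aimed at runners with flat feet.",
    )
]

# The printed JSON would go inside a <script type="application/ld+json"> tag on the page.
print(json.dumps(faq_jsonld(pairs), indent=2))
```

The point of the structure is the same as the point of the prose: each question is written as a real buyer would phrase it, and each answer is specific enough to quote on its own.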

The competitive reality

Here's the uncomfortable truth for brands that haven't started thinking about this: your competitors who are optimizing for AI visibility right now are building a lead that will be hard to close later.

AI models develop citation patterns. If a competitor's content has been consistently cited for "best [product] for [use case]" queries over the past year, that pattern is reinforced. Getting into the citation set later requires not just publishing good content but displacing an established source.

The brands winning in AI search right now tend to share a few characteristics: they publish specific, use-case-driven content at volume; they have strong coverage on third-party review and editorial sites; and they're actively monitoring their AI visibility rather than assuming their traditional SEO performance translates.

The shift from keyword search to conversational AI search isn't coming -- it's here. 68% of consumers are already there. The question for any brand is whether their content is ready to be cited when those consumers ask their questions.

Tools worth knowing about

Beyond the monitoring platforms, a few tools are specifically relevant for the AI shopping context:

For enterprise brands with complex product catalogs, Azoma focuses specifically on AI shopping optimization across ChatGPT, Amazon's Rufus AI, and other shopping-specific AI surfaces.

Other tools in this space cover two adjacent jobs: tracking how AI models cite and talk about your brand across multiple platforms, and optimizing content specifically to get cited in AI responses.

The tooling ecosystem is still maturing. Some of these platforms are a year old or less. But the core capability -- knowing whether AI models are recommending you, and being able to do something about it when they're not -- is no longer optional for brands that care about discovery.

The transaction layer of AI commerce will catch up eventually. When it does, the brands that built AI visibility early will have a significant head start.