Key takeaways

- Most GEO platforms stop at monitoring -- they show you visibility scores but don't tell you what to do about them.

- Benchmarking approaches vary wildly: some tools compare you to competitors prompt-by-prompt, others just show aggregate share-of-voice numbers.

- The most useful benchmarking includes prompt-level data, source attribution, and content gap identification -- not just a dashboard with a percentage.

- A handful of platforms (notably Promptwatch, Profound, and AthenaHQ) go deeper on competitive intelligence, while lighter tools like Otterly and Peec are better for quick spot-checks.

- If you want benchmarking that actually leads somewhere -- to content you can publish and track -- the shortlist gets much shorter.

The GEO tool market has exploded. A year ago there were maybe a dozen serious options. Now there are well over 50 platforms claiming to help brands "dominate AI search." Most of them look similar on a landing page: a dashboard, some visibility scores, a competitor comparison chart.

But when you get inside them, the differences are significant -- especially in how they handle benchmarking. That's the part that actually matters for strategy. Knowing you have 12% AI visibility is useless without knowing what your competitor has, which prompts they're winning, and why.

This guide breaks down how 10 of the most widely used GEO platforms approach competitive benchmarking in 2026. I've organized it around the questions that matter most to marketing and SEO teams: What does the benchmark actually measure? How granular does it get? And does it help you do anything about the gap?

What good benchmarking actually looks like

Before comparing tools, it's worth being clear about what competitive benchmarking in GEO should do.

At minimum, a benchmark tells you how often your brand appears in AI responses versus a competitor, across a defined set of prompts. That's table stakes.

Better benchmarking goes further:

- Which specific prompts is the competitor winning that you're not?

- What sources is the AI citing when it mentions them?

- Is the competitor being described more positively, more accurately, or more completely?

- Which AI models favor them -- and which ones are more neutral?

The best benchmarking connects those gaps to action: here's the content you're missing, here's what to write, here's how to track whether it worked.

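Most platforms in 2026 are still stuck at the first level: aggregate mention rates. A few are getting to the second, with prompt-level, source, and sentiment detail. Almost none have nailed the third, where the gaps turn into action.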
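To make the first two levels concrete, here is a minimal sketch of what a benchmark computes under the hood. It is not any vendor's implementation. The brand names, the prompts, and the `observations` structure are all made up for illustration; in practice the data would come from whatever export your tool provides.

```python
# Hypothetical observations: (prompt, set of brands mentioned in the AI response).
observations = [
    ("best crm for startups", {"acme", "rival"}),
    ("crm with email automation", {"rival"}),
    ("affordable crm tools", {"acme"}),
    ("crm for real estate agents", {"rival"}),
]


def mention_rate(observations, brand):
    """Level one: share of prompts whose response mentions the brand."""
    if not observations:
        return 0.0
    hits = sum(1 for _, brands in observations if brand in brands)
    return hits / len(observations)


def answer_gaps(observations, you, competitor):
    """Level two: prompts where the competitor is mentioned and you are not."""
    return [
        prompt
        for prompt, brands in observations
        if competitor in brands and you not in brands
    ]


print(mention_rate(observations, "acme"))   # 0.5
print(mention_rate(observations, "rival"))  # 0.75
print(answer_gaps(observations, "acme", "rival"))
```

The aggregate numbers (50% versus 75%) say you are behind. The gap list says where, which is the part you can actually act on.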

The 10 platforms, compared

1. Promptwatch

Promptwatch is the platform that comes closest to closing the full loop. Its competitive benchmarking is built around what it calls Answer Gap Analysis -- you see exactly which prompts your competitors appear in that you don't, not just an aggregate score.

The heatmap view lets you compare your AI visibility against multiple competitors across 10 different models (ChatGPT, Claude, Perplexity, Gemini, Grok, DeepSeek, Copilot, Meta AI, Mistral, and Google AI Overviews) simultaneously. You can filter by model, by prompt category, or by competitor -- which is more useful than it sounds when you're trying to prioritize.
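If it helps to picture the data behind a heatmap like this, the sketch below builds a model-by-brand grid of mention rates. It is an assumption about the general shape of such a view, not Promptwatch's actual code or API, and the `rows` data and brand names are invented.

```python
from collections import Counter

# Hypothetical rows: (model, prompt, set of brands mentioned in the response).
rows = [
    ("ChatGPT", "best crm for startups", {"acme", "rival"}),
    ("ChatGPT", "affordable crm tools", {"rival"}),
    ("Perplexity", "best crm for startups", {"acme"}),
    ("Perplexity", "affordable crm tools", {"acme", "rival"}),
]


def visibility_matrix(rows, brands):
    """Return {model: {brand: mention rate}} for the given brands."""
    totals = Counter()  # responses seen per model
    hits = Counter()    # mentions per (model, brand)
    for model, _prompt, mentioned in rows:
        totals[model] += 1
        for brand in brands:
            if brand in mentioned:
                hits[(model, brand)] += 1
    return {
        model: {brand: hits[(model, brand)] / totals[model] for brand in brands}
        for model in totals
    }


matrix = visibility_matrix(rows, ["acme", "rival"])
for model, cells in matrix.items():
    print(model, cells)
```

Each cell is one square of the heatmap. Filtering by model or competitor is then just a matter of selecting rows or columns of this grid.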

What separates Promptwatch from most of the field is what happens after you find a gap. The built-in AI writing agent generates articles, listicles, and comparisons grounded in an analysis of more than 880 million citations. It's not generic content -- it's specifically designed to fill the gaps the benchmark identified. Then page-level tracking shows whether the new content actually improved your visibility.

For agencies and larger teams, the multi-site management, Looker Studio integration, and API access make it practical to run this workflow at scale. Pricing runs from $99/month (Essential) to $579/month (Business), with custom plans for agencies.

2. AthenaHQ

AthenaHQ takes a data-heavy approach to benchmarking, with strong prompt volume tracking and a GEO score that aggregates your performance across models. The competitive comparison view shows share-of-voice by prompt category, which is useful for understanding where you're losing ground at a topical level.

The Shopify integration is a differentiator for ecommerce brands -- it connects AI visibility to product-level data, which most platforms don't do. Prompt volume estimates help you prioritize which gaps are worth closing first.

Where AthenaHQ falls short is on the "now what?" side. The benchmarking is solid, but there's no built-in content generation to act on what you find. You're left exporting data and figuring out the content strategy yourself. At $295/month, it's a meaningful investment for a monitoring-focused tool.

3. Profound

Profound has been one of the more serious enterprise-oriented platforms in the GEO space. Its benchmarking includes sentiment analysis alongside visibility scores, so you can see not just whether you appear in AI responses but how you're described relative to competitors.

The source attribution is reasonably detailed -- you can see which domains are being cited when AI models discuss your category, which helps identify where to build authority. The interface is clean and the data is reliable.

The main limitations are price and scope. Profound is built for enterprise teams with enterprise budgets, and it doesn't have the content optimization layer that would make the benchmarking actionable for most marketing teams. It's a strong monitoring tool that stops short of being an optimization platform.

4. Scrunch AI

Scrunch AI positions itself around influencer and source signal analysis -- understanding which content creators, publishers, and domains are shaping AI responses in your category. That's a genuinely useful angle for competitive benchmarking that most platforms ignore.

If your competitor is winning in AI responses partly because a popular Reddit thread or YouTube channel keeps recommending them, Scrunch surfaces that. The competitive benchmarking is less about prompt-by-prompt comparison and more about understanding the content ecosystem that's influencing AI outputs.

The tradeoff is that it's narrower in scope than full-stack GEO platforms. It's excellent for a specific type of competitive intelligence but doesn't give you the broad visibility tracking that Promptwatch or AthenaHQ provide.

5. Otterly.AI

Otterly sits at the lightweight end of the market. It's fast to set up, affordable, and gives you a quick read on brand mentions across a handful of AI models. The competitive benchmarking is basic -- you can compare your mention rate against competitors across a set of prompts, but the depth stops there.

No source attribution, no content gap analysis, no crawler logs. What you get is a clean dashboard showing whether you're mentioned more or less than competitors, updated regularly. For small teams or individuals who just want a sanity check on their AI visibility, it works fine. For anyone who needs to build a strategy from the data, it's not enough.

6. Peec AI

Peec AI is similar to Otterly in positioning -- a monitoring-first tool with a focus on multi-language tracking, which is its real differentiator. If you're operating across multiple markets and languages, Peec handles that better than most.

The competitive benchmarking shows competitor mention rates and source analysis at a basic level. Prompt organization is decent -- you can group prompts by topic and see how your performance varies by category. Daily tracking keeps the data reasonably fresh.

Like Otterly, it's a monitoring tool. The benchmark tells you the score; it doesn't help you change it.

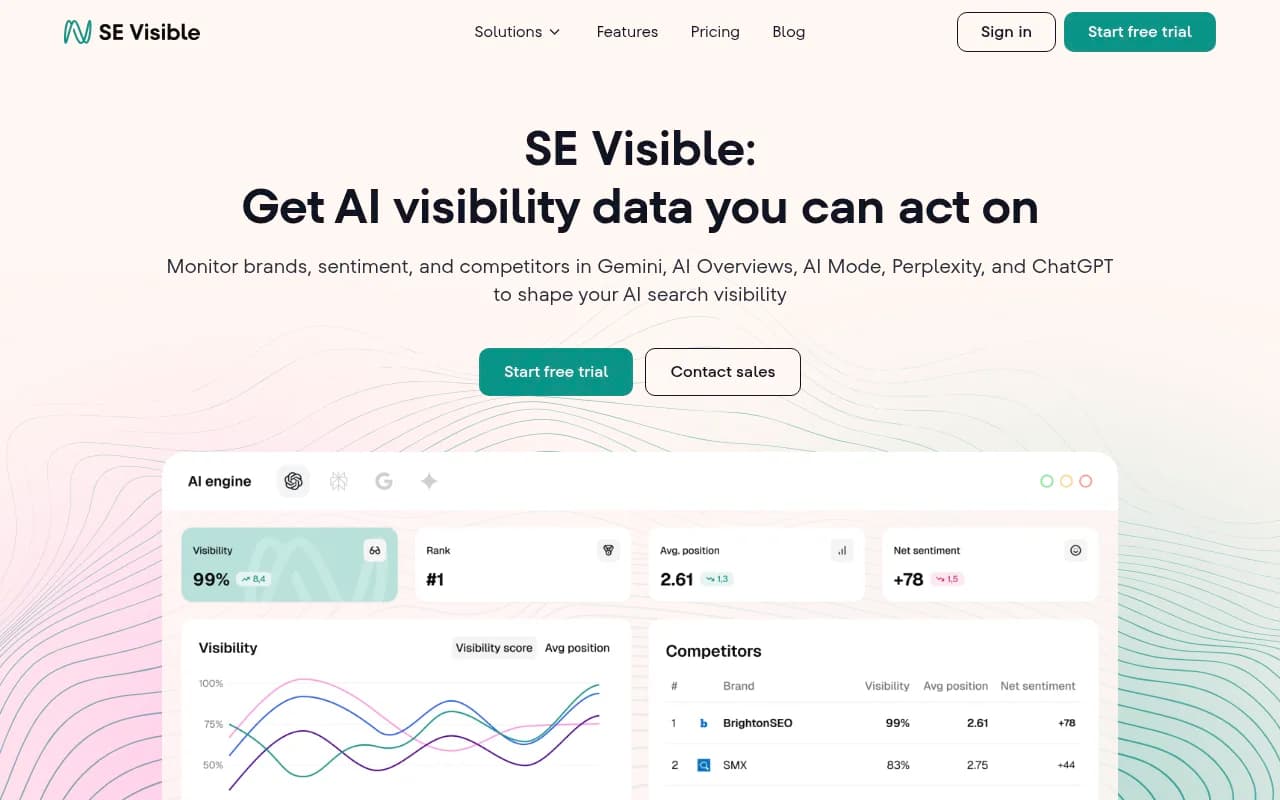

7. SE Visible

SE Visible (from SE Ranking) takes a more integrated approach, combining traditional SEO data with AI visibility tracking. The benchmarking includes a net sentiment score alongside visibility metrics, which adds useful color -- you might appear in AI responses but be described negatively relative to a competitor.

Coverage across Google AI Overviews, AI Mode, Gemini, ChatGPT, and Perplexity is solid. The competitor benchmarking view is straightforward and well-designed. For teams already using SE Ranking for traditional SEO, the integration is a natural extension.

The AI visibility features feel like an add-on to an SEO platform rather than a purpose-built GEO tool. That's not necessarily a problem -- the data is reliable -- but the depth of competitive analysis doesn't match dedicated GEO platforms. Pricing starts at $189/month.

8. Semrush

Semrush added AI visibility tracking to its existing suite, and the brand monitoring features are competent. The competitive benchmarking shows how your brand compares across AI-generated responses, and the integration with Semrush's existing keyword and traffic data is genuinely useful for connecting AI visibility to broader SEO strategy.

The limitation is that Semrush uses fixed prompt sets rather than letting you define custom prompts around your specific competitive landscape. That matters because the prompts your customers actually use may not match Semrush's defaults. For GEO benchmarking specifically, that's a real constraint.

It's a reasonable choice for teams already deep in the Semrush ecosystem who want AI visibility as one data point among many, not as their primary focus.

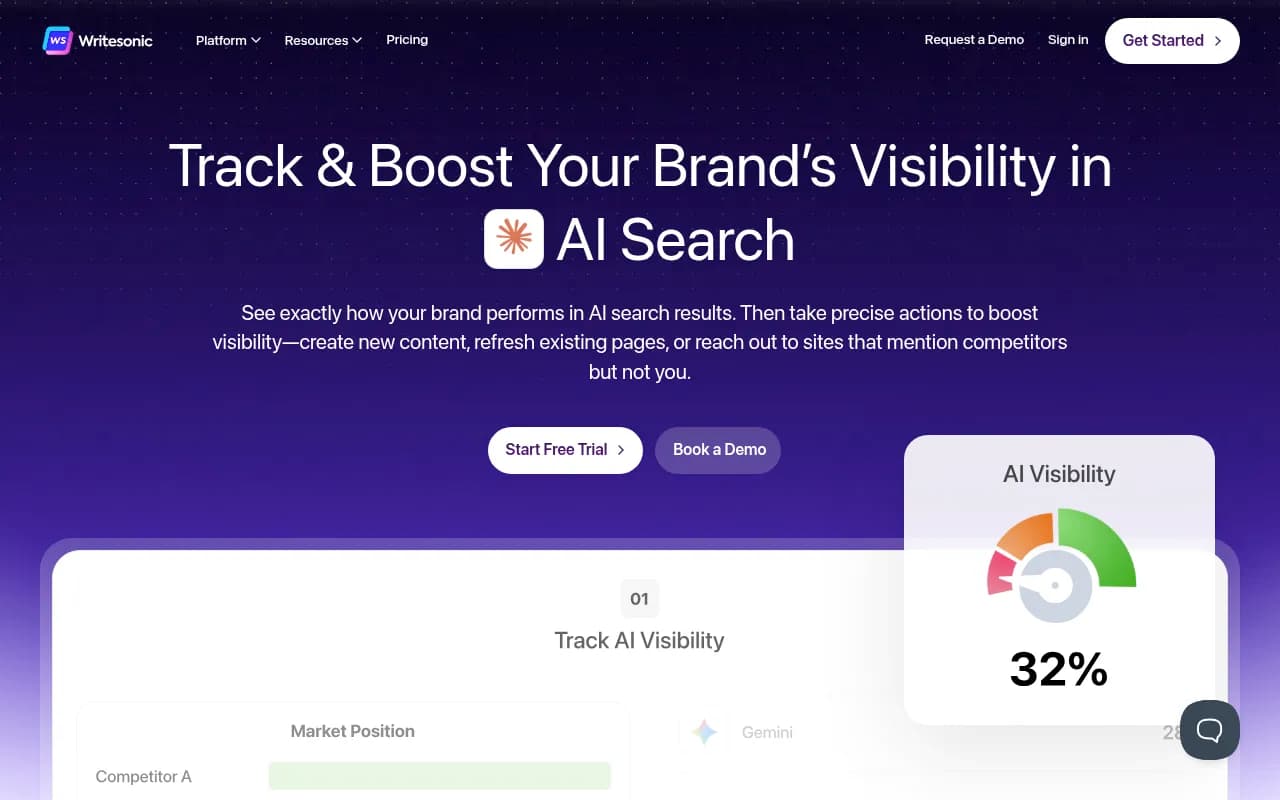

9. Writesonic

Writesonic has evolved from a content generation tool into something closer to a full GEO platform, with an Action Center that identifies citation gaps and AI crawler analytics. The competitive benchmarking is improving -- you can see where competitors are being cited and what content is driving those citations.

The content generation side is strong, which makes it one of the few tools where benchmarking connects to action. Find a gap, generate content to fill it. The AI visibility tracking is less mature than dedicated GEO platforms, but the combination of monitoring and content creation at $49/month makes it one of the better value options.

The tradeoff is depth. The benchmarking data isn't as granular as Promptwatch or AthenaHQ, and the platform coverage is narrower.

10. Bluefish

Bluefish positions itself as an enterprise GEO platform with a focus on brand safety and narrative accuracy -- not just whether you appear in AI responses, but whether what the AI says about you is accurate and favorable.

The competitive benchmarking includes narrative tone analysis, which is useful for brands where reputation management is as important as visibility. The platform is built for Fortune 500 teams with corresponding pricing and complexity.

For most mid-market teams, it's more platform than they need. But for enterprise brands where a single inaccurate AI-generated description could cause real problems, the depth of analysis is worth it.

Side-by-side comparison

| Platform | Prompt-level benchmarking | Source attribution | Content gap analysis | Built-in content generation | Multi-model coverage | Starting price |
|---|---|---|---|---|---|---|
| Promptwatch | Yes | Yes | Yes | Yes | 10 models | $99/mo |
| AthenaHQ | Yes | Partial | No | No | Broad | $295/mo |
| Profound | Partial | Yes | No | No | Major LLMs | Enterprise |
| Scrunch AI | No (source-focused) | Yes | No | No | Selected | Custom |
| Otterly.AI | Basic | No | No | No | Selected | Low |
| Peec AI | Basic | Partial | No | No | Multi-language | Low |
| SE Visible | Yes | Partial | No | No | 5 platforms | $189/mo |
| Semrush | Fixed prompts | Partial | No | No | Selected | Varies |
| Writesonic | Partial | Yes | Partial | Yes | Multi-engine | $49/mo |
| Bluefish | Yes | Yes | No | No | Broad | Enterprise |

How to choose based on what you actually need

The right tool depends on what you're trying to accomplish with benchmarking.

If you need to understand where you stand versus competitors quickly and cheaply, Otterly or Peec will do the job. Don't expect to build a content strategy from the data, but for a monthly sanity check, they're fine.

If you're an SEO or content team that needs to turn competitive gaps into actual content and track whether it works, Promptwatch is the clearest choice. The full loop -- gap identification, content generation, visibility tracking -- is built in rather than requiring you to stitch together multiple tools.

If you're at an enterprise with specific brand safety concerns and budget to match, Bluefish or Profound are worth evaluating. The depth of narrative analysis and source attribution is better than most.

If you're already invested in a traditional SEO platform and want AI visibility as an extension rather than a primary focus, SE Visible (if you use SE Ranking) or Semrush's AI features are the path of least resistance.

The benchmarking gap most tools miss

There's one thing almost every platform in this comparison gets wrong: they benchmark visibility but not influence.

Appearing in an AI response isn't the same as being recommended. A brand can show up in 80% of relevant prompts as a cautionary example, while a competitor appears in 40% and gets recommended every time. Raw mention rates don't capture that.

A few platforms are starting to add sentiment and narrative analysis to address this. Promptwatch's citation data, Profound's sentiment scoring, and Bluefish's narrative accuracy features all gesture toward this problem. But it's still an area where the whole category has room to grow.
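The 80% versus 40% example is easy to put into numbers. The sketch below assumes each AI response has already been labeled by hand or by some classifier. The label names and the ten-prompt sample are hypothetical, and no platform in this comparison is claimed to score things this way.

```python
# Hypothetical per-prompt labels for one brand:
# "recommended", "neutral", "cautionary", or None when the brand is not mentioned.
def visibility_and_influence(labels):
    """Return (mention rate, recommendation rate) over all prompts."""
    total = len(labels)
    if total == 0:
        return 0.0, 0.0
    mentioned = sum(1 for label in labels if label is not None)
    recommended = sum(1 for label in labels if label == "recommended")
    return mentioned / total, recommended / total


# Brand A shows up in 8 of 10 prompts, always as a cautionary example.
brand_a = ["cautionary"] * 8 + [None] * 2
# Brand B shows up in 4 of 10 prompts and is recommended every time.
brand_b = ["recommended"] * 4 + [None] * 6

print(visibility_and_influence(brand_a))  # (0.8, 0.0)
print(visibility_and_influence(brand_b))  # (0.4, 0.4)
```

On mention rate alone, Brand A wins two to one. On recommendation rate, it has nothing. A benchmark that reports only the first number would rank these two brands the wrong way round.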

The other gap is traffic attribution. Most platforms show you AI visibility scores, but connecting those scores to actual website traffic and revenue is still hard. Promptwatch's approach -- combining a code snippet, Google Search Console integration, and server log analysis -- is the most complete implementation I've seen, but it's not yet seamless.
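One small piece of the attribution problem, spotting visits that arrive from an AI assistant, can be sketched by classifying the referrer. This is a rough illustration, not how Promptwatch or any other platform does it. The host list is an assumption and incomplete, and many AI-driven visits arrive with no referrer at all, which is part of why attribution stays hard.

```python
from urllib.parse import urlparse

# Illustrative, incomplete mapping of referrer hosts to AI assistants (an assumption).
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def ai_source(referrer):
    """Return the assistant name for a referrer URL, or None if it is not a known AI host."""
    if not referrer:
        return None
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


print(ai_source("https://chatgpt.com/"))
print(ai_source("https://www.perplexity.ai/search?q=best+crm"))
print(ai_source("https://www.google.com/"))
```

Counting sessions per assistant this way gives a floor, not a total, for AI-driven traffic.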

What to watch in the second half of 2026

A few trends worth tracking as the GEO platform market continues to develop:

ChatGPT Shopping is becoming a real channel for ecommerce brands. Promptwatch already tracks when brands appear in ChatGPT's product recommendations and shopping carousels -- most competitors don't. That gap will matter more as AI-assisted shopping grows.

AI crawler logs are underused. Knowing which pages the ChatGPT or Perplexity crawler is actually reading -- and which ones it's ignoring or hitting errors on -- is foundational data for GEO. Promptwatch surfaces this; most tools don't even try.
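You can get a rough version of this from your own server logs without any platform. The sketch below assumes access logs in the common "combined" format and matches on the user-agent tokens `GPTBot`, `OAI-SearchBot`, and `PerplexityBot`. The sample lines are invented and their user-agent strings are simplified. A real setup should also verify crawler IP ranges, since user agents can be spoofed.

```python
import re
from collections import Counter

# User-agent tokens to look for (check each vendor's docs for the current list).
AI_CRAWLER_TOKENS = ("GPTBot", "OAI-SearchBot", "PerplexityBot")

# Matches the request, status, size, referrer, and user-agent parts of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def crawler_hits(lines):
    """Count requests per (crawler token, path, HTTP status)."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        for token in AI_CRAWLER_TOKENS:
            if token in match.group("agent"):
                hits[(token, match.group("path"), int(match.group("status")))] += 1
                break
    return hits


# Invented sample lines with simplified user-agent strings.
sample = [
    '203.0.113.5 - - [01/Jun/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [01/Jun/2026:10:01:00 +0000] "GET /blog/old-post HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [01/Jun/2026:10:02:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/125.0"',
    '203.0.113.5 - - [01/Jun/2026:10:03:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
]

for key, count in sorted(crawler_hits(sample).items()):
    print(key, count)
```

Pages that never appear in this output are not being read by these crawlers, and paths with 4xx or 5xx statuses are the errors worth fixing first.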

The content generation race is accelerating. Writesonic and Promptwatch both have built-in content generation tied to visibility data. Expect more platforms to add this capability, because the "monitoring only" positioning is getting harder to defend when marketers want results, not just reports.

The platforms that will matter in 2027 are the ones that close the loop between data and action. Right now, that list is short.