Key takeaways

- Most GEO monitoring tools focus on ChatGPT, Perplexity, and Google AI Overviews. Coverage of DeepSeek, Grok, and Mistral is patchy at best.

- Very few platforms track all three emerging models. In the comparison below, Promptwatch is the only one that does, covering 10 AI engines including Mistral, Meta/Llama, DeepSeek, and Grok.

- Tracking coverage alone isn't enough. The platforms that deliver real value combine monitoring with content gap analysis and optimization tools.

- If your audience uses AI assistants outside the ChatGPT/Google bubble, you need to audit which models your GEO tool actually queries before committing to a subscription.

Why the "emerging models" problem matters right now

For most of 2024, GEO tracking was essentially a two-horse race: ChatGPT and Perplexity. Google AI Overviews joined the conversation as it rolled out, and Claude got added to a few platforms. That covered maybe 80% of AI search traffic, which felt good enough.

2025 changed that. DeepSeek's R1 release in January 2025 sent shockwaves through the AI industry and drove a surge in user adoption. Grok, Elon Musk's AI built into X (formerly Twitter), started getting real traction with a demographic that's notoriously hard to reach through traditional search. Mistral, the French AI lab, carved out a significant enterprise user base in Europe. Meta AI, running on Llama, became the default assistant for billions of WhatsApp and Instagram users.

The result: the AI search landscape fragmented. Your brand might be consistently cited by ChatGPT but completely absent from Grok. A competitor might dominate Mistral's responses in France while you don't even know that gap exists.

This is the problem most GEO tools haven't caught up with. Many platforms still query only four to six models. If your GEO tool doesn't track DeepSeek, Grok, or Mistral, you're missing a growing slice of how people discover brands through AI.

What "tracking" actually means for these models

Before diving into which tools cover which models, it's worth clarifying what "tracking" means. There are two approaches:

API-based tracking queries the model directly through its official API. This is accurate, reproducible, and reflects what users actually see. The downside is cost and rate limits, which is why some platforms skip newer models.

Scraping/simulation mimics user behavior by querying the model's web interface. It is cheaper, but less reliable and potentially against the provider's terms of service.

When evaluating whether a platform "tracks" DeepSeek, Grok, or Mistral, ask which method they use. API-based coverage is meaningfully better.
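To make the API-based approach concrete, here's a minimal Python sketch of the core loop: send a prompt, read the answer text, and check whether your brand appears. The `ask` callable, the `fake_model` stub, and the brand and prompt strings are all hypothetical stand-ins. A real setup would swap the stub for a call to each provider's official client, and nothing here is meant to describe how any particular GEO platform works internally.

```python
import re
from typing import Callable, Dict, List


def brand_mentioned(answer: str, brand: str) -> bool:
    """Case-insensitive match for the brand as a standalone word or phrase."""
    pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
    return re.search(pattern, answer, flags=re.IGNORECASE) is not None


def run_prompts(ask: Callable[[str], str], prompts: List[str], brand: str) -> Dict[str, bool]:
    """Send each prompt through `ask` and record whether the brand appears."""
    return {prompt: brand_mentioned(ask(prompt), brand) for prompt in prompts}


def fake_model(prompt: str) -> str:
    """Hypothetical stub standing in for a real model API call."""
    canned = {
        "best crm for startups": "Popular options include Acme CRM and ExampleSoft.",
        "top crm tools in europe": "Many teams choose ExampleSoft or OtherCo.",
    }
    return canned.get(prompt, "")


results = run_prompts(
    fake_model,
    ["best crm for startups", "top crm tools in europe"],
    brand="Acme CRM",  # hypothetical brand name
)
```

Passing the model call in as a function keeps the mention check identical across models, so any difference in results comes from the model rather than from the measurement.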

The model coverage landscape in 2026

Here's a realistic picture of which models the major GEO platforms actually cover. This is based on documented features and published comparisons, not marketing claims.

| Platform | ChatGPT | Perplexity | Google AIO | Claude | Gemini | Grok | DeepSeek | Mistral | Meta/Llama | Copilot |

|---|---|---|---|---|---|---|---|---|---|---|

| Promptwatch | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |

| Peec AI | Yes | Yes | Yes | Yes | Yes | Add-on | Add-on | No | No | No |

| Otterly.AI | Yes | Yes | Yes | No | Yes | No | No | No | No | Yes |

| AthenaHQ | Yes | Yes | Yes | Yes | Yes | No | No | No | No | No |

| Profound | Yes | Yes | Yes | Yes | Yes | No | No | No | No | No |

| Scrunch AI | Yes | Yes | Yes | Yes | Core/Ent | Enterprise | No | No | No | No |

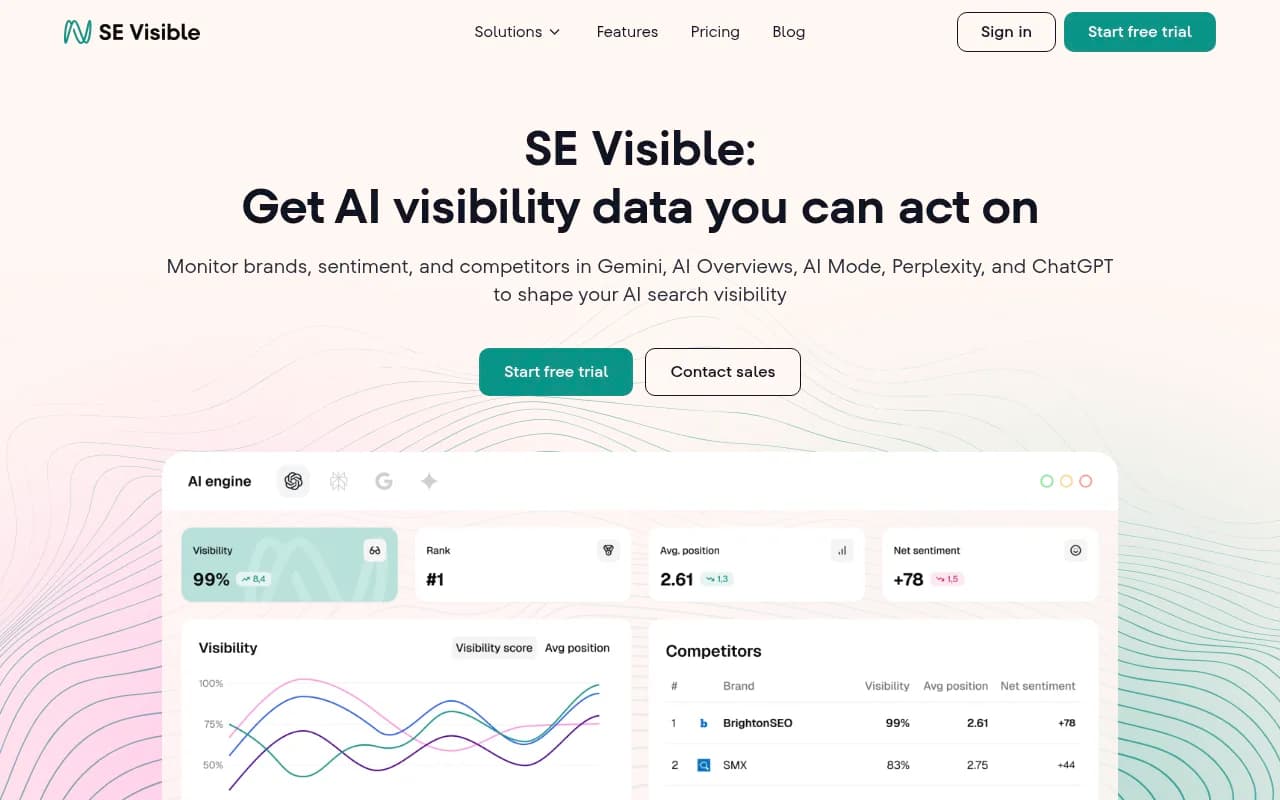

| SE Visible | Yes | Yes | Yes | No | Yes | No | No | No | No | No |

| Semrush AI Toolkit | Yes | Yes | Yes | No | Yes | No | No | No | No | No |

| Ahrefs Brand Radar | Yes | Yes | Yes | No | No | No | No | No | No | No |

The pattern is clear. Most platforms treat ChatGPT, Perplexity, and Google AI Overviews as the core trio, then add Claude and Gemini as secondary coverage. Grok, DeepSeek, Mistral, and Meta AI are either missing entirely or gated behind enterprise tiers.
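If you want to run this kind of audit against your own shortlist, the emerging-model columns of the table above can be encoded and filtered in a few lines. This is a rough Python sketch: the statuses are copied from the table, while the `platforms_covering` helper and its `include_gated` flag are illustrative names, not part of any vendor's tooling.

```python
from typing import Dict, List

EMERGING = ["Grok", "DeepSeek", "Mistral", "Meta/Llama"]

# Status per platform, in the same order as EMERGING (taken from the table above).
COVERAGE: Dict[str, List[str]] = {
    "Promptwatch": ["Yes", "Yes", "Yes", "Yes"],
    "Peec AI": ["Add-on", "Add-on", "No", "No"],
    "Otterly.AI": ["No", "No", "No", "No"],
    "AthenaHQ": ["No", "No", "No", "No"],
    "Profound": ["No", "No", "No", "No"],
    "Scrunch AI": ["Enterprise", "No", "No", "No"],
    "SE Visible": ["No", "No", "No", "No"],
    "Semrush AI Toolkit": ["No", "No", "No", "No"],
    "Ahrefs Brand Radar": ["No", "No", "No", "No"],
}


def platforms_covering(required: List[str], include_gated: bool = False) -> List[str]:
    """Return platforms that cover every model in `required`.

    With include_gated=True, add-on and enterprise-only coverage also counts.
    """
    accepted = {"Yes"}
    if include_gated:
        accepted |= {"Add-on", "Enterprise"}
    matches = []
    for platform, row in COVERAGE.items():
        status = dict(zip(EMERGING, row))
        if all(status[model] in accepted for model in required):
            matches.append(platform)
    return matches


all_three = platforms_covering(["Grok", "DeepSeek", "Mistral"])
grok_any_tier = platforms_covering(["Grok"], include_gated=True)
```

Separating standard coverage from gated coverage matters because a "Yes" on a logo wall and a "Yes" on your actual plan tier are not always the same thing.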

Which platforms actually cover the emerging models

Promptwatch: broadest coverage by a significant margin

Promptwatch is the most comprehensive option for teams that need to track across the full AI search landscape. It covers 10 engines, counting Google's two surfaces together: ChatGPT, Perplexity, Google AI Overviews and AI Mode, Claude, Gemini, Grok, DeepSeek, Mistral, Meta/Llama, and Copilot.

That breadth matters, but what sets it apart is what happens after the tracking. Most platforms show you where you're invisible and leave you to figure out what to do. Promptwatch's Answer Gap Analysis shows you the specific prompts where competitors are getting cited but you're not, and the built-in AI writing agent generates content designed to close those gaps. For teams tracking emerging models, this means you can find that you're absent from Grok's responses for a key category prompt, then immediately start fixing it.

The crawler logs feature is also relevant here: you can see when DeepSeek or Mistral's crawlers visit your site, which pages they read, and whether they're hitting errors. That's a level of operational detail most platforms don't offer.
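You can approximate a basic version of this from your own server logs. The Python sketch below assumes access logs in the common "combined" format and counts hits, pages, and error responses per crawler. The user-agent tokens `ExampleDeepSeekBot` and `ExampleMistralBot` are placeholders, not real crawler names: replace them with whatever tokens each vendor documents. This is an illustration of the idea, not a description of how Promptwatch implements its feature.

```python
import re
from collections import defaultdict
from typing import Dict, Iterable

# Matches the request, status, and user-agent parts of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Placeholder tokens (assumptions); substitute the vendors' documented user agents.
CRAWLER_TOKENS = {
    "ExampleDeepSeekBot": "DeepSeek",
    "ExampleMistralBot": "Mistral",
}


def summarize_crawler_hits(lines: Iterable[str]) -> Dict[str, dict]:
    """Count hits, distinct paths, and 4xx/5xx responses per known crawler."""
    summary = defaultdict(lambda: {"hits": 0, "paths": set(), "errors": 0})
    for line in lines:
        match = LOG_LINE.search(line)
        if match is None:
            continue  # skip lines that are not in the expected format
        agent = match.group("agent").lower()
        for token, vendor in CRAWLER_TOKENS.items():
            if token.lower() in agent:
                entry = summary[vendor]
                entry["hits"] += 1
                entry["paths"].add(match.group("path"))
                if int(match.group("status")) >= 400:
                    entry["errors"] += 1
                break
    return dict(summary)


sample_log = [
    '203.0.113.5 - - [12/Jan/2026:08:15:02 +0000] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; ExampleDeepSeekBot/1.0)"',
    '203.0.113.5 - - [12/Jan/2026:08:15:09 +0000] "GET /pricing HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ExampleDeepSeekBot/1.0)"',
    '198.51.100.7 - - [12/Jan/2026:09:01:44 +0000] "GET /blog HTTP/1.1" 200 8841 "-" "Mozilla/5.0 (compatible; ExampleMistralBot/0.1)"',
    '192.0.2.9 - - [12/Jan/2026:09:05:00 +0000] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
crawler_summary = summarize_crawler_hits(sample_log)
```

User-agent strings can be spoofed, so treat counts like these as a rough signal rather than proof of which crawler actually visited.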

Pricing starts at $99/month for the Essential plan (1 site, 50 prompts).

Peec AI: strong core coverage with add-on flexibility

Peec AI covers the main five models well, with Grok and DeepSeek available as add-ons rather than standard inclusions. If you're primarily focused on ChatGPT and Perplexity but want the option to expand to Grok later, this works. The platform is strong on multi-language tracking (115+ languages), which matters if you're monitoring Mistral's European user base. Mistral itself isn't currently listed as a tracked model.

Pricing starts at €89/month.

Scrunch AI: enterprise path to Grok coverage

Scrunch AI takes a tiered approach. Their Core plan covers 4 LLMs; their Enterprise plan expands to 9+, which includes Grok. DeepSeek and Mistral coverage is less clearly documented. If you're an enterprise team with budget for the higher tier, Scrunch is worth evaluating. For smaller teams, the cost-to-coverage ratio is harder to justify when Promptwatch covers more models at a lower entry price.

AthenaHQ: solid monitoring, limited emerging model coverage

AthenaHQ covers 8+ AI search engines according to their documentation, but the specific list leans toward the established models. Grok, DeepSeek, and Mistral aren't prominently featured in their coverage. The platform is monitoring-focused, which means you get good visibility data but less support for acting on it.

Profound: enterprise-grade but gaps in emerging models

Profound is a serious platform for enterprise brands, with strong analytics and reporting. Coverage focuses on the major established models. If tracking Grok or DeepSeek is a priority, Profound isn't the obvious choice. It's also priced at $499/month with no free trial, which makes it a significant commitment before you've confirmed it covers the models you need.

Tools worth knowing for specific use cases

Beyond the main platforms, a few other tools are worth flagging for specific situations.

Gauge is built around competitive intelligence for AI visibility. If your primary goal is understanding how competitors appear in AI responses across models, it's worth a demo. Custom pricing suggests it's aimed at larger teams.

Otterly.AI is a solid budget option for teams focused on the core six models (ChatGPT, Google AI Overviews, Perplexity, Google AI Mode, Gemini, Copilot). It won't help with Grok, DeepSeek, or Mistral, but if those aren't priorities yet, the $25/month entry point is hard to argue with.

Rankscale covers AI search ranking at a low price point. Coverage of emerging models is limited, but it's a reasonable starting point for teams new to GEO tracking.

SE Visible from SE Ranking covers five models with unlimited seats, which is useful for larger marketing teams. Emerging model coverage is limited, but because pricing doesn't scale per seat, it's attractive for teams with many users.

Airefs takes a source-level citation approach, showing you which articles, Reddit threads, and forum discussions AI models cite when forming answers. Coverage is currently ChatGPT-focused with other models available on request. Useful for content strategy, less useful for comprehensive model coverage.

How to evaluate a platform's model coverage before you buy

Marketing pages often list model logos without being specific about what "tracking" means. Here are the questions to ask before committing:

1. Which models do you query, and through which method? API-based is better. If they're scraping, ask about reliability and update frequency.

2. Are emerging models (Grok, DeepSeek, Mistral) included in my plan tier, or are they add-ons? Several platforms include these models only at enterprise pricing. Get this confirmed in writing.

3. How often do you run queries? Daily tracking is the minimum for meaningful trend data. Some budget tools run weekly or on-demand only.

4. Can I see model-by-model breakdowns? Aggregate visibility scores are useful, but you need to see per-model data to understand where you're winning or losing across Grok vs. Mistral vs. ChatGPT separately.

5. Do you track AI crawler activity on my site? This is a differentiator. Knowing that DeepSeek's crawler visited your site 12 times last week but only read your homepage is actionable information. Most platforms don't offer this.
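Question 4 is easy to underestimate, so here's a small Python sketch of why per-model breakdowns matter. It takes a list of (model, brand mentioned?) observations and computes both the aggregate visibility rate and the rate for each model. The sample observations are made up for illustration, and `visibility_by_model` is a hypothetical helper, not a metric any platform defines this way.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def visibility_by_model(records: List[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of tracked prompts, per model, where the brand was mentioned."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for model, mentioned in records:
        totals[model] += 1
        hits[model] += int(mentioned)
    return {model: hits[model] / totals[model] for model in totals}


# Illustrative observations only: (model, was the brand mentioned?)
observations = [
    ("ChatGPT", True), ("ChatGPT", True), ("ChatGPT", True), ("ChatGPT", False),
    ("Grok", False), ("Grok", False),
    ("Mistral", True), ("Mistral", False),
]

per_model = visibility_by_model(observations)
aggregate = sum(mentioned for _, mentioned in observations) / len(observations)
```

In this made-up sample the aggregate rate is 50%, which looks respectable, while the per-model view shows the brand never appearing in Grok at all. That is the gap an aggregate score hides.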

The coverage gap is a strategic problem, not just a data problem

There's a temptation to treat model coverage as a checkbox exercise. "We track 10 models, competitors track 6, we win." But the real question is: which models are your customers actually using?

If you're a B2B SaaS company selling to European enterprises, Mistral's user base is directly relevant to you. If your brand is in a category that skews toward tech-forward early adopters, Grok's X-integrated audience matters. If you're targeting younger consumers in Asia, DeepSeek's growing user base is something you can't ignore.

The platforms that cover more models give you the data to answer these questions. But data without action is just a dashboard. The reason the coverage gap matters is that it represents real customers asking real questions about your category, getting answers that may or may not include your brand, and making decisions based on those answers.

Tracking it is the first step. Fixing it is the point.

Comparison: emerging model coverage at a glance

| Platform | Grok | DeepSeek | Mistral | Meta/Llama | Starting price | Free trial |
|---|---|---|---|---|---|---|
| Promptwatch | Yes | Yes | Yes | Yes | $99/mo | Yes |
| Peec AI | Add-on | Add-on | No | No | €89/mo | Yes |
| Scrunch AI | Enterprise | No | No | No | $250/mo | Yes |
| AthenaHQ | No | No | No | No | Custom | No |
| Profound | No | No | No | No | $499/mo | No |
| Otterly.AI | No | No | No | No | $25/mo | Yes |
| Semrush AI Toolkit | No | No | No | No | $99/mo | No |
| Ahrefs Brand Radar | No | No | No | No | $129/mo+ | Beta |

Bottom line

If tracking DeepSeek, Grok, and Mistral is a genuine requirement, the field narrows quickly. Promptwatch is the clearest option for comprehensive emerging model coverage combined with optimization capabilities. Peec AI works if you're willing to pay for add-ons and don't need Mistral. Scrunch AI gets you Grok at enterprise pricing.

For everyone else, the honest answer is: most GEO platforms aren't there yet. They were built when ChatGPT and Perplexity were the whole story. The platforms that invested in broader model coverage early are now in a meaningfully better position to help brands navigate a more fragmented AI search landscape.

Before picking a tool, run a quick test: ask the vendor to show you your brand's visibility specifically in Grok and DeepSeek responses. If they can't pull that up in the demo, you have your answer.