Key takeaways

- AI-referred traffic converts at 2x to 23x higher rates than organic search, but only 16% of brands track their AI search performance -- making launch-day AI visibility a significant competitive gap.

- 76.4% of ChatGPT's top-cited pages were updated within the last 30 days, which means freshness is one of the most actionable levers you have right after a product launch.

- Getting cited within 30 days requires a specific sequence: set up tracking before launch, build the right content structure on day one, then actively close citation gaps in weeks two and three.

- Most AI visibility tools only show you where you stand. The ones worth using also tell you what to fix and help you fix it.

- This guide covers the full 30-day playbook: pre-launch setup, launch-day content requirements, post-launch gap analysis, and how to measure whether it's working.

Why product launches are a missed opportunity in AI search

When a new product page goes live, most teams are focused on the usual checklist: meta tags, internal links, maybe a press release. AI search visibility isn't on the list. It should be.

Here's the uncomfortable reality: AI search traffic converts at dramatically higher rates than organic. Similarweb's ecommerce data puts ChatGPT referrals at 11.4% conversion vs. 5.3% for organic. Adobe Digital Insights, analyzing over 1 trillion site visits, found AI-referred shoppers spend 32% longer on-site, view 10% more pages, and bounce 27% less. These are buyers who've already done their research inside the AI and arrived at your page with intent.

And yet only 16% of brands track their AI search performance at all.

For a product launch, this creates a specific problem. AI models don't discover new pages the way Google crawls them. They rely on a combination of training data, real-time web access (for models like Perplexity and ChatGPT with browsing), and the citation patterns they've already established. A new page that isn't structured for AI consumption, isn't being tracked, and isn't appearing in the right source ecosystems will simply get ignored -- sometimes for months.

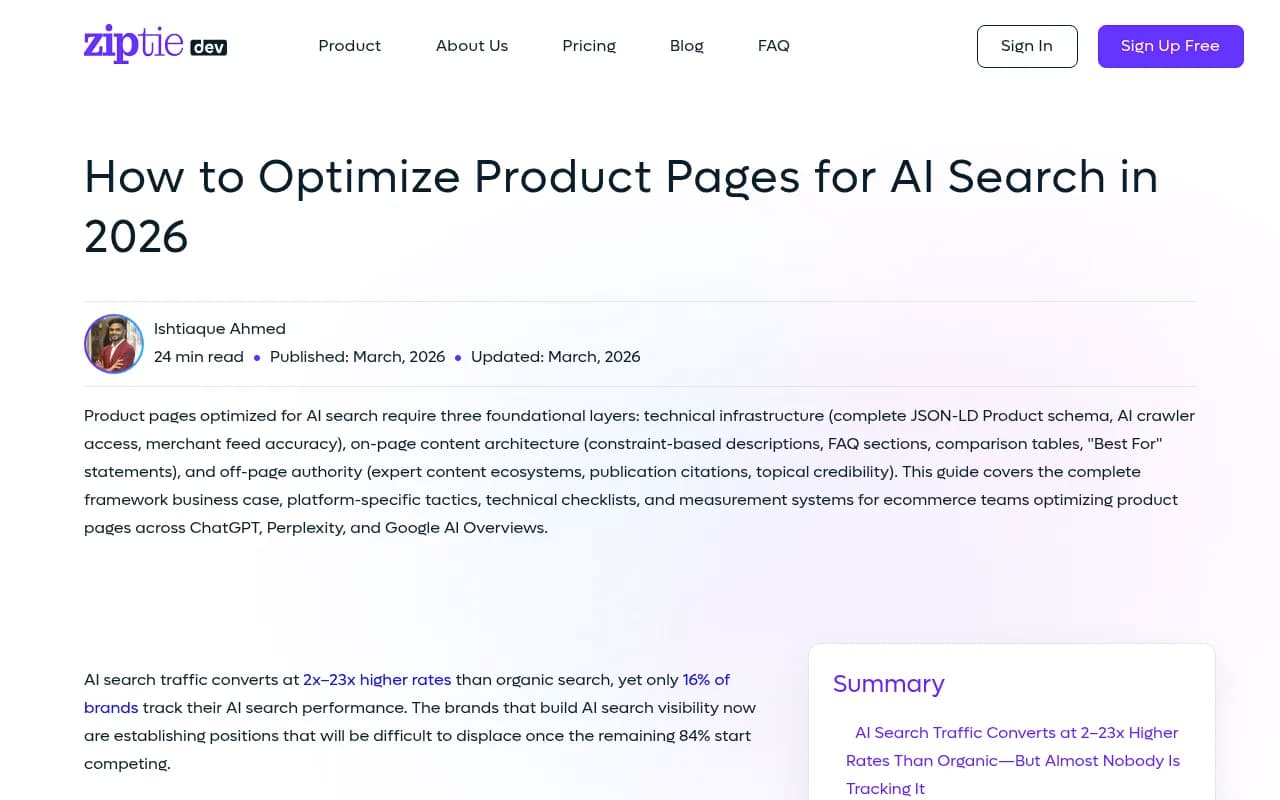

The 30-day window matters because of one data point from ZipTie.dev's research: 76.4% of ChatGPT's top-cited pages were updated within the last 30 days. AI models have a strong recency bias. A page that earns early citations tends to keep earning them. A page that doesn't get cited in the first month often gets stuck in a visibility hole that's hard to climb out of.

The good news: this is a solvable problem. But it requires a different playbook than traditional SEO.

Week 0 (pre-launch): Set up tracking before anything goes live

The biggest mistake teams make is treating AI visibility as something to think about after launch. By then you've already lost the baseline.

Define your target prompts

AI search doesn't work like keyword ranking. Nobody types "best project management software" into ChatGPT the same way they'd type it into Google. They ask questions: "What's a good tool for managing remote teams?", "Which project management apps integrate with Slack?", "Compare Asana and Monday for a 10-person startup."

Before your product page goes live, write out 20-30 prompts that a real buyer would use when researching your product category. Think about:

- Comparison prompts ("X vs Y", "best alternatives to Z")

- Problem-first prompts ("how do I solve [specific pain point]")

- Use-case prompts ("best tool for [specific scenario]")

- Brand-aware prompts (your product name directly)

These become your tracking set. You'll run these prompts against multiple AI models and measure whether your page gets cited.
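One way to keep the tracking set organized is to store each prompt with its category, so you can see at a glance whether any of the four categories above is underrepresented. The sketch below is only an illustration: the `TrackedPrompt` class, the category labels, and the product name "ExampleCRM" are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass

# The four prompt categories from the list above (labels are our own).
CATEGORIES = ("comparison", "problem_first", "use_case", "brand_aware")


@dataclass(frozen=True)
class TrackedPrompt:
    text: str
    category: str

    def __post_init__(self) -> None:
        # Reject typos in category names early.
        if self.category not in CATEGORIES:
            raise ValueError(f"unknown category: {self.category!r}")


def category_counts(prompts: list[TrackedPrompt]) -> dict[str, int]:
    """Count prompts per category so thin categories are easy to spot."""
    counts = Counter(p.category for p in prompts)
    return {category: counts.get(category, 0) for category in CATEGORIES}


# Hypothetical entries for an imaginary product called "ExampleCRM".
tracking_set = [
    TrackedPrompt("ExampleCRM vs. other CRMs for a 10-person startup", "comparison"),
    TrackedPrompt("How do I stop losing track of customer follow-ups?", "problem_first"),
    TrackedPrompt("Best CRM for small e-commerce brands", "use_case"),
    TrackedPrompt("What is ExampleCRM?", "brand_aware"),
]

print(category_counts(tracking_set))
```

A real set would hold 20-30 entries. The counts make it easy to notice if, say, you wrote fifteen comparison prompts and only one problem-first prompt.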

Choose your tracking setup

You have two options here: manual or automated.

Manual works for a first baseline. Run each prompt in ChatGPT, Perplexity, Claude, and Google AI Overviews. Note whether your brand or page appears, whether it's cited as a source, and what competitors are being cited instead. This takes a few hours but gives you a real picture of where you're starting from.

Automated is what you'll need for ongoing tracking. Tools like Promptwatch run your prompt set across multiple AI models on a schedule, track citation rates, and show you which competitors are appearing for prompts where you're invisible.


Audit your AI crawler access

Before launch, check that AI crawlers can actually read your new page. This means:

- Your robots.txt doesn't block GPTBot, ClaudeBot, PerplexityBot, or other AI crawlers

- Your page isn't behind a login or JavaScript wall that prevents crawling

- You have proper JSON-LD Product schema in place (for product pages specifically)

- Your sitemap is updated to include the new URL
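The robots.txt item in the list above can be checked with Python's standard-library `urllib.robotparser`. This is a minimal sketch: the sample robots.txt and the URL are made up, and the check only covers robots.txt rules, not login walls or JavaScript rendering.

```python
from urllib.robotparser import RobotFileParser

# Crawler names taken from the list above; extend as needed.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def blocked_ai_crawlers(robots_txt: str, page_url: str) -> list[str]:
    """Return the AI crawlers that this robots.txt disallows for page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, page_url)]


# Hypothetical robots.txt that blocks one AI crawler site-wide.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_ai_crawlers(sample_robots, "https://example.com/products/new-widget"))
# prints ['GPTBot']
```

To run it against a live site, you could fetch your own robots.txt and pass its text in. An empty result means none of the listed crawlers is disallowed for that URL.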

Tools like DarkVisitors let you see which AI bots are hitting your site and whether they're encountering errors.
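If you have raw access logs, a rough version of the same check is possible without a dedicated tool. The sketch below assumes the common "combined" access-log format and matches on user-agent substrings. The log lines are invented, and `ai_bot_hits` is a hypothetical helper, not part of any tool mentioned here.

```python
import re
from collections import Counter

# User-agent substrings for the AI crawlers named in this guide.
AI_BOT_MARKERS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Assumes the "combined" access-log format; adjust for your server.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def ai_bot_hits(log_lines: list[str], path: str) -> Counter:
    """Count (bot, HTTP status) pairs for requests to one path."""
    hits: Counter = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match or match["path"] != path:
            continue
        for bot in AI_BOT_MARKERS:
            if bot in match["agent"]:
                hits[(bot, int(match["status"]))] += 1
    return hits


# Invented log lines for illustration only.
sample_log = [
    '203.0.113.5 - - [01/Jan/2025:10:00:00 +0000] "GET /products/new-widget HTTP/1.1" '
    '200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [01/Jan/2025:10:05:00 +0000] "GET /products/new-widget HTTP/1.1" '
    '403 312 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [01/Jan/2025:10:06:00 +0000] "GET /products/new-widget HTTP/1.1" '
    '200 5120 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

for (bot, status), count in ai_bot_hits(sample_log, "/products/new-widget").items():
    print(bot, status, count)
```

Non-200 statuses for a bot are the ones to investigate: they suggest the crawler is reaching the page but not getting the content.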

Day 1 (launch): Build the content structure AI models want to cite

This is where most product pages fail. They're written for humans browsing a website, not for AI models trying to extract a clear, citable answer.

The three content layers that get cited

Research from ZipTie.dev breaks down AI-cited product content into three layers:

Technical infrastructure: Complete JSON-LD Product schema, accurate merchant feed data, and confirmed AI crawler access. Without this, some models simply can't index your page properly.

On-page content architecture: This is the layer most teams skip. AI models cite pages that give direct, structured answers. That means:

- A clear "Best For" statement near the top of the page (who is this product for, specifically?)

- A FAQ section that answers the exact questions buyers ask AI models

- A comparison table showing how your product differs from alternatives

- Constraint-based descriptions that acknowledge what the product doesn't do (counterintuitively, this increases citation rates because it signals honesty)

Off-page authority: AI models don't just cite your own product page. They cite the ecosystem of content around your product -- reviews, comparisons, expert writeups, Reddit discussions, YouTube videos. More on this in week two.
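For the technical infrastructure layer, the Product markup can be generated from your product data instead of written by hand. This sketch builds a small schema.org Product object with a nested Offer. The field selection is an assumption about a minimal useful set, not a complete schema, and all the values are placeholders.

```python
import json


def product_json_ld(name: str, description: str, url: str, price: str, currency: str) -> str:
    """Build a schema.org Product snippet as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
            "availability": "https://schema.org/InStock",
        },
    }
    return json.dumps(data, indent=2)


# Placeholder values for an imaginary product.
snippet = product_json_ld(
    name="ExampleCRM",
    description="A CRM designed for small e-commerce brands.",
    url="https://example.com/products/examplecrm",
    price="29.00",
    currency="USD",
)

# Embed the result in the page head.
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

Note that the description reuses the explicit "who is this for" language from the "Best For" statement, so the structured data and the visible copy say the same thing.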

Write for the prompt, not the page

Think about the specific prompts you defined in week zero. For each one, ask: if an AI model is trying to answer this prompt, what would it need to find on my page?

A prompt like "best CRM for small e-commerce brands" requires your page to clearly state that your product is a CRM, that it's designed for small businesses, and that it has e-commerce-specific features. If any of those signals are buried in marketing copy, the AI model may not surface your page.

Be explicit. Use the exact language buyers use in their prompts. Don't assume the AI will infer what your product does from clever brand messaging.

FAQ sections are not optional

This is probably the single highest-leverage change you can make on a product page for AI citation. FAQ sections give AI models pre-formatted answers they can pull directly.

Write FAQs that match your target prompts. If you're tracking "how does [your product] compare to [competitor]", your FAQ should include that question with a direct, honest answer. If you're tracking "what integrations does [your product] support", the FAQ should list them explicitly.

Mark up your FAQ with FAQPage schema so AI models can parse it cleanly.
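As a rough illustration, FAQPage markup can be generated from the same question-and-answer pairs you publish on the page. The questions and answers below are placeholders, and `faq_page_json_ld` is a hypothetical helper name.

```python
import json


def faq_page_json_ld(faqs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder FAQs written to mirror tracked prompts.
faqs = [
    ("What integrations does ExampleCRM support?", "Slack, Shopify, and Gmail."),
    ("Who is ExampleCRM best for?", "Small e-commerce brands with under 20 staff."),
]

print(f'<script type="application/ld+json">\n{faq_page_json_ld(faqs)}\n</script>')
```

Keeping the FAQ text in one list and generating both the visible section and the markup from it avoids the two drifting apart during weekly refreshes.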

Weeks 1-2: Close the citation gaps

By the end of the first week, you should have your first round of tracking data. Now the real work begins.

Run your gap analysis

Look at your tracking results and identify the prompts where competitors are being cited but you're not. This is your gap list. For each gap, ask two questions:

- Does my page contain the information needed to answer this prompt?

- Is my page being cited anywhere in the external sources AI models are drawing from?

The first question is a content problem. The second is a distribution problem. They require different fixes.

For content gaps, you need to add or restructure content on your product page (or create supporting pages) that directly address the missing prompts.

For distribution gaps, you need to get your product mentioned in the sources AI models are already citing.
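The two-question triage above can be written down as a small function, which helps when the gap list grows past a handful of prompts. This is a sketch under stated assumptions: the `PromptResult` fields are hypothetical, and the two yes/no answers are judged by a person reviewing the page and the cited sources, not computed.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str
    we_are_cited: bool
    competitor_cited: bool
    page_answers_prompt: bool   # question 1, judged by a reviewer
    in_external_sources: bool   # question 2, judged by a reviewer


def classify_gap(result: PromptResult) -> list[str]:
    """Return the fixes a prompt needs; an empty list means it is not a gap."""
    # A gap is a prompt where a competitor is cited and we are not.
    if result.we_are_cited or not result.competitor_cited:
        return []
    fixes = []
    if not result.page_answers_prompt:
        fixes.append("content")
    if not result.in_external_sources:
        fixes.append("distribution")
    # Neither question explains the gap, so flag it for a closer look.
    return fixes or ["unclear"]


# Hypothetical tracking results.
results = [
    PromptResult("best CRM for small e-commerce brands", False, True, True, False),
    PromptResult("ExampleCRM integrations", False, True, False, False),
    PromptResult("what is ExampleCRM", True, False, True, True),
]

for r in results:
    print(r.prompt, "->", classify_gap(r))
```

A prompt can need both fixes at once, which is why the function returns a list and not a single label.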

Find where AI is getting its information

This is where citation source analysis becomes essential. For any prompt where a competitor is being cited, look at which specific pages are being referenced. Is it a Reddit thread? A comparison article on a third-party site? A YouTube review? An industry publication?

Those are your target channels. A platform like Promptwatch shows you exactly which domains and pages AI models are citing for your tracked prompts, which turns a vague "we need more coverage" problem into a specific list of places to publish or pitch.
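If you are collecting cited URLs by hand, a simple domain tally gets you a first version of that list. The sketch below assumes you have recorded, for each gap prompt, the URLs the AI model cited. The URLs shown are invented examples.

```python
from collections import Counter
from urllib.parse import urlparse


def top_cited_domains(citations: dict[str, list[str]], limit: int = 5) -> list[tuple[str, int]]:
    """Rank domains by how many gap prompts cite them at least once."""
    counts: Counter = Counter()
    for urls in citations.values():
        # Use a set so one prompt counts each domain only once.
        domains = {urlparse(url).netloc.lower().removeprefix("www.") for url in urls}
        counts.update(domains)
    return counts.most_common(limit)


# Invented citation data: gap prompt -> URLs cited in the AI answer.
citations = {
    "best CRM for small e-commerce brands": [
        "https://www.reddit.com/r/ecommerce/comments/abc",
        "https://example-reviews.com/best-crm",
    ],
    "ExampleCRM alternatives": [
        "https://www.reddit.com/r/smallbusiness/comments/def",
        "https://www.youtube.com/watch?v=xyz",
    ],
}

for domain, prompt_count in top_cited_domains(citations):
    print(domain, prompt_count)
```

Counting prompts per domain, instead of raw URL occurrences, keeps a single answer with many links to one site from dominating the ranking.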

The external content push

In parallel with fixing your own page, you need to seed the external ecosystem. This means:

- Getting your product reviewed or mentioned on sites that AI models already cite for your category

- Participating in (or seeding) Reddit discussions in relevant subreddits -- Reddit is heavily cited by ChatGPT and Perplexity

- Reaching out to YouTubers who cover your category, since YouTube content is increasingly cited in AI responses

- Submitting to product directories and comparison sites that appear in AI citations for your target prompts

This isn't traditional link building. You're not trying to pass PageRank. You're trying to appear in the source ecosystem that AI models draw from when answering questions about your category.

Weeks 3-4: Iterate and measure

What to measure

By week three, you should be tracking these metrics:

| Metric | What it tells you | How to track it |
|---|---|---|
| Citation rate | % of prompts where your page is cited | AI visibility platform |
| Share of voice | Your citations vs. competitors across all tracked prompts | AI visibility platform |
| Prompt coverage | How many of your target prompts return your brand at all | Manual or automated |
| AI-referred traffic | Actual visitors arriving from AI search | GSC + server logs |
| Citation sources | Which external pages are citing you | Citation analysis tool |
| Crawler activity | How often AI bots are visiting your page | Crawler log analysis |

Don't just track whether you're mentioned. Track whether you're cited as a source. There's a meaningful difference between an AI model saying "Brand X exists" and an AI model linking to your product page as the authoritative answer.
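To make the first three metrics concrete, here is one possible way to compute them from per-prompt results. The definitions follow the table above, but the exact formulas (for example, share of voice as your citations divided by all citations) are an assumption, and visibility platforms may calculate them differently. The data is made up.

```python
from dataclasses import dataclass


@dataclass
class PromptRun:
    prompt: str
    brand_mentioned: bool        # the answer names your brand
    page_cited: bool             # the answer links to your page as a source
    competitor_citations: int = 0


def citation_rate(runs: list[PromptRun]) -> float:
    """Fraction of prompts where your page is cited as a source."""
    return sum(r.page_cited for r in runs) / len(runs) if runs else 0.0


def prompt_coverage(runs: list[PromptRun]) -> int:
    """Number of prompts that return your brand at all (mention or citation)."""
    return sum(r.brand_mentioned or r.page_cited for r in runs)


def share_of_voice(runs: list[PromptRun]) -> float:
    """Your citations as a fraction of all citations (yours plus competitors')."""
    ours = sum(r.page_cited for r in runs)
    total = ours + sum(r.competitor_citations for r in runs)
    return ours / total if total else 0.0


# Made-up results for four tracked prompts.
runs = [
    PromptRun("prompt A", brand_mentioned=True, page_cited=True, competitor_citations=2),
    PromptRun("prompt B", brand_mentioned=True, page_cited=False, competitor_citations=3),
    PromptRun("prompt C", brand_mentioned=False, page_cited=False, competitor_citations=1),
    PromptRun("prompt D", brand_mentioned=True, page_cited=True, competitor_citations=0),
]

print(citation_rate(runs), prompt_coverage(runs), share_of_voice(runs))
# prints 0.5 3 0.25
```

Prompt B shows the mention-versus-citation difference: it counts toward coverage but not toward citation rate.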

Refresh your content based on what's working

Freshness keeps mattering after launch day. AI-cited content is 25.7% fresher on average than traditionally ranked content, which fits the recency bias in ChatGPT's top-cited pages described earlier. This means your 30-day window isn't just about getting cited once. It's about establishing a pattern of regular updates that signals to AI models that your page is actively maintained.

After your initial launch push, schedule weekly content updates for the first month. Add new data points, update comparison tables, expand FAQ sections based on the gaps you've identified. Each update is a signal to AI crawlers that this page is worth revisiting.

Tool comparison: what to use at each stage

Different tools cover different parts of this workflow. Here's how they stack up for a product launch scenario:

| Tool | Best for | Citation source analysis | Content generation | Crawler logs |

|---|---|---|---|---|

| Promptwatch | Full launch workflow | Yes | Yes (AI writing agent) | Yes |

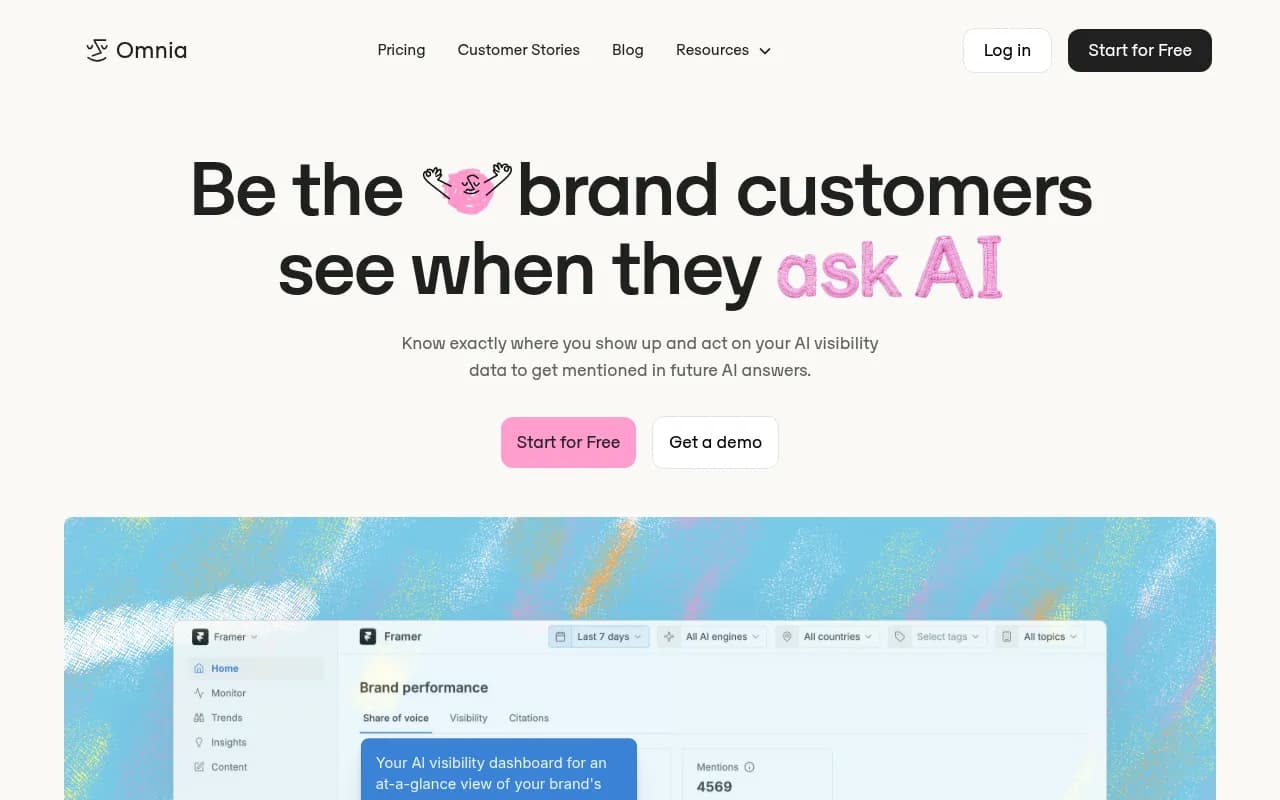

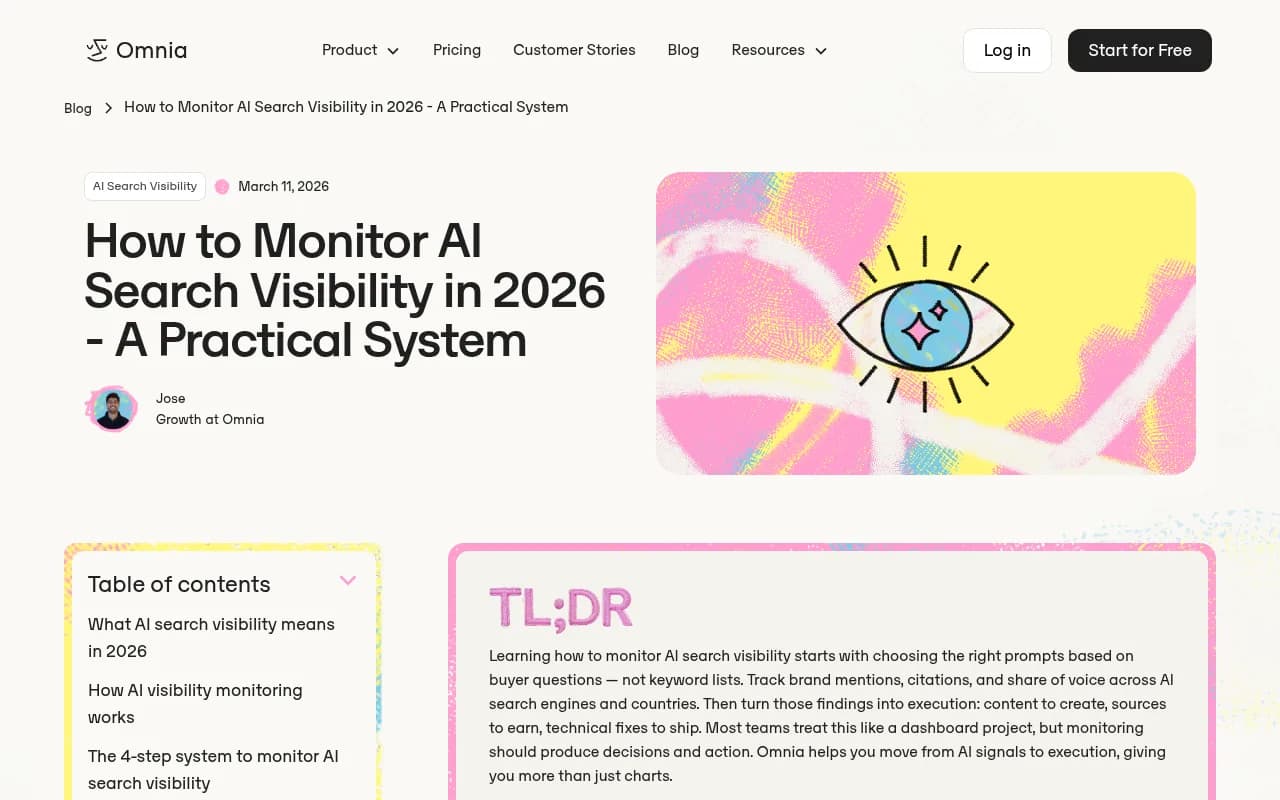

| Profound | Monitoring + reporting | Partial | No | No |

| Omnia | Share of voice tracking | Partial | No | No |

| Peec AI | Multi-language monitoring | No | No | No |

| DarkVisitors | AI bot access auditing | No | No | Yes |

| GetCito | Citation tracking | Yes | No | No |

The honest assessment: most tools in this space are monitoring dashboards. They show you where you stand but leave you to figure out what to do next. For a product launch where you're trying to move fast, that's a significant limitation.

Promptwatch is one of the few platforms that closes the loop -- it identifies which prompts you're missing, shows you the specific content gaps, and has a built-in writing agent to create content grounded in real citation data. For a 30-day sprint, that matters.

The 30-day checklist

Here's the full sequence compressed into a checklist:

Week 0 (pre-launch)

- Define 20-30 target prompts across comparison, problem-first, and use-case categories

- Set up AI visibility tracking (automated preferred)

- Audit robots.txt for AI crawler access

- Implement JSON-LD Product schema

- Run manual baseline across ChatGPT, Perplexity, Claude, Google AI Overviews

Day 1 (launch)

- Publish with FAQ section (minimum 10 questions matching target prompts)

- Include comparison table vs. top 2-3 competitors

- Add explicit "Best For" statement

- Submit updated sitemap

- Verify AI crawlers can access the page

Week 1

- Review first tracking data

- Identify top 5 citation gaps

- Begin external content push (Reddit, review sites, comparison articles)

- First content refresh (add data, expand FAQs)

Week 2

- Analyze which external sources AI is citing for your gap prompts

- Pitch or publish on 3-5 of those sources

- Second content refresh

- Check crawler logs for AI bot activity

Week 3

- Measure citation rate change vs. baseline

- Identify remaining gaps

- Double down on external sources that are working

- Third content refresh

Week 4

- Full metric review: citation rate, share of voice, AI-referred traffic

- Document what moved and what didn't

- Set ongoing monitoring cadence

What "cited within 30 days" actually looks like

To be clear about expectations: getting cited within 30 days is achievable, but it's not guaranteed for every product in every category. Highly competitive categories where established players dominate AI citations will take longer. Niche categories with less competition can move faster.

What you're really building in 30 days is the foundation: the right content structure, the external source presence, and the tracking infrastructure to know whether it's working. Some pages will earn citations in week two. Others will take six weeks. The difference is usually the quality of the external ecosystem you've built, not the quality of the product page itself.

The teams that get this right treat AI visibility as a launch requirement, not an afterthought. They set up tracking before the page goes live, structure content for AI consumption from day one, and actively close gaps in the weeks that follow.

The teams that don't will spend months wondering why their product page isn't showing up when buyers ask AI models for recommendations -- while their competitors, who did the work, keep getting cited.