Key takeaways

- AI visibility platforms vary widely: some only monitor where your brand appears, while others help you actually fix the gaps with content generation and optimization tools.

- Promptwatch is the only platform in this comparison that closes the full loop -- from gap analysis to AI-native content creation to traffic attribution.

- Profound is the strongest pure-monitoring option for enterprise teams that need deep prompt data and multi-model coverage.

- Relixir suits teams that want an AI-native CMS alongside their visibility tracking, with autonomous content publishing built in.

- Scrunch is a lighter-weight option for brands that want quick setup and basic share-of-voice data without a steep learning curve.

If you've noticed your organic traffic getting weird lately, you're not imagining it. A growing chunk of buyer research now happens inside ChatGPT, Perplexity, Claude, and Google's AI Overviews. People ask those tools to recommend vendors, compare products, and shortlist options. The brands that show up in those answers win the consideration. The ones that don't... often don't even know they're missing.

That's the problem AI visibility platforms exist to solve. But "AI visibility platform" covers a lot of ground. Some tools just show you a dashboard of mentions. Others help you understand why you're not being cited and what to do about it. For content teams specifically, that distinction matters enormously.

This guide breaks down four platforms that content teams are actively evaluating in 2026: Promptwatch, Profound, Relixir, and Scrunch. We'll look at what each one actually does, where it fits, and where it falls short.

Why content teams need a different kind of AI visibility tool

Most AI visibility tools were designed for brand or SEO managers who want to see a number go up. That's fine, but content teams have a different job. They need to know:

- Which topics and questions are driving AI citations in their category?

- What content is missing from their site that AI models are pulling from competitors?

- Which pages are actually being cited, and which ones are being ignored?

- How do they prioritize what to write next?

A monitoring-only dashboard doesn't answer those questions. It tells you your "share of voice" is 12% and your competitor's is 34%, then leaves you to figure out what to do with that. Content teams need the gap analysis, the prompt intelligence, and ideally some help generating the content itself.
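To make that "share of voice" number less abstract, here's a minimal sketch of one way it could be computed: the fraction of sampled AI responses that mention each brand. The brand names and responses are made up, the substring match is deliberately crude, and real platforms may define the metric differently (for example, as a share of all mentions rather than of all responses).

```python
from collections import Counter


def share_of_voice(responses, brands):
    """Return the fraction of responses that mention each brand (case-insensitive)."""
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            # Crude substring match; a real tool would need entity matching.
            if brand.lower() in lowered:
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Hypothetical sample of AI answers to prompts in one category.
responses = [
    "For this use case, consider Acme or Globex.",
    "Globex is a popular choice.",
    "Many teams use Globex or Initech.",
    "Acme is worth a look.",
]
sov = share_of_voice(responses, ["Acme", "Globex", "Initech"])
```

The number on its own says nothing about which prompts produced the gap, which is exactly the limitation described above.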

With that framing in mind, here's how the four platforms stack up.

Platform-by-platform breakdown

Promptwatch

Promptwatch is built around what it calls the "action loop": find gaps, create content, track results. That framing is accurate. It's not just a tracker.

The Answer Gap Analysis is the feature content teams will find most immediately useful. It shows you the specific prompts where competitors are getting cited but you're not. Not vague topic clusters -- actual prompts, with volume estimates and difficulty scores attached. You can sort by winnable opportunities and start writing to those gaps rather than guessing.
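As a rough illustration of the idea (not Promptwatch's actual implementation), a gap list boils down to filtering for prompts where a competitor is cited and you aren't, then ranking by how winnable each one looks. The `PromptResult` fields, the 0-100 difficulty scale, and the sort order below are all assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str
    cited_brands: set
    volume: int      # estimated monthly volume (hypothetical field)
    difficulty: int  # 0-100, higher is harder (hypothetical scale)


def answer_gaps(results, our_brand, competitors):
    """Prompts where at least one competitor is cited and our brand is not."""
    gaps = [
        r for r in results
        if our_brand not in r.cited_brands and r.cited_brands & competitors
    ]
    # "Winnable" here means lowest difficulty first, then highest volume.
    return sorted(gaps, key=lambda r: (r.difficulty, -r.volume))


# Hypothetical tracking data.
results = [
    PromptResult("best crm for startups", {"Globex"}, 900, 40),
    PromptResult("crm with email automation", {"Acme", "Globex"}, 500, 30),
    PromptResult("cheap crm for freelancers", {"Initech"}, 300, 20),
    PromptResult("what is a crm", set(), 2000, 10),
]
gaps = answer_gaps(results, "Acme", {"Globex", "Initech"})
gap_prompts = [g.prompt for g in gaps]
```

Prompts where nobody is cited are left out on purpose: they may be opportunities, but they aren't competitive gaps.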

From there, Promptwatch's built-in AI writing agent generates articles, listicles, and comparisons grounded in data from more than 880 million analyzed citations. The output isn't generic filler -- it's structured around what AI models actually tend to cite. That's a meaningful difference from just running a topic through a general-purpose AI writer.

On the tracking side, Promptwatch monitors 10 AI models: ChatGPT, Perplexity, Claude, Gemini, Google AI Overviews, Google AI Mode, Grok, DeepSeek, Copilot, and Meta AI. Page-level tracking shows which specific URLs are being cited and by which models. The AI Crawler Logs feature shows when AI crawlers visit your site, which pages they read, and any errors they hit -- something most competitors don't offer at all.
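If you want a feel for what crawler-log data looks like before buying a tool, a minimal sketch along these lines can summarize AI crawler hits from a web server access log. It assumes the common "combined" log format and matches a short, illustrative list of user-agent tokens (GPTBot, ClaudeBot, PerplexityBot); the sample log lines are made up and simplified, and this is not how any of the platforms above necessarily do it.

```python
import re
from collections import defaultdict

# Illustrative, incomplete list of AI crawler user-agent tokens.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Matches the request, status, size, referrer, and user agent of a combined-format line.
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_crawls(lines):
    """Count hits, error responses, and distinct paths per AI crawler."""
    summary = defaultdict(lambda: {"hits": 0, "errors": 0, "paths": set()})
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue  # skip lines that don't look like combined-format entries
        for token in AI_CRAWLER_TOKENS:
            if token in match.group("agent"):
                entry = summary[token]
                entry["hits"] += 1
                entry["paths"].add(match.group("path"))
                if int(match.group("status")) >= 400:
                    entry["errors"] += 1
                break
    return dict(summary)


# Made-up sample lines with simplified user-agent strings.
sample_lines = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [10/Jan/2026:10:01:00 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [10/Jan/2026:10:02:00 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (Windows NT 10.0) Firefox/130.0"',
    '203.0.113.5 - - [10/Jan/2026:10:03:00 +0000] "GET /blog/post HTTP/1.1" 200 8801 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
]
crawl_summary = summarize_ai_crawls(sample_lines)
```

User-agent strings can be spoofed, so a production version would likely also verify requests against the IP ranges each crawler operator publishes.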

A few other things worth knowing: Reddit and YouTube tracking surfaces discussions that influence AI recommendations (a channel that's easy to overlook), and ChatGPT Shopping tracking monitors when your brand appears in product recommendation carousels.

Pricing starts at $99/month for the Essential plan (1 site, 50 prompts, 5 articles). The Professional plan at $249/month adds crawler logs, state/city tracking, and 15 articles per month. Business is $579/month for 5 sites and 30 articles. There's a free trial.

For content teams that want to close the loop between visibility data and actual content production, Promptwatch is the most complete option in this comparison.

Profound

Profound is the enterprise-grade monitoring platform in this group. It covers 10+ AI models and has some of the deepest prompt volume data available -- useful for teams that want to prioritize which prompts to target based on actual search demand rather than gut feel.

Where Profound shines is in its breadth of model coverage and its structured approach to tracking brand mentions across AI engines. It's well-suited to larger organizations that need to report on AI visibility to stakeholders and want reliable, defensible data. The UI is clean and the reporting is solid.

The honest limitation: Profound is primarily a monitoring tool. It shows you where you stand and how you compare to competitors, but it doesn't generate content or tell you specifically what to write. Content teams using Profound will still need a separate workflow for actually acting on the data. That's not a dealbreaker if you have writers and a content strategy in place -- but it means Profound is one piece of the stack, not the whole thing.

Pricing starts at $99/month, which is competitive for a monitoring-focused feature set.

Relixir

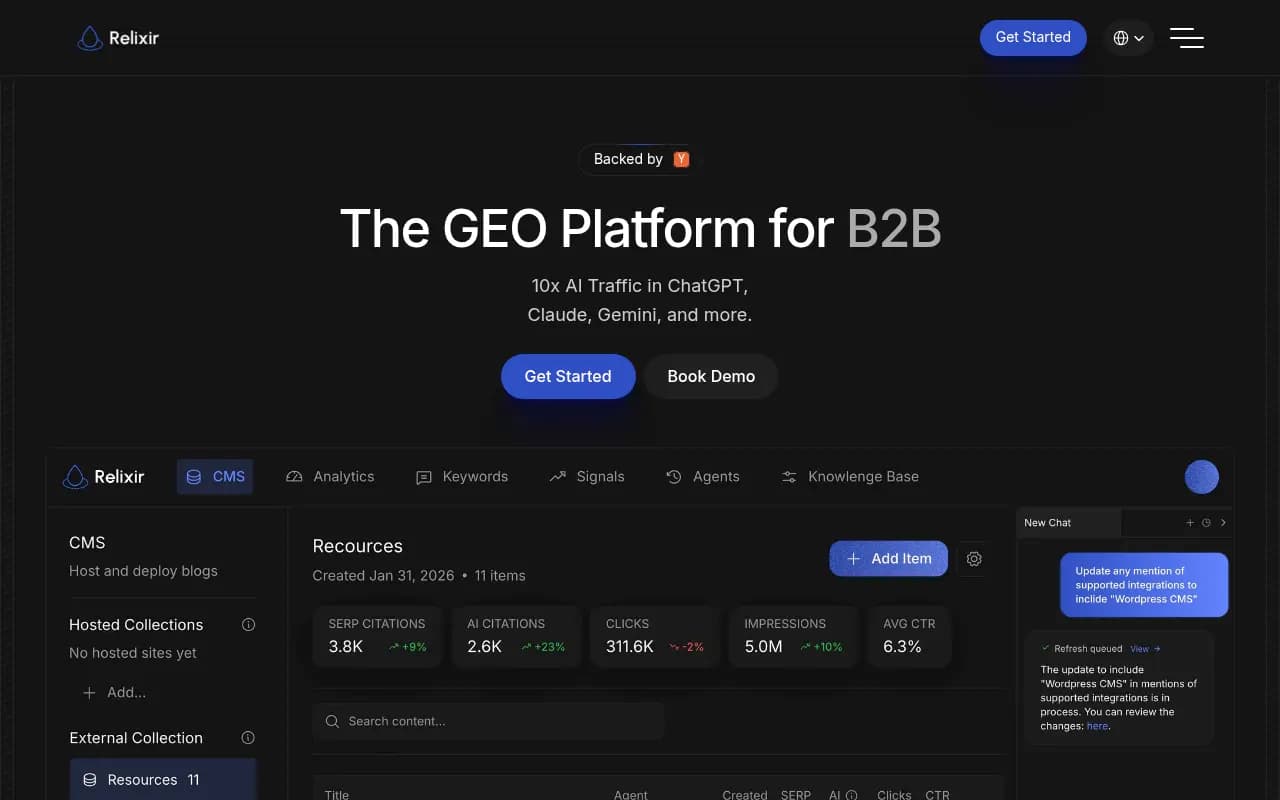

Relixir takes a different angle. It's positioned as an AI-native CMS with GEO capabilities built in -- meaning it's not just tracking your visibility, it's helping you publish content designed to rank in AI search from the start.

The autonomous content publishing angle is genuinely interesting for content teams that are resource-constrained. Relixir can identify gaps and generate content without requiring a human to manually brief and write each piece. For teams running lean, that's appealing.

The tradeoff is control. Autonomous publishing sounds great until you're explaining to your CMO why a piece went live without a proper review. Teams with strict editorial standards may find the workflow requires more guardrails than Relixir provides out of the box. It's also a newer platform, so the citation data depth and model coverage don't yet match what Promptwatch or Profound have built up.

That said, if your content team's primary bottleneck is production volume rather than strategy, Relixir is worth a look. It's built for teams that want to ship a lot of AI-optimized content quickly.

Scrunch

Scrunch is the lightest-weight option here. It's designed for brands that want to get a read on their AI visibility without a long onboarding process or a complex feature set.

Setup is fast, the interface is straightforward, and you can get a basic picture of your share of voice across major AI models without much configuration. For smaller teams or brands just starting to think about AI search, that low friction is genuinely valuable.

The ceiling is also lower. Scrunch doesn't offer the depth of prompt intelligence, the content generation tools, or the crawler log data that more advanced platforms provide. It's a good starting point, but content teams that want to move from "monitoring" to "optimizing" will likely outgrow it.

Side-by-side comparison

| Feature | Promptwatch | Profound | Relixir | Scrunch |
|---|---|---|---|---|
| AI models tracked | 10 (ChatGPT, Perplexity, Claude, Gemini, Grok, DeepSeek, Copilot, Meta AI, AI Overviews, AI Mode) | 10+ | Varies | Limited |
| Answer gap analysis | Yes | Partial | Yes | No |
| AI content generation | Yes (built-in writing agent) | No | Yes (autonomous CMS) | No |
| Prompt volume & difficulty scores | Yes | Yes | No | No |
| AI crawler logs | Yes | No | No | No |
| Reddit & YouTube tracking | Yes | No | No | No |
| ChatGPT Shopping tracking | Yes | No | No | No |
| Page-level citation tracking | Yes | Yes | Partial | No |
| Traffic attribution | Yes (GSC, snippet, server logs) | No | No | No |
| Starting price | $99/mo | $99/mo | Custom | Custom |
| Best for | Content teams wanting full action loop | Enterprise monitoring & reporting | High-volume content publishing | Basic brand monitoring |

Which platform fits your situation?

The right answer depends on what your content team actually needs to do.

If you're trying to build a repeatable system where you find gaps, create content to fill them, and track whether that content gets cited -- Promptwatch is the most purpose-built tool for that workflow. The combination of gap analysis, prompt intelligence, built-in content generation, and page-level tracking means you're not stitching together three different tools to do one job.

If your primary need is enterprise-grade reporting and you already have a content team that can act on data independently, Profound gives you solid monitoring with reliable multi-model coverage. It won't tell you what to write, but it'll tell you where you stand with confidence.

If production volume is your bottleneck and you want AI to handle more of the content creation autonomously, Relixir is worth evaluating. Just go in with clear editorial guardrails.

If you're just getting started and want to understand the basics of your AI visibility before committing to a more complex platform, Scrunch is a reasonable first step.

What to look for beyond the feature list

A few things that don't always show up in comparison tables but matter a lot in practice:

Data freshness. AI models' training data and citation patterns shift over time. Platforms that query AI models in real time (rather than relying on cached or infrequent snapshots) give you a more accurate picture of where you actually stand today.

Prompt customization. Generic prompts like "best [category] tool" are fine for benchmarking, but your buyers ask more specific questions. Platforms that let you define custom prompts -- including persona-specific or region-specific variations -- will surface more actionable data.
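A small sketch of what persona- and region-specific variations might look like in practice: expand one prompt template across every combination of a few variables. The template, the variable names, and the values are hypothetical, and no particular platform's import format is implied.

```python
from itertools import product


def expand_prompts(template, **variables):
    """Fill a prompt template with every combination of the given variable values."""
    keys = list(variables)
    combos = product(*(variables[key] for key in keys))
    return [template.format(**dict(zip(keys, combo))) for combo in combos]


# Hypothetical template and values.
prompts = expand_prompts(
    "best {category} tool for {persona} in {region}",
    category=["CRM"],
    persona=["startup founders", "enterprise IT"],
    region=["Germany", "Canada"],
)
```

The list grows multiplicatively with each variable, so it's worth checking the total against your plan's prompt limit before loading it anywhere.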

Citation source transparency. Knowing your brand is mentioned in 23% of responses is useful. Knowing which pages are being cited, and which Reddit threads or YouTube videos AI models are pulling from, is what actually tells you what to do next.

Traffic attribution. Visibility scores are an intermediate metric. What you actually care about is whether AI visibility is driving traffic and revenue. Platforms that connect AI citations to actual site visits (via Google Search Console (GSC) integration, a tracking snippet, or server log analysis) let you make the business case for this work.
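For a rough sense of how referrer-based attribution could work, the sketch below classifies visits by referrer hostname and counts AI-sourced visits per page. The hostname-to-source mapping is an illustrative assumption, not an authoritative list, and the visit data is invented. Some AI-driven visits may arrive with no referrer at all, so counts like these are best read as a lower bound.

```python
from collections import Counter
from urllib.parse import urlparse

# Illustrative mapping of referrer hostnames to AI sources (assumed, not exhaustive).
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer):
    """Return the AI source name for a referrer URL, or None if it isn't recognized."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def ai_visits_by_page(visits):
    """Count visits per (page, AI source) from (page, referrer) pairs."""
    counts = Counter()
    for page, referrer in visits:
        source = classify_referrer(referrer)
        if source:
            counts[(page, source)] += 1
    return counts


# Invented visit data: (landing page, referrer URL).
visits = [
    ("/pricing", "https://chatgpt.com/"),
    ("/pricing", "https://www.perplexity.ai/search?q=crm"),
    ("/blog/post", "https://chatgpt.com/"),
    ("/pricing", "https://www.google.com/"),
    ("/pricing", ""),
    ("/pricing", "https://chatgpt.com/"),
]
attributed = ai_visits_by_page(visits)
```

Joining these counts with page-level citation data is what turns a visibility score into something you can tie to traffic.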

Most monitoring-only platforms stop at the visibility score. If your content team is going to invest time and budget in AI search optimization, you want a platform that can show the downstream impact.

The bottom line

The AI visibility platform market has matured quickly, but there's still a wide gap between tools that show you data and tools that help you act on it. For content teams specifically, that gap is the whole game. Monitoring your share of voice without a clear path to improving it is just a more expensive way to feel anxious about your competitor's numbers.

Promptwatch is the platform in this comparison that's most explicitly designed around that action loop. Profound is the strongest monitoring option if you're at enterprise scale and have the internal resources to translate data into content strategy. Relixir suits teams optimizing for publishing velocity. Scrunch works as a low-commitment starting point.

Whatever you choose, the teams that will win in AI search over the next few years are the ones that treat it as a content problem, not just a tracking problem. The visibility data is only as valuable as what you do with it.