Summary

- Answer gap analysis reveals which AI prompts competitors appear in while you're missing — the AI equivalent of traditional keyword gap analysis

- Many brands that rank well in Google are invisible in ChatGPT, Claude, and Perplexity because they lack the specific content AI models want to cite

- The fix is a three-step loop: find the gaps (which prompts you're missing), create content engineered for AI citation, and track results to close the visibility gap

- Tools like Promptwatch automate this process by showing exactly which prompts competitors own, what content is missing from your site, and generating articles grounded in real citation data

- Unlike traditional SEO gap analysis (which focuses on keywords), answer gap analysis targets conversational queries and the structured answers AI models generate — a fundamentally different content challenge

What is answer gap analysis?

Answer gap analysis is the process of identifying prompts and questions that trigger AI-generated responses where your competitors are cited but you're not. It's the AI search equivalent of keyword gap analysis, but instead of tracking Google rankings, you're monitoring visibility across ChatGPT, Claude, Perplexity, Gemini, and other large language models.

The core insight: if a competitor consistently appears in AI responses for prompts relevant to your business, they have content that AI models trust and cite. You don't. That's the gap.

Traditional keyword gap analysis asks "What terms does my competitor rank for in Google that I don't?" Answer gap analysis asks "What prompts does my competitor get cited for in AI search that I don't?" The mechanics are similar but the execution is completely different because AI models cite sources differently than Google ranks pages.

Here's what makes this challenging: you might rank #1 in Google for a keyword but be completely invisible when someone asks ChatGPT the same question. AI models don't just look at rankings — they evaluate content for citation-worthiness based on structure, specificity, recency, and how well it directly answers the query. A blog post optimized for Google might be useless for AI citation.

Why traditional content gap analysis fails for AI search

Most marketers run content gap analysis using tools like Semrush or Ahrefs. You plug in competitor domains, export a list of keywords they rank for that you don't, and start writing. This works for traditional SEO but breaks down for AI visibility.

The problem is threefold:

First, AI models don't "rank" content the way Google does. There's no position 1-10. Instead, models cite a handful of sources in their responses — sometimes zero, sometimes five, rarely more than ten. If you're not in that small set, you're invisible. Traditional gap analysis shows you keywords to target but doesn't tell you how to structure content so AI models actually cite it.

Second, the queries people use in AI search are fundamentally different from Google searches. Google queries are short and keyword-focused ("best project management software"). AI prompts are conversational and context-rich ("I'm managing a remote team of 15 people across 3 time zones and need a project management tool that integrates with Slack and has good mobile apps. What should I use?"). Your keyword gap analysis won't surface these longer, more specific prompts.

Third, AI models appear to reward different signals than traditional SEO does. SEO practice emphasizes backlinks, domain authority, and user engagement metrics. AI models prioritize content that directly answers questions, includes specific data points, and is structured in a way that's easy to parse and cite. A page that ranks well in Google might lack the specificity AI models need.

The result: brands that dominate Google search are often invisible in AI search. They're optimizing for the wrong signals.

How answer gap analysis works

Answer gap analysis flips the traditional workflow. Instead of starting with keywords, you start with prompts — the actual questions people ask AI models. Then you identify which competitors appear in the responses and what content they have that you don't.

The process breaks down into three steps:

Step 1: Identify the prompts that matter

You need a list of prompts relevant to your business. Not keywords — full conversational queries that real users type into ChatGPT, Claude, or Perplexity.

Some platforms provide prompt volume estimates and difficulty scores, similar to keyword metrics in traditional SEO. High-volume, low-difficulty prompts are your best opportunities — lots of people asking, not much competition for citations.

You also want to look at query fan-outs: how one prompt branches into related sub-queries. For example, "best CRM for small business" might fan out into "best CRM for real estate agents", "best CRM under $50/month", "best CRM with email marketing". Each of these is a separate opportunity.
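To make the fan-out idea concrete, here is a minimal Python sketch that expands a seed prompt into sub-queries from modifier lists. The `fan_out` helper and the modifier values are hypothetical and only reproduce the CRM example above. In practice, fan-outs would come from observed prompt data rather than templates.

```python
def fan_out(seed: str, modifiers: dict[str, list[str]]) -> list[str]:
    """Expand a seed prompt into sub-queries by appending one modifier at a time.

    `modifiers` maps a connector word ("for", "under", "with") to the values
    to try with it. Both are illustrative assumptions, not real prompt data.
    """
    variants = []
    for connector, values in modifiers.items():
        for value in values:
            variants.append(f"{seed} {connector} {value}")
    return variants


sub_queries = fan_out(
    "best CRM",
    {
        "for": ["real estate agents"],
        "under": ["$50/month"],
        "with": ["email marketing"],
    },
)
for query in sub_queries:
    print(query)
```

Each generated variant is a candidate to check for volume and existing citations before you commit to writing content for it.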

Step 2: Run competitive citation analysis

For each prompt, you need to see which domains AI models are citing. This is where most teams get stuck — manually prompting ChatGPT and Claude for every query is impossibly slow.

Platforms that automate this process query multiple AI models simultaneously and extract the cited sources from each response. You get a heatmap showing which competitors dominate which prompts across which models.

The pattern you're looking for: prompts where competitors consistently appear but you don't. That's the gap.
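If you already have citation data exported from a tracking tool or collected by hand, the gap check itself is simple. The sketch below assumes a hypothetical record format of `(prompt, model, cited_domain)` tuples and uses made-up placeholder domains. The `min_models` threshold for "consistently cited" is also an assumption you would tune.

```python
from collections import defaultdict


def find_gaps(citations, your_domain, min_models=2):
    """Return prompts where competitors are cited and your domain is not.

    citations: iterable of (prompt, model, cited_domain) tuples (assumed format).
    A competitor counts as "consistently cited" when at least `min_models`
    distinct models cite it for the same prompt.
    """
    by_prompt = defaultdict(lambda: defaultdict(set))
    for prompt, model, domain in citations:
        by_prompt[prompt][domain].add(model)

    gaps = {}
    for prompt, domains in by_prompt.items():
        if your_domain in domains:
            continue  # you are already cited for this prompt, so no gap
        consistent = {
            domain: sorted(models)
            for domain, models in domains.items()
            if len(models) >= min_models
        }
        if consistent:
            gaps[prompt] = consistent
    return gaps


# Placeholder sample data, not real measurements.
records = [
    ("best PM tool for remote teams", "chatgpt", "competitor-a.example"),
    ("best PM tool for remote teams", "perplexity", "competitor-a.example"),
    ("best PM tool for remote teams", "claude", "competitor-b.example"),
    ("how to choose a PM tool", "chatgpt", "yourtool.example"),
    ("how to choose a PM tool", "claude", "competitor-c.example"),
    ("how to choose a PM tool", "perplexity", "competitor-c.example"),
]
gaps = find_gaps(records, "yourtool.example")
print(gaps)
```

With this sample, only the remote-teams prompt is reported as a gap: one competitor is cited by two models there, while your domain already appears for the second prompt.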

Step 3: Analyze what content is missing

Once you know which prompts you're losing, you need to figure out why. What content do competitors have that you don't? What angles are they covering? What specificity are they providing that AI models find citation-worthy?

This is where answer gap analysis diverges most sharply from traditional gap analysis. You're not just looking at keywords — you're analyzing the structure, depth, and specificity of competitor content to understand what makes it citation-worthy.

Some platforms surface this automatically by showing you the exact pages competitors are getting cited for, the topics they cover, and the content patterns AI models prefer. Others require manual analysis.

The action loop: From gaps to content to visibility

Identifying gaps is useful but insufficient. The real value comes from closing them — creating content that gets cited.

This is where most monitoring-only tools fall short. They show you the problem but leave you stuck figuring out the solution. The platforms that actually drive results build an action loop:

- Find the gaps: Answer gap analysis shows which prompts competitors own but you don't, and what content is missing from your site.

- Create content that earns AI citations: Generate articles, listicles, and comparisons engineered for AI citation. The aim is not generic SEO filler but content grounded in real citation data, prompt volumes, and competitor analysis.

- Track the results: Monitor your visibility scores as AI models start citing your new content. See which pages are being cited, how often, and by which models.

This cycle — find gaps, generate content, track results — is what separates optimization platforms from monitoring dashboards.

Promptwatch is built around this loop. Its Answer Gap Analysis feature shows exactly which prompts competitors are visible for but you're not, then surfaces the specific content your website is missing. The built-in AI writing agent generates articles grounded in 880M+ citations analyzed, prompt volumes, persona targeting, and competitor analysis. Page-level tracking shows which pages are being cited and by which models, so you can measure the impact of each new piece of content.

Other platforms like Profound and AthenaHQ offer strong monitoring capabilities but lack the content generation and optimization tools needed to close the loop.

Practical example: Running an answer gap analysis

Let's walk through a concrete example. Suppose you run a SaaS company selling project management software. You rank well in Google for terms like "project management software" and "team collaboration tools", but you're not showing up in ChatGPT or Perplexity when users ask for recommendations.

You start by identifying relevant prompts. Instead of broad keywords, you're looking for conversational queries:

- "What's the best project management tool for remote teams?"

- "I need a project management app that integrates with Slack and has time tracking. What do you recommend?"

- "What are the main differences between Asana, Monday, and ClickUp?"

- "How do I choose a project management tool for a startup with 10 people?"

Next, you run these prompts through multiple AI models and extract the cited sources. You discover that Asana, Monday, and ClickUp are consistently cited across most prompts. Your brand appears in zero responses.

You analyze the content competitors are getting cited for. Asana has a detailed comparison page breaking down features by use case (remote teams, marketing teams, engineering teams). Monday has a guide on choosing project management software with a decision tree based on team size and industry. ClickUp has case studies showing how specific companies use their tool.

You realize what's missing from your site: you have generic marketing pages but no detailed comparison content, no use-case-specific guides, and no decision frameworks. AI models can't cite you because you haven't given them citation-worthy content.

You create three new pieces:

- A comparison guide: "Asana vs Monday vs ClickUp vs [Your Tool]: Which is Best for Remote Teams in 2026?"

- A decision framework: "How to Choose Project Management Software: A Step-by-Step Guide for Startups"

- A use-case guide: "Project Management for Marketing Teams: Features That Actually Matter"

Each piece is structured for AI citation: clear headings, specific data points, direct answers to common questions, and comparison tables.

Two weeks later, you check your visibility. Your comparison guide is now being cited by Claude and Perplexity for prompts about remote team tools. Your decision framework appears in ChatGPT responses about choosing software for startups. Your use-case guide is cited by Gemini for marketing-specific queries.

You've closed the gap.

Tools for answer gap analysis

Most traditional SEO tools (Semrush, Ahrefs, Moz) don't support AI search monitoring in depth. Semrush has added some AI visibility features but uses fixed prompts and doesn't offer content gap analysis for AI. Ahrefs Brand Radar tracks brand mentions in AI but doesn't show which prompts competitors are cited for or help you identify content gaps.

The platforms purpose-built for AI search visibility fall into two categories: monitoring-only tools and optimization platforms.

Monitoring-only tools show you where you're visible and where you're not, but don't help you fix it:

- Otterly.AI: Affordable monitoring across multiple models, but no content gap analysis or optimization features

- Peec.ai: Multi-language tracking with good persona customization, but no tools to help you create citation-worthy content

- Search Party: Agency-oriented with limited prompt metrics and no content gap analysis

Optimization platforms close the loop by helping you identify gaps and create content to fill them:

- Promptwatch: The only platform rated as a "Leader" across all categories in a 2026 comparison of 12 GEO (generative engine optimization) platforms. Answer Gap Analysis shows which prompts competitors own, what content is missing, and generates AI-optimized articles grounded in 880M+ citations. Also includes AI crawler logs, Reddit/YouTube tracking, ChatGPT Shopping monitoring, and page-level citation tracking. Pricing starts at $99/mo.

- Profound: Strong feature set with good prompt tracking and competitor analysis, but higher price point and no Reddit tracking or ChatGPT Shopping visibility

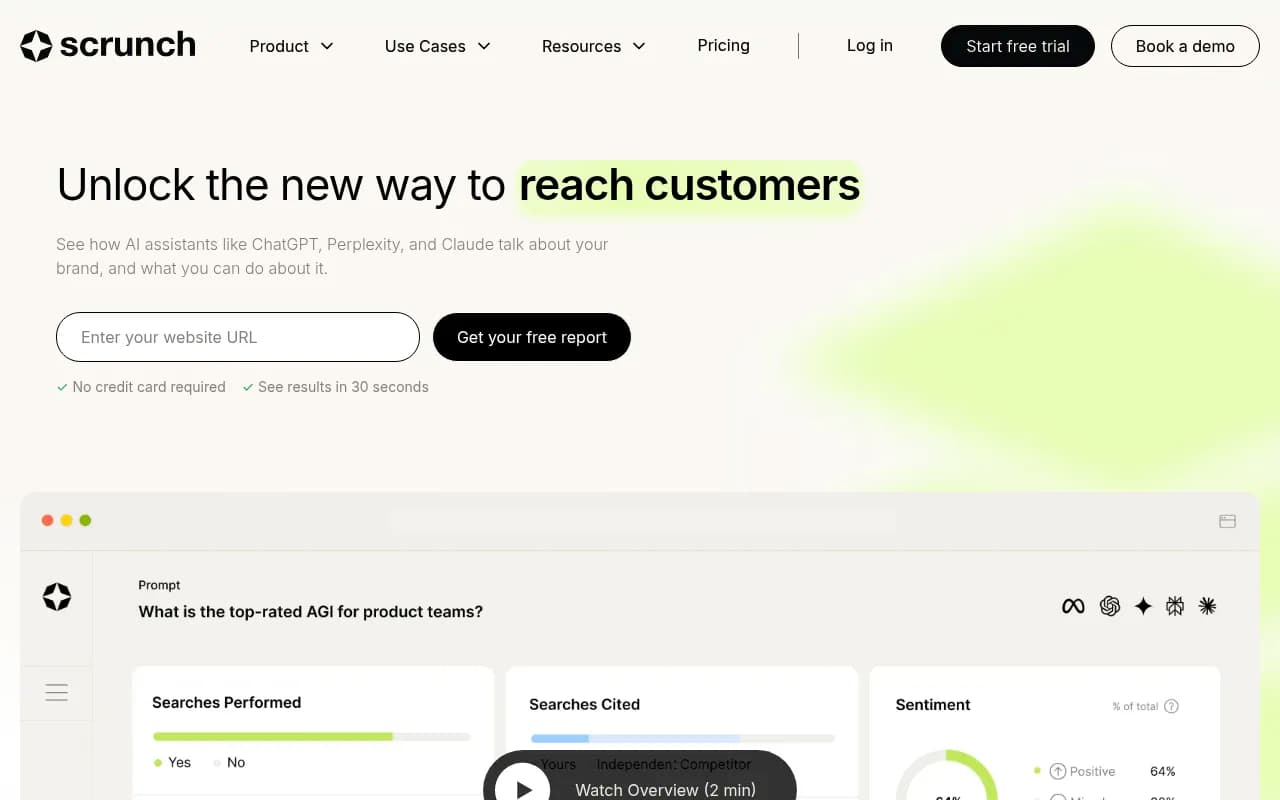

- Scrunch: Solid monitoring and some optimization features, but limited compared to Promptwatch and no content generation capabilities

Common mistakes in answer gap analysis

Teams new to AI search visibility make predictable mistakes:

Mistake 1: Treating AI prompts like Google keywords. You can't just take your keyword list and expect it to work for AI search. Prompts are longer, more conversational, and more context-specific. "Best CRM" is a Google keyword. "I run a real estate agency with 8 agents and need a CRM that tracks leads from Zillow and integrates with our email marketing tool. What should I use?" is an AI prompt. Content that ranks for the first won't necessarily get cited for the second.

Mistake 2: Focusing only on brand mentions. Many tools track how often your brand is mentioned in AI responses. That's useful but insufficient. You also need to know which prompts you're missing entirely — the queries where competitors are cited but you're not even in the conversation.

Mistake 3: Ignoring Reddit and YouTube. AI models cite Reddit threads and YouTube videos frequently, especially for product recommendations and how-to queries. If your answer gap analysis only looks at competitor websites, you're missing a huge part of the picture.

Mistake 4: Creating generic content. AI models don't cite vague, high-level content. They cite specific, detailed answers with concrete data points. If your content says "Our tool is great for remote teams", you won't get cited. If it says "Our tool includes async video messaging, timezone-aware scheduling, and integrates with Slack, Zoom, and Google Meet — features specifically built for remote teams across multiple time zones", you might.

Mistake 5: Not tracking results. You can't improve what you don't measure. If you create new content to fill gaps but don't track whether AI models start citing it, you have no idea if your strategy is working.
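Tracking can start as simply as comparing two snapshots of the prompts you are cited for. The sketch below is a hedged illustration: `diff_snapshots` and the sample prompt sets are hypothetical, and how you collect each snapshot depends on your tooling.

```python
def diff_snapshots(before: set[str], after: set[str]) -> dict[str, list[str]]:
    """Compare the prompts you were cited for in two tracking snapshots."""
    return {
        "gained": sorted(after - before),
        "lost": sorted(before - after),
        "kept": sorted(before & after),
    }


# Placeholder snapshots taken before and after publishing new content.
last_month = {"how to choose a PM tool"}
this_month = {"how to choose a PM tool", "best PM tool for remote teams"}
change = diff_snapshots(last_month, this_month)
print(change["gained"])
```

Prompts under "gained" point to content that is working. Prompts under "lost" are worth re-checking before you move on to new gaps.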

Answer gap analysis vs traditional gap analysis

Here's a side-by-side comparison:

| Dimension | Traditional Gap Analysis | Answer Gap Analysis |
|---|---|---|
| Query type | Short keywords ("project management software") | Conversational prompts ("What project management tool should I use for a remote team of 15?") |
| Ranking model | Position 1-100 in Google | Cited or not cited in AI response (binary) |
| Success metric | Keyword rankings, organic traffic | Citation frequency, AI visibility score |
| Content signals | Backlinks, domain authority, user engagement | Specificity, structure, direct answers, recency |
| Tools | Semrush, Ahrefs, Moz | Promptwatch, Profound, AthenaHQ |
| Optimization goal | Rank higher in Google SERPs | Get cited by AI models |
| Content format | SEO-optimized blog posts, landing pages | Citation-worthy guides, comparisons, decision frameworks |

How to prioritize which gaps to fill first

You'll identify dozens or hundreds of prompts where competitors are cited but you're not. You can't create content for all of them at once. Prioritization matters.

Here's a framework:

High priority: High volume + low difficulty + high business relevance. These are prompts lots of people are asking, where competition for citations is relatively low, and that directly relate to your product or service. If you sell CRM software and discover a high-volume prompt like "What's the best CRM for real estate agents under $100/month?" where only two competitors are consistently cited, that's a high-priority gap.

Medium priority: High volume + high difficulty + high business relevance. These are competitive prompts where lots of brands are fighting for citations, but the volume justifies the effort. You might not win immediately, but creating strong content positions you to gain visibility over time.

Low priority: Low volume + low difficulty + medium business relevance. These are niche prompts with limited prompt volume. They're easy wins but won't move the needle much. Fill these gaps when you have extra capacity.

Ignore: Low volume + high difficulty + low business relevance. These prompts aren't worth your time.

Some platforms provide prompt difficulty scores and volume estimates automatically. Others require manual judgment.
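If you are doing this by hand, the framework above can be written as a small lookup. This is a minimal sketch: the `priority_tier` function, the "review manually" fallback for combinations the framework doesn't name, and the sample backlog are all assumptions for illustration. It takes high/medium/low labels directly, because the cutoffs that turn raw scores into labels are yours to define.

```python
# The four combinations named in the framework: (volume, difficulty, relevance).
TIERS = {
    ("high", "low", "high"): "high",
    ("high", "high", "high"): "medium",
    ("low", "low", "medium"): "low",
    ("low", "high", "low"): "ignore",
}

# Sort order for a backlog; unnamed combinations sit between "low" and "ignore".
TIER_ORDER = ["high", "medium", "low", "review manually", "ignore"]


def priority_tier(volume: str, difficulty: str, relevance: str) -> str:
    """Map a prompt's labels to a tier, falling back to manual review."""
    return TIERS.get((volume, difficulty, relevance), "review manually")


# Placeholder backlog rows: (prompt, volume, difficulty, relevance).
backlog = [
    ("niche prompt", "low", "low", "medium"),
    ("competitive prompt", "high", "high", "high"),
    ("best CRM for real estate agents under $100/month", "high", "low", "high"),
]
ranked = sorted(backlog, key=lambda row: TIER_ORDER.index(priority_tier(*row[1:])))
for prompt, *labels in ranked:
    print(priority_tier(*labels), "-", prompt)
```

The fallback matters because real prompts often land on combinations the four tiers don't cover, such as high volume with only medium relevance.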

Measuring success: What good looks like

How do you know if your answer gap strategy is working? Track these metrics:

Citation frequency: How often are AI models citing your content across all tracked prompts? This should increase as you fill gaps.

Prompt coverage: What percentage of relevant prompts do you appear in? If you've identified 100 high-priority prompts and you're cited in 15, your coverage is 15%. Target 50%+ over time.

Model distribution: Are you visible across multiple AI models (ChatGPT, Claude, Perplexity, Gemini) or just one? Diversification reduces risk.

Page-level performance: Which specific pages are being cited most often? This tells you what content formats and topics work best.

Traffic attribution: Are AI citations driving actual traffic to your site? Some platforms offer code snippets, Google Search Console integration, or server log analysis to connect AI visibility to real visitors and revenue.
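The first four metrics can be computed from the same kind of citation records used for gap detection. The sketch below assumes a hypothetical record format of `(prompt, model, cited_domain, cited_url)` and uses placeholder data chosen to match the 15-out-of-100 coverage example above. Traffic attribution is left out because it needs analytics or server log data.

```python
from collections import Counter


def visibility_metrics(tracked_prompts, citations, your_domain):
    """Summarize your citations across a set of tracked prompts.

    citations: iterable of (prompt, model, cited_domain, cited_url) tuples
    (assumed format). Only citations of `your_domain` on tracked prompts count.
    """
    mine = [
        record for record in citations
        if record[2] == your_domain and record[0] in tracked_prompts
    ]
    covered = {prompt for prompt, _model, _domain, _url in mine}
    coverage = len(covered) / len(tracked_prompts) if tracked_prompts else 0.0
    return {
        "citation_frequency": len(mine),
        "prompt_coverage": coverage,
        "model_distribution": Counter(model for _p, model, _d, _u in mine),
        "top_pages": Counter(url for _p, _m, _d, url in mine).most_common(3),
    }


# Placeholder data: 100 tracked prompts, your domain cited for 15 of them.
tracked = {f"prompt-{i}" for i in range(100)}
records = [
    (f"prompt-{i}", "chatgpt" if i % 2 == 0 else "claude", "yourtool.example", "/compare")
    for i in range(15)
]
records.append(("prompt-20", "gemini", "competitor.example", "/guide"))

metrics = visibility_metrics(tracked, records, "yourtool.example")
print(f"{metrics['prompt_coverage']:.0%}")  # prints 15%
```

Here `model_distribution` shows citations from only two models, which is the kind of concentration the model distribution metric is meant to surface.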

Promptwatch tracks all of these metrics and shows them in a unified dashboard. You can see your visibility score trend over time, which prompts you've gained or lost, and which pages are driving the most citations.

The future of answer gap analysis

AI search is still early. Most brands haven't started optimizing for it yet, which means the gaps are wide and the opportunities are massive.

But that window is closing. As more companies realize they're invisible in ChatGPT and Perplexity, competition for citations will increase. The brands that start now — identifying gaps, creating citation-worthy content, and tracking results — will build a durable advantage.

Two trends to watch:

Trend 1: AI models will likely get better at citing diverse sources. Right now, AI models tend to cite the same handful of authoritative domains repeatedly. If models improve as expected, they'll cite a wider range of sources, which would mean more opportunities for smaller brands to gain visibility.

Trend 2: Prompt complexity will increase. As users get more comfortable with AI search, their prompts will become longer and more specific. "Best CRM" will become "Best CRM for a 50-person B2B SaaS company with a sales team in the US and customer success team in Europe, integrating with Salesforce and HubSpot, under $10k/year." The brands that create content addressing these hyper-specific queries will dominate.

Answer gap analysis isn't a one-time project. It's an ongoing process of identifying where you're invisible, creating content to fill the gaps, and tracking your progress. The brands that build this into their regular workflow will win in AI search.