Key takeaways

- Peec.ai is a strong monitoring tool for multi-language teams, but it stops at tracking -- there's no built-in content generation to act on what you find.

- Relixir takes a different approach with an AI-native CMS and autonomous content publishing, but that automation comes with tradeoffs around control and transparency.

- Promptwatch is the only platform of the three that closes the full loop: find gaps, generate content grounded in real citation data, then track whether it worked.

- If your goal is to actually appear in AI search results (not just measure whether you do), the platform you choose needs to do more than monitor.

The GEO platform market in 2025 was full of noise. Every tool claimed to help you "dominate AI search" or "optimize for LLMs." But when you looked closely at what they actually did, most of them were dashboards. They showed you a score. They told you your competitors were mentioned more than you. Then they left you to figure out what to do about it.

This comparison focuses on three platforms that took meaningfully different approaches: Peec.ai, Relixir, and Promptwatch. One is a monitoring specialist. One bets on autonomous publishing. One tries to connect visibility data to content creation to measurable results. Here's what each actually delivered.

What "ranking in AI search" actually means

Before comparing platforms, it's worth being precise about what we're measuring. In traditional SEO, ranking means appearing on page one of Google. In AI search, it means getting cited -- your brand, your URL, or your content appearing in a response from ChatGPT, Perplexity, Claude, Gemini, or another model.

Getting cited isn't random. AI models pull from sources they've indexed and trust. They favor content that directly answers the question being asked, comes from authoritative domains, and matches the specific framing of the prompt. That means the path to AI visibility runs through content -- the right content, targeting the right prompts, published on the right kind of pages.

A GEO platform that only monitors tells you whether you're being cited. A GEO platform that actually helps you rank tells you why you're not being cited and gives you the tools to fix it.

That distinction is the core of this comparison.

Peec.ai: solid monitoring, limited action

Peec.ai built its reputation on breadth. It covers a wide range of AI models, supports 115+ languages, and gives marketing teams a clean way to track brand visibility, sentiment, and competitive positioning across LLMs.

For teams that need to report on AI visibility across multiple markets and languages, Peec.ai is genuinely useful. The geographic targeting is more granular than most competitors, and the sentiment tracking gives you a read on how AI models describe your brand, not just whether they mention you.

Pricing runs from $97 to $545/month depending on the plan, which puts it in the mid-range of the market.

The limitation is what happens after you get the data. Peec.ai tells you that a competitor is being cited for a set of prompts you're missing. It shows you the gap. But it doesn't help you close it. There's no content generation, no answer gap analysis that maps to specific content briefs, no way to go from "I'm invisible for this prompt" to "here's the article that will fix it."

For teams with a strong content operation already in place, that's manageable -- you take the data and hand it to your writers. But for teams that need the full workflow in one place, Peec.ai leaves a significant gap.

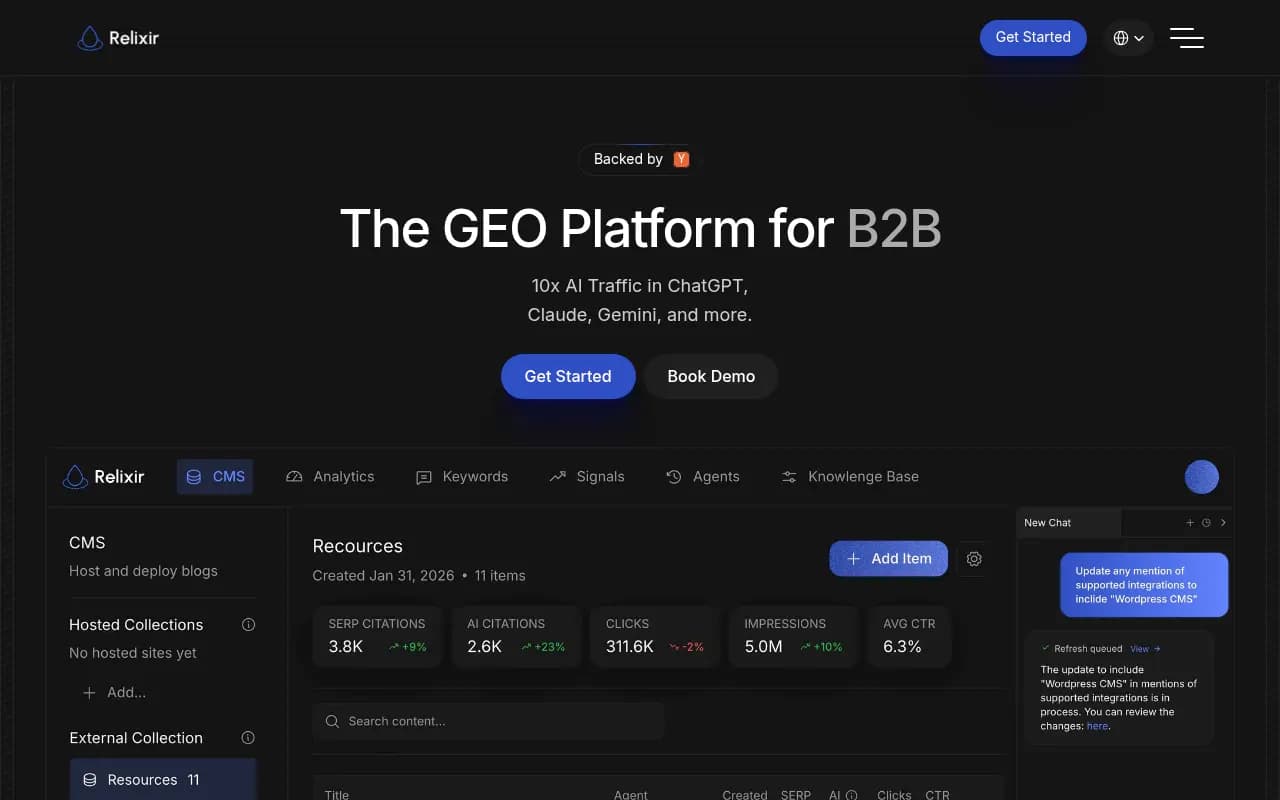

Relixir: autonomous publishing is interesting, but watch the tradeoffs

Relixir takes the opposite approach. Rather than giving you data and leaving you to act on it, Relixir leans into automation -- it has an AI-native CMS and can autonomously generate and publish content designed to rank in AI search.

The pitch is appealing: you connect your site, define your topics and competitors, and Relixir starts producing content that targets AI citation opportunities. For teams with limited bandwidth, that sounds like a solution.

In practice, autonomous publishing is more complicated. The content Relixir generates needs to be reviewed before it goes live -- or at least it should be. AI-generated content that's published without human review can introduce factual errors, tone mismatches, or brand inconsistencies that are hard to walk back once AI models have indexed them. A few teams that used Relixir in 2025 found themselves spending more time editing auto-published content than they expected.

There's also a transparency question. When content is generated and published autonomously, it can be hard to understand why specific topics were chosen, what citation data drove the decision, or how to prioritize one piece over another. That opacity makes it difficult to learn from what's working.

Relixir is genuinely interesting as a concept, and for some teams it will be the right fit. But "autonomous" doesn't mean "hands-off" in practice, and the lack of granular control over the content strategy is a real limitation for brands that care about voice and accuracy.

Promptwatch: the full loop

Promptwatch approaches the problem differently. The core idea is that visibility in AI search is an optimization problem, not just a measurement problem -- and optimization requires a cycle: find the gaps, create content to fill them, then track whether it worked.

Finding the gaps

Promptwatch's Answer Gap Analysis shows you exactly which prompts your competitors are being cited for that you're not. Not just "you're missing 30% of prompts in your category" -- it shows you the specific questions, the specific competitors winning them, and the specific content those competitors have that you don't.

That's the starting point for any content decision. You're not guessing what to write. You're looking at real citation data, drawn from the 880M+ citations Promptwatch has analyzed, and picking the prompts where you have the best chance of winning.
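To make the idea concrete, here is a minimal sketch of what an answer gap computation could look like. It is not Promptwatch's implementation: the `citations` data, the brand names, and the `answer_gaps` function are all hypothetical, and the sketch assumes you already have a list of (prompt, cited brand) pairs collected from AI responses.

```python
from collections import defaultdict

# Hypothetical sample data: (prompt, brand cited in the AI response).
citations = [
    ("best crm for startups", "CompetitorA"),
    ("best crm for startups", "CompetitorB"),
    ("crm with email automation", "CompetitorA"),
    ("crm with email automation", "YourBrand"),
    ("affordable crm for agencies", "CompetitorB"),
]


def answer_gaps(citations, your_brand):
    """Map each prompt where your_brand is never cited to the brands cited instead."""
    brands_by_prompt = defaultdict(set)
    for prompt, brand in citations:
        brands_by_prompt[prompt].add(brand)
    return {
        prompt: sorted(brands)
        for prompt, brands in brands_by_prompt.items()
        if your_brand not in brands
    }


gaps = answer_gaps(citations, "YourBrand")
for prompt, winners in gaps.items():
    print(f"{prompt}: cited instead -> {', '.join(winners)}")
```

The output is the list of specific prompts you are missing, each paired with the competitors winning it, which is the shape of data a content brief needs.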

Creating content that gets cited

The built-in AI writing agent generates articles, listicles, and comparisons grounded in that citation data. It factors in prompt volumes, difficulty scores, persona targeting, and competitor analysis. The output isn't generic SEO content -- it's structured to answer the specific questions users are asking AI models, in the format those models tend to cite.

This is the part most competitors skip entirely. Peec.ai doesn't have it. Relixir automates it but removes the strategic control. Promptwatch gives you the data and the generation tool together, so you can make deliberate decisions about what to publish and why.

Tracking what actually changed

After content goes live, Promptwatch tracks whether AI models start citing it. Page-level tracking shows which URLs are being cited, by which models, and how often. Traffic attribution (via code snippet, GSC integration, or server log analysis) connects those citations to actual visits and revenue.
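As a rough illustration of page-level tracking, the sketch below counts how often each URL on your domain is cited, broken down by model. The `observations` records, the example URLs, and the `citations_by_page` function are hypothetical; a real platform would collect these records by sampling AI responses at scale.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical observations: one record per sampled AI response,
# listing the URLs that response cited.
observations = [
    {"model": "chatgpt", "cited_urls": ["https://example.com/guide", "https://other.com/a"]},
    {"model": "perplexity", "cited_urls": ["https://example.com/guide"]},
    {"model": "chatgpt", "cited_urls": ["https://example.com/pricing"]},
    {"model": "claude", "cited_urls": []},
]


def citations_by_page(observations, domain):
    """Count citations per (url, model) pair for URLs hosted on the given domain."""
    counts = Counter()
    for obs in observations:
        for url in obs["cited_urls"]:
            if urlparse(url).hostname == domain:
                counts[(url, obs["model"])] += 1
    return counts


page_counts = citations_by_page(observations, "example.com")
for (url, model), n in sorted(page_counts.items()):
    print(f"{url} cited by {model}: {n}")
```

Comparing these counts before and after a piece goes live is the simplest way to check whether new content actually changed what the models cite.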

That closed loop -- from gap to content to citation to traffic -- is what makes Promptwatch an optimization platform rather than a monitoring tool.

A few other things worth knowing

Promptwatch also logs AI crawler activity in real time. You can see when ChatGPT, Claude, or Perplexity crawls your site, which pages they read, and whether they're hitting errors. Most competitors don't offer this at all, and it's genuinely useful for diagnosing why certain pages aren't getting cited.
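If you want a feel for what crawler logging involves, the sketch below scans web server access logs for AI crawler user agents and flags error responses. It is an illustration, not how Promptwatch works internally. It assumes logs in the common "combined" format, the sample log lines are made up, and the user-agent tokens (`GPTBot`, `ChatGPT-User`, `ClaudeBot`, `PerplexityBot`) should be checked against each vendor's current documentation before you rely on them.

```python
import re

# Assumed user-agent tokens; verify against each vendor's published crawler docs.
AI_CRAWLER_TOKENS = ("GPTBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")

# Matches the request, status, and user-agent fields of a combined-format log line.
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

# Made-up sample lines for illustration.
sample_log = [
    '203.0.113.5 - - [10/Mar/2025:13:55:36 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"',
    '203.0.113.9 - - [10/Mar/2025:13:56:02 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [10/Mar/2025:13:57:10 +0000] "GET / HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '203.0.113.20 - - [10/Mar/2025:13:58:44 +0000] "GET /pricing HTTP/1.1" 200 4100 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
]


def ai_crawler_hits(lines):
    """Return (crawler, path, status) for each log line sent by a known AI crawler."""
    hits = []
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue  # Skip lines that are not in the expected format.
        for token in AI_CRAWLER_TOKENS:
            if token in match["ua"]:
                hits.append((token, match["path"], int(match["status"])))
                break
    return hits


hits = ai_crawler_hits(sample_log)
errors = [hit for hit in hits if hit[2] >= 400]
print("AI crawler hits:", hits)
print("Pages returning errors to AI crawlers:", errors)
```

A page that AI crawlers never request, or that returns errors when they do, is unlikely to be cited no matter how good the content is, which is why this kind of log is a useful first diagnostic.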

The platform also tracks Reddit and YouTube discussions that influence AI recommendations -- channels that are easy to overlook but matter a lot for how AI models form opinions about brands.

Side-by-side comparison

| Feature | Peec.ai | Relixir | Promptwatch |
|---|---|---|---|
| AI model coverage | 5-6 models | 4-5 models | 10+ models (ChatGPT, Claude, Gemini, Perplexity, Grok, DeepSeek, Copilot, Mistral, Meta AI, Google AI Overviews) |
| Monitoring & tracking | Strong | Basic | Strong |
| Answer gap analysis | No | Partial | Yes (specific prompts + competitor mapping) |
| Content generation | No | Yes (autonomous) | Yes (human-directed, citation-grounded) |
| Content control | N/A | Low (auto-publish) | High (you approve and publish) |
| AI crawler logs | No | No | Yes |
| Reddit/YouTube tracking | No | No | Yes |
| ChatGPT Shopping tracking | No | No | Yes |
| Traffic attribution | No | No | Yes (GSC, snippet, server logs) |
| Multi-language support | 115+ languages | Limited | Yes |
| Pricing (starting) | $97/mo | Custom | $99/mo |
| Free trial | Yes | Yes | Yes |
| Best for | Multi-language monitoring | Teams wanting automation | Teams wanting the full optimization loop |

Which platform actually helped brands rank in 2025?

The honest answer: all three platforms helped some brands improve AI visibility in 2025, but in very different ways and with very different levels of effort.

Peec.ai helped teams understand where they stood. Brands that used it well had strong monitoring data and could brief their content teams effectively. But the platform itself didn't generate the content that got cited -- that happened elsewhere.

Relixir helped some teams publish more content faster. A few brands saw citation improvements from the volume of content Relixir pushed out. But there were also cases where auto-published content created problems -- factual errors, off-brand tone, or content that targeted the wrong prompts because the strategy wasn't well-defined upfront.

Promptwatch helped teams close the loop. The brands that used the Answer Gap Analysis to identify specific prompt opportunities, used the writing agent to produce targeted content, and then tracked citation improvements with page-level data had a clear, repeatable process. The results were more predictable because the process was more deliberate.

The pattern that emerged across 2025: monitoring-only tools are necessary but not sufficient. The brands that actually improved their AI search visibility were the ones that connected data to content to measurement. That's a workflow, not just a feature.

How to choose

The right platform depends on what you're actually trying to do.

If you have a large content team and operate across many languages and regions, Peec.ai's monitoring depth and language coverage might justify the cost. You'll need to build the content workflow separately, but the data quality is solid.

If you want maximum automation and are willing to trade some control for speed, Relixir is worth evaluating -- just go in with a clear review process for anything that gets published.

If you want a single platform that takes you from "I don't know why I'm not being cited" to "I published content that fixed it, and here's the traffic data to prove it," Promptwatch is the most complete option. The pricing is competitive ($99/mo to start), the coverage is the broadest of the three, and the action loop is built into the product rather than bolted on.

One practical suggestion: before committing to any of these platforms, run a free trial and specifically test the answer gap analysis or equivalent feature. Ask it to show you three specific prompts where a competitor is winning that you're not. Then ask: does this platform help me do something about it, or does it just show me the problem?

That question will tell you more than any feature matrix.

Other tools worth knowing about

If none of these three feel like the right fit, a few other platforms are worth a look depending on your specific needs:

For enterprise-scale monitoring with deep analytics, Profound is the most feature-rich option, though the pricing reflects that.

For budget-conscious teams that want basic monitoring, Otterly.AI starts at $29/month and includes a GEO audit feature that's genuinely useful for identifying quick wins.

For teams that want actionable insights with strong competitor intelligence, Ranksmith has built a solid reputation as an alternative worth evaluating.

And if you're specifically focused on tracking how AI models cite your pages at the URL level, AthenaHQ has strong page-level tracking, though it lacks content generation.

The GEO platform market is still maturing. Most tools that launched in 2024 and 2025 started as monitoring dashboards and are now trying to add optimization features. The question to ask any vendor is: what happens after I see the data? If the answer is "you figure it out," that's a monitoring tool. If the answer is "here's how we help you fix it," that's an optimization platform.