Key takeaways

- Most GEO (generative engine optimization) platforms are monitoring dashboards: they show you where you're invisible but don't help you fix it

- Model updates (GPT-4o, Gemini 2.0, Claude 3.5, etc.) can dramatically shift which sources get cited, making cross-model tracking essential

- The best platforms track prompt responses consistently over time, not just as one-off snapshots

- A handful of tools go beyond tracking to offer content gap analysis and AI-native content generation — that's where the real ROI is

- Pricing ranges from about $19/month for lightweight trackers to $579+/month for full optimization platforms

The problem with most GEO platform comparisons is that they treat "tracking" as the end goal. It isn't. Knowing that ChatGPT doesn't mention your brand is useful for about five minutes. What you actually need to know is: why not, what changed, and what do you do about it?

Those questions matter more in 2026 than they did a year ago, because AI models are updating faster than ever. OpenAI, Google, Anthropic, and Perplexity have all pushed significant model updates in the past twelve months, and each one reshuffles which sources get cited, which brands get recommended, and which answers get surfaced. A brand that was consistently cited in February might be invisible by April, not because its content got worse, but because the model changed.

This guide focuses specifically on which platforms can track those shifts over time, across multiple models, and ideally help you do something about it.

What "tracking across model updates" actually means

Before getting into specific tools, it's worth being precise about what this capability requires. A platform that truly tracks prompt responses across model updates needs to:

- Run the same prompts repeatedly over time (not just on demand)

- Store historical response data so you can compare before and after a model update

- Monitor multiple AI models simultaneously, not just one or two

- Surface changes in citation patterns, brand mentions, and competitor positioning

- Ideally, flag when a response has changed significantly so you don't have to manually check

Most tools in this space do some of these things. Very few do all of them well.
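
To make the requirements above concrete, here is a minimal sketch of the "store history, then compare before and after" part. It is not how any of the platforms below implement tracking. The `Snapshot` schema, the brand names, and the model label `model-a` are all made up for illustration, and brand detection is a naive substring match.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Snapshot:
    """One stored response for one prompt on one model (hypothetical schema)."""
    prompt: str
    model: str
    run_date: date
    response: str


def mentioned_brands(response: str, brands: list[str]) -> set[str]:
    """Return the brands whose names appear in the response (case-insensitive)."""
    text = response.lower()
    return {b for b in brands if b.lower() in text}


def mention_changes(before: Snapshot, after: Snapshot, brands: list[str]) -> dict[str, set[str]]:
    """Compare brand mentions between two snapshots of the same prompt and model."""
    if (before.prompt, before.model) != (after.prompt, after.model):
        raise ValueError("snapshots must share prompt and model")
    old = mentioned_brands(before.response, brands)
    new = mentioned_brands(after.response, brands)
    return {"dropped": old - new, "added": new - old}


# Made-up data: the same prompt captured before and after a model update.
brands = ["Acme", "Globex", "Initech"]
feb = Snapshot("best crm for startups", "model-a", date(2026, 2, 1),
               "Popular options include Acme and Globex.")
apr = Snapshot("best crm for startups", "model-a", date(2026, 4, 1),
               "Popular options include Globex and Initech.")

changes = mention_changes(feb, apr, brands)
print(changes)  # {'dropped': {'Acme'}, 'added': {'Initech'}}
```

The comparison only works because the February snapshot was stored. A tool that runs prompts on demand has nothing to pass in as `before`.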

The GEO platform landscape in 2026

The market has grown fast. Two years ago there were maybe five serious players. Now there are dozens, ranging from enterprise platforms to solo-founder tools charging $19/month. The quality varies enormously.

Here's a realistic breakdown of the main categories:

Full optimization platforms

These go beyond monitoring to help you actually improve your AI visibility. They include content gap analysis, AI writing tools, and traffic attribution alongside tracking.

Monitoring-focused dashboards

These show you your brand's visibility across AI models, competitor comparisons, and citation data. Useful, but they stop at the data layer.

Traditional SEO tools with AI add-ons

Semrush, Ahrefs, and similar platforms have bolted AI visibility features onto their existing products. Coverage is improving but still limited compared to dedicated GEO tools.

Lightweight trackers

Single-feature tools that check whether your brand appears in AI responses for a set of prompts. Low cost, limited depth.

The platforms worth knowing about

Promptwatch

Promptwatch is the most complete platform in this category right now. It monitors 10 AI models (ChatGPT, Perplexity, Claude, Gemini, Google AI Overviews, Google AI Mode, Grok, DeepSeek, Copilot, and Mistral), tracks prompt responses over time, and stores historical data so you can see exactly how model updates affect your visibility.

What separates it from most competitors is the action loop. The Answer Gap Analysis shows you which prompts competitors rank for that you don't, with specific content recommendations rather than vague suggestions. The built-in AI writing agent generates articles grounded in an analysis of 880M+ citations, so the content it produces is calibrated to what AI models tend to cite. And the AI Crawler Logs show you in real time which AI crawlers are visiting your site, which pages they're reading, and what errors they're hitting.

That last feature is rare. Most platforms have no visibility into crawler behavior at all.
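
If you want a rough version of this from your own server logs, the idea can be sketched in a few lines. This is an illustration, not Promptwatch's implementation. It assumes access logs in the common "combined" format, and it matches a handful of user-agent tokens that OpenAI, Anthropic, and Perplexity have documented for their crawlers; check each vendor's current documentation before relying on the list. The sample log lines are made up.

```python
import re
from collections import Counter

# User-agent tokens documented by the vendors; treat this list as a starting point.
AI_CRAWLER_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")

# Combined log format: ip - - [time] "METHOD path HTTP/x" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_crawler_hits(lines):
    """Count hits and error responses (status >= 400) per (crawler, path)."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        crawler = next((t for t in AI_CRAWLER_TOKENS if t in match["agent"]), None)
        if crawler is None:
            continue  # not one of the AI crawlers we are looking for
        key = (crawler, match["path"])
        hits[key] += 1
        if int(match["status"]) >= 400:
            errors[key] += 1
    return hits, errors


# Made-up sample lines: two AI crawler requests and one ordinary browser request.
sample = [
    '203.0.113.5 - - [01/Apr/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; GPTBot/1.2; +https://openai.com/gptbot"',
    '203.0.113.9 - - [01/Apr/2026:10:00:05 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [01/Apr/2026:10:00:09 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]

hits, errors = summarize_ai_crawler_hits(sample)
for (crawler, path), count in hits.items():
    print(crawler, path, count, "errors:", errors[(crawler, path)])
```

User-agent strings can be spoofed, so a production version would also verify requests against the IP ranges the vendors publish.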

For teams that need to track how model updates affect their visibility over time, Promptwatch's page-level tracking and prompt volume data (with difficulty scores and query fan-outs) make it genuinely useful for prioritization, not just reporting.

Pricing: Essential $99/mo, Professional $249/mo, Business $579/mo. Free trial available.

Profound

Profound has built a solid monitoring product focused on enterprise brands. It tracks ChatGPT, Claude, Google AI Overviews, and Perplexity, with good competitor comparison features and share-of-voice metrics. The interface is clean and the data is reliable.

The limitation is that it stops at monitoring. There's no content generation, no crawler logs, and no traffic attribution. For a team that already has a content workflow and just needs visibility data, that's fine. For a team that wants to close the loop, it's not enough.

AthenaHQ

AthenaHQ tracks 8+ AI search engines and has strong narrative monitoring — it's particularly good at showing how AI models describe your brand, not just whether they mention it. That's a meaningful distinction. A brand can be cited frequently but described inaccurately, which is arguably worse than not being cited.

Like Profound, it's primarily a monitoring tool. Content optimization and generation aren't part of the product.

Otterly.AI

Otterly is the most accessible entry point in this space. It covers ChatGPT, Claude, Perplexity, Google AI Overviews, AI Mode, and Bing Copilot, and the pricing is reasonable for smaller teams. The interface is straightforward and setup is fast.

The tradeoff is depth. No crawler logs, no visitor analytics, no content generation. It's a monitoring tool, and a decent one, but it won't help you understand why your visibility changed after a model update.

Peec AI

Peec is worth mentioning specifically for multi-language and multi-region use cases. If you're tracking AI visibility across markets — say, German-language queries in Germany vs. Spanish-language queries in Mexico — Peec handles that better than most tools in this price range.

Scrunch AI

Scrunch focuses on AI search visibility monitoring with an emphasis on influencer and source signals. It's particularly useful for understanding which third-party sources (Reddit threads, YouTube videos, review sites) are shaping AI responses about your brand. That's a real gap in most monitoring tools.

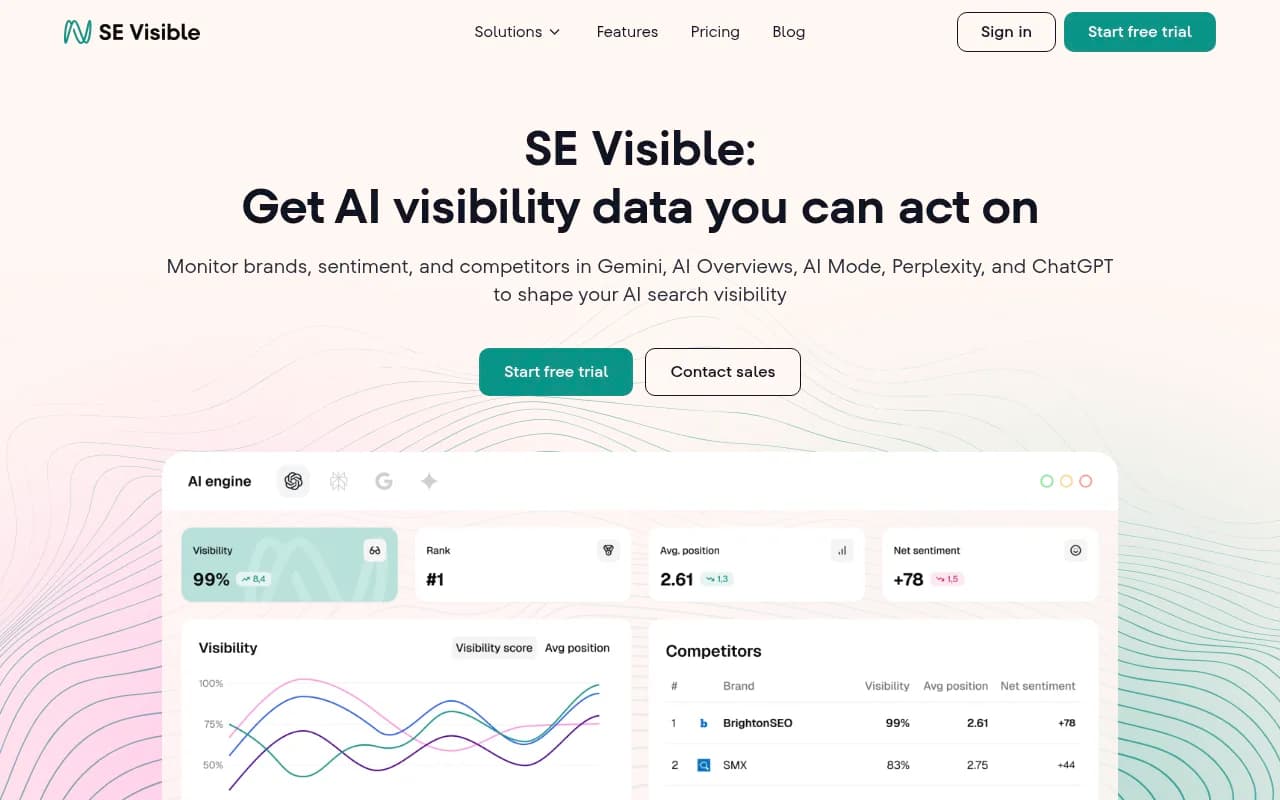

SE Visible

SE Visible (from SE Ranking) covers AI Overviews, AI Mode, Gemini, ChatGPT, and Perplexity. It's a solid mid-market option with good competitor intelligence and sentiment scoring. The $99/month price point makes it accessible, and the integration with SE Ranking's broader SEO toolkit is useful if you're already in that ecosystem.

Nightwatch

Nightwatch is primarily a rank tracking tool that has added AI visibility as a module. The base product starts at $39/month, with the AI add-on at $99/month. It covers ChatGPT, Google AI Overviews, Bing Copilot, Perplexity, and Claude. The zip-code-level local tracking is genuinely useful for agencies managing local SEO alongside AI visibility.

Semrush

Semrush has added AI visibility features to its platform, but the implementation uses fixed prompts rather than custom ones. That's a significant limitation for tracking how model updates affect your specific brand positioning. It's useful as a starting point if you're already a Semrush customer, but dedicated GEO tools give you more control.

Ahrefs Brand Radar

Ahrefs Brand Radar tracks brand mentions in AI search results, but like Semrush, it uses fixed prompts and lacks AI traffic attribution. For teams already deep in the Ahrefs ecosystem, it adds some AI visibility context. For teams whose main concern is AI search, it isn't enough on its own.

Feature comparison: what each platform actually tracks

| Platform | Models tracked | Historical tracking | Content generation | Crawler logs | Traffic attribution | Multi-language |
|---|---|---|---|---|---|---|
| Promptwatch | 10 | Yes | Yes (AI writing agent) | Yes | Yes | Yes |
| Profound | 4 | Yes | No | No | No | Limited |
| AthenaHQ | 8+ | Yes | No | No | No | Limited |
| Otterly.AI | 6 | Limited | No | No | No | No |
| Peec AI | 5+ | Yes | No | No | No | Yes |
| Scrunch AI | 4+ | Yes | No | No | No | Limited |
| SE Visible | 5 | Yes | No | No | No | Limited |
| Nightwatch | 5 | Yes | No | No | No | No |
| Semrush | 4 | Limited | No | No | No | Limited |
| Ahrefs Brand Radar | 3 | No | No | No | No | No |

What to look for when model updates happen

Model updates are the event that separates useful GEO tools from ones that just look good in demos. Here's what a platform needs to handle them properly:

Consistent prompt scheduling. The platform needs to run your tracked prompts on a regular cadence — daily or at minimum weekly — so you have a baseline to compare against when a model updates. Tools that only run prompts on demand can't show you what changed.

Response diff capabilities. When a response changes, you want to see what changed, not just that it changed. Did your brand get dropped? Did a competitor get added? Did the cited sources shift? Platforms that store full response text (not just mention/no-mention flags) are much more useful here.

Multi-model coverage. GPT-4o, Gemini 2.0, and Claude 3.5 don't update on the same schedule. A change that tanks your visibility in ChatGPT might not affect Perplexity at all. You need to see each model separately.

Source tracking. When a model update changes which sources get cited, you want to know which domains gained and which lost. That tells you where to publish or build links to recover visibility.

Prompt volume data. Not all prompts are equally valuable. A platform that shows you prompt difficulty and estimated volume helps you prioritize which visibility losses actually matter.

Promptwatch covers all of these. Most other platforms cover two or three.
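
Two of the capabilities above, response diffs and source tracking, are easy to picture with a small sketch. It assumes you already have the full response text and the list of cited URLs from before and after an update; the function names and sample data are hypothetical, and no vendor's implementation is implied. It uses only Python's standard `difflib` and `urllib.parse` modules.

```python
import difflib
from urllib.parse import urlparse


def response_diff(before: str, after: str) -> list[str]:
    """Return the lines removed ('- ') and added ('+ ') between two responses."""
    diff = difflib.ndiff(before.splitlines(), after.splitlines())
    return [line for line in diff if line.startswith(("+ ", "- "))]


def cited_domains(urls: list[str]) -> set[str]:
    """Reduce cited URLs to bare domains, ignoring a leading 'www.'."""
    return {urlparse(u).netloc.lower().removeprefix("www.") for u in urls}


def domain_shift(before_urls: list[str], after_urls: list[str]) -> dict[str, list[str]]:
    """List the domains that gained or lost citations between two runs."""
    old, new = cited_domains(before_urls), cited_domains(after_urls)
    return {"gained": sorted(new - old), "lost": sorted(old - new)}


# Made-up responses to the same prompt, before and after a model update.
before_text = "Top picks:\nAcme\nGlobex"
after_text = "Top picks:\nGlobex\nInitech"
text_changes = response_diff(before_text, after_text)
print(text_changes)

# Made-up citation lists for the same two runs.
before_urls = ["https://www.example.com/guide", "https://reviews.example.org/crm"]
after_urls = ["https://example.com/guide", "https://forum.example.net/thread/1"]
shift = domain_shift(before_urls, after_urls)
print(shift)
```

A mention/no-mention flag would record only that Acme disappeared. Keeping the full text and the citation list also shows what replaced it and which domains now carry the answer.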

The content gap problem

Here's something that doesn't get discussed enough in GEO platform comparisons: tracking tells you what's missing, but it doesn't tell you what to build.

If a model update causes ChatGPT to stop citing your brand for "best project management software for remote teams," you know you have a problem. But what do you actually do? You need to know:

- What content currently gets cited for that prompt

- What topics and angles are covered in those cited sources

- What your site is missing relative to those sources

- What a piece of content would need to contain to be citation-worthy

Most monitoring tools give you the first two at best. The Answer Gap Analysis in Promptwatch is designed to answer all four, which is what sets it apart from tools that only show you a dashboard.
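
At its simplest, the gap question is a set difference: topics the cited sources cover that your page does not. The sketch below shows that idea and nothing more. It assumes topic labels have already been extracted for each page (by hand or by some tagging step), and the page names and topics are invented. It is not a description of how Promptwatch's analysis works.

```python
from collections import Counter


def content_gap(cited_pages: dict[str, set[str]], own_topics: set[str]) -> list[tuple[str, int]]:
    """Rank topics that cited pages cover and your page lacks.

    Each result is (topic, number of cited pages covering it), most common first.
    """
    counts = Counter(topic for topics in cited_pages.values() for topic in topics)
    missing = [(topic, n) for topic, n in counts.items() if topic not in own_topics]
    return sorted(missing, key=lambda item: (-item[1], item[0]))


# Invented example for the prompt "best project management software for remote teams".
cited_pages = {
    "cited-page-1": {"pricing", "integrations", "async collaboration"},
    "cited-page-2": {"pricing", "time zones", "async collaboration"},
    "cited-page-3": {"pricing", "security"},
}
own_topics = {"pricing", "security"}

gaps = content_gap(cited_pages, own_topics)
print(gaps)  # [('async collaboration', 2), ('integrations', 1), ('time zones', 1)]
```

Ranking by how many cited pages cover a topic is one simple way to decide what to write first; a real analysis would weigh more than topic overlap.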

Practical recommendations by use case

For marketing teams at mid-size brands: Start with Promptwatch's Professional plan ($249/mo). The combination of tracking, gap analysis, and content generation means you can act on what you find without needing additional tools.

For agencies managing multiple clients: Promptwatch's agency pricing or the Business plan ($579/mo for 5 sites) gives you the multi-site tracking and white-label reporting you need. The Looker Studio integration and API make client reporting manageable.

For enterprise brands with complex needs: Profound or AthenaHQ for deep monitoring, combined with Promptwatch for content optimization. Or just Promptwatch if you want one platform.

For small teams with limited budgets: Otterly.AI or SE Visible as a starting point. You won't get content generation or crawler logs, but you'll at least know where you stand.

For multi-language/multi-region tracking: Peec AI for the language coverage, or Promptwatch if you need optimization capabilities alongside it.

The honest summary

The GEO platform market in 2026 has a clear split: tools that show you data, and tools that help you act on it. The monitoring-only tools aren't bad — they're just incomplete. If your goal is to understand and improve your AI search visibility, especially across model updates, you need a platform that closes the loop between finding gaps and filling them.

Most brands that start with a monitoring-only tool end up adding a content tool alongside it anyway. Platforms that combine both from the start save time and give you better data, because the content recommendations are grounded in the same citation database as the tracking.

The market will keep consolidating. Expect more monitoring tools to add content features, and expect the gap between basic trackers and full optimization platforms to widen. For now, which category you choose matters more than which specific tool you pick within it.