Key takeaways

- Science (43.6%), Health (43.0%), and Pets & Animals (36.8%) have the highest AI Overview citation rates by industry in 2026, according to The Digital Bloom's citation report.

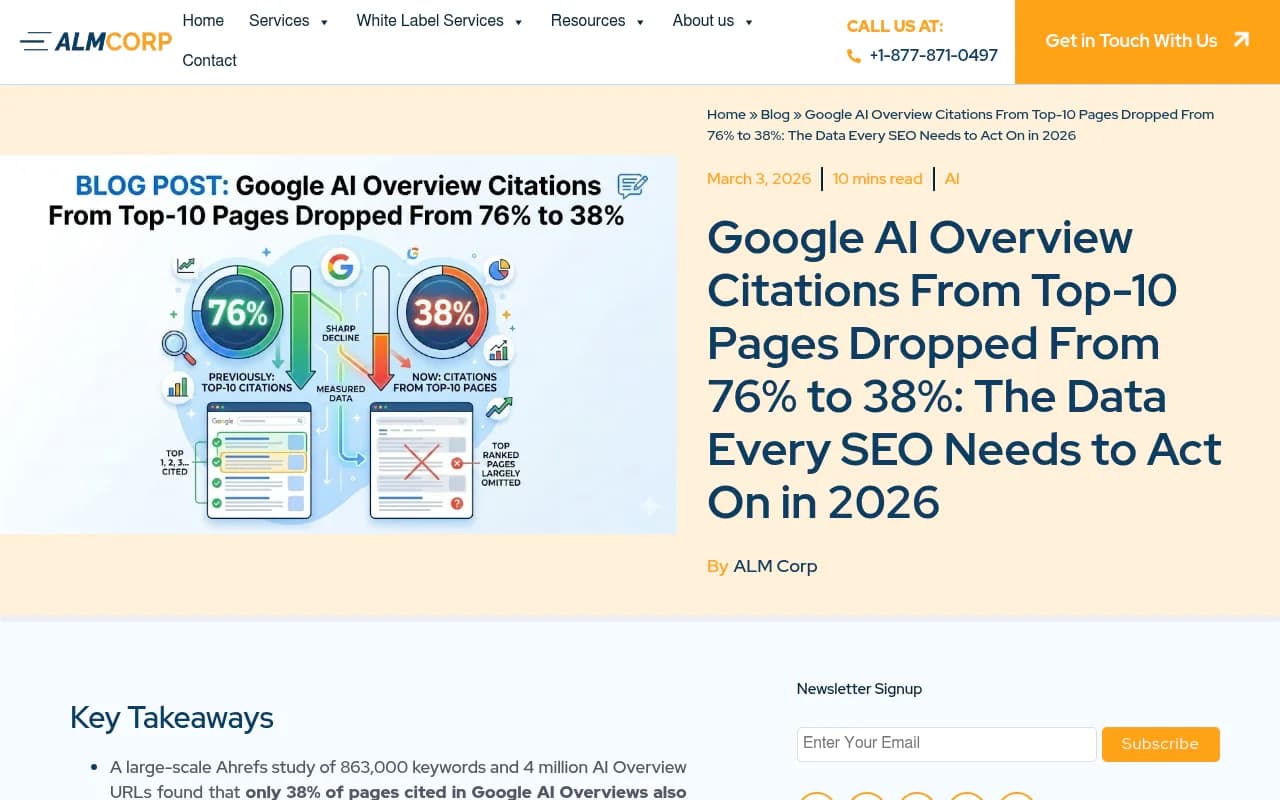

- Only 38% of pages cited in Google AI Overviews also rank in the top 10 organically -- down from 76% just seven months earlier. Ranking high no longer guarantees AI visibility.

- Finance, legal, and pharmaceutical sectors face the most aggressive filtering due to YMYL (Your Money or Your Life) content policies.

- YouTube is now the single most-cited domain in Google AI Overviews, accounting for 18.2% of citations from outside the top 100 organic results.

- AI Overviews now appear on 48% of all tracked queries, up 58% from a year ago -- making citation visibility a metric that matters as much as rankings.

Why industry matters more than you think

Most conversations about AI Overviews focus on tactics: structured data, topical authority, E-E-A-T signals. What gets less attention is that your industry might be working against you before you write a single word.

Google's AI Overview system doesn't treat all content categories equally. Some industries get cited constantly. Others are filtered out almost by default. And the gap between them is wide enough that a brand in a "favored" sector can outperform a technically superior site in a "filtered" one just by existing in the right category.

This isn't speculation. The Digital Bloom's 2026 AI Citation Position & Revenue Report breaks down AI Overview share by industry, and the variance is striking. Science sits at 43.6%. Health at 43.0%. Pets & Animals at 36.8%. People & Society at 35.3%. Then there's a long tail of sectors where AI Overviews appear far less frequently -- and where citations are harder to earn even when they do appear.

Understanding where your industry sits on this spectrum is the first step to building a realistic AI visibility strategy.

The sectors that dominate AI Overview citations

Science and health: the clear leaders

Science and Health sitting at the top isn't surprising if you think about what AI Overviews are designed to do. They answer questions. Science and health content is built around answering questions -- how does X work, what causes Y, what's the difference between A and B. That structural alignment between content format and AI intent is a big part of why these sectors get cited so heavily.

Health content also benefits from the sheer volume of queries. People search for health information constantly, and Google's AI Overviews appear on a large proportion of those queries. The challenge for health brands is that the same YMYL (Your Money or Your Life) policies that make Google cautious about health content also raise the bar for what gets cited. High citation rates in health don't mean citations are easy to earn -- they mean the volume is there, but only credible, authoritative sources tend to make it through.

For science content specifically, the academic and educational framing helps. AI models are trained to treat peer-reviewed research and educational explanations as high-quality sources. If your content explains mechanisms, cites research, and uses precise language, it signals the kind of reliability that AI systems reward.

Pets & animals and people & society: the surprise performers

Pets & Animals at 36.8% is the less obvious result. This sector punches above its weight partly because the queries are specific and answerable ("why does my dog eat grass," "how often should I bathe a cat"), and partly because there's less competition from large institutional publishers. A well-structured pet care article from a mid-size brand can outperform a generic Wikipedia entry for a specific enough query.

People & Society at 35.3% covers a broad range of content -- demographics, social issues, cultural topics, community resources. These queries often have clear informational intent, which AI Overviews are built to serve.

The middle tier: travel, food, and lifestyle

Travel, food, and lifestyle content occupies a middle ground. These sectors get AI Overview appearances, but citation rates are more variable. Queries like "best restaurants in Barcelona" or "things to do in Kyoto" often trigger AI Overviews, but the sources cited tend to be dominated by large platforms (TripAdvisor, Yelp, Booking.com) rather than individual brand sites.

For brands in these sectors, the path to AI visibility often runs through third-party platforms rather than owned content. A restaurant that appears prominently on Yelp and Google Maps is more likely to get cited than one with a well-optimized website but thin review presence.

The sectors that get filtered out

Finance and legal: the YMYL wall

Finance and legal content faces the most aggressive filtering. Google's YMYL framework treats financial advice and legal guidance as high-stakes content where errors have real consequences. The result is that AI Overviews in these sectors are more conservative -- they appear less frequently, and when they do, they tend to cite institutional sources (government sites, major financial publications, established law firms) rather than smaller brands.

This creates a real problem for fintech startups, independent financial advisors, and boutique law firms. Even excellent content from these sources often gets passed over in favor of a Forbes article or an official government page. The AI isn't wrong to be cautious here, but it does mean that brands in these sectors need to think differently about their AI visibility strategy.

The most effective approach tends to be building presence on the platforms that AI systems do cite in these sectors -- contributing to established publications, building a strong LinkedIn presence, and earning mentions in trusted industry outlets rather than trying to get your own site cited directly.

Pharmaceutical and medical: high bar, high reward

Pharmaceutical content faces similar dynamics to finance and legal, but with an added layer of complexity. Medical claims require sourcing. AI systems are trained to be skeptical of medical content that doesn't cite clinical evidence. The result is that pharmaceutical brands without strong clinical content pipelines struggle to get cited.

The brands that do well here tend to have robust educational content that explains conditions, mechanisms, and treatment options without making direct therapeutic claims. Think patient education rather than product promotion.

News and current events: the freshness problem

News content has a different challenge. AI Overviews do cite news sources, but the freshness requirements are strict. A news article that was authoritative three months ago may not get cited today. For brands in media and publishing, this means AI visibility is inherently more volatile -- you can be highly cited one week and invisible the next.

The ranking disconnect that changes everything

Here's the data point that should make every SEO team reconsider their AI visibility assumptions: only 38% of pages cited in Google AI Overviews also rank in the top 10 organic results for the same query. Seven months ago, that figure was 76%.

This isn't a minor fluctuation. It's a structural shift driven largely by Google's upgrade to Gemini 3 as the global default for AI Overviews in January 2026. The new model uses a query fan-out process -- it splits a user's original query into multiple sub-queries and draws from across all of those sub-query results. That means a page that ranks well for the exact query might get passed over in favor of pages that answer related sub-questions more thoroughly.

The practical implication: if your AI visibility strategy is built on the assumption that ranking #1 guarantees citation, you're working with an outdated model. Topical depth, multi-format content, and coverage of related questions now matter more than position alone.

A separate BrightEdge analysis puts the top-10 overlap even lower, at around 17%. The range between 17% and 38% reflects different methodologies, but both numbers tell the same story: organic rankings and AI citations are increasingly independent signals.
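To make the overlap metric concrete, here is a minimal sketch of how a team might compute it from its own tracking data. The function name, the data layout, and the sample URLs are all hypothetical, and neither study's actual methodology is known here, so treat this as one plausible definition rather than the way the 38% or 17% figures were produced.

```python
def top10_overlap(citations, organic_top10):
    """Share of cited URLs that also sit in the organic top 10 for the same query.

    citations:      dict mapping query -> list of URLs cited in the AI Overview
    organic_top10:  dict mapping query -> ordered list of organic result URLs
    """
    total = 0
    overlapping = 0
    for query, cited_urls in citations.items():
        # Only the first ten organic results count toward the overlap.
        top10 = set(organic_top10.get(query, [])[:10])
        for url in cited_urls:
            total += 1
            if url in top10:
                overlapping += 1
    return overlapping / total if total else 0.0


# Hypothetical sample data, not real measurements.
citations = {
    "why does my dog eat grass": ["a.example/dogs", "b.example/grass", "c.example/vet"],
    "how often should I bathe a cat": ["a.example/cats", "d.example/bathing"],
}
organic_top10 = {
    "why does my dog eat grass": ["a.example/dogs", "e.example/pets"],
    "how often should I bathe a cat": ["d.example/bathing", "f.example/cats"],
}

overlap = top10_overlap(citations, organic_top10)
print(f"Top-10 overlap: {overlap:.0%}")
```

Counting per citation (as here) and counting per query can give different numbers, which is one reason two studies can report figures as far apart as 17% and 38%.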

What actually gets you cited: the cross-industry signals

Regardless of industry, certain patterns consistently predict AI Overview citations.

Topical depth over keyword targeting

AI systems reward content that covers a topic thoroughly, not content that targets a specific keyword. A page that answers the main question and five related questions is more likely to get cited than a page that answers the main question perfectly but nothing else.

This is the query fan-out effect in practice. When Google's AI splits a query into sub-queries, it needs sources that can answer multiple angles. Thin, keyword-targeted content fails this test even if it ranks well.
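A rough way to audit a page against fan-out is to list the sub-questions by hand and check which ones the page touches. The sketch below assumes exactly that: the sub-questions and keywords are hand-written (how Google actually generates sub-queries isn't public), and the whole-word keyword match is a deliberately naive stand-in for real relevance.

```python
import re


def coverage(page_text, sub_questions):
    """Split sub-questions into those the page appears to cover and those it misses.

    sub_questions: dict mapping a sub-question -> list of single-word keywords
    that should all appear somewhere on the page.
    """
    words = set(re.findall(r"[a-z]+", page_text.lower()))
    covered, missing = [], []
    for question, keywords in sub_questions.items():
        if all(keyword.lower() in words for keyword in keywords):
            covered.append(question)
        else:
            missing.append(question)
    return covered, missing


# Hypothetical page copy and hand-written sub-questions.
page_text = """
Dogs eat grass for several reasons, including boredom and fibre needs.
Eating grass is usually safe, but treated lawns can be toxic.
"""
sub_questions = {
    "why do dogs eat grass": ["grass", "reasons"],
    "is eating grass safe for dogs": ["grass", "safe"],
    "when to see a vet": ["vet"],
}

covered, missing = coverage(page_text, sub_questions)
print("Covered:", covered)
print("Missing:", missing)
```

The "missing" list is the useful output: each entry is a related question the page doesn't yet answer.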

The 30% rule for content structure

One pattern worth knowing: AI systems are more likely to pull information from the first 30% of a webpage. If your key claims, definitions, and conclusions are buried in the middle of a long article, they're less likely to get cited. Front-loading your most important content isn't just good UX -- it's increasingly important for AI visibility.
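A quick check for front-loading might look like the sketch below. It measures position in characters, which is an assumption (the 30% figure could just as well refer to words, rendered height, or something else), and the function name and sample text are made up for illustration.

```python
def in_first_fraction(text, phrase, fraction=0.3):
    """Return True if `phrase` first appears within the leading `fraction` of `text`.

    Position is measured in characters; that choice is an assumption.
    """
    position = text.lower().find(phrase.lower())
    if position == -1:
        return False  # phrase never appears
    return position < len(text) * fraction


# Hypothetical article body: one key claim up front, one buried at the end.
article = (
    "Query fan-out splits a query into sub-queries. "
    + "Filler sentence. " * 20
    + "Topical depth matters most."
)

for phrase in ["query fan-out", "topical depth"]:
    status = "front-loaded" if in_first_fraction(article, phrase) else "buried or missing"
    print(f"{phrase}: {status}")
```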

Multi-format presence

YouTube is now the single most-cited domain in Google AI Overviews, accounting for 18.2% of citations from outside the top 100 organic results. This is a significant finding for brands that have treated video as optional.

For many industries, having a YouTube presence isn't just a nice-to-have -- it's one of the most reliable paths to AI Overview citations. A video that explains a concept clearly can get cited even when the brand's website isn't ranking for the same query.

Third-party credibility signals

The most-cited domains across AI platforms -- Reddit, YouTube, LinkedIn, Wikipedia, Forbes, Yelp, Amazon -- share one characteristic: they're trusted by AI systems as sources of real user experience or editorial validation. Brands that appear in these ecosystems (through reviews, community discussions, or editorial coverage) are more likely to get cited than brands that rely solely on their own website.

This is especially true for sectors facing heavy filtering. A fintech brand that gets mentioned in a Forbes article, discussed on Reddit's personal finance communities, and reviewed on G2 has a much better shot at AI visibility than one with a technically perfect website but no third-party presence.

Tracking your industry's AI visibility

Given how fast citation patterns are shifting -- the top-10 overlap dropped from 76% to 38% in seven months -- monitoring your AI visibility isn't optional if you're serious about this channel.

BrightEdge's data shows AI Overviews now appear on 48% of all tracked queries, up 58% from a year ago. Organic CTR for queries with AI Overviews has fallen by as much as 61%. That means a large and growing share of search traffic is being influenced by AI citations rather than organic rankings.
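It helps to work through what those percentages imply. The sketch below reads "up 58%" as a relative increase (the only reading that fits, since 48% cannot be 58 percentage points higher than anything), which puts the share a year ago at roughly 30%. The starting CTR of 5% is a hypothetical number chosen only to show the scale of a 61% drop.

```python
# Figures quoted in the article.
current_share = 0.48      # AI Overviews appear on 48% of tracked queries
relative_increase = 0.58  # "up 58% from a year ago", read as a relative increase

# Implied share a year earlier under that reading.
baseline_share = current_share / (1 + relative_increase)
print(f"Implied share a year ago: {baseline_share:.1%}")

# Hypothetical pre-AI-Overview click-through rate, for illustration only.
ctr_before = 0.05
max_ctr_drop = 0.61       # "fallen by as much as 61%"
ctr_after = ctr_before * (1 - max_ctr_drop)
print(f"Worst-case CTR: {ctr_before:.2%} -> {ctr_after:.2%}")
```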

Tools like Promptwatch track exactly which prompts your brand appears in across AI models, which competitors are getting cited instead of you, and which content gaps are costing you visibility. For industry-specific tracking, that kind of granularity matters -- knowing that you're invisible for "how does X work" queries in your sector is actionable in a way that aggregate visibility scores aren't.

For teams that want to monitor AI Overview appearances specifically, a few tools are worth knowing about:

BrightEdge tracks AI Overview appearances at scale and is one of the more reliable sources of industry-level citation data.

SE Ranking's AI visibility toolkit covers Google AI Overviews alongside other AI platforms, useful for tracking citation trends over time.

Thruuu is built specifically for content teams monitoring AI Overview appearances -- lighter weight than enterprise platforms but useful for focused tracking.

Industry-by-industry summary

| Industry | AI Overview citation rate | Key challenge | Best citation path |
|---|---|---|---|
| Science | 43.6% | Accuracy bar is high | Educational, research-backed content |
| Health | 43.0% | YMYL filtering | Authoritative sources, clinical citations |
| Pets & Animals | 36.8% | Platform competition | Specific, answerable queries |
| People & Society | 35.3% | Broad query intent | Informational, community-focused content |
| Travel & Food | Moderate | Platform dominance | Third-party review presence |
| Finance | Low-moderate | YMYL wall | Institutional citations, LinkedIn, Forbes |
| Legal | Low-moderate | YMYL wall | Established publications, government sites |
| Pharmaceutical | Low-moderate | Medical claim sensitivity | Patient education content |
| News & Media | Variable | Freshness requirements | Consistent publishing cadence |

What to do if your industry is filtered

If your sector faces heavy filtering, the instinct is often to double down on your own website. That's usually the wrong move. The more effective approach:

Build presence on the platforms AI systems already trust. Reddit discussions, LinkedIn articles, YouTube explainers, and reviews on established platforms all get cited more reliably than brand websites in filtered sectors. Meet the AI where it already looks.

Earn editorial mentions. A single mention in a Forbes or TechRadar article can do more for your AI visibility than months of on-site optimization. Treat PR as an AI visibility strategy, not just a brand awareness play.

Cover the sub-questions. Because of query fan-out, AI systems are looking for content that answers related questions, not just the main query. Map out the sub-questions your target queries generate and make sure your content covers them.

Track what's actually happening. The citation landscape is shifting fast enough that assumptions made six months ago may already be wrong. Regular monitoring of which queries trigger AI Overviews in your sector, and which sources get cited, is the only way to stay current.
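For teams doing this monitoring by hand before buying a tool, a simple tally of cited domains goes a long way. The sketch below assumes you have already collected the cited URLs for your sector's queries; the function names and the sample URLs are hypothetical, and it doesn't call any real tracking API.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_of(url):
    """Extract the host from a URL, dropping a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def domain_share(cited_urls):
    """Return each domain's share of all observed citations, most cited first."""
    counts = Counter(domain_of(url) for url in cited_urls)
    total = sum(counts.values())
    return {domain: count / total for domain, count in counts.most_common()}


# Hypothetical citations collected from AI Overviews in one sector.
observed = [
    "https://www.youtube.com/watch?v=abc",
    "https://youtube.com/watch?v=def",
    "https://www.reddit.com/r/personalfinance/x",
    "https://www.forbes.com/advisor/y",
]

shares = domain_share(observed)
for domain, share in shares.items():
    print(f"{domain}: {share:.0%}")
```

Re-running the same tally on a regular schedule shows whether the sources cited in your sector are shifting, which is the signal that matters more than any single snapshot.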

The brands winning AI visibility in 2026 aren't necessarily the ones with the best websites. They're the ones that understand how AI systems evaluate content in their specific industry -- and build their strategy around that reality rather than assumptions carried over from traditional SEO.