Key takeaways

- Ranking #1 on Google and appearing in AI Overviews are now two separate outcomes, driven by overlapping but distinct signals.

- AI Overviews prioritize content that is directly extractable: clear answers, clean structure, and unambiguous entity signals.

- The most common reasons for exclusion are structural, not technical -- your content is hard for AI to parse, not broken.

- Fixing these issues requires rethinking how you write, not just how you optimize.

- Tools that track AI visibility separately from SERP rankings are now essential for understanding your true search presence.

There's a particular kind of frustration that's become very common in 2026: you open Search Console, see your page sitting at position one, then open Google and watch AI Overviews pull from a competitor three spots below you. Your traffic is down. Your rankings look fine. Nothing makes sense.

The problem is that Google's AI Overviews system doesn't care much about your ranking position. It cares about something different: whether it can safely extract a useful, structured answer from your page. Those are not the same thing, and the gap between them is where a lot of organic traffic is quietly disappearing.

Here's what's actually happening -- and how to fix it.

The gap between traditional SEO rankings and AI Overview inclusion is one of the defining challenges of search in 2026.

Reason 1: Your page doesn't lead with a direct answer

This is the single most common reason. AI Overviews tend to pull from pages that answer the query in the first paragraph -- ideally in the first two or three sentences. If your page opens with a long introduction, a brand story, or a "let's explore what this means" setup, the AI is likely to skip it.

Think about what AI systems are trying to do: they need to extract a safe, summarizable answer quickly. If your content buries the answer under 200 words of context, the AI has no clean extraction point.

The fix is to restructure your content so the answer comes first, then the explanation. Write the way a dictionary or a reference document does: definition first, context second. This is sometimes called an "answer block" -- a short, direct response to the main query right at the top of the section, before any elaboration.

This doesn't mean your content needs to be shallow. It means the architecture changes: answer first, then depth, rather than depth first, then answer.
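One rough way to check whether a page leads with its answer is to count how many words a reader (or a parser) has to get through before the first sentence that mentions the query. The sketch below is only a heuristic: the function name `words_before_answer`, the naive sentence splitting, and the sample text are all assumptions for illustration, not how any search system actually scores pages.

```python
import re
from typing import List, Optional


def words_before_answer(text: str, query_terms: List[str]) -> Optional[int]:
    """Count the words that precede the first sentence mentioning every query term.

    Returns None when no sentence mentions all of the terms.
    """
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    terms = [term.lower() for term in query_terms]
    words_seen = 0
    for sentence in sentences:
        lowered = sentence.lower()
        if all(term in lowered for term in terms):
            return words_seen
        words_seen += len(sentence.split())
    return None


# Hypothetical page openings, written for this example.
buried = (
    "Our story began years ago. We love helping teams grow. "
    "An answer block is a short, direct response placed at the top of a section."
)
direct = (
    "An answer block is a short, direct response placed at the top of a section. "
    "Our story began years ago."
)

print(words_before_answer(buried, ["answer block"]))  # 10
print(words_before_answer(direct, ["answer block"]))  # 0
```

A low number doesn't guarantee a citation, but a high one is a reasonable prompt to move the answer up.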

Reason 2: Your content structure is confusing to parse

AI systems rely heavily on heading hierarchy to understand what a page is about. If your H2s are vague, your sections mix multiple topics, or your headings don't match the content beneath them, AI can't reliably extract stable answers.

A few specific patterns that cause problems:

- Headings that are clever but not descriptive ("The secret sauce" instead of "How to optimize for AI Overviews")

- Sections that start with one topic and drift into another

- Multiple questions answered under a single heading

- Content that uses bold text as a substitute for proper heading structure

The rule of thumb: each section should answer one question. If you can't state in one sentence what question a section answers, it's probably doing too much.

Clean heading structure isn't just good for humans -- it's now a direct signal for AI extractability. One intent per section, clear H2s, and summary-first paragraphs within each section will get you much further than clever formatting.
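If you want to spot-check a page's heading structure, a small script can list the headings and flag obvious problems. This is a minimal sketch using Python's standard `html.parser`; the `HeadingAudit` class, the `audit_headings` function, and the "fewer than four words means possibly vague" threshold are assumptions made for this example, and a short heading is not always a bad one.

```python
from html.parser import HTMLParser


class HeadingAudit(HTMLParser):
    """Collect h1-h6 headings in document order."""

    def __init__(self):
        super().__init__()
        self.headings = []   # list of (level, text) tuples
        self._level = None   # level of the heading currently open, if any
        self._buffer = []    # text fragments of the open heading

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._buffer = []

    def handle_data(self, data):
        if self._level is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.headings.append((self._level, "".join(self._buffer).strip()))
            self._level = None


def audit_headings(html, min_words=4):
    """Return a list of human-readable warnings about the heading outline."""
    parser = HeadingAudit()
    parser.feed(html)
    issues = []
    previous = 0
    for level, text in parser.headings:
        # A jump such as h2 -> h4 usually means the outline is broken.
        if previous and level > previous + 1:
            issues.append(f"skipped level: h{previous} -> h{level} ({text!r})")
        # Crude proxy for "clever but not descriptive" headings.
        if len(text.split()) < min_words:
            issues.append(f"possibly vague heading: {text!r}")
        previous = level
    return issues


# Hypothetical page fragment.
page = (
    "<h1>Why pages miss AI Overviews</h1>"
    "<h2>The secret sauce</h2>"
    "<h4>How to optimize for AI Overviews</h4>"
)
issues = audit_headings(page)
for issue in issues:
    print(issue)
```

On the sample fragment this flags "The secret sauce" as possibly vague and reports the jump from h2 to h4.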

Structural clarity -- not just keyword optimization -- is what separates pages that get cited from those that get skipped.

Reason 3: Your brand entity is weak or ambiguous

AI Overviews don't just cite URLs -- they cite entities. If Google's systems can't clearly identify what your brand is, what category it belongs to, and what it specializes in, they won't confidently cite you.

This is where a lot of technically solid sites fall down. The page might be well-written, but if your site sends mixed signals about what you actually do -- inconsistent descriptions across pages, no clear topical focus, brand names that overlap with other entities -- AI systems will choose a clearer source instead.

Fixing entity clarity means:

- Making sure your About page, homepage, and schema markup all describe your brand consistently

- Using structured data (schema.org types such as Organization, BreadcrumbList, and FAQPage) to give AI systems explicit signals

- Having a clear topical focus rather than covering everything loosely

- Building citations and mentions on third-party sites that reinforce what your brand is about

This is one area where traditional SEO and AI optimization genuinely overlap. A strong entity presence helps both.
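To make the structured-data point concrete, here is a minimal sketch that builds Organization markup as JSON-LD and wraps it in a script tag. The brand name, URLs, and description are placeholders, and `to_json_ld_script` is a helper name invented for this example; the property names (`name`, `url`, `description`, `sameAs`) come from schema.org's Organization type.

```python
import json

# Placeholder values: replace with your real brand details.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://www.example.com",
    # Keep this wording consistent with your homepage and About page.
    "description": "Example Co makes scheduling software for dental clinics.",
    # Third-party profiles that reinforce which entity this is.
    "sameAs": [
        "https://www.linkedin.com/company/example-co",
        "https://github.com/example-co",
    ],
}


def to_json_ld_script(data):
    """Wrap a dict in the script tag used to embed JSON-LD in a page."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


snippet = to_json_ld_script(organization)
print(snippet)
```

Generating the markup from one source of truth, rather than hand-editing it per page, is one way to keep the description consistent everywhere it appears.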

Reason 4: You lack topical authority on the subject

One strong article isn't enough. AI systems prefer sites that demonstrate deep, consistent coverage of a topic -- not a single well-optimized page surrounded by unrelated content.

If your site covers 40 different topics at a surface level, AI models will consistently prefer a site that covers your topic in depth, even if that site ranks below you for the specific query.

Topical authority means having a cluster of content that covers a subject from multiple angles: the main concept, the subtopics, the related questions, the comparisons, the how-tos. When AI systems see that pattern, they trust the source more.

This is a longer-term fix, but it's the right one. Audit your content and identify where you have thin coverage. Build out the gaps systematically, not just the pages that rank well.

Tools like Promptwatch can help here -- the Answer Gap Analysis feature shows exactly which prompts competitors are visible for that you're not, which is essentially a map of your topical authority gaps.

Reason 5: Your content adds no new information

Google's March 2026 core update made this explicit: content that rewrites what's already ranking is losing visibility. The concept behind this is "information gain" -- how much new and useful information your page adds compared to what already exists.

If someone reads your page and gets the same understanding they'd get from any other top result, your content has zero information gain. AI systems are increasingly good at detecting this, not by flagging AI-generated text specifically, but by identifying content that has no distinct point of view.

Pages that get cited in AI Overviews tend to:

- Take a clear position rather than listing every possible answer

- Include specific examples, data, or results that aren't available elsewhere

- Explain what something depends on, not just that "it depends"

- Reflect genuine experience with the topic

The fix isn't to write more -- it's to say something that only you could say. That might be a counterintuitive finding, a specific result from your own testing, or a clear recommendation rather than a list of options.

Reason 6: You're answering the wrong version of the query

Search intent and AI extraction intent are not always the same thing. A query like "best CRM for small business" might have a transactional intent for traditional SEO purposes, but AI Overviews often pull from pages that answer it informationally -- with a clear, direct recommendation and a brief explanation of why.

If your page is optimized for conversions (lots of CTAs, comparison tables designed to push a sale, minimal explanatory text), it may rank well while giving AI almost nothing neutral and useful to extract.

This doesn't mean you need to remove your conversion elements. It means you need to add an extractable answer layer -- a section that directly addresses the query in a way AI can use, separate from your conversion-focused content.

Think of it as writing for two audiences: the human who wants to be persuaded, and the AI that wants to extract a clean answer. Both can coexist on the same page if the structure is right.

Reason 7: AI crawlers can't properly access or process your page

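This one is less obvious but surprisingly common. According to Google's own documentation, AI Overviews draw on the regular Search index, so a page needs to be indexed and eligible for a snippet to be cited. But what a crawler can actually render and read still decides how much extractable text there is, and other AI answer engines use their own crawlers that behave differently from Googlebot. If your page has issues that specifically affect AI crawler access, you can rank in traditional search while being effectively invisible to AI systems.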

Common problems include:

- JavaScript-heavy content that AI crawlers don't render properly

- Blocking AI crawlers in your robots.txt (sometimes done accidentally when blocking other bots)

- Pages that load slowly or return errors when crawled by less patient bots

- Content that's locked behind login walls or paywalls without proper structured data signals
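The robots.txt problem in particular is easy to test. The sketch below uses Python's standard `urllib.robotparser` to check which crawler tokens a given robots.txt would block for a URL. The token list is only an example (check each vendor's documentation for current names), and the sample rules and `blocked_crawlers` helper are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Example crawler tokens only; vendors document and occasionally change these.
CRAWLER_TOKENS = ["Googlebot", "GPTBot", "PerplexityBot", "ClaudeBot"]


def blocked_crawlers(robots_txt, url, tokens=CRAWLER_TOKENS):
    """Return the tokens that the given robots.txt text forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [token for token in tokens if not parser.can_fetch(token, url)]


# Hypothetical robots.txt that blocks one AI crawler site-wide.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

blocked = blocked_crawlers(sample_robots, "https://www.example.com/guide")
print(blocked)  # ['GPTBot']
```

To test a live site, you could fetch its robots.txt and pass the text in; the parsing logic stays the same.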

You can check which AI crawlers are hitting your site and what they're seeing using crawler log analysis. Most traditional SEO tools don't surface this data -- it's a relatively new capability. Platforms that track AI crawler activity (like Promptwatch's crawler logs feature) show you which pages AI systems are reading, how often they return, and what errors they encounter.
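If you have access to raw server logs, a first pass at this kind of analysis doesn't need a dedicated platform. Here is a minimal sketch, assuming your server writes the common "combined" access log format; the crawler tokens, sample log lines, and `summarize_crawler_hits` function are illustrative assumptions. User-agent strings can also be spoofed, so treat the counts as a starting point rather than proof.

```python
import re
from collections import Counter

# Example crawler tokens only; adjust to the bots you care about.
CRAWLER_TOKENS = ("GPTBot", "PerplexityBot", "ClaudeBot", "Googlebot")

# Combined log format:
# ip ident user [time] "METHOD path protocol" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"$'
)


def summarize_crawler_hits(lines):
    """Count requests per (crawler token, path, status code)."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line.strip())
        if not match:
            continue  # skip lines in any other format
        agent = match["agent"].lower()
        for token in CRAWLER_TOKENS:
            if token.lower() in agent:
                hits[(token, match["path"], int(match["status"]))] += 1
                break
    return hits


# Hypothetical log lines written for this example.
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 '
    '"-" "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
    '203.0.113.5 - - [10/Jan/2026:10:05:00 +0000] "GET /pricing HTTP/1.1" 503 0 '
    '"-" "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
    '198.51.100.7 - - [10/Jan/2026:10:06:00 +0000] "GET /guide HTTP/1.1" 200 5120 '
    '"-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]

hits = summarize_crawler_hits(sample_log)
for (token, path, status), count in sorted(hits.items()):
    print(token, path, status, count)
```

Rows with 4xx or 5xx status codes are the ones to look at first: they show pages a crawler tried to read and couldn't.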

How to diagnose which reason applies to you

The frustrating thing about this list is that the fix depends entirely on which problem you have. A page with weak entity signals needs different work than a page with a structural problem.

Here's a quick diagnostic:

| Symptom | Likely cause | First fix |
|---|---|---|
| Competitor ranks lower but gets cited | Structural clarity or direct answer | Add answer block at top of page |
| No pages on your site appear in AIO | Entity or topical authority issue | Audit brand consistency and content depth |
| Some pages cited, others not | Content quality or information gain | Review uncited pages for originality |
| Traffic dropped but rankings held | AI Overview is intercepting clicks | Check if AIO appears for your key queries |
| New content never gets cited | Crawler access or entity weakness | Check robots.txt and structured data |

The most reliable way to understand your specific situation is to track your AI visibility separately from your SERP rankings. They're different metrics now, and treating them as the same thing is how you end up confused about why your #1 ranking isn't driving traffic.
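Tracking the two metrics separately can start as a simple join between rank data and citation checks. The sketch below assumes you already have, per query, your organic position plus two observations: whether an AI Overview appeared and whether your page was cited in it. The `QueryResult` fields, the `visibility_gaps` function, and the sample rows are hypothetical; how you collect the observations (manual checks or a tracking tool) is up to you.

```python
from dataclasses import dataclass


@dataclass
class QueryResult:
    query: str
    position: int        # organic rank for the query
    aio_present: bool    # an AI Overview appeared for the query
    cited_in_aio: bool   # your page was cited in that AI Overview


def visibility_gaps(results, max_position=3):
    """Queries where you rank well and an AI Overview appears, but you are not cited."""
    return [
        r.query
        for r in results
        if r.position <= max_position and r.aio_present and not r.cited_in_aio
    ]


# Hypothetical observations, invented for this example.
results = [
    QueryResult("best crm for small business", 1, True, False),
    QueryResult("what is an answer block", 2, True, True),
    QueryResult("crm pricing comparison", 1, False, False),
    QueryResult("crm migration checklist", 8, True, False),
]

gaps = visibility_gaps(results)
print(gaps)  # ['best crm for small business']
```

The queries this returns are the ones where the diagnostic table above is most worth working through.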

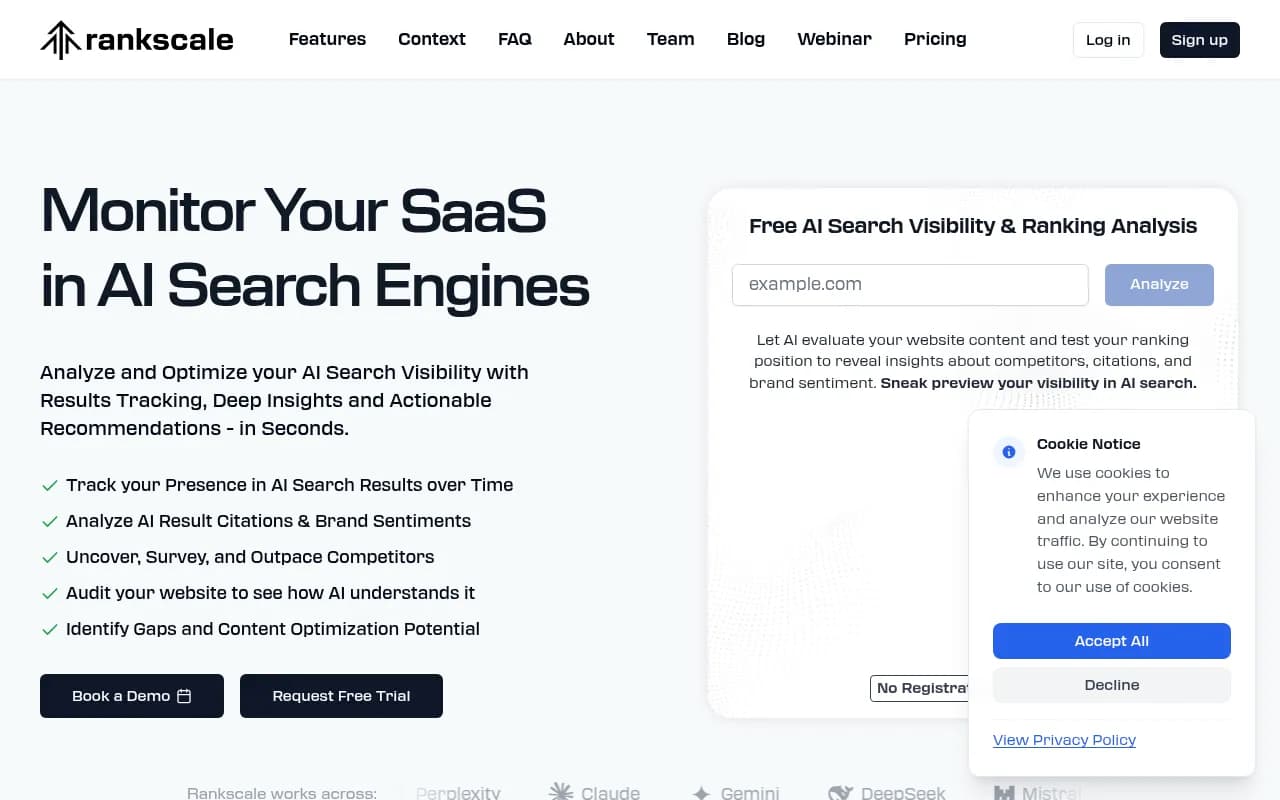

Several tools now track AI Overview inclusion specifically.

The bigger picture: ranking and visibility are now different things

The underlying shift here is that Google has built two parallel systems. One ranks pages. The other selects content for AI summaries. They share some signals but diverge significantly on others.

Traditional SEO metrics -- backlinks, keyword density, page authority -- still matter for rankings. But AI Overview inclusion is driven more by extractability, entity clarity, topical depth, and information gain. A page can score well on the first set of signals and poorly on the second.

The practical implication: you need to optimize for both, and you need to measure both. Checking your rankings in Search Console tells you one thing. Checking whether your content actually appears in AI responses tells you something different.

For teams that want to close that loop -- find the gaps, fix the content, track the results -- Promptwatch is built specifically around that workflow. But even if you're not using a dedicated platform, the first step is simply accepting that position one and AI Overview inclusion are separate goals that require separate strategies.

The good news is that the fixes are mostly about writing and structure, not technical complexity. Clear answers, clean headings, genuine expertise, consistent entity signals. None of that requires a new tech stack -- just a different way of thinking about what your content is actually for.