Summary

- Citation share measures how often AI models cite your brand vs competitors -- the core indicator of whether you're a trusted source

- Mention rate and sentiment reveal how frequently and favorably AI tools describe you when users ask questions in your category

- Prompt coverage shows which customer questions you're visible for and which gaps competitors are filling instead

- Assisted conversions and branded search lift connect AI visibility to actual revenue impact

- Weekly audits turn raw metrics into structured content updates that compound over time

AI search is no longer a side channel. When 90% of Google AI Mode responses include brand mentions and ChatGPT processes billions of queries per month, visibility in AI-generated answers directly shapes how customers discover and evaluate your brand. Traditional SEO metrics like rankings and clicks still matter, but they miss a critical question: when someone asks an AI assistant about your category, does your brand show up?

This guide walks through the five metrics that actually matter for AI visibility tracking in 2026. These aren't vanity numbers -- they're the signals that tell you whether AI models treat your brand as a trusted source, and they map directly to content actions you can take this week.

Why AI visibility metrics are different from SEO metrics

SEO taught us to track rankings, backlinks, and organic traffic. AI search flips the model. Users get answers without clicking. AI models synthesize information from multiple sources and decide which brands to cite based on topical authority, not just domain strength.

The shift means:

- Rankings don't translate: A #1 Google ranking doesn't guarantee a citation in ChatGPT's response

- Clicks aren't the goal: AI answers reduce click-through, so visibility and influence matter more than traffic volume

- Authority is contextual: AI models cite different sources for different query types -- product recommendations vs how-to guides vs comparisons

You need metrics that measure influence, not just traffic. The five below do exactly that.

Metric 1: Citation share (your slice of AI recommendations)

Citation share is the percentage of AI responses in your category that cite or recommend your brand. If 100 people ask ChatGPT "best project management tools" and your brand is cited in 40 of those responses, your citation share is 40%.

This is the single most important AI visibility metric. It tells you whether AI models see you as a credible source worth recommending.

How to track citation share

- Define a set of core prompts that represent how your target customers ask questions (e.g. "best CRM for small teams", "how to automate email marketing", "Salesforce alternatives")

- Run those prompts across multiple AI models (ChatGPT, Claude, Perplexity, Gemini, Google AI Overviews)

- Record which brands get cited in each response

- Calculate your citation share: (responses citing you / total responses) × 100
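The calculation itself is simple once responses are recorded. Here is a minimal Python sketch, assuming each response has been logged as the prompt, the model that answered, and the set of brands it cited. The `Response` record and the brand names are hypothetical; a tracking tool would hand you equivalent data in its own format.

```python
from dataclasses import dataclass


@dataclass
class Response:
    """One AI answer to one tracked prompt (hypothetical record shape)."""
    prompt: str
    model: str
    cited_brands: set[str]  # brands the answer cites or recommends


def citation_share(responses: list[Response], brand: str) -> float:
    """(responses citing `brand` / total responses) x 100."""
    if not responses:
        return 0.0
    citing = sum(1 for r in responses if brand in r.cited_brands)
    return 100 * citing / len(responses)


# Usage: 100 answers to "best project management tools", 40 of which cite "Acme".
answers = [Response("best project management tools", "chatgpt", {"Acme", "Rival"})] * 40
answers += [Response("best project management tools", "chatgpt", {"Rival"})] * 60
print(citation_share(answers, "Acme"))  # 40.0
```

Running the same function over responses from several models gives a blended share; filtering `responses` by `model` first gives a per-model share.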

Tools like Promptwatch automate this process by running prompt sets on a schedule and tracking citation share over time. You define the prompts, the tool runs them weekly, and you get a dashboard showing your share vs competitors.

What good citation share looks like

- 20-30%: Solid presence -- you're in the conversation but not dominant

- 40-50%: Strong authority -- AI models consistently recommend you

- 60%+: Category leader -- you own the AI narrative in your space

Citation share tends to compound. The more often AI models cite you, the more strongly your brand becomes associated with the category, which can increase future citations. This is why early investment in AI visibility pays off.

Metric 2: Mention rate and sentiment (how AI describes you)

Mention rate is how often your brand appears when users ask about your category. Sentiment is the tone -- positive, neutral, or negative -- of those mentions.

A high mention rate with negative sentiment is worse than a low mention rate with positive sentiment. You want both: frequent mentions that position you favorably.

Tracking mention rate

Mention rate = (prompts where you're mentioned / total prompts in your category) × 100

If you track 200 prompts and your brand appears in 80, your mention rate is 40%. This differs from citation share because it counts any mention, not just recommendations. A brand might be mentioned as context ("unlike X, which lacks Y feature") without being recommended.
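To make the distinction concrete, here is a small Python sketch that records recommendations and context-only mentions separately and computes both rates from the same data. The `PromptResult` shape and the "Acme" brand are hypothetical, and deciding which bucket a mention falls into is assumed to happen upstream (by a tool or a manual review).

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PromptResult:
    """What one AI answer said about brands (hypothetical record shape)."""
    prompt: str
    recommended: set[str] = field(default_factory=set)  # brands positioned as a pick
    mentioned: set[str] = field(default_factory=set)    # brands named only as context


def _share(results: list[PromptResult], hit: Callable[[PromptResult], bool]) -> float:
    """Percentage of prompts for which `hit` is true."""
    if not results:
        return 0.0
    return 100 * sum(1 for r in results if hit(r)) / len(results)


def mention_rate(results: list[PromptResult], brand: str) -> float:
    # Any appearance counts, including context like "unlike X, which lacks Y feature".
    return _share(results, lambda r: brand in r.recommended or brand in r.mentioned)


def recommendation_rate(results: list[PromptResult], brand: str) -> float:
    # Only answers that actually recommend the brand (the citation-share rule).
    return _share(results, lambda r: brand in r.recommended)


# 200 prompts: 50 recommend Acme, 30 mention it only as context, 120 ignore it.
results = (
    [PromptResult("p", recommended={"Acme"})] * 50
    + [PromptResult("p", mentioned={"Acme"})] * 30
    + [PromptResult("p")] * 120
)
print(mention_rate(results, "Acme"))         # 40.0
print(recommendation_rate(results, "Acme"))  # 25.0
```

The gap between the two numbers (here 15 points) is the share of prompts where you're in the conversation but not being recommended.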

Tracking sentiment

Sentiment analysis categorizes each mention:

- Positive: "X is a top choice for teams that need Y"

- Neutral: "X offers Y feature"

- Negative: "X struggles with Y compared to competitors"
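Once each mention carries one of those three labels, you can roll them up into a distribution you can track week to week. The Python sketch below assumes the labels already exist (from a platform's sentiment scoring or a manual review). The "net sentiment" figure (percent positive minus percent negative) is an assumption added for illustration, one simple way to summarize the distribution as a single number.

```python
from collections import Counter

LABELS = ("positive", "neutral", "negative")


def sentiment_breakdown(labels: list[str]) -> dict[str, float]:
    """Percentage of mentions carrying each sentiment label."""
    counts = Counter(labels)
    unknown = set(counts) - set(LABELS)
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    if not labels:
        return {label: 0.0 for label in LABELS}
    return {label: 100 * counts[label] / len(labels) for label in LABELS}


def net_sentiment(labels: list[str]) -> float:
    """Hypothetical summary score: % positive minus % negative (range -100 to 100)."""
    breakdown = sentiment_breakdown(labels)
    return breakdown["positive"] - breakdown["negative"]


labels = ["positive"] * 6 + ["neutral"] * 3 + ["negative"]
print(sentiment_breakdown(labels))  # {'positive': 60.0, 'neutral': 30.0, 'negative': 10.0}
print(net_sentiment(labels))        # 50.0
```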

Most AI visibility platforms include sentiment scoring. LLM Pulse specializes in this -- it tracks brand mentions across multiple LLMs and scores sentiment on each one.

What to do with sentiment data

Negative sentiment often points to specific product gaps or messaging problems. If AI models consistently describe your pricing as "expensive" or your interface as "complex", that's a signal to either fix the issue or publish content that reframes it.

Example: If ChatGPT says "Tool X is powerful but has a steep learning curve", publish a "Getting started with Tool X in 10 minutes" guide and a comparison of your onboarding against competitors'. Over time, AI models may pick up the new framing.

Metric 3: Prompt coverage (which questions you're visible for)

Prompt coverage measures how many of the questions your target customers ask are answered with your brand in the response. It's the AI equivalent of keyword coverage in SEO.

Building a prompt library

Start by mapping the customer journey:

- Awareness stage: "What is X?", "How does X work?", "X vs Y"

- Consideration stage: "Best X for Y use case", "X alternatives", "X pricing"

- Decision stage: "X reviews", "Is X worth it?", "X vs competitor"

For each stage, write 10-20 prompts that represent real user queries. Use tools like AnswerThePublic, Reddit threads, and your own support tickets to find the exact phrasing customers use.

Measuring coverage

Run your prompt library across AI models and track:

- Total prompts: 100

- Prompts where you're cited: 45

- Coverage rate: 45%

Break this down by journey stage. You might have 70% coverage in awareness prompts but only 20% in decision prompts. That tells you where to focus content efforts.
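The per-stage breakdown is a grouped version of the same ratio. A minimal Python sketch, assuming each tracked prompt has been tagged with its journey stage and a yes/no "cited" result. How you collapse several models into one yes/no (for example, cited by at least one model) is an assumption left to the caller.

```python
from collections import defaultdict


def coverage_by_stage(results: list[tuple[str, str, bool]]) -> dict[str, float]:
    """Coverage % per journey stage plus an 'overall' figure.

    `results` holds (stage, prompt, cited) tuples.
    """
    totals: dict[str, int] = defaultdict(int)
    cited: dict[str, int] = defaultdict(int)
    for stage, _prompt, was_cited in results:
        totals[stage] += 1
        cited[stage] += int(was_cited)
    coverage = {stage: 100 * cited[stage] / totals[stage] for stage in totals}
    n = sum(totals.values())
    coverage["overall"] = 100 * sum(cited.values()) / n if n else 0.0
    return coverage


# 10 awareness prompts (7 cited) and 10 decision prompts (2 cited).
results = [("awareness", f"a{i}", i < 7) for i in range(10)]
results += [("decision", f"d{i}", i < 2) for i in range(10)]
print(coverage_by_stage(results))  # {'awareness': 70.0, 'decision': 20.0, 'overall': 45.0}
```

The overall figure alone (45%) would hide the weak decision-stage coverage that the per-stage numbers expose.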

Closing coverage gaps

Low coverage in a specific prompt cluster means AI models don't associate your brand with those topics. The fix:

- Identify the 5-10 highest-value prompts where you're missing

- Audit your existing content -- do you have pages that answer those questions?

- If not, create them. If yes, expand them with more depth, examples, and related subtopics

- Interlink related pages to signal topical authority

Prompt coverage improves slowly. Expect 60-90 days before new content starts getting cited consistently.

Metric 4: Share of voice vs competitors (who owns the AI narrative)

Share of voice (SOV) is your citation share relative to competitors. If three brands dominate your category and you're the fourth, SOV shows how far behind you are and which competitor is winning.

Calculating share of voice

- Pick 3-5 direct competitors

- Run the same prompt set for all brands

- Count total citations for each brand

- Calculate: (your citations / total citations across all brands) × 100

Example:

| Brand | Citations | Share of voice |
|---|---|---|
| You | 120 | 24% |
| Competitor A | 180 | 36% |
| Competitor B | 140 | 28% |
| Competitor C | 60 | 12% |

Competitor A owns the AI narrative. Your goal is to close that gap.
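The table's percentages follow directly from the formula: each brand's citations divided by the 500 total. A short Python sketch reproducing them, with the brand labels and counts taken from the example above:

```python
def share_of_voice(citations: dict[str, int]) -> dict[str, float]:
    """(brand citations / total citations across all tracked brands) x 100."""
    total = sum(citations.values())
    if total == 0:
        return {brand: 0.0 for brand in citations}
    return {brand: 100 * count / total for brand, count in citations.items()}


sov = share_of_voice({"You": 120, "Competitor A": 180, "Competitor B": 140, "Competitor C": 60})
print(sov)  # {'You': 24.0, 'Competitor A': 36.0, 'Competitor B': 28.0, 'Competitor C': 12.0}
print(max(sov, key=sov.get))  # Competitor A
```

Because the denominator is the total across the brands you chose to track, adding or dropping a competitor changes everyone's share, so keep the competitor set fixed when comparing periods.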

Using SOV to prioritize content

SOV reveals which competitors are winning specific prompt clusters. If Competitor A dominates "best X for small teams" prompts, audit their content:

- What topics do they cover that you don't?

- How deep is their content vs yours?

- What format are they using (listicles, comparisons, how-to guides)?

Then build better versions. AI models tend to cite the most comprehensive, well-structured source, so depth beats breadth.

Tools like Profound and Promptwatch include competitor SOV tracking with heatmaps showing where each brand is strongest.

Metric 5: Assisted conversions and branded search lift (connecting visibility to revenue)

AI visibility only matters if it drives business outcomes. Assisted conversions and branded search lift are the two metrics that connect AI citations to revenue.

Tracking assisted conversions

An assisted conversion happens when a user discovers your brand through an AI assistant, then converts later through another channel (direct, organic search, paid ads).

To track this:

- Add UTM parameters to any links AI models might cite (e.g. ?utm_source=chatgpt&utm_medium=ai_search)

- Set up a custom channel grouping in Google Analytics for "AI Search"

- Use multi-touch attribution to see how AI-assisted sessions contribute to conversions
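The first two steps can be sketched in Python: one helper appends the UTM parameters to a URL, and one classifier mirrors the kind of rule you'd configure for an "AI Search" channel group. The list of AI source names in the regex is an assumption; adjust it to the referrers that actually appear in your analytics. The channel group itself is set up in the Google Analytics interface, so `channel_for` only illustrates the matching logic.

```python
import re
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(url: str, source: str, medium: str = "ai_search") -> str:
    """Return `url` with utm_source/utm_medium set, keeping other query params.

    Note: repeated query keys collapse to their last value in this sketch.
    """
    parts = urlsplit(url)
    params = dict(parse_qsl(parts.query))
    params.update({"utm_source": source, "utm_medium": medium})
    return urlunsplit(parts._replace(query=urlencode(params)))


# Hypothetical "AI Search" rule; the source names are assumptions, not a complete list.
AI_SOURCES = re.compile(r"chatgpt|openai|perplexity|gemini|claude|copilot", re.IGNORECASE)


def channel_for(source: str, medium: str) -> str:
    """Classify a session's source/medium into 'AI Search' or 'Other'."""
    if medium == "ai_search" or AI_SOURCES.search(source):
        return "AI Search"
    return "Other"


print(add_utm("https://example.com/pricing?plan=pro", "chatgpt"))
# https://example.com/pricing?plan=pro&utm_source=chatgpt&utm_medium=ai_search
print(channel_for("perplexity.ai", "referral"))  # AI Search
```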

Most users won't click directly from ChatGPT. They'll remember your brand name and search for it later. That's where branded search lift comes in.

Measuring branded search lift

Branded search lift is the increase in branded search volume correlated with AI visibility improvements.

Track this in Google Search Console:

- Filter for branded queries (your brand name + common modifiers like "pricing", "login", "review")

- Compare month-over-month growth

- Cross-reference with AI citation share increases
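Search Console reports impressions and clicks rather than raw search volume, so branded impressions are a reasonable proxy. The Python sketch below assumes you've assembled monthly query exports into (month, query, impressions) rows; that row shape and the "acme" brand are hypothetical. It filters to branded queries and computes month-over-month growth.

```python
from collections import defaultdict
from typing import Iterable


def branded_impressions_by_month(
    rows: Iterable[tuple[str, str, int]], brand_terms: list[str]
) -> dict[str, int]:
    """Sum impressions for queries containing any brand term, per "YYYY-MM" month."""
    terms = [t.lower() for t in brand_terms]
    totals: dict[str, int] = defaultdict(int)
    for month, query, impressions in rows:
        if any(t in query.lower() for t in terms):  # "acme pricing", "acme login", ...
            totals[month] += impressions
    return dict(sorted(totals.items()))  # "YYYY-MM" strings sort chronologically


def month_over_month(series: dict[str, int]) -> dict[str, float]:
    """Percent change vs the previous month present in `series` (zero bases skipped)."""
    months = list(series)
    return {
        cur: 100 * (series[cur] - series[prev]) / series[prev]
        for prev, cur in zip(months, months[1:])
        if series[prev]
    }


rows = [
    ("2026-01", "acme pricing", 100), ("2026-01", "acme login", 100),
    ("2026-01", "best crm", 500),  # non-branded, ignored
    ("2026-02", "Acme pricing", 140), ("2026-02", "acme review", 100),
]
series = branded_impressions_by_month(rows, ["acme"])
print(series)                    # {'2026-01': 200, '2026-02': 240}
print(month_over_month(series))  # {'2026-02': 20.0}
```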

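If citation share goes up 15% in January and branded search volume increases 20% in February, the timing suggests a link, though seasonality, campaigns, or press coverage can produce the same pattern, so rule those out before crediting AI visibility. AI recommendations can drive brand awareness, which in turn drives search behavior.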

Why attribution is hard (and how to work around it)

AI models rarely pass referral data. A user who asks ChatGPT "best CRM" and sees your brand mentioned won't show up in your analytics as an AI referral. They'll show up as direct traffic or organic search when they visit later.

This is why citation share and branded search lift matter more than direct AI referral clicks. You're measuring influence, not clicks.

How to turn metrics into action: the weekly audit process

Metrics are useless without a process to act on them. Here's a simple weekly workflow:

Monday: Review last week's data

- Citation share: up or down?

- Which prompts did you gain/lose visibility on?

- Any sentiment shifts (positive to neutral, neutral to negative)?
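The Monday questions amount to a diff between two weekly snapshots. A Python sketch, assuming each snapshot maps a tracked prompt to the sentiment of your brand's mention, or `None` when you weren't cited; that snapshot format is hypothetical.

```python
from typing import Optional

RANK = {"negative": 0, "neutral": 1, "positive": 2}

Snapshot = dict[str, Optional[str]]  # prompt -> sentiment label, or None if not cited


def weekly_review(last: Snapshot, this: Snapshot) -> dict[str, list[str]]:
    """Prompts gained, lost, and with worsened sentiment since last week."""
    gained = sorted(p for p, s in this.items() if s and not last.get(p))
    lost = sorted(p for p, s in last.items() if s and not this.get(p))
    worsened = sorted(
        p for p, s in this.items()
        if s and last.get(p) and RANK[s] < RANK[last[p]]
    )
    return {"gained": gained, "lost": lost, "worsened": worsened}


last_week = {"best crm": "positive", "crm pricing": None,
             "crm alternatives": "neutral", "crm reviews": "neutral"}
this_week = {"best crm": "neutral", "crm pricing": "positive",
             "crm alternatives": None, "crm reviews": "neutral"}
print(weekly_review(last_week, this_week))
# {'gained': ['crm pricing'], 'lost': ['crm alternatives'], 'worsened': ['best crm']}
```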

Tuesday: Identify the top 3 gaps

Pick the three highest-value prompts where you're not cited but competitors are. Use prompt volume estimates (if available) to prioritize.
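That selection rule can be sketched in a few lines of Python. It assumes you have, per prompt, the set of brands cited and an optional volume estimate; both inputs are hypothetical shapes, and prompts without an estimate are treated as volume 0 so they sort last.

```python
def top_gaps(
    results: dict[str, set[str]], brand: str, volumes: dict[str, int], k: int = 3
) -> list[str]:
    """Prompts where competitors are cited but `brand` is not, highest volume first."""
    gaps = [p for p, cited in results.items() if cited and brand not in cited]
    return sorted(gaps, key=lambda p: volumes.get(p, 0), reverse=True)[:k]


results = {
    "best crm for small teams": {"Rival"},
    "crm pricing": {"Rival", "You"},    # you're cited: not a gap
    "salesforce alternatives": {"Other"},
    "crm for startups": set(),          # nobody cited: not a competitor gap
    "crm with email automation": {"Rival"},
    "crm reviews": {"Rival"},
}
volumes = {"best crm for small teams": 100, "salesforce alternatives": 300,
           "crm with email automation": 200, "crm reviews": 50}
print(top_gaps(results, "You", volumes))
# ['salesforce alternatives', 'crm with email automation', 'best crm for small teams']
```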

Wednesday-Thursday: Create or update content

For each gap:

- Audit existing content -- can you expand a current page?

- If no relevant page exists, create one

- Structure content to directly answer the prompt (use the exact question as an H2)

- Add depth: examples, comparisons, step-by-step instructions, screenshots

- Interlink to related pages

Friday: Publish and track

Publish the new/updated content. Add it to your prompt tracking list so you can measure citation improvements over the next 60 days.

This process compounds. Week 1, you close 3 gaps. Week 2, another 3. By week 10, you've addressed 30 high-value prompts, and as your earliest updates pass the 60-day mark, your citation share could be up 10-15%.

Comparison: AI visibility tracking tools

Here's how the leading platforms stack up for the five metrics covered in this guide:

| Tool | Citation share | Sentiment tracking | Prompt coverage | Competitor SOV | Attribution |

|---|---|---|---|---|---|

| Promptwatch | ✓ | ✓ | ✓ | ✓ | Traffic attribution via snippet |

| Profound | ✓ | ✓ | ✓ | ✓ | GSC integration |

| LLM Pulse | ✓ | ✓✓ (best-in-class) | ✓ | ✓ | Basic |

| Otterly.AI | ✓ | ✓ | ✓ | ✓ | None |

| Peec AI | ✓ | ✓ | ✓ | ✓ | None |

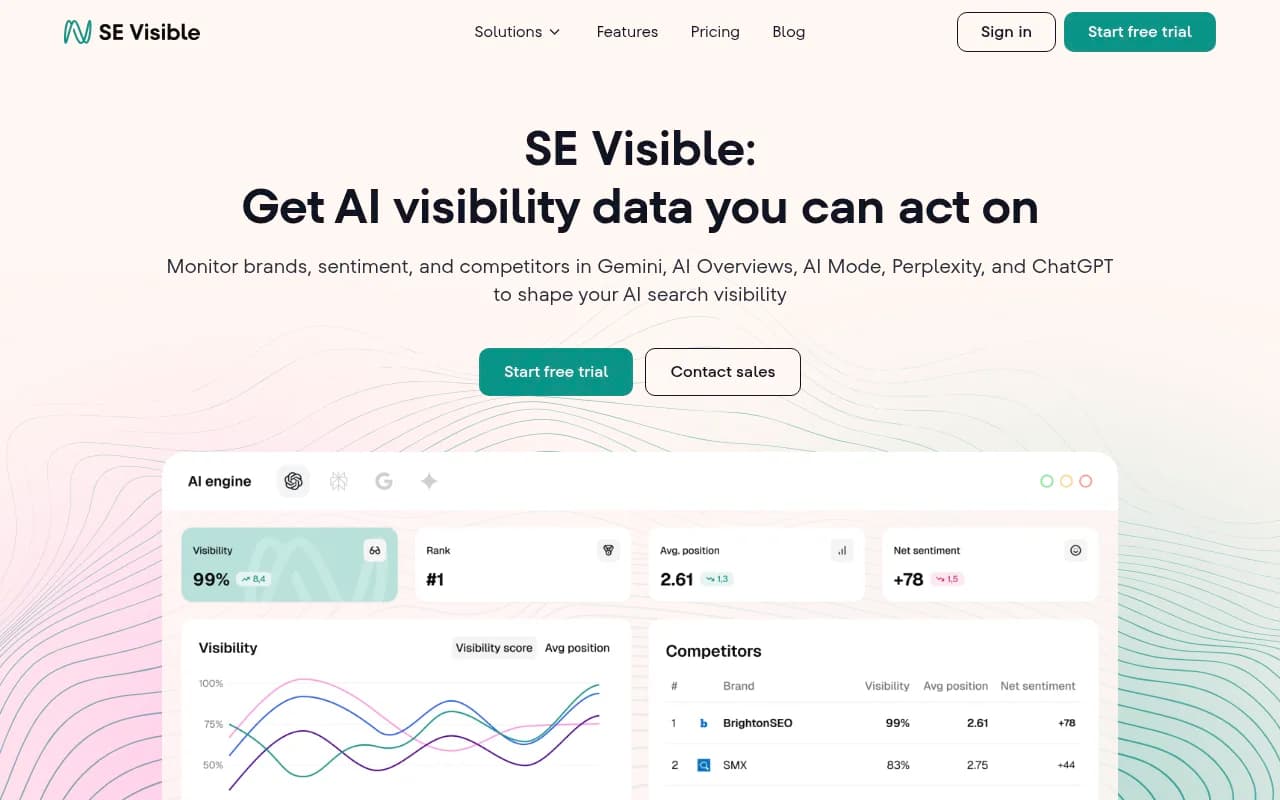

| SE Visible | ✓ | ✓ | ✓ | ✓ | Basic |

Promptwatch is the only platform that combines all five metrics with an AI writing agent to help you close content gaps. Most competitors stop at monitoring -- they show you the data but leave you to figure out what to do next. Promptwatch's Answer Gap Analysis tells you exactly which prompts competitors are visible for but you're not, then generates articles grounded in real citation data to help you catch up.

Common mistakes when tracking AI visibility

Mistake 1: Tracking too many prompts

More prompts ≠ better insights. Start with 20-30 high-value prompts that represent your core customer journey. You can always expand later.

Mistake 2: Ignoring prompt phrasing

AI models are sensitive to phrasing. "Best CRM" and "Top CRM tools" might return different results. Test multiple phrasings for each concept.
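A simple way to act on this is to group phrasings under the concept they express and check whether the results agree. A Python sketch, assuming results are stored as concept -> {phrasing: cited?}; the concepts and phrasings shown are illustrative.

```python
def phrasing_sensitivity(results: dict[str, dict[str, bool]]) -> dict[str, tuple[float, bool]]:
    """Per concept: (% of phrasings where you're cited, whether phrasings disagree).

    Concepts with no phrasings recorded are skipped.
    """
    out = {}
    for concept, variants in results.items():
        if not variants:
            continue
        hits = sum(variants.values())
        out[concept] = (100 * hits / len(variants), 0 < hits < len(variants))
    return out


print(phrasing_sensitivity({
    "crm": {"best crm": True, "top crm tools": False},
    "email automation": {"how to automate email marketing": True,
                         "email marketing automation guide": True},
}))
# {'crm': (50.0, True), 'email automation': (100.0, False)}
```

Concepts flagged `True` are the ones where a single phrasing would give a misleading picture.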

Mistake 3: Focusing only on ChatGPT

ChatGPT has the most users, but Perplexity, Claude, and Google AI Overviews all drive meaningful traffic. Track at least 3-4 models.

Mistake 4: Expecting instant results

AI visibility improves slowly. New content takes 60-90 days to get indexed and cited consistently. Track weekly, but judge progress monthly.

Mistake 5: Not connecting visibility to revenue

If you can't tie AI visibility to business outcomes (leads, conversions, revenue), you'll struggle to justify the investment. Set up branded search tracking and attribution from day one.

What to do this week

- Adopt the five core metrics: citation share, mention rate and sentiment, prompt coverage, share of voice, and assisted conversions/branded search lift

- Choose a tracking tool: Promptwatch if you want monitoring + content generation, LLM Pulse if sentiment is your priority, Profound for enterprise-scale tracking

- Build your first prompt library: 20-30 prompts covering awareness, consideration, and decision stages

- Run a baseline audit: Where do you show up today? Where are the biggest gaps?

- Create one piece of content: Pick the highest-value gap and publish something this week

AI visibility compounds. The brands that start tracking and optimizing now will own the AI narrative in their category by Q3 2026. The ones that wait will be playing catch-up for years.