Summary

- AI search visibility requires consistent, multi-engine tracking across platforms like ChatGPT, Perplexity, Google AI Overviews, Claude, and Gemini -- manual testing can't capture the full picture

- Effective monitoring combines citation tracking, share-of-voice metrics, prompt-level analysis, and competitor benchmarking to measure what actually matters

- Choose tools based on LLM coverage, prompt scale, citation depth, and whether they help you take action (not just show data)

- A complete strategy includes baseline measurement, automated tracking, content gap analysis, and continuous optimization loops

- Small teams can start with focused monitoring on 2-3 engines and scale as they prove ROI

Why multi-engine monitoring matters in 2026

AI search has fundamentally changed how users discover brands. When someone asks ChatGPT for product recommendations or queries Perplexity for research, they're bypassing traditional search results entirely. Brands now appear in 90% of Google AI Mode responses, compared to just 43% in AI Overviews. The shift is real and accelerating.

But here's the problem: most marketers still can't answer basic questions about their AI visibility. Which engines cite your content? How often does your brand appear in AI recommendations? What prompts trigger mentions of your competitors but not you?

Manual testing -- typing prompts into ChatGPT and checking the response -- feels like a reasonable starting point. You get immediate feedback and can see exactly what the AI says about your brand. But this approach breaks down fast:

- AI responses shift with small phrasing changes, even when intent stays the same

- Different engines surface different sources for identical questions

- The same prompt returns different answers over time or across geographic locations

- Citations appear once and disappear on the next run

- User history and context influence results in ways you can't control

A single prompt can't tell you whether a result is repeatable or accidental. You can't compare performance, track movement over time, or explain outcomes to other teams. Manual testing gives you anecdotes, not data.

Visibility only becomes measurable when you have consistent, multi-engine, scalable tracking that lets you observe results over time. That's what this guide is about.

The core metrics that actually matter

Before you pick tools or set up dashboards, you need to know what you're measuring. AI visibility isn't a single number -- it's a set of interconnected metrics that tell different parts of the story.

Citation count and frequency

How often does an AI engine cite your content when answering relevant prompts? This is the baseline metric. If you're tracking 100 prompts related to your product category and your site appears in 15 responses, your citation rate is 15%.

But raw counts don't tell the whole story. A citation in position one (the first source mentioned) carries more weight than position five. Some platforms let you filter by citation rank or visibility score to account for this.
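
As a rough illustration, here is a minimal Python sketch of both ideas: plain citation rate and a rank-weighted variant. The `PromptResult` record and the reciprocal-rank weighting are assumptions made for this example, not how any particular platform computes its visibility score.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PromptResult:
    prompt: str
    # 1-based position of your domain among the cited sources; None if not cited.
    citation_rank: Optional[int] = None


def citation_rate(results: list[PromptResult]) -> float:
    """Fraction of tracked prompts whose response cited your domain."""
    if not results:
        return 0.0
    cited = sum(1 for r in results if r.citation_rank is not None)
    return cited / len(results)


def rank_weighted_score(results: list[PromptResult]) -> float:
    """Average reciprocal rank: position 1 counts 1.0, position 5 counts 0.2."""
    if not results:
        return 0.0
    total = sum(1.0 / r.citation_rank for r in results if r.citation_rank is not None)
    return total / len(results)


# 100 tracked prompts, 15 of which cite the site (here all in first position).
results = [PromptResult(f"prompt {i}", 1 if i < 15 else None) for i in range(100)]
print(f"{citation_rate(results):.0%}")  # 15%
```

Reciprocal rank is only one possible weighting. If your tool exposes its own visibility score, use that instead so your numbers match the dashboard.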

Share of voice

When AI engines answer prompts in your category, what percentage of citations go to your brand versus competitors? Share of voice measures your slice of the AI visibility pie.

Say your tool records 50 citations across the ChatGPT responses to your tracked project management prompts. If 20 of those citations point to your brand and competitors split the remaining 30, you have a 40% share of voice. This metric makes competitive analysis possible.
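
To make the arithmetic concrete, the sketch below counts every citation across a set of responses and reports each domain's share. The input shape (one list of cited domains per response) and the example domains are assumptions for illustration. Real exports will differ by tool.

```python
from collections import Counter


def share_of_voice(citations_per_response: list[list[str]]) -> dict[str, float]:
    """Each domain's share of all citations across the tracked responses."""
    counts = Counter(
        domain for response in citations_per_response for domain in response
    )
    total = sum(counts.values())
    if total == 0:
        return {}
    return {domain: count / total for domain, count in counts.items()}


# Hypothetical data: three responses, five citations in total.
responses = [
    ["yourbrand.example", "rival-a.example"],
    ["yourbrand.example"],
    ["rival-b.example", "rival-a.example"],
]
for domain, share in share_of_voice(responses).items():
    print(f"{domain}: {share:.0%}")
```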

Prompt coverage

Which specific prompts trigger citations of your content? More importantly, which prompts trigger competitor citations but not yours? This is where content gaps become visible.

Effective monitoring tools let you see prompt-level performance: "Your site appears for 'best CRM for small teams' but not 'affordable CRM software' even though competitors do." That's actionable intelligence.
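
If your tool lets you export which domains were cited per prompt, you can find these gaps yourself with a few lines of Python. This is a sketch under assumed inputs: the dictionary shape and the domain names are hypothetical.

```python
def find_prompt_gaps(
    citations: dict[str, set[str]], own_domain: str, competitors: set[str]
) -> dict[str, list[str]]:
    """Prompts where at least one competitor is cited but your domain is not."""
    gaps = {}
    for prompt, cited_domains in citations.items():
        rivals = sorted(cited_domains & competitors)
        if own_domain not in cited_domains and rivals:
            gaps[prompt] = rivals
    return gaps


# Hypothetical export: prompt -> set of domains cited in the response.
citations = {
    "best CRM for small teams": {"yourbrand.example", "rival-a.example"},
    "affordable CRM software": {"rival-a.example", "rival-b.example"},
    "what is a CRM": {"encyclopedia.example"},
}
gaps = find_prompt_gaps(
    citations, "yourbrand.example", {"rival-a.example", "rival-b.example"}
)
print(gaps)
```

Prompts where nobody you track is cited (the third one here) are left out on purpose: they aren't competitive gaps, just open territory.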

Engine-specific performance

Your visibility on ChatGPT might look completely different from your visibility on Perplexity or Google AI Overviews. Each engine has its own ranking logic, source preferences, and citation patterns.

Tracking engine-by-engine performance reveals where you're strong and where you're invisible. It also helps you prioritize optimization efforts based on which engines your target audience actually uses.
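
A per-engine breakdown is a simple group-by. The sketch below assumes each tracked run is a row of `(engine, prompt, was_cited)`. That row format is made up for the example, so adapt it to whatever your tool exports.

```python
from collections import defaultdict


def citation_rate_by_engine(rows) -> dict[str, float]:
    """rows: iterable of (engine, prompt, was_cited) tuples."""
    cited = defaultdict(int)
    total = defaultdict(int)
    for engine, _prompt, was_cited in rows:
        total[engine] += 1
        cited[engine] += int(was_cited)
    return {engine: cited[engine] / total[engine] for engine in total}


# Hypothetical tracking rows for two prompts on two engines.
rows = [
    ("ChatGPT", "best CRM for small teams", True),
    ("ChatGPT", "affordable CRM software", False),
    ("Perplexity", "best CRM for small teams", False),
    ("Perplexity", "affordable CRM software", False),
]
print(citation_rate_by_engine(rows))
```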

Sentiment and positioning

Citations aren't all equal. Does the AI mention your brand positively, neutrally, or negatively? Does it recommend you as a top choice or list you as an alternative?

Some platforms track sentiment and positioning within AI responses. This matters because a negative mention can be worse than no mention at all.

Building your monitoring stack: Tool selection framework

The AI visibility tool market exploded in 2025 and matured through 2026. You now have dozens of options, each with different strengths, coverage, and price points. Choosing the right stack means evaluating tools across several dimensions.

LLM coverage: Which engines does it monitor?

The core set to aim for is ChatGPT, Perplexity, Google AI Overviews, Claude, and Gemini. These five engines represent the majority of AI search volume in 2026. Tools that only track one or two engines leave you blind to most of the landscape.

Some platforms also monitor Meta AI, Grok, DeepSeek, Mistral, and Copilot. Broader coverage is better, but prioritize the engines your audience actually uses. If your customers are primarily on ChatGPT and Perplexity, those are your must-haves.

Prompt scale: Can it track hundreds or thousands of queries?

Manual prompt entry doesn't scale. You need tools that let you import keyword lists, auto-generate conversational prompts from those keywords, and run them at scale.

The best platforms convert your existing SEO keyword research into AI-relevant prompts automatically. Instead of tracking "project management software" as a keyword, the tool generates prompts like "What's the best project management software for remote teams?" and "Compare Asana vs Monday for small businesses."
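
If your tool doesn't do this for you, a template-based approach gets you most of the way. The sketch below is one simple way to expand keywords into conversational prompts. The templates and audience list are invented for illustration, and commercial platforms likely use something more sophisticated.

```python
from itertools import product

# Hypothetical templates; replace with phrasing your customers actually use.
TEMPLATES = (
    "What's the best {keyword} for {audience}?",
    "Which {keyword} would you recommend for {audience}?",
)


def generate_prompts(keywords, audiences, templates=TEMPLATES) -> list[str]:
    """Expand every keyword/audience pair through every template, without duplicates."""
    prompts = []
    seen = set()
    for keyword, audience, template in product(keywords, audiences, templates):
        prompt = template.format(keyword=keyword.strip(), audience=audience.strip())
        if prompt.lower() not in seen:
            seen.add(prompt.lower())
            prompts.append(prompt)
    return prompts


for prompt in generate_prompts(["project management software"], ["remote teams"]):
    print(prompt)
```

Review the output by hand before tracking it. Templates produce awkward or off-intent prompts more often than you'd expect.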

Citation depth: Does it show why you were cited?

Seeing that your site was cited is useful. Understanding which specific page, which content section, and which facts the AI extracted is actionable.

Look for tools that provide citation-level detail: the exact URL cited, the snippet or paragraph the AI referenced, and ideally the context around that citation. This tells you what content is working and what to replicate.

Competitor benchmarking: Can you compare performance?

AI visibility is inherently competitive. You're not just measuring your own citations -- you're measuring your share of a finite pool of visibility.

Tools that let you add competitor domains and compare citation rates, share of voice, and prompt coverage side-by-side are essential for strategic decision-making. You need to know who's winning and why.

Action vs monitoring: Does it help you optimize?

This is the biggest divide in the market. Most tools are monitoring-only dashboards: they show you data but leave you stuck figuring out what to do next.

The best platforms close the loop by showing you content gaps, generating optimization recommendations, or even helping you create new content designed to get cited. Promptwatch is one example -- it identifies which prompts competitors rank for but you don't, then helps you generate content to fill those gaps.

Pricing and scalability

Entry-level tools start around $29-49/month for basic monitoring. Mid-tier platforms run $99-249/month with more engines, prompts, and features. Enterprise solutions can exceed $2,500/month.

Match your budget to your needs. If you're a small team testing AI visibility for the first time, start with an affordable option that covers 2-3 engines and 50-100 prompts. You can always scale up.

Comparison: Leading AI visibility platforms

| Platform | Engines Covered | Prompt Scale | Citation Depth | Competitor Tracking | Content Optimization | Starting Price |

|---|---|---|---|---|---|---|

| Promptwatch | 10 (ChatGPT, Perplexity, Google AI, Claude, Gemini, Meta AI, Grok, DeepSeek, Mistral, Copilot) | 50-350+ | Page-level + snippet extraction | Yes | Answer Gap Analysis + AI content generation | $99/mo |

| LLMrefs | 4 (ChatGPT, Perplexity, Google AI, Gemini) | Unlimited | Page-level + citation context | Yes | Prompt optimization recommendations | Custom |

| Peec AI | 5 (ChatGPT, Perplexity, Google AI, Claude, Gemini) | 100-500 | Basic citation tracking | Yes | No | €89/mo |

| Otterly.AI | 3 (ChatGPT, Perplexity, Google AI) | 50-200 | Basic citation tracking | Limited | GEO audit reports | $29/mo |

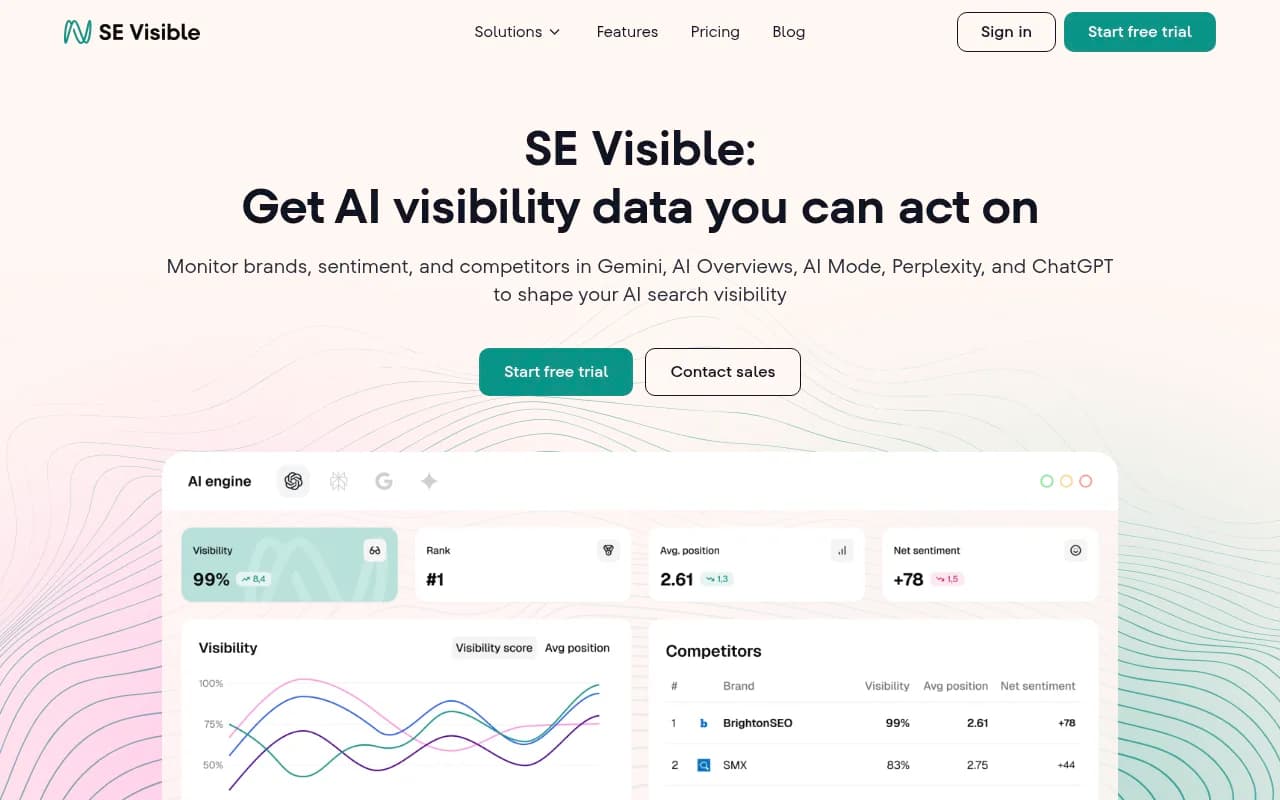

| SE Visible | 2 (Google AI Mode, AI Overviews) | 100-300 | Citation + positioning | Yes | No | $189/mo |

| Profound AI | 6 (ChatGPT, Perplexity, Google AI, Claude, Gemini, Meta AI) | 200-1000 | Deep citation analysis | Yes | AI content automation | $99/mo |

This table shows the strategic tradeoffs. Promptwatch offers the widest engine coverage and is the only platform listed here that pairs monitoring with both content gap analysis and AI-powered content generation. LLMrefs focuses on statistical reliability and prompt optimization. Peec AI and Otterly.AI prioritize simplicity and affordability. SE Visible specializes in Google's AI products. Profound AI targets enterprise teams with automation needs.

Setting up your baseline: The first 30 days

Once you've chosen your tools, the first month is about establishing a baseline. You can't measure improvement without knowing where you started.

Step 1: Define your prompt universe

Start with your existing keyword research. Export your top 100-200 keywords from your SEO tool -- the ones that drive traffic, conversions, or represent core product categories.

Feed these into your AI visibility tool and let it generate conversational prompts. Review the output and refine. Remove prompts that don't match user intent. Add prompts that reflect how your customers actually talk (check support tickets, sales calls, and community forums for language patterns).

Your goal is a prompt set that represents the questions your target audience asks AI engines when they're looking for solutions in your category.

Step 2: Add competitors

Identify 3-5 direct competitors and add their domains to your monitoring dashboard. These should be brands that compete for the same customers and keywords, not just anyone in your industry.

Competitor selection matters. If you're a mid-market project management tool, don't compare yourself to Asana and Monday -- compare yourself to other mid-market alternatives. You want realistic benchmarks.

Step 3: Run your first tracking cycle

Most platforms run tracking cycles daily or weekly. Your first cycle establishes the baseline: citation counts, share of voice, prompt coverage, and engine-specific performance.

Don't expect immediate insights. AI visibility data is noisy. A single day's results might show you cited 10 times. The next day it's 8. The day after it's 12. You need at least two weeks of data to see patterns.
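
One common way to see through that noise is a trailing average. The sketch below smooths daily citation counts over a window. The 7-day default is an arbitrary choice for the example, not a recommendation from any tool.

```python
from statistics import mean


def rolling_mean(values: list[float], window: int = 7) -> list[float]:
    """Trailing average; early entries use however many days are available."""
    return [
        mean(values[max(0, i - window + 1) : i + 1]) for i in range(len(values))
    ]


# The three days from the example above: 10, 8, then 12 citations.
daily_citations = [10, 8, 12]
print(rolling_mean(daily_citations, window=3))  # [10, 9, 10]
```

The raw series swings by up to four citations from one day to the next, while the smoothed one stays near 10. The smoothed series is the one to chart.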

Step 4: Identify quick wins

While you're building baseline data, look for obvious gaps:

- Prompts where competitors are cited but you're not

- Engines where you have zero visibility

- Content topics where you have thin or outdated pages

- High-value prompts (based on search volume or business impact) where you aren't cited

These become your first optimization targets.

Optimization loops: Turning data into action

Monitoring without action is pointless. The goal isn't to watch dashboards -- it's to improve visibility. That requires structured optimization loops.

Content gap analysis

Your AI visibility tool should show you which prompts trigger competitor citations but not yours. This is your content gap report.

For each gap, ask:

- Do we have content on this topic at all?

- If yes, is it comprehensive enough to answer the prompt?

- If no, should we create it?

Prioritize gaps based on prompt volume (how often users ask this question) and business value (how closely it aligns with your product or service).
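
A simple way to turn those two criteria into a ranked list is to multiply them. In the sketch below, `monthly_volume` and the 1-5 `business_value` rating are assumed inputs you would estimate yourself, and the scoring formula is just one reasonable choice.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    prompt: str
    monthly_volume: int  # estimated how often users ask this (assumed input)
    business_value: int  # 1-5 rating assigned by your team (assumed scale)


def prioritize(gaps: list[Gap]) -> list[Gap]:
    """Highest volume x business value first."""
    return sorted(
        gaps, key=lambda g: g.monthly_volume * g.business_value, reverse=True
    )


# Hypothetical gap list.
gaps = [
    Gap("what is a CRM", 1000, 1),
    Gap("affordable CRM software", 400, 5),
    Gap("CRM with email automation", 900, 2),
]
for gap in prioritize(gaps):
    print(gap.prompt, gap.monthly_volume * gap.business_value)
```

Note how the highest-volume prompt lands last here: broad informational questions often have little business value.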

Content creation and optimization

Once you've identified gaps, you need content that AI engines will cite. This isn't traditional SEO content -- it's content engineered for AI consumption.

Key principles:

- Directness: AI engines prefer clear, definitive answers over vague marketing copy

- Structure: Use headings, lists, and tables that make information easy to extract

- Depth: Cover topics comprehensively -- AI models cite sources that provide complete answers

- Citations: Reference data, studies, and authoritative sources -- AI engines trust content that cites others

- Freshness: Update content regularly -- AI models prefer recent information

Some platforms, like Promptwatch, include AI writing agents that generate content grounded in citation data and competitor analysis. This speeds up the creation process and ensures the content is optimized for AI visibility from the start.

Crawler log analysis

AI engines crawl your website to discover content. If they can't access your pages, they can't cite them.

Tools like Promptwatch provide AI crawler logs that show which pages ChatGPT, Claude, Perplexity, and other engines are reading, how often they return, and what errors they encounter. Use this data to:

- Fix crawl errors (404s, 500s, timeouts)

- Optimize robots.txt and meta tags for AI crawlers

- Prioritize which pages to improve based on crawl frequency
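
If you have access to raw server logs, you can approximate this analysis yourself. The sketch below makes several assumptions: logs are in the common "combined" format, the crawlers identify themselves with the user-agent tokens `GPTBot`, `ClaudeBot`, and `PerplexityBot` (check each vendor's current documentation), and the sample lines are made up. It also uses Python's standard `urllib.robotparser` to check whether a given bot is allowed to fetch a URL.

```python
import re
from collections import Counter
from urllib.robotparser import RobotFileParser

# User-agent tokens to look for. Assumed list; confirm against each vendor's docs.
AI_BOTS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Matches the request, status, and user-agent fields of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def summarize_ai_crawls(lines):
    """Count hits and error responses (status >= 400) per (bot, path)."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        bot = next((b for b in AI_BOTS if b.lower() in match["ua"].lower()), None)
        if bot is None:
            continue  # not one of the AI crawlers we track
        key = (bot, match["path"])
        hits[key] += 1
        if int(match["status"]) >= 400:
            errors[key] += 1
    return hits, errors


def bot_allowed(robots_txt: str, bot: str, url: str) -> bool:
    """True if the given robots.txt content lets `bot` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(bot, url)


# Made-up log lines for illustration.
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [10/Jan/2026:10:05:00 +0000] "GET /old-page HTTP/1.1" 404 310 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.2 - - [10/Jan/2026:10:06:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
hits, errors = summarize_ai_crawls(sample_log)
print(hits.most_common())
print(errors.most_common())
```

User-agent strings can be spoofed, so treat these counts as a rough signal rather than verified crawler traffic.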

Tracking and iteration

After publishing or updating content, monitor its impact:

- Did citation counts increase for target prompts?

- Did share of voice improve?

- Which engines started citing the new content?

- How long did it take for changes to appear in AI responses?

AI visibility changes aren't instant. It can take 1-4 weeks for new content to get indexed and cited. Track weekly and look for trends, not day-to-day fluctuations.
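
To look at weekly trends rather than daily swings, you can roll daily observations up by ISO week. This sketch assumes you can export one `(date, was_cited)` pair per prompt run, and the data shown is invented.

```python
from collections import defaultdict
from datetime import date


def weekly_citation_rate(observations) -> dict[tuple[int, int], float]:
    """observations: iterable of (date, was_cited). Keys are (ISO year, ISO week)."""
    totals = defaultdict(lambda: [0, 0])  # week -> [cited runs, total runs]
    for day, was_cited in observations:
        iso = day.isocalendar()
        week = (iso[0], iso[1])
        totals[week][0] += int(was_cited)
        totals[week][1] += 1
    return {week: cited / runs for week, (cited, runs) in sorted(totals.items())}


# Hypothetical runs for one target prompt, before and after a content update.
observations = [
    (date(2026, 1, 5), False),
    (date(2026, 1, 6), True),
    (date(2026, 1, 12), True),
    (date(2026, 1, 13), True),
]
print(weekly_citation_rate(observations))
```

Comparing the weeks before and after a publish date gives you a rough before/after view. With so few runs per week the difference is only suggestive, so track more prompts or more runs before drawing conclusions.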

Advanced strategies: Beyond basic monitoring

Persona-based tracking

Different users ask different questions. A CTO researching software writes different prompts than a marketing manager. A small business owner phrases queries differently than an enterprise buyer.

Advanced platforms let you create personas and track visibility for each. This reveals whether you're visible to your actual target audience or just generic searchers.

Multi-language and geo-specific monitoring

AI responses vary by language and location. If you operate in multiple markets, you need to track visibility in each.

Some tools support multi-language prompt tracking and geo-specific results. This is critical for global brands or companies targeting non-English markets.

Reddit and YouTube integration

AI engines increasingly cite Reddit discussions and YouTube videos in their responses. If your brand or competitors are mentioned in these channels, it influences AI recommendations.

Platforms like Promptwatch surface Reddit threads and YouTube videos that AI models reference. This shows you where to engage, what conversations to join, and what content formats to create.

ChatGPT Shopping and product recommendations

ChatGPT now includes shopping features and product carousels. If you sell products, tracking your appearance in these recommendations is essential.

Monitor when your brand shows up in ChatGPT's shopping results, which products are featured, and how you compare to competitors. This is a new channel that most brands are still ignoring.

API integration and custom reporting

If you're an agency or enterprise team, you might need custom dashboards or automated reporting. Look for platforms with APIs and integrations (Looker Studio, Google Sheets, etc.) that let you export data and build on top of it.

Common mistakes to avoid

Tracking vanity metrics

Total citation count feels good but doesn't mean much if those citations come from irrelevant prompts. Focus on citation rate for high-value prompts and share of voice in your category.

Ignoring engine-specific differences

Optimizing for ChatGPT doesn't automatically improve your Perplexity visibility. Each engine has different ranking logic. Track and optimize for each separately.

Expecting instant results

AI visibility changes take time. Don't panic if you publish new content and don't see citations the next day. Give it up to four weeks and track trends, not daily fluctuations.

Over-optimizing for AI at the expense of humans

Content that's only designed for AI consumption often reads poorly to humans. Balance AI optimization with user experience. The best content works for both.

Not connecting visibility to business outcomes

Citations are great, but do they drive traffic? Revenue? Track the full funnel. Use traffic attribution (a tracking snippet, Google Search Console integration, or server logs) to connect AI visibility to actual business results.
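
For the server-log or analytics route, one lightweight approach is to classify visits by referrer. The hostname-to-engine mapping below is an assumption for illustration. Referrer behavior varies by engine and changes over time, and many AI-driven visits arrive with no referrer at all, so this will undercount.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against what you see in your own analytics.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer: str) -> Optional[str]:
    """Return the AI engine name for a referrer URL, or None if it isn't one."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, engine in AI_REFERRERS.items():
        if host == domain or host.endswith("." + domain):
            return engine
    return None


print(classify_referrer("https://www.perplexity.ai/search?q=best+crm"))  # Perplexity
print(classify_referrer("https://www.google.com/"))  # None
```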

Small team playbook: Getting started with limited resources

You don't need a big budget or a dedicated team to start tracking AI visibility. Here's a realistic plan for small teams:

Month 1: Baseline

- Choose one affordable tool (Otterly.AI at $29/mo or Promptwatch Essential at $99/mo)

- Track 50 prompts across 2-3 engines (ChatGPT, Perplexity, Google AI)

- Add 2 competitors

- Establish baseline metrics

Month 2: Quick wins

- Identify 5-10 content gaps where competitors are cited but you're not

- Update or create 2-3 pieces of content targeting those gaps

- Monitor crawler logs and fix any access issues

Month 3: Expand and iterate

- Scale prompt tracking to 100-150 queries

- Add 1-2 more engines

- Track citation changes from your content updates

- Create 2-3 more optimized articles

Month 4+: Optimization loop

- Continue content gap analysis and creation

- Track share of voice trends

- Connect visibility to traffic and conversions

- Scale prompt tracking and content production as ROI becomes clear

This approach lets you prove value before committing to expensive tools or large content budgets.

The future of AI visibility tracking

AI search is still evolving fast. New engines launch, existing ones change their algorithms, and user behavior shifts. What works in 2026 might not work in 2027.

But the fundamentals will stay the same: consistent multi-engine tracking, content gap analysis, optimization loops, and connecting visibility to business outcomes. The tools will get better, the data will get richer, and the competition will get fiercer.

The brands that win are the ones that start tracking now, build optimization processes, and iterate continuously. AI visibility isn't a one-time project -- it's an ongoing discipline, just like SEO.

If you're serious about being visible in AI search, the time to start is today. Pick a tool, define your prompts, establish your baseline, and start closing gaps. The longer you wait, the further ahead your competitors get.