Key takeaways

- AI search engines evaluate brands through multiple trust layers: entity identity, topical authority, consensus signals, and technical health -- not just traditional SEO metrics.

- Backlinks still matter, but contextual relevance now outweighs raw volume or domain authority scores.

- EEAT (Experience, Expertise, Authoritativeness, Trustworthiness) has become the dominant framework for AI citation decisions, not just for Google's quality raters.

- Reputation signals from third-party sources -- Reddit, review platforms, YouTube -- directly influence what AI models say about your brand.

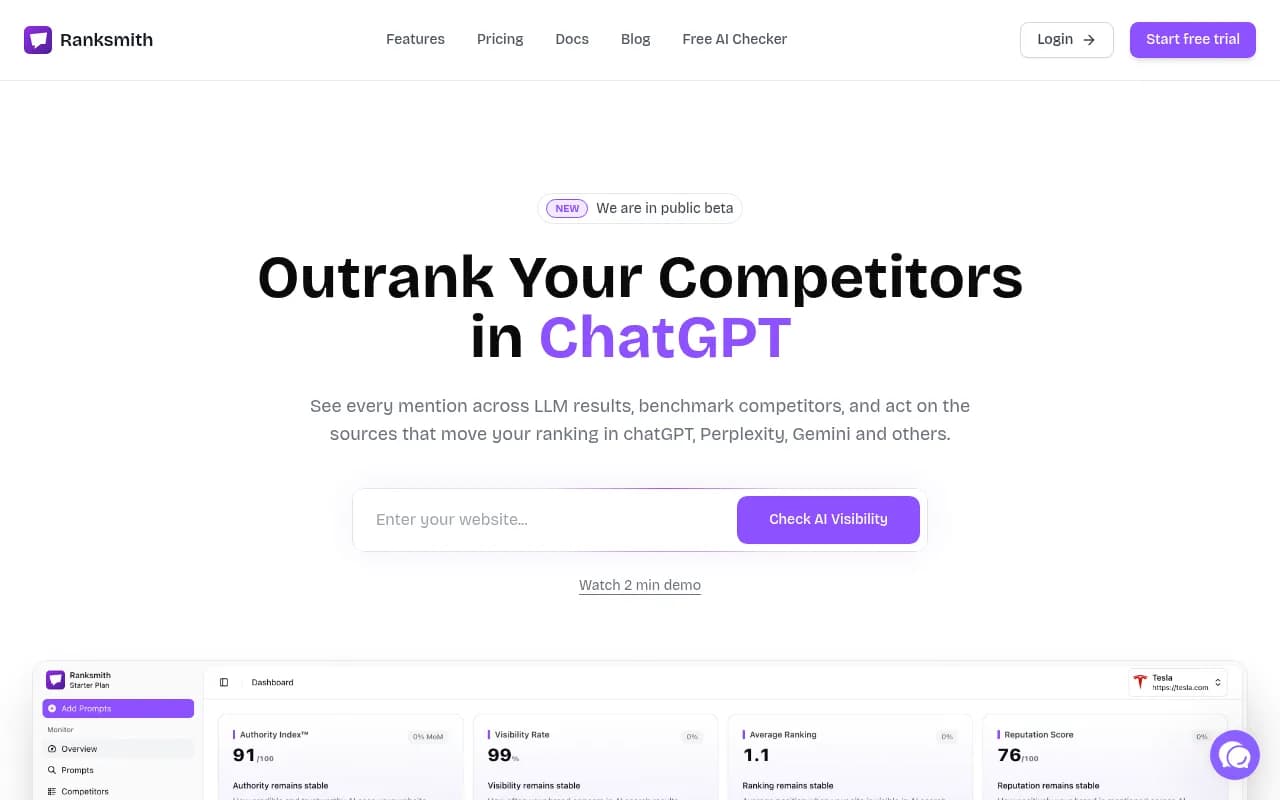

- Tracking your AI visibility and identifying content gaps is now a distinct discipline from traditional SEO, requiring dedicated tools and workflows.

There's a question most marketing teams are only starting to ask seriously: why does ChatGPT recommend my competitor instead of me?

It's not random. AI search engines -- ChatGPT, Perplexity, Gemini, Google AI Overviews, and the rest -- follow a surprisingly structured logic when deciding which brands to surface in their answers. Understanding that logic is the difference between being cited and being invisible.

This guide breaks down exactly how that trust evaluation works, what signals matter most in 2026, and what you can actually do about it.

How AI search engines build answers (and why it matters for brands)

Before getting into trust signals, it helps to understand what's actually happening when an AI model generates a recommendation.

These systems don't just retrieve the top-ranked web page and summarize it. They pull from multiple sources, weigh them against each other, and construct a synthesized answer. The brands that get cited are the ones the model has enough confidence in to include.

That confidence comes from a layered evaluation process. Authority Builders describes it well: AI systems treat brand authority as a "multi-layered signal" that combines brand mentions, editorial context, backlinks, reputation, and sentiment. Each layer adds to (or subtracts from) the model's confidence in your brand.

The practical implication: you can't optimize for one signal in isolation. A brand with great technical SEO but no third-party mentions will still get skipped. A brand with lots of press coverage but thin, low-utility content will still get skipped. The model needs the full picture.

The four core signals AI models use to evaluate brands

1. Entity recognition and identity consistency

The first thing an AI model does is try to establish who you are. This sounds basic, but it's where a surprising number of brands fall short.

Entity recognition means the model can identify your brand as a distinct, verifiable organization -- not just a website. This requires consistency: your brand name, description, location, and contact information should match across your website, Google Business Profile, LinkedIn, Wikipedia (if applicable), and any other platform where you exist.

Structured data helps enormously here. Organization schema markup on your homepage tells AI crawlers exactly who you are, what you do, and how to verify it. "sameAs" links connecting your site to your social profiles and knowledge graph entries give the model multiple corroborating sources to draw from.
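As a minimal sketch, the Python below assembles Organization markup as JSON-LD, which is the structured-data format Google recommends. Every name and URL in it (Example Co, the example.com addresses, the profile links) is a hypothetical placeholder. Substitute your own values and validate the output with a structured-data testing tool before deploying it.

```python
import json


def organization_jsonld(name, url, logo, description, same_as):
    """Build a schema.org Organization object and return it as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "description": description,
        # sameAs lists other profiles that corroborate this same entity
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Hypothetical brand details; replace with your real, consistent values
    snippet = organization_jsonld(
        name="Example Co",
        url="https://www.example.com",
        logo="https://www.example.com/logo.png",
        description="Example Co makes project-tracking software for small teams.",
        same_as=[
            "https://www.linkedin.com/company/example-co",
            "https://www.youtube.com/@exampleco",
        ],
    )
    # The markup goes inside a JSON-LD script tag on the homepage
    print('<script type="application/ld+json">')
    print(snippet)
    print("</script>")
```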

If your brand appears differently across platforms -- different name formats, inconsistent descriptions, conflicting addresses -- the model's confidence in your identity drops. It's not that it distrusts you; it just can't be sure you're who you say you are.
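You can partly automate a consistency check once you have copied your brand record from each platform. The sketch below is hypothetical: the platform names and records are placeholders, and the normalization is deliberately light. It ignores case and punctuation but still flags real variants such as "Example Co" versus "Example Company", which are exactly the differences worth reviewing by hand.

```python
import re
from collections import defaultdict


def normalize(value):
    """Lowercase, turn punctuation into spaces, and collapse whitespace."""
    value = re.sub(r"[^\w\s]", " ", value.lower())
    return " ".join(value.split())


def find_inconsistencies(profiles):
    """Return {field: {normalized_value: [platforms]}} for fields that disagree."""
    by_field = defaultdict(lambda: defaultdict(list))
    for platform, record in profiles.items():
        for field, value in record.items():
            by_field[field][normalize(value)].append(platform)
    # Keep only fields where platforms report more than one distinct value
    return {
        field: dict(values)
        for field, values in by_field.items()
        if len(values) > 1
    }


if __name__ == "__main__":
    # Hypothetical records collected from each platform
    profiles = {
        "website": {"name": "Example Co", "phone": "+1 555-0100"},
        "google_business_profile": {"name": "Example Co.", "phone": "+1 555 0100"},
        "linkedin": {"name": "Example Company", "phone": "+1 555-0100"},
    }
    for field, variants in find_inconsistencies(profiles).items():
        print(field, variants)
```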

2. Topical authority

Once a model knows who you are, it evaluates whether you actually know what you're talking about in the relevant domain.

Topical authority isn't about having the most content. It's about having the right content -- comprehensive, specific, and genuinely useful coverage of the topics your audience cares about. AI models evaluate whether your site answers the full range of questions a user might have in your space, not just the high-traffic head terms.

This is where traditional SEO thinking starts to diverge from AI search optimization. A site with 50 deeply researched articles covering a topic thoroughly will often outperform a site with 500 thin pages in AI citations. The model is looking for the source that can actually answer the question, not the source with the most pages indexed.

Content utility matters too. Markobrando's analysis of Google's AI citation patterns notes that AI systems specifically evaluate whether content directly answers the user's query, provides actionable information, and offers something beyond what's already in the model's training data.

3. Consensus signals

This is the trust signal most brands underestimate. AI models don't just evaluate what you say about yourself -- they look for what others say about you.

Consensus signals include:

- Backlinks from editorially relevant, credible sources (not just high-DA sites)

- Brand mentions in news coverage, industry publications, and research

- Reviews and ratings on third-party platforms

- Discussions on Reddit, Quora, and community forums

- YouTube content that references or reviews your brand

The Reddit point deserves emphasis. One SEO practitioner with eight years of experience noted on Reddit's r/DigitalMarketing that "in the AI era, reputation signals matter as much as rankings. If AI tools summarize your brand using Reddit threads and review pages, you want those to be positive." That's not a small thing -- Reddit is one of the most heavily cited sources in AI search responses, and the conversations happening there directly shape how models characterize your brand.

The key shift from traditional link-building: AI models are sophisticated enough to evaluate the context of a mention, not just its existence. A backlink from a relevant industry publication that discusses your methodology in depth carries more weight than ten links from generic directories. Contextual relevance has replaced volume as the primary metric.

4. EEAT signals

Google formalized Experience, Expertise, Authoritativeness, and Trustworthiness as its quality evaluation framework years ago. In 2026, EEAT has effectively become the universal standard that all major AI models apply when deciding what to cite.

Experience means demonstrating first-hand knowledge. This shows up in content that references real case studies, original data, specific client outcomes, and the kind of detail that only comes from actually doing the work. Generic overviews score poorly here.

Expertise means validating credentials. Author bios with real credentials, bylines from named experts, citations of original research, and content that demonstrates genuine depth of knowledge all contribute. Anonymous content from "the editorial team" is a red flag.
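One common way to expose a named author to machines is schema.org Article markup with a nested Person. The sketch below assumes that approach. The headline and author details are hypothetical, and the source does not say which of these properties any particular AI system reads.

```python
import json
from datetime import date


def article_jsonld(headline, author_name, job_title, author_url,
                   author_profiles, published, modified):
    """Build schema.org Article JSON-LD with a named, verifiable author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": job_title,
            # Author bio page on your own site
            "url": author_url,
            # External profiles that corroborate the author's credentials
            "sameAs": list(author_profiles),
        },
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    print(article_jsonld(
        headline="How we cut onboarding time for 40 client teams",
        author_name="Jane Doe",
        job_title="Head of Customer Success",
        author_url="https://www.example.com/authors/jane-doe",
        author_profiles=["https://www.linkedin.com/in/jane-doe-example"],
        published=date(2026, 1, 15),
        modified=date(2026, 2, 1),
    ))
```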

Authoritativeness means industry recognition. This is where third-party signals (press coverage, awards, industry associations, speaking engagements) feed back into the model's evaluation. It's not enough to claim authority -- you need external sources confirming it.

Trustworthiness covers the technical and transparency layer: HTTPS, clear privacy policies, accessible contact information, accurate and up-to-date content, and consistent behavior across platforms.

Technical signals that affect AI crawlability

Trust signals aren't purely about content and reputation. The technical health of your site directly affects whether AI crawlers can access, read, and understand your content.

A few things that matter specifically for AI search (beyond standard technical SEO):

Structured data markup: Schema.org Organization, Article, FAQPage, HowTo, and Product schemas all help AI models parse your content more accurately. The more structured your data, the less the model has to infer.

Page speed and accessibility: Slow pages or pages that require JavaScript rendering to display content can be partially or fully missed by AI crawlers. This is especially relevant for single-page applications.
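A quick test is to look at the raw HTML a non-rendering crawler would receive and check whether your key claims appear in it. The sketch below works on an HTML string you have already fetched (for example with curl). As a simplification, it assumes the crawler does not execute JavaScript. The function name `visible_without_js` is hypothetical.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect text nodes from raw HTML, skipping <script> and <style> contents."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_without_js(raw_html, key_phrases):
    """Map each phrase to whether it appears in the un-rendered HTML text."""
    parser = TextExtractor()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return {phrase: phrase.lower() in text for phrase in key_phrases}


if __name__ == "__main__":
    # A single-page-app shell: the content only exists inside JavaScript
    spa = '<div id="root"></div><script>app.render("Pricing starts at $9")</script>'
    # A server-rendered page: the content is in the HTML itself
    ssr = "<h1>Pricing</h1><p>Pricing starts at $9 per seat.</p>"
    print(visible_without_js(spa, ["Pricing starts at"]))
    print(visible_without_js(ssr, ["Pricing starts at"]))
```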

Crawl accessibility: Some brands inadvertently block AI crawlers through their robots.txt or rate-limiting configurations. Checking your crawler logs to see which AI bots are visiting your site -- and whether they're encountering errors -- is something most brands have never done.
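The sketch below covers both checks using only the Python standard library. The bot list is an illustrative assumption, so verify the tokens against each vendor's current crawler documentation. The log parser assumes the common "combined" access-log format. Python's robots.txt parser also resolves rules by first match, which may differ from how a particular crawler handles edge cases.

```python
import re
from collections import Counter
from urllib.robotparser import RobotFileParser

# Illustrative AI crawler tokens (assumption: check vendor docs for current names).
# Google-Extended is a robots.txt control token only, so it never appears in logs.
AI_BOTS = ["GPTBot", "OAI-SearchBot", "PerplexityBot", "ClaudeBot",
           "Google-Extended", "CCBot"]

# Request line, status, size, referrer, user agent (combined log format)
LOG_RE = re.compile(
    r'"[A-Z]+ (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def blocked_bots(robots_txt, url, bots=AI_BOTS):
    """Return the bots that this robots.txt content disallows from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in bots if not parser.can_fetch(bot, url)]


def bot_hits(log_lines, bots=AI_BOTS):
    """Count (bot, HTTP status) pairs so 4xx/5xx responses to AI crawlers stand out."""
    counts = Counter()
    for line in log_lines:
        match = LOG_RE.search(line)
        if not match:
            continue
        agent = match.group("agent").lower()
        for bot in bots:
            if bot.lower() in agent:
                counts[(bot, match.group("status"))] += 1
    return counts


if __name__ == "__main__":
    robots = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    print(blocked_bots(robots, "https://www.example.com/pricing"))
    log = ['203.0.113.5 - - [10/Jan/2026:12:00:00 +0000] "GET /pricing HTTP/1.1" '
           '403 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"']
    print(bot_hits(log))
```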

Content freshness: AI models weight recency for time-sensitive topics. Outdated content (especially content with specific dates or statistics that have since changed) can actually hurt your credibility for those queries.
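Finding stale pages is mostly a date comparison. This sketch assumes you can export each URL with its last-updated date, for example from your CMS or from sitemap `lastmod` values. The one-year threshold is an arbitrary assumption, and time-sensitive topics may need a shorter one.

```python
from datetime import date, timedelta


def stale_pages(pages, today, max_age_days=365):
    """Return URLs last updated more than max_age_days before today, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [(updated, url) for url, updated in pages.items() if updated < cutoff]
    # Sorting the (date, url) tuples puts the oldest pages at the front
    return [url for updated, url in sorted(stale)]


if __name__ == "__main__":
    # Hypothetical export: URL -> last-updated date
    pages = {
        "/guides/pricing-benchmarks": date(2024, 3, 1),
        "/blog/product-update": date(2025, 12, 1),
        "/guides/industry-stats": date(2023, 6, 1),
    }
    print(stale_pages(pages, today=date(2026, 1, 15)))
```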

Why traditional SEO metrics don't map cleanly to AI visibility

Here's the uncomfortable truth for anyone who's been doing SEO for a while: your Domain Authority score, your keyword rankings, and your organic traffic numbers tell you almost nothing about your AI search visibility.

A brand can rank #1 for a keyword on Google and still be completely absent from ChatGPT's recommendations for the same query. The evaluation frameworks are different. AI models aren't looking at your position in the SERP -- they're evaluating whether you're a credible, authoritative source on the topic.

This means brands need to measure AI visibility separately. That includes tracking which prompts your brand appears in, which AI models cite you, what those citations say, and how your visibility compares to competitors. Tools like Promptwatch are built specifically for this -- tracking brand mentions across ChatGPT, Perplexity, Gemini, Claude, and others, while also identifying the content gaps that explain why competitors are getting cited and you're not.
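At its core, this kind of gap analysis is a set comparison over tracked prompts. In the hypothetical sketch below, `results` maps each prompt to the brands an AI answer cited, however you collected that data. It is not the data model of Promptwatch or any other specific tool.

```python
def content_gaps(results, brand, competitors):
    """Return {prompt: [competitors cited]} for prompts where `brand` is not cited."""
    gaps = {}
    for prompt, cited in results.items():
        if brand in cited:
            continue
        rivals = sorted(set(cited) & set(competitors))
        # Only prompts where a competitor won the citation count as gaps
        if rivals:
            gaps[prompt] = rivals
    return gaps


if __name__ == "__main__":
    # Hypothetical tracking data: prompt -> brands cited in the AI answer
    results = {
        "best crm for startups": {"RivalA", "RivalB"},
        "crm with email sync": {"ExampleCo", "RivalA"},
        "free crm tools": set(),
    }
    print(content_gaps(results, "ExampleCo", ["RivalA", "RivalB"]))
```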

The trust signal audit: where to start

Rather than trying to fix everything at once, it helps to audit your current position across the four signal categories, plus technical health, and prioritize the biggest gaps.

| Signal category | What to audit | Common gaps |
|---|---|---|
| Entity identity | Schema markup, NAP consistency, social profiles | Missing Organization schema, inconsistent brand name formats |
| Topical authority | Content depth, topic coverage, content utility | Thin pages, missing subtopics, no original data |
| Consensus signals | Backlink quality, brand mentions, review profiles, Reddit presence | Generic link profiles, no third-party coverage, negative or absent reviews |
| EEAT | Author credentials, original research, transparency signals | Anonymous content, no author bios, outdated statistics |
| Technical health | Crawl accessibility, structured data, page speed | Blocked AI crawlers, missing schema, slow render times |

Start with entity identity -- it's the foundation everything else builds on. If AI models can't reliably identify who you are, the quality of your content doesn't matter.

Building consensus signals: the part most brands skip

Most brands focus on their own website when thinking about AI visibility. That's understandable but incomplete.

The sources AI models cite most heavily are often not your website. They're the Reddit threads discussing your product, the review aggregators comparing you to competitors, the YouTube videos where customers share their experience, and the industry publications that have covered your space.

This means your AI visibility strategy needs to extend beyond your own domain:

- Actively monitor and respond to brand discussions on Reddit and relevant forums

- Build a review acquisition strategy across the platforms AI models cite most (G2, Trustpilot, Google Reviews, depending on your category)

- Pursue editorial coverage in publications that AI models treat as authoritative sources in your space

- Create or encourage YouTube content that demonstrates your product's value

The goal isn't to game these channels -- it's to ensure that when an AI model goes looking for third-party perspectives on your brand, it finds accurate, positive, and substantive content.

Tracking your progress

One of the harder parts of AI search optimization is knowing whether your efforts are working. Unlike traditional SEO, where rank tracking tools give you daily position data, AI visibility is more fluid. The same prompt can produce different answers across different AI models, different regions, and different user contexts.

Dedicated AI visibility platforms have emerged to address this.

The key things to track: which prompts your brand appears in, your share of voice versus competitors, what AI models actually say about you when they do cite you, and whether your visibility is improving over time as you publish new content.
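Share of voice has no single standard definition. The sketch below assumes one simple version: each brand's fraction of all tracked-brand mentions across a set of AI responses, with a brand counted at most once per response. The brand names and responses are hypothetical. Plain substring matching will also miss abbreviations and can misfire on short brand names.

```python
from collections import Counter


def share_of_voice(responses, brands):
    """Each brand's share of all tracked-brand mentions (at most one per response)."""
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            # Naive case-insensitive substring match
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


if __name__ == "__main__":
    # Hypothetical answers collected for the same prompt set
    responses = [
        "Try RivalA or ExampleCo.",
        "RivalA is popular.",
        "ExampleCo and RivalB both work.",
        "No tools fit.",
    ]
    print(share_of_voice(responses, ["ExampleCo", "RivalA", "RivalB"]))
```

Running the same calculation per model and per week gives the trend line the paragraph above describes.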

Promptwatch goes a step further by connecting visibility data to actual traffic -- using a code snippet, Google Search Console integration, or server log analysis to show whether AI citations are translating into visits and revenue. That attribution layer is what separates optimization from monitoring.
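You can roughly approximate the server-log route by counting requests whose Referer header points at an AI assistant. This is a sketch, not how Promptwatch or any other product implements attribution. The hostname list is an assumption to check against your own analytics, and because some AI apps send no referrer at all, the counts are a lower bound.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames for AI assistants; confirm against your own traffic
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}

# Request line, status, size, then the Referer field (combined log format)
LOG_RE = re.compile(
    r'"[A-Z]+ (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "(?P<referer>[^"]*)"'
)


def ai_referrals(log_lines):
    """Count visits per (AI assistant, landing path) based on the Referer host."""
    counts = Counter()
    for line in log_lines:
        match = LOG_RE.search(line)
        if not match:
            continue
        # "-" (no referrer) parses to a hostname of None
        host = urlparse(match.group("referer")).hostname or ""
        source = AI_REFERRERS.get(host)
        if source:
            counts[(source, match.group("path"))] += 1
    return counts


if __name__ == "__main__":
    log = [
        '203.0.113.9 - - [10/Jan/2026:09:00:00 +0000] "GET /pricing HTTP/1.1" '
        '200 4100 "https://chatgpt.com/" "Mozilla/5.0"',
    ]
    print(ai_referrals(log))
```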

What "safe to recommend" actually means to an AI model

There's a useful frame from The HOTH's analysis of AI trust signals: AI models are looking for brands that are "safe to recommend." That means the model is confident it won't embarrass itself by citing you.

A brand is safe to recommend when:

- Its identity is verifiable and consistent

- Its content is accurate, current, and genuinely useful

- Third parties (credible ones) vouch for its expertise

- Its technical presence is clean and accessible

- Its reputation signals are positive or at least neutral

The inverse is also true. Brands with inconsistent information, thin content, no third-party validation, or negative sentiment in review platforms and forums are unsafe to recommend. The model will skip them, even if their traditional SEO metrics look strong.

This framing is useful because it shifts the question from "how do I rank in AI search?" to "how do I become the kind of brand an AI model would be confident recommending?" The answer to the second question is more durable and harder to game.

The practical next step

If you take nothing else from this guide, take this: AI search visibility is now a measurable, trackable discipline -- not a vague aspiration.

Start by auditing your entity signals (schema, consistency, third-party profiles). Then evaluate your content against topical authority standards. Then look at your consensus signals and identify where you're absent from the conversations AI models are drawing from.

The brands winning in AI search in 2026 aren't necessarily the ones with the biggest budgets or the most content. They're the ones that understood the trust framework early and built their presence around it systematically.