Key Takeaways

- Visibility counts (how often your brand is mentioned) tell you nothing about sentiment, context, or competitive positioning -- the metrics that actually drive trust and conversions

- Sentiment analysis in AI responses reveals whether mentions are positive, negative, or neutral, helping you identify reputation risks before they spread

- Context tracking shows where your brand appears in AI answers (first citation vs buried in a list), which competitors are mentioned alongside you, and what topics trigger mentions

- Tools like Profound, Peec AI, and Promptwatch offer sentiment scoring, competitive heatmaps, and citation context analysis -- features missing from basic monitoring dashboards

- A complete sentiment tracking workflow includes prompt libraries organized by buyer journey stage, weekly sentiment trend reports, and alerts for negative shifts or competitor displacement

Why visibility counts are not enough

You run a query through an AI visibility tool. It reports: "Your brand was mentioned 47 times this week." You celebrate. Then traffic drops, sales stall, and customer support starts fielding questions about a competitor you've never heard of.

What happened? The visibility count told you your brand appeared in AI responses. It didn't tell you that 32 of those mentions were buried at the bottom of long lists, 8 were framed negatively ("Brand X is expensive compared to..."), and 7 appeared in answers where a competitor was positioned as the superior alternative.
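
If you want to see that breakdown in your own data, here is a minimal Python sketch that tallies mentions by how they were framed instead of reporting one total. The `Mention` record and its `framing` labels are hypothetical, not any tool's export format, and the counts simply mirror the example above.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Mention:
    prompt: str
    framing: str  # hypothetical labels: "buried", "negative", "competitor_preferred"


def framing_breakdown(mentions):
    """Count mentions per framing label instead of reporting one total."""
    return Counter(m.framing for m in mentions)


# Illustrative data matching the example: 32 + 8 + 7 = 47 mentions.
mentions = (
    [Mention("list of tools", "buried")] * 32
    + [Mention("pricing comparison", "negative")] * 8
    + [Mention("best alternatives", "competitor_preferred")] * 7
)

breakdown = framing_breakdown(mentions)
print(len(mentions), dict(breakdown))
```

The total is still 47, but the breakdown shows that none of those mentions was a clear win.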

Visibility is binary: you're either mentioned or you're not. Sentiment is dimensional. It captures tone, context, competitive framing, and the likelihood that a mention will actually drive a positive outcome. A single negative mention in a high-intent prompt ("best alternatives to [your brand]") can do more damage than 50 neutral citations in low-stakes queries.

Traditional SEO taught us to obsess over rankings. AI search requires a different lens. When ChatGPT or Perplexity synthesizes an answer, it's not returning a ranked list of URLs -- it's constructing a narrative. Your job is to understand where you fit in that narrative, how you're framed relative to competitors, and whether the sentiment around your brand is helping or hurting.

What sentiment tracking actually measures

Sentiment analysis in AI responses breaks down into three core dimensions:

Tone classification

Is the mention positive, negative, or neutral? Tools like Peec AI and Profound assign sentiment scores to each mention. A positive mention might look like: "Brand X is known for excellent customer support and fast onboarding." A negative mention: "Brand X has been criticized for high pricing and limited integrations." Neutral mentions are factual but lack evaluative language.

Tone matters because AI models don't just cite sources -- they editorialize. A neutral mention in a list of alternatives is fine. A negative framing in a high-intent query ("should I use Brand X or Brand Y?") is a conversion killer.

Competitive positioning

Where does your brand appear relative to competitors? Sentiment isn't just about you -- it's about how you're positioned in a competitive landscape. Tools with competitive heatmaps (like Promptwatch and Profound) show you which competitors are mentioned alongside your brand, how often, and in what context.

If your brand consistently appears in answers that frame a competitor as the superior choice, you have a positioning problem. If you're mentioned first in a list of alternatives, you have an advantage. If you're not mentioned at all in high-value prompts where competitors dominate, you have a visibility gap that no amount of neutral citations will fix.

Citation context

Where in the answer does your brand appear? First citation, middle of a list, or buried at the end? What topic or question triggered the mention? What other brands or sources are cited in the same response?

Context determines impact. A first-citation mention in a ChatGPT answer to "best project management tools for remote teams" carries more weight than a fifth-place mention in a generic "list 10 project management tools" query. Tools like ZipTie and Peec AI surface this context, showing you the full AI-generated answer, the prompt that triggered it, and where your brand fits in the narrative.
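
One rough way to express "context determines impact" is a weighted score. The sketch below is an assumption-heavy illustration: the `Citation` record, the tone values, the intent weights, and the 1/position discount are all made up for this example and are not how any of the tools named here compute their scores.

```python
from dataclasses import dataclass

# Assumed weights; tune them against your own conversion data.
TONE_VALUE = {"positive": 1.0, "neutral": 0.0, "negative": -1.0}
INTENT_WEIGHT = {"high": 3.0, "low": 1.0}


@dataclass(frozen=True)
class Citation:
    tone: str      # "positive", "neutral", or "negative"
    position: int  # 1 = first brand named in the answer
    intent: str    # "high" or "low"


def impact(c: Citation) -> float:
    """Tone scaled by prompt intent and discounted by position in the answer."""
    position_weight = 1.0 / c.position  # first citation counts most
    return TONE_VALUE[c.tone] * INTENT_WEIGHT[c.intent] * position_weight


first_in_high_intent = Citation("positive", position=1, intent="high")
fifth_in_generic_list = Citation("positive", position=5, intent="low")

print(impact(first_in_high_intent))   # 3.0
print(impact(fifth_in_generic_list))  # 0.2
```

Under these assumed weights, the first-citation mention in a high-intent prompt is worth fifteen times the fifth-place mention in a generic list, and a negative mention in the same high-intent slot would score -3.0.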

Tools that track sentiment (not just mentions)

Most AI visibility tools are glorified mention counters. They tell you your brand appeared X times across Y models. They don't tell you whether those mentions helped or hurt. Here are the tools that go deeper:

Sentiment scoring and trend tracking

Peec AI assigns sentiment scores to each mention and tracks weekly sentiment shifts. You see a clear score (positive, negative, neutral) and a trend line that shows whether sentiment is improving or degrading over time. This is critical for spotting reputation risks early -- a sudden drop in sentiment score might indicate a viral complaint, a competitor's smear campaign, or a product issue that's leaking into AI training data.

Profound offers similar sentiment analysis with the added benefit of persona-based tracking. You can see how sentiment varies across different user personas (e.g., enterprise buyers vs SMB users) and adjust your content strategy accordingly. If enterprise buyers see more negative mentions than SMBs, you know where to focus your reputation management efforts.

Promptwatch goes beyond sentiment scoring to show you the actual content gaps that drive negative or neutral mentions. Its Answer Gap Analysis reveals which prompts competitors are winning and why -- often because they have content that directly addresses a question your site doesn't answer. You see the missing topics, the angles competitors are covering, and the specific prompts where sentiment is weakest. Then the platform's AI writing agent helps you create content engineered to shift sentiment in your favor.

Competitive context and heatmaps

Profound and Promptwatch both offer competitive heatmaps that show which brands are mentioned alongside yours, how often, and in what context. You can see at a glance whether you're being framed as a leader, a challenger, or an also-ran. If your brand consistently appears in answers that position a competitor as the better choice, the heatmap makes it obvious.

Otterly.AI is a budget-friendly option that tracks competitive mentions across major AI surfaces (ChatGPT, Perplexity, Claude, Gemini). It doesn't offer sentiment scoring, but it does show you which competitors are winning for specific prompts -- a useful proxy for understanding competitive positioning.

Citation and source analysis

Promptwatch surfaces the exact pages, Reddit threads, YouTube videos, and domains that AI models cite when mentioning your brand. This is critical for understanding why sentiment is what it is. If negative mentions are driven by a single Reddit thread or a critical blog post, you know where to focus your reputation management efforts. If positive mentions are tied to a specific case study or testimonial page, you know what content to amplify.

ZipTie provides deep citation analysis with full screenshots of AI-generated answers. You see the entire response, the sources cited, and where your brand fits in the narrative. This level of detail is essential for auditing sentiment and identifying the root causes of negative or neutral framing.

Reddit and YouTube insights

AI models increasingly pull from Reddit discussions and YouTube videos when constructing answers. If your brand is being discussed negatively on Reddit, that sentiment will leak into AI responses. Promptwatch tracks Reddit and YouTube mentions, showing you which discussions are influencing AI models and what sentiment they carry. Most competing tools ignore this channel entirely, leaving brands blind to a major source of reputation risk.

Methods for tracking sentiment at scale

Tools are only half the equation. You need a process for turning sentiment data into action. Here's a workflow that works:

Build a prompt library organized by sentiment risk

Not all prompts carry equal sentiment risk. High-intent queries ("should I use Brand X or Brand Y?", "Brand X alternatives", "is Brand X worth it?") are more likely to surface negative or competitive framing. Low-intent queries ("what is Brand X?", "Brand X features") tend to be neutral.

Organize your prompt library by risk level. Track sentiment for high-risk prompts weekly. Track low-risk prompts monthly. This lets you focus your attention where it matters most.
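
A prompt library like this can live in a spreadsheet, but a small script makes the cadence explicit. The following Python sketch assumes a hypothetical `TrackedPrompt` record and treats "weekly" as 7 days and "monthly" as 30 days; the prompts and dates are illustrative.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Review cadence per risk level, following the weekly/monthly split above.
CADENCE_DAYS = {"high": 7, "low": 30}


@dataclass
class TrackedPrompt:
    text: str
    risk: str  # "high" or "low"
    last_checked: date


def due_for_check(library, today):
    """Return prompts whose review interval has elapsed."""
    return [
        p for p in library
        if today - p.last_checked >= timedelta(days=CADENCE_DAYS[p.risk])
    ]


library = [
    TrackedPrompt("Brand X alternatives", "high", date(2025, 1, 1)),
    TrackedPrompt("is Brand X worth it?", "high", date(2025, 1, 6)),
    TrackedPrompt("what is Brand X?", "low", date(2025, 1, 1)),
]

for prompt in due_for_check(library, today=date(2025, 1, 8)):
    print(prompt.text)  # only "Brand X alternatives" is due on this date
```

The same structure extends naturally if you later add a buyer journey stage field to each prompt.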

Set up sentiment alerts

Most sentiment tracking tools offer alerts when sentiment drops below a threshold or when a new negative mention appears. Configure these alerts to notify your team immediately. A sudden sentiment shift often indicates a brewing reputation crisis -- catching it early gives you time to respond before it spreads.
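
If your tool only exports scores and you need to build the alert yourself, the logic is simple. This is a minimal sketch, assuming weekly scores normalized to a 0 to 1 range; the function name and both thresholds are assumptions for illustration, not values from any vendor.

```python
def sentiment_alerts(weekly_scores, floor=0.5, max_drop=0.15):
    """Flag weeks where the score is below `floor` or fell by more than `max_drop`.

    `weekly_scores` is a list of (week_label, score) pairs with scores in [0, 1].
    The first week is only a baseline and is never flagged itself.
    Both thresholds are assumptions, not values taken from any tool.
    """
    alerts = []
    for (_, prev), (week, score) in zip(weekly_scores, weekly_scores[1:]):
        if score < floor:
            alerts.append((week, "below floor"))
        elif prev - score > max_drop:
            alerts.append((week, "sharp drop"))
    return alerts


history = [("W1", 0.80), ("W2", 0.78), ("W3", 0.60), ("W4", 0.45)]
print(sentiment_alerts(history))
# [('W3', 'sharp drop'), ('W4', 'below floor')]
```

Checking both an absolute floor and a week-over-week drop catches slow erosion as well as sudden shifts.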

Track sentiment by buyer journey stage

Sentiment matters most at high-intent stages of the buyer journey. A negative mention in an awareness-stage query ("what is project management software?") is less damaging than a negative mention in a decision-stage query ("Asana vs Monday.com"). Segment your sentiment tracking by journey stage and prioritize fixes where the impact is highest.

Compare sentiment to traffic and conversions

Sentiment is a leading indicator. Traffic and conversions are lagging indicators. If sentiment drops but traffic holds steady, you have a grace period to fix the issue before it hits your bottom line. If sentiment drops and traffic follows, you're already in trouble. Tools like Promptwatch let you connect sentiment data to actual traffic attribution (via a code snippet, a Google Search Console integration, or server log analysis), closing the loop between AI visibility and revenue.
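
The leading-versus-lagging logic can be written as a small decision rule. The Python sketch below is illustrative only: the `diagnose` function, its labels, and the 5% tolerance for "holding steady" are assumptions, and the inputs are whatever period-over-period changes you export from your own tools.

```python
def diagnose(sentiment_change, traffic_change, tolerance=0.05):
    """Classify a period from relative changes in sentiment and AI-referred traffic.

    Inputs are fractional changes versus the previous period (-0.10 = down 10%).
    The 5% tolerance for "holding steady" is an assumed value.
    """
    sentiment_down = sentiment_change < -tolerance
    traffic_down = traffic_change < -tolerance
    if sentiment_down and traffic_down:
        return "already impacting traffic"
    if sentiment_down:
        return "grace period: fix before traffic follows"
    if traffic_down:
        return "traffic down, sentiment steady: look for another cause"
    return "stable"


print(diagnose(-0.12, 0.01))   # grace period: fix before traffic follows
print(diagnose(-0.12, -0.20))  # already impacting traffic
```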

Run competitive sentiment audits quarterly

Once per quarter, run a full competitive sentiment audit. Compare your sentiment scores to competitors across all tracked prompts. Identify patterns: Are competitors consistently framed more positively? Are there specific topics or questions where your sentiment lags? Use this data to guide content strategy, product messaging, and reputation management efforts.
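
For the audit itself, the core calculation is each brand's share of positive mentions and your gap to the best-scoring competitor. Here is a minimal sketch with made-up data; the function names and the (brand, tone) input shape are assumptions, not an export format from any tool.

```python
from collections import defaultdict


def positive_share(mentions):
    """Map each brand to the fraction of its mentions labeled positive.

    `mentions` is an iterable of (brand, tone) pairs.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for brand, tone in mentions:
        totals[brand] += 1
        if tone == "positive":
            positives[brand] += 1
    return {brand: positives[brand] / totals[brand] for brand in totals}


def gap_to_leader(shares, own_brand):
    """Percentage-point gap between our brand and the best-scoring competitor."""
    best_rival = max(v for b, v in shares.items() if b != own_brand)
    return round((shares[own_brand] - best_rival) * 100, 1)


# Illustrative audit: 20 mentions per brand, made-up tone labels.
audit = (
    [("Brand X", "positive")] * 14 + [("Brand X", "negative")] * 6
    + [("Brand Y", "positive")] * 17 + [("Brand Y", "neutral")] * 3
)
shares = positive_share(audit)
print(shares)                            # {'Brand X': 0.7, 'Brand Y': 0.85}
print(gap_to_leader(shares, "Brand X"))  # -15.0
```

A 70% positive share looks healthy in isolation; the negative gap is what tells you a competitor is being framed more favorably.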

Common sentiment tracking mistakes

Here's what doesn't work:

Tracking too many prompts. More prompts mean more noise. Focus on high-value, high-intent prompts where sentiment actually drives outcomes; a list of 500 generic prompts will bury the signal.

Ignoring context. A negative mention in a low-traffic prompt is not the same as a negative mention in a high-traffic, high-intent query. Context determines impact. Track where mentions appear, not just whether they're positive or negative.

Treating sentiment as static. Sentiment shifts over time. A positive mention today can turn negative tomorrow if a competitor publishes better content or a customer complaint goes viral. Track trends, not snapshots.

Focusing only on your brand. Sentiment is relative. If your sentiment score is 70% positive but competitors are at 85%, you're losing. Track competitive sentiment alongside your own.

Ignoring Reddit and YouTube. AI models pull heavily from these sources. If you're not tracking sentiment on Reddit and YouTube, you're missing a major driver of AI-generated opinions.

Sentiment tracking comparison table

| Tool | Sentiment scoring | Competitive heatmaps | Citation context | Reddit/YouTube tracking | Starting price |

|---|---|---|---|---|---|

| Promptwatch | Yes | Yes | Yes | Yes | $99/mo |

| Profound | Yes | Yes | Yes | No | $199/mo |

| Peec AI | Yes | No | Yes | No | $79/mo |

| ZipTie | No | Yes | Yes | No | $149/mo |

| Otterly.AI | No | Yes | No | No | $29/mo |

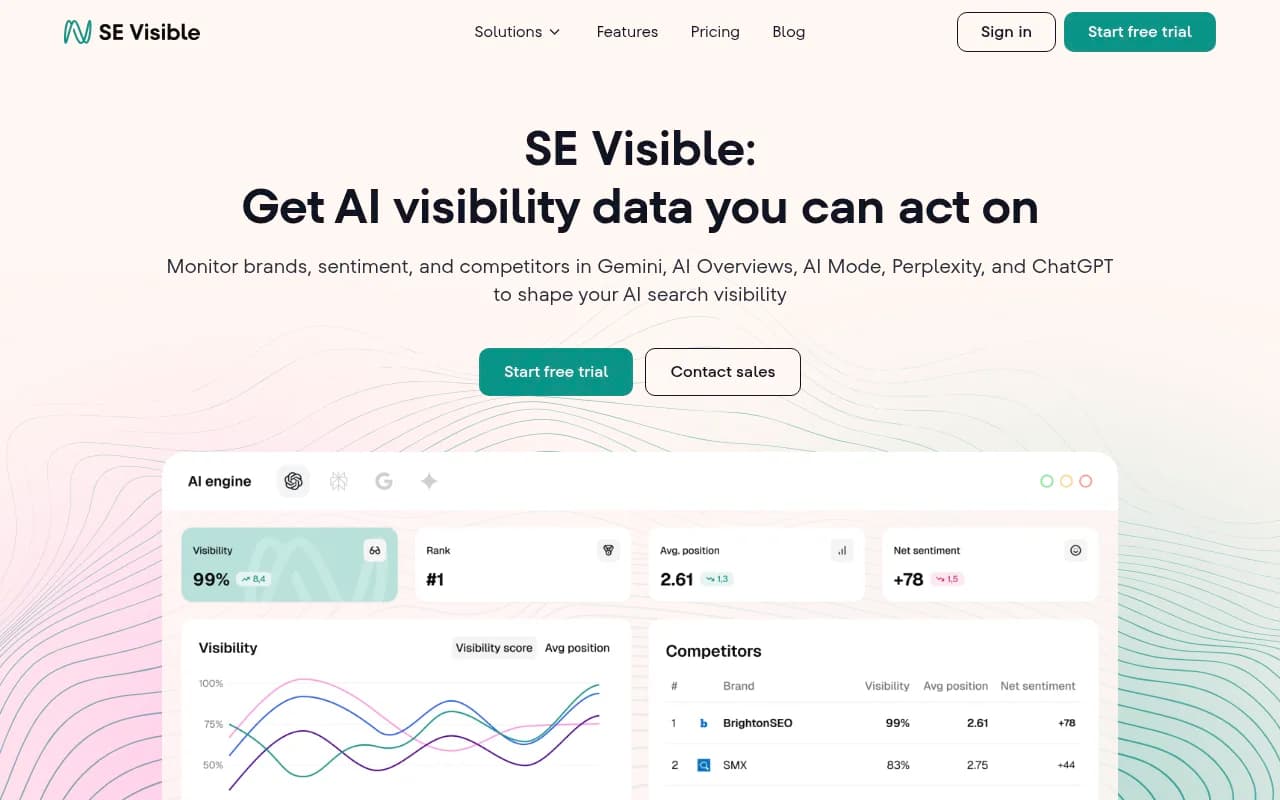

| SE Visible | Yes | No | No | No | $49/mo |

What to do when sentiment turns negative

Negative sentiment in AI responses is fixable, but it requires a systematic approach:

Identify the source. Use citation analysis to find the specific pages, Reddit threads, or YouTube videos driving negative mentions. Most negative sentiment traces back to a small number of sources.

Create counter-content. Publish content that directly addresses the negative framing. If AI models are citing a complaint about pricing, publish a detailed pricing guide with ROI calculators and customer testimonials. If competitors are framed as superior alternatives, publish comparison pages that highlight your differentiators.

Optimize for the prompts that matter. Use tools like Promptwatch to identify the specific prompts where negative sentiment is highest. Then create content engineered to rank for those prompts. The platform's AI writing agent can generate articles, listicles, and comparisons grounded in real citation data, prompt volumes, and competitor analysis -- content designed to shift sentiment in your favor.

Engage on Reddit and YouTube. If negative sentiment is driven by Reddit discussions or YouTube videos, engage directly. Respond to complaints, share your side of the story, and provide value. Because AI models draw on these sources, constructive engagement there can, over time, change how they frame your brand.

Monitor the results. Track sentiment weekly after publishing counter-content. You should see a shift within 2-4 weeks as AI models re-crawl your site and incorporate new content into their responses. If sentiment doesn't improve, the content isn't addressing the root cause -- dig deeper and try again.

The future of sentiment tracking in AI search

Sentiment tracking is still early. Most tools are playing catch-up, adding sentiment features as an afterthought. The next generation of tools will go deeper:

Emotion detection. Beyond positive/negative/neutral, tools will classify emotional tone: frustration, excitement, skepticism, trust. This gives you a richer understanding of how users feel about your brand in AI-generated answers.

Sentiment attribution. Which specific pieces of content or sources are driving sentiment? Some tools already surface the sources AI models cite; future tools will tie each shift in sentiment to the individual pages, Reddit threads, or YouTube videos behind it, making it easier to fix the root cause.

Predictive sentiment modeling. AI models will predict how sentiment is likely to shift based on current trends, competitor activity, and content gaps. You'll get early warnings before sentiment turns negative, giving you time to act.

Real-time sentiment alerts. Today's tools update sentiment daily or weekly. Future tools will track sentiment in real-time, alerting you the moment a negative mention appears in a high-value prompt.

For now, the tools that matter are the ones that go beyond mention counts to track tone, context, and competitive positioning. Start with Promptwatch, Profound, or Peec AI, build a prompt library organized by sentiment risk, and track trends weekly. Sentiment is the metric that actually predicts outcomes -- visibility is just noise.