Key takeaways

- Most GEO tools in 2026 are monitoring dashboards — they show you where you're invisible but don't help you fix it

- The switch to a full optimization platform involves three stages: auditing what you have, migrating your prompt library, and establishing a content feedback loop

- The biggest switching cost isn't money. It's the time it takes to rebuild your prompt set and re-establish baseline data, which means running your old and new tools in parallel for 2-4 weeks

- Before switching, confirm your new platform covers content generation, answer gap analysis, and traffic attribution — not just citation tracking

- Tools like Promptwatch close the loop between visibility data and content action, which is what separates optimization platforms from trackers

If you've been using a GEO monitoring tool for a few months, you've probably hit the same wall. The dashboard looks great. You can see your brand mention rate, your share of voice across ChatGPT and Perplexity, maybe a competitor heatmap. And then... you're stuck. The tool told you that you're invisible for 60% of your target prompts. It just didn't tell you what to do about it.

That's the monitoring trap. And in 2026, with Gartner projecting a 25% drop in traditional search volume as users migrate to AI answer engines, staying stuck in it has real consequences.

This guide is for teams who've outgrown their current GEO tool and want to move to something that actually helps them act on the data.

Why monitoring-only tools aren't enough anymore

When GEO tools first emerged, monitoring was the right starting point. Nobody knew what AI search visibility even looked like, so just seeing whether ChatGPT mentioned your brand felt like progress.

That phase is over.

The teams winning in AI search right now aren't just watching their visibility scores. They're running a continuous loop: find the prompts where competitors appear and they don't, create content specifically designed to get cited, and then track whether that content moves the needle. Monitoring tools support step three at best. They don't help with steps one or two at all.

The research backs this up. According to GEO analysis from Stackmatix, citation frequency accounts for roughly 35% of AI answer inclusions — and brands actively optimizing for AI search see citation rates 2-3x higher than those relying on passive monitoring. Watching your citation rate without acting on it is like watching your organic traffic drop without touching your content.

The core problem with monitoring-only platforms is structural. They're built around reporting, not action. Their data models are designed to answer "what's happening?" — not "what should I do next?" Switching to an optimization platform means switching to a tool built around a fundamentally different question.

What a full optimization platform actually does differently

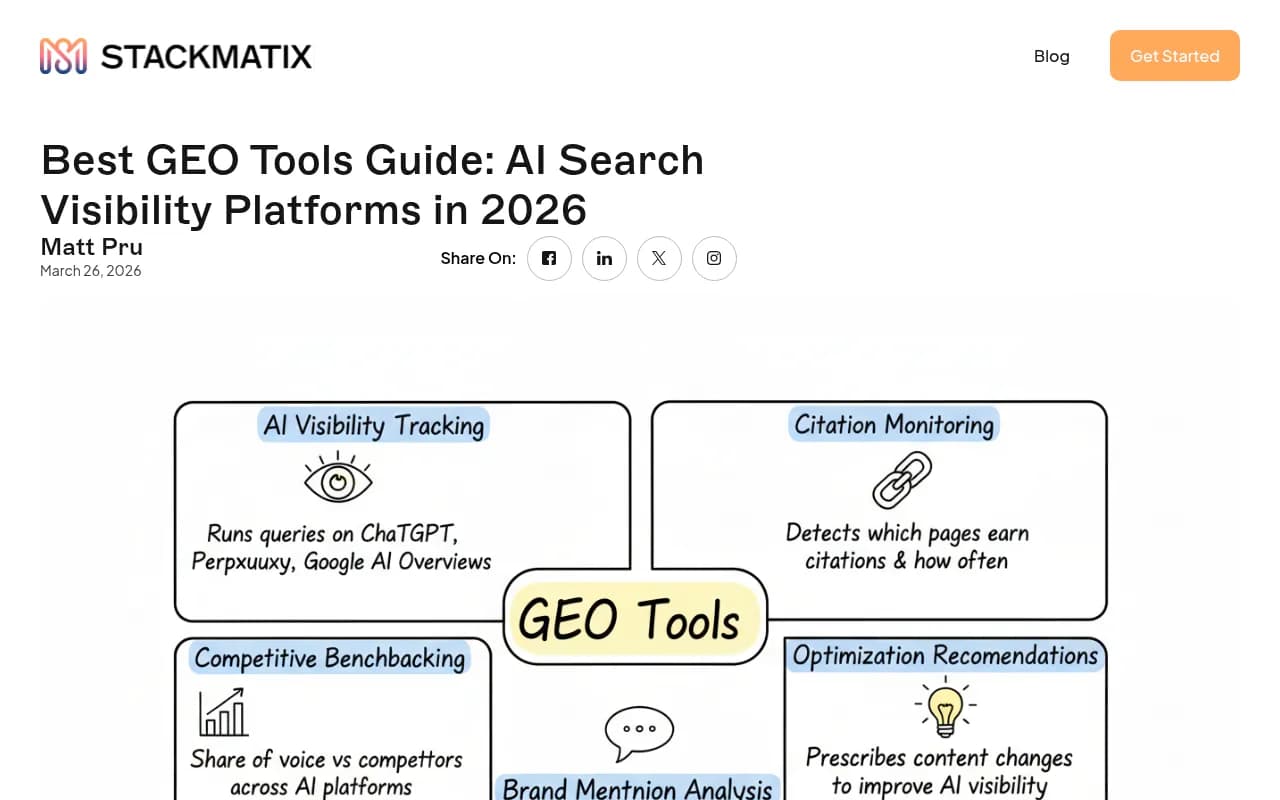

Before you start evaluating alternatives, it helps to be precise about what "optimization" means in this context. There are three capabilities that separate a real optimization platform from a monitoring dashboard:

Answer gap analysis. This isn't just showing you prompts where you don't appear. It's showing you the specific prompts where competitors appear and you don't, with enough context to understand why — what content they have that you're missing, what topics AI models want to cite but can't find on your site.

Content generation grounded in citation data. Generic AI writing tools produce generic content. An optimization platform generates content based on what AI models actually cite — which sources they pull from, which formats they prefer, which angles get picked up. The output is engineered to get cited, not just to exist.

Traffic attribution. Visibility scores are vanity metrics unless you can connect them to actual business outcomes. A proper optimization platform closes the loop by showing you which pages are being cited, which models are citing them, and how that translates into traffic and revenue.

Most tools in the market today cover one of these three. Very few cover all three.

Auditing your current setup before you switch

Switching platforms without auditing what you have is how you lose months of baseline data. Before you do anything else, export everything your current tool has collected.

What to export

- Your full prompt library (every prompt you're currently tracking)

- Historical visibility scores, ideally at the page level if your tool supports it

- Competitor benchmarks — your share of voice vs. specific competitors over time

- Any citation source data (which pages, domains, or external sources AI models are pulling from)

- Alert history if your tool has it

Most monitoring tools will let you export CSVs. Some have API access. Either way, do this before you cancel your subscription — some platforms delete your data immediately on cancellation.
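Before cancelling, it can help to confirm that the history export actually covers every prompt you were tracking. The Python sketch below assumes your tool produces two CSV files: a prompt list with a `prompt` column, and a history file with `prompt`, `date`, and `visibility` columns. Those layouts and column names are hypothetical, so map them to whatever your tool actually exports.

```python
import csv
import io


def load_rows(text: str) -> list[dict[str, str]]:
    """Parse CSV text (e.g. the contents of an exported file) into dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def prompts_missing_history(prompt_rows, history_rows) -> list[str]:
    """Return tracked prompts that have no rows in the history export."""
    tracked = {row["prompt"].strip() for row in prompt_rows}
    with_history = {row["prompt"].strip() for row in history_rows}
    return sorted(tracked - with_history)


# Hypothetical export contents; in practice, read the files your tool gives you.
prompts_csv = """prompt
best crm for startups
crm with email automation
"""
history_csv = """prompt,date,visibility
best crm for startups,2026-01-05,0.40
"""

missing = prompts_missing_history(load_rows(prompts_csv), load_rows(history_csv))
print(missing)  # ['crm with email automation']
```

Any prompt this flags has no history you can carry over, so re-export or note the gap before the old account goes away.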

What to document

Beyond raw data, write down the decisions you made when setting up your current tool:

- Which prompts you chose to track and why

- Which competitors you benchmarked against

- Which AI models you prioritized

- Any custom personas or regional settings you configured

This documentation is what lets you rebuild your setup quickly in a new platform rather than starting from scratch.
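One way to keep that documentation usable is to store it as a structured record rather than free-form notes. The sketch below uses a hypothetical `SetupRecord` schema (not any platform's import format) that round-trips through JSON, so the same file can guide manual setup, or a scripted import if your new platform happens to offer one.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TrackedPrompt:
    text: str
    rationale: str  # why this prompt was chosen


@dataclass
class SetupRecord:
    """Hypothetical schema for documenting how the old tool was configured."""
    prompts: list[TrackedPrompt] = field(default_factory=list)
    competitors: list[str] = field(default_factory=list)
    models: list[str] = field(default_factory=list)
    personas: list[str] = field(default_factory=list)
    regions: list[str] = field(default_factory=list)

    def to_json(self) -> str:
        # asdict() recurses into nested dataclasses, so prompts become plain dicts
        return json.dumps(asdict(self), indent=2)

    @classmethod
    def from_json(cls, text: str) -> "SetupRecord":
        data = json.loads(text)
        data["prompts"] = [TrackedPrompt(**p) for p in data["prompts"]]
        return cls(**data)


record = SetupRecord(
    prompts=[TrackedPrompt("best crm for startups", "core buying-intent query")],
    competitors=["RivalOne", "RivalTwo", "RivalThree"],
    models=["ChatGPT", "Perplexity"],
    personas=["startup founder"],
    regions=["US"],
)
saved = record.to_json()
restored = SetupRecord.from_json(saved)
```

Keeping the rationale next to each prompt is the part that pays off later: it tells whoever rebuilds the library why a prompt was there, not just that it was.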

Evaluating optimization platforms: what to look for

Here's a practical comparison of the key capabilities to evaluate when switching. Not every platform covers every dimension — this table is designed to help you ask the right questions during trials.

| Capability | Monitoring-only tools | Full optimization platforms |
|---|---|---|
| Brand mention tracking | Yes | Yes |
| Share of voice across LLMs | Yes | Yes |
| Competitor visibility heatmaps | Sometimes | Yes |
| Answer gap analysis | No | Yes |
| Prompt volume and difficulty scoring | No | Yes |
| AI-grounded content generation | No | Yes |
| AI crawler log access | No | Yes (best platforms) |
| Page-level citation tracking | Rarely | Yes |
| Traffic attribution | No | Yes |
| Reddit/YouTube citation tracking | No | Yes (best platforms) |
| ChatGPT Shopping tracking | No | Yes (best platforms) |

When you're evaluating a new platform, run through this list explicitly. Ask for a demo that shows each capability working with real data, not just slides.

Some platforms worth evaluating at different price points and use cases:

Promptwatch covers the full loop — gap analysis, content generation grounded in 880M+ citations, crawler logs, and traffic attribution. It's the platform that most directly addresses the monitoring trap described above.

Profound has strong tracking capabilities and is worth evaluating for enterprise teams, though it sits at a higher price point and doesn't include content generation.

AthenaHQ tracks visibility across 8+ AI engines and has solid monitoring features, but like most competitors, it's primarily a tracking platform rather than an optimization one.

Otterly.AI is one of the more affordable monitoring options, good for teams that are just starting out, but it won't help you act on what you find.

Peec AI offers multi-language tracking, which is useful for international teams, though it remains monitoring-focused.

The migration process, step by step

Step 1: Run both tools in parallel for 2-4 weeks

Don't cancel your old tool the moment you sign up for a new one. Run them simultaneously long enough to validate that the new platform is capturing the same prompts and producing comparable visibility scores. If the numbers diverge significantly, you need to understand why before you rely on the new data.

This parallel period also gives you time to rebuild your prompt library in the new platform without losing continuity in your tracking.
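A simple way to check for divergence during the parallel run is to compare per-prompt scores side by side. The sketch below assumes both tools can export an average visibility score per prompt on a 0-1 scale for the same time window. Tools often define visibility differently, so normalize before comparing, and treat the 0.15 threshold as an arbitrary placeholder.

```python
def divergent_prompts(old: dict[str, float], new: dict[str, float],
                      threshold: float = 0.15) -> tuple[list[str], list[str]]:
    """Compare per-prompt visibility (0-1) exported by two tools for the same window.

    Returns (prompts tracked by only one tool, shared prompts whose scores
    differ by more than `threshold`).
    """
    only_one = sorted(set(old) ^ set(new))
    shared = set(old) & set(new)
    diverging = sorted(p for p in shared if abs(old[p] - new[p]) > threshold)
    return only_one, diverging


old_tool = {"best crm for startups": 0.40, "crm pricing comparison": 0.10, "crm for agencies": 0.25}
new_tool = {"best crm for startups": 0.45, "crm pricing comparison": 0.35}

untracked, diverging = divergent_prompts(old_tool, new_tool)
print(untracked)  # ['crm for agencies'] -> not yet added to the new platform
print(diverging)  # ['crm pricing comparison'] -> investigate before trusting either number
```

The first list is a migration checklist; the second is where you need to understand the methodology difference before relying on the new data.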

Step 2: Rebuild your prompt library with better structure

This is actually an opportunity, not just a chore. Most teams set up their initial prompt library quickly and never revisit it. When you migrate, rebuild it properly:

- Group prompts by funnel stage (awareness, consideration, decision)

- Tag prompts by persona if your new platform supports it

- Include prompts where you know competitors are strong, not just prompts where you think you should win

- Add long-tail conversational prompts that reflect how real users actually query AI engines, not just keyword-style phrases

The quality of your prompt library determines the quality of your optimization work. A better prompt set in a better platform compounds quickly.
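If your new platform lets you import or tag prompts, it helps to model the library explicitly first. The sketch below is one hypothetical structure (the `Prompt` and `Stage` names are illustrative, not a platform API) covering funnel stage, persona tags, and a flag for prompts where competitors are already strong.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    AWARENESS = "awareness"
    CONSIDERATION = "consideration"
    DECISION = "decision"


@dataclass(frozen=True)
class Prompt:
    text: str
    stage: Stage
    personas: frozenset[str] = frozenset()
    competitor_strong: bool = False  # keep prompts where rivals already win


def group_by_stage(prompts: list[Prompt]) -> dict[Stage, list[str]]:
    """Bucket prompt texts by funnel stage, preserving input order."""
    groups: dict[Stage, list[str]] = defaultdict(list)
    for p in prompts:
        groups[p.stage].append(p.text)
    return dict(groups)


def for_persona(prompts: list[Prompt], persona: str) -> list[str]:
    """Prompt texts tagged with a given persona."""
    return [p.text for p in prompts if persona in p.personas]


library = [
    Prompt("what is a crm", Stage.AWARENESS, frozenset({"founder"})),
    Prompt("crm a vs crm b for small sales teams", Stage.CONSIDERATION,
           frozenset({"sales lead"}), competitor_strong=True),
    # Long-tail, conversational phrasing rather than a keyword string
    Prompt("which crm should a 10-person agency buy if we already use gmail",
           Stage.DECISION, frozenset({"agency owner", "founder"})),
    Prompt("how do crms help with follow-ups", Stage.AWARENESS),
]
grouped = group_by_stage(library)
```

Grouping by stage makes lopsided libraries obvious: if nearly everything sits in awareness, you're not tracking the prompts closest to a purchase decision.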

Step 3: Establish your baseline in the new platform

Before you start making content changes, let the new platform run for at least two weeks to establish a clean baseline. This is your before state. Without it, you can't measure whether your optimization work is actually moving the needle.

Document your baseline across these dimensions:

- Overall brand mention rate

- Share of voice vs. your top 3 competitors

- Which AI models are citing you most and least

- Which of your pages are currently being cited
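If your platform exposes raw response samples, these four numbers can be computed directly. The sketch below assumes a hypothetical `Response` record per sampled AI answer (model, prompt, brands mentioned, cited URLs). Share of voice here uses one common definition, your mentions divided by mentions of you plus the tracked competitors; platforms define it differently, so treat this as an illustration rather than a match for any vendor's number.

```python
from collections import Counter
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class Response:
    model: str
    prompt: str
    brands: set[str]                                     # brands mentioned in the answer
    cited_urls: list[str] = field(default_factory=list)  # URLs the answer cited


def on_domain(url: str, domain: str) -> bool:
    """True if the URL's host is the domain or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return host == domain or host.endswith("." + domain)


def baseline(responses, brand, competitors, our_domain):
    n = len(responses)
    mention_rate = sum(brand in r.brands for r in responses) / n if n else 0.0

    # Share of voice: mentions of each tracked brand / all tracked-brand mentions
    tracked = [brand, *competitors]
    counts = Counter(b for r in responses for b in r.brands if b in tracked)
    total = sum(counts.values())
    share_of_voice = {b: (counts[b] / total if total else 0.0) for b in tracked}

    # Mention rate per AI model, to see where you are strongest and weakest
    by_model = {}
    for model in sorted({r.model for r in responses}):
        rs = [r for r in responses if r.model == model]
        by_model[model] = sum(brand in r.brands for r in rs) / len(rs)

    cited_pages = sorted({u for r in responses for u in r.cited_urls if on_domain(u, our_domain)})
    return {"mention_rate": mention_rate, "share_of_voice": share_of_voice,
            "by_model": by_model, "cited_pages": cited_pages}


responses = [
    Response("chatgpt", "best crm for startups", {"Acme", "RivalOne"}, ["https://acme.example/guide"]),
    Response("chatgpt", "crm pricing comparison", {"RivalOne"}, ["https://rivalone.example/pricing"]),
    Response("perplexity", "best crm for startups", {"Acme", "RivalTwo"},
             ["https://acme.example/guide", "https://www.acme.example/pricing"]),
    Response("perplexity", "crm pricing comparison", {"RivalOne", "RivalTwo"}),
]
result = baseline(responses, "Acme", ["RivalOne", "RivalTwo", "RivalThree"], "acme.example")
```

Save the returned dictionary with a date; it is the "before" snapshot every later comparison depends on.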

Step 4: Run your first answer gap analysis

This is where optimization platforms earn their keep. An answer gap analysis shows you the specific prompts where competitors appear in AI responses but you don't. The output isn't just a list of prompts — it's a content brief. You can see what your competitors have that AI models are citing, which tells you what you need to create.

Prioritize gaps based on two factors: prompt volume (how often real users ask this) and difficulty (how entrenched your competitors are). High-volume, low-difficulty gaps are where you should start.
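The gap definition and the volume/difficulty trade-off can be sketched in a few lines. This assumes your platform gives you, per prompt, the brands appearing in AI answers, an estimated volume, and a difficulty score between 0 and 1. The multiplicative score `volume * (1 - difficulty)` is an illustrative choice, not any platform's formula.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str
    brands: set[str]   # brands appearing in AI answers for this prompt
    volume: int        # estimated monthly asks (platform estimate)
    difficulty: float  # 0 = open field, 1 = competitors deeply entrenched


def answer_gaps(results, brand, competitors):
    """Prompts where at least one tracked competitor appears and we don't."""
    rivals = set(competitors)
    return [r for r in results if brand not in r.brands and r.brands & rivals]


def prioritize(gaps):
    """Rank gaps: high volume first, discounted by difficulty (illustrative score)."""
    return sorted(gaps, key=lambda r: r.volume * (1 - r.difficulty), reverse=True)


results = [
    PromptResult("best crm for startups", {"RivalOne"}, 1000, 0.75),
    PromptResult("crm for nonprofits", {"RivalTwo"}, 400, 0.25),
    PromptResult("crm with built-in invoicing", {"Acme", "RivalOne"}, 600, 0.5),
    PromptResult("free crm for freelancers", set(), 700, 0.25),
    PromptResult("crm migration checklist", {"RivalOne", "RivalTwo"}, 300, 0.5),
]
ranked = prioritize(answer_gaps(results, "Acme", ["RivalOne", "RivalTwo"]))
for r in ranked:
    print(r.prompt, r.volume * (1 - r.difficulty))
# crm for nonprofits 300.0
# best crm for startups 250.0
# crm migration checklist 150.0
```

Under this definition, "free crm for freelancers" isn't flagged because no tracked competitor appears. A prompt where nobody is cited may still be worth targeting, just for a different reason than a competitive gap.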

Step 5: Create content and close the loop

With your gap analysis in hand, use your platform's content generation tools to create content specifically designed to fill those gaps. The key difference between this and regular content creation is that you're not writing for humans first and hoping AI picks it up. You're writing based on what AI models have demonstrated they want to cite.

After publishing, track which pages start getting cited, how citation rates change, and whether you're gaining share of voice on the prompts you targeted. This feedback loop is what makes optimization different from monitoring.
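One way to make that feedback loop concrete is to compare citation rates on the prompts you targeted against a set you left alone, so general shifts in model behaviour aren't mistaken for the effect of your content. The sketch below assumes you can sample each prompt several times per period and record whether any of your pages was cited. The targeted-versus-control comparison is an assumption of this sketch, not a feature of any particular platform.

```python
def citation_rate(samples: list[bool]) -> float:
    """Share of sampled AI responses for one prompt that cited one of our pages."""
    return sum(samples) / len(samples) if samples else 0.0


def average_lift(before: dict[str, list[bool]], after: dict[str, list[bool]],
                 prompts: set[str]) -> float:
    """Mean change in citation rate over prompts observed in both periods."""
    common = [p for p in prompts if p in before and p in after]
    if not common:
        return 0.0
    return sum(citation_rate(after[p]) - citation_rate(before[p]) for p in common) / len(common)


before = {
    "crm for nonprofits": [False] * 10,
    "best crm for startups": [True, False, False, False],
    "crm pricing comparison": [True, True, False, False],
}
after = {
    "crm for nonprofits": [True] * 3 + [False] * 7,
    "best crm for startups": [True, True, False, False],
    "crm pricing comparison": [True, True, False, False],
}
targeted = {"crm for nonprofits", "best crm for startups"}
control = {"crm pricing comparison"}

print(f"targeted lift: {average_lift(before, after, targeted):+.3f}")  # +0.275
print(f"control lift:  {average_lift(before, after, control):+.3f}")   # +0.000
```

If the control prompts move as much as the targeted ones, the change probably came from the models rather than from your content.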

Common mistakes when switching platforms

Switching for features you won't use. It's easy to get excited about a platform's full feature set during a demo. Before you commit, map each feature to a specific workflow your team will actually run. If you can't name who will use a feature and when, it's not a reason to switch.

Abandoning your historical data. Even if your old platform's data isn't perfect, it's your baseline. Teams that switch without exporting their history lose the ability to show progress over time, which matters when you're reporting to stakeholders.

Expecting immediate results. AI models don't update their training data in real time, and even engines that retrieve live web results need time to crawl and index new pages. Content you publish today might not start appearing in AI citations for weeks. Build this lag into your expectations and your reporting timelines.

Tracking too many prompts too quickly. More prompts means more noise, not more signal. Start with a focused set of 50-100 high-priority prompts, get those working well, then expand. Platforms that charge per prompt (which most do) reward this discipline anyway.

Skipping the attribution setup. Traffic attribution is the hardest part of GEO to implement and the most commonly skipped. But it's also what connects your visibility work to revenue. Whether it's a code snippet, a GSC integration, or server log analysis, set it up during your migration — not six months later.
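For the server-log route, a first pass can be as simple as counting AI crawler hits and AI-assistant referrals per page. The sketch below assumes access logs in the common Apache/Nginx "combined" format. The user-agent tokens and referrer hostnames are an illustrative, non-exhaustive list; check each vendor's published crawler documentation before relying on them. Referrers are not always sent, so referral counts from logs will undercount real AI-driven traffic.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Combined log format: ip ident user [time] "METHOD path PROTO" status size "referer" "user-agent"
LOG_RE = re.compile(r'^\S+ \S+ \S+ \[[^\]]+\] "\S+ (\S+)[^"]*" \d{3} \S+ "([^"]*)" "([^"]*)"')

# Illustrative, non-exhaustive lists; verify against each vendor's documentation.
AI_CRAWLER_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User",
                     "ClaudeBot", "PerplexityBot", "Perplexity-User")
AI_REFERRER_HOSTS = {"chatgpt.com", "chat.openai.com", "perplexity.ai",
                     "www.perplexity.ai", "gemini.google.com", "copilot.microsoft.com"}


def analyze(lines):
    """Count AI crawler hits and AI-assistant referral visits per (source, path)."""
    crawler_hits: Counter = Counter()
    ai_referrals: Counter = Counter()
    for line in lines:
        m = LOG_RE.match(line)
        if not m:
            continue  # skip lines not in combined format
        path, referer, user_agent = m.groups()
        bot = next((t for t in AI_CRAWLER_TOKENS if t in user_agent), None)
        if bot:
            crawler_hits[(bot, path)] += 1
            continue
        host = urlparse(referer).hostname if referer != "-" else None
        if host in AI_REFERRER_HOSTS:
            ai_referrals[(host, path)] += 1
    return crawler_hits, ai_referrals


sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"',
    '198.51.100.7 - - [10/Jan/2026:10:05:00 +0000] "GET /guide HTTP/1.1" 200 5120 '
    '"https://chatgpt.com/" "Mozilla/5.0 (Macintosh)"',
    '198.51.100.8 - - [10/Jan/2026:10:06:00 +0000] "GET /pricing HTTP/1.1" 200 2048 '
    '"https://www.google.com/" "Mozilla/5.0 (Windows)"',
]
crawlers, referrals = analyze(sample_log)
print(crawlers)   # Counter({('GPTBot', '/guide'): 1})
print(referrals)  # Counter({('chatgpt.com', '/guide'): 1})
```

Crawler hits tell you which pages AI systems are fetching; referral counts are the part that connects citations to visits, and from there to your analytics and revenue data.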

How to make the business case for switching

If you need to justify the cost of moving to a more capable platform, the argument is straightforward: monitoring tools tell you what's wrong, optimization platforms help you fix it. The value isn't in the data — it's in the action the data enables.

A few concrete numbers help here. According to the Stackmatix analysis cited earlier, brands actively optimizing for AI search see 2-3x higher citation rates than those passively monitoring. If your current tool shows you're cited in 15% of relevant AI responses, an optimization platform that helps you reach a 30-45% citation rate is a meaningful business outcome, especially as AI search continues to take share from traditional search.
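Written out, that projection just applies the reported multiplier $m$ to your current citation rate $r_0$. It assumes the 2-3x figure holds for your category and starting point, which the research does not promise:

$$
r_1 = m \, r_0, \qquad r_0 = 0.15,\; m \in [2, 3] \;\Longrightarrow\; r_1 \in [0.30,\, 0.45]
$$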

The switching cost is real but bounded. Budget 2-4 weeks of parallel running, a few hours to rebuild your prompt library, and a one-time attribution setup. After that, the ongoing cost is just the platform fee — which for most optimization platforms is in the $249-$579/month range for mid-market teams.

A note on timing

The window for building AI search visibility is not infinite. AI models develop citation patterns over time, and brands that establish themselves as reliable sources early tend to maintain that position. The teams that started optimizing in 2024 and 2025 have a head start that's getting harder to close.

That doesn't mean it's too late. It means the cost of waiting is going up. If you've been sitting on monitoring data without acting on it, the right time to switch was six months ago. The second-best time is now.

The practical path forward: export your data today, start a trial of an optimization platform this week, and run your first answer gap analysis before the end of the month. The gap analysis alone will tell you more about what to do next than months of monitoring dashboards ever did.