Summary

- Page-level tracking matters: Knowing your brand gets cited is useful. Knowing which pages get cited tells you what content works and what's missing.

- Most tools don't do this: Many AI visibility platforms track brand mentions but can't show you the specific URLs AI engines pull from.

- Five tools stand out: Promptwatch, Profound, Conductor, Otterly.AI, and Bing Webmaster Tools (Copilot only) offer genuine page-level citation data.

- Citation gap analysis is the play: Compare your cited pages vs competitors' cited pages, identify the content you're missing, then create it.

- Bing's data is real but limited: Microsoft's AI Performance report shows exact Copilot citations per URL -- but only for Bing Copilot, not ChatGPT or Perplexity.

Why page-level citation tracking changes the game

If you're monitoring AI visibility, you're probably tracking whether your brand shows up in ChatGPT, Perplexity, or Google AI Overviews. That's a start. But it's not enough.

Brand-level tracking tells you that you're being cited. Page-level tracking tells you why. It shows you which articles, guides, or product pages AI engines consider authoritative. It reveals patterns: maybe your how-to content gets cited constantly while your product pages never do. Maybe one blog post accounts for 70% of your citations and everything else is invisible.

Without page-level data, you're flying blind. You know you have a visibility problem but not where to fix it.

What page-level citation tracking actually means

Page-level citation tracking means the tool can show you:

- The specific URL that was cited (not just your domain)

- How many times that URL was cited across different prompts

- Which AI models cited it (ChatGPT vs Perplexity vs Claude)

- What prompts triggered the citation

- How your pages compare to competitors' pages for the same prompts

This is different from brand monitoring ("your brand was mentioned 47 times this week") and different from domain-level tracking ("your site appeared in 12% of responses"). You need the granular view.
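To make the difference concrete, here is a minimal Python sketch. The `Citation` record and its field names are assumptions for illustration, not any tool's actual export schema. The same three citations collapse into a single number at the domain level, while the page-level view shows which URL earned them.

```python
from collections import Counter
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class Citation:
    """One observed citation (hypothetical record shape, not a real tool's schema)."""
    url: str     # the specific page that was cited
    model: str   # e.g. "chatgpt", "perplexity", "claude"
    prompt: str  # the prompt that triggered the citation


def page_level_counts(citations):
    """Count citations per exact URL."""
    return Counter(c.url for c in citations)


def domain_level_counts(citations):
    """Count citations per domain, which hides the page that earned them."""
    return Counter(urlparse(c.url).netloc for c in citations)


# Illustrative data only.
sample = [
    Citation("https://example.com/guide/how-to-x", "chatgpt", "how do I do x"),
    Citation("https://example.com/guide/how-to-x", "perplexity", "steps for x"),
    Citation("https://example.com/blog/best-tools", "claude", "best tools for x"),
]

print(domain_level_counts(sample))  # one bucket for the whole site
print(page_level_counts(sample))    # one bucket per URL
```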

The Bing Webmaster Tools AI Performance report: real data, narrow scope

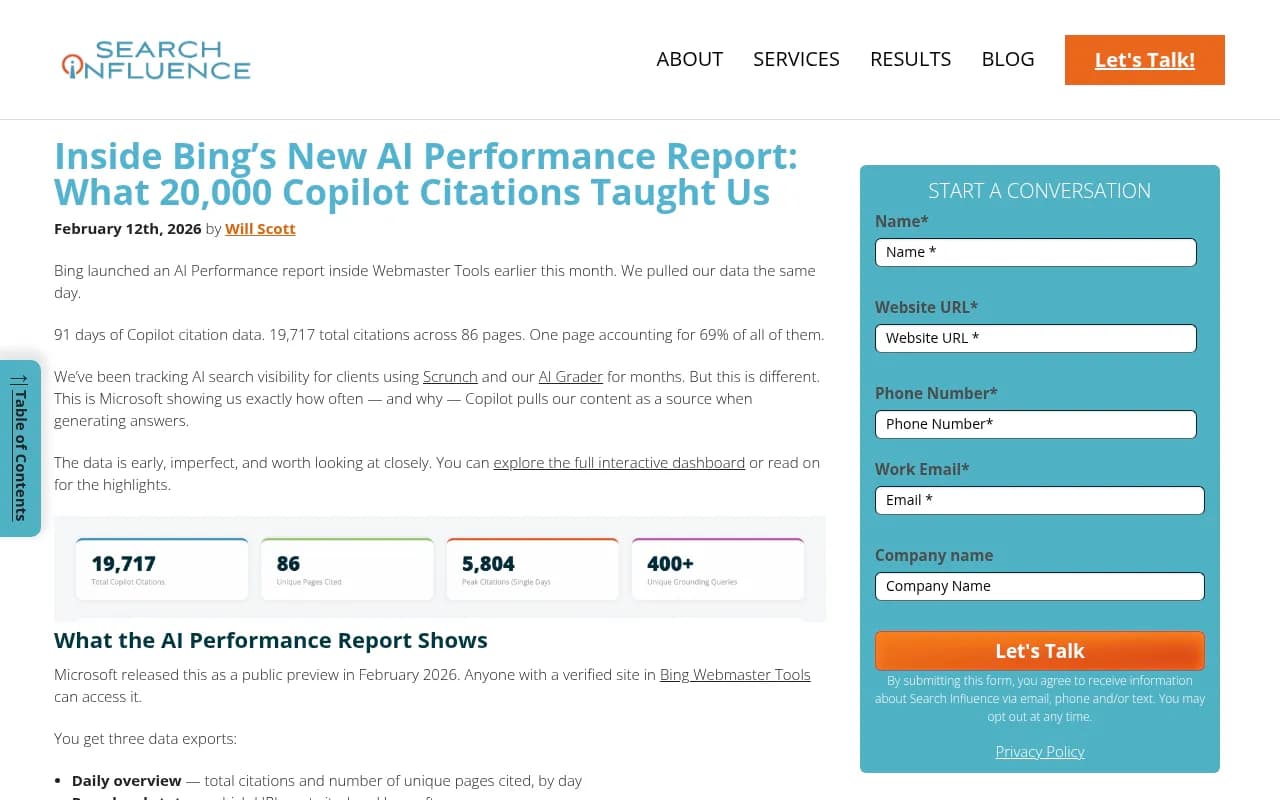

Microsoft launched an AI Performance dashboard inside Bing Webmaster Tools in February 2026. It's the first time a major AI platform has given publishers direct visibility into citation data.

The report shows three things:

- Daily citation counts: Total citations and number of unique pages cited per day

- Page-level stats: Which URLs get cited and how often

- Grounding queries: The retrieval queries that triggered citations

Search Influence pulled their data the day the report launched. 91 days of Copilot citations. 19,717 total citations across 86 pages. One page -- their AI SEO tracking tools analysis -- accounted for 69% of all citations. The top four pages made up 90% of total citations. Everything else combined was 10%.

That's the distribution you'll see everywhere: a handful of pages doing all the work, and a long tail of content that AI engines ignore.
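The arithmetic behind those percentages is worth running once. The sketch below uses only the reported totals (19,717 citations, 86 pages, 69% and 90% shares). Because the percentages are rounded, the derived counts are approximations. The `top_n_share` helper and its sample counts are hypothetical, included so you can run the same check on your own export.

```python
TOTAL_CITATIONS = 19_717
TOTAL_PAGES = 86

# Approximate counts implied by the rounded percentages in the report.
top_page = round(TOTAL_CITATIONS * 0.69)
top_four = round(TOTAL_CITATIONS * 0.90)
long_tail = TOTAL_CITATIONS - top_four
avg_tail = long_tail / (TOTAL_PAGES - 4)

print(f"Top page: ~{top_page} citations")
print(f"Top four pages: ~{top_four} citations")
print(f"Other {TOTAL_PAGES - 4} pages: ~{long_tail} total, ~{avg_tail:.0f} each")


def top_n_share(counts, n):
    """Share of all citations captured by the n most-cited pages."""
    ordered = sorted(counts, reverse=True)
    total = sum(ordered)
    return sum(ordered[:n]) / total if total else 0.0


# Hypothetical per-page counts, for illustration only.
example_counts = [69, 10, 6, 5, 4, 3, 2, 1]
print(top_n_share(example_counts, 4))
```

On these figures, the remaining 82 pages average roughly two dozen citations each over the 91 days, against roughly 13,600 for the top page alone.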

The catch: this only tracks Bing Copilot. If you want ChatGPT, Perplexity, Claude, or Gemini citation data, you need a third-party tool. Microsoft hasn't said whether they'll expand the report to cover other models.

Tools that actually show page-level citations

Most AI visibility platforms don't do page-level tracking. They'll tell you your brand was mentioned or your domain appeared in a response, but they won't show you the specific URL. Here are the ones that do.

Promptwatch: page-level tracking + content gap analysis

Promptwatch tracks citations at the page level across 10 AI models: ChatGPT, Perplexity, Google AI Overviews, Claude, Gemini, Meta AI, DeepSeek, Grok, Mistral, and Copilot.

The platform shows you which pages get cited, how often, and for which prompts. But the real value is the Answer Gap Analysis: it surfaces the prompts your competitors get cited for but you don't, then shows you the specific content angles and topics your site is missing. The built-in AI writing agent generates articles grounded in citation data (880M+ citations analyzed) and optimized for AI search visibility.

You also get AI crawler logs -- real-time data on which pages ChatGPT, Claude, and Perplexity crawlers are reading, how often they return, and what errors they hit. Most competitors don't offer this at all.
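If you want a rough version of this from your own server logs, something like the following Python sketch can work. It is not how Promptwatch implements the feature. It assumes access logs in the common combined format and assumes the crawlers identify themselves with the user-agent tokens `GPTBot`, `ClaudeBot`, and `PerplexityBot`; check each vendor's current documentation before relying on that list.

```python
import re
from collections import Counter

# Assumed user-agent tokens; confirm against each vendor's published crawler docs.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Matches the request, status, and final quoted field (user agent) of a combined-format line.
LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def crawler_hits(lines):
    """Return (hits, errors) counters keyed by (crawler, path)."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LINE.search(line.strip())
        if not match:
            continue
        bot = next((b for b in AI_CRAWLERS if b in match["agent"]), None)
        if bot is None:
            continue  # not one of the AI crawlers we are looking for
        key = (bot, match["path"])
        hits[key] += 1
        if int(match["status"]) >= 400:
            errors[key] += 1
    return hits, errors


# Illustrative log lines only.
sample_log = [
    '203.0.113.5 - - [01/Jan/2026:10:00:00 +0000] "GET /guide/how-to-x HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [01/Jan/2026:10:05:00 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [01/Jan/2026:10:06:00 +0000] "GET /guide/how-to-x HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

hits, errors = crawler_hits(sample_log)
print(hits)
print(errors)
```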

Pricing starts at $99/month for Essential (1 site, 50 prompts, 5 articles). Professional is $249/month (2 sites, 150 prompts, 15 articles, crawler logs), and Business is $579/month (5 sites, 350 prompts, 30 articles). A free trial is available.

Profound: citation analysis with competitor benchmarking

Profound tracks page-level citations and breaks them down by AI platform. You can see which URLs get cited by ChatGPT vs Perplexity vs Google AI Overviews, and how your pages stack up against competitors.

Profound published research showing drastically different citation patterns between platforms. ChatGPT tends to cite fewer sources but goes deeper. Perplexity cites more sources but spreads citations across a wider range of domains. Google AI Overviews pulls heavily from high-authority publishers and tends to cite the same handful of domains repeatedly.

The platform's strength is competitive analysis: you can compare your cited pages vs competitors' cited pages for the same set of prompts, then identify content gaps. Pricing is higher than most competitors but includes white-glove onboarding and strategy consulting.

Conductor: persona-based citation tracking

Conductor's AI visibility module tracks page-level citations and lets you filter by persona. You can see which pages get cited when users prompt as a "small business owner" vs "enterprise buyer" vs "technical decision-maker."

This matters because AI engines tailor responses based on how the user phrases the prompt. A vague question like "best CRM" might pull generic listicles. A specific question like "CRM for real estate teams under 10 people" pulls different content entirely. Conductor lets you track both.

The platform also includes citation velocity tracking: how fast your pages are gaining or losing citations over time. This helps you spot trends early -- if a competitor's new guide is stealing citations from your existing content, you'll see it before it becomes a problem.

Pricing is enterprise-level. Expect to pay $2,000+ per month depending on the number of prompts and sites you're tracking.

Otterly.AI: affordable page-level tracking

Otterly.AI is one of the cheaper options that still offers page-level citation data. You can see which URLs get cited, how often, and by which AI models.

The interface is simpler than Promptwatch or Profound -- no content gap analysis, no AI writing agent, no crawler logs. But if you just want to know which pages are getting cited and don't need the optimization layer, Otterly.AI gets the job done.

Pricing starts at $49/month for basic tracking. The main limitations: no Reddit or YouTube citation tracking, and no visitor analytics to connect citations to actual traffic.

Comparison table: page-level citation tracking tools

| Tool | Page-level tracking | AI models covered | Content gap analysis | Crawler logs | Starting price |
|---|---|---|---|---|---|
| Promptwatch | Yes | 10 (ChatGPT, Perplexity, Claude, etc.) | Yes | Yes | $99/mo |
| Profound | Yes | 8+ | Yes | No | $500+/mo |
| Conductor | Yes | 6+ | Limited | No | $2,000+/mo |
| Otterly.AI | Yes | 5+ | No | No | $49/mo |
| Bing Webmaster Tools | Yes | 1 (Copilot only) | No | No | Free |

How to use page-level citation data

Once you have page-level citation data, here's what to do with it.

1. Identify your citation winners

Pull a report of your most-cited pages. Sort by total citations. Look at the top 5-10 pages.

What do they have in common? Are they all how-to guides? Product comparisons? Data-driven research? Long-form tutorials? Find the pattern.

If 80% of your citations come from 3 pages, you have a content distribution problem. You need more content that looks like those 3 pages.
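A quick way to find the pattern is to tag each page with a content type and total the citations per type among your top pages. The Python sketch below assumes you have exported per-page counts and labeled the types yourself. The field names and numbers are hypothetical.

```python
from collections import Counter

# Hypothetical export: one row per page, with a content type you assigned by hand.
pages = [
    {"url": "/guide/how-to-x", "type": "how-to", "citations": 120},
    {"url": "/blog/best-tools", "type": "comparison", "citations": 80},
    {"url": "/guide/how-to-y", "type": "how-to", "citations": 60},
    {"url": "/product/widget", "type": "product", "citations": 5},
    {"url": "/about", "type": "company", "citations": 0},
]


def top_pages(rows, n=5):
    """Return the n most-cited pages, highest first."""
    return sorted(rows, key=lambda r: r["citations"], reverse=True)[:n]


def citations_by_type(rows):
    """Total citations per content type."""
    totals = Counter()
    for row in rows:
        totals[row["type"]] += row["citations"]
    return totals


winners = top_pages(pages, n=3)
total = sum(row["citations"] for row in pages)
winner_share = sum(row["citations"] for row in winners) / total

print([row["url"] for row in winners])
print(citations_by_type(winners).most_common())
print(f"Top 3 pages hold {winner_share:.0%} of citations")
```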

2. Run a citation gap analysis

Compare your cited pages vs competitors' cited pages for the same set of prompts. Most tools (Promptwatch, Profound, Conductor) have a built-in competitor comparison feature.

Look for prompts where competitors get cited but you don't. Export the list. For each prompt, note:

- What content angle the competitor used (listicle, how-to, case study, data report)

- What specific questions they answered that you didn't

- What format worked (long-form guide, short FAQ, video transcript)

This is your content roadmap. You're not guessing what to write next -- you're filling documented gaps.
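If your tool only gives you a raw export, the gap list is a simple set operation. This Python sketch assumes a mapping from each prompt to the set of domains cited in the response. That shape, the function name, and the sample domains are illustrative assumptions.

```python
def citation_gaps(cited_by_prompt, own_domain, competitor_domains):
    """Return prompts where at least one competitor is cited and you are not.

    cited_by_prompt: dict mapping prompt text -> set of cited domains.
    """
    gaps = {}
    for prompt, domains in cited_by_prompt.items():
        rivals = domains & competitor_domains
        if rivals and own_domain not in domains:
            gaps[prompt] = sorted(rivals)
    return gaps


# Illustrative data only.
cited_by_prompt = {
    "best crm for small teams": {"rival-a.com", "rival-b.com"},
    "how to migrate crm data": {"example.com", "rival-a.com"},
    "crm pricing comparison": {"news-site.com"},
}

gaps = citation_gaps(
    cited_by_prompt,
    own_domain="example.com",
    competitor_domains={"rival-a.com", "rival-b.com"},
)
print(gaps)
```

Only the first prompt comes back as a gap: in the second you are already cited, and in the third no tracked competitor appears.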

3. Audit your uncited pages

Pull a list of pages that have zero citations. Cross-reference it with your site's top organic landing pages (from Google Search Console).

If a page ranks well in Google but never gets cited by AI engines, that's a signal. Either:

- The content is too thin or generic (AI engines prefer depth)

- The page is optimized for keywords but doesn't answer real questions (AI engines pull from content that directly answers prompts)

- The page has technical issues (blocked by robots.txt, slow to load, broken structured data)

Fix the fixable ones. Rewrite the thin ones. Retire the dead weight.
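The cross-reference itself is easy to script once both lists are exported. The Python sketch below assumes a list of top organic landing pages and a list of cited URLs. The one subtlety is URL normalization: trailing slashes and tracking parameters would otherwise make a cited page look uncited. The function names and sample URLs are hypothetical.

```python
from urllib.parse import urlsplit


def normalize(url):
    """Reduce a URL to host + path so cosmetic differences don't break matching."""
    parts = urlsplit(url)
    path = parts.path.rstrip("/") or "/"
    return f"{parts.netloc.lower()}{path}"


def ranking_but_uncited(organic_pages, cited_urls):
    """Return organic landing pages that never appear in the cited URL list."""
    cited = {normalize(u) for u in cited_urls}
    return [u for u in organic_pages if normalize(u) not in cited]


# Illustrative data only.
organic_pages = [
    "https://example.com/pricing/",
    "https://example.com/guide/how-to-x",
]
cited_urls = [
    "https://Example.com/guide/how-to-x/?utm_source=chat",
]

audit_list = ranking_but_uncited(organic_pages, cited_urls)
print(audit_list)
```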

4. Track citation velocity over time

Set up a weekly or monthly report that shows citation trends for your top pages. You want to catch drops early.

If a page that was getting 50 citations per week suddenly drops to 10, something changed. Maybe a competitor published a better guide. Maybe the AI model's algorithm shifted. Maybe your page fell out of the model's training data refresh cycle.

Whatever the reason, you need to know fast so you can respond.
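A simple alert rule covers most cases: compare the latest week to the one before and flag anything that fell by more than a set fraction. The Python sketch below assumes weekly counts per page, oldest first. The 50% threshold is an arbitrary starting point, not a recommendation from any tool.

```python
def flag_drops(weekly_counts, threshold=0.5):
    """Flag pages whose latest weekly citations fell by at least `threshold`.

    weekly_counts: dict mapping URL -> list of weekly counts, oldest first.
    Returns a dict of URL -> fractional change (negative means a drop).
    """
    flagged = {}
    for url, counts in weekly_counts.items():
        if len(counts) < 2 or counts[-2] == 0:
            continue  # not enough history to compare
        change = (counts[-1] - counts[-2]) / counts[-2]
        if change <= -threshold:
            flagged[url] = change
    return flagged


# Illustrative data only: the first page mirrors the 50 -> 10 example above.
weekly_counts = {
    "/guide/how-to-x": [48, 50, 10],
    "/blog/best-tools": [20, 22, 19],
    "/new-page": [3],
}

drops = flag_drops(weekly_counts)
print(drops)
```

A drop from 50 to 10 is a change of -0.8, well past the threshold, while the ordinary week-to-week wobble on the second page is ignored.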

What most tools get wrong about page-level tracking

A lot of platforms claim to offer page-level citation tracking but actually don't. Here's what to watch out for.

They show domain-level data and call it page-level

Some tools will show you "yoursite.com was cited 47 times" and break it down by AI model. That's domain-level tracking. Page-level tracking shows you "yoursite.com/guide/how-to-x was cited 12 times, yoursite.com/blog/best-tools was cited 8 times."

If the tool can't show you the specific URL, it's not page-level tracking.

They only track branded prompts

Some tools only track citations when someone explicitly mentions your brand in the prompt ("What does [YourBrand] say about X?"). That's useful for brand monitoring but useless for content strategy.

You want to track non-branded prompts -- questions where your brand isn't mentioned but your content gets cited anyway. That's where the real visibility opportunity is.
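When you build your prompt list, it helps to split branded from non-branded prompts up front. This is a minimal Python sketch, assuming a plain list of prompts and a list of brand terms. "Acme" is a placeholder brand.

```python
import re


def is_branded(prompt, brand_terms):
    """True if the prompt mentions any brand term as a whole word, ignoring case."""
    return any(
        re.search(rf"\b{re.escape(term)}\b", prompt, re.IGNORECASE)
        for term in brand_terms
    )


# Illustrative prompts only.
prompts = [
    "What does Acme say about CRM pricing?",
    "best CRM",
    "CRM for real estate teams under 10 people",
]

non_branded = [p for p in prompts if not is_branded(p, ["Acme"])]
print(non_branded)
```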

They don't connect citations to traffic

Knowing your page got cited 100 times is interesting. Knowing whether those citations drove actual traffic is actionable.

Tools like Promptwatch offer visitor analytics (via code snippet, Google Search Console integration, or server log analysis) that connect citations to traffic and conversions. Most competitors stop at the citation count.
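If you want a rough version of this yourself, you can compare citation counts with sessions referred from AI assistants. The Python sketch below is not Promptwatch's method. The referrer hostnames are assumptions to verify against your own analytics, and many AI-driven visits arrive with no referrer at all, so treat the result as a lower bound.

```python
from collections import Counter
from urllib.parse import urlsplit

# Assumed referrer hosts for AI assistants; verify against your own analytics data.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "perplexity.ai",
    "www.perplexity.ai",
    "copilot.microsoft.com",
}


def ai_sessions_by_page(sessions):
    """Count sessions per landing path whose referrer is a known AI assistant.

    sessions: iterable of (landing_path, referrer_url) pairs.
    """
    counts = Counter()
    for landing_path, referrer in sessions:
        host = urlsplit(referrer).netloc.lower()
        if host in AI_REFERRER_HOSTS:
            counts[landing_path] += 1
    return counts


def sessions_per_citation(citations, sessions):
    """AI-referred sessions divided by citations, per page."""
    visits = ai_sessions_by_page(sessions)
    return {path: visits[path] / n for path, n in citations.items() if n}


# Illustrative data only.
citations = {"/guide/how-to-x": 100, "/blog/best-tools": 20}
sessions = [
    ("/guide/how-to-x", "https://chatgpt.com/"),
    ("/guide/how-to-x", "https://www.perplexity.ai/search"),
    ("/blog/best-tools", "https://www.google.com/"),
]

ratios = sessions_per_citation(citations, sessions)
print(ratios)
```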

The citation distribution problem: why most pages get ignored

Search Influence's Bing data showed a brutal truth: the top 4 pages accounted for 90% of citations. Everything else combined was 10%.

This isn't unique to Bing. It's how AI engines work. They have a short list of "trusted sources" for each topic, and they cite those sources repeatedly. If your content isn't on that short list, you're invisible.

The way onto the list: create content that directly answers high-volume prompts, cite your sources, use structured data, and get cited by other authoritative sites (Reddit threads, YouTube videos, industry publications). AI engines learn from the web's citation graph. If other trusted sources link to your content, AI engines notice.

Page-level tracking shows you which pages made the list and which didn't. Then you reverse-engineer the winners and fix the losers.

Reddit and YouTube: the hidden citation sources

AI engines don't just cite traditional websites. They pull heavily from Reddit threads and YouTube video transcripts.

Promptwatch tracks Reddit and YouTube citations alongside traditional web pages. You can see which Reddit threads mention your brand, which YouTube videos cite your content, and how often AI engines pull from those sources when generating responses.

Most competitors (Otterly.AI, Peec.ai, AthenaHQ) don't track Reddit or YouTube at all. But if you're in SaaS, e-commerce, or any category with active Reddit communities, ignoring Reddit citations is a mistake. AI engines treat Reddit as a high-authority source for "real user opinions."

Tools that don't do page-level tracking (but claim to)

Here are some popular AI visibility tools that don't offer genuine page-level citation tracking:

- Semrush AI Visibility: Tracks brand mentions and domain-level visibility but doesn't show specific URLs

- Ahrefs Brand Radar: Monitors brand mentions in AI responses but doesn't break down citations by page

- Peec.ai: Tracks domain-level citations and brand mentions but no page-level granularity

- AthenaHQ: Brand monitoring across AI engines but no page-level citation data

These are solid tools for brand monitoring. But if you need to know which pages get cited, they won't help.

How to get started with page-level citation tracking

- Pick a tool: Start with Promptwatch (best all-around option), Profound (if you need deep competitive analysis), or Bing Webmaster Tools (if you only care about Copilot).

- Set up tracking for 50-100 prompts: Focus on high-value, non-branded prompts related to your core topics. Don't track vanity prompts like "What is [YourBrand]?" -- track the questions your customers actually ask.

- Run a baseline report: Pull page-level citation data for the past 30 days. Identify your top-cited pages and your zero-citation pages.

- Compare vs competitors: Run a citation gap analysis. Export the list of prompts where competitors get cited but you don't.

- Create content to fill the gaps: Use the gap analysis as your content roadmap. Write guides, comparisons, and how-tos that directly answer the prompts you're missing.

- Track results weekly: Set up a dashboard that shows citation trends over time. Watch for drops and respond fast.

Page-level citation tracking isn't a vanity metric. It's the fastest way to figure out what content works, what's missing, and where to focus next. Most brands are still stuck at brand-level monitoring. If you move to page-level tracking now, you're ahead.