Summary

- AI assistants like ChatGPT, Perplexity, and Claude have become the first research layer for B2B buyers -- 81% choose vendors before talking to sales, making AI visibility more critical than traditional SEO rankings

- The right AI visibility tool should track citations across multiple LLMs, show you content gaps competitors are exploiting, and help you create content that actually gets cited -- not just monitor what's already happening

- B2B SaaS companies need tools that connect AI visibility to pipeline: track which prompts drive traffic, which pages get cited, and how visibility translates to revenue

- Most platforms are monitoring-only dashboards that leave you stuck with data but no action plan -- look for tools with content gap analysis, AI-optimized content generation, and crawler log visibility

- A complete AI visibility stack for B2B SaaS costs under $500/month when you prioritize platforms that combine tracking, optimization, and content creation in one workflow

Why AI visibility matters more than Google rankings for B2B SaaS

Your ideal customer opens ChatGPT and types "best project management tools for remote teams." Your brand doesn't appear in the response. They ask Claude for "CRM alternatives to Salesforce." Still nothing. By the time they reach Google, they've already built a shortlist -- and you're not on it.

This is the new reality for B2B SaaS companies. Traditional SEO metrics like keyword rankings and backlink counts tell you how visible you are to search engines, but they're silent on the question that actually determines pipeline: how visible are you to AI models?

The economics make this urgent. CAC ratios increased 14% year-over-year while 81% of B2B buyers choose vendors before talking to sales. You can't afford to be invisible during the research phase that happens entirely inside ChatGPT, Perplexity, and Claude.

AI visibility isn't about gaming algorithms or keyword stuffing for chatbots. It's about ensuring that when prospects ask questions your product solves, AI models have authoritative, accurate information about your brand to cite. That requires different tools than traditional SEO.

What makes an AI visibility tool worth using

Most AI visibility platforms fall into one of two camps: monitoring-only dashboards that show you data but leave you stuck, or feature-bloated enterprise tools that cost five figures per month and take three months to implement.

The tools that actually move the needle for B2B SaaS teams share three characteristics:

They track citations across multiple LLMs, not just one. Your prospects don't use only ChatGPT or only Perplexity. They bounce between models depending on context, device, and mood. A tool that monitors only Google AI Overviews or only ChatGPT gives you an incomplete picture. You need visibility across ChatGPT, Claude, Gemini, Perplexity, Grok, DeepSeek, and Copilot at minimum.

They show you what's missing, not just what's working. Knowing that competitors get cited 47 times while you get cited 12 times is interesting. Knowing exactly which prompts they rank for that you don't -- and what content gaps are causing that invisibility -- is actionable. The best tools perform content gap analysis that shows you the specific topics, angles, and questions AI models want answers to but can't find on your site.

They help you fix the problem, not just measure it. Monitoring tools tell you where you're invisible. Optimization platforms show you why and give you a path to fix it. That means content recommendations grounded in real citation data, AI-optimized content generation, and tracking that connects visibility improvements to actual traffic and pipeline.

Companies using AI in marketing report a 42% reduction in CAC, yet 74% still struggle to get real value from AI. The difference is tool fit: features matter less than how well the tool integrates into your existing workflow.

The AI visibility features B2B SaaS teams actually use

Multi-LLM citation tracking

This is table stakes. The tool should run your target prompts across ChatGPT, Claude, Gemini, Perplexity, and other major models, then track how often your brand or URLs appear in responses. Look for:

- Coverage of at least 8-10 AI models (ChatGPT, Claude, Gemini, Perplexity, Grok, DeepSeek, Copilot, Meta AI, Mistral)

- Prompt volume estimates and difficulty scoring so you prioritize high-value, winnable queries

- Citation position tracking (are you mentioned first, third, or buried at the bottom?)

- Sentiment and context analysis (is the mention positive, neutral, or a warning to avoid your product?)
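
As a rough illustration of what citation and position tracking compute, the sketch below takes response text that has already been collected from each model and reports whether a brand is mentioned and in what order. The brand names, the `responses` dictionary, and both helper functions are hypothetical; a real tool would also have to collect the responses, handle brand aliases, and match cited URLs.

```python
import re
from typing import Optional


def mention_rank(text: str, brand: str, brands: list[str]) -> Optional[int]:
    """Return the 1-based rank of `brand` by order of first mention, or None if absent."""
    first_seen = {}
    for name in brands:
        # Whole-word, case-insensitive match on the brand name.
        match = re.search(r"\b" + re.escape(name) + r"\b", text, flags=re.IGNORECASE)
        if match:
            first_seen[name] = match.start()
    if brand not in first_seen:
        return None
    ordered = sorted(first_seen, key=first_seen.get)
    return ordered.index(brand) + 1


def citation_rate(responses: dict[str, str], brand: str) -> float:
    """Fraction of model responses that mention `brand` at least once."""
    if not responses:
        return 0.0
    hits = sum(
        1 for text in responses.values()
        if mention_rank(text, brand, [brand]) is not None
    )
    return hits / len(responses)


# Hypothetical responses to one prompt, keyed by a made-up model label.
responses = {
    "model_a": "Top picks: Acme, then Globex and Initech.",
    "model_b": "Consider Globex or Initech.",
    "model_c": "Initech leads, followed by acme.",
}
brands = ["Acme", "Globex", "Initech"]

ranks = {model: mention_rank(text, "Acme", brands) for model, text in responses.items()}
rate = citation_rate(responses, "Acme")
```

Here `ranks` records where "Acme" lands in each response (or `None` when it is missing), and `rate` is the share of models that mention it at all.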

Content gap analysis

This is where monitoring becomes optimization. The tool should compare your AI visibility against competitors and show you exactly which prompts they rank for that you don't. More importantly, it should tell you what content is missing from your site.

Good gap analysis surfaces:

- Specific topics and angles competitors cover that you ignore

- Questions AI models want to answer but can't find information about on your site

- Content formats that drive citations (comparison pages, listicles, how-to guides, case studies)

- Keyword and entity gaps that leave you invisible for entire categories of prompts

Without gap analysis, you're guessing at what content to create. With it, you're building exactly what AI models need to cite you.
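
At its simplest, the prompt-level part of gap analysis is a set comparison: for each tracked prompt, which competitors are cited where you are not. The sketch below assumes citation data has already been collected into a hypothetical `citations` mapping of prompt to cited brands; the brand names and the `prompt_gaps` helper are illustrative, and identifying the missing content itself would need further analysis.

```python
def prompt_gaps(citations: dict[str, set[str]], brand: str) -> dict[str, list[str]]:
    """Map each prompt where `brand` is not cited to the competitors that are."""
    gaps = {}
    for prompt, cited in citations.items():
        if brand in cited:
            continue
        competitors = sorted(cited)
        if competitors:  # skip prompts where nobody is cited
            gaps[prompt] = competitors
    return gaps


# Hypothetical citation data: prompt -> brands cited in AI responses.
citations = {
    "best project management tools for remote teams": {"Globex", "Initech"},
    "project management tool with time tracking": {"Acme", "Globex"},
    "kanban vs scrum software": set(),
}

gaps = prompt_gaps(citations, "Acme")
```

Only the first prompt ends up in `gaps`, because it is the one where competitors are cited and "Acme" is not.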

AI crawler logs

Most teams have no idea how often ChatGPT, Claude, or Perplexity crawlers visit their website, which pages they read, or what errors they encounter. AI crawler logs give you real-time visibility into:

- Which AI models are crawling your site and how frequently

- Which pages they access (and which they can't reach due to robots.txt blocks or paywalls)

- Crawl errors, timeouts, and indexing issues that prevent AI models from understanding your content

- Return frequency patterns that show how often each crawler re-fetches your pages, a rough signal of how fresh its copy of your content is likely to be

This matters because if AI models can't crawl your site, they can't cite it. Crawler logs help you fix indexing issues before they cost you visibility.
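
If your visibility tool does not expose crawler logs, a rough version can be pulled from ordinary web server access logs. The sketch below assumes the common "combined" log format and matches on the user-agent tokens `GPTBot`, `ClaudeBot`, and `PerplexityBot`; that token list is an assumption to verify against each vendor's current crawler documentation, and the sample log lines are invented.

```python
import re
from collections import Counter

# Assumed user-agent tokens; check vendor documentation for the current list.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Matches the request, status, size, referrer, and user-agent fields
# of a combined-format access log line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_ai_crawls(lines):
    """Count hits per AI crawler and collect requests that returned 4xx/5xx."""
    hits = Counter()
    errors = []
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        agent = match["agent"].lower()
        token = next((t for t in AI_CRAWLER_TOKENS if t.lower() in agent), None)
        if token is None:
            continue  # not one of the crawlers we track
        hits[token] += 1
        status = int(match["status"])
        if status >= 400:
            errors.append((token, match["path"], status))
    return hits, errors


# Invented sample lines using documentation-reserved IP addresses.
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:12:00:00 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [10/Jan/2026:12:01:00 +0000] "GET /docs/old HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '203.0.113.7 - - [10/Jan/2026:12:02:00 +0000] "GET / HTTP/1.1" 200 8841 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.2 - - [10/Jan/2026:12:03:00 +0000] "GET / HTTP/1.1" 200 8841 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]

hits, errors = summarize_ai_crawls(sample_log)
```

Matching on user-agent strings alone can be spoofed, so treat these counts as indicative rather than authoritative.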

Competitor visibility benchmarking

You need to know where you stand relative to competitors. The best tools provide:

- Side-by-side visibility scores showing your citation share vs competitors

- Heatmaps that reveal which prompts each competitor dominates

- Citation source analysis showing which pages, Reddit threads, YouTube videos, and domains AI models cite when recommending competitors

- Trend tracking that shows whether your visibility is improving or declining over time

Benchmarking turns abstract visibility scores into concrete competitive intelligence. You see exactly where competitors are winning and what content strategies drive their citations.
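
The citation share and heatmap views described above reduce to simple counting once citations are collected. This is a minimal sketch under assumed data: the `citations` mapping, brand names, and both functions are hypothetical, and real platforms may weight models or positions differently.

```python
from collections import Counter


def citation_share(citations: dict[str, set[str]]) -> dict[str, float]:
    """Each brand's share of all citations across the tracked prompts."""
    counts = Counter(brand for cited in citations.values() for brand in cited)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: count / total for brand, count in counts.items()}


def heatmap_rows(citations: dict[str, set[str]], brands: list[str]) -> dict[str, list[int]]:
    """One row per prompt: 1 if the brand in that column is cited, else 0."""
    return {
        prompt: [int(brand in cited) for brand in brands]
        for prompt, cited in citations.items()
    }


# Hypothetical data: prompt -> brands cited.
citations = {
    "best crm for small teams": {"Globex", "Initech"},
    "crm alternatives": {"Acme", "Globex"},
}
brands = ["Acme", "Globex", "Initech"]

share = citation_share(citations)
rows = heatmap_rows(citations, brands)
```

Recomputing `share` on each tracking run and storing the results gives the trend line mentioned in the list above.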

AI-optimized content generation

Monitoring and gap analysis tell you what to fix. Content generation helps you actually fix it. Look for tools that:

- Generate articles, listicles, and comparison pages grounded in real citation data (not generic SEO filler)

- Optimize for entity recognition, schema markup, and structured data that AI models parse

- Target specific personas and use cases based on prompt analysis

- Create content in formats that drive citations (comparison tables, feature breakdowns, use case guides)

The content should be good enough to publish with light editing, not a first draft you have to rewrite from scratch.
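
One concrete form of the structured data mentioned above is schema.org JSON-LD embedded in a product page. The sketch below builds a `SoftwareApplication` object; the product details and the `software_jsonld` helper are hypothetical, and whether a given AI model actually reads this markup is an assumption, not something the example can confirm.

```python
import json


def software_jsonld(name: str, description: str, url: str,
                    price: str, currency: str = "USD") -> str:
    """Return a schema.org SoftwareApplication description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": name,
        "description": description,
        "url": url,
        "applicationCategory": "BusinessApplication",
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
        },
    }
    return json.dumps(data, indent=2)


# Hypothetical product; place the output inside a
# <script type="application/ld+json"> tag in the page's HTML.
markup = software_jsonld(
    name="ExampleApp",
    description="Project tracking for remote teams.",
    url="https://example.com",
    price="49.00",
)
```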

Traffic attribution and pipeline tracking

Visibility scores are interesting. Revenue is what matters. The tool should connect AI visibility to actual business outcomes:

- Traffic attribution showing which visitors came from AI search (via code snippet, Google Search Console integration, or server log analysis)

- Page-level tracking showing which URLs get cited and how often

- Conversion tracking that connects AI-driven traffic to pipeline and revenue

- ROI reporting that shows whether your AI visibility investment is paying off

Without attribution, you're optimizing in the dark. With it, you know exactly which prompts and pages drive revenue.
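
For the server-log or code-snippet route, attribution often starts with classifying the referrer of each visit. The sketch below is one way to do that, assuming the hostnames in `AI_REFERRER_HOSTS` are the ones these assistants send; that mapping is an assumption to verify against your own logs, and many AI-driven visits arrive with no referrer at all, so this undercounts.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames; confirm against real traffic before relying on them.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def ai_source(referrer: str) -> Optional[str]:
    """Return the AI assistant a referrer URL belongs to, or None."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, label in AI_REFERRER_HOSTS.items():
        # Match the domain itself or any subdomain of it.
        if host == domain or host.endswith("." + domain):
            return label
    return None


# Hypothetical referrers taken from a day of visits.
visits = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+crm",
    "https://www.google.com/search?q=best+crm",
    "",
]
sources = [ai_source(ref) for ref in visits]
```

Joining `sources` with your conversion events by session is what connects a cited page to pipeline.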

The tools B2B SaaS teams are actually using

Promptwatch: The action-oriented platform

Promptwatch is the only AI visibility platform rated as a "Leader" across all categories in a 2026 comparison of 12 GEO platforms. The core difference: most competitors are monitoring-only dashboards. Promptwatch is built around taking action.

The workflow is simple. Answer Gap Analysis shows exactly which prompts competitors are visible for but you're not. You see the specific content your website is missing -- the topics, angles, and questions AI models want answers to but can't find on your site. The built-in AI writing agent generates articles, listicles, and comparisons grounded in real citation data (880M+ citations analyzed), prompt volumes, persona targeting, and competitor analysis. Then you track results: visibility scores improve as AI models start citing your new content, page-level tracking shows exactly which pages are being cited, and traffic attribution connects visibility to revenue.

This cycle -- find gaps, generate content, track results -- is what makes Promptwatch an optimization platform instead of just another tracker.

Additional capabilities:

- AI Crawler Logs showing real-time logs of ChatGPT, Claude, and Perplexity crawlers hitting your website

- Prompt Intelligence with volume estimates and difficulty scores for each prompt

- Citation & Source Analysis showing exactly which pages, Reddit threads, YouTube videos, and domains AI models cite

- Reddit & YouTube Insights surfacing discussions that influence AI recommendations

- ChatGPT Shopping Tracking monitoring when your brand appears in product recommendations

- Multi-language & Multi-region monitoring in any language, from any country

AI models and search surfaces monitored: ChatGPT, Perplexity, Google AI Overviews, Google AI Mode, Claude, Gemini, Meta/Llama, DeepSeek, Grok, Mistral, Copilot.

Pricing: Essential $99/mo (1 site, 50 prompts, 5 articles), Professional $249/mo (2 sites, 150 prompts, 15 articles, crawler logs), Business $579/mo (5 sites, 350 prompts, 30 articles). Free trial available.

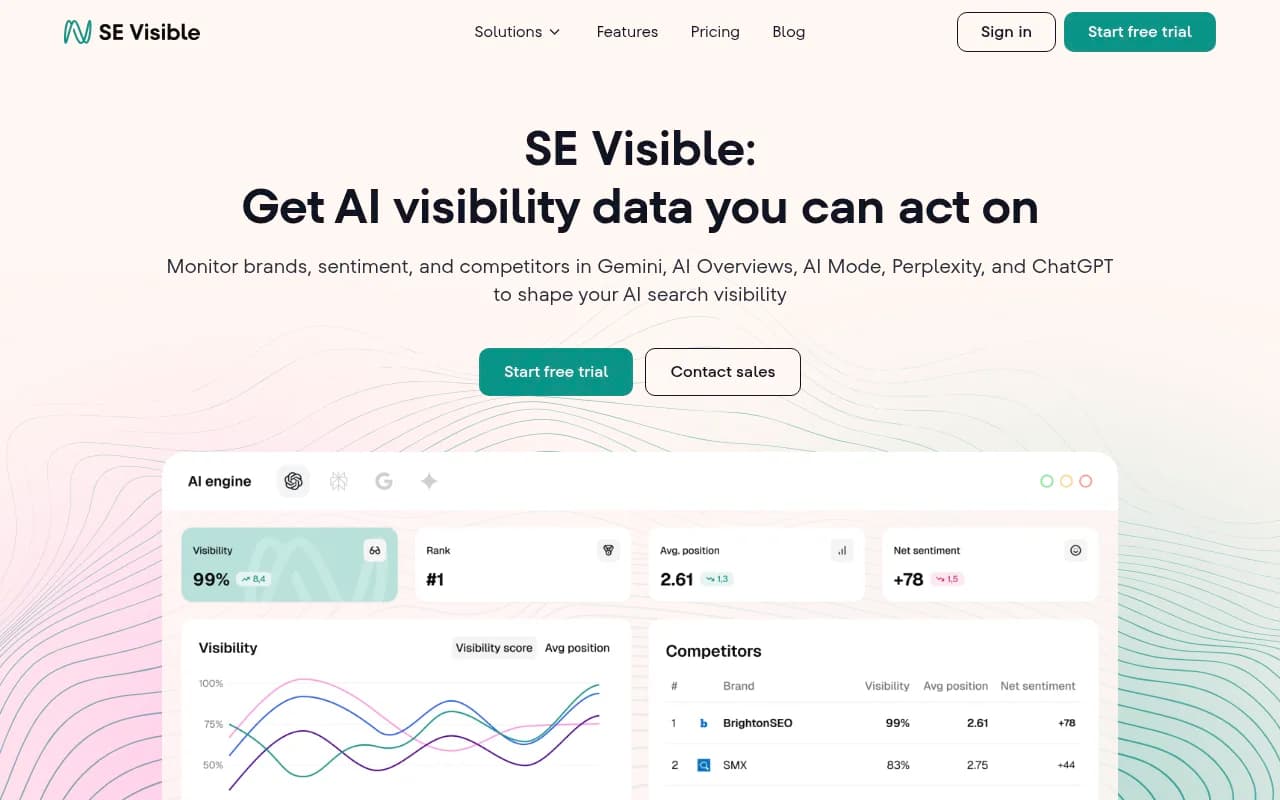

SE Visible: User-friendly tracking for teams new to AI visibility

SE Visible (from SE Ranking) focuses on making AI visibility tracking accessible for teams that don't have dedicated GEO specialists. The interface is clean, onboarding is fast, and the learning curve is gentle. It tracks citations across major LLMs and provides basic competitor benchmarking.

What it does well: simple setup, clear reporting, good for teams just starting to monitor AI visibility. What it lacks: no content gap analysis, no AI content generation, no crawler logs. It's a monitoring tool, not an optimization platform.

Good fit for: small B2B SaaS teams that want to start tracking AI visibility without a steep learning curve or large budget.

Semrush: Traditional SEO platform adding AI visibility features

Semrush is a comprehensive SEO platform that added AI visibility tracking in 2025. It monitors Google AI Overviews and provides basic citation tracking across a few LLMs. The advantage is integration with Semrush's existing keyword research, backlink analysis, and site audit tools.

The limitation: Semrush uses fixed prompt sets instead of letting you track custom prompts relevant to your product. You can't monitor the specific questions your prospects ask. The AI visibility features feel bolted onto a traditional SEO platform rather than purpose-built for AI search.

Good fit for: teams already using Semrush for SEO who want to add basic AI visibility monitoring without switching platforms.

Otterly.AI: Affordable monitoring for startups

Otterly.AI is one of the most affordable AI visibility trackers, making it accessible for early-stage startups. It monitors citations across ChatGPT, Perplexity, and a few other models, and provides basic reporting.

What it lacks: no content gap analysis, no AI content generation, no crawler logs, no visitor analytics. It's pure monitoring. You see where you're cited and where you're not, but you're on your own for figuring out what to do about it.

Good fit for: bootstrapped startups that need basic AI visibility tracking on a tight budget.

Peec.ai: Multi-language tracking for global B2B SaaS

Peec.ai stands out for its multi-language and multi-region capabilities. If your B2B SaaS serves customers in multiple countries and languages, Peec.ai lets you track AI visibility in each market separately.

It monitors citations across major LLMs and provides competitor benchmarking. The interface is straightforward and reporting is clear. What it lacks: no content gap analysis, no AI content generation, no crawler logs. Like Otterly.AI and SE Visible, it's monitoring-only.

Good fit for: B2B SaaS companies with international customers who need to track AI visibility across multiple languages and regions.

AthenaHQ: Monitoring-focused with good competitor tracking

AthenaHQ tracks citations across 8+ AI search engines and provides solid competitor benchmarking. The competitor heatmaps are useful for seeing which prompts each competitor dominates.

What it lacks: no content gap analysis, no content generation, no crawler logs. It's another monitoring-only platform. You get good visibility into where you stand relative to competitors, but no tools to close the gap.

Good fit for: teams that want strong competitor intelligence but are comfortable handling content optimization separately.

Comparison table: AI visibility tools for B2B SaaS

| Tool | Starting price | LLMs tracked | Content gap analysis | AI content generation | Crawler logs | Best for |
|---|---|---|---|---|---|---|
| Promptwatch | $99/mo | 10 | Yes | Yes | Yes | Teams that want to optimize, not just monitor |
| SE Visible | $39/mo | 5 | No | No | No | Small teams new to AI visibility |
| Semrush | $139/mo | 3 | No | No | No | Teams already using Semrush for SEO |
| Otterly.AI | $29/mo | 4 | No | No | No | Bootstrapped startups on tight budgets |
| Peec.ai | $99/mo | 6 | No | No | No | Global B2B SaaS with multi-language needs |
| AthenaHQ | $149/mo | 8 | No | No | No | Teams focused on competitor intelligence |

How to choose the right AI visibility tool for your B2B SaaS

Start by asking what you actually need to accomplish. If you just want to monitor where your brand appears in AI responses and don't plan to actively optimize, a basic monitoring tool like Otterly.AI or SE Visible is fine. You'll save money and avoid paying for features you won't use.

If you want to actively improve your AI visibility -- which is the main reason to track it in the first place -- you need a platform that shows you what's missing and helps you fix it. That means content gap analysis, AI-optimized content generation, and crawler logs. Tools like Promptwatch that combine monitoring, optimization, and content creation in one workflow save you from stitching together three separate platforms.

Consider your team's capacity. If you have a dedicated content team that can write AI-optimized articles from scratch, you might not need built-in content generation. If you're a lean team wearing multiple hats, having an AI writing agent that generates citation-worthy content based on real prompt data is a huge time saver.

Think about integration with your existing stack. If you're already deep in the Semrush ecosystem, adding their AI visibility features might make sense even if they're less robust than standalone platforms. If you're starting fresh, choose the tool that best fits your workflow rather than forcing yourself into a suboptimal solution for the sake of integration.

Budget matters, but don't optimize for the cheapest option if it leaves you stuck with data and no action plan. A $29/month monitoring tool that shows you're invisible but gives you no path to fix it is a worse investment than a $99/month optimization platform that actually improves your visibility.

What B2B SaaS teams get wrong about AI visibility

The biggest mistake is treating AI visibility like traditional SEO. Teams assume that if they rank well on Google, they'll automatically appear in AI responses. That's not how it works.

AI models don't just scrape Google's top 10 results. They synthesize information from multiple sources -- Reddit threads, YouTube videos, documentation pages, comparison sites, case studies, and yes, sometimes Google results. The content that ranks on Google often isn't the content that gets cited by ChatGPT or Claude.

AI models prioritize different signals than Google does. They want structured data, clear entity definitions, direct answers to specific questions, and content that acknowledges nuance and tradeoffs. Generic SEO content optimized for keyword density and backlinks often fails to get cited because it doesn't provide the depth and specificity AI models need.

Another mistake: optimizing for a single LLM. Teams focus on ChatGPT because it's the most popular, then wonder why they're invisible in Perplexity, Claude, and Gemini. Your prospects use multiple AI models. You need visibility across all of them.

The third mistake: monitoring without optimizing. Tracking your AI visibility is interesting. Improving it is what drives revenue. If your tool doesn't help you identify content gaps and create citation-worthy content, you're paying for a dashboard that makes you feel informed while your competitors eat your lunch.

The AI visibility workflow that actually works

- Audit your current visibility. Run your key prompts across ChatGPT, Claude, Gemini, Perplexity, and other major models. See where your brand appears, where it doesn't, and how you compare to competitors. This baseline tells you how much work you have ahead.

- Identify content gaps. Use your AI visibility tool's gap analysis to see which prompts competitors rank for that you don't. Look for patterns -- are you missing comparison content? Use case guides? Feature breakdowns? Integration documentation? The gaps reveal your content strategy.

- Prioritize high-value prompts. Not all prompts are equally valuable. Focus on prompts with high volume, reasonable difficulty, and strong buyer intent. A prompt like "best CRM for small businesses" is more valuable than "what is a CRM" even if the latter has higher volume.

- Create citation-worthy content. Write (or generate with AI) content that directly answers the prompts you're targeting. Use structured data, comparison tables, clear headings, and specific examples. Avoid generic filler. AI models cite content that provides depth and nuance, not keyword-stuffed fluff.

- Fix crawler access issues. Check your AI crawler logs to ensure ChatGPT, Claude, and Perplexity can actually access your content. If they're blocked by robots.txt, hitting paywalls, or encountering errors, they can't cite you no matter how good your content is.

- Track results and iterate. Monitor your visibility scores over time. See which pages get cited and which don't. Connect visibility improvements to traffic and pipeline. Double down on what works and fix what doesn't.

This workflow -- audit, identify gaps, prioritize, create content, fix access, track results -- is the action loop that separates teams that improve AI visibility from teams that just monitor it.
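
For the crawler access step, a quick robots.txt check can be done with Python's standard library before digging into logs. The sketch below parses a robots.txt body and lists which crawler user-agent tokens are disallowed for a given URL; the sample rules, the URL, and the agent list are hypothetical, and the tokens should be checked against each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser


def blocked_agents(robots_txt: str, url: str, agents: list[str]) -> list[str]:
    """Return the user-agent tokens that robots.txt disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler site-wide.
robots_txt = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

agents = ["GPTBot", "ClaudeBot", "PerplexityBot"]
blocked = blocked_agents(robots_txt, "https://example.com/pricing", agents)
```

In this sample only `GPTBot` is blocked from the pricing page. Note that robots.txt is just one possible barrier; paywalls, login walls, and server errors have to be checked separately.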

AI visibility in 2026: What's changing

AI search is evolving fast. Google AI Overviews now appear on 15% of searches. ChatGPT added a shopping feature that recommends products directly in responses. Perplexity launched a shopping assistant. Claude improved its citation accuracy. New models like DeepSeek and Grok entered the market.

The trend is clear: AI models are becoming the primary research layer for B2B buyers. They're not just answering simple questions anymore -- they're building vendor shortlists, comparing features, and making recommendations. If your brand isn't visible in those AI-generated answers, you're losing deals before prospects ever reach your website.

The tools are maturing too. Early AI visibility platforms were basic monitoring dashboards. The next generation combines monitoring with optimization -- content gap analysis, AI-optimized content generation, crawler logs, and traffic attribution. The platforms that win will be the ones that help teams take action, not just observe.

B2B SaaS companies that treat AI visibility as a core growth channel -- not a nice-to-have experiment -- will have a massive advantage. The companies that wait until AI search is "fully mature" will spend 2027 playing catch-up while competitors dominate the prompts that drive pipeline.

Start with the action loop, not the monitoring dashboard

Most teams approach AI visibility backwards. They start with monitoring, see that they're invisible for important prompts, then get stuck because their tool doesn't help them fix it.

Start with the action loop instead. Choose a platform that shows you what's missing, helps you create content that gets cited, and tracks whether it's working. Monitoring is useful only if it leads to optimization.

If you're a lean B2B SaaS team that wants to improve AI visibility without hiring a dedicated GEO specialist, tools like Promptwatch that combine gap analysis, content generation, and tracking in one workflow are the most efficient path. You spend less time stitching together platforms and more time actually improving visibility.

If you're a larger team with dedicated content resources, you might prefer a monitoring-focused platform paired with your own content creation process. Just make sure you're not paying for a dashboard that leaves you stuck with data but no action plan.

The goal isn't to track AI visibility. The goal is to be visible where your prospects are researching vendors. Choose tools that help you get there.